Abstract

This paper aims to address a new version of Newton’s method for solving constrained generalized equations. This method can be seen as a combination of the classical Newton’s method applied to generalized equations with a procedure to obtain a feasible inexact projection. Using the contraction mapping principle, we establish a local analysis of the proposed method under appropriate assumptions, namely metric regularity or strong metric regularity and Lipschitz continuity. Metric regularity is assumed to guarantee that the method generates a sequence that converges to a solution. Under strong metric regularity, we show the uniqueness of the solution in a suitable neighborhood, and that all sequences starting in this neighborhood converge to this solution. We also require the assumption of Lipschitz continuity to establish a linear or superlinear convergence rate for the method.

Similar content being viewed by others

Avoid common mistakes on your manuscript.

1 Introduction

Generalized equations were introduced by S. M. Robinson in the early 1970s, as a general tool for describing, analyzing, and solving various problems in a unified manner. In the years since they have been an object of intense research. For a comprehensive study on generalized equations and their applications, see [15, 27, 36, 38, 40] and references therein. Owing to its attractive convergence properties, the applications of Newton’s method and its variations to generalized equations have been investigated in many studies, including but not limited to [2, 3, 8, 12, 14, 16,17,18]. In these papers, the superlinear and/or quadratic local convergences of Newton-type methods have been established under the assumption of the metric regularity or strong metric regularity of the partial linearization of the function that defines a generalized equation. Furthermore, Lipschitz-like conditions on the derivative of the vector-valued function in this equation are assumed. One of the main reasons behind the increasing interest in developing theoretical and computational tools for solving generalized equations is that they provide an abstract model for several families of problems, such as equilibrium problems, linear and nonlinear complementary problems, and variational inequality problems. For further details, see [15, 19, 37,38,39,40].

In this paper, we propose a method for solving generalized equations subject to a set of constraints. Namely, we propose a method for solving the problem of finding \(x\in {{\mathbb {R}}}^n \) such that

where \(f:{\varOmega }\rightarrow {{\mathbb {R}}}^m\) is a continuously differentiable function, \( \varOmega \subseteq {{\mathbb {R}}}^n\) is an open set, \(C \subset \varOmega \), C is a closed convex set, and \(F:\varOmega \rightrightarrows {{\mathbb {R}}}^m\) is a set-valued mapping with a closed nonempty graph. Constrained Variational Inequality Problem, see [9], and in particular, Split Variational Inequality Problem, see [9, 25], can be stated as special cases of constrained generalized equations (1). Further details are given in Sect. 4 below. It is known that if F is the zero mapping, i.e., \(F\equiv \{0\}\), then the problem (1) reduces to a constrained system of nonlinear equations, i.e., that of solving \(f(x) = 0\) such that \(x \in C\). This class of problems has been addressed in several studies, and various methods have been proposed for solving them. See, for example, [5, 6, 22, 23, 28,29,30, 32, 33, 43].

Newton’s method for unconstrained generalized equations, which has its origin in the work of Josephy [27], is formulated as follows. For the current iterate \(x_k \in {\mathbb {R}}^n\), the next iterate \(x_{k+1}\) is computed as a point satisfying the following inclusion:

where \(f'\) is the derivative of f. Note that at each iteration, a partially linearized inclusion at the current iterate is to be solved. The method (2) can be seen as a model for various iterative procedures in numerical nonlinear programming. For instance, when \(F\equiv \{0\}\), this method corresponds to the usual Newton’s method for solving a system of nonlinear equations. If F is the product of the negative orthant in \({\mathbb {R}}^{s}\) with the origin at \({\mathbb {R}}^{m-s}\), i.e., \(F = {\mathbb {R}}^s_{-}\times \{0\}^{m-s}\), then (2) becomes Newton’s method for solving a system of nonlinear equalities and inequalities. See [11]. On the other hand, if the problem (1) with \(C = {\mathbb {R}}^n\) represents the Karush–Kuhn–Tucker optimality conditions for a nonlinear programming problem, then (2) describes the well-known sequential quadratic programming method. See [15, p. 384] and [13, 26].

In this paper, we propose Newton’s method with a feasible inexact projection (Newton-InexP method) for solving the problem (1). Taking into account that Newton’s iterates satisfying (2) can be infeasible for the constraint set, a procedure to obtain a feasible point is applied to obtain them again for the feasible set. If an exact projection onto the feasible set is a computationally expensive task, then a procedure to compute a feasible inexact projection may feature a low computation cost per iteration in comparison with one that computes the exact projection. Therefore, we define the concept of a feasible inexact projection, which we will adopt in the proposed method. This also accepts an exact projection when it is easy to obtain. For instance, the exact projections onto a box constraint or Lorentz cone is very easy to obtain. See, respectively [35, p. 520] and [21, Proposition 3.3]. A feasible inexact projection onto a polyhedral closed convex set can be obtained using quadratic programming methods that generate feasible iterates, such as feasible active set methods and interior point methods. See, for example, [24, 35, 42]. It is worth mentioning that in the case in which \(C = {\mathbb {R}}^n\), the Newton-InexP method becomes the classical Newton’s method applied to generalized equations [12]. On the contrary, if \(F\equiv \{0\}\) and the conditional gradient procedure (CondG procedure), see for example, [20, 31], is used to obtain a feasible inexact projection, then our method reduces to the Newton-CondG method for solving a constrained system of nonlinear equations. See [23]. We also establish the local convergence of the proposed method under appropriate assumptions, namely metric regularity or strong metric regularity and the Lipschitz condition. Metric regularity is assumed to guarantee that the Newton-InexP method generates a sequence that converges to a solution. Under strong metric regularity, we demonstrate the uniqueness of a solution in a suitable neighborhood, and that every sequence starting in this neighborhood converges to that solution. We also require the assumption of Lipschitz continuity of the derivative \(f'\) to establish a linear or superlinear convergence rate for the Newton-InexP method.

The remainder of this paper is organized as follows. In Sect. 2, we present the notations and some technical results that are used throughout the paper. In Sect. 3, we describe the Newton-InexP method, and present its local convergence properties. In particular, Sect. 3.1 is devoted to the proof of the local convergence theorem. In Sect. 4, we present a concrete application of the main result and some examples of constrained generalized equations. We conclude the paper with some remarks in Sect. 5.

2 Notation and auxiliary results

In this section, we present some notations, definitions, and results used throughout the paper. For further details, see [15]. We begin with some concepts of analysis and that of a set-valued mapping. Let the open and closed balls of radius \(\delta > 0\), centered at x, be respectively defined by

The vector space consisting of all continuous linear mappings\(A:{{\mathbb {R}}}^n \rightarrow {{\mathbb {R}}}^m\) is denoted by \({ {\mathcal {L}}}({{\mathbb {R}}}^n,{{\mathbb {R}}}^m)\), and the norm of A is defined by \(\Vert A\Vert :=\sup \,\{\Vert A x\Vert ~:~\Vert x\Vert \le 1 \}\). Let \(\varOmega \subseteq {{\mathbb {R}}}^n\) be an open set and \(f:{\varOmega }\rightarrow {{\mathbb {R}}}^m\) be differentiable at all \(x\in \varOmega \). Then, the derivative of f at x is the linear mapping \(f'(x):{\mathbb R}^n \rightarrow {{\mathbb {R}}}^m\), which is continuous. The graph of the set-valued mapping \(F:{{\mathbb {R}}}^n \rightrightarrows {{\mathbb {R}}}^m\) is the set \(\text{ gph }\, F:= \left\{ (x,u)\in {{\mathbb {R}}}^n \times {{\mathbb {R}}}^m ~:~ u \in F(x)\right\} \). The domain and range of the set-valued mapping F, respectively, are the sets \(\text{ dom }\, F := \{x\in {{\mathbb {R}}}^n ~:~ F(x)\ne \varnothing \} \) and \( \text{ rge }\, F := \{u\in {{\mathbb {R}}}^m ~:~ u \in F(x) ~\text{ for } \text{ some } ~x\in {{\mathbb {R}}}^n \}\). The inverse of F is the set-valued mapping \(F^{-1}:{{\mathbb {R}}}^m \rightrightarrows {{\mathbb {R}}}^n\) defined by \( F^{-1}(u):=\{x \in {{\mathbb {R}}}^n ~: ~ u \in F(x)\}\). The partial linearization of \(f + F\) at \(x\in \varOmega \) is the set-valued mapping \(L_{f+F}(x, \cdot ):\varOmega \rightrightarrows {{\mathbb {R}}}^m\) defined by

For sets C and D in \({{\mathbb {R}}}^n\), the distance fromxtoD and the excess ofCbeyondD are respectively defined by

where the convention is adopted that \( d(x, D)=+\infty \) when \(D= \varnothing \), \(e( \varnothing , D)=0\) when \(D\ne \varnothing \), and \(e( \varnothing , \varnothing )=+\infty \). In the following, we present the notion of metric regularity, which plays an important role in the subsequent analysis.

Definition 1

Let \(\varOmega \subset {\mathbb {R}}^n \) be open and nonempty set. A set-valued mapping \(G: \varOmega \rightrightarrows {\mathbb {R}}^m\) is said to be metrically regular at \({{\bar{x}}}\in \varOmega \) for \({\bar{u}}\in {\mathbb {R}}^m\) when \({{\bar{u}}} \in G({{\bar{x}}})\), the graph of G is locally closed at \(({{\bar{x}}}, {{\bar{u}}})\), and there exist constants \(\kappa \ge 0\), \(a>0\), and \(b>0\) such that \(B_a[{\bar{x}}]\subset \varOmega \) and \(d(x, G^{-1}(u))\le \kappa d(u, G(x))\), for all \((x, u) \in B_a[{{\bar{x}}}] \times B_b[{{\bar{u}}}].\) Moreover, if the mapping \(B_b[{{\bar{u}}}] \ni u\mapsto G^{-1}(u)\cap B_a[{{\bar{x}}}]\) is single-valued, then G is called strongly metrically regular at \({{\bar{x}}}\in \varOmega \) for \({{\bar{u}}}\in {\mathbb {R}}^m\), with associated constants \(\kappa \ge 0\), \(a>0\), and \(b>0\).

When the mapping \(B_b[{{\bar{u}}}] \ni u\mapsto G^{-1}(u)\cap B_a[{\bar{x}}]\) in Definition 1 is single-valued, then for the sake of simplicity we hereafter adopt the notation \(w= G^{-1}(u)\cap B_a[{{\bar{x}}}]\) instead of \(\{w\}= G^{-1}(u)\cap B_a[{{\bar{x}}}]\).

Remark 1

If G is strongly metrically regular at \({{\bar{x}}}\in \varOmega \) for \({{\bar{u}}}\in {\mathbb {R}}^m\) with constants \(\kappa \ge 0\), \(a>0\), and \(b>0\), then the mapping \(B_b[{{\bar{u}}}] \ni u\mapsto G^{-1}(u)\cap B_a[{{\bar{x}}}]\) is single-valued and Lipschitz continuous on \(B_b[{{\bar{u}}}]\) with Lipschitz constant \(\kappa \), i.e., \( \left\| G^{-1}(u)\cap B_a[{{\bar{x}}}]- G^{-1}(v)\cap B_a[{\bar{x}}] \right\| \le \kappa \Vert u-v\Vert \) for all \( u, v \in B_b[{{\bar{u}}}]\). See [15, Proposition 3G.1, p. 193].

We end this section by defining a generalization of the contraction mapping principle for set-valued mappings. For a prove of this, see [15, Theorem 5E.2, p. 313].

Theorem 1

Let \(\varPhi : {{\mathbb {R}}}^n \rightrightarrows {{\mathbb {R}}}^n\) be a set-valued mapping and let \({{\bar{x}}}\in {{\mathbb {R}}}^n\). Suppose that there exist scalars \(\rho > 0\) and \(\lambda \in (0, 1)\) such that the set \(gph \, \varPhi \cap ( B_{\rho }[{{\bar{x}}}]\times B_{\rho }[{{\bar{x}}}])\) is closed and the following conditions hold:

-

(i)

\(d({{\bar{x}}}, \varPhi ({{\bar{x}}}))\le {\rho }(1-\lambda )\);

-

(ii)

\(e\left( \varPhi (p)\cap B_{\rho }[{{\bar{x}}}], \varPhi (q)\right) \le \lambda \Vert p- q\Vert \) for all \(p, q\in B_{\rho }[{\bar{x}}]\).

Then, \(\varPhi \) has a fixed point in \(B_{\rho }[{{\bar{x}}}]\). That is, there exists \(y \in B_{\rho }[{{\bar{x}}}]\) such that \(y\in \varPhi (y)\).

3 Proposed method and its local convergence analysis

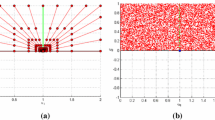

In this section, we present the Newton-InexP method for solving the problem (1). We will also study the local convergence properties of a sequence generated using this method. Our analysis is performed under the assumption of metric regularity and strong metric regularity for an approximation of the set-valued mapping \(f+F\) and assuming the Lipschitz continuity of the derivative \(f'\). To ensure the feasibility of the Newton’s iterates, our method incorporates a procedure to obtain a feasible inexact projection onto the feasible set. In the following, we introduce the concept of a feasible inexact projection.

Definition 2

Let \(C\subset {{\mathbb {R}}}^n\) be a closed convex set, with \(x\in C\) and \(\theta \ge 0\). The feasible inexact projection mapping relative to x with error tolerance \(\theta \), denoted by \(P_C(\cdot ,x,\theta ): {{\mathbb {R}}}^n \rightrightarrows C\) is the set-valued mapping defined as follows:

Each point \(w\in P_C(y,x,\theta ) \) is called a feasible inexact projection ofyontoCwith respect toxand with error tolerance\(\theta \).

Remark 2

Because \(C\subset {{\mathbb {R}}}^n\) is a closed convex set, [7, Proposition 2.1.3, p. 201] implies that for each \(y\in {{\mathbb {R}}}^n\) we have \(P_{C}(y)\in P_C(y,x,\theta )\), where \(P_{C}\) denotes the exact projection mapping. Therefore, \(P_C(y,x,\theta )\ne \varnothing \) for all \(y\in {{\mathbb {R}}}^n\) and \(x\in C\). If \(\theta = 0\) in Definition 2, then \(P_C(y,x,0)=\{P_{C}(y)\}\) for all \(y\in {{\mathbb {R}}}^n\) and \(x\in C\). We used\(P_C(y,x,0)=P_{C}(y)\)instead of\(P_C(y,x,0)=\{P_{C}(y)\}\).

The next result plays an important role in the remainder of this paper. It presents a basic property of the feasible inexact projection, the proof is similar to [23, Lemma 4]. For the sake of completeness, we decided to present the proof here.

Lemma 1

Let \(y, {{{\tilde{y}}}}\in {{\mathbb {R}}}^n\), \(x, {{\tilde{x}}} \in C\), and \(\theta \ge 0\). Then, for any \(w \in P_C(y, x, \theta ) \), we have

Proof

To simplify the notation we set \({{\tilde{w}}} = P_C({{\tilde{y}}}, {{\tilde{x}}}, 0)\), and take \(w \in P_C(y, x, \theta )\). First, note that \(\Vert y - {{\tilde{y}}}\Vert ^2 = \Vert (y-w) - ({{\tilde{y}}} - {{\tilde{w}}})\Vert ^2 + \Vert w - {{\tilde{w}}}\Vert ^2 + 2 \langle (y -{{\tilde{y}}}) - (w- {\tilde{w}}), w - {{\tilde{w}}} \rangle \), which implies that

Because \({{\tilde{w}}} = P_C({{\tilde{y}}}, {{\tilde{x}}}, 0)\) and \(w \in P_C(y, x, \theta )\), by using Definition 2 and the fact that \({{\tilde{w}}}, w\in C\), we can conclude that \(\langle y-w,\, {{\tilde{w}}} - w\rangle \le \theta \Vert y - x\Vert ^2\) and \(\langle {{\tilde{y}}}-{{\tilde{w}}},\, w - {{\tilde{w}}}\rangle \le 0.\) Thus, the combination of these three previous inequalities yields \(\Vert w - {{\tilde{w}}}\Vert ^2 \le \Vert y - {{\tilde{y}}}\Vert ^2 + 2 \theta \Vert y - x\Vert ^2\), and then \(\Vert w - {{\tilde{w}}}\Vert \le \Vert y - {{\tilde{y}}}\Vert + \sqrt{2 \theta } \Vert y - x\Vert \), giving the desired inequality. \(\square \)

The conceptual Newton’s method, named the Newton-InexP method, for solving (1), with a feasible inexact projection, and with \(x_0\in C\) and \(\{\theta _k\}\subset [0, +\infty )\) as the input data, is formally described as follows.

Remark 3

In Step 1, we check if the current iterate \(x_k\) is a solution of the problem (1). Otherwise, we compute a point \(y_k\) satisfying the inclusion (5). Because the point \(y_k\) in Step 1 may be infeasible for the constraint set C, the Newton-InexP method applies a procedure to obtain a feasible inexact projection, and consequently the new iterate \(x_{k+1}\) in C. In particular, the point \(x_{k+1}\) obtained in (6) is an approximate feasible solution for the projection subproblem \(\min _{z \in C}\{\Vert z - y_k\Vert ^2/2\}\), satisfying \(\langle y_k-x_{k+1}, z - x_{k+1}\rangle \le \theta _k\Vert y_k - x_k\Vert ^2\) for any \(z \in C\). As we will see, the specific choice of the tolerance \(\theta _k\) is essential to establish the local convergence of the Newton-InexP method.

In the following, we state our main result for the Newton-InexP method. The proof constitutes a combination of the results that will be studied in the sequel.

Theorem 2

Let \(\varOmega \subset {{\mathbb {R}}}^n\) be an open set, \(f: \varOmega \rightarrow {{\mathbb {R}}}^m\) be continuously differentiable in \(\varOmega \), and \(F:\varOmega \rightrightarrows {{\mathbb {R}}}^m\) be a set-valued mapping with closed graph. Assume that \(C \subset \varOmega \) is a closed convex set, \(x_*\in C\), \(f(x_*) + F(x_*)\ni 0\), there exists \(L > 0\) such that

and the set-valued mapping \(\varOmega \ni y \mapsto L_{f+F}(x_{*}, y)\) is metrically regular at \(x_{*}\) for 0, with constants \(\kappa > 0\), \(a > 0\), and \(b > 0\). Let \(r:=\sup \left\{ t\in {{\mathbb {R}}}~:~ B_t(x_*)\subset \varOmega \right\} \), \(\{\theta _k\}\subset [0, 1/2)\) and

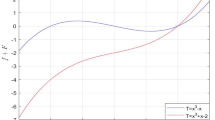

Then, for every \(x_0 \in C \cap B_{ r_{*}}(x_*)\backslash \{x_*\}\), there exists a sequence \(\{x_k\}\) generated by the Newton-InexP method that solves (1), associated to \(\{\theta _k\}\) and starting at \(x_0\), which is contained in \( B_{ r_{*}}(x_*)\cap C\) and converges to \(x_*\) with the following rate of convergence:

As a consequence, if \(\lim _{k\rightarrow +\infty }\theta _k=0\) then \(\{x_k\}\) converges to \(x_*\) superlinearly. In particular, if \( \theta _k=0\) for all \(k=0, 1 \ldots \), then

and \(\{x_k\}\) converges to \(x_*\)Q-quadratically. Furthermore, if the mapping \(L_{f+F}(x_{*}, \cdot )\) is strongly metrically regular at \(x_{*}\) for 0, then \(x_*\) is the unique solution of (1) in \(B_{r_{*}}(x_*)\), and every sequence generated by the Newton-InexP method associated to \(\{\theta _k\}\) and starting at \(x_0 \in C \cap B_{ r_{*}}(x_*)\backslash \{x_*\}\) satisfies (9) and converges to \(x_*\).

Remark 4

In particular, (9) implies that \(\limsup _{k\rightarrow +\infty } [\Vert x_{*} - x_{k+1}\Vert /\Vert x_{*} - x_{k}\Vert ] \le \sqrt{2{\hat{\theta }}}\), where \({{\hat{\theta }}}=\limsup _{k\rightarrow +\infty } {\theta _k}\). Note that if \(C = {{\mathbb {R}}}^n\), then \(\theta _k\equiv 0\), and using [15, Theorem 3E.7, p. 178] we can conclude that with some adjustment Theorem 2 reduces to [12, Theorem 1]. If we have \( y_k\in C\) in the Newton-InexP method for all \(k = 0, 1, \ldots \), then the procedure to obtain a feasible inexact projection plays no role. In this case, the convergence rate is Q-quadratic, as in (10).

Henceforth, we assume that all the assumptions of Theorem 2 hold except the strong metric regularity, which will be considered to hold only when explicitly stated. In order to prove Theorem 2, we require some preliminary results. We begin with a technical result that will be useful in our context.

Lemma 2

The following inequality holds: \(\Vert f(q)-f(p)-f'(p)(q-p)\Vert \le (L/2)\Vert q-p\Vert ^2,\) for all \(p, q\in B_{r}(x_*)\). Moreover, if \(\Vert x_*-p\Vert < r_{*}\), then

Proof

Because \(q+(1-\tau )(p-q)\in B_{r}(x_*)\) for all \(\tau \in [0,1]\) and f is continuously differentiable in \(\varOmega \), applying the fundamental theorem of calculus and the properties of the norm we obtain that

On the other hand, by using (7) and performing the integration, the last inequality leads the first inequality of the lemma. We proceed to prove the second inequality. For this purpose, let \(0<\Vert x_*-p\Vert < r_{*}\) and \(0<\Vert x_* - z\Vert <r_{*}\). By applying the triangle inequality we have

Thus, the first inequality of this lemma together with the Lipschitz condition in (7) implies that

Hence, by combining this inequality with (11) we conclude that

Taking into account that \(\Vert x_*-p\Vert < r_*\), \(\Vert x_* - z\Vert < r_*\) and \(r_* \le \sqrt{2b/ 3L}\), the desired inequality follows from the last inequality. Therefore, the proof of lemma is complete. \(\square \)

To state the next result, for each fixed \(x\in {{\mathbb {R}}}^n\) we define the following auxiliary set-valued mapping \( \varPhi _{x}: \varOmega \rightrightarrows {{\mathbb {R}}}^n\):

where \( {{\mathbb {R}}}^m \ni u \mapsto L_{f+F}\,(x_*, u )^{-1}:= \left\{ z \in {{\mathbb {R}}}^n ~: ~ u \in L_{f+F}(x_*, z)\right\} \) is the inverse of \( L_{f+F}\) defined in (3). Therefore, \(y\in \varPhi _{x}(y)\) if and only if x and y satisfy the following inclusion:

i.e., y is the next Newton’s iterate from x. In the next lemma, we establish existence of a fixed point of \(\varPhi _{x}\) for all x in a suitable neighborhood of \(x_*\). Moreover, we present an important bound on the distance between \(x_*\) and this fixed point, and establish its uniqueness under strong metric regularity. The statement of this result is as follows.

Lemma 3

If \(0<\Vert x_*-x\Vert < r_{*}\), then there exists a fixed point \(y\in \varPhi _{x}(y)\) such that

In particular, \(y\in B_{r_*}(x_*)\). In addition, if \(L_{f+F}(x_{*}, \cdot )\) is strongly metrically regular at \(x_{*}\) for 0, then for all \(x \in B_{r_*}(x_*)\) the mapping \(\varPhi _x\) has only one fixed point in \(B_{r_*}(x_*)\) satisfying (13).

Proof

To prove the first part of the lemma, we will first prove the following two inequalities:

-

(i)

\(d\left( x_*, \varPhi _{x}(x_*)\right) \le \rho \left( 1- \kappa L\Vert x_*-x\Vert \right) \);

-

(ii)

\(e\left( \varPhi _{x}(p)\cap B_{\rho }[x_*], \varPhi _{x}(q)\right) \le \kappa L\Vert x_*-x\Vert \left\| p-q\right\| , \qquad \forall ~ p, q\in B_{\rho }[x_*], \)

where the scalar \( \rho > 0\) is defined by

In order to prove (i), first note that the definition of the mapping \( \varPhi _{x}\) given in (12) implies that

Thus, taking into account that the second part of Lemma 2 with \(p= x\) and \(z = x_*\) implies that \(\Vert f(x_*)-f(x)- f'(x)(x_*-x)\Vert < b\), and considering that \(x_* \in B_a[x_*]\) and \(0 \in L_{f+F}(x_*, x_*)\), we can apply Definition 1 to conclude that

Because Lemma 2 with \(p= x\) and \(q = x_*\) implies that \(\Vert f(x_*) - f(x) - f'(x)(x_* - x)\Vert \le (L/2)\Vert x_* - x\Vert ^2\), combining the two last inequalities we obtain that \(d(x_*, \varPhi _{x}(x_*)) \le (\kappa L/2)\Vert x_*-x\Vert ^2\), which after some manipulation yields

This inequality, together with Definition (14), proves item (i). To prove item (ii), we take \(p, q\in B_{\rho }[x_*]\). Owing to Definition (14), taking into account that \( r_{*}\le 2/(3\kappa L)\) and \(\Vert x_*-x\Vert < r_{*}\), we can verify that \(\rho < r_* \). Thus, Lemma 2 implies that \(\Vert f(x_*)- f(x)- f'(x)(p-x)+ f'(x_*)(p-x_*)\Vert < b\) and \(\Vert f(x_*)- f(x)- f'(x)(q-x)+ f'(x_*)(q-x_*)\Vert < b\). Because \(e(\varnothing , \varPhi _x(q)) = 0\), we can assume without loss of generality that \(\varPhi _{x}(p)\cap B_{a}[x_*] \ne \varnothing \) for all \(p\in B_{\rho }[x_*]\). Let \(z \in \varPhi _{x}(p)\cap B_{a}[x_*]\). Then, from Definition 1 with \({{\bar{x}}}=x_*\), \({{\bar{u}}}=0\), \( x = z\), \(u=f(x_*) - f(x) - f'(x)(q - x)+ f'(x_*)(q - x_*)\), and \(G = L_{f+F}(x_*, \cdot )\), we have

Because \(z \in \varPhi _{x}(p)\) implies that \(f(x_*) - f(x) - f'(x)(p - x)+ f'(x_*)(p - x_*) \in L_{f+F}(x_*, z)\), by using the definition of distance given in (4), we obtain

Hence, combining the two last inequalities we conclude that

Taking the supremum with respect to \(z \in \varPhi _{x}(p)\cap B_{a}[x_*]\) in the last inequality and using the definition of excess given in (4), we have

Because \(\rho < r_* \le a\), we have \(e(\varPhi _{x}(p)\cap B_{\rho }[x_*], \varPhi _{x}(q)) \le e(\varPhi _{x}(p)\cap B_{a}[x_*], \varPhi _{x}(q))\). Hence, from the last inequality and the properties of the norm, we obtain

By using the fact that \(f'\) is Lipschitz continuous with constant \(L>0\), the latter inequality becomes

and thus item (ii) is proved. Because \( r_{*}\le 2/(3\kappa L)\) implies that \(\kappa L\Vert x_*-x\Vert < 1\), we can apply Theorem 1 with \(\varPhi =\varPhi _{x}\), \( \bar{x} = x_{*}\), and \(\lambda = \kappa L\Vert x_*-x\Vert \) to conclude that there exists \(y \in B_{\rho }[x_*]\), i.e., the inequality (13) holds, with that \(y\in \varPhi _{x}(y)\). To prove that \(y\in B_{r_*}(x_*)\), we use the fact that \( r_{*}\le 2/(3\kappa L)\) and (13) to conclude that

which implies the desired result. Therefore, the proof of the first part of the lemma is complete. Now, we assume that \(L_{f+F}(x_{*}, \cdot )\) is strongly metrically regular at \(x_{*}\) for 0. Suppose that there exist \({{\hat{y}}}\) and \({{\tilde{y}}}\in B_{\rho }[x_*] \subset B_{ r_{*}}(x_*)\) such that \({{\hat{y}}}\in \varPhi _{x}({{\hat{y}}})\) and \({{\tilde{y}}}\in \varPhi _{x}({{\tilde{y}}})\). We know that the mapping \(z\mapsto L_{f+F}(x_*, z)^{-1}\cap B_a[x_*]\) is single-valued on \(B_{b}[0]\), and thus the definition of \(\varPhi _{x}\) in (12) and the second part of Lemma 2 imply that \({{\hat{y}}} = \varPhi _{x}({{\hat{y}}})\) and \({{\tilde{y}}} = \varPhi _{x}({{\tilde{y}}})\). Using the definition of excess in (4), item (ii), and the fact that \(r_* \le 2/(3 \kappa L)\), we obtain

which is a contradiction. Thus, \({{\hat{y}}} = {{\tilde{y}}}\), and the proof is concluded. \(\square \)

The next lemma plays an important role in the convergence analysis. In particular, it will be used to prove the well-definedness of a sequence \(\{x_k\} \subset B_{r_*}(x_*)\cap C\) and its convergence to a solution of the problem (1).

Lemma 4

If \( \theta \ge 0\), \(x \in C \cap B_{r_*}(x_*)\backslash \{x_*\}\) and \(y\in \varPhi _{x}(y)\) satisfies (13), then it holds that

In addition, if \(\theta <1/2\), then \(P_C(y, x, \theta ) \subset B_{ r_{*}}(x_{*})\cap C\).

Proof

Take \(w\in P_C(y, x, \theta )\). Then, applying Lemma 1 with \({{\tilde{y}}} = x_*\) and \({{\tilde{x}}} = x_*\), we have

On the other hand, because \(\Vert x_*-x\Vert < r_{*}\), by applying Lemma 3 and some manipulations, we conclude that

Hence, owing to the fact that \(P_C(x_{*}, x_{*}, 0) = x_{*}\), the last inequality and (16) yield (15). The conditions (8) together with \(\theta <1/2\) imply that \((1+ \sqrt{2\theta } )[(\kappa L\Vert x_*-x\Vert )/(2(1- \kappa L\Vert x_*-x\Vert ))] + \sqrt{2\theta } <1\). Thus, it follows from (15) that

and because \(\Vert x_*-x\Vert < r_*\) we obtain that \(P_C(y, x, \theta ) \subset B_{r_*}(x_*)\). Because \(P_C(y, x, \theta )\subset C\), the last statement of the lemma follows, which concludes the proof. \(\square \)

Now, let us study the uniqueness of the solution for (1) in the neighborhood \(B_{r_*}(x_*).\)

Lemma 5

If the mapping \(L_{f+F}(x_{*}, \cdot )\) is strongly metrically regular at \(x_{*}\) for 0, then \(x_*\) is the unique solution of (1) in \(B_{r_{*}}(x_*)\).

Proof

Let \({{\hat{x}}}\) be a solution of (1) in \(B_{r_{*}}(x_*)\). Thus, \(\Vert {{\hat{x}}} - x_*\Vert < r_*\le \sqrt{2b/3L}\), which together with the first part of Lemma 2 implies that

Moreover, considering that \(x_*\in B_{r_*}[x_*]\) and \(r_* \le a\), we can apply Definition 1 to conclude that

Thus, owing to the fact that \(0 \in L_{f+F}(x_*, x_*)\), we can apply the first inequality in (17) and the definition of distance in (4) to conclude that

On the other hand, because the mapping \(L_{f+F}(x_{*}, \cdot )\) is strongly metrically regular at \(x_{*}\) for 0, the mapping \( z\mapsto L_{f+F}(x_*, z)^{-1}\cap B_a[x_*]\) is single-valued on \(B_{b}[0]\). Furthermore, we know that \(0\in f({{\hat{x}}}) + F({{\hat{x}}})= f({{\hat{x}}}) - f(x_*) - f'(x_*)({{\hat{x}}} - x_*) + L_{f+F}(x_*, {{\hat{x}}})\). Hence, we conclude that \({{\hat{x}}} = L_{f+F}(x_*, -f({\hat{x}}) + f(x_*)+f'(x_*)({{\hat{x}}} - x_*))^{-1}\), and we obtain from the last inequality that

If \(\Vert {{\hat{x}}}-x_*\Vert \ne 0\), then last inequality implies that \(\Vert {{\hat{x}}} - x_*\Vert \ge 2/(\kappa L)> 2/(3\kappa L)\ge r_{*}\), which is absurd, because \(\Vert {{\hat{x}}} - x_*\Vert < r_*\). Therefore, \(\Vert {{\hat{x}}}-x_*\Vert =0\), and thus \(x_*\) is the unique solution of (1) in \(B_{r_*}(x_*).\)\(\square \)

Our final task in this section is to prove Theorem 2. The proof comprises a convenient combination of Lemmas 3, 4, and 5.

3.1 Proof of Theorem 2

Proof

First, we will show by induction on k that there exists a sequence \(\{x_k\}\) generated by the Newton-InexP method solving (1), associated to \(\{\theta _k\}\) and starting in \(x_0\), which satisfies the following two conditions:

for all \(k = 0, 1, \ldots \). Take \(x_{0} \in C \cap B_{r_{*}}(x_*)\backslash \{x_*\}\) and \(k = 0\). Because \(\Vert x_* - x_0\Vert < r_{*}\), applying the first part of Lemma 3 with \(x=x_0\), we obtain that there exists \(y_0\in \varPhi _{x_0}(y_0)\) such that \(y_0 \in B_{ r_{*}}(x_{*})\). If \(y_0\in C\), then \(x_1 = y_0\in B_{ r_{*}}(x_{*}) \cap C\), and by using (13) we can conclude that (18) holds for \(k=0\). Otherwise if \(y_0\notin C\), then take \(x_{1} \in P_C(y_0, x_0, \theta _0)\). Moreover, by using the first part of Lemma 4 with \(x=x_0\), we obtain that (18) holds for \(k=0\). Furthermore, the conditions (8) imply that \((1+ \sqrt{2\theta _0} )[(\kappa L\Vert x_*-x_0\Vert )/(2(1- \kappa L\Vert x_*-x_0\Vert ))] + \sqrt{2\theta _0} <1\), and so the second part of Lemma 4 give us that \(x_1 \in B_{ r_{*}}(x_{*}) \cap C\). Therefore, there exits \(x_1\) satisfying (18) for \(k=0\). Assume for induction that the two assertions in (18) hold for \(k=0, 1, \ldots , j-1\). Because \(x_{j} \in B_{r_{*}}(x_*)\cap C\), we can apply Lemma 3 with \(x = x_{j}\) to conclude that there exists \(y_{j}\in \varPhi _{x_j}(y_{j})\) such that \(y_j\in B_{ r_{*}}(x_{*})\). If \(y_j\in C\), then \(x_{j+1}=y_j\in B_{ r_{*}}(x_{*}) \cap C\), and (13) implies that (18) holds for \(k=j\). Otherwise, if \(y_j\notin C\) then take \(x_{j+1} \in P_C(y_j, x_j, \theta _j)\). Hence, using first part of Lemma 4 we obtain that the inequality in (18) holds for \(k=j\). Because (8) implies that \((1+ \sqrt{2\theta _j} )[(\kappa L\Vert x_*-x_j\Vert )/(2(1- \kappa L\Vert x_*-x_j\Vert ))] + \sqrt{2\theta _j} <1\), the second part of Lemma 4 yields that \(x_{j+1} \in B_{ r_{*}}(x_{*}) \cap C\). Thus, there exists \(x_{j+1}\) satisfying (18) for \(k=j\), and the induction step is complete. Therefore, there exists a sequence \(\{x_k\}\) generated by the Newton-InexP method solving (1), associated to \(\{\theta _k\}\) and starting in \(x_0\), and it satisfies the two conditions in (18). Now, we proceed to prove that the sequence \(\{x_k\}\) converges to \(x_*\). Indeed, because \(\Vert x_*-x_k\Vert < r_*\) for all \(k = 0, 1, \ldots \), \({{\tilde{\theta }}} = \sup _k\theta _k < 1/2\) and \(r_{*}\le [2(1-\sqrt{2{\tilde{\theta }}})]/[(3-\sqrt{2{{\tilde{\theta }}}})\kappa L]\), we conclude from (18) that \( \Vert x_{*} - x_{k+1}\Vert < \Vert x_*-x_k\Vert . \) This implies that the sequence \(\{\Vert x_* - x_k\Vert \}\) converges. Let us say that \(t_*=\lim _{k\rightarrow +\infty }\Vert x_*-x_k\Vert \le \Vert x_*-x_{0}\Vert <r_{*}\). Because \(\{x_{k}\}\subset B_{r_{*}}(x_*)\cap C\), we can conclude that \(t_* <r_*\). On the other hand, by combining the inequality in (18) with the second condition in (8), we obtain

for all \(k = 0, 1, \ldots \). Thus, taking the limit in this inequality as k goes to \(+ \infty \), we have

If \(t_*\ne 0\), we obtain from the last inequality that \( [2(1-\sqrt{2{{\tilde{\theta }}}})]/[(3-\sqrt{2{{\tilde{\theta }}}}) \kappa L]\le t_*\), which contradicts the first assertion in (8), because \( t_*< r_*\). Hence, \(t_*= 0\), and consequently the sequence \(\{x_k\}\) converges to \(x_*\). In particular, if \(\lim _{k \rightarrow +\infty } \theta _k = 0\), then by taking the limit in (9) as k goes to \(+ \infty \) we obtain \(\limsup _{k\rightarrow +\infty } [\Vert x_{*} - x_{k+1}\Vert /\Vert x_{*} - x_{k}\Vert ] =0\), i.e., the sequence \(\{x_k\}\) converges to \(x_*\) superlinearly. On the other hand, if \(\theta _k = 0\) for all \(k = 0, 1,\ldots \), then \({{\tilde{\theta }}} = 0\). Hence, from (9) and the first equality in (8), we have

for all \(k = 0, 1, \ldots \), and consequently \(\{x_k\}\) converges to \(x_*\)Q-quadratically. Furthermore, if the mapping \(L_{f+F} (x_*, \cdot )\) is strongly metrically regular at \(x_*\) for 0, then Lemma 5 implies that \(x_*\) is the unique solution of (1) in \(B_{r_*}(x_*)\). To prove the last statement of the theorem, take \(x_0 \in C \cap B_{ r_{*}}(x_*)\backslash \{x_*\}\). Then, the second part of Lemma 3 implies that there exist a unique\(y_0 \in B_{\rho _0}(x_*)\) such that \(y_0\in \varPhi _{x_0}(y_{0})\), i.e., there exists a unique solution \(y_0\) of (5) for \(k=0\), where

Furthermore, Lemma 4 implies that every \(x_{1} \in P_C(y_0, x_0, \theta _0)\) satisfies (9) for \(k=0\). Thus, proceeding by induction we can prove that the every sequence \(\{x_k\}\) generated by the Newton-InexP method to solve (1), associated to \(\{\theta _k\}\) and starting in \(x_0\), satisfies (9), and by using similar argument as above we can prove that such a sequence converges to \(x_*\). Therefore, the proof is complete. \(\square \)

4 Application

In this section, we present an application of Theorem 2 when F is a maximal monotone operator. To this end, we begin by presenting a class of mappings f and F for which the set-valued mapping defined in (3) is strongly metrically regular. The next result is a version of [18, Remark 4] for strongly metrically regular mappings, and its proof will be included here for the sake of completeness. See also [41, Lemma 2.2].

Proposition 1

Let \(f: {{\mathbb {R}}}^n \rightarrow {{\mathbb {R}}}^n \) be a continuously differentiable function and \(F: {{\mathbb {R}}}^n \rightrightarrows {{\mathbb {R}}}^n \) be a maximal monotone mapping. Assume that \({x_*} \in {{\mathbb {R}}}^n\) and \(\beta >0\) satisfy the following condition:

Then, \(\text{ rge }\,L_{f+F}({x_*}, \cdot )= {{\mathbb {R}}}^n \), and for any \( {{\bar{x}}} \in {{\mathbb {R}}}^n\) and \({\bar{u}} \in L_{f+F}({x_*}, {{\bar{x}}} )\), the set-valued mapping \(L_{f+F}({x_*},\cdot ): {\mathbb R}^n \rightrightarrows {{\mathbb {R}}}^n \) is strongly metrically regular at \( {{\bar{x}}} \in {{\mathbb {R}}}^n\) for \({\bar{u}} \in {\mathbb R}^n\), with constants \(\kappa = 1/\beta \), \(a=+\infty \), and \(b=+\infty \).

Proof

First, we will prove that \(\text{ rge }\,L_{f+F}({x_*}, \cdot )= {{\mathbb {R}}}^n\). For this, let \(0< \mu < 2\beta /\Vert f'({x_*})\Vert ^2\), take \({{\hat{x}}}\in {{\mathbb {R}}}^n\), and define the mapping \({\mathbb R}^n\ni y \mapsto \varPhi (y):=(I+\mu F)^{-1}(\mu {{\hat{x}}} + y -\mu [f({x_*}) +f'({x_*})(y-{x_*})])\). Because F is a maximal monotone mapping, according to [15, Theorem 6C.4, p. 387] the mapping \((I+\mu F)^{-1}\) is single-valued and Lipschitz continuous on \({{\mathbb {R}}}^n\) with constant 1. Thus, for any \(y, z \in {{\mathbb {R}}}^n\) we have

Using the inequality (19) in the last relation, we obtain that

Considering that \(0< \mu < 2\beta /\Vert f'({x_*})\Vert ^2\), we have \(\lambda ^2:=\left( 1-2\beta \mu +\mu ^2\Vert f'({x_*})\Vert ^2\right) <1\). Thus, we conclude that \(\Vert \varPhi (y)-\varPhi (z)\Vert \le \lambda \Vert y - z\Vert \) for all y, \(z \in {{\mathbb {R}}}^n\). Therefore, by the Banach contraction principle (see [15, Theorem 1A.3, p. 17]), there exists \(x \in {\mathbb R}^n\) such that \(x = \varPhi (x)\), which implies that \({{\hat{x}}} = L_{f+F}({x_*}, x)\), and thus \(\text{ rge }\,L_{f+F}({x_*}, \cdot )= {{\mathbb {R}}}^n \). We proceed to prove that the graph of \(L_{f+F}({x_*}, \cdot )\) is locally closed at \(({\bar{x}}, {\bar{u}})\), i.e., there exists a neighborhood \({\mathcal {U}}\) of \(({\bar{x}}, \bar{u})\) such that the intersection \(\text{ gph }\,L_{f+F}({x_*}, \cdot )\cap {\mathcal {U}}\) is closed. Indeed, let \(\{({\bar{x}}_k, \bar{u}_k)\} \subset \text{ gph }\,L_{f+F}({x_*}, \cdot )\cap {\mathcal {U}}\) be a sequence such that \(\lim _{k \rightarrow + \infty }{\bar{x}}_k = {\bar{x}}\) and \(\lim _{k \rightarrow + \infty }{\bar{u}}_k = {\bar{u}}\). By the definition of the graph of a set-valued mapping, we have \({\bar{u}}_k \in L_{f+F}({x_*}, {\bar{x}}_k)\) for \(k=0,1, \ldots \). Hence, by using Definition (3) we obtain \( {\bar{u}}_k \in f({x_*}) + f'({x_*})({\bar{x}}_k - {x_*}) + F({\bar{x}}_k)\) for \(k=0,1, \ldots \). Because F is maximal monotone, according to [4, Proposition 6.1.3, p. 185] it has closed graph, and thus we can take the limit in the last inclusion to conclude that \({\bar{u}} \in f({x_*}) + f'({x_*})({\bar{x}} - {x_*}) + F({\bar{x}})\). This implies that \({\bar{u}} \in L_{f+F}({x_*}, {\bar{x}})\), and the desired statement follows. Now, we will prove that the mapping \( {{\mathbb {R}}}^n \ni x \mapsto L_{f+F}({x_*}, x )\) is metrically regular at \({\bar{x}} \in {{\mathbb {R}}}^n\) for \({\bar{u}} \in {{\mathbb {R}}}^n\) with constants \(\kappa = 1/\beta \), \(a=+\infty \), and \(b=+\infty \). For this, take arbitrary \(x, u\in {{\mathbb {R}}}^n\). Because \(\text{ rge }\,L_{f+F}({x_*}, \cdot )= {{\mathbb {R}}}^n\), there exists \(y\in {{\mathbb {R}}}^n\) such that \(u\in L_{f+F}({x_*}, y)\). Thus, we can take \(w_y\in F(y)\) such that \(u = f({x_*}) + f'({x_*})(y - {x_*})+ w_y\). Moreover, for every arbitrary \(v\in L_{f+F}({x_*}, x)\), we can find \(w_x\in F(x)\) such that \(v = f({x_*}) + f'({x_*})(x - {x_*})+ w_x\). Thus, the monotonicity of F implies that

On the other hand, (19) yields that \(\beta \Vert x - y\Vert ^2 \le \langle f'({x_*})(x - y), x - y\rangle \), which combined with the last inequality gives

Because \(u\in L_{f+F}({x_*}, y)\), it follows that if \(x=y\) then \(x\in L_{f+F}({x_*}, u )^{-1}\). In this case, we can conclude that \(d\left( x, L_{f+F}({x_*}, u)^{-1}\right) =0\le d\left( u, L_{f+F}({x_*}, x) \right) /\beta \). Thus, we assume that \(x\ne y\), and so

Because \(u\in L_{f+F}({x_*}, y)\), the definition of distance given in (4) and the latter inequality imply that

To conclude the proof, it remains to prove that the mapping \( z\mapsto L_{f+F}(x_*, z )^{-1}\) is single-valued from \({{\mathbb {R}}}^n \) to \({{\mathbb {R}}}^n\). Take \(z\in {{\mathbb {R}}}^n\), \(x_1\in L_{f+F}(x_*, z )^{-1}\), and \(x_2\in L_{f+F}(x_*, z )^{-1}\). For \(i=1,2,\) find \(v_i\in F(x_i)\) such that \(z= f(x_*)+f'(x_*)(x_i-x_*)+ v_i\). Thus, (19) and the monotonicity of F imply that

Yielding that \(x_1=x_2\), so that \(L_{f+F}(x_*, \cdot )^{-1}\) is single-valued. Therefore, the proof is concluded. \(\square \)

From now on, \( N_{D}\)denotes the normal cone mapping of a closed convex setD. In the following result we present a particular instance of Theorem 2.

Theorem 3

Let C and D be convex sets in \({{\mathbb {R}}}^n\) such that C is closed and \(C\subset D\), and let \(h: {{\mathbb {R}}}^n \rightarrow {\mathbb R}^n\) be a continuously differentiable function. Assume that \(x_*\in C\), \(h(x_*) + N_{D}(x_*)\ni 0\), and there exist \(\beta >0\) and \(L > 0\) such that

Let \(\{\theta _k\}\subset [0, 1/2)\) be such that \({\tilde{\theta }}:=\sup _{k} \theta _k< 1/2\) and \(r_{*}:= [2(1-\sqrt{2{\tilde{\theta }}})\beta ]/[(3-\sqrt{2{{\tilde{\theta }}}}) L]\). Then, every sequence \(\{x_k\}\) generated by the Newton-InexP method to solve (1), associated to \(\{\theta _k\}\) and starting in \(x_0 \in C \cap B_{ r_{*}}(x_*)\backslash \{x_*\}\), converges to \(x_*\), and the rate of convergence is as follows:

As a consequence, if \(\lim _{k\rightarrow +\infty }\theta _k=0\), then \(\{x_k\}\) converges to \(x_*\) superlinearly. In particular, if \( \theta _k=0\) for all \(k=0, 1 \ldots \), then

and the sequence \(\{x_k\}\) converges to \(x_*\)Q-quadratically.

Proof

Because the normal cone mapping \(N_D\) is maximal monotone (see [4, Corollary 6.3.1, p. 192]), we can use Proposition 1 to obtain that the set-valued mapping \(L_{h + N_D}({x_*},\cdot ): {{\mathbb {R}}}^n \rightrightarrows {\mathbb R}^n \) is strongly metrically regular at \(x_* \in {{\mathbb {R}}}^n\) for \(0 \in {{\mathbb {R}}}^n\), with constants \(\kappa = 1/\beta \), \(a=+\infty \), and \(b=+\infty \). On the other hand, because \(x_* \in C\) is such that \(h(x_*) + N_{D}(x_*)\ni 0\), h has a Lipschitz continuous derivative on \( {{\mathbb {R}}}^n\) and \(x_0 \in C \cap B_{ r_{*}}(x_*)\backslash \{x_*\}\). Therefore, we can apply Theorem 2 to obtain the desired result. \(\square \)

We end this section by presenting two examples from the literature that can be seen as particular cases of constrained generalized equations. We begin by presenting the so-called Constrained Variational Inequality Problem (CVIP). See, for example, [9].

Example 1

Let U and \(\varOmega \) be closed convex sets in \({\mathbb {R}}^n\) and \(h: {{\mathbb {R}}}^n \rightarrow {{\mathbb {R}}}^n\) be a continuous function. The CVIP is defined as:

The problem (20) can be rewritten equivalently as: Find \(x_* \in U \cap \varOmega \) such that \(h(x_*) + N_{U}(x_*) \ni 0\). Then, (20) can be seen as a special instance the constrained generalized equation (1). Observe that the classical variational inequality problem it is not equivalent to the above CVIP, since in (20) the point \(x_*\) must belongs to \(U \cap \varOmega \).

In the next example, we describe the Split Variational Inequality Problem (SVIP), which can be rewritten as special case of CVIP. See [9, 25] for an extensive discussion on this problem.

Example 2

Let \(U\subset {\mathbb {R}}^n\) and \( \varOmega \subset {\mathbb {R}}^m\) be nonempty, closed convex sets, and \(A:{\mathbb {R}}^n \rightarrow {\mathbb {R}}^m\) be a linear operator. Given functions \(f:{\mathbb {R}}^n \rightarrow {\mathbb {R}}^n\) and \(g:{\mathbb {R}}^m \rightarrow {\mathbb {R}}^m\), the SVIP is formulated as follows: Find a point \( x_* \in U\) such that

and such that the point \(y_* = Ax_* \in \varOmega \) satisfies

By taking \({\mathbb {R}}^{nm}:={\mathbb {R}}^n \times {\mathbb {R}}^m\), \(D:= U\times \varOmega \) and \(V:=\{w=(x,y)\in {\mathbb {R}}^{n m} :~ Ax=y\}\) the SVIP is equivalent to the following CVIP:

where \(w = (x,y)\) and \(h: {\mathbb {R}}^{nm} \rightarrow {\mathbb {R}}^{nm}\) is defined by \(h(x,y) := (f(x), g(y))\). See [9, Lemma 5.1]. Therefore, from Example 1 and (21), SVIP is equivalent to the following constrained generalized equation: Find \( w_* \in D \cap V\) such that \(h(w_*) + N_{D}(w_*) \ni 0\), where \(h: {\mathbb {R}}^{nm} \rightarrow {\mathbb {R}}^{nm}\) is defined by \(h(x,y) = (f(x), g(y))\), \(D:= U\times \varOmega \) and \(V:=\{w=(x,y)\in {\mathbb {R}}^{n m} :~ Ax=y\}\).

It is worth noting that SVIP is quite general and includes several problems as special cases. For instance, Split Minimization Problem and Common Solutions to Variational Inequalities Problem. See, for example [1, 9, 10, 34].

5 Conclusions

In this paper, we proposed a new method for solving constrained generalized equations called the Newton-InexP method. Essentially, we expanded the classical Newton’s method for solving generalized equations with the additional of a strategy to obtain a feasible inexact projection and assure feasibility. An analysis of the convergence of the method was established under metric regularity and strong metric regularity. It is worth emphasizing the connection between metric regularity and the Aubin property in [15, Theorem 3E.7, p. 178]. This allows the main result of the paper to be equivalently formulated as a statement about the Aubin property.

References

Abbas, M., AlShahrani, M., Ansari, Q.H., Iyiola, O.S., Shehu, Y.: Iterative methods for solving proximal split minimization problems. Numer. Algorithms 78(1), 193–215 (2018)

Aragón Artacho, F.J., Belyakov, A., Dontchev, A.L., López, M.: Local convergence of quasi-Newton methods under metric regularity. Comput. Optim. Appl. 58(1), 225–247 (2014)

Aragón Artacho, F.J., Dontchev, A.L., Gaydu, M., Geoffroy, M.H., Veliov, V.M.: Metric regularity of Newton’s iteration. SIAM J. Control Optim. 49(2), 339–362 (2011)

Auslender, A., Teboulle, M.: Asymptotic Cones and Functions in Optimization and Variational Inequalities. Springer Monographs in Mathematics. Springer, New York (2003)

Behling, R., Fischer, A., Herrich, M., Iusem, A., Ye, Y.: A Levenberg–Marquardt method with approximate projections. Comput. Optim. Appl. 59(1–2), 5–26 (2014)

Bellavia, S., Morini, B.: Subspace trust-region methods for large bound-constrained nonlinear equations. SIAM J. Numer. Anal. 44(4), 1535–1555 (2006)

Bertsekas, D.P.: Nonlinear Programming. Athena Scientific Optimization and Computation Series, 2nd edn. Athena Scientific, Belmont (1999)

Bonnans, J.F.: Local analysis of Newton-type methods for variational inequalities and nonlinear programming. Appl. Math. Optim. 29(2), 161–186 (1994)

Censor, Y., Gibali, A., Reich, S.: Algorithms for the split variational inequality problem. Numer. Algorithms 59(2), 301–323 (2012)

Censor, Y., Gibali, A., Reich, S., Sabach, S.: Common solutions to variational inequalities. Set-Valued Var. Anal. 20(2), 229–247 (2012)

Daniel, J.W.: Newton’s method for nonlinear inequalities. Numer. Math. 21, 381–387 (1973)

Dontchev, A.L.: Local analysis of a Newton-type method based on partial linearization. In: The Mathematics of Numerical Analysis (Park City, UT, 1995), Lectures in Applied Mathematics, vol. 32, pp. 295–306. American Mathematical Society, Providence (1996)

Dontchev, A.L.: Uniform convergence of the Newton method for Aubin continuous maps. Serdica Math. J. 22(3), 283–296 (1996)

Dontchev, A.L., Rockafellar, R.T.: Convergence of inexact Newton methods for generalized equations. Math. Program. 139(1–2, Ser. B), 115–137 (2013)

Dontchev, A.L., Rockafellar, R.T.: Implicit Functions and Solution Mappings: A View from Variational Analysis. Springer Series in Operations Research and Financial Engineering, 2nd edn. Springer, New York (2014)

Ferreira, O.P.: A robust semi-local convergence analysis of Newton’s method for cone inclusion problems in Banach spaces under affine invariant majorant condition. J. Comput. Appl. Math. 279, 318–335 (2015)

Ferreira, O.P., Silva, G.N.: Kantorovich’s theorem on Newton’s method for solving strongly regular generalized equation. SIAM J. Optim. 27(2), 910–926 (2017)

Ferreira, O.P., Silva, G.N.: Local convergence analysis of Newton’s method for solving strongly regular generalized equations. J. Math. Anal. Appl. 458(1), 481–496 (2018)

Ferris, M.C., Pang, J.S.: Engineering and economic applications of complementarity problems. SIAM Rev. 39(4), 669–713 (1997)

Frank, M., Wolfe, P.: An algorithm for quadratic programming. Nav. Res. Log. Q. 3, 95–110 (1956)

Fukushima, M., Luo, Z.Q., Tseng, P.: Smoothing functions for second-order-cone complementarity problems. SIAM J. Optim. 12(2), 436–460 (electronic) (2001/2002)

Gonçalves, M.L.N., Oliveira, F.R.: An inexact newton-like conditional gradient method for constrained nonlinear systems. Appl. Numer. Math. 132, 22–34 (2018)

Gonçalves, M.L.N., Melo, J.G.: A Newton conditional gradient method for constrained nonlinear systems. J. Comput. Appl. Math. 311, 473–483 (2017)

Gould, N.I.M., Toint, P.L.: Numerical methods for large-scale non-convex quadratic programming. In: Trends in Industrial and Applied Mathematics (Amritsar, 2001), Applied Optimization, vol. 72, pp. 149–179. Kluwer Academic Publications, Dordrecht (2002)

He, H., Ling, C., Xu, H.K.: A relaxed projection method for split variational inequalities. J. Optim. Theory Appl. 166(1), 213–233 (2015)

Izmailov, A.F., Solodov, M.V.: Inexact Josephy–Newton framework for generalized equations and its applications to local analysis of Newtonian methods for constrained optimization. Comput. Optim. Appl. 46(2), 347–368 (2010)

Josephy, N.H.: Newton’s method for generalized equations and the pies energy model. Ph.D. thesis, Department of Industrial Engineering, University of Wisconsin–Madison (1979)

Kanzow, C.: An active set-type Newton method for constrained nonlinear systems. In: Complementarity: Applications, Algorithms and Extensions (Madison, WI, 1999), Applied Optimization, vol. 50, pp. 179–200. Kluwer Academic Publications, Dordrecht (2001)

Kimiaei, M.: A new class of nonmonotone adaptive trust-region methods for nonlinear equations with box constraints. Calcolo 54(3), 769–812 (2017)

La Cruz, W.: A projected derivative-free algorithm for nonlinear equations with convex constraints. Optim. Methods Softw. 29(1), 24–41 (2014)

Lan, G., Zhou, Y.: Conditional gradient sliding for convex optimization. SIAM J. Optim. 26(2), 1379–1409 (2016)

Marini, L., Morini, B., Porcelli, M.: Quasi-Newton methods for constrained nonlinear systems: complexity analysis and applications. Comput. Optim. Appl. 71(1), 147–170 (2018)

Monteiro, R.D.C., Pang, J.S.: A potential reduction Newton method for constrained equations. SIAM J. Optim. 9(3), 729–754 (1999)

Moudafi, A.: Split monotone variational inclusions. J. Optim. Theory Appl. 150(2), 275–283 (2011)

Nocedal, J., Wright, S.J.: Numerical Optimization. Springer Series in Operations Research and Financial Engineering, 2nd edn. Springer, New York (2006)

Robinson, S.M.: Extension of Newton’s method to nonlinear functions with values in a cone. Numer. Math. 19, 341–347 (1972)

Robinson, S.M.: Generalized equations and their solutions, Part I: Basic theory. Math. Program. Stud. 10, 128–141 (1979)

Robinson, S.M.: Strongly regular generalized equations. Math. Oper. Res. 5(1), 43–62 (1980)

Robinson, S.M.: Generalized equations and their solutions, Part II: applications to nonlinear programming. Math. Program. Stud. 19, 200–221 (1982)

Robinson, S.M.: Generalized equations. In: Mathematical Programming: The State of the Art (Bonn, 1982), pp. 346–367. Springer, Berlin (1983)

Uko, L.U.: Generalized equations and the generalized Newton method. Math. Program. 73(3, Ser. A), 251–268 (1996)

Vanderbei, R.J.: Linear Programming: Foundations and Extensions. International Series in Operations Research and Management Science, vol. 4. Kluwer Academic Publishers, Boston (1996)

Zhang, Y., Zhu, D.T.: Inexact Newton method via Lanczos decomposed technique for solving box-constrained nonlinear systems. Appl. Math. Mech. (English Ed.) 31(12), 1593–1602 (2010)

Acknowledgements

The authors would like to thank the anonymous referees for their constructive comments, which have helped to substantially improve the presentation of the paper. In particular, we thank one of the referees for drawing our attention to the use of general procedures to find a feasible inexact projection, rather than a specific one as we were using in our first version. The authors were supported in part by CNPq Grants 305158/2014-7 and 302473/2017-3, FAPEG/GO and CAPES.

Author information

Authors and Affiliations

Corresponding author

Rights and permissions

About this article

Cite this article

de Oliveira, F.R., Ferreira, O.P. & Silva, G.N. Newton’s method with feasible inexact projections for solving constrained generalized equations. Comput Optim Appl 72, 159–177 (2019). https://doi.org/10.1007/s10589-018-0040-0

Received:

Published:

Issue Date:

DOI: https://doi.org/10.1007/s10589-018-0040-0

Keywords

- Constrained generalized equations

- Newton’s method

- Feasible inexact projection

- Lipschitz continuity

- Metric regularity

- Strong metric regularity

- Local convergence