Abstract

This paper is concerned with a class of neutral type recurrent neural networks with time-varying delays, distributed delay and D operator on time–space scales which unify the continuous-time and the discrete-time recurrent neural networks under the same framework. Some sufficient conditions are given for the existence and the global exponential stability of the pseudo almost periodic solution by using inequality analysis techniques on time scales, fixed point theorem and the theory of calculus on time scales. An example is given to show the effectiveness of the derived results via computer simulations.


1 Introduction

During the last few decades, artificial neural networks (NNs) have been used to simulate the structure and function of biological neural networks [1]. Recently, artificial NNs have become a prominent research topic owing to their applications and potential in various fields, such as multilayer neural networks for pattern recognition [2], memristor-based echo state networks for time-series forecasting [1], and concatenated generative adversarial networks for video generation [3].

In particular, recurrent NNs are powerful and popular artificial NNs that have been widely applied in many fields since the pioneering work of Hopfield [4]. In [4], Hopfield considered a class of recurrent artificial neural networks, which can be described as follows:

where \(i = 1,2,\dots ,n;\) \(x_i(t)\) denotes the potential of the ith neuron at time t; \(C_i\) and \(R_i\) are positive constants denoting the neuron amplifier input capacitance and resistance, respectively; \(t_{ij}\) is the synaptic interconnection strength; \(J_i\) is the constant input from outside of the network; \(f_j(x_j)\) is the activation function. In terms of electrical circuits, \(f_j(x_j)\) represents the output characteristic of an amplifier with negligible response time. For a more detailed description of the neural networks (1), the reader is referred to [4]. Denote \(e_i=\frac{1}{R_iC_i}\), \(b_{ij}=\frac{t_{ij}}{C_i}\) and \(I_i=\frac{J_i}{C_i}\). Then the neural networks (1) simplify to

This model has received considerable attention owing to its wide applications in areas such as electrical engineering, mechanics, control, parallel computation and automation [5,6,7,8,9,10,11,12].
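
As a sketch of how the simplification works, assume that (1) has the standard circuit form of Hopfield's model. This form is our reading of the symbols defined above, not a quotation of [4]. Dividing the circuit equation by \(C_i\) and applying the substitutions \(e_i=\frac{1}{R_iC_i}\), \(b_{ij}=\frac{t_{ij}}{C_i}\), \(I_i=\frac{J_i}{C_i}\) gives

$$\begin{aligned} C_i \frac{\mathrm {d}x_i(t)}{\mathrm {d}t}&= -\frac{x_i(t)}{R_i} + \sum _{j=1}^{n} t_{ij} f_j(x_j(t)) + J_i \\ \Longrightarrow \quad \frac{\mathrm {d}x_i(t)}{\mathrm {d}t}&= -e_i x_i(t) + \sum _{j=1}^{n} b_{ij} f_j(x_j(t)) + I_i,\quad i=1,2,\dots ,n. \end{aligned}$$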

In addition, the presence of time delays, especially time-varying delays, makes the dynamic behavior more complex and may cause divergence, oscillation, instability, chaos or other poor performance in NNs, which is usually harmful to applications of NNs [13, 14]. Therefore, the stability analysis of delayed NNs has become an important research topic and has attracted much attention in the literature [15,16,17,18,19]. For example, Aouiti et al. [19] studied the following recurrent NNs with time-varying coefficients and mixed delays:

On the other hand, neutral-type phenomena arise in the study of population dynamics, automatic control, etc. [20]. Hence, the dynamic behaviors of different classes of recurrent NNs with neutral type delays were investigated in [5, 20,21,22,23]. It should be mentioned that all neutral type recurrent NN models considered in the above references can be classified into two types:

- (i) Non-operator-based neutral functional differential equations (NOBNFDEs) [24, 25].

- (ii) D-operator-based neutral functional differential equations (DOBNFDEs) [26,27,28,29,30].

As is well known from the theory of functional differential equations, DOBNFDEs may have more practical significance than NOBNFDEs in many applications of NN dynamics [27]. To account for complex neural reactions, neutral type recurrent NNs with D-operator may be described by the following neutral functional differential equations [28,29,30]:

and criteria ensuring the existence of periodic solutions for system (3) are established in [28].

In addition, the theory of time–space scales was first introduced by Hilger [31] in order to unify discrete-time and continuous-time calculus. The books by Bohner and Peterson [32] and Agarwal [33] organize and summarize much of the time–space scale calculus. The theory of time–space scales has been successfully applied to mathematical models of real processes in population dynamics, physics, economics, biotechnology and so on. Since then, many works have investigated the dynamics of NNs on time scales [34,35,36,37, 39, 40, 42]. In [34], the authors studied the global exponential stability of the equilibrium point for a class of delayed bidirectional associative memory (BAM) neural networks on time–space scales. The authors of [35] studied the pseudo almost periodic solutions of the following neutral type high-order Hopfield NNs with time-varying delays and leakage delays on time–space scales:

The concept of pseudo almost periodicity (PAP), which is the central subject of our work, was introduced and studied by Zhang [38]. It is well known that PAP solutions are more general and more complicated than periodic and almost periodic solutions [6, 24, 35, 42]. In [42], the authors studied the existence and global exponential stability of pseudo almost periodic solutions for the following neutral delay BAM neural networks with time-varying delay in leakage terms:

Inspired by the above discussions, in this paper, we propose a class of neutral type recurrent NNs with time-varying delays, distributed delay and D-operator on time–space scales:

where \(i,j=1,2,\dots ,n\); n denotes the number of neurons in the layers; \({\mathbb {T}}\) represents a translation invariant time scale; \(x_i(t)\) denotes the activation of the \(i\mathrm{th}\) neuron at time t; \(a_i(.)>0\) is the rate at which the \(i\mathrm{th}\) neuron resets its potential to the resting state in isolation when it is disconnected from the network and the external inputs at time t; \(c_{ij}(t)\), \(b_{ij}(t)\), \(d_{ij}(t)\) and \(p_i(t)\) are the elements of the feedback template and feed-forward template at time t; \(f_j\), \(g_j\) and \(h_j\) are the activation functions; \(\tau (t)\) and \(r_i(t)\) are transmission delays at time t and satisfy \(t-\tau (t)\in {\mathbb {T}}\) and \(t-r_i(t)\in {\mathbb {T}}\) for \(t\in {\mathbb {T}}\); \(N_{ij}(t)\) is the delay kernel at time t; \(I_i(t)\) denotes the input of the \(i\mathrm{th}\) neuron at time t.

We should point out that:

For each interval J of \({\mathbb {R}}\), we denote by \(J_{{\mathbb {T}}}= J \bigcap {\mathbb {T}}\).

The initial conditions associated with system (4) are of the form:

where \(\varphi (.)\) denotes a real-valued bounded right-dense continuous function defined on \((-\infty ,0]_{{\mathbb {T}}}.\)

Throughout this paper, for \(i,j = 1,2,\dots ,n\), it will be assumed that \(a_i\) and \(r_i\) are almost periodic on \({\mathbb {T}}\), that \(c_{ij}\), \(b_{ij}\), \(d_{ij}\), \(p_i\) and \(I_{i}\) are pseudo almost periodic functions on \({\mathbb {T}}\), and that there exist positive constants \(c^+_{ij}\), \(b^+_{ij}\), \(d^+_{ij}\), \(p^+_{i}\), \(r^+_{i}\), \(I^+_{i}\) such that

We also assume that the following conditions \(\mathbf {(H_1)}\)–\(\mathbf {(H_4)}\) hold.

- \(\mathbf {(H_1)}\): The functions \(f_j,\;g_j,\;h_j\in \mathrm {C}({\mathbb {R}},{\mathbb {R}})\) and, for each \(j \in \{1,2,\dots ,n\}\), there exist nonnegative constants \(L^{f}_{j}\), \(L^{g}_{j}\) and \(L^{h}_{j}\) such that

$$\begin{aligned} f_{j}(0)= & {} 0,\quad \mid f_{j}(u)- f_{j}(v)\mid \le L^{f}_{j} \mid u-v \mid , \\ g_{j}(0)= & {} 0,\quad \mid g_{j}(u)- g_{j}(v)\mid \le L^{g}_{j} \mid u-v \mid , \\ h_{j}(0)= & {} 0,\quad \mid h_{j}(u)- h_{j}(v)\mid \le L^{h}_{j} \mid u-v \mid . \end{aligned}$$

- \(\mathbf {(H_2)}\): For \(i,j \in \{1,2,\dots ,n\}\), the delay kernel \(N_{ij} :[0, \infty )_{{\mathbb {T}}} \longrightarrow [0, \infty )\) is continuous, and there exist nonnegative constants \(N^{+}_{ij}\) such that

$$\begin{aligned} N^{+}_{ij}= \int _{0}^{\infty }N_{ij}(s) \nabla s. \end{aligned}$$

- \(\mathbf {(H_3)}\): For all \(1\le i \le n\), \(a_i\in \mathrm {C}({\mathbb {T}},{\mathbb {R}})\) with \(a_i\in \mathfrak {R}_v^+\), and \(a_i^->0,\) where \(\mathfrak {R}_v^+\) denotes the set of positively regressive functions from \({\mathbb {T}}\) to \({\mathbb {R}}\).

- \(\mathbf {(H_4)}\): Assume that

$$\begin{aligned} r=\max _{1\le i\le n}\bigg \{p_i^+ + \frac{1}{a_i^-} \bigg (a_i^+p_i^++ \sum _{j=1}^{n} (c_{ij}^+ L_{j}^{f} + b^+_{ij} L_{j}^{g}+d_{ij}^+N_{ij}^+ L_{j}^{h} )\bigg )\bigg \}<1. \end{aligned}$$

Remark 1

If \({\mathbb {T}}={\mathbb {R}}\), then (4) reduces to the following form

If \({\mathbb {T}}={\mathbb {Z}}\), then (4) reduces to the following form

Remark 2

To the best of our knowledge, this is the first study of the PAP solutions of system (4). Since it is a \(\nabla \)-dynamic system on time–space scales, the results obtained in [35, 42] concerning the \(\nabla \)-dynamic systems cannot be directly applied to system (4). Besides, although papers [39, 40] deal with \(\nabla \)-dynamic systems on time–space scales, they study the almost periodic problem, so their results also cannot be directly applied to system (4).

The rest of this paper is organized as follows. In Sect. 2, we introduce some necessary notations, definitions and fundamental properties of the space \(PAP({\mathbb {T}},{\mathbb {R}})\) and make some preparations for later sections. In Sects. 3 and 4, based on the results of the previous sections, Banach’s fixed-point theorem and \(\nabla \)-differential inequalities on time scales, we present some sufficient conditions that guarantee the existence and global exponential stability of pseudo almost periodic solutions to (4). In Sect. 5, we present an example to illustrate the feasibility and effectiveness of the results obtained in Sects. 3 and 4. Finally, conclusions and open problems are given in Sect. 6.

2 Preliminary Results

In this section, we shall first recall some basic definitions and prove some lemmas.

For convenience, we denote by \({\mathbb {R}}^n\big ({\mathbb {R}}= {\mathbb {R}}^1\big )\) the set of all n-dimensional real vectors (real numbers). For any \(x = (x_1, x_2,\dots ,x_n)^T\in {\mathbb {R}}^n,\) we let \(\{x_i\} = (x_1, x_2,\dots ,x_n)^T,\) let |x| denote the absolute-value vector given by \(|x| = \{|x_i|\},\) and define \(\Vert x\Vert = \max \nolimits _{1\le i\le n} |x_i|.\) Let \(\mathrm {BC}({\mathbb {T}},{\mathbb {R}}^n)\) denote the set of bounded and continuous functions from \({\mathbb {T}}\) to \({\mathbb {R}}^n.\) Note that \(\big (\mathrm {BC}({\mathbb {T}},{\mathbb {R}}^n),\Vert .\Vert _{\infty }\big )\) is a Banach space, where \(\Vert .\Vert _{\infty }\) denotes the sup norm

Definition 1

[41] Let \({\mathbb {T}}\) be a nonempty closed subset (time scale) of \({\mathbb {R}}\). The forward and backward jump operators \(\sigma ,\;\rho : {\mathbb {T}}\rightarrow {\mathbb {T}}\) and the graininess \(\mu : {\mathbb {T}}\rightarrow {\mathbb {R}}^+\) are defined, respectively, by

Definition 2

[41] A point \(t\in {\mathbb {T}}\) is called \(\left\{ \begin{array}{ll} \text{ left-dense } &{}\quad \mathrm{if} \,t>\inf {\mathbb {T}} \text{ and } \rho (t)=t,\\ \text{ left-scattered } &{} \quad \mathrm{if}\, \rho (t)<t,\\ \text{ right-dense }&{}\quad \mathrm{if}\, t<\sup {\mathbb {T}} \text{ and } \sigma (t)=t,\\ \text{ right-scattered }&{}\quad \mathrm{if}\,\sigma (t)>t. \end{array} \right. \)

If \({\mathbb {T}}\) has a left-scattered maximum m, then \({\mathbb {T}}^k={\mathbb {T}}{\setminus }\{m\}\); otherwise \({\mathbb {T}}^k={\mathbb {T}}\).

If \({\mathbb {T}}\) has a right-scattered minimum m, then \({\mathbb {T}}_k={\mathbb {T}}{\setminus }\{m\}\); otherwise \({\mathbb {T}}_k={\mathbb {T}}\).
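
The jump operators and the sets \({\mathbb {T}}^k\), \({\mathbb {T}}_k\) can be illustrated on a small example. The following is a minimal sketch, assuming a finite set of points stands in for the time scale and using the usual conventions \(\sigma (\max {\mathbb {T}})=\max {\mathbb {T}}\) and \(\rho (\min {\mathbb {T}})=\min {\mathbb {T}}\); the function names are hypothetical and chosen only for this illustration.

```python
from bisect import bisect_left, bisect_right


def make_time_scale(points):
    """Return a sorted list of distinct points standing in for a time scale."""
    return sorted(set(points))


def sigma(ts, t):
    """Forward jump: smallest point of ts greater than t (t itself at the maximum)."""
    k = bisect_right(ts, t)
    return ts[k] if k < len(ts) else t


def rho(ts, t):
    """Backward jump: largest point of ts smaller than t (t itself at the minimum)."""
    k = bisect_left(ts, t)
    return ts[k - 1] if k > 0 else t


def mu(ts, t):
    """Forward graininess mu(t) = sigma(t) - t."""
    return sigma(ts, t) - t


def nu(ts, t):
    """Backward graininess nu(t) = t - rho(t)."""
    return t - rho(ts, t)


def drop_left_scattered_max(ts):
    """T^k: remove the maximum if it is left-scattered, otherwise keep ts."""
    return ts[:-1] if rho(ts, ts[-1]) < ts[-1] else ts


def drop_right_scattered_min(ts):
    """T_k: remove the minimum if it is right-scattered, otherwise keep ts."""
    return ts[1:] if sigma(ts, ts[0]) > ts[0] else ts


# Illustrative time scale with unequal gaps (an assumption, not from the paper).
ts = make_time_scale([0, 1, 2, 4, 7])
print(sigma(ts, 2), rho(ts, 4), mu(ts, 2), nu(ts, 4))
print(drop_left_scattered_max(ts), drop_right_scattered_min(ts))
```

Every interior point of a finite set is both left-scattered and right-scattered, so this sketch only exercises the discrete side of the definitions; on \({\mathbb {T}}={\mathbb {R}}\) one would have \(\sigma (t)=\rho (t)=t\) and \(\mu =\nu =0\).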

Definition 3

[41] A function \(f:{\mathbb {T}}\rightarrow {\mathbb {R}}\) is rd-continuous provided it is continuous at each right-dense point in \({\mathbb {T}}\) and has a left-sided limit at each left-dense point in \({\mathbb {T}}\).

The set of rd-continuous functions \(f:{\mathbb {T}}\rightarrow {\mathbb {R}}\) will be denoted by \(\mathrm {C}_{rd}({\mathbb {T}},{\mathbb {R}}).\)

Lemma 1

[41] Assume that \(p,q:{\mathbb {T}}\rightarrow {\mathbb {R}}\) are two regressive functions, then

- \(\mathrm{(a)}\) \(e_0(t,s)\equiv 1\) and \(e_p(t,t)\equiv 1,\)

- \(\mathrm{(b)}\) \(e_p(t,s)=\frac{1}{e_p(s,t)}=e_{\ominus _{p}}(s,t),\)

- \(\mathrm{(c)}\) \(e_p(t,s)e_p(s,r)=e_p(t,r),\)

- \(\mathrm{(d)}\) \((e_p(t,s))^\nabla =p(t)e_p(t,s).\)

Lemma 2

[41] Let f, g be \(\nabla \)-differentiable functions on \({\mathbb {T}}.\) Then

- \(\mathrm{(a)}\) \((v_1f+v_2g)^\nabla =v_1f^\nabla +v_2g^\nabla \) for any constants \(v_1,v_2,\)

- \(\mathrm{(b)}\) \((fg)^\nabla (t)=f^\nabla (t)g(t)+f(\rho (t))g^\nabla (t)=f(t)g^\nabla (t)+f^\nabla (t)g(\rho (t)).\)

Lemma 3

[41] Assume that \(p(t)\ge 0,\) for \(t\ge s\), then \(e_p(t,s)\ge 1.\)
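
The properties in Lemmas 1 and 3 can be checked numerically in the discrete case. The sketch below assumes \({\mathbb {T}}={\mathbb {Z}}\) (so \(\nu \equiv 1\)) and uses the closed form obtained by solving \(y^\nabla =p\,y\), \(y(s)=1\) step by step, namely \(e_p(t,s)=\prod _{k=s+1}^{t}(1-p(k))^{-1}\) for \(t\ge s\). The function names and the sample coefficient are hypothetical and serve only as an illustration.

```python
import math


def nabla_exp(p, t, s):
    """Nabla exponential e_p(t, s) on T = Z, assuming p(k) != 1 for all k."""
    if t >= s:
        prod = 1.0
        for k in range(s + 1, t + 1):
            # y(k) - y(k-1) = p(k) y(k)  =>  y(k) = y(k-1) / (1 - p(k))
            prod /= (1.0 - p(k))
        return prod
    # For t < s use the reciprocal relation e_p(t, s) = 1 / e_p(s, t).
    return 1.0 / nabla_exp(p, s, t)


def nabla_diff(f, t):
    """Nabla derivative on Z: f(t) - f(t - 1)."""
    return f(t) - f(t - 1)


def sample_p(k):
    """Illustrative coefficient with values in [0.1, 0.5], so 0 <= p < 1."""
    return 0.3 + 0.2 * math.sin(k)


def y(t):
    """The map t -> e_p(t, 0) for the sample coefficient."""
    return nabla_exp(sample_p, t, 0)


# Semigroup property (c) and the derivative rule (d) at sample points.
print(math.isclose(nabla_exp(sample_p, 5, 2) * nabla_exp(sample_p, 2, 7),
                   nabla_exp(sample_p, 5, 7)))
print(math.isclose(nabla_diff(y, 5), sample_p(5) * y(5)))
```

Because the sample coefficient satisfies \(0\le p<1\), each factor \((1-p(k))^{-1}\) is at least 1, which is the discrete reading of Lemma 3.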

Definition 4

[41] A function \(p:{\mathbb {T}}\rightarrow {\mathbb {R}}\) is called \(\nu \)-regressive if \(1-\nu (t)p(t)\ne 0\) for all \(t\in {\mathbb {T}}_k\). If \(p\in {\mathscr {R}}_\nu \) then we define the nabla exponential function by

where \(\mu \)-cylinder transformation is as in

Definition 5

[41] A function \(\rho :{\mathbb {T}}\rightarrow {\mathbb {R}}\) is called \(\mu \)-regressive provided \(1+\mu (t)\rho (t)\ne 0\) for all \(t\in {\mathbb {T}}^k;\) it is called positively regressive provided \(1+\mu (t)\rho (t)>0\) for all \(t \in {\mathbb {T}}^k.\) The set of all regressive and rd-continuous functions \(\rho : {\mathbb {T}}\rightarrow {\mathbb {R}}\) will be denoted by \({\mathscr {R}}={\mathscr {R}}({\mathbb {T}},{\mathbb {R}}),\) and the set of all positively regressive and rd-continuous functions \(\rho : {\mathbb {T}}\rightarrow {\mathbb {R}}\) will be denoted by \({\mathscr {R}}^+={\mathscr {R}}^+({\mathbb {T}},{\mathbb {R}}).\)

Lemma 4

[41] Suppose that \(p\in {\mathscr {R}}^+\), then

- (i) \(e_p(t,s)>0\) for all \(t,\; s \in {\mathbb {T}},\)

- (ii) if \(p(t)\le q(t)\) for all \(t\ge s,\) \(t,\; s \in {\mathbb {T}}\), then \(e_p(t,s)\le e_q(t,s)\) for all \(t\ge s.\)

Lemma 5

[41] If \(p \in {\mathscr {R}}\) and \(a,b,c \in {\mathbb {T}},\) then

and

Definition 6

[41] Let \(p,q:{\mathbb {T}}\rightarrow {\mathbb {R}}\) be two regressive functions. Define

- (1) \((p\oplus _\nu q)(t)=p(t)+q(t)-\nu p(t)q(t),\)

- (2) \(\ominus _\nu p(t)=-\frac{p(t)}{1-\nu p(t)},\)

- (3) \(p\ominus _\nu q=p\oplus _\nu (\ominus _\nu q).\)
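
The operations of Definition 6 can be evaluated pointwise. The following minimal sketch treats \(p(t)\), \(q(t)\) and \(\nu (t)\) as plain numbers at a fixed t; the function names are hypothetical. It checks that \(\ominus _\nu p\) is the inverse of p under \(\oplus _\nu \), and that \(1-\nu (p\oplus _\nu q)=(1-\nu p)(1-\nu q)\), which is why \(\oplus _\nu \) preserves \(\nu \)-regressivity.

```python
def oplus(p, q, nu):
    """Circle plus: p + q - nu * p * q, evaluated at a fixed point t."""
    return p + q - nu * p * q


def ominus(p, nu):
    """Circle minus of p: -p / (1 - nu * p); requires p to be nu-regressive."""
    denom = 1.0 - nu * p
    if denom == 0.0:
        raise ValueError("p is not nu-regressive at this point")
    return -p / denom


def circle_minus(p, q, nu):
    """p circle-minus q, defined as p circle-plus (circle-minus q)."""
    return oplus(p, ominus(q, nu), nu)


# Sample values (assumptions chosen only for illustration).
p_val, q_val, nu_val = 0.4, 0.3, 0.5
print(oplus(p_val, ominus(p_val, nu_val), nu_val))
print(1.0 - nu_val * oplus(p_val, q_val, nu_val),
      (1.0 - nu_val * p_val) * (1.0 - nu_val * q_val))
```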

Lemma 6

[41] Let \(a\in {\mathbb {T}}^k, b \in {\mathbb {T}}\) and assume that \(f:{\mathbb {T}}\times {\mathbb {T}}^k\rightarrow {\mathbb {R}}\) is continuous at (t, t) where \(t\in {\mathbb {T}}^k\) with \(t>a.\) Also assume that \(f^\nabla (t,.)\) is rd-continuous on \([a, \sigma (t)].\) Suppose that for each \(\varepsilon >0,\) there exists a neighborhood U of \(\tau \in [a ,\sigma (t)]\) such that

where \(f^\nabla \) denotes the derivative of f with respect to the first variable. Then

In the following, we recall some definitions, notations and basic results of almost periodicity and pseudo almost periodicity on time scales. For more details, we refer the reader to [42].

In this paper, we restrict our discussion to almost periodic time scales.

Definition 7

[42] Let \({\mathbb {T}}\) be an almost periodic time scale. A function \(f (t) : {\mathbb {T}} \rightarrow {\mathbb {R}}^n\) is said to be almost periodic on \({\mathbb {T}}\), if for any \(\varepsilon > 0,\) the set

is relatively dense, that is, for any \(\varepsilon > 0,\) there exists a constant \(l(\varepsilon ) > 0\) such that each interval of length \(l(\varepsilon )\) contains at least one \(\tau \in E(\varepsilon , f )\) such that

The set \(E(\varepsilon , f )\) is called the \(\varepsilon \)-translation set of f(t), \(\tau \) is called an \(\varepsilon \)-translation number of f(t), and \(l(\varepsilon )\) is called the inclusion length of \(E(\varepsilon , f ).\)

In the following, we introduce some notations

Definition 8

[42] Let \({\mathbb {T}}\) be an almost periodic time scale. A function \(f \in \mathrm {C}({\mathbb {T}},{\mathbb {R}}^n)\) is said to be pseudo almost periodic, if \(f = g + \phi , \text{ where } g \in \mathrm {AP}({\mathbb {T}},{\mathbb {R}}^n) \text{ and } \phi \in \mathrm {PAP_0}({\mathbb {T}},{\mathbb {R}}^n).\) We denote by \(\mathrm {PAP}({\mathbb {T}},{\mathbb {R}}^n)\) the set of all such functions.
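
To make the decomposition \(f=g+\phi \) concrete, the sketch below takes \({\mathbb {T}}={\mathbb {R}}\) and assumes that the ergodic component is characterized by the vanishing mean \(\lim _{r\rightarrow +\infty }\frac{1}{2r}\int _{-r}^{r}|\phi (s)|\,\mathrm {d}s=0\), which is the property used in the proof of Lemma 9. The example functions, the midpoint quadrature and the function names are assumptions made for illustration only.

```python
import math


def mean_abs(phi, r, steps=20000):
    """Approximate (1 / 2r) * integral of |phi| over [-r, r] by the midpoint rule."""
    h = 2.0 * r / steps
    total = sum(abs(phi(-r + (k + 0.5) * h)) for k in range(steps))
    return total * h / (2.0 * r)


def almost_periodic_part(t):
    """g(t) = sin(t) + sin(sqrt(2) t): almost periodic but not periodic."""
    return math.sin(t) + math.sin(math.sqrt(2.0) * t)


def ergodic_part(t):
    """phi(t) = 1 / (1 + t^2): its mean over [-r, r] equals arctan(r) / r."""
    return 1.0 / (1.0 + t * t)


def pseudo_almost_periodic(t):
    """f = g + phi, a pseudo almost periodic function under the assumption above."""
    return almost_periodic_part(t) + ergodic_part(t)


for radius in (10.0, 100.0, 1000.0):
    # The mean of |phi| shrinks as r grows, while the mean of |sin| does not.
    print(radius, mean_abs(ergodic_part, radius), mean_abs(math.sin, radius))
```

The mean of \(|\phi |\) decays like \(\arctan (r)/r\), whereas the mean of \(|\sin |\) stays near \(2/\pi \), which is why a nonzero periodic function cannot serve as the ergodic component.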

3 Existence of Pseudo Almost Periodic Solution

In this section, we establish some results for the existence and the uniqueness of the pseudo almost-periodic solution of (4).

Lemma 7

[42] If \(\varphi , \psi \in \mathrm {PAP}({\mathbb {T}},{\mathbb {R}})\), then \(\varphi + \psi \in \mathrm {PAP}({\mathbb {T}},{\mathbb {R}})\).

Lemma 8

[42] If \(\varphi , \psi \in \mathrm {PAP}({\mathbb {T}},{\mathbb {R}})\), then \(\varphi \times \psi \in \mathrm {PAP}({\mathbb {T}},{\mathbb {R}})\).

Lemma 9

If \(f\in \mathrm {PAP}({\mathbb {T}},{\mathbb {R}}^n)\) satisfies the Lipschitz condition, \(\theta \in \mathrm {C^1} ({\mathbb {T}},\varPi )\) is almost periodic, \(\theta (t)\ge 0\) and \(1-\theta ^{\nabla }(t)>0,\) then \(f(t-\theta (t))\in \mathrm {PAP}({\mathbb {T}},{\mathbb {R}}^n).\)

Proof

From Definition 8, we have \(f=h+\varphi ,\) where \(h\in \mathrm {AP}({\mathbb {T}},{\mathbb {R}}^n),\) and \(\varphi \in \mathrm {PAP_0}({\mathbb {T}},{\mathbb {R}}^n).\) Clearly, \(h(t-\theta (t))\in \mathrm {AP}({\mathbb {T}},{\mathbb {R}}^n).\)

Letting \(\beta =\sup \nolimits _{t\in {\mathbb {T}}}\frac{1}{1-\theta ^{\nabla }(t)}\) and \(s=t-\theta (t)\) gives

which, together with the fact that \(\lim \nolimits _{r\rightarrow +\infty }\frac{1}{2(r+\theta ^+)}\int _{t_0-(r+\theta ^+)}^{t_0+(r+\theta ^+)} |\varphi (s)|\nabla s=0,\) implies that \(\lim \nolimits _{r\rightarrow +\infty }\frac{1}{2r}\int _{t_0-r}^{t_0+r} |\varphi (t-\theta (t))|\nabla t=0,\) and \(\varphi (t-\theta (t))\in \mathrm {PAP_0}({\mathbb {T}},{\mathbb {R}}^n).\)\(\square \)

Lemma 10

For \(i, \;j =1,\dots ,n,\) if \(\varphi (.) \in \mathrm {PAP}({\mathbb {T}},{\mathbb {R}}^n),\) then

Proof

Obviously, one can obtain

Let \(M^{\varphi }=\sup \nolimits _{\theta \in {\mathbb {T}}} |\varphi (\theta )|,\) and we get

For any sequence \(\{r_m\}_{m=1}^{+\infty }\) satisfying

we denote

Then

and

According to the Lebesgue dominated convergence theorem, we have

which entails that

Thus

\(\square \)

Lemma 11

For \(i, \;j =1,\dots ,n,\) if \(x_j(.)\in \mathrm {PAP}({\mathbb {T}},{\mathbb {R}}^n),\) then

Proof

From Definition 8, we have \(x_j(t)=\psi _j(t)+\varphi _j(t),\) where \(\psi _j\in \mathrm {AP}({\mathbb {T}},{\mathbb {R}}^n)\) and \(\varphi _j\in \mathrm {PAP_0}({\mathbb {T}},{\mathbb {R}}^n).\) Since \(d_{ij}(.)\in \mathrm {PAP}({\mathbb {T}},{\mathbb {R}}^n),\) then \(d_{ij}(t)=d_{ij}^\psi (t)+d_{ij}^\varphi (t),\) where \(d_{ij}^\psi \in \mathrm {AP}({\mathbb {T}},{\mathbb {R}}^n)\), \(d_{ij}^\varphi \in \mathrm {PAP_0}({\mathbb {T}},{\mathbb {R}}^n).\) Therefore,

In view of \(\mathbf {(H_1)}\), the definition of \(\mathrm {AP}({\mathbb {T}},{\mathbb {R}}^n)\) and Lemma 10, we can deduce that

and

Hence

which, together with (7) and (9), implies that

\(\square \)

Lemma 12

For \(i, \;j =1,\dots ,n,\) if \(x_j(.)\in \mathrm {PAP}({\mathbb {T}},{\mathbb {R}}^n),\) then

Proof

By Lemma 9 we have \(x_{j}(t-\tau (t))\in \mathrm {PAP}({\mathbb {T}},{\mathbb {R}}^n).\) Furthermore, let

where \(x_j^1\in \mathrm {AP}({\mathbb {T}},{\mathbb {R}}^n)\), \(x_j^2\in \mathrm {PAP_0}({\mathbb {T}},{\mathbb {R}}^n).\) Since \(b_{ij}\in \mathrm {PAP}({\mathbb {T}},{\mathbb {R}}^n),\quad i,\;j=1,\dots ,n,\) then

where \(b_{ij}^1\in \mathrm {AP}({\mathbb {T}},{\mathbb {R}}^n)\), \(b_{ij}^2\in \mathrm {PAP_0}({\mathbb {T}},{\mathbb {R}}^n),\)\(i,\;j=1,\dots ,n.\) Then, for all \(t\in {\mathbb {T}}\), we get

Clearly,

Now, we choose constants \( \alpha _j\) and \(\eta _j\) such that \(\alpha _j=\sup \nolimits _{t\in {\mathbb {T}}}|g_j(x_j^1(t))|,\quad \eta _j=\sup \nolimits _{t\in {\mathbb {T}}}|L_j^g b_{ij}(t)|.\) Consequently,

It follows from (10) that \(b_{ij}(t) g_{j} (x_{j}(t-\tau (t)))\in \mathrm {PAP}({\mathbb {T}},{\mathbb {R}}^n).\) Similarly,

\(\square \)

Lemma 13

Define a nonlinear operator \(\varGamma \) by setting

where

Then \(\varGamma _\varphi \in \mathrm {PAP}({\mathbb {T}},{\mathbb {R}}).\)

Proof

According to \(\mathbf {(H_1)}\) and \(\mathbf {(H_4)},\) it is easy to see that \(\varGamma \in \mathrm {BC}({\mathbb {T}},{\mathbb {R}}^n).\) From Lemmas 7–12 we obtain that there are \(H_i\in \mathrm {AP}({\mathbb {T}},{\mathbb {R}})\) and \(\varPhi _i\in \mathrm {PAP_0}({\mathbb {T}},{\mathbb {R}})\) such that

Noting that \(M[a_i]>0\) and using the theory of exponential dichotomy in [42], we get that

satisfies \(y_i^\nabla (t)=-a_i(t)y_i(t)+H_i(t),\; i\;\in \{1,2,\dots ,n\}.\)

Arguing as in the proof of Lemma 11, one can show

Then

and

Combining with (11), it leads to

Theorem 1

Let \(\mathbf {(H_1)}\)–\(\mathbf {(H_4)}\) hold. Then system (4) has a pseudo almost periodic solution in

where

Proof

Let

We obtain from (4) that

Define an operator as follows:

where

One has

after

Set \(B= \{ \varphi \in \mathrm {PAP}({\mathbb {R}},{\mathbb {R}}^{n}),\parallel \varphi - \varphi _{0} \parallel _{\infty } \le \frac{r\beta }{(1-r)} \} \). Clearly, B is a closed convex subset of \(\mathrm {PAP}({\mathbb {R}},{\mathbb {R}}^{n})\) and, therefore, for any \(\varphi \in B\) by using the estimate just obtained, we see that

which implies that \(\varPhi _\varphi \in B.\) Next, we show that \(\varPhi : B \rightarrow B\) is a contraction operator. In view of \(\mathbf {(H_1)},\) for any \(\varphi ,\; \psi \in B\), we have

because \(r<1\), which proves that \(\varPhi \) is a contraction mapping. Then, by virtue of the Banach fixed point theorem, \(\varPhi \) has a unique fixed point, which corresponds to the solution of (4) in \( B\subset \mathrm {PAP}({\mathbb {R}},{\mathbb {R}}^{n})\). \(\square \)
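
The fixed point argument can be illustrated on a toy problem. The sketch below is not the operator \(\varPhi \) of the proof: it uses a hypothetical map on \({\mathbb {R}}^2\) that is a contraction with constant \(r=0.5\) in the sup norm, and it checks the standard a priori bound \(\Vert x_k-x^*\Vert \le \frac{r^k}{1-r}\Vert x_1-x_0\Vert \), which is the kind of estimate behind the radius \(\frac{r\beta }{1-r}\) of the set B.

```python
import math


def sup_norm(x):
    """Sup norm of a finite vector."""
    return max(abs(v) for v in x)


def toy_map(x):
    """Hypothetical contraction on R^2; each row has Lipschitz constant 0.5."""
    x1, x2 = x
    return (0.3 * math.sin(x2) + 0.2 * x1 + 1.0,
            0.25 * math.cos(x1) + 0.25 * x2 - 0.5)


def fixed_point(phi, x0, tol=1e-12, max_iter=1000):
    """Picard iteration x_{k+1} = phi(x_k); returns (approximate fixed point, steps)."""
    x = x0
    for k in range(1, max_iter + 1):
        x_next = phi(x)
        if sup_norm(tuple(a - b for a, b in zip(x_next, x))) <= tol:
            return x_next, k
        x = x_next
    raise RuntimeError("iteration did not converge")


def iterate(phi, x0, k):
    """Return the k-th Picard iterate starting from x0."""
    x = x0
    for _ in range(k):
        x = phi(x)
    return x


r = 0.5
x0 = (0.0, 0.0)
x_star, steps = fixed_point(toy_map, x0)
x5 = iterate(toy_map, x0, 5)
error_5 = sup_norm(tuple(a - b for a, b in zip(x5, x_star)))
bound_5 = r ** 5 / (1.0 - r) * sup_norm(tuple(a - b for a, b in zip(toy_map(x0), x0)))
print(x_star, steps, error_5, bound_5)
```

The same iteration converges from any starting point, which mirrors the uniqueness of the fixed point in the theorem.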

4 Exponential Stability of Pseudo Almost Periodic Solution

In this section, we establish some results for the global exponential stability of the unique \(\mathrm {PAP}\) solutions of (4).

Theorem 2

Assume that \(\mathbf {(H_1)}\)–\(\mathbf {(H_4)}\) hold. Then the unique \(\mathrm {PAP}\) solution of system (4) is globally exponentially stable.

Proof

From Theorem 1, we see that system (4) has a unique \(\mathrm {PAP}\) solution

with initial value \(\varphi ^*(s)=(\varphi ^*_1(s),\dots ,\varphi ^*_n(s))^T\). Suppose that \(x(t)=(x_1(t),\dots ,x_n(t))^T\) is an arbitrary solution of system (4) with initial value \(\varphi (s)=(\varphi _1(s),\dots ,\varphi _n(s))^T\) and

Then

From \(\mathbf {(H_4)}\), there exists a constant \(\lambda \in \big (0,\min _{1\le i\le n}\{a^-_i\}\big )\) such that \(1-p^+_i\exp (\lambda r^+_i)>0\) and

Denote

and

Let \(\varepsilon >0\) and \(M>1\). It is easy to see that

and

We claim that

Otherwise, there exist a \(t_1\in (t_0,+\infty )_{\mathbb {T}}\) and some \(i\in \{1,\dots ,n\}\) such that

Therefore, there must exist a constant \(\omega > 1\) such that

On the other hand,

for all \(t_2\le t\), \(t <t_1\), which implies that

Integrating, for all \(s\in [t_0,t]_{\mathbb {T}}\)

we get

Thus, \(M > 1\), (13), (14), (17) and (19) imply that

which contradicts the first equation of (16). Therefore, (15) holds. Letting \(\varepsilon \longrightarrow 0^+\), we have

Therefore, the unique \(\mathrm {PAP}\) solution of system (4) is globally exponentially stable and the uniqueness follows from the stability. The proof is complete. \(\square \)

Remark 3

From the conditions of Theorems 1 and 2, it is easy to see that the continuous-time case and the discrete-time case of the recurrent neural networks (4) share the same pseudo almost periodicity.

Remark 4

Theorems 1 and 2 are new even for both the case of differential equations \(({\mathbb {T}} = {\mathbb {R}})\) and the case of difference equations \(({\mathbb {T}} = {\mathbb {Z}})\).

Remark 5

Because neutral-type recurrent NNs with D operator form a class of DOBNFDEs, the stability of their PAP solutions is not easy to establish. Here, the map construction (4) and the variable substitution \(Y_i(t)=y_i(t)-p_i(t)y_i(t-r_i(t))\) play a key role in the proof of Theorem 1, and they can be used to analyze the PAP solution problem for other DOBNFDEs.

Remark 6

Set

and

A function \(f\in BC({\mathbb {R}}^+,{\mathbb {R}})\) is said to be asymptotically w-periodic if it can be expressed as \(f=g+h\) where \(g\in P_w({\mathbb {R}}^+,{\mathbb {R}})\) and \(h\in C_0({\mathbb {R}}^+,{\mathbb {R}}).\) The collection of such functions will be denoted by \(AP_w({\mathbb {R}}^+,{\mathbb {R}}).\) The study of the existence of periodic solutions to differential equations is one of the most important topics in the qualitative theory, owing both to its mathematical interest and to its applications in many scientific fields, such as mathematical biology, control theory and physics. However, some phenomena in the real world are not periodic, but approximately periodic or asymptotically periodic. As a result, in the past several decades many authors have proposed and developed several extensions of the concept of periodicity, such as almost periodicity, almost automorphy, pseudo almost periodicity and pseudo almost automorphy. Since the space of pseudo almost periodic functions strictly contains the spaces of almost periodic functions, of asymptotically periodic functions and of periodic functions, the criteria obtained in this paper extend or improve the results given in [43, 44].

5 Numerical Simulations

In this section, we give an example to illustrate the feasibility and effectiveness of our results. Consider the following delayed recurrent NNs with D operator:

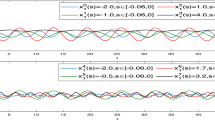

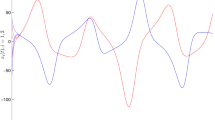

where \(f_i(u)=g_i(u)=h_i(u)=\frac{1}{5}\sin (u)\), \(p_1(t)=0.1\sin (t)\), \(p_2(t)=\frac{1}{15}\cos (t)\), \(a_1(t)=0.9+0.1\sin (t)\), \(a_2(t)=0.8+0.1\cos (t)\), \(c_{11}(t)=0.4+0.1\sin (t)\), \(c_{12}(t)=0.3+0.1\cos (t)\), \(c_{21}(t)=0.5+0.1\cos (t)\), \(c_{22}(t)=0.2+0.1\sin (t)\), \(b_{11}(t)=0.2-0.1\cos (t)\), \(b_{12}(t)=0.3+0.1\sin (t)\), \(b_{21}(t)=0.6-0.1\cos (t)\), \(b_{22}(t)=0.5-0.1\sin (t)\), \(d_{11}(t)=0.2+0.1\sin (t)\), \(d_{12}(t)=0.4+0.1\cos (t)\), \(d_{21}(t)=0.2+0.1\cos (t)\), \(d_{22}(t)=0.6+0.1\sin (t)\), \(r_1(t)=|\cos (t)|\), \(r_2(t)=|\sin (t)|\), \(\tau (t)=2|\cos (t)|\), \(N_{ij}(t)=e^{-t}\), \(I_1(t)=0.3+0.2\sin (\sqrt{3}t)\), \(I_2(t)=0.4+0.1\cos (\sqrt{3}t)\).

By simple calculation, we get

\(L^f_i=L^g_i=L^h_i=\frac{1}{5}\), \(p^+_1=0.1\), \(p^+_2=\frac{1}{15}\), \(a^+_1=1\), \(a^+_2=0.9\), \(a^-_1=0.9\), \(a^-_2=0.8\), \(c^+_{11}=0.5\), \(c^+_{12}=0.4\), \(c^+_{21}=0.6\), \(c^+_{22}=0.3\), \(b^+_{11}=0.3\), \(b^+_{12}=0.4\), \(b^+_{21}=0.7\), \(b^+_{22}=0.6\), \(d^+_{11}=0.3\), \(d^+_{12}=0.5\), \(d^+_{21}=0.3\), \(d^+_{22}=0.7\), \(r^+_1=r^+_2=1\), \(\tau ^+=2\),

and

So condition \(\mathbf {(H_4)}\) is satisfied. Therefore, according to Theorems 1 and 2, system (4) has a unique \(\mathrm {PAP}\) solution that is globally exponentially stable (see Figs. 1, 2, 3, 4).
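
The check of \(\mathbf {(H_4)}\) can be reproduced with a few lines of arithmetic. The sketch below takes the constants exactly as listed above (in particular \(a^-_i\) is used as stated rather than recomputed from \(a_i(t)\)) and assumes \({\mathbb {T}}={\mathbb {R}}\), so that \(N^+_{ij}=\int _0^\infty e^{-s}\,\mathrm {d}s=1\). The variable and function names are hypothetical.

```python
# Constants as listed in the example; index 0 is neuron 1, index 1 is neuron 2.
L_F = L_G = L_H = 0.2
P_PLUS = [0.1, 1.0 / 15.0]
A_PLUS = [1.0, 0.9]
A_MINUS = [0.9, 0.8]
C_PLUS = [[0.5, 0.4], [0.6, 0.3]]
B_PLUS = [[0.3, 0.4], [0.7, 0.6]]
D_PLUS = [[0.3, 0.5], [0.3, 0.7]]
# Assumption: T = R, so the kernel exp(-t) integrates to 1 on [0, infinity).
N_PLUS = [[1.0, 1.0], [1.0, 1.0]]


def h4_terms():
    """Return the list of r_i = p_i^+ + (a_i^+ p_i^+ + sum_j (...)) / a_i^-."""
    terms = []
    for i in range(2):
        coupling = sum(
            C_PLUS[i][j] * L_F + B_PLUS[i][j] * L_G + D_PLUS[i][j] * N_PLUS[i][j] * L_H
            for j in range(2)
        )
        terms.append(P_PLUS[i] + (A_PLUS[i] * P_PLUS[i] + coupling) / A_MINUS[i])
    return terms


r_terms = h4_terms()
r_value = max(r_terms)
print(r_terms, r_value, r_value < 1.0)
```

With these inputs the two terms are approximately 0.744 and 0.942. The margin for the second neuron is small, so the conclusion is sensitive to the bounds used, in particular to \(a^-_i\).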

Remark 7

In the numerical example, the global exponential stability of PAP solutions of the neutral type recurrent NNs (4) with parameters (24) and D operator on time–space scales has not been studied before. One can see that none of the results obtained in [35, 39, 40, 42] are applicable to system (24).

6 Conclusion and Open Problem

In this paper, we have studied a class of neutral type recurrent neural networks with time-varying delays, distributed delay and D operator on time–space scales. By using Banach’s fixed point theorem and the theory of calculus on time scales, we obtained some sufficient conditions for the existence, the uniqueness and the global exponential stability of PAP solutions for system (4). It is the first time that a class of neutral-type recurrent NNs with time-varying delays, distributed delay and D operator on time–space scales is presented. Finally, we formulate some open problems.

Problem 1

We would like to extend our results to more general recurrent NNs with D operator on time–space scales, such as fuzzy recurrent NNs models:

where \(e_{ij}(.)\) is the feed-forward template; \(\alpha _{ij}(.)\), \(\beta _{ij}(.)\), \(T_{ij}(.)\) and \(S_{ij}(.)\) denote elements of the fuzzy feedback MIN template, fuzzy feedback MAX template, fuzzy feed-forward MIN template and fuzzy feed-forward MAX template, respectively; \(\displaystyle \bigwedge \) denotes the fuzzy AND operation and \(\displaystyle \bigvee \) denotes the fuzzy OR operation; \(\nu (.)\) denotes the input of the \(i\mathrm{th}\) neuron. The corresponding results will appear in the near future.

Problem 2

It is well known that, when discussing the dynamic behavior of neutral type recurrent neural networks with time-varying delays, distributed delay and D operator on time–space scales, the assumption \(\mathbf {(H_1)}\) is very important in the proofs. However, in the existing literature (see [28,29,30]), almost all results on the stability of pseudo almost periodic solutions for neutral type recurrent NNs with D operator are obtained under global Lipschitz neuron activations. When the neuron activation functions do not satisfy global Lipschitz conditions, one would like to know whether the neutral type recurrent NNs are still stable. In practical engineering applications, new neural network models are also needed. Therefore, developing a new class of neutral type recurrent NNs without global Lipschitz neuron activation functions and giving stability conditions for them is interesting and valuable. Studying the existence and the global exponential stability of the pseudo almost periodic solution of recurrent NNs on time scales without \(\mathbf {(H_1)}\) will be our future research interest.

Problem 3

It is known that complex numbers are of great significance to fundamental theory and practical applications in engineering, such as communication, electromagnetics, quantum mechanics and so on. At present, much research concerns the stability analysis of complex-valued neural networks: stability in the Lagrange sense is investigated in [45]; sufficient conditions ensuring boundedness and stability for a general class of complex-valued neural networks with variable coefficients and proportional delays are established in [46]; and in [47] the authors investigated the boundedness and robust stability of a class of delayed complex-valued neural networks with interval parameter uncertainties. However, the approaches used in the above-mentioned works cannot be extended to solve the problem studied in our paper. Thus, the existence and the global exponential stability of the pseudo almost periodic solution of complex-valued neutral type recurrent neural networks with time-varying delays, distributed delay and D operator on time–space scales remains a problem to be studied in future work.

References

Wen S, Hu R, Yang Y, Huang T, Zeng Z, Song YD (2018) Memristor-based echo state network with online least mean square. IEEE Trans Syst Man Cybern Syst 99:1–10

Wen S, Xiao S, Yan Z, Zeng Z, Huang T (2018) Adjusting learning rate of memristor-based multilayer neural networks via fuzzy method. IEEE Trans Comput Aided Des Integr Circuits Syst. https://doi.org/10.1109/TCAD.2018.2834436

Wen S, Liu W, Yang Y, Huang T, Zeng Z (2018) Generating realistic videos from keyframes with concatenated GANs. IEEE Trans Circuits Syst Video Technol. https://doi.org/10.1109/TCSVT.2018.2867934

Hopfield JJ (1982) Neural networks and physical systems with emergent collective computational abilities. Proc Natl Acad Sci 79(8):2554–2558

Aouiti C, Gharbia IB, Cao J, Alsaedi A (2019) Dynamics of impulsive neutral-type BAM neural networks. J Frankl Inst. https://doi.org/10.1016/j.jfranklin.2019.01.028

Aouiti C, Gharbia IB, Cao J, M’hamdi MS, Alsaedi A (2018) Existence and global exponential stability of pseudo almost periodic solution for neutral delay BAM neural networks with time-varying delay in leakage terms. Chaos Solitons Fractals 107:111–127

Alimi AM, Aouiti C, Assali EA (2019) Finite-time and fixed-time synchronization of a class of inertial neural networks with multi-proportional delays and its application to secure communication. Neurocomputing 332:29–43

Cao J, Wang J (2005) Global asymptotic and robust stability of recurrent neural networks with time delays. IEEE Trans Circuits Syst I Regul Pap 52(2):417–426

Cao J, Wang L (2002) Exponential stability and periodic oscillatory solution in BAM networks with delays. IEEE Trans Neural Netw 13(2):457–463

Aouiti C, Miaadi F (2018) Finite-time stabilization of neutral Hopfield neural networks with mixed delays. Neural Process Lett 48:1645–1669. https://doi.org/10.1007/s11063-018-9791-y

Alimi AM, Aouiti C, Chérif F, Dridi F, M’hamdi MS (2018) Dynamics and oscillations of generalized high-order Hopfield neural networks with mixed delays. Neurocomputing 321:274–295. https://doi.org/10.1016/j.neucom.2018.01.061

Aouiti C, Miaadi F (2018) Pullback attractor for neutral Hopfield neural networks with time delay in the leakage term and mixed time delays. Neural Comput Appl. https://doi.org/10.1007/s00521-017-3314-z

Li X, Song S (2013) Impulsive control for existence, uniqueness, and global stability of periodic solutions of recurrent neural networks with discrete and continuously distributed delays. IEEE Trans Neural Netw Learn Syst 24(6):868–877

Li X, Song S, Wu J (2018) Impulsive control of unstable neural networks with unbounded time-varying delays. Sci China Inf Sci 61(1):012203

Xiao Q, Huang T, Zeng Z (2018) Global exponential stability and synchronization for discrete-time inertial neural networks with time delays: a timescale approach. IEEE Trans Neural Netw Learn Syst. https://doi.org/10.1109/TNNLS.2018.2874982

Cao J, Huang DS, Qu Y (2005) Global robust stability of delayed recurrent neural networks. Chaos Solitons Fractals 23(1):221–229

Aouiti C, M’hamdi MS, Chérif F (2017) New results for impulsive recurrent neural networks with time-varying coefficients and mixed delays. Neural Process Lett 46(2):487–506

Huang Q, Cao J (2017) Stability analysis of inertial Cohen–Grossberg neural networks with Markovian jumping parameters. Neurocomputing. https://doi.org/10.1016/j.neucom.2017.12.028

Aouiti C, M’hamdi MS, Touati A (2017) Pseudo almost automorphic solutions of recurrent neural networks with time-varying coefficients and mixed delays. Neural Process Lett 45(1):121–140

Chen Z (2017) Global exponential stability of anti-periodic solutions for neutral type CNNs with D operator. Int J Mach Learn Cybern 9:1109–1115. https://doi.org/10.1007/s13042-016-0633-9

Liu B (2016) Finite-time stability of CNNs with neutral proportional delays and time-varying leakage delays. Math Methods Appl Sci 40:167–174. https://doi.org/10.1002/mma.3976

Aouiti C (2016) Oscillation of impulsive neutral delay generalized high-order Hopfield neural networks. Neural Comput Appl 29:477–495. https://doi.org/10.1007/s00521-016-2558-3

Gui Z, Ge W, Yang X (2007) Periodic oscillation for a Hopfield neural networks with neutral delays. Phys Lett A 364(3–4):267–273

Liu B (2015) Pseudo almost periodic solutions for neutral type CNNs with continuously distributed leakage delays. Neurocomputing 148:445–454

Yu Y (2016) Global exponential convergence for a class of HCNNs with neutral time-proportional delays. Appl Math Comput 285:1–7. https://doi.org/10.1016/j.amc.2016.03.018

Yao L (2017) Global exponential convergence of neutral type shunting inhibitory cellular neural networks with D operator. Neural Process Lett 45(2):401–409

Zhang A (2017) Pseudo almost periodic solutions for neutral type SICNNs with D operator. J Exp Theor Artif Intell 29(4):795–807

Candan T (2016) Existence of positive periodic solutions of first order neutral differential equations with variable coefficients. Appl Math Lett 52:142–148

Yao L (2018) Global convergence of CNNs with neutral type delays and D operator. Neural Comput Appl 29(1):105–109

Chen Z (2017) Global exponential stability of anti-periodic solutions for neutral type CNNs with D operator. Int J Mach Learn Cybern 9:1109–1115. https://doi.org/10.1007/s13042-016-0633-9

Hilger S (1990) Analysis on measure chains—a unified approach to continuous and discrete calculus. Results Math 18(1–2):18–56

Bohner M, Peterson AC (eds) (2002) Advances in dynamic equations on time scales. Springer, Berlin

Agarwal RP (2002) Dynamic equations on time scales: a survey, Special Issue on “Dynamic Equations on Time Scales”, edited by RP Agarwal, M. Bohner, and D. O’Regan. Preprint in Ulmer Seminare 5:1–26

Chen A, Du D (2008) Global exponential stability of delayed BAM network on time scale. Neurocomputing 71(16–18):3582–3588

Li Y, Meng X, Xiong L (2017) Pseudo almost periodic solutions for neutral type high-order Hopfield neural networks with mixed time-varying delays and leakage delays on time scales. Int J Mach Learn Cybern 8(6):1915–1927

Zhou B, Song Q, Wang H (2011) Global exponential stability of neural networks with discrete and distributed delays and general activation functions on time scales. Neurocomputing 74(17):3142–3150

Yu X, Wang Q (2017) Weighted pseudo-almost periodic solutions for shunting inhibitory cellular neural networks on time scales. Bull Malays Math Sci Soc. https://doi.org/10.1007/s40840-017-0595-4

Zhang CY (1994) Pseudo almost periodic solutions of some differential equations. J Math Anal Appl 181:62–76

Gao J, Wang QR, Zhang LW (2014) Existence and stability of almost-periodic solutions for cellular neural networks with time-varying delays in leakage terms on time scales. Appl Math Comput 237:639–649

Du B, Liu Y, Batarfi HA, Alsaadi FE (2016) Almost periodic solution for a neutral-type neural networks with distributed leakage delays on time scales. Neurocomputing 173:921–929

Bohner M, Peterson A (2012) Dynamic equations on time scales: an introduction with applications. Springer, Berlin

Li Y, Yang L, Li B (2016) Existence and stability of pseudo almost periodic solution for neutral type high-order Hopfield neural networks with delays in leakage terms on time scales. Neural Process Lett 44(3):603–623

Wu A, Zeng Z (2016) Boundedness, Mittag–Leffler stability and asymptotical \(\omega \)-periodicity of fractional-order fuzzy neural networks. Neural Netw 74:73–84

Wu A, Zhang J, Zeng Z (2011) Dynamic behaviors of a class of memristor-based Hopfield networks. Phys Lett A 375(15):1661–1665

Song Q, Shu H, Zhao Z, Liu Y, Alsaadi FE (2017) Lagrange stability analysis for complex-valued neural networks with leakage delay and mixed time-varying delays. Neurocomputing 244:33–41

Song Q, Yu Q, Zhao Z, Liu Y, Alsaadi FE (2018) Dynamics of complex-valued neural networks with variable coefficients and proportional delays. Neurocomputing 275:2762–2768

Song Q, Yu Q, Zhao Z, Liu Y, Alsaadi FE (2018) Boundedness and global robust stability analysis of delayed complex-valued neural networks with interval parameter uncertainties. Neural Netw 103:55–62

Cite this article

Aouiti, C., Assali, E.A. & Ben Gharbia, I. Pseudo Almost Periodic Solution of Recurrent Neural Networks with D Operator on Time Scales. Neural Process Lett 50, 297–320 (2019). https://doi.org/10.1007/s11063-019-10048-2

DOI: https://doi.org/10.1007/s11063-019-10048-2

Keywords

- Global exponential stability

- Neutral-type neural networks

- Time space scales

- D operator

- Pseudo-almost periodic solution.