Abstract

Benefit transfer is the use of pre-existing empirical estimates from one or more settings where research has been conducted previously to predict measures of economic value or related information for other settings. These transfers offer a feasible means to provide information on economic values when time, funding and other constraints impede the use of original valuation studies. The methods used for applied benefit transfers vary widely, however, and it is not always clear why certain procedures were applied or whether alternatives might have led to more credible estimates. Motivated by the importance of benefit transfers for decision-making and the lack of consensus guidance for applied practice, this article provides recommendations for the conduct of valid and reliable transfers, based on insights from the combined body of benefit-transfer research. The primary objectives are to: (a) advance and inform benefit-transfer applications that support decision making, (b) encourage consensus over key dimensions of best practice for these applications, and (c) focus future research on areas requiring further advances. In doing so, we acknowledge the healthy tension that can exist between best practice as led by the academic literature and practical constraints of real-world applications.



1 Introduction

To make quantitative statements about the likely effects of public policies, economists must extrapolate findings from previous empirical studies to new policy scenarios (US EPA). Footnote 1

Benefit transfer is the use of pre-existing empirical estimates from one or more settings where research has been conducted previously to predict measures of economic value or related information for other settings. The primary feature that distinguishes benefit transfer from other types of economic valuation is that values are quantified by using “existing data or information in settings other than for what it was originally collected” (Rosenberger and Loomis 2003, p. 445). Benefit transfers offer a feasible means to provide information on economic values to support decision-making when time, funding and other practical constraints impede the use of original valuation studies. Due to considerations such as these, benefit transfers have become a ubiquitous component of benefit–cost analyses in the United States, European Union and elsewhere (Griffiths and Wheeler 2005; Iovanna and Griffiths 2006; Johnston and Rosenberger 2010; Brouwer and Navrud 2015; Loomis 2015; Rolfe et al. 2015a; Johnston et al. 2015b, 2018; Wheeler 2015; Newbold et al. 2018a).

Among the primary goals of benefit transfers is the provision of credible value estimates to inform decisions. The methods used for applied transfers vary widely, however, and it is not always clear why certain transfer procedures were applied or whether alternatives might have led to more credible estimates.Footnote 2 More than ten years ago, Boyle et al. (2010, p. 162) argued that “even a cursory review of the benefit transfer literature displays a wide variety of implementation procedures, with no consensus on which procedure actually results in the lowest transfer error […].” This comment reflects similar observations found elsewhere in the benefit-transfer literature (Wilson and Hoehn 2006; Johnston and Rosenberger 2010; Johnston et al. 2018).

Recognizing the importance of benefit transfers as an input to decision making worldwide, there have been longstanding efforts to address this ambiguity over best practices. Research since the late 1980s has provided insights into applicable theory and methods.Footnote 3 The first widely recognized, collaborative effort to inform benefit-transfer methods was a 1992 US EPA-sponsored workshop.Footnote 4 These and other efforts have contributed to multiple areas of implicit methodological consensus, summarized in works such as Brouwer (2000), Boyle et al. (2010), Johnston and Rosenberger (2010), Richardson et al. (2015), and Johnston et al. (2018). Yet despite a growing literature, there are still no established expectations for most benefit transfer procedures in applied use. The peer-reviewed literature emphasizes novel contributions to theory, methodology, and empirical results, while remaining agnostic on many practical questions facing benefit-transfer practitioners. This divide between the academic literature and practitioner needs has impeded the development of consensus protocols.Footnote 5

The resulting lack of guidance and consistency in applied practice threatens to undermine the scientific credibility of benefit transfers used to support decision-making. It can also prevent transfers from being used in situations where they might otherwise provide useful information. For example, the absence of consensus best-practice guidance can lead to situations wherein decision makers choose to ignore or suppress potential information from benefit transfers or, conversely, those seeking to discredit value estimates impose ad hoc or unattainable methodological requirements in the name of “validity” without a strong scientific basis (Boyle et al. 2017). In such cases, guidance can both support the use of benefit transfers and establish minimum standards to frame validity and applicability debates.

Motivated by the importance of benefit transfers for decision-making and the lack of consensus guidance for applied practice, this article provides recommendations for the conduct of valid and reliable transfers, based on insights from the combined body of research. The primary objectives are to: (a) advance and inform benefit-transfer applications that support decision making, (b) encourage consensus over key dimensions of best practice for these applications, and (c) focus future research on areas requiring further advances. In doing so, we acknowledge the healthy tension that can exist between best practice as led by the academic literature and practical constraints of real-world applications.

We also recognize that benefit transfer is an evolving method. Although there are some areas in which the literature supports unequivocal guidance for best practices, there are others in which methodological questions remain. For example, there is consensus over the need for unambiguous definitions of the commodity change and theoretical welfare measure that characterize the value to be estimated at the policy site. However, there is less consensus over the degree of similarity that should be required between these clearly defined policy-site concepts and information available from study sites. How similar is “similar enough”? Further, variation across multiple study sites may enable the sites to collectively characterize policy-site conditions, potentially reducing the need for individual site-to-site similarity across all dimensions. Recognizing that questions of this type persist in the literature and that important tradeoffs exist, we identify areas where the literature does not yet support clear guidance and for which additional research is needed. In areas such as these, it is particularly important for practitioners to document their assumptions and consider the robustness of presented results.

We organize the proposed guidance around ten core recommendations. These recommendations are summarized here and expanded upon in the sections that follow.

1. Value Definition and Valuation Context: The economic value to be estimated should be defined clearly in the context of the policy-site decision context and information needs.

2. Theoretical Foundation: The welfare-theoretic foundations for the benefit transfer should be described, focusing on the definition and properties of the change to be valued.

3. Selection of Study Sites and Study-site Value Information: The search for information to support the benefit transfer, including study sites and value information, should be conducted in a comprehensive and systematic manner that reflects the underlying value definition and valuation context, along with the information available from each study and site.

4. Selection of a Transfer Method: The transfer method should be selected based on: (a) data availability, (b) steps required to harmonize study-site estimates with policy-site conditions, (c) insight from the literature regarding the accuracy of transfer methods under different circumstances, and (d) the intended uses of the resulting information.

5. Data Adjustments: Study-site data adjustments should be completed to harmonize information across studies and enable well-defined value estimates for policy sites. These adjustments should be consistent with the value definition, valuation context, theoretical foundation for the transfer, and available study- and policy-site information.

6. Auxiliary Data: Auxiliary data that are not provided in study-site documentation and that can enhance transfer accuracy should be used when available.

7. Data Analyses: Transfer methods should adhere to recommended practices for the underlying analytical methods that are applied. These include the use of established theoretical and empirical methods for all types of transfers and best practices for the estimation and use of meta-analysis.

8. Aggregation and Scaling: The extrapolation and aggregation of transfer-value estimates to the policy-site population should follow best practices established for welfare and benefit–cost analyses. Any benefit scaling should be justified with respect to the type of commodity and change in question, within the context of policy-site conditions.

9. Robustness Analyses: Robustness analyses should explore the sensitivity of policy-site value estimates to decisions such as those associated with the selection of studies and value estimates, the transfer procedures that are applied, and assumptions about the extent of the market.

10. Reporting: Reporting should document all key components of the transfer exercise. This should include reporting on key study- and policy-site characteristics, data used in the transfer, transfer procedures, analyst assumptions and resulting value predictions.

The paper proceeds with an initial overview of the benefit-transfer literature as a foundation for the discussion of each recommendation. We also briefly review benefit-transfer validity and reliability as core concepts motivating the presented guidance. Although we present the subsequent recommendations in a linear fashion, we acknowledge that the steps in a benefit transfer are interrelated and that what is learned as one proceeds through the transfer process may require review and revision of what has been done in previous steps. Hence, the recommendations for different components in the transfer process are intertwined.

2 Setting the Context for Best Practices

Benefit-transfer methods have been recognized since the 1980s and refined since the 1992 US EPA workshop. Transfers may occur over time, space, populations, policies or other dimensions. The key feature that distinguishes benefit transfers from other types of valuation is that prior study results are used to develop a value estimate for a setting that is different from the setting originally considered. For conciseness in terminology, the source-data settings are typically called “study sites” and the settings receiving the transferred estimates, “policy sites”.Footnote 6 The primary goal of a benefit transfer is the provision of value estimates for changes in the quantity or quality of a good or service that are expected to arise from an action being evaluated. The credibility of transfer estimates, considered in terms of validity and reliability (Bishop and Boyle 2019), is determined by the procedures used to implement the transfer.Footnote 7

The transfer of value information to estimate benefits and costs is common across governmental, intergovernmental, and non-governmental organizations.Footnote 8 These practices took place long before “benefit transfer” was recognized as a field of study. Inspired by seminal work such as Freeman (1984), benefit transfer became recognized as a distinct area of research and application in the early 1990s. The 1992 Association of Environmental and Resource Economists and US EPA workshop and a subsequent special section of Water Resources Research (1992, 28(3)) are credited with launching contemporary research in the area.

Evolution of the literature over the following two decades led to the formalization of benefit transfer as a tool for benefit–cost analyses within US EPA (2000)Footnote 9 and other US government agencies (Loomis 2015; Wheeler 2015), with similar acknowledgement in Canada (Treasury Board of Canada Secretariat 2007). The approval of the European Water Framework Directive in 2000 promoted greater use of benefit transfers in Europe (Hanley et al. 2006; Brouwer and Navrud 2015; Rosenberger and Loomis 2017).Footnote 10 The potential use of benefit transfers in Australia was motivated by the demand for value estimates within Regulatory Impact Statements and in New Zealand by the demand for similar information within Regulatory Impact Analyses (Rolfe et al. 2015a).

In 2005, the US EPA and Environment Canada sponsored a benefit-transfer workshop and special issue in Ecological Economics (2006, 60(2)). Subsequent reviews were provided by Boyle et al. (2010) and Johnston and Rosenberger (2010). Frequently cited books on benefit-transfer methods during this period included Desvousges et al. (1998), Florax et al. (2002), Rolfe and Bennett (2006), Navrud and Ready (2007), and later Johnston et al. (2015a). A recent collective contribution was the 2016 US EPA workshop, Benefit Transfer: Evaluating How Close is Close Enough? (Smith 2018), with an accompanying special issue of Environmental and Resource Economics (2018, 69(3)). Among the topics emphasized within this special issue were challenges faced by practitioners seeking to apply benefit transfer within the context of applied policy analysis (Newbold et al. 2018a), the extent to which structural modeling could be used to improve transfer validity and reliability (Kling and Phaneuf 2018; Newbold et al. 2018b), and the econometrics of meta-analytic transfers (Boyle and Wooldridge 2018).Footnote 11

These efforts reflect the expanding literature on benefit transfer. Insights from this work are diverse and scattered across hundreds of articles, chapters, and monographs. Although multiple publications going back to the 1980s have sought to present instructions for benefit transfer, these have been limited to such contributions as primers on basic methods, core theoretical conditions for validity, ideal criteria for benefit transfers, and general insights such as the relevance of site similarity.Footnote 12 Although this information is suitable for the introduction of theory, principles and techniques, it falls short of the practical, consensus recommendations required to guide analysts and inform decision makers on the elements of a credible transfer.

2.1 Methodological Overview

Although many different types of information can be transferred, benefit transfers are most often discussed in terms of welfare estimates such as measures of willingness to pay (WTP). As discussed by Boyle et al. (2010), the transfer of pre-existing information to new situations is common across multiple disciplines, and benefit transfer is not the only situation in which monetary quantities are transferred.Footnote 13 Yet there is a difference between benefit transfers and other types of data transfers in health, engineering and some other areas of economics. Other data transfers are often grounded in observable phenomena, such as death rates in human populations, structural integrity of buildings, and housing sales prices. With such data, observations can be used to establish the accuracy of the transfers.Footnote 14 Transfers of welfare estimates, like many types of economic data (e.g., demand and supply elasticities), do not share this observability condition to establish accuracy (Bishop and Boyle 2019). Applications typically involve the transfer of measures for a well-defined theoretical concept, like WTP, that is never observed. Like many other concepts in the social sciences, such measures are estimated via statistical inference from associated observational data such as choices in a market or responses to a survey.

The provision of environmental goods and services outside of organized markets compounds the challenge for environmental benefit transfers (Boyle et al. 2010). Because market transactions are not observable for many environmental goods and services, the most appropriate ways to characterize and measure these goods and services for valuation are not always clear (Boyd et al. 2016). Moreover, the ways that environmental changes are measured at a study site may not be equally relevant for the policy site. The resulting diversity in measurement protocols can cause errors and ambiguities within benefit transfers (Johnston and Zawojska 2020).

Challenges such as these have led to the characterization of benefit transfer as one of the most difficult types of information transfer (Boyle et al. 2010). However, this does not imply a lack of mechanisms to evaluate validity and reliability (and hence credibility). Welfare estimates that are the foundational building blocks for any benefit transfer are based on the same economic theory that informs all welfare analysis. Theory informs the logic process through which study-site values are estimated and then transferred to a policy site (Smith 2018). This same logic process provides the foundation for benefit-transfer guidance and evaluations.

Regardless of approach, all benefit transfers construct a policy-site value estimate using information from one or more study-site value estimates. The initial steps in a transfer include identification of: (a) the change in the quantity or quality of the good or service to be valued, (b) the population or market for whom values are to be estimated, (c) the policy or decision the transfer estimate will support, and (d) the type of value information required to support decision making. These considerations, framed in the context of the desired theoretical welfare estimate for the policy site, inform the search for relevant study-site studies and the choice of value information from these studies, as well as the decision to seek and use related auxiliary data. Available study-site information plays a critical role in determining whether alternative transfer methods can provide credible policy-site value estimates. Another crucial factor is the similarity between study and policy sites, broadly defined, which influences the degree to which (and what type of) adjustments may be required to calibrate study-site information to policy-site conditions.

The key differences among alternative transfer methods are the information used from the study site and the procedures used to develop and calibrate policy-site value estimates. Methods can be broadly classified into two groups: value transfers and function transfers.Footnote 15 Value transfers use a single value estimate or a set of value estimates from existing studies to compute a policy-site value estimate. These are also called “unit-value transfers.” Values can be transferred as a single study-site value or a summary statistic (e.g., mean) of several study-site values. The resulting estimates can be transferred “as is” or can be adjusted in various ways to calibrate transfer estimates to policy-site conditions.
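As a minimal sketch of an unadjusted (“as is”) unit-value transfer, the Python fragment below returns either a single study-site value or a summary statistic of several values. The WTP figures and the function name `unit_value_transfer` are hypothetical and chosen only for illustration; no calibration to policy-site conditions is performed.

```python
from statistics import mean, median

# Hypothetical study-site WTP estimates ($ per household per year).
# Illustrative numbers only; they are not drawn from any published study.
study_site_wtp = [40.0, 55.0, 61.0, 48.0]


def unit_value_transfer(values, statistic="mean"):
    """Return a policy-site value estimate as a summary statistic of
    study-site values, transferred "as is" (no calibration)."""
    if not values:
        raise ValueError("at least one study-site value is required")
    if statistic == "mean":
        return mean(values)
    if statistic == "median":
        return median(values)
    raise ValueError(f"unsupported statistic: {statistic}")


print(unit_value_transfer([48.0]))                    # single-value transfer: 48.0
print(unit_value_transfer(study_site_wtp))            # mean of several values: 51.0
print(unit_value_transfer(study_site_wtp, "median"))  # median of several values: 51.5
```

The choice between a mean and a median is itself an analyst decision that may be worth examining in robustness analyses.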

Function transfers produce calibrated policy-site value estimates using information provided by available studies at one or more study sites, where study information is used to develop a function that produces these estimates (Loomis 1992). The information drawn from each study is not limited to a single value or set of values but includes additional information needed to construct a benefit function. Multiple approaches may be used to produce these functions. For example, transfers may rely on benefit functions estimated directly for one or more study sites using recreation-demand models, hedonic-price (or wage) models, defensive-behavior methods, stated-preference models, various types of ecological production/productivity methods, or other techniques (Freeman et al. 2014; Champ et al. 2017). Transfers of this type are often called single-site or single-study benefit function transfers, as they rely on functions estimated previously for individual sites or studies in the literature. Despite this nomenclature, these functions may be estimated using primary data taken from multiple sites, with the function specified to include spatially explicit explanatory variables that characterize conditions at each site (e.g., Parsons and Kealy 1994; Fezzi and Bateman 2011; Bateman et al. 2013).
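A single-study benefit function transfer can be sketched as evaluating a previously estimated function at policy-site values of its explanatory variables. The linear specification, variable names and coefficients below are hypothetical assumptions for illustration; an actual transfer would use the functional form and estimates reported in the source study. For a linear function, evaluating at policy-site means gives the same result as averaging individual predictions.

```python
# Hypothetical coefficients from a single study-site benefit function:
# WTP = b0 + b_inc * income + b_q * quality_change + b_sub * n_substitutes
# (illustrative values only, not taken from any published study).
coefficients = {
    "intercept": 5.0,
    "income_thousands": 0.30,  # $ per $1,000 of household income
    "quality_change": 4.0,     # $ per unit of quality improvement
    "n_substitutes": -1.5,     # $ per nearby substitute site
}


def function_transfer(coefs, policy_site):
    """Evaluate the study-site benefit function at policy-site values
    of its explanatory variables."""
    value = coefs["intercept"]
    for name, beta in coefs.items():
        if name == "intercept":
            continue
        value += beta * policy_site[name]
    return value


# Hypothetical policy-site means of the explanatory variables.
policy_site = {"income_thousands": 60.0, "quality_change": 2.0, "n_substitutes": 3.0}
print(function_transfer(coefficients, policy_site))  # 26.5
```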

In addition, benefit functions may be derived from meta-equations that statistically synthesize value information from multiple prior valuation studies, typically using meta-regression analysis (Bergstrom and Taylor 2006; Nelson and Kennedy 2009; Boyle and Wooldridge 2018). These are commonly referred to as meta-functions and are an increasing source of benefit functions in the literature (Johnston et al. 2018). As a final example, benefit functions may be developed via structural models that use data from multiple prior valuation studies to calibrate preference parameters (Smith et al. 2002, 2006; Smith and Pattanayak 2002; Van Houtven et al. 2011; Phaneuf and Van Houtven 2015).
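The sketch below illustrates the basic mechanics of a meta-function transfer: an ordinary least squares regression of ln(WTP) on study characteristics, followed by a prediction at policy-site conditions. It is deliberately simplified under stated assumptions: the meta-data are synthetic and noise-free so the fit can be checked, the variables (a stated-preference indicator and a baseline quality index) are hypothetical, and the weighting and within-study correlation issues addressed in the meta-analysis literature are ignored.

```python
import numpy as np

# Hypothetical meta-data, one row per study-site estimate (illustrative only).
stated_pref = np.array([1.0, 0.0, 1.0, 0.0, 1.0, 0.0])  # 1 = stated-preference study
baseline_quality = np.array([40.0, 55.0, 70.0, 30.0, 60.0, 50.0])  # baseline index

# Design matrix: intercept, valuation-method indicator, baseline condition.
X = np.column_stack([np.ones_like(stated_pref), stated_pref, baseline_quality])

# Synthetic ln(WTP) built from assumed coefficients with no noise, so the fit
# can be checked; real meta-data would be coded from the primary studies.
assumed_beta = np.array([3.0, 0.4, -0.02])
ln_wtp = X @ assumed_beta

# Ordinary least squares meta-regression.
beta_hat, _, _, _ = np.linalg.lstsq(X, ln_wtp, rcond=None)

# Policy-site prediction: the method indicator is set to an analyst-chosen
# value (here 0) and baseline quality to an assumed policy-site level of 45.
x_policy = np.array([1.0, 0.0, 45.0])
# exp() of the fitted log value; retransformation adjustments are ignored here.
predicted_wtp = float(np.exp(x_policy @ beta_hat))
print(round(predicted_wtp, 2))  # 8.17, i.e., exp(2.1)
```

Setting the method indicator is a substantive choice rather than a technicality, since it determines which valuation approach the prediction is conditioned on.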

Function transfers have an advantage over value transfers, in that they can allow systematic adjustment of study-site value information to calibrate transfer estimates to policy-site conditions (Loomis 1992). These adjustments may also have greater credibility because they rely on functions derived from information in the original studies. In contrast, adjustments within value transfers do not typically rely on information present in source studies, and frequently reflect post hoc calibrations (e.g., adjustments to account for income differences, grounded in assumed income elasticities of demand; Czajkowski and Ščasný 2010; Barbier et al. 2017).
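A common post hoc calibration of this kind scales a study-site value by relative income raised to an assumed income elasticity, V_p = V_s × (Y_p / Y_s)^ε. The sketch below is a minimal illustration under that assumed functional form. The inputs are hypothetical, and the elasticity is an analyst assumption rather than an estimate from the source study, which is why it is varied over a range.

```python
def income_adjusted_transfer(study_value, study_income, policy_income, elasticity):
    """Scale a study-site value by relative income raised to an assumed
    income elasticity: V_p = V_s * (Y_p / Y_s) ** elasticity."""
    if study_income <= 0 or policy_income <= 0:
        raise ValueError("incomes must be positive")
    return study_value * (policy_income / study_income) ** elasticity


# Hypothetical inputs: $50 study-site WTP, mean household incomes of $40,000
# (study site) and $60,000 (policy site); elasticities span an assumed range.
for elasticity in (0.0, 0.5, 1.0):
    adjusted = income_adjusted_transfer(50.0, 40_000, 60_000, elasticity)
    print(elasticity, round(adjusted, 2))  # 50.0, 61.24, 75.0
```

An elasticity of zero reproduces the unadjusted transfer, and an elasticity of one scales the value proportionally with income.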

A meta-function has a potential advantage over original-study functions in that the synthesis of information from multiple studies allows calibration for factors that are fixed for an individual study but vary across studies. For example, this can provide the capacity to identify and control for systematic influences of primary-study valuation methods on welfare, or characteristics such as baseline environmental conditions that may not vary within the context of an individual primary study. Preference calibration, in turn, ensures that value predictions are consistent with economic theory in the context of policy-site conditions. Both meta-functions and preference calibration allow information from multiple study sites to collectively reflect policy-site conditions, whereas it is typically assumed that value transfers require closer alignment between one or a few study sites and the policy site.

All types of transfer enable information from primary studies to be supplemented with auxiliary information. Common examples include the use of information from consumer price indexes to convert monetary quantities to a common base year and the use of government census data to obtain data on household incomes. For example, Hammitt and Robinson (2011) discuss the use of exogenous information to account for differences in income or currency value across sites. Others have discussed or illustrated the use of GIS data to provide spatial information to support benefit transfers, such as data on land cover and land use, baseline environmental conditions, or geospatial information such as distances between households and environmental changes (e.g., Bateman and Lovett 1998; Bateman et al. 2006, 2011a, 2011b, 2013; Martin-Ortega et al. 2012; Perino et al. 2014; Schaafsma 2015; Johnston et al. 2017a, 2019). Data supplementation of this type is common for meta-function transfers but may be encountered across the spectrum of transfer approaches.
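Converting study-site values to a common base year typically multiplies each value by the ratio of price-index levels in the base year and the year the value was expressed in. The index levels below are hypothetical placeholders rather than official consumer price index data, and `to_base_year` is an illustrative name.

```python
# Hypothetical price-index levels by year (illustrative, not official CPI data).
price_index = {2005: 195.0, 2012: 234.0, 2020: 260.0}


def to_base_year(value, value_year, base_year, index=price_index):
    """Convert a monetary value from value_year dollars to base_year dollars
    using the ratio of price-index levels."""
    return value * index[base_year] / index[value_year]


# Express hypothetical study-site estimates (value, survey year) in 2020 dollars.
estimates = [(30.0, 2005), (45.0, 2012)]
in_2020_dollars = [to_base_year(v, y, 2020) for v, y in estimates]
print(in_2020_dollars)  # [40.0, 50.0]
```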

2.2 Validity and Reliability

The recommendations that follow are intended to promote accuracy within benefit transfer, framed in terms of validity and reliability. As introduced above, validity reflects the unbiasedness of an estimate, whereas reliability reflects the variance (Bishop and Boyle 2019). Both measures are related to the errors in benefit-transfer value predictions. These errors are commonly discussed within two broad categories (Rosenberger and Stanley 2006). Errors in benefit transfers that arise due to underlying errors in the original study-site value information are often called measurement errors. Errors that arise due to the transfer of information between study- and policy-sites are often called generalization errors.
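One standard way to see how the two concepts combine is the mean-squared-error decomposition. Letting $\hat{V}$ denote a transfer estimate (a random variable) and $V$ the unobserved true policy-site value, adding and subtracting $\mathbb{E}[\hat{V}]$ and noting that the cross term has zero expectation gives:

```latex
\begin{aligned}
\mathbb{E}\big[(\hat{V}-V)^2\big]
  &= \mathbb{E}\big[(\hat{V}-\mathbb{E}[\hat{V}] + \mathbb{E}[\hat{V}]-V)^2\big] \\
  &= \underbrace{\big(\mathbb{E}[\hat{V}]-V\big)^2}_{\text{squared bias (validity)}}
   + \underbrace{\operatorname{Var}(\hat{V})}_{\text{variance (reliability)}}
\end{aligned}
```

Under this framing, a transfer procedure could trade a small bias for a large reduction in variance, or the reverse, which is one reason both dimensions are considered together.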

Measurement errors occur in original study-site value estimates and it may not be possible to control or offset such errors in the transfer process. All empirical values are estimated with at least random error and, by definition, are random variables. There may also be systematic errors if, for example, a biased econometric estimator was used to produce the original study-site value estimates. If we assume an unbiased estimator (i.e., a valid original estimate), then the primary concern is related to the associated variance (i.e., the reliability). If the original estimate is known or assumed to be biased, implications for the transfer prediction can be difficult to determine, because the direction and magnitude of the bias are almost always unknown.Footnote 16

Generalization errors are artifacts of the transfer process. These errors can arise if study-site information is not well aligned with the policy-site value to be estimated and calibrations during the transfer are not sufficient to offset this lack of alignment. They can also be introduced by any step of the transfer process. For example, a generalization error might occur due to the selection of study sites or study-site information or extrapolation of value predictions to the affected population.Footnote 17 Potential errors arising from the transfer process, like errors discussed with respect to study-site values in the previous paragraph, may or may not be measurable.

Although a general point has been made that benefit transfers can only be as accurate as the original underlying benefit estimates (Brookshire and Neill 1992; Wilson and Hoehn 2006), the relationship between the original accuracy of study-site value estimates and the accuracy of benefit transfers is neither monotonic nor straightforward. Measurement and generalization errors can interact or offset in complex ways that depend in part on the transfer methods that are applied. For example, in a value transfer the study-site estimate might have upward bias that is partially or fully offset, or perhaps overcompensated, due to adjustments or generalization errors that occur when transferring that value between sites.Footnote 18 These are complicated issues. Hence, the accuracy of any benefit transfer must be considered within a holistic framework that addresses how well the transfer estimate approximates the theoretical policy-site value.
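A stylized arithmetic illustration of such offsetting, under strong simplifying assumptions: the true study- and policy-site values are equal, the study-site estimate overstates its true value by 20 percent, and transfer steps introduce a multiplicative generalization factor. The factor values are hypothetical. Moving the generalization factor from 1.00 to 0.90 or 1/1.20 reduces total error, while moving it further to 0.70 increases it again, so total error is not monotonic in either error source.

```python
def combined_transfer_error_pct(measurement_factor, generalization_factor):
    """Percentage error of a transfer estimate when study-site measurement error
    and generalization error enter as multiplicative factors and the true
    study- and policy-site values are assumed equal."""
    return abs(measurement_factor * generalization_factor - 1.0) * 100.0


# Hypothetical case: the study-site estimate overstates its true value by 20%.
measurement_factor = 1.20
for generalization_factor in (1.00, 0.90, 1 / 1.20, 0.70):
    error = combined_transfer_error_pct(measurement_factor, generalization_factor)
    print(f"generalization factor {generalization_factor:.3f}: error {error:.1f}%")
```

Errors of roughly 20, 8, 0 and 16 percent result; in practice neither factor is known, which is why such offsets cannot be relied upon.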

When considering issues such as these, a challenge is that one cannot establish the accuracy of welfare estimates via direct comparisons with observable data. Welfare measures cannot be observed directly. Hence, the guidance proposed below is grounded in the three-Cs framework (content, construct and criterion validity) for evaluating the accuracy of nonmarket values (Bishop and Boyle 2019). Content validity considers the procedures used to implement the transfer, evaluated based on theory, estimation procedures and findings from prior research such as we describe below.Footnote 19 Construct validity evaluations use insights from empirical tests to identify procedures and conditions that minimize bias and reduce variance in estimates. Most evaluations of this type for benefit transfers have taken the form of convergent validity tests that compare welfare estimates from benefit transfers and primary studies or those from two or more benefit-transfer procedures, when used to measure the same policy-site value. There have been numerous contributions to this area of the literature. Criterion validity involves tests that compare benefit-transfer value estimates to measures that have presumed truth. We are not aware of any criterion validity tests applied in the context of benefit transfers.Footnote 20
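Convergent validity comparisons are often summarized as a percentage transfer error: the absolute difference between a transfer estimate and a primary-study estimate of the same policy-site value, relative to the primary estimate. The sketch below computes this metric and averages it by transfer method. The estimate pairs are hypothetical. Because the primary-study benchmark is itself estimated with error, a small transfer error indicates convergence, not accuracy against a known truth.

```python
from statistics import mean


def transfer_error_pct(transfer_estimate, primary_estimate):
    """Absolute percentage difference between a transfer estimate and a
    primary-study estimate of the same policy-site value."""
    if primary_estimate == 0:
        raise ValueError("primary-study estimate must be nonzero")
    return abs(transfer_estimate - primary_estimate) / abs(primary_estimate) * 100.0


# Hypothetical (transfer estimate, primary estimate) pairs by transfer method.
comparisons = {
    "unit value transfer": [(60.0, 50.0), (35.0, 40.0)],
    "meta-function transfer": [(52.0, 50.0), (42.0, 40.0)],
}

mean_errors = {
    method: mean(transfer_error_pct(t, p) for t, p in pairs)
    for method, pairs in comparisons.items()
}
for method, err in mean_errors.items():
    print(f"{method}: mean transfer error {err:.2f}%")
```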

It is important to keep in mind that no single paper or study can establish or refute the accuracy of an empirical method in general, and that the literature may contain conflicting opinions and outcomes. The empirical results of an economic study typically reflect “hundreds of decisions [on] data collection, preparation, and analysis”, which may lead to variation in the reported conclusions (Huntington-Klein et al. 2021, p. 944). Moreover, all validity tests have limitations.Footnote 21 Thus, in preparing this guidance we take a weight-of-evidence approach to support recommendations that promote the content validity of practical benefit transfers, informed in part by evaluations of content and construct validity in the benefit transfer literature.

3 Guidance for Benefit-Transfer Practice

The guidance proposed below is designed to assist analysts in the design and conduct of benefit transfers that satisfy conditions of content validity. This guidance is motivated by factors such as theoretical constructs of consumer demand theory that guide the estimation of welfare values, together with insights from the peer-reviewed literature that investigates benefit-transfer validity and reliability. These recommendations are meant for benefit transfers used to inform decision making. Nothing in this paper is meant to impose constraints on research to enhance benefit-transfer practice. It is our hope that this paper will be a guide for benefit-transfer analysts and a forum for decision makers to evaluate transfer estimates, as well as motivating innovative validity and reliability research.

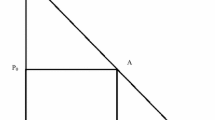

We also emphasize that methodological decisions in any benefit transfer are joint and often iterative, with feedback loops allowing reconsideration of earlier decisions. While the recommendations are presented in a linear sequence below, decisions regarding elements of the transfer may be made jointly or recursively.Footnote 22 To help clarify implications for the guidance that follows, Fig. 1 shows the linkages between the key components and decisions in benefit transfer and the topics covered by each recommendation. While each recommendation speaks primarily to one component of the benefit-transfer process (i.e., theory, information, data, analysis), it indirectly relates to others via the joint and sometimes recursive nature of benefit-transfer procedural decisions. Thus, the recommendations presented below should be considered collectively rather than as a menu of parts to be considered and chosen independently, in isolation or within a fixed sequence.

As a final precursor to these guidelines, we stress that—like all economic valuation—benefit-transfer procedures often require input from disciplines beyond economics. Biophysical data and modeling are often required to predict changes in environmental goods and services or the locations where these changes will occur. Input from health sciences and engineering may also be needed for some applications. Geospatial data and analyses are frequently required to model spatial dimensions of affected systems. These and other areas of research have developed best practices to ensure credible science, which apply similarly when these methods contribute to benefit transfers. Hence, beyond the best practices presented below, guidance from other disciplines may be required to ensure valid and credible benefit transfers.

3.1 Value Definition and Valuation Context

The economic value to be estimated should be defined clearly in terms of the policy-site decision context and information needs.

In all benefit transfers, a defined valuation objective is required to guide transfer design, implementation, analysis, and interpretation. This includes definitions of relevant features such as (1) the policy change in question, (2) the identification and description of the good(s) or service(s) to be valued, (3) the increment or decrement of the change(s) to be valued, (4) baseline (or current/status quo) conditions, (5) the theoretical definition of the value to be estimated, (6) the affected population and extent of the market for the analysis, and (7) other factors that characterize the valuation context. Within the final category, relevant considerations may include, but are not limited to, the geographic location of the policy site, geospatial and biophysical features of the site, quantities/qualities of substitutes and complements, and related market conditions such as prices and incomes. Together, these conditions and characteristics describe policy-site conditions.
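One way to keep the seven features listed above explicit and checkable is to record them in a structured object before the search for study sites begins. The sketch below is a minimal illustration, assuming Python 3.9 or later; the class name `PolicySiteDefinition`, its field names and the example values are hypothetical and are not drawn from any established tool.

```python
from dataclasses import dataclass, field, fields


@dataclass
class PolicySiteDefinition:
    """Hypothetical record of the policy-site valuation objective.

    Fields mirror features (1)-(7) of the valuation context; names are illustrative.
    """
    policy_change: str = ""          # (1) the policy change in question
    good_or_service: str = ""        # (2) good(s) or service(s) to be valued
    change_increment: str = ""       # (3) increment or decrement of the change
    baseline_conditions: str = ""    # (4) baseline (status quo) conditions
    welfare_measure: str = ""        # (5) theoretical definition of the value
    affected_population: str = ""    # (6) affected population / extent of market
    context: dict = field(default_factory=dict)  # (7) location, substitutes, prices, incomes, ...

    def undefined_features(self) -> list[str]:
        """Return the names of features that are still blank or empty."""
        return [f.name for f in fields(self) if not getattr(self, f.name)]


# Hypothetical, partially completed definition for a lake recreation transfer.
lake = PolicySiteDefinition(
    policy_change="Nutrient reduction program",
    good_or_service="Recreational use of a lake",
    welfare_measure="Hicksian WTP per person per activity day",
)
print(lake.undefined_features())
```

Flagging blank features says nothing about whether the completed entries are adequate; it only makes visible what has not yet been documented.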

These specifications set the foundation for implementing the transfer and for the interpretation of the transfer estimate in terms of policy-site conditions. For example, the valuation context directly informs:

- selection of relevant study sites,
- selection of value information (e.g., value estimates and functions) from study-site documentation,
- selection of auxiliary information from study-site documentation (e.g., increment of change valued, summary statistics on sample demographics),
- determination of whether information is needed beyond that provided in existing valuation studies (e.g., spatial information on substitutes), and
- selection of a transfer method and adjustments of study-site values to calibrate estimates to policy-site conditions.

When documenting these conditions, it is important to recognize that original valuation studies rarely match policy-site conditions across all dimensions. Benefit transfers must therefore address policy-site conditions in two respects: (a) characterizing the conditions themselves, and (b) identifying factors that will facilitate the use of study-site information to compute a transfer estimate that is calibrated to those conditions. Characterizing policy-site conditions is necessary to identify relevant study sites and the value information therein, and to choose a transfer method that can make appropriate adjustments in the calibrated value prediction.

Among the issues that should be considered is the definition of the policy-site value estimates that are desired. These values should be defined in specific units of measurement that encompass relevant temporal, demographic and/or spatial dimensions,Footnote 23 e.g., WTP per person/activity day for recreational use of a lake, WTP per person/symptom day for morbidity impacts, or Value of a Statistical Life (VSL) per person in a national population. These definitions provide the foundation for subsequent procedures in the benefit transfer and for interpreting the value predictions that emerge.

Not all potential sources of study-site value information will provide value estimates in the units of measurement desired for a particular application. Hence, analysts should consider how policy-site information needs affect both the search for study sites and the screening of information from these studies. Issues to consider include whether study-site documentation contains the information necessary to transform study-site value estimates into the desired unit of measurement for the policy site, the assumptions these transformations may require, and their potential implications for the accuracy of benefit-transfer procedures (Rolfe and Windle 2008; Zhao et al. 2013; Johnston and Zawojska 2020).
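As a simple illustration of such a transformation, the sketch below converts a hypothetical annual WTP per household, reported in study-year dollars, into annual WTP per person in target-year dollars. It assumes that household WTP is shared equally among household members and that a consumer price index is an adequate deflator; both are assumptions that would need to be justified, and ideally tested, in an actual application. The function name and all numbers are hypothetical.

```python
def to_policy_units(wtp_household_year: float,
                    mean_household_size: float,
                    cpi_study_year: float,
                    cpi_target_year: float) -> float:
    """Convert annual WTP per household (study-year dollars) into annual WTP
    per person (target-year dollars).

    Assumption: household WTP is divided equally among members.
    """
    if mean_household_size <= 0 or cpi_study_year <= 0:
        raise ValueError("household size and study-year CPI must be positive")
    inflation_factor = cpi_target_year / cpi_study_year  # price-level adjustment
    return wtp_household_year * inflation_factor / mean_household_size


# Hypothetical study: $120 per household per year, 2.5 persons per household,
# price index of 200 in the study year and 250 in the target year.
print(to_policy_units(120.0, 2.5, 200.0, 250.0))  # 60.0
```

If the study documentation does not report household size or the price year, the conversion cannot be made without further assumptions, which is exactly the kind of gap the screening described above should record.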

Matching the units of measurement is a necessary step in the search for and selection of study-site value information, but it is not sufficient. Other dimensions of study- and policy-site contexts influence the values provided by a good or service, even for the same units of measurement. For example, two study sites might provide value estimates in the same units of measurement but for different decision-making contexts and decision criteria (Brouwer 2000), e.g., value per fish for commercial, recreational or subsistence harvest. In practice, an exact match between study-site and policy-site contexts is unlikely. Flexibility is therefore needed in study-site choices and value-information selection, so that either a single study provides the information needed to calibrate its value to policy-site conditions or multiple studies collectively provide that information. Analysts must determine the allowable variation in the commodity and context when identifying studies to support the transfer. Value definitions should consider not only the ideal and often restrictive definitions that might apply to a primary valuation study (or the “perfect” transfer), but also the flexibility in these definitions that is acceptable when searching for relevant source studies. The criteria for these determinations should be transparent, to promote credibility and replicability.

Evaluating the tradeoffs between rigidity and flexibility in value definitions can involve complex and multidimensional considerations. For example, overly strict and narrow definitions can diminish the sample size of studies available to support transfer procedures. This can reduce the total amount of information available to inform the transfer and potentially magnify the impact of individual (perhaps outlier) studies, e.g., limiting the ability of study-site values to collectively describe policy-site conditions. Thus, it is not always the case that more rigid and narrow value definitions engender more accurate transfers (Moeltner and Rosenberger 2014).
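The influence of individual studies on a pooled value can be checked with a leave-one-out calculation. The sketch below uses hypothetical values and a simple unweighted mean as the transfer value, comparing a narrowly screened set of three studies (one a high outlier) with a broader set of eight. It illustrates the point made above and is not a recommended estimator; the function name is hypothetical.

```python
from statistics import mean


def leave_one_out_shifts(values: list[float]) -> list[float]:
    """Percentage change in the mean transfer value when each study is dropped in turn."""
    full = mean(values)
    shifts = []
    for i in range(len(values)):
        rest = values[:i] + values[i + 1:]  # all studies except study i
        shifts.append(100.0 * (mean(rest) - full) / full)
    return shifts


# Hypothetical study-site values (e.g., WTP per person per activity day).
narrow = [20.0, 25.0, 90.0]                                # strict definition, one outlier
broad = [20.0, 25.0, 90.0, 22.0, 28.0, 24.0, 30.0, 26.0]   # more flexible definition

for label, vals in (("narrow", narrow), ("broad", broad)):
    worst = max(abs(s) for s in leave_one_out_shifts(vals))
    print(f"{label}: largest shift from dropping one study = {worst:.1f}%")
# narrow: 50.0%, broad: 24.5%
```

A broader set does not guarantee a more accurate transfer; it only reduces the leverage of any single study, which is one consideration among several in choosing how tightly to define the value.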

This guidance implies a balance between narrow definitions of policy-site conditions and the flexibility required to implement benefit transfers. Transfer procedures should maintain consistency between the value prediction and the policy-site context. However, economic theory provides limited guidance on the set of commodity and study-site characteristics that are most important when matching study- and policy-site conditions. Thus, the analyst must also rely on the collective knowledge from the literature to make key decisions. Because site similarity is not solely an economic consideration, insights from other disciplines may be relevant. The analyst can potentially match study sites and policy sites through approaches such as: a) value adjustments, b) the collective variation of value estimates across study sites, or c) calibrated predictions from transfer functions. For example, within the context of meta-analysis, US EPA guidance states “(i)t is unlikely that any single study will match perfectly with the policy case; however, each potential study case should inform at least some aspect of the policy decision.”Footnote 24

Despite the importance of policy-site definitions, the benefit transfer literature gives only modest attention to the topic. This is due, in part, to a divergence between benefit transfer as studied in the peer-reviewed literature and as applied in actual settings. Many studies in the academic literature are predesigned tests of convergent validity rather than applied benefit transfers. As a result, valuation context conditions and applicable model specifications are often defined ex ante to be similar or identical across study and policy sites, which may not reflect the conditions present in actual benefit transfers (Carson et al. 2015; Johnston et al. 2018; Carolus et al. 2020). It also leads to a common situation in which “benefit-transfer” procedures implemented in the academic literature obviate some of the key steps required for actual benefit transfers. Hence, the peer-reviewed literature tends to overlook, or perhaps underappreciate, the importance of (a) careful definitions of policy-site conditions and (b) relationships between policy-site definitions and subsequent search and decision protocols for benefit transfers.
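Convergent-validity tests of this kind commonly summarize accuracy as a percentage transfer error, comparing a transferred value with an independently estimated policy-site value. A minimal sketch follows; the function name and numbers are hypothetical. In applied transfers the benchmark value is, by definition, unavailable, which is one reason such tests cannot substitute for the applied steps discussed here.

```python
def transfer_error_pct(transferred: float, benchmark: float) -> float:
    """Absolute percentage transfer error: |V_transfer - V_benchmark| / |V_benchmark| * 100.

    `benchmark` is a primary-study estimate at the policy site, available only in
    designed validity tests.
    """
    if benchmark == 0:
        raise ValueError("benchmark value must be non-zero")
    return abs(transferred - benchmark) * 100.0 / abs(benchmark)


# Hypothetical test: the transfer predicts $42, a primary study at the policy site finds $35.
print(transfer_error_pct(42.0, 35.0))  # 20.0
```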

3.2 Theoretical Foundation

The welfare-theoretic foundations for the benefit transfer should be described, focusing on the definition and properties of the change to be valued.

Theoretical validity is a foundational element of content validity for all economic analyses, including benefit transfers. Among other things, this implies that validity hinges on (a) the theoretical definition of the policy-site value to be estimated and (b) consistency of procedures and outcomes with economic theory. This theoretical foundation informs the transfer process in multiple ways.

The first is guiding the identification of study-site value estimates to inform the transfer. Economic theory provides guidance for selection of study-site value estimates with respect to the type of welfare measure(s) desired for the policy site. For example, values for otherwise identical goods might be measured as increments or decrements in quantity (or quality) and might have been estimated as utility-held-constant (Hicksian) surplus (e.g., a stated-preference study) or income-held-constant (Marshallian) surplus (e.g., a travel-cost study). Theoretical and empirical evidence can be used to infer how differences among alternative welfare measures may or may not be relevant within each benefit-transfer setting.Footnote 25 The resulting insights can be used to guide the selection and use of value information to ensure sufficient consistency across potentially distinct types of study-site value information used to support the transfer.

Another potentially important issue of welfare consistency is the difference between values measured in terms of willingness to pay (WTP) versus willingness to accept (WTA). These alternative approaches generate different (but related) estimates due to variations in the budget constraint, behavioral factors and assumed property rights, so that substituting or pooling these values can lead to inconsistencies that may influence the validity of the transfer (Horowitz and McConnell 2002; Rolfe et al. 2015b; Tunçel and Hammitt 2014; Zhao and Kling 2001). However, many study sites report WTP estimates only,Footnote 26 and this can lead to a challenge when the property-rights context of the policy site implies that WTA measures are more appropriate. This conundrum is not unique to benefit transfers (Lloyd-Smith and Adamowicz 2018).

Differences between theoretical definitions of the value measure can also be more fundamental, such that the resulting validity problems are unequivocal. For example, the producer surplus realized by a fishing charter boat operator is an entirely distinct theoretical measure from Marshallian consumer surplus realized by charter boat clients, even though both are derived from the same recreational activity (charter fishing trips). Some existing meta-analyses have pooled estimates of consumer surplus (e.g., from recreation-demand or stated-preference models), producer surplus (e.g., from factor-input methods), and measures that are not typically grounded in welfare-theoretic foundations (e.g., damage or restoration cost estimates). Pooling such divergent welfare constructs within valuation meta-data may be useful for analyzing an empirical literature on a topic, but for benefit transfer these existing meta-analyses are “not consistent with an analysis and prediction of a well-defined economic value” (Boyle and Wooldridge 2018, p. 612). A key difference is that these divergent measures apply to different populations and different welfare effects, even if they arise from the same policy action. Distinct measures of this type should not be pooled or treated as interchangeable in the transfer process.Footnote 27

To avoid these problems, unambiguous delineation of the theoretical value definition for the policy-site value is required. The theoretical justification for selected study-sites and value estimates should explain how these choices align with the policy-site value to be estimated. When variations between study-site value estimates and the policy-site welfare measure are permitted, the rationale and processes to adjust for these differences and to establish sufficient consistency of the included measures should be elucidated (Smith et al. 2002; Johnston and Moeltner 2014; Moeltner and Rosenberger 2014; Moeltner 2015). If transfer methods include tradeoffs between theoretical properties and empirical performance, these tradeoffs and their resolution should be transparent (e.g., Newbold 2018b; Moeltner 2019).Footnote 28

Theory can also provide evidence on the validity of study-site values selected for the transfer; this speaks to the content validity of the original value estimates selected to inform the transfer. For example, in general and all else equal, study-site value estimates should be higher for more unique or quantity- or quality-constrained items, exhibit diminishing marginal utility, and be sensitive to the presence of substitutes and complements. Individual WTP would normally be positively related to incomes. Conditions such as these, when satisfied, can support the credibility and validity of study-site value estimates in transfer analyses.
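Where study-site documentation permits, expectations such as these can be examined across the candidate studies. The sketch below regresses ln(WTP) on ln(income) and ln(size of change) by ordinary least squares, assuming a constant-elasticity form; under that form, a positive income elasticity is consistent with WTP rising with income, and a scope elasticity between 0 and 1 is consistent with WTP increasing at a diminishing rate. The data and the function name are hypothetical, and the data are constructed so that the true elasticities (0.5 and 0.6) are known. As the following paragraph cautions, results of checks like this are evidence to weigh, not a litmus test.

```python
import numpy as np


def log_log_elasticities(wtp, income, change):
    """OLS of ln(WTP) on a constant, ln(income) and ln(size of change).

    Returns (income_elasticity, scope_elasticity) under a constant-elasticity model.
    """
    X = np.column_stack([np.ones(len(wtp)), np.log(income), np.log(change)])
    coef, *_ = np.linalg.lstsq(X, np.log(wtp), rcond=None)
    return coef[1], coef[2]


income = np.array([30.0, 45.0, 60.0, 80.0, 50.0])  # hypothetical mean incomes ($1000s)
change = np.array([1.0, 2.0, 5.0, 3.0, 10.0])      # hypothetical size of change valued
wtp = 2.0 * income**0.5 * change**0.6              # constructed: true elasticities 0.5, 0.6

eta_income, eta_scope = log_log_elasticities(wtp, income, change)
print(f"income elasticity: {eta_income:.2f}, scope elasticity: {eta_scope:.2f}")
if eta_income <= 0:
    print("WTP does not rise with income across studies; examine before relying on them")
if not 0 < eta_scope < 1:
    print("WTP does not rise at a diminishing rate with the size of change; examine further")
```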

However, these relationships are artifacts of posited economic models that may or may not hold (e.g., due to potential confounding, incorrect assumptions, the use of chosen functional forms for empirical analysis, etc.). Hence, it is rarely the case that any one theoretical construct can provide an absolute or sufficient “litmus” test for including or excluding study-site value estimates, or for the type of model structure that should be used for transfer procedures (Kling and Phaneuf 2018; Bishop and Boyle 2019). For example, what constitutes a substitute or a complement for a good may vary across settings. Similarly, the size of any economic effect, such as the relationship between quantity and marginal WTP, is an empirical question. It is also the case that information available from study sites may not permit certain tests to be conducted or effects of interest to be identified.

In summary, the role of theory within benefit transfers must be considered in context. This consideration is part of the “balancing act” of theory, available value information, empirical model performance, and policy relevance that is inherent in all benefit transfers (Smith 2018). Although theory is an important foundation for all welfare analysis, judgement is required when determining how it should inform transfer procedures.

3.3 Selection of Study Sites and Study-site Value Information

The search for information to support the benefit transfer, including study sites and value information, should be conducted in a comprehensive and systematic manner that reflects the underlying value definition and valuation context, along with the information available from each study and site.

Grounded in prior steps within the benefit-transfer process (Fig. 1), the goal of the data selection process is to assemble study-site value information to inform valid and reliable policy-site value estimation. This process should consider the full breadth of information available in the literature and whether the content of the literature includes patterns or biases that may affect benefit-transfer procedures or validity (Hoehn 2006; Rosenberger and Johnston 2009). The selection of information to support the transfer—including study sites and value information—requires candidate studies (sources of data for the transfer) to be identified based on factors that include the similarity of each study site to policy-site conditions, the extent to which the information provided by each study is consistent with the theoretical definition of value required for the policy-site application, and the quality of the data (e.g., biophysical, socioeconomic, geospatial, etc.) used to produce the study-site value information.

The data selection process includes four general steps, grounded in the prior recommendations and procedures outlined above:

- identification of potentially relevant studies,
- evaluation and screening of studies for transfer suitability,
- identification and coding of relevant study-site data, and
- supplementation of study-site data with information from external sources.

When executing each of these steps the analyst should develop systematic processes so that the data-selection and coding processes can be replicated to ensure transparency and credibility. The analyst should explain the procedures used to select and screen studies, along with the value information therein. This documentation should also outline data coding protocols. Systematic procedures are crucial for consistent selection of study sites and the coding of value information from study-site documents.Footnote 29
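A replicable screen can be as simple as a fixed, documented list of rules applied in order, with every exclusion logged alongside its reason. The sketch below is illustrative only: the `CandidateStudy` fields, the two rules and the study identifiers are hypothetical, and an actual protocol would encode application-specific criteria such as those discussed in this section.

```python
from dataclasses import dataclass


@dataclass
class CandidateStudy:
    study_id: str
    welfare_measure: str      # e.g., "consumer surplus", "producer surplus", "restoration cost"
    units_convertible: bool   # can estimates be converted to policy-site units?


# Hypothetical screening rules: (reason recorded if the rule fails, rule).
RULES = [
    ("welfare measure inconsistent with policy-site definition",
     lambda s: s.welfare_measure == "consumer surplus"),
    ("insufficient documentation to convert units",
     lambda s: s.units_convertible),
]


def screen(studies):
    """Apply RULES in a fixed order; return included ids and an exclusion log."""
    included, exclusion_log = [], []
    for s in studies:
        # The first failed rule determines the recorded reason (None if all pass).
        reason = next((r for r, rule in RULES if not rule(s)), None)
        if reason is None:
            included.append(s.study_id)
        else:
            exclusion_log.append((s.study_id, reason))
    return included, exclusion_log


candidates = [
    CandidateStudy("S1", "consumer surplus", True),
    CandidateStudy("S2", "producer surplus", True),
    CandidateStudy("S3", "consumer surplus", False),
]
included, exclusion_log = screen(candidates)
print(included)
for study_id, reason in exclusion_log:
    print(study_id, "excluded:", reason)
```

Keeping the rules as data, rather than as ad hoc decisions, lets a reviewer rerun the screen and see exactly why each study was dropped.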

The identification of study sites should reflect a transparent and comprehensive search of the literature based on predetermined keywords and application-specific protocols. Valuation databases such as the Environmental Valuation Reference Inventory (EVRI, https://www.evri.ca/en) or the Economics of Ecosystems & Biodiversity Database (TEEB, https://www.teebweb.org) can be good starting points. Common internet search engines such as Google Scholar are also helpful. The use of on-line search engines should be supplemented with additional search procedures when there is evidence that relevant studies may be omitted, for example when there may be grey literature or recent research that may have limited online access, or when standard keyword searches may be insufficient to identify all relevant studies. These procedures can include direct contact with researchers and queries through social media or internet lists (e.g., RESECON listserv, https://www.aere.org/resecon).

Among the decisions to be made based on the literature search is whether sufficient data and study documentation are available to support policy-site value predictions, using different types of transfer procedures. This requires consideration of site characteristics and the characteristics of available studies at those sites. As noted above, similarity in benefit transfers should not be construed to imply that study sites and policy sites must be identical across all or even most dimensions. Instead, one should consider whether available study-sites collectively provide information that can be used to predict policy-site values, considering key dimensions that are expected to influence value estimates. The analyst should strive to select study sites, perhaps augmented with auxiliary information, that allow value predictions to be calibrated to policy-site conditions. This flexibility enables one to broaden the potential population of study sites for consideration. Not all study- and policy-site conditions are germane to prediction of policy-site values. Differences across irrelevant features should not be an impediment to the use of study-site data. At the same time, some consistency is required. Considerations related to the degree of similarity that is required between study- and policy-sites are often discussed under the general headings of “commodity” and “welfare consistency”; these considerations flow directly from the discussion of theoretical properties above.Footnote 30

The literature contains numerous informal references and discussions related to similarity between study sites and policy sites, but few benefit-transfer applications explicitly specify the dimensions of similarity that were considered when selecting data and adjusting study-site values to estimate policy-site values (Rolfe et al. 2015b, 2015c; Brouwer et al. 2016; Carolus et al. 2020). The literature contains differing insights on ways to define site similarity (e.g., Morrison and Bergland 2006; Johnston 2007; Colombo and Hanley 2008; Bateman et al. 2011a) and there are few areas of consensus regarding the dimensions of similarity that are most relevant for valid transfers (Boyle et al. 2010; Johnston and Rosenberger 2010; Johnston et al. 2018; Carolus et al. 2020). Except for a few core dimensions such as income, studies are not consistent with respect to the dimensions of similarity considered to be important.

Nonetheless, it is possible to draw some conclusions regarding the type of consistency that should be expected between study and policy sites. First, the level of required consistency varies depending on the type of transfer. For a value transfer, study-site value estimates should be more closely aligned with the policy-site value context, ceteris paribus, because fewer adjustments are possible and generalization errors may be more of a concern (Rolfe et al. 2015c). In contrast, for meta-equation or preference-function transfers, study-site value estimates should collectively provide the data needed to calibrate the transfer estimate to policy-site conditions. Moeltner and Rosenberger (2014) further suggest that benefit transfers may sometimes be enhanced by pooling data from seemingly unlike types of study-site applications. An associated condition is that study-site documentation should provide sufficient information to allow the adjustment of transfer value estimates to policy-site conditions (Loomis and Rosenberger 2006). The analyst's ability to make these selection decisions depends on how well procedures and assumptions are documented for potential study sites.

Second, it is possible to provide some guidance on the general types of consistency that should be considered. These recommendations are grounded in prior guidance on the valuation context and value definition discussed above. Specifically, we suggest that analysts consider similarity or consistency in terms of:

- the underlying definition of the good or service, and how it influences welfare (i.e., whether it is the same or a similar commodity, and whether it produces welfare in a similar way across settings)Footnote 31;
- core economic factors such as substitutes, complements and income;
- consistency of welfare measures, whether adjustments are possible to convert to a common welfare measure, and whether differences between inconsistent measures are likely to be small or large;
- the general magnitude of change to be valued at the policy site and whether it is an increment or decrement;
- potentially influential geopolitical or cultural differences (e.g., are values compared across different countries or regions wherein cultural differences might influence values);
- other important contextual similarities and differences between study sites and the policy site, considering relevant biophysical and socioeconomic dimensions.Footnote 32

The literature provides considerable evidence on the validity and reliability of international transfers, including transfers involving more and less similar countries.Footnote 33 Results of this literature are mixed, but many studies suggest the possibility for heterogeneity in values (Ready et al. 2004), including differences across arguably similar countries such as the US and Canada (Johnston and Thomassin 2010) or high-income countries in Europe such as Germany and Sweden (Ahtiainen et al. 2014; Artell et al. 2019). Studies have also found that large and/or statistically significant differences in value estimates for otherwise identical changes can occur across intra-country regions such as states within the US and Australia (e.g., Loomis et al. 1995; Morrison et al. 2002; Johnston and Duke 2010; Johnston et al. 2005, 2017a, 2019; Moeltner and Rosenberger 2014; Rolfe and Windle 2012).Footnote 34 Hence, researchers should consider the possibility that differences such as these might influence transfer accuracy when selecting study site information. Similarity in core dimensions of demographic, institutional and cultural contexts may be particularly important, as the evidence is mixed when using function transfers to calibrate policy-site value estimates for differences in dimensions such as these (Ready et al. 2004; Lindhjem and Navrud 2008; Brouwer et al. 2015; Hynes et al. 2013). In fact, value transfers between “most similar” sites with income adjustments have often been shown to perform better than function transfers in international contexts (e.g., Bateman et al. 2011a; Czajkowski et al. 2017; Artell et al. 2019).
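Income-adjusted value transfers of the kind mentioned above are commonly implemented by scaling the study-site value by the ratio of policy-site to study-site income, raised to an assumed income elasticity of WTP, ε: V_p = V_s (Y_p / Y_s)^ε. The sketch below implements that formula. The function name, incomes and elasticities are hypothetical; because ε is rarely known with precision, the loop reports the adjusted value across a range of assumed elasticities rather than a single figure.

```python
def income_adjusted_value(v_study: float, y_study: float, y_policy: float,
                          elasticity: float) -> float:
    """V_policy = V_study * (Y_policy / Y_study) ** elasticity.

    `elasticity` is an assumed income elasticity of WTP; it should be justified from
    the literature and varied in sensitivity analysis.
    """
    if y_study <= 0 or y_policy <= 0:
        raise ValueError("incomes must be positive")
    return v_study * (y_policy / y_study) ** elasticity


# Hypothetical: study-site value $50, study-site income $40,000, policy-site income $30,000.
for eps in (0.0, 0.5, 1.0):
    adjusted = income_adjusted_value(50.0, 40_000.0, 30_000.0, eps)
    print(f"elasticity {eps:.1f}: adjusted value ${adjusted:.2f}")
```

An elasticity of 0 leaves the study-site value unchanged, while an elasticity of 1 scales it in proportion to income; values between these bounds are frequently assumed, but the choice is an empirical judgment.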

Beyond this general guidance, it is not yet possible to identify a consensus for specific variables that must be included in all benefit transfers, and hence for which study-site information is required. Instead, the analyst should ground these decisions on a weight of evidence consideration of theoretical/empirical insights and application-specific considerations. These considerations should justify study-site and value-estimate selections, and any calibrations (or lack thereof) that are conducted to predict policy-site value estimates. Analyst judgement is required when determining the suitability of study-site information for any benefit transfer (Newbold et al. 2018a).Footnote 35

A related consideration for study-site selection is the type and quality of valuation studies that have been conducted at each candidate study site (e.g., stated-preference studies, recreation-demand studies, hedonic property value studies, etc.). Study type directly influences the type of value estimates that are available to support the benefit transfer, as related to the theoretical value definition for the transfer and policy-site information needs. The original study type can also influence transfer accuracy in other ways. For example, prior work suggests that the use of data from some types of valuation methods within a transfer may affect transfer accuracy in a systematic manner (Londoño and Johnston 2012; Kaul et al. 2013; Ferrini et al. 2014).Footnote 36 Hence, one should consider the type of original study used to generate study-site value information when determining the suitability of this information to support the transfer.

Studies should also be screened for adherence to recommended methodological practices, considering the multiple caveats on validity assessments described above. For example, Johnston et al. (2017b), Bishop et al. (2020) and Lupi et al. (2020) present best-practice guidelines for stated-preference methods, hedonic studies and recreation-demand methods, respectively. Validity screening is similarly applicable to non-economic procedures applied by the study (e.g., biophysical or spatial analysis), as applicable to the study type, context and intended uses of study-site information.Footnote 37

However, care must be taken with quality screening. As described above, overly rigid or narrow screening can lead to the elimination of imperfect-but-informative studies in ways that reduce transfer accuracy. A study does not have to be ideal across all dimensions to provide potentially useful information. Moreover, although study screening and value selection processes are necessary components of benefit transfer, they may contribute to selection biases (Florax 2002; Hoehn 2006; Nelson and Kennedy 2009; Rosenberger and Johnston 2009; Boyle and Wooldridge 2018). Analysts should be aware of these potential biases and take steps to identify and ameliorate them when possible. Nelson and Kennedy (2009, p. 347) describe “publication bias (aka “file-drawer problem”) [as] a form of sample selection bias that arises if primary studies with statistically weak, insignificant, or unusual results tend not to be submitted for publication or are less likely to be published.” It may also occur if objective criteria with a strong scientific basis are not applied to define selection. The peer-review process—while screening for quality—may exacerbate this problem by discouraging the publication of (otherwise valid) applied valuation studies that do not have methodological or theoretical novelty (McComb et al. 2006; Loomis and Rosenberger 2006; Johnston and Rosenberger 2010). Selection biases may also prevent studies from being conducted in the first place or from being fully documented.

If publication bias is present in a research literature, estimates of true effect sizes drawn from the literature (such as estimates of mean WTP) are distorted, leading to potentially biased inferences (Florax 2002; Stanley 2005, 2008). Searches for study sites conducted through on-line search engines may be more likely to identify peer-reviewed journal articles, unintentionally and systematically excluding grey literature (Stanley and Doucouliagos 2012). Because data screening and selection protocols can affect selection biases, the analyst should consider the potential for these biases and their potential effects on policy-site value predictions (Hoehn 2006; Nelson and Kennedy 2009; Rosenberger and Johnston 2009; Boyle and Wooldridge 2018). This guidance is particularly relevant for systematic exclusion of grey literature studies. In addition, screening criteria for study quality (e.g., methodological standards, statistical criteria, plausibility of welfare estimates) can create or exacerbate selection biases. Although methods exist to identify and offset selection biases (Hoehn 2006; Stanley 2005, 2008; Rosenberger and Johnston 2009), these approaches cannot guarantee that all such biases will be identified and offset. Hence, it is imperative to include a broad set of study-site value estimates and to conduct sensitivity analyses that identify the effects of potentially influential information on the transfer (Boyle et al. 2013, 2015).
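One widely used family of methods for detecting and correcting publication selection is the funnel-asymmetry and precision-effect test (FAT-PET) meta-regression associated with Stanley (2005, 2008). The sketch below fits it in its weighted form, estimate_i = β0 + β1·SE_i + e_i with weights 1/SE_i², by dividing through by SE_i and using ordinary least squares. A β1 far from zero suggests funnel asymmetry consistent with selection, and β0 is an estimate of the underlying effect under the model's assumptions. The function name and data are hypothetical; the data are constructed so that β0 = 10 and β1 = 2 exactly, and a real application would judge significance from the returned standard errors.

```python
import numpy as np


def fat_pet(estimates, std_errors):
    """FAT-PET meta-regression estimated as
        t_i = beta1 + beta0 * (1 / SE_i) + v_i,   where t_i = estimate_i / SE_i,
    i.e., WLS of estimate_i = beta0 + beta1 * SE_i + e_i with weights 1 / SE_i**2.
    Returns point estimates and conventional OLS standard errors.
    """
    est = np.asarray(estimates, dtype=float)
    se = np.asarray(std_errors, dtype=float)
    t = est / se
    X = np.column_stack([np.ones_like(se), 1.0 / se])  # columns: [beta1 (FAT), beta0 (PET)]
    coef, *_ = np.linalg.lstsq(X, t, rcond=None)
    resid = t - X @ coef
    sigma2 = resid @ resid / (len(t) - X.shape[1])     # residual variance
    cov = sigma2 * np.linalg.inv(X.T @ X)
    se_coef = np.sqrt(np.diag(cov))
    return {"beta1_FAT": coef[0], "beta0_PET": coef[1],
            "se_FAT": se_coef[0], "se_PET": se_coef[1]}


# Hypothetical meta-data constructed so that estimate_i = 10 + 2 * SE_i exactly.
std_errors = np.array([0.5, 1.0, 2.0, 4.0, 8.0])
estimates = 10.0 + 2.0 * std_errors
res = fat_pet(estimates, std_errors)
print(f"PET (beta0): {res['beta0_PET']:.2f}, FAT (beta1): {res['beta1_FAT']:.2f}")
```

Like all such corrections, this relies on a specific model of how selection operates, so it complements rather than replaces a broad search and sensitivity analysis.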

3.4 Selection of a Transfer Method

The transfer method should be selected based on: (a) data availability, (b) steps required to harmonize study-site estimates with policy-site conditions, (c) insight from the literature regarding the accuracy of transfer methods under different circumstances, and (d) the intended uses of the resulting information.

Once potential study-site information has been identified, the analyst must determine the type of benefit-transfer method to be applied. The method should be selected systematically based on the valuation context, as defined by such features as available data, similarities between study sites and policy sites, and the intended uses of the transfer. The analyst should justify the transfer method with respect to both theoretical and empirical criteria. As noted above, common approaches include adjusted and unadjusted value transfers, single-site or single-study function transfers, meta-function transfers (structural or reduced-form), and structural preference-calibration transfers.

In some cases, benefit transfers can also be implemented using integrated models that are embedded within partially/fully predesigned tools such as the Integrated Valuation of Ecosystem Services and Tradeoffs (InVEST)Footnote 38 and the Natural Environment Valuation Online tool (NEVO),Footnote 39 among other integrated modeling systems (Tallis and Polasky 2009; Bagstad et al. 2013; Ferrini et al. 2015; Richardson et al. 2015; Bateman and Kling 2020). US EPA has recently developed BenSPLASH (Benefits Spatial Platform for Aggregating Socioeconomics and H2O Quality), a modeling platform for quantifying the economic benefits of water quality changes in the US (Corona et al. 2020). These tools coordinate spatially explicit data, biophysical science and economic modeling of various types to provide insights into the provision and value of environmental and ecosystem service changes. Although tools of this type vary in design and content, their internal benefit-transfer (or economic value prediction) components rely on the same types of underlying approaches as all other benefit transfers (e.g., different types of value and value-function transfers). For example, economic value predictions within BenSPLASH rely on an embedded water quality value meta-analysis (Corona et al. 2020). The guidance outlined here is relevant for all benefit transfers, whether the transfer is designed from the ground up or implemented using a predesigned software tool. Documentation accompanying these tools (or associated publications) should be used to evaluate the underlying data and analytical methods, and thereby assess whether best practices have been applied.Footnote 40

Key questions relevant to choosing among different benefit-transfer methods include:

- For value transfer: How similar are study sites and policy sites across relevant dimensions? Is there a single study that provides information sufficient to transfer the study-site estimate directly to the policy site, perhaps with adjustments (e.g., for income)? Alternatively, are there several study sites providing values that, when averaged and perhaps adjusted, can provide an accurate and credible estimate of the policy-site value? Would validity be improved through adjustments that are more readily accommodated through function transfers, as applicable to the benefit-transfer context? Does the weight of evidence on site similarity support a value transfer over other transfer methods that allow for greater calibration of study-site estimates to policy-site conditions?

- For single-site or single-study function transfer: Is there an individual study site that provides an estimated function to support a policy-site value prediction, or a study that estimates a similar function using primary data collected from multiple sites? Does the function allow the calibrations necessary to compute an accurate and credible policy-site value? Is it reasonable to expect that the available study-site benefit function is applicable to the policy site, e.g., based on the observed degree of consistency across conditions at the two sites? Alternatively, can the information from multiple primary study sites used to estimate the benefit function (for example, within a random utility model of recreation demand) collectively represent conditions at the policy site? Is the information available for the policy site sufficient to populate the available benefit function, and thereby produce calibrated benefit estimates?

- For structural preference-function transfer: Is there sufficient study-site and value-estimate information to compute a structural preference function that supports an accurate and credible policy-site value prediction? Is there evidence to support the maintained assumptions necessary to inform model structure? How would a structural approach compare to others in terms of its capacity to use available study- and policy-site information to inform the transfer?

- For meta-analysis function transfer: Is there an existing meta-function that provides the basis for a valid and credible transfer, or are there sufficient study-site and value-estimate data to estimate a new meta-function that supports a calibrated policy-site value prediction?Footnote 41 Do the study sites and their value estimates collectively provide sufficient variation to support accurate and credible prediction of a policy-site value? Does the content of the literature enable estimation of a credible meta-analysis? Is the information available for the policy site sufficient to populate the available meta-function, and thereby produce calibrated benefit estimates?

No single benefit-transfer method can be considered universally superior to others across all possible circumstances, and questions such as these illustrate the tradeoffs involved in selecting a transfer method. For example, can one or a few closely matching study-site value estimates represent policy-site values as well as estimates produced by a single study-site function that allows greater calibration to policy-site conditions (Rolfe et al. 2015c)? Benefit functions estimated using primary data collected over multiple study sites can potentially provide more flexible and broadly applicable predictions (Bateman et al. 2013), but estimating these functions requires assumptions and procedures beyond those typically required for otherwise similar single-site functions.Footnote 42

Meta-functions allow the analyst to incorporate variables that are fixed within individual studies or sites but vary across studies or sites; this variation may enhance the capacity of the resulting benefit function to calibrate value estimates to policy-site conditions. However, the estimation and use of meta-regression models for benefit transfer raise procedural questions that do not arise for other types of transfer (Nelson and Kennedy 2009; Nelson 2015; Boyle and Wooldridge 2018). Relative to alternative approaches, the added structure of preference calibration can increase theoretical rigor (Smith et al. 2002, 2006). However, the advantages of theoretical consistency must be weighed against possible disadvantages, such as an inability to use potentially relevant information or the sensitivity of estimates to structural assumptions (Smith et al. 2006; Kling and Phaneuf 2018; Moeltner 2019; Newbold et al. 2018b). These considerations vary from one application to the next and should be weighed in light of the data available to support the transfer.
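To make the meta-function idea concrete, the following Python sketch estimates a reduced-form meta-regression of log willingness to pay (WTP) on study-level covariates by ordinary least squares, then evaluates it at policy-site covariate values. The data, covariate names and coefficients are hypothetical and are simulated without noise so that the fit is exact. A real application would also need to address issues such as weighting, multiple estimates per study and retransformation from logs, all of which this sketch deliberately omits.

```python
import numpy as np


def fit_meta_function(covariates: np.ndarray, log_wtp: np.ndarray) -> np.ndarray:
    """Estimate ln(WTP) = b0 + X @ b by unweighted ordinary least squares."""
    design = np.column_stack([np.ones(len(covariates)), covariates])
    beta, *_ = np.linalg.lstsq(design, log_wtp, rcond=None)
    return beta


def predict_policy_site(beta: np.ndarray, policy_covariates: np.ndarray) -> float:
    """Evaluate the meta-function at policy-site covariate values.

    Exponentiating the fitted log value gives a prediction without any
    retransformation (e.g., smearing) adjustment.
    """
    x = np.concatenate([[1.0], policy_covariates])
    return float(np.exp(x @ beta))


# Hypothetical meta-data: one row per study-site value estimate.
# Column 0: ln(median household income); column 1: size of the quality change.
study_covariates = np.array([
    [10.5, 1.0],
    [10.8, 2.0],
    [11.0, 1.5],
    [10.2, 3.0],
    [11.3, 2.5],
    [10.9, 0.5],
])
# Assumed coefficients, used only to simulate illustrative data.
true_beta = np.array([-4.0, 0.6, 0.25])
log_wtp = true_beta[0] + study_covariates @ true_beta[1:]

beta = fit_meta_function(study_covariates, log_wtp)
# Hypothetical policy-site values for the same two covariates.
policy_wtp = predict_policy_site(beta, np.array([10.7, 2.0]))
```

Because income and the size of the change vary across the pooled studies, the fitted coefficients let the prediction shift with policy-site conditions, which a single study with fixed site characteristics could not identify.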

These tradeoffs also make clear that the choice of method is intertwined with the available study-site data and the credibility of study-site value estimates. For example, the policy-site value definition and valuation context guide study-site selection, while the theoretical definition of the desired policy-site value prediction guides the selection of value information from each study. The choice of transfer method then depends, at least in part, on the information made available by these prior or concurrent decisions. Hence, the selection of transfer method is best viewed as jointly determined with other choices in the transfer process, as implied by the intertwined nature of benefit-transfer procedures in general (Fig. 1). Because of this joint determination, decisions on the transfer method may require returning to prior steps in the analysis to check whether available data support a proposed transfer method, and perhaps searching for additional data or considering additional data adjustments.Footnote 43

Analysts should select transfer methods that promote the greatest possible consistency of transfer value estimates with the desired policy-site value, allowing for calibrations that are implemented as part of the transfer. Insight into accuracy can be drawn from the literature that compares the performance of different types of transfer procedures under different circumstances (Brouwer 2000; Engel 2002; Rosenberger and Stanley 2006; Boyle et al. 2010; Kaul et al. 2013; Rosenberger 2015; Johnston et al. 2018). It is important to recognize, however, that much of this literature relies on some form of convergent-validity testing. These tests investigate whether two or more transfers provide comparable estimates, or whether a transfer estimate is comparable to an estimate from an original study designed to produce that same value. Although this research can be informative, the true value is almost always unknown. Similarity of welfare estimates is therefore an indicator of validity but cannot confirm it. Thus, when selecting transfer procedures, the analyst should consider the weight of evidence across the literature, with attention to applications similar to the current topic.
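Convergent-validity studies commonly summarize performance as a percentage transfer error. The minimal Python sketch below, using hypothetical numbers, computes the absolute percentage difference between each candidate transfer estimate and a primary policy-site estimate. The function name and values are illustrative assumptions. Because the primary estimate is not the true value, a small error is evidence of convergence rather than proof of validity.

```python
def transfer_error_pct(transferred: float, primary: float) -> float:
    """Absolute percentage difference between a transfer and a primary estimate.

    The primary estimate is itself measured with error, so a small value
    signals convergent validity, not confirmed accuracy.
    """
    if primary == 0:
        raise ValueError("primary estimate must be nonzero")
    return abs(transferred - primary) / abs(primary) * 100.0


# Hypothetical estimates in the same units and price year (illustrative only).
primary_estimate = 35.0
candidate_transfers = {
    "unadjusted value transfer": 42.0,
    "function transfer": 37.8,
}
errors = {
    name: transfer_error_pct(value, primary_estimate)
    for name, value in candidate_transfers.items()
}
for name, err in errors.items():
    print(f"{name}: {err:.1f}% transfer error")
```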

Value transfers require the greatest similarity between the study site(s) and the policy site, so that the prediction of the policy-site value can rely on simple calibrations such as adjustments for inflation and purchasing power parity (Rolfe et al. 2015c). In general, unadjusted value transfers are among the least accurate transfer methods and are not recommended when a suitable benefit function transfer is possible. Value transfers are usually chosen only when there are insufficient data to support other approaches for the given policy-site application.
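The simple calibrations mentioned here amount to a short calculation. The Python sketch below inflates a study-site unit value to a target year using a study-country price index, then converts it to policy-site currency using purchasing power parity (PPP) conversion factors. These factors are assumed to be expressed as local currency units per international dollar for the target year. All inputs are hypothetical, and the order of operations (inflate first, then convert) is one common convention rather than a prescribed procedure.

```python
def adjusted_unit_value_transfer(
    study_value: float,
    cpi_study_base_year: float,
    cpi_study_target_year: float,
    ppp_study: float,
    ppp_policy: float,
) -> float:
    """Inflate a study-site value within the study country, then convert with PPP.

    PPP factors are assumed to be local currency units per international
    dollar, both for the target year.
    """
    if min(cpi_study_base_year, ppp_study, ppp_policy) <= 0:
        raise ValueError("price indices and PPP factors must be positive")
    inflated = study_value * (cpi_study_target_year / cpi_study_base_year)
    international_dollars = inflated / ppp_study
    return international_dollars * ppp_policy


# Hypothetical inputs: 50 per household per year in study currency, a 20%
# rise in the study-country CPI, and PPP factors of 1.0 (study) and 0.8 (policy).
policy_value = adjusted_unit_value_transfer(
    study_value=50.0,
    cpi_study_base_year=100.0,
    cpi_study_target_year=120.0,
    ppp_study=1.0,
    ppp_policy=0.8,
)
```

Adjustments of this kind change only the price level and currency. They do not account for differences in the good, population or context, which is why value transfers depend on close similarity between study and policy sites.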

In contrast, the ability of function transfers to adjust study-site value estimates according to observable differences between study and policy contexts can promote accuracy, ceteris paribus. Research summarizing evidence on transfer errors provides robust support for this insight (e.g., Kaul et al. 2013; Rosenberger and Stanley 2006; Rosenberger 2015). Hence, function transfers are usually preferred when a study site provides a function that is a good match for policy-site conditions, or when information may be drawn from multiple sites or studies that collectively match policy-site conditions and thus allow estimation of a multiple-site benefit function or meta-function, or development of a preference calibration approach.
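A single-site function transfer replaces study-site covariate values with policy-site values before predicting. The Python sketch below assumes a hypothetical linear WTP function with two illustrative covariates (income in thousands and the size of a quality change). The coefficients and site means are invented for illustration and are not drawn from any study.

```python
from dataclasses import dataclass


@dataclass
class LinearBenefitFunction:
    """Hypothetical study-site WTP function: WTP = intercept + sum(b_k * x_k)."""

    intercept: float
    coefficients: dict[str, float]

    def predict(self, covariates: dict[str, float]) -> float:
        # Every variable in the function must be observed at the site being predicted.
        missing = set(self.coefficients) - set(covariates)
        if missing:
            raise KeyError(f"no data for covariates: {sorted(missing)}")
        return self.intercept + sum(
            b * covariates[name] for name, b in self.coefficients.items()
        )


study_function = LinearBenefitFunction(
    intercept=5.0,
    coefficients={"income_thousands": 0.2, "quality_change": 4.0},
)

# Evaluated at study-site means: a stand-in for an unadjusted value transfer.
study_site_wtp = study_function.predict({"income_thousands": 50.0, "quality_change": 2.0})
# Evaluated at policy-site means: the estimate is calibrated to policy-site conditions.
policy_site_wtp = study_function.predict({"income_thousands": 40.0, "quality_change": 3.0})
```

The missing-covariate check mirrors the question of whether policy-site information is sufficient to populate the available benefit function.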

This guidance does not apply universally, however. Sometimes an adjusted value transfer can provide the most accurate estimate, e.g., when the transfer is to a new policy application in the same region and for the same population as the study site. Indeed, some studies suggest that value transfers are more accurate when study and policy contexts are very similar (Brouwer 2000; Barton 2002; Bateman et al. 2011a; Johnston and Duke 2010). There is also evidence that value transfers with simple income or purchasing-power adjustments may perform well in international transfers (e.g., Ready et al. 2004; Lindhjem and Navrud 2008, 2015; Czajkowski and Ščasný 2010; Bateman et al. 2011a; Kosenius and Markku 2015; Andreopoulos and Damigos 2017; Czajkowski et al. 2017; Artell et al. 2019).Footnote 44 One type of transfer, for value of statistical life estimates, is almost always implemented as a value transfer, despite the fact that meta-analyses have been conducted on this topic (Brouwer and Bateman 2005; Mrozek and Taylor 2002; Viscusi and Aldy 2003; Lindhjem and Navrud 2015; Viscusi 2015).Footnote 45 Considering the breadth of evidence available in the literature, value transfers should proceed with caution, accompanied by a justification of how the study-site value estimates match the policy-site value question, either directly or with simple adjustments.
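Simple income adjustments in international value transfers are often written as V_p = V_s (Y_p / Y_s)^ε, where V_s is the study-site value, Y_s and Y_p are study- and policy-site incomes (typically PPP-adjusted), and ε is an assumed income elasticity of WTP. The Python sketch below implements this formula with hypothetical numbers. The choice of ε is an assumption the analyst would need to justify: ε = 1 implies values proportional to income, and ε = 0 implies no income adjustment.

```python
def income_adjusted_transfer(
    study_value: float,
    study_income: float,
    policy_income: float,
    income_elasticity: float,
) -> float:
    """Scale a study-site value by relative income raised to an assumed elasticity."""
    if study_income <= 0 or policy_income <= 0:
        raise ValueError("incomes must be positive")
    return study_value * (policy_income / study_income) ** income_elasticity


# Hypothetical inputs: study-site value 100, PPP-adjusted incomes 40,000 and 30,000.
adjusted = {
    elasticity: income_adjusted_transfer(100.0, 40_000.0, 30_000.0, elasticity)
    for elasticity in (0.0, 0.5, 1.0)
}
```

Because the adjusted value is sensitive to ε, reporting results over a range of elasticities is one way to show how much the transfer depends on this assumption.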

Depending on the study-site data available, one may have a choice among alternative types of function transfers. For example, one may use a function from one study site (e.g., a recreation demand model) or one generated by different types of data synthesis (e.g., a meta-function or a calibrated preference function). There is a growing consensus on the advantages of methods that synthesize data from multiple sources, due to the capacity of multiple study sites to collectively represent policy-site conditions.Footnote 46 There are also advantages to structural approaches that can ensure theoretical properties such as adding up, diminishing marginal utility and other preference characteristics.Footnote 47 However, there is no clear consensus on how analysts should balance theoretical considerations against other dimensions relevant to validity and reliability, such as the empirical performance of estimated models or convergent-validity evidence from the literature.Footnote 48 Approaches that relax strong structural specifications designed to ensure theoretical properties can involve “a tradeoff between improved statistical fit that can be achieved by allowing additional model flexibility …” (Newbold et al. 2018b, p. 544).
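To illustrate how a structural approach can enforce properties such as adding up and diminishing marginal utility, the Python sketch below uses a deliberately simple, hypothetical preference specification: quasi-linear utility U = y + θ ln(q), where y is income and q is an environmental quality index. Under this assumption, WTP for a change from q0 to q1 is θ ln(q1/q0). θ can therefore be calibrated to a single study-site WTP estimate and used to predict WTP for a different policy-site change. The functional form rules out income effects and is an illustrative assumption, far simpler than the specifications used in the preference calibration literature.

```python
import math


def calibrate_theta(study_wtp: float, q0: float, q1: float) -> float:
    """Solve study_wtp = theta * ln(q1 / q0) for the preference parameter theta."""
    if not 0 < q0 < q1:
        raise ValueError("calibration requires an improvement with 0 < q0 < q1")
    return study_wtp / math.log(q1 / q0)


def predicted_wtp(theta: float, q0: float, q1: float) -> float:
    """WTP for a change from q0 to q1 under the assumed U = y + theta * ln(q)."""
    if q0 <= 0 or q1 <= 0:
        raise ValueError("quality levels must be positive")
    return theta * math.log(q1 / q0)


# Hypothetical study site: WTP of 30 for raising the quality index from 2 to 4.
theta = calibrate_theta(30.0, 2.0, 4.0)

# Hypothetical policy site: an improvement from 4 to 5 on the same index.
policy_site_value = predicted_wtp(theta, 4.0, 5.0)

# Adding up: WTP for 2 -> 5 equals WTP for 2 -> 4 plus WTP for 4 -> 5.
whole_change = predicted_wtp(theta, 2.0, 5.0)
sum_of_parts = predicted_wtp(theta, 2.0, 4.0) + policy_site_value
```

The same structure that guarantees these properties also drives the prediction entirely through the assumed functional form, which is the kind of sensitivity to structural assumptions that must be weighed against theoretical consistency.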

In summary, the choice among benefit-transfer methods involves a complex consideration of multiple factors, including theoretical structure, data availability, statistical precision, and the match between transfer estimates and policy-site conditions. Further, the validity and reliability of transfers depend on factors such as the amount of and variation in study-site data, data coding and econometric analysis. Given influences such as these, a single-site function transfer between closely matching study and policy sites might be more accurate than a transfer conducted via a meta-function estimated with data from multiple, less closely matched study sites. In other cases, the collective variation across the multiple studies that inform estimation of the meta-function may provide better transfer estimates.

The selection of method may be further influenced by the precision necessary to support different types of decisions. For example, higher degrees of precision are generally required as one moves from scoping the general magnitude of potential benefits and costs to conducting benefit–cost analysis that informs high-impact decisions, or to calculating compensatory damages for litigation (Navrud and Pruckner 1997; Johnston and Rosenberger 2010).Footnote 49

Benefit transfers are typically conducted under resource constraints, and these constraints may also limit the type of transfer selected. Yet these conditions are not an excuse for a poorly executed or poorly justified transfer. If sufficient supporting information or resources to execute the transfer are not available, then a transfer should not be conducted. If an analyst encounters technical challenges in carrying out a transfer, it is incumbent upon them to seek assistance from someone with the relevant expertise.

3.5 Data Adjustments

Study-site data adjustments should be used to harmonize information across studies and enable well-defined value estimates for policy sites. These adjustments should be consistent with the value definition, valuation context, theoretical foundation for the transfer, and available study- and policy-site value information.

The ability of transfer procedures to produce well-defined value predictions using study-site data depends on consistent data measurement and interpretation. This recommendation has two dimensions. First, study-site information must be reconciled to clearly defined units of measurement that are relevant for the policy site.Footnote 50 Second, when transfers involve the synthesis of data across multiple study sites, information across these study sites must be reconciled in the same way. The latter is particularly important because benefit transfers often use value estimates from multiple studies, which requires reconciling variable measurements across sites and sometimes even across different value estimates within studies. This applies both to value information and to other types of data involved in the transfer.
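Reconciling study-site information to common units can be made explicit and replicable in code. The Python sketch below converts hypothetical value estimates reported as monthly, annual or one-time household payments into a common annual-per-household unit. The one-time conversion uses a standard annuity formula with end-of-year payments. The discount rate and time horizon (3% and 20 years here) are illustrative assumptions that an analyst would need to justify and report.

```python
from dataclasses import dataclass


@dataclass
class ValueEstimate:
    study_id: str
    amount: float  # per household, already in a common currency and price year
    payment_schedule: str  # "monthly", "annual", or "one_time"


def to_annual_per_household(
    est: ValueEstimate, discount_rate: float = 0.03, horizon_years: int = 20
) -> float:
    """Express a household value estimate as an equivalent annual payment."""
    if est.payment_schedule == "monthly":
        return est.amount * 12.0
    if est.payment_schedule == "annual":
        return est.amount
    if est.payment_schedule == "one_time":
        # Constant end-of-year payment with the same present value as the lump sum.
        r, t = discount_rate, horizon_years
        return est.amount * r / (1.0 - (1.0 + r) ** -t)
    raise ValueError(f"unrecognized payment schedule: {est.payment_schedule!r}")


# Hypothetical estimates from three studies (illustrative only).
estimates = [
    ValueEstimate("A", 5.0, "monthly"),
    ValueEstimate("B", 40.0, "annual"),
    ValueEstimate("C", 1000.0, "one_time"),
]
harmonized = {e.study_id: to_annual_per_household(e) for e in estimates}
```

Keeping each conversion rule in one place, with its assumptions exposed as parameters, makes the adjustments transparent and easy to replicate or vary.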

To ensure data consistency across study and policy sites, all data used within transfer procedures should be reviewed to determine whether adjustments are necessary. These adjustments should be consistent with the commodity and welfare recommendations that define the transfer value estimate and guide the transfer. The rationales and methods for all adjustments should be transparent and enable replication.