Abstract

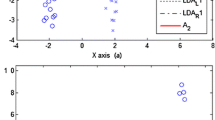

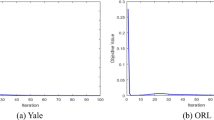

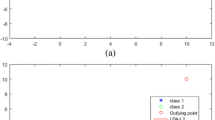

Linear Discriminant Analysis (LDA) is a well-known supervised feature extraction method for subspace learning in computer vision and pattern recognition. Conventional LDA, which is based on the L2-norm, is sensitive to the presence of outliers. In this paper, a novel LDA method based on a new Maximum Correntropy Criterion (MCC) optimization technique is proposed. The proposed method has several advantages: first, it is robust to large outliers; second, it is invariant to rotations; third, it can be solved effectively by a half-quadratic optimization algorithm, in which each iteration reduces the complex optimization problem to a quadratic problem that can be solved efficiently by a weighted eigenvalue optimization method. The proposed method is capable of analyzing non-Gaussian noise and substantially reduces the influence of large outliers, resulting in robust classification. Performance assessment on several datasets shows that the proposed approach addresses outliers more effectively than traditional methods.
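The half-quadratic idea in the abstract can be illustrated with a small sketch. This is not the chapter's algorithm: it assumes a two-class, 2-D problem and a simplified formulation in which each sample's weight is the Gaussian (correntropy) kernel of its distance to its own class mean, with a fixed kernel width `sigma`. The function names `gaussian_kernel`, `hq_robust_mean` and `weighted_fisher_direction`, the coordinate-wise median initialization, and the toy data are all hypothetical. Each half-quadratic step fixes the weights, which turns the problem into weighted least squares (solved by a weighted mean); the two-class closed form S_w^{-1}(m_a - m_b) stands in for the general weighted eigenvalue problem.

```python
import math
import statistics


def gaussian_kernel(r2, sigma):
    """Gaussian (correntropy) kernel evaluated on a squared residual r2."""
    return math.exp(-r2 / (2.0 * sigma ** 2))


def hq_robust_mean(points, sigma, iters=20):
    """Half-quadratic maximisation of sum_i k_sigma(||x_i - m||) over m.

    Each step fixes the auxiliary weights w_i = k_sigma(||x_i - m||), which
    turns the problem into weighted least squares; its solution is the
    weighted mean. Points far from m receive weights near 0.
    """
    # Assumed robust start: coordinate-wise median rather than the L2 mean.
    m = [statistics.median(p[d] for p in points) for d in range(2)]
    weights = [1.0] * len(points)
    for _ in range(iters):
        weights = [gaussian_kernel((p[0] - m[0]) ** 2 + (p[1] - m[1]) ** 2, sigma)
                   for p in points]
        total = sum(weights)
        if total == 0.0:
            raise ValueError("all weights underflowed; increase sigma")
        m = [sum(w * p[d] for w, p in zip(weights, points)) / total for d in range(2)]
    return m, weights


def weighted_fisher_direction(class_a, class_b, sigma):
    """Two-class Fisher direction v ∝ S_w^{-1}(m_a - m_b) using HQ weights."""
    ma, wa = hq_robust_mean(class_a, sigma)
    mb, wb = hq_robust_mean(class_b, sigma)
    # Weighted within-class scatter: S_w = sum_i w_i (x_i - m)(x_i - m)^T.
    s = [[0.0, 0.0], [0.0, 0.0]]
    for pts, m, ws in ((class_a, ma, wa), (class_b, mb, wb)):
        for p, w in zip(pts, ws):
            d = (p[0] - m[0], p[1] - m[1])
            for i in range(2):
                for j in range(2):
                    s[i][j] += w * d[i] * d[j]
    # Solve with the explicit 2x2 inverse (assumes S_w is non-singular).
    det = s[0][0] * s[1][1] - s[0][1] * s[1][0]
    diff = (ma[0] - mb[0], ma[1] - mb[1])
    v = [(s[1][1] * diff[0] - s[0][1] * diff[1]) / det,
         (-s[1][0] * diff[0] + s[0][0] * diff[1]) / det]
    norm = math.hypot(v[0], v[1])
    return [v[0] / norm, v[1] / norm]


if __name__ == "__main__":
    # Toy data made up for illustration: class A contains one large outlier.
    a = [(0, -1), (0, 1), (-1, 0), (1, 0), (0, 0), (40, 40)]
    b = [(5, -1), (5, 1), (4, 0), (6, 0), (5, 0)]
    # sigma=1: the outlier's weight underflows to 0.0; direction is close to [-1, 0].
    print(weighted_fisher_direction(a, b, sigma=1.0))
    # A very wide kernel gives every sample weight ~1, approximating plain LDA,
    # and the outlier pulls the direction strongly toward the diagonal.
    print(weighted_fisher_direction(a, b, sigma=1e6))
```

The kernel weights depend only on Euclidean distances, which is the intuition behind robustness to large outliers: a sample far from its class mean contributes almost nothing to either the mean or the scatter. The coordinate-wise median used for initialization is a further simplification and is not itself rotation-invariant.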

Keywords

- Mean Square Error

- Linear Discriminant Analysis

- Large Outlier

- Mean Square Error Criterion

- Average Reconstruction Error

These keywords were added by machine and not by the authors. This process is experimental and the keywords may be updated as the learning algorithm improves.

Copyright information

© 2013 Springer-Verlag Berlin Heidelberg

About this paper

Cite this paper

Zhou, W., Kamata, Si. (2013). Linear Discriminant Analysis with Maximum Correntropy Criterion. In: Lee, K.M., Matsushita, Y., Rehg, J.M., Hu, Z. (eds) Computer Vision – ACCV 2012. ACCV 2012. Lecture Notes in Computer Science, vol 7724. Springer, Berlin, Heidelberg. https://doi.org/10.1007/978-3-642-37331-2_38

DOI: https://doi.org/10.1007/978-3-642-37331-2_38

Publisher Name: Springer, Berlin, Heidelberg

Print ISBN: 978-3-642-37330-5

Online ISBN: 978-3-642-37331-2

eBook Packages: Computer Science, Computer Science (R0)