Abstract

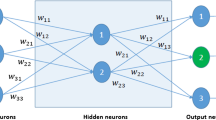

Supervised learning by perceptron networks is investigated as minimization of an empirical error functional. Input/output functions minimizing this functional require as many hidden units as there are elements in the training set, namely m. Upper bounds on rates of convergence to zero of infima over networks with n hidden units (where n is smaller than m) are derived in terms of a variational norm. It is shown that fast rates are guaranteed when the sample of data defining the empirical error can be interpolated by a function whose Sobolev-type seminorm may be rather large. Fast convergence is possible even when the seminorm depends exponentially on the input dimension.
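As a rough illustration of the objects the abstract refers to, the sketch below evaluates an empirical error for a one-hidden-layer perceptron network with n hidden units on a sample of m input/output pairs. It is only a minimal sketch under stated assumptions: the mean-squared form of the error, the logistic sigmoid activation, and all names (`perceptron_network`, `empirical_error`, `hidden`, `out_weights`) are illustrative choices, not taken from the chapter.

```python
import math


def perceptron_network(x, hidden, out_weights):
    """Output of a one-hidden-layer network with n = len(hidden) perceptrons.

    hidden      -- list of (v, b) pairs: input weight vector v and bias b
    out_weights -- list of n output weights
    The logistic sigmoid is an assumed activation function.
    """
    total = 0.0
    for (v, b), w in zip(hidden, out_weights):
        pre_activation = sum(vi * xi for vi, xi in zip(v, x)) + b
        total += w / (1.0 + math.exp(-pre_activation))
    return total


def empirical_error(sample, hidden, out_weights):
    """Mean squared error over a sample [(x_1, y_1), ..., (x_m, y_m)].

    The 1/m normalization is an assumption made for this sketch.
    """
    m = len(sample)
    return sum(
        (perceptron_network(x, hidden, out_weights) - y) ** 2
        for x, y in sample
    ) / m


# A sample of size m = 1 and a network with n = 1 hidden unit.
sample = [((0.0,), 0.5)]
hidden = [((1.0,), 0.0)]
print(empirical_error(sample, hidden, [1.0]))
```

In this toy case the single hidden unit outputs sigmoid(0) = 0.5, so an output weight of 1.0 interpolates the sample exactly. The setting of interest in the abstract is the opposite regime, where n is smaller than m and the infimum of the error over such networks is only approached.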

This work was partially supported by GA ČR grant 201/05/0557.

Copyright information

© 2005 Springer-Verlag/Wien

About this paper

Cite this paper

Kůrková, V. (2005). Minimization of empirical error over perceptron networks. In: Ribeiro, B., Albrecht, R.F., Dobnikar, A., Pearson, D.W., Steele, N.C. (eds) Adaptive and Natural Computing Algorithms. Springer, Vienna. https://doi.org/10.1007/3-211-27389-1_12

DOI: https://doi.org/10.1007/3-211-27389-1_12

Publisher Name: Springer, Vienna

Print ISBN: 978-3-211-24934-5

Online ISBN: 978-3-211-27389-0

eBook Packages: Computer Science, Computer Science (R0)