Abstract

Adaptive dynamics combines the deterministic population dynamics of groups having different trait values with a random process describing mutation, and tries to predict the course of evolution of a species of interest. One of the basic questions is which group survives, residents or mutants. Using invasion fitness as the primary tool, the “invasion implies substitution” principle, IIS principle for short, has been established under the existence of a generating function in the sense of Brown and Vincent (Theor Popul Biol 31(1):140–166, 1987) and Vincent and Brown (Evolutionary game theory, natural selection, and Darwinian dynamics. Cambridge University Press, Cambridge, 2005). This principle essentially says that the local gradient of invasion fitness ultimately determines the outcome of the competition. However, as we will see in this paper, even if a system is within the scope of the IIS principle, its neighborhood always contains systems which are beyond this scope. To overcome this limitation, we establish a wider class of systems which is still reasonable as a model of the evolution of a species. For this wider class, the notion of raw invasion fitness is introduced. In terms of raw invasion fitness, an explicit criterion for the existence of a generating function and a counterpart of the IIS principle are obtained. This enables us to discuss small perturbations of a system within or outside the scope of generating functions and the IIS principle. Eventually, we understand why invasion implies substitution, i.e. why the method using invasion fitness works well when a generating function exists, from our broader point of view.

1 Introduction

Explaining or predicting the course of evolution, in the past or in the future, has been one of the most intriguing and ambitious subjects in modern science. In the end, evolution is the outcome of the interaction between random alteration of DNA sequences, i.e. mutation, and the environment surrounding the living organism of interest.

Among numerous attempts to understand evolution, theoretical approaches using game theory and dynamical systems have been developed for almost half a century. Namely, evolutionary game theory was initiated by the celebrated work of Maynard Smith and Price (1973). Much progress has been made in this direction by Taylor and Jonker (1978), Taylor (1979) and Smith (1982), for example. Eventually, approaches to the dynamical stability of strategies arose from the theory of (continuous-time and discrete-time) dynamical systems and created the notion of evolutionarily stable strategy. See Hofbauer and Sigmund (1998), Taylor (1989), Eshel (1983) and Takada and Kigami (1991), for example. In these works, however, the authors mainly discussed dynamical systems whose phase spaces are the collection of (vector-valued) strategies, i.e. trait values of competitors. Consequently, little attention had been paid to the variation of the populations of groups having different trait values, although it is a substantial part of the process of evolution.

To take dynamics of population into account, the theory of adaptive dynamics was brought forth by the works due to Metz et al. (1996), Geritz et al. (1998) and Dieckmann and Law (1996), to name but a few. In this framework, the fitness function, which is the reproduction rate of an individual, depends on both populations and trait values of competitors. Combining the dynamical system of populations describing competition and the random process corresponding to sporadic occurrence of mutation, adaptive dynamics tries to unravel the course of gradual change of phenotypic trait value.

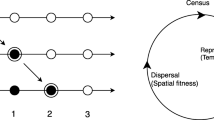

More precisely, the adaptive dynamics consists of the following two parts:

- (COM) Competition: Deterministic dynamical system which describes the competition between groups possessing different trait values.

- (MUT) Mutation: Stochastic process which describes the occurrence of mutants.

To begin with, we assume that the population is dominated by a group, called residents, with a single trait value. And then once a mutation governed by (MUT) happens, another group, called mutants, with a new trait value emerges. Then we use (COM) to determine the new dominant group, residents or mutants. Assuming that the average time of the occurrence of mutation in (MUT) is much longer than the relaxation time, i.e. the time needed to reach a new equilibrium, in (COM), one can iterate (COM) and (MUT) alternately to investigate the course of the evolution.

In this paper our main interest is the deterministic dynamical system in (COM) described as ODEs. More precisely, we are going to investigate how the outcome of the competition is determined, in other words, the global behavior of the dynamical system (COM). A typical scenario is that out of two equilibriums dominated by residents and mutants respectively, one of them is globally stable and the other is unstable.

Geritz et al. (2002) have dealt with a discrete time model of a monomorphic population having multiple attractors. In this model, there exists a pair of a resident dominant state and a mutant dominant state corresponding to each attractor of the monomorphic population. Making use of their own “tube theorem”, which corresponds to a discrete version of Lemma A.2 in this paper, they have shown that if a shift between a resident dominant state and a mutant dominant state occurs, then those states constitute one of the pairs mentioned above corresponding to a single attractor of the monomorphic population. As for continuous time models, i.e. ODE models, Geritz (2005) obtained an analogue of the tube theorem for a special class of models and proved the “invasion implies fixation theorem”, which is a counterpart of the “invasion implies substitution principle” mentioned below. Note that there exists a generating function in the sense of Brown and Vincent (1987) and Vincent and Brown (2005) for Geritz’s model and that the global stability is determined by invasion fitness, which is also called invasion exponent and is often denoted by \(s_x(y)\) in the literature, e.g. Geritz et al. (1998), Diekmann (2004) and Brännström et al. (2013).

In this direction, a remarkable achievement is the establishment of the “invasion implies substitution” principle, IIS principle for short, by Dercole and Rinaldi (2008). More precisely, they have shown that, under the assumption of the existence of a generating function, the sign of the local gradient of invasion fitness determines which group survives, residents or mutants. Note that their framework includes that of Geritz (2005). In this paper, we use \(\theta \) to denote the invasion fitness. See (2.9) for the explicit definition. Mathematically, the local gradient of the invasion fitness is an index of the local stability of the equilibrium dominated by residents. Therefore, the invasion implies substitution principle means that the local stability determines the global stability. In general, such an immediate coincidence of the local and the global stabilities is not at all obvious. So what causes such a coincidence? More generally, what is the secret of the success of the global stability analysis using the invasion fitness? Our answer will be the existence of an associated generating function in the sense of Brown and Vincent (1987) and Vincent and Brown (2005). Note that there exists an associated generating function in both frameworks of Geritz (2005) and Dercole and Rinaldi (2008). Then, the subsequent natural question is what happens without such a strong constraint as the existence of an associated generating function. In this respect, our goal is to obtain a criterion of the global stability for a class of models which are out of the scope of the invasion fitness and still reasonable as a model of competition of residents and mutants. For this purpose, we are going to introduce the notion of raw invasion fitness \(\Theta \) as a natural extension of invasion fitness and show results including:

- Theorem 3.2: The shift of global stability between the resident dominant state and the mutant dominant state is caused by uniform positivity (and negativity as well) of the local gradients of raw invasion fitness on the line segment between the two states.

- Theorem 3.9: There exists a generating function associated with a dimorphic system if and only if the local gradients of raw invasion fitness depend only on the total population of the two competing groups having different trait values.

Another reason to seek a theory beyond the scope of the invasion fitness is the question on the stability of global behavior under perturbations of an original system. For example, let us consider the following system:

where \(n_1\) and \(n_2\) are populations of the groups having trait values \(x_1\) and \(x_2\) respectively. For this model,

- In case \(a = b = 0\), this model has been extensively treated in previous studies, for example, Diekmann (2004) and Brännström et al. (2013).

- In case \(a = b\), there exists an associated generating function and hence the existing method using invasion fitness can be applied.

- In case \(a \ne b\), there exists no associated generating function and hence the existing method using invasion fitness cannot be applied.

The dichotomy between the last two cases is due to Theorem 3.9. Since a tiny perturbation to a system can easily destroy the equality \(a = b\), we need a theory beyond the paradigm of invasion fitness in order to study the stability of the global behavior under small perturbations of a system. Indeed, by our results, we do see that the global behavior of (1.1) is stable under small perturbations of the parameters a, b and c as long as \((b - c)(a + b - 2c) \ne 0\). See Sect. 3.3 for details.

2 Frameworks and rough description of results

To illuminate our aims and to fix ideas, let us clarify our terminology and frameworks. A monomorphic system is the following system (2.1) of ordinary differential equations, ODEs for short, which describes the time evolution of a population consisting of individuals with a single trait value x,

where n is the population, x is the parameter representing trait value and N is the external environmental factor.

A dimorphic system is the system of ODEs describing the time evolution of a population consisting of individuals with two trait values under an external environmental factor N. In this case the population is divided into two groups depending on trait values and those groups compete with each other. Let \((n_1, x_1)\) and \((n_2, x_2)\) be the pairs of the population and the trait value of the two competing groups. In this paper we assume that the growth rate of the population of the group having trait value \(x_1\) is given by

and hence

For simplicity, set

Then the complete expression of a dimorphic system is

where

should hold since the effect of \((n_1, x_1)\) and \((n_2, x_2)\) on the growth of N does not depend on their order. We call the system of ODEs (2.3) satisfying the symmetry (2.4) a dimorphic system.

As a model of the competition of two groups within a single species, it is natural to impose two relations between a monomorphic system (2.1) and a dimorphic system (2.3) :

First, if \(n_2 = 0\) in (2.3), then no competition is present and hence the system is reduced to a monomorphic system (2.1). Namely it is required that

Secondly if the trait values \(x_1\) and \(x_2\) are equal in (2.3), then it should be reduced to the monomorphic system (2.1) with \(n = n_1 + n_2\). Mathematically, it is required that

As a whole, our mathematical framework of this paper is the systems of ODEs, (2.3) and a monomorphic system (2.1) with the properties of (2.5) and (2.6). Such a pair of a monomorphic system and a dimorphic system is called a 2-hierarchical system, whose exact definition is as follows.

Definition 2.1

(2-hierarchical system) A pair \(((f_1, q_1), (f_2, q_2))\) is called a 2-hierarchical system if and only if \((f_1, q_1)\) is a monomorphic system, \((f_2, q_2)\) is a dimorphic system and the consistency conditions (2.5) and (2.6) are satisfied.
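The two consistency requirements can be checked numerically for a concrete candidate. The following is a minimal sketch, assuming a hypothetical Lotka-Volterra-type competition model: the functions `r`, `alpha`, `f1` and `f2` are illustrative choices and are not taken from this paper. It also assumes that `f2(n1, n2, N, x1, x2)` denotes the growth rate of the group with trait value `x1`. The code tests the two verbal requirements stated above: setting `n2 = 0` recovers the monomorphic growth rate, and setting `x1 = x2` recovers it with `n = n1 + n2`.

```python
import math

def r(x):
    # Hypothetical intrinsic growth rate depending on the trait value.
    return 1.0 + 0.5 * math.tanh(x)

def alpha(x, y):
    # Hypothetical competition kernel; alpha(x, x) = 1 by construction.
    return math.exp(-(x - y) ** 2)

def f1(n, N, x):
    # Monomorphic growth rate (N is carried along but unused in this toy model).
    return r(x) - n

def f2(n1, n2, N, x1, x2):
    # Dimorphic growth rate of the group with trait x1 (assumed convention).
    return r(x1) - n1 - alpha(x1, x2) * n2

def consistent(samples, tol=1e-12):
    for n1, n2, N, x1, x2 in samples:
        # No second group present: reduce to the monomorphic rate with trait x1.
        if abs(f2(n1, 0.0, N, x1, x2) - f1(n1, N, x1)) > tol:
            return False
        # Equal traits: both groups act as one population of size n1 + n2.
        if abs(f2(n1, n2, N, x1, x1) - f1(n1 + n2, N, x1)) > tol:
            return False
    return True

samples = [
    (0.3, 0.7, 1.0, -0.2, 0.4),
    (1.5, 0.1, 2.0, 0.0, 1.0),
    (0.9, 0.9, 0.5, 0.8, -0.3),
]
print(consistent(samples))
```

A check on finitely many sample points is of course only a sanity test, not a proof that the candidate pair forms a 2-hierarchical system.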

For the sake of simplicity, we are going to assume that for all parameter x, a monomorphic system (2.1) has a unique equilibrium point \((\widehat{n}_x, \widehat{N}_x)\) which is a global attractor, i.e. \(\lim _{t \rightarrow \infty } (n(t), N(t)) = (\widehat{n}_x, \widehat{N}_x)\) for every nonnegative solution (n(t), N(t)) of (2.1). (See Assumption 3.1 for the precise statement.)

The course of events in this model is as follows: suppose that the population is dominated by residents with a single trait value \(x_*\), i.e. the monomorphic system (2.1) with the parameter \(x_*\) stays at the stable equilibrium \((\widehat{n}_{x_*}, \widehat{N}_{x_*})\). At a certain point, a mutation occurs and a small group of mutants with a new trait value x is introduced into the system. Accordingly, the system of interest changes to the dimorphic system with parameters \(x_*\) and x starting from near the equilibrium \((\widehat{n}_{x_*}, 0, \widehat{N}_{x_*})\), which is not necessarily stable as a dimorphic system. Our main interest is to analyze the global behavior of this dimorphic system (2.3). In particular, the plausible outcomes of concern are the following three, (A), (B) and (C):

(A) The equilibrium \((\widehat{n}_{x_*}, 0, \widehat{N}_{x_*})\) is globally stable, i.e. mutants eventually go extinct and the system returns to the monomorphic system with the original trait value \(x_*\).

(B) The new trait value x is superior to the original one \(x_*\) and eventually mutants will dominate the population. In our framework, this means that the equilibrium \((0, \widehat{n}_x, \widehat{N}_x)\) is globally stable and every solution of (2.3) starting near the original equilibrium \((\widehat{n}_{x_*}, 0, \widehat{N}_{x_*})\) is going to converge to \((0, \widehat{n}_x, \widehat{N}_x)\).

(C) Two trait values \(x_*\) and \(x\) are going to coexist in a certain proportion, i.e. there exists a stable equilibrium \((\widetilde{n}_{x_*}, \widetilde{n}_x, \widetilde{N})\) of (2.3) such that \(\widetilde{n}_{x_*}, \widetilde{n}_x > 0\) and every solution starting near the original equilibrium \((\widehat{n}_{x_*}, 0, \widehat{N}_{x_*})\) is going to converge to \((\widetilde{n}_{x_*}, \widetilde{n}_x, \widetilde{N})\).

Our main results are as follows:

(I) First, we have established a sufficient condition for the global shift between (A) and (B). To be exact, we introduce the notion of raw invasion fitness \(\Theta (n_1, n_2, N, x_1, x_2)\) as the difference between the fitnesses of mutants and residents, i.e.

Note that \(\Theta = 0\) on the line segment \(L(x_*)\) defined by

The “classical” invasion fitness \(\theta (\cdot , \cdot )\) turns out to be a special value of our raw invasion fitness as follows:

Our theorem, Theorem 3.2, shows that if

then as x crosses \(x_*\), (B) happens while \(x_* < x\) and (A) happens while \(x < x_*\). Under our condition (2.10), \(\Theta \) is uniformly positive (resp. negative) around \(L(x_*)\) when \(x_* < x\) (resp. \(x < x_*\)). Thus our result matches the intuition that whichever has the higher fitness wins the competition.
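As a hedged illustration of how the raw invasion fitness and the classical one relate, the sketch below evaluates both for a hypothetical Lotka-Volterra-type model (the functions `r`, `alpha` and `f2` are assumptions made for this example only). Two readings of the text are assumed here: the fitness of the mutants is obtained from `f2` by swapping the roles of the two groups, and the classical invasion fitness is the raw invasion fitness evaluated at the resident dominant equilibrium with no mutants present.

```python
import math

def r(x):
    # Hypothetical intrinsic growth rate.
    return 1.0 + 0.5 * math.tanh(x)

def alpha(x, y):
    # Hypothetical competition kernel with alpha(x, x) = 1.
    return math.exp(-(x - y) ** 2)

def f2(n1, n2, N, x1, x2):
    # Growth rate of the group with trait x1 (assumed convention).
    return r(x1) - n1 - alpha(x1, x2) * n2

def raw_invasion_fitness(n1, n2, N, x1, x2):
    # Fitness of mutants (trait x2) minus fitness of residents (trait x1).
    return f2(n2, n1, N, x2, x1) - f2(n1, n2, N, x1, x2)

def invasion_fitness(x_res, x_mut):
    # In this toy model the monomorphic equilibrium is n_hat = r(x_res);
    # the environmental factor plays no role and is set to 1.
    n_hat = r(x_res)
    return raw_invasion_fitness(n_hat, 0.0, 1.0, x_res, x_mut)

# Theta vanishes whenever the two trait values coincide.
print(raw_invasion_fitness(0.3, 0.7, 1.0, 0.2, 0.2))
# The sign of theta changes as the mutant trait crosses the resident trait.
print(invasion_fitness(0.0, 0.1), invasion_fitness(0.0, -0.1))
```

In this toy model the mutant with the larger trait value has positive invasion fitness against a resident at `x = 0`, and the one with the smaller trait value has negative invasion fitness.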

(II) Secondly, we are going to show that the invasion implies substitution principle, IIS principle for short, by Dercole and Rinaldi (2008) can be obtained as a corollary of our general result mentioned above. More specifically, we introduce the notion of a trimorphic system, which is a system of ODEs describing the competition of three groups having different trait values. As in the case of a 2-hierarchical system, we call a consistent triple of a monomorphic system, a dimorphic system and a trimorphic system a 3-hierarchical system. In this terminology, a careful examination of the discussions by Dercole and Rinaldi (2008) shows that their result can be divided into the following two steps:

- If a dimorphic system is a part of a 3-hierarchical system, then there exists a generating function associated with the dimorphic system.

- If there exists a generating function associated with a dimorphic system, then the IIS principle holds.

The notion of generating function has been introduced by Brown and Vincent (1987) and Vincent and Brown (2005). In fact, we are going to present a characterization of the existence of generating function in terms of raw invasion fitness \(\Theta \). Namely, in Theorem 3.9, the existence of associated generating function is shown to be equivalent to the condition that \(\frac{\partial \Theta }{\partial {x_2}}(n_1, n_2, N, x_1, x_1)\) only depends on the values of \(n_1 + n_2\), N and \(x_1\). In view of (2.9), this characterization will lead to IIS principle under the existence of generating function. See Sect. 3.2 for details. Furthermore, we are going to present the following relations of classes of dimorphic systems, where we use DMS as an abbreviation of a dimorphic system:

The proper subset symbol (1) is derived from Theorem 3.7 and Proposition 3.10.

The equality (2) is concluded by Theorems 3.2, 3.8 and 3.9.

The proper subset symbol (3) is due to Propositions 3.6 and 3.12.

The equality (4) is due to Theorem 3.2.

(III) Thirdly, we have obtained a simple class of examples which have no associated generating functions and where the IIS principle may fail. Precisely, our example is the following 2-hierarchical system:

Although the third equation has nothing to do with the first and second ones, we put it here for the sake of formality to match (2.8) with (2.3). Immediately, one sees \(\widehat{N} = 1\). Furthermore since the associated monomorphic system (2.1) is

it follows that \(\widehat{n}_x = 1\) for any x. In this class, an associated generating function exists if and only if \(a = b\). If

then we can apply our result in part (I) and show the shift of global stability between the resident dominant and the mutant dominant equilibriums. On the contrary, if

\(x_2 < x_1\) and \(x_2\) is sufficiently close to \(x_1\), then (C) occurs, i.e. there exists a stable equilibrium \((\widetilde{n}_{x_1}, \widetilde{n}_{x_2}, 1)\) such that \(\widetilde{n}_{x_1}, \widetilde{n}_{x_2} > 0\) and any solution starting from near the resident dominant equilibrium (1, 0, 1) converges to \((\widetilde{n}_{x_1}, \widetilde{n}_{x_2}, 1)\). Consequently, IIS principle fails in this example. Note that due to the above result (II), this won’t happen under the existence of a generating function. See Sect. 3.3 for exact statements.

3 Main results

In this section, we are going to give exact statements of our results in three subsections corresponding to the rough descriptions (I), (II) and (III) in the last section. In Sect. 3.1, first we provide the notion of shift of global stability called SU-shift and US-shift, where the symbols “S” and “U” stand for “stable” and “unstable” respectively, and give a sufficient condition for shifts of stability as we outlined in (I). In Sect. 3.2, assuming the existence of a generating function, we obtain the IIS principle originally proven by Dercole and Rinaldi as a corollary of Theorem 3.2 in Sect. 3.1 by using the characterization of the existence of a generating function obtained in Theorem 3.9. In the last Sect. 3.3, we give a simple class of examples where we observe the whole variety of plausible outcomes (A), (B) and (C) described in Sect. 2.

Our domain for a monomorphic system (2.1) is

where the variables n, N, and x represent a population, an external environmental factor and a trait value respectively. The external environmental factor may represent amount of available nutrition, population of a predator species and so on. The functions \(f_1\) and \(q_1\) are assumed to be smooth, to be exact, \(C^{\infty }\) in a neighborhood of \(\mathcal {U}_1\), i.e. they can be extended to open neighborhoods of \(\mathcal {U}_1\) and are \(C^{\infty }\) in their extended domains.

In the similar manner, the domain for a dimorphic system (2.3) is

where \(n_1\) (resp. \(n_2\)) represents a population of individuals with a trait value \(x_1\) (resp. \(x_2\)) and N represents an external environmental factor. Note that the first trait value \(x_1\) belongs to residents and the second one belongs to mutants in our model. The functions \(f_2\) and \(q_2\) are assumed to be sufficiently smooth, to be exact, \(C^{\infty }\) in a neighborhood of \(\mathcal {U}_2\), i.e. they can be extended to open neighborhoods of \(\mathcal {U}_2\) and are \(C^{\infty }\) on their extended domains.

Furthermore, for the sake of simplicity of statements, we assume the following property.

Assumption 3.1

For any \(x \in \mathbb {R}\), there exists a global attractor \((\widehat{n}_x, \widehat{N}_x)\) such that any solution (n(t), N(t)) of a monomorphic system (2.1) converges to \((\widehat{n}_x, \widehat{N}_x)\) as \(t \rightarrow \infty \). Moreover, \((\widehat{n}_x, \widehat{N}_x)\) is hyperbolic and the real parts of all the eigenvalues of the linearization of (2.1) at \((\widehat{n}_x, \widehat{N}_x)\),

are negative.

The second assumption about eigenvalues of the linearization ensures the local stability of the equilibrium \((\widehat{n}_x, \widehat{N}_x)\).
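The eigenvalue condition can be tested numerically for a given monomorphic system. The following is a minimal sketch, assuming a hypothetical consumer-resource model in which the trait value `x` in (0, 1) acts as a mortality rate; the right-hand side `rhs` and its equilibrium are illustrative assumptions, not the system (2.1) of this paper. The sketch approximates the linearization by central differences and reads off the eigenvalues of the resulting 2 by 2 matrix.

```python
import cmath

def rhs(n, N, x):
    # Hypothetical model: dn/dt = n (N - x), dN/dt = 1 - N - n N.
    return (n * (N - x), 1.0 - N - n * N)

def jacobian(n, N, x, h=1e-6):
    # Central finite differences of the right-hand side in n and N.
    n_plus, n_minus = rhs(n + h, N, x), rhs(n - h, N, x)
    N_plus, N_minus = rhs(n, N + h, x), rhs(n, N - h, x)
    return [
        [(n_plus[0] - n_minus[0]) / (2 * h), (N_plus[0] - N_minus[0]) / (2 * h)],
        [(n_plus[1] - n_minus[1]) / (2 * h), (N_plus[1] - N_minus[1]) / (2 * h)],
    ]

def eigenvalues_2x2(J):
    # Roots of the characteristic polynomial lambda^2 - tr * lambda + det.
    tr = J[0][0] + J[1][1]
    det = J[0][0] * J[1][1] - J[0][1] * J[1][0]
    disc = cmath.sqrt(tr * tr - 4.0 * det)
    return ((tr + disc) / 2.0, (tr - disc) / 2.0)

x = 0.5
n_hat, N_hat = (1.0 - x) / x, x      # positive equilibrium of the toy model
eigs = eigenvalues_2x2(jacobian(n_hat, N_hat, x))
print(eigs, all(e.real < 0 for e in eigs))
```

This only verifies hyperbolicity and local stability of the equilibrium; the global attractor part of Assumption 3.1 is a separate requirement that such a local computation does not address.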

Even without the assumption that \((\widehat{n}_{x_*}, \widehat{N}_{x_*})\) is a global attractor, if the eigenvalues of (3.1) have negative real parts, then our theorems in this paper still hold with some (rather complicated but non-essential) modifications in the statements.

3.1 Shifts of stability

The shift of global stability in this paper means the global transition between the resident dominant state and the mutant dominant state as the trait value of mutants varies around the trait value of residents. Throughout this subsection, we consider a 2-hierarchical system \(((f_1, q_1), (f_2, q_2))\) satisfying Assumption 3.1. To present an explicit statement, we need the notion of a tubular neighborhood \(S_{\epsilon }(x_*)\) of \(L(x_*)\) defined as

where \(B(x, \epsilon )\) is a Euclidean ball given by \(\{y : |x - y| < \epsilon \}\).

Definition 3.1

Let \(x_* \in \mathbb {R}\). We say that SU-shift (resp. US-shift) occurs at \(x_*\) if there exist \(\epsilon , \delta > 0\) such that if \(\{(n_1(t), n_2(t), N(t))\}_{t \ge 0}\) is a solution of the dimorphic system (2.3) and \((n_1(0), n_2(0), N(0)) \in S_{\epsilon }(x_*)\), then

whenever \(x_1 = x_*\) and \(x_2 \in (x_* - \delta , x_*)\) (resp. \(x_2 \in (x_*, x_* + \delta )\)) and

whenever \(x_1 = x_*\) and \(x_2 \in (x_*, x_* + \delta )\) (resp. \(x_2 \in (x_* - \delta , x_*)\)).

Recall that the trait values \(x_*\) and \(x_2\) belong to residents and mutants respectively. The statement (S) (resp. (U)) means that any solution starting from near \(L(x_*)\) converges to the resident (resp. mutant) dominant state \((\widehat{n}_{x_*}, 0, \widehat{N}_{x_*})\) (resp. \((0, \widehat{n}_{x_2}, \widehat{N}_{x_2})\)) as \(t \rightarrow \infty \). Thus SU-shift and US-shift at \(x_*\) are global qualitative transitions between (S) and (U) with the critical value \(x_*\).

The following theorem gives a sufficient condition for shift of global stability.

Theorem 3.2

Let \(x_* \in \mathbb {R}\). SU-shift (resp. US-shift) occurs at \(x_*\) if the following condition (3.2) [resp. (3.3)] holds;

We will prove this theorem in “Appendix A”. Here we give a rough idea why it is true. Note that \(\Theta = 0\) if \(x_2 = x_*\). Hence

if \(x_2\) is sufficiently close to \(x_*\). Suppose that (3.2) is true. If \(x_2 < x_*\), then \(\Theta < 0\) in a small neighborhood of \(L(x_*)\), i.e. \(S_{\epsilon }(x_*)\). Namely, the fitness of residents is uniformly higher than that of mutants. Consequently, residents will be dominant. If \(x_2 > x_*\), then everything becomes opposite and so we have SU-shift.
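The heuristic above can be observed in a simulation. The following is a minimal sketch, assuming a hypothetical dimorphic system in which the group with trait value `x_i` grows at the rate `1 + x_i - (n1 + n2)`, so that the raw invasion fitness equals `x_mut - x_res` and its gradient in the mutant trait is identically 1. The environmental factor is omitted for brevity, and the explicit Euler scheme, the step size and the time horizon are illustrative choices.

```python
def simulate(x_res, x_mut, n_mut0=0.01, t_end=200.0, dt=0.01):
    # Start near the resident dominant equilibrium (1 + x_res, 0).
    n1, n2 = 1.0 + x_res, n_mut0
    for _ in range(round(t_end / dt)):
        total = n1 + n2
        d1 = n1 * (1.0 + x_res - total)   # residents
        d2 = n2 * (1.0 + x_mut - total)   # mutants
        n1, n2 = n1 + dt * d1, n2 + dt * d2   # explicit Euler step
    return n1, n2

# Mutant trait slightly below the resident trait: residents stay dominant.
below = simulate(0.0, -0.1)
# Mutant trait slightly above the resident trait: mutants take over.
above = simulate(0.0, 0.1)
print(below, above)
```

With the resident trait fixed at 0, the two runs end near the resident dominant state and the mutant dominant state respectively, which is the qualitative picture of an SU-shift in this toy model.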

3.2 Invasion implies substitution principle

In this subsection, we are going to show that the “invasion implies substitution” principle, IIS principle for short, can be obtained as a corollary of our Theorem 3.2. As we have mentioned in the introduction, the essential claim of the IIS principle is that the local stability of the resident dominant equilibrium determines the global stability of both the resident dominant and the mutant dominant equilibriums. To begin with, let us make an observation on the local stability of the resident dominant equilibrium \((\widehat{n}_{x_*}, 0, \widehat{N}_{x_*})\) of a dimorphic system (2.3) with parameters \((x_*, x_2)\). One can easily see that the eigenvalues of the linearization of (2.3) at \((\widehat{n}_{x_*}, 0, \widehat{N}_{x_*})\) are given by the eigenvalues of (3.1) and the invasion fitness \(\theta (x_*, x_2)\). By Assumption 3.1, the sign of \(\theta (x_*, x_2)\) determines the local stability of \((\widehat{n}_{x_*}, 0, \widehat{N}_{x_*})\), i.e. if \(\theta (x_*, x_2) > 0\) (resp. \(\theta (x_*, x_2) < 0\)), then it is locally unstable (resp. stable).
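The block structure behind this observation can be written out as follows. This is a sketch under an assumption about the form of the population equations, namely \(\dot{n}_1 = n_1 f_2(n_1, n_2, N, x_*, x_2)\) and \(\dot{n}_2 = n_2 f_2(n_2, n_1, N, x_2, x_*)\), with the variables ordered as \((n_1, N, n_2)\). The symbol \(A\) stands for the linearization (3.1) of the monomorphic system, which appears in the upper left block because the dimorphic system reduces to the monomorphic one when \(n_2 = 0\), and \(*\) stands for entries that do not affect the eigenvalues.

```latex
\begin{aligned}
\left. \frac{\partial }{\partial n_1}\bigl[ n_2 f_2(n_2, n_1, N, x_2, x_*) \bigr] \right| _{n_2 = 0}
  &= 0, \qquad
\left. \frac{\partial }{\partial N}\bigl[ n_2 f_2(n_2, n_1, N, x_2, x_*) \bigr] \right| _{n_2 = 0} = 0, \\
\left. \frac{\partial }{\partial n_2}\bigl[ n_2 f_2(n_2, n_1, N, x_2, x_*) \bigr] \right| _{(\widehat{n}_{x_*}, 0, \widehat{N}_{x_*})}
  &= f_2(0, \widehat{n}_{x_*}, \widehat{N}_{x_*}, x_2, x_*), \\
J(\widehat{n}_{x_*}, 0, \widehat{N}_{x_*})
  &= \begin{pmatrix} A & * \\ 0 & f_2(0, \widehat{n}_{x_*}, \widehat{N}_{x_*}, x_2, x_*) \end{pmatrix}.
\end{aligned}
```

Since the matrix is block triangular, its eigenvalues are those of \(A\) together with the lower right entry, the growth rate of a vanishingly rare mutant at the resident equilibrium, which corresponds to the invasion fitness \(\theta (x_*, x_2)\) named above.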

At this point, we are going to revisit the original proof of IIS principle by Dercole and Rinaldi (2008, “Appendix B”). They started with a 2-hierarchical system and assumed the existence of a trimorphic system behind, which has turned out to be the key to fill the gap between local and global stabilities.

A trimorphic system is a system of ODEs representing competition of three groups inside a single species having three (different) trait values. As in a dimorphic system, let \((n_i, x_i)\) for \(i = 1, 2, 3\) be the pair of the population and the trait value of the i-th group and let N be an external environmental factor. Suppose \(\{i, j, k\} = \{1, 2, 3\}\). Then it is natural to assume that the fitness of a group i is affected only by the current state of itself, \((n_i, x_i)\), the current state of the opponent groups, \(\{(n_j, x_j), (n_k, x_k)\}\), and the external environmental factor N including the possible effect of other living organisms. As a result, the fitness of the group having the trait value \(x_i\) must be written as

Consequently, the time evolution of the group i is governed by

By the same line of reasoning, we assume that the growth rate of the external environmental factor N is given by

Consequently the time evolution of N is governed by

Hence if we introduce functions \(f_3: (\mathbb {R}_+)^3 \times \mathbb {R}\times \mathbb {R}^3 \rightarrow \mathbb {R}\) and \(q_3: (\mathbb {R}_+)^3 \times \mathbb {R}\times \mathbb {R}^3 \rightarrow \mathbb {R}\) as

and

then the full equation of a trimorphic system is

Additionally, since the values of \(F_*\) given by (3.5) and H given by (3.6) are independent of the order of j and k and the order of i, j and k respectively, it is natural to assume that

and

where (i, j, k) is an arbitrary permutation of (1, 2, 3).

Definition 3.3

(Trimorphic system) Write \(\mathcal {U}_3 = (\mathbb {R}_+)^3 \times \mathbb {R}\times \mathbb {R}^3\). A system of ODEs (3.7) is called a trimorphic system if and only if \(f_3\) and \(q_3\) are \(C^{\infty }\) functions defined on a neighborhood of \(\mathcal {U}_3\) and satisfy (3.8) and (3.9) on \(\mathcal {U}_3\) respectively.

There are natural consistency conditions (ET) and (CT) between a dimorphic system and a trimorphic system as was the case between a monomorphic system and a dimorphic system.

(ET) Extinction of a trait value: If \(n_3 = 0\), then the third group is no longer existent and the system becomes dimorphic with the groups of trait values \(x_1\) and \(x_2\). Mathematically, this requires

and

(CT) Coincidence of trait values: In case two trait values coincide, then two groups sharing the same trait value behave as one. Hence the system becomes dimorphic. Mathematically this requires

and

There are other variations like the case \(n_1 = 0\) or \(x_3 = x_1\), but the associated mathematical relations are all deduced from the above requirements in (CT) and (ET) due to the symmetries (3.8) and (3.9).

The conditions in (ET) and (CT) ensure the consistency between a dimorphic system and a trimorphic system.

Now, we fix terminologies without ambiguity.

Definition 3.4

(1) A triple \(((f_1, q_1), (f_2, q_2), (f_3, q_3))\) is called a 3-hierarchical system if and only if \(((f_1, q_1), (f_2, q_2))\) is a 2-hierarchical system, \((f_3, q_3)\) is a trimorphic system defined in Definition 3.3 and all the consistency conditions (3.10), (3.11), (3.12), (3.13) and (3.14) are satisfied for any \((n_1, n_2, n_3, N, x_1, x_2, x_3) \in \mathcal {U}_3\).

(2) \(f \in C^{\infty }(\mathcal {U}_2)\) is said to be a part of a 2-hierarchical system if and only if there exists a 2-hierarchical system, \(((f_1, q_1), (f_2, q_2))\), such that \(f = f_2\).

(3) \(f \in C^{\infty }(\mathcal {U}_2)\) is said to be a part of a 3-hierarchical system if and only if there exists a 3-hierarchical system, \(((f_1, q_1), (f_2, q_2),(f_3, q_3))\), such that \(f = f_2\).

There is one more notion playing a key role, which is the notion of generating function introduced by Brown and Vincent (1987) and Vincent and Brown (2005).

Definition 3.5

(Generating function)

(1) A function \(G:\mathcal {U}_2 \times \mathbb {R}\rightarrow \mathbb {R}\) which is \(C^{\infty }\) in a neighborhood of \(\mathcal {U}_2 \times \mathbb {R}\), is called a generating function if and only if it satisfies the following conditions (G1), (G2) and (G3).

(G1) For any \(n_1 >0\), \(x_1, x_2 \in \mathbb {R}\) and \(N \in \mathbb {R}\),

$$\begin{aligned} G(n_1, 0, N, x_1, x_2, x_1) = G(n_1, 0, N, x_1, x_1, x_1) \end{aligned}$$ (3.15)

(G2) For any \(s > 0\), \(r_1, r_2 \in [0, 1]\) and \(N, x, y \in \mathbb {R}\),

$$\begin{aligned} G((1-r_1)s, r_1s, N, x, x, y) = G((1 - r_2)s, r_2s, N, x, x, y) \end{aligned}$$ (3.16)

(G3) For any \((n_1,n_2,N,x_1,x_2,y)\in \mathcal {U}_2\times \mathbb {R}\),

$$\begin{aligned} G(n_1,n_2,N,x_1,x_2,y) = G(n_2,n_1,N,x_2,x_1,y). \end{aligned}$$ (3.17)

(2) \(f \in C^{\infty }(\mathcal {U}_2)\) is said to have an associated generating function if and only if there exists a generating function \(G: \mathcal {U}_2 \times \mathbb {R}\rightarrow \mathbb {R}\) such that

$$\begin{aligned} f(n_1, n_2, N, x_1, x_2) = G(n_1, n_2, N, x_1, x_2, x_1) \end{aligned}$$

for any \((n_1, n_2, N, x_1, x_2) \in \mathcal {U}_2\). In this situation, G is called a generating function associated with f.

As a model, the variables \(n_1, n_2, N, x_1, x_2\) have the same roles as before. The sixth variable y has been called virtual strategy in Brown and Vincent (1987) and Vincent and Brown (2005).

The conditions (G1), (G2) and (G3) correspond to the conditions (P1), (P2) and (P3) in Dercole and Geritz (2016), where their definition of a generating function contains an additional condition (P4).
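Conditions (G1), (G2) and (G3) can be checked on sample points for a candidate function. The sketch below does this for a hypothetical Lotka-Volterra-type candidate `G`, in which a focal individual with virtual strategy `y` competes with both groups through a kernel `alpha`; the functions `r`, `alpha` and `G` are assumptions made for illustration and are not taken from this paper.

```python
import math

def r(y):
    # Hypothetical intrinsic growth rate of the focal strategy y.
    return 1.0 + 0.5 * math.tanh(y)

def alpha(y, x):
    # Hypothetical competition kernel with alpha(x, x) = 1.
    return math.exp(-(y - x) ** 2)

def G(n1, n2, N, x1, x2, y):
    # Candidate generating function; the sixth argument is the virtual strategy.
    return r(y) - alpha(y, x1) * n1 - alpha(y, x2) * n2

def close(a, b):
    return math.isclose(a, b, rel_tol=0.0, abs_tol=1e-12)

def check_G1(n1, N, x1, x2):
    # (3.15): with n2 = 0 the trait of the absent group does not matter.
    return close(G(n1, 0.0, N, x1, x2, x1), G(n1, 0.0, N, x1, x1, x1))

def check_G2(s, r1, r2, N, x, y):
    # (3.16): with equal traits only the total population s matters.
    return close(G((1 - r1) * s, r1 * s, N, x, x, y),
                 G((1 - r2) * s, r2 * s, N, x, x, y))

def check_G3(n1, n2, N, x1, x2, y):
    # (3.17): swapping the two groups leaves G unchanged.
    return close(G(n1, n2, N, x1, x2, y), G(n2, n1, N, x2, x1, y))

def f(n1, n2, N, x1, x2):
    # Growth rate obtained by setting the virtual strategy equal to x1.
    return G(n1, n2, N, x1, x2, x1)

print(check_G1(0.8, 1.0, 0.1, -0.6),
      check_G2(1.3, 0.2, 0.9, 1.0, 0.4, -0.1),
      check_G3(0.5, 0.7, 1.0, 0.3, -0.2, 0.6))
```

Here `f` reduces to `r(x1) - n1 - alpha(x1, x2) * n2`, a classical competition model, so this candidate illustrates how a generating function packages the growth rates of both groups into one function of the virtual strategy.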

Let G be a generating function. If \(f_1\) and \(f_2\) are defined by

and

then, with appropriate choice of \(q_1\) and \(q_2\), \(q_1 \equiv 0\) and \(q_2 \equiv 0\) for example, \(((f_1, q_1), (f_2, q_2))\) is a 2-hierarchical system. Thus we have the following fact.

Proposition 3.6

If \(f \in C^{\infty }(\mathcal {U}_2)\) has an associated generating function, then f is a part of a 2-hierarchical system.

In our terminology, the two steps of the argument by Dercole and Rinaldi (2008) mentioned in Sect. 2 can be stated as the following two theorems, Theorems 3.7 and 3.8.

Theorem 3.7

(Dercole and Rinaldi 2008) If \(f \in C^{\infty }(\mathcal {U}_2)\) is a part of a 3-hierarchical system, then f has an associated generating function.

The following proof of the above theorem is based on the idea of Dercole and Rinaldi.

Proof

Let \(((f_1, q_1), (f_2, q_2), (f_3, q_3))\) be a 3-hierarchical system and \(f = f_2\). Set

Then by (3.12),

Therefore, by (2.5)

and hence we have (G1). By (3.13), it follows that

This immediately implies (G2). Moreover, we have (G3) by (3.8). Thus we have shown that G is a generating function associated with f. \(\square \)

Theorem 3.8

(Dercole and Rinaldi’s IIS principle) Let \(((f_1, q_1), (f_2, q_2))\) be a 2-hierarchical system and \(f_2\) have an associated generating function. If

is positive (resp. negative), then SU-shift (resp. US-shift) occurs at \(x_*\).

Let us clarify how this theorem is deduced from our main theorem, Theorem 3.2. By (2.9), it follows that

Since \(\theta (x_*, x_*) = 0\), we see that

So, in the case \(\frac{\partial {\theta }}{\partial {x_2}}(x_*, x_*) > 0\) for example, the local stability of \((\widehat{n}_{x_*}, 0, \widehat{N}_{x_*})\) changes from stable to unstable as \(x_2\) crosses \(x_*\) from below. Comparing this with (3.4) and our Theorem 3.2, one can clearly recognize the gap between local and global stability. Namely, the shift of global stability is determined by the sign of \(\frac{\partial \Theta }{\partial {x_2}}\) on the whole line segment \(L(x_*)\), while the change of local stability is determined by its sign at the single point \((\widehat{n}_{x_*}, 0, \widehat{N}_{x_*}, x_*, x_*)\) in \(L(x_*)\).

So, how can the sign of a value at one point determine the sign at every point of \(L(x_*)\)? Our answer is simple: if \(f_2\) has an associated generating function, then \(\frac{\partial {\Theta }}{\partial {x_2}}\) is constant on \(L(x_*)\), so that its value at the single point \((\widehat{n}_{x_*}, 0, \widehat{N}_{x_*}, x_*, x_*)\) equals its value at every point of \(L(x_*)\). In fact, this property is the essence of a generating function, as the next theorem says.

Theorem 3.9

Let \(((f_1, q_1), (f_2, q_2))\) be a 2-hierarchical system. Then \(f_2\) has an associated generating function if and only if \(\frac{\partial \Theta }{\partial {x_2}}(n_1, n_2, N, x_1, x_1)\) only depends on the values of \(n_1 + n_2\), N and \(x_1\).

This theorem will be proven in “Appendix C”.

Given this theorem, it is now clear that Dercole and Rinaldi’s IIS principle is an immediate corollary of our main theorem, Theorem 3.2 as follows.

Proof of Theorem 3.8

Assume that \(((f_1, q_1), (f_2, q_2))\) is a 2-hierarchical system and \(f_2\) has an associated generating function. Then by Theorem 3.9, it follows that \(\frac{\partial \Theta }{\partial {x_2}}\) is constant on \(L(x_*)\). Hence it coincides with \(\frac{\partial {\theta }}{\partial {x_2}}(x_*, x_*)\). Now Theorem 3.2 immediately yields the desired conclusions. \(\square \)

At this point, we have fulfilled our original aim of this section, which has been to show that the original IIS principle of Dercole and Rinaldi can be obtained as a corollary of our main theorem. There still remain, however, intriguing questions concerning generating functions. One of them is the converse of Theorem 3.7: if f has an associated generating function, then is f a part of a 3-hierarchical system? This turns out to be false since we have the following counterexample.

Proposition 3.10

Define

Then f is a part of a 2-hierarchical system for any \(\alpha , \beta \) and \(\gamma \). Furthermore,

(1) f has an associated generating function if and only if \(\beta + \gamma = 3\alpha \).

(2) f is a part of a 3-hierarchical system if and only if \(\alpha = \gamma \) and \(\beta = 2\alpha \).

So, if \(\alpha = 1, \beta = 0\) and \(\gamma = 3\) for example, then f has an associated generating function but it is not a part of any 3-hierarchical system. The key fact used to show Proposition 3.10 is the following theorem.

Theorem 3.11

A smooth function \(f: \mathcal {U}_2 \rightarrow \mathbb {R}\) is a part of a 3-hierarchical system if and only if there exist smooth functions \(\xi , f_*: \mathcal {U}_1 \rightarrow \mathbb {R}\) and \(\rho : \mathcal {U}_2 \rightarrow \mathbb {R}\) satisfying

for any \((n_1, n_2, N, x_1, x_2) \in \mathcal {U}_2\).

A generalized version of this theorem in the case of \(\mathcal {U}_k\) will be proven in “Appendix B”.

Proof of Proposition 3.10

Set \(f_1(n_1, N, x_1) = 1 - n_1\), \(q_1(n_1, N, x_1) = N(1 - N)\) and \(q_2(n_1, n_2, N, x_1, x_2) = N(1 - N)\). Then \(((f_1, q_1), (f, q_2))\) is a 2-hierarchical system.

(1) Set \(\varphi (n_1, n_2) = \alpha (n_1)^2 + {\beta }n_1n_2 + {\gamma }(n_2)^2\) and define

$$\begin{aligned} G(n_1, n_2, N, x_1, x_2, y) &= 1 - (n_1 + n_2) + (x_2 - y)n_2\varphi (n_1, n_2) \\ &\quad + (x_1 - y)n_1\varphi (n_2, n_1). \end{aligned}$$

Then \(G(n_1, n_2, N, x_1, x_2, x_1) = f(n_1, n_2, N, x_1, x_2)\). Moreover (G1) and (G3) hold. Furthermore, (G2) holds if and only if \(n_2\varphi (n_1, n_2) + n_1\varphi (n_2, n_1)\) only depends on \(n_1 + n_2\). A routine calculation shows that this is equivalent to the condition that \(\beta + \gamma = 3\alpha \).

(2) Comparing (3.23) and (3.24), we see that f is a part of a 3-hierarchical system if and only if \(\varphi (n_1, n_2)\) depends only on \(n_1 + n_2\). This turns out to be equivalent to the condition that \(\alpha = \gamma \) and \(\beta = 2\alpha \). \(\square \)
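
As a numerical sanity check of this construction, the following Python sketch evaluates \(f = G(\cdot , x_1)\) for the function G of part (1) and tests two things. First, it tests the consistency conditions of a 2-hierarchical system, which are assumed here to read \(f(n_1, 0, N, x_1, x_2) = f_1(n_1, N, x_1)\) and \(f(n_1, n_2, N, x, x) = f_1(n_1 + n_2, N, x)\) with \(f_1 = 1 - n_1\); this is a paraphrase of (2.5) and (2.6), not a quotation. Second, it tests the criterion of part (2), namely that \(\varphi \) depends only on \(n_1 + n_2\). The function names and sample points are illustrative choices, and a finite sample is of course not a proof.

```python
def phi(n1, n2, alpha, beta, gamma):
    # Quadratic form phi(n1, n2) = alpha*n1^2 + beta*n1*n2 + gamma*n2^2.
    return alpha * n1**2 + beta * n1 * n2 + gamma * n2**2

def G(n1, n2, N, x1, x2, y, coef):
    # Candidate generating function from part (1); N does not enter this example.
    return (1 - (n1 + n2)
            + (x2 - y) * n2 * phi(n1, n2, *coef)
            + (x1 - y) * n1 * phi(n2, n1, *coef))

def f(n1, n2, N, x1, x2, coef):
    # f is G with the virtual strategy y set to the resident trait x1.
    return G(n1, n2, N, x1, x2, x1, coef)

def f1(n1, N, x1):
    # Monomorphic growth rate assumed for this example.
    return 1 - n1

def is_consistent(coef, tol=1e-12):
    # Assumed forms of the extinction and coincidence conditions.
    points = [(0.3, 0.2, 1.0, 0.5, 0.9), (0.7, 0.1, 0.4, -0.2, 0.3)]
    for n1, n2, N, x1, x2 in points:
        if abs(f(n1, 0.0, N, x1, x2, coef) - f1(n1, N, x1)) > tol:
            return False
        if abs(f(n1, n2, N, x1, x1, coef) - f1(n1 + n2, N, x1)) > tol:
            return False
    return True

def phi_depends_only_on_sum(coef, tol=1e-12):
    # Compare phi at (s, 0), (s/2, s/2) and (0, s) for a few totals s.
    for s in (0.5, 1.0):
        values = [phi((1 - r) * s, r * s, *coef) for r in (0.0, 0.5, 1.0)]
        if max(values) - min(values) > tol:
            return False
    return True

print(is_consistent((1.0, 0.0, 3.0)))
print(phi_depends_only_on_sum((1.0, 2.0, 1.0)))
print(phi_depends_only_on_sum((1.0, 0.0, 3.0)))
```

The three sample points \((s, 0)\), \((s/2, s/2)\) and \((0, s)\) give the values \(\alpha s^2\), \((\alpha + \beta + \gamma )s^2/4\) and \(\gamma s^2\), which coincide exactly when \(\alpha = \gamma \) and \(\beta = 2\alpha \).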

3.3 Example

In this subsection, we give a class of examples that is simple, in that the fitness function \(f_2\) is a polynomial of degree 2 in \(n_1\) and \(n_2\), but lies outside the scope of the preceding framework of Dercole and Rinaldi. Indeed, we show in Proposition 3.12 that no generating function is associated with it except in the case \(a = b\). Still, we can show the occurrence of SU-shift and US-shift by Theorem 3.2. As a reminder, our example is (2.11), given by

where a, b and c are real-valued parameters. In this case, if

then \(((f_1, q_1), (f_2, q_2))\) is a 2-hierarchical system corresponding to (2.11).

Proposition 3.12

\(f_2\) has an associated generating function if and only if \(a = b\).

Proof

It follows that

By Theorem 3.9, the desired conclusion is immediate. \(\square \)

The following theorem describes the variety of asymptotic behaviors of solutions of (2.11) according to the values of the parameters a, b and c. In particular, it tells us that invasion does not always imply substitution.

Theorem 3.13

Let \(x_* \in \mathbb {R}\). Then

(1) If \(b - c > 0\) and \(a + b - 2c > 0\), then SU-shift occurs at \(x_*\).

(2) If \(b - c < 0\) and \(a + b - 2c < 0\), then US-shift occurs at \(x_*\).

(3) Suppose that \((b - c)(a + b - 2c) < 0\). Then two distinct locally stable equilibria coexist if \(x_2 \ne x_*\) and \(|x_2 - x_*|\) is sufficiently small. More precisely, assume \(b > c\) (resp. \(b < c\)). Then there exist \(\delta > 0\) and \(\epsilon > 0\) such that the following two cases occur.

(3A) \(x_2 \in (x_*, x_* + \delta )\) (resp. \((x_* - \delta , x_*)\)): There exists a locally stable equilibrium point \((\widetilde{n}_{x_*},\widetilde{n}_{x_2}, 1)\) such that any solution starting from \(B((1,0,1), \epsilon ) \cap (0, \infty )^3\) converges to \((\widetilde{n}_{x_*},\widetilde{n}_{x_2}, 1)\) as \(t\rightarrow \infty \). The mutant dominant equilibrium point (0, 1, 1) is also locally stable.

(3B) \(x_2 \in (x_* - \delta , x_*)\) (resp. \((x_*, x_* + \delta )\)): The resident dominant equilibrium (1, 0, 1) is locally stable. There exists a locally stable equilibrium point \((\overline{n}_{x_*},\overline{n}_{x_2}, 1)\) such that any solution starting from \(B((0,1,1), \epsilon ) \cap (0, \infty )^3\) converges to \((\overline{n}_{x_*},\overline{n}_{x_2}, 1)\) as \(t\rightarrow \infty \).

Proof

For (1) and (2), making use of (3.23), we verify (3.2) and (3.3) in Theorem 3.2 respectively. The proof of (3) is in “Appendix D”. \(\square \)

As we have already mentioned in the introduction, case (3) of the above theorem reveals a phenomenon beyond the IIS principle: there exist two locally stable equilibria. In the case of (3A), if the initial population of mutants is relatively small, then residents and mutants will coexist in a certain proportion. If the initial population of mutants is large enough, mutants will eventually dominate the entire population, although this may not sound realistic in the real world. In summary, the final outcome of the competition depends on the initial configuration right after a mutation.

4 Discussions

The shift of global stability between resident dominant state and mutant dominant state has been one of the basic concerns in the theory of adaptive dynamics, where the notion of invasion fitness plays a central role. In fact, Dercole and Rinaldi have shown the “invasion implies substitution” principle, IIS principle for short, in Dercole and Rinaldi (2008). Since the IIS principle means mathematically that the local stability of resident dominant state determines the global behavior of solutions of the focal demographic system, there must be strong constraints in the system of interest such as the existence of an associated generating function. This study answers the natural question: what happens without such a strong constraint?

To fix the framework of our study, we first clarify the terminology: the monomorphic system (2.1), the dimorphic system (2.3) and the trimorphic system defined in Definition 3.3 are systems of differential equations describing the time evolution of populations with a single trait value, two trait values and three trait values, respectively. As a model of the competition of groups having different trait values within a single species, a monomorphic system and a dimorphic system should fulfill natural consistency conditions. For example, if one of the competing groups is extinct, then a dimorphic system becomes a monomorphic system, and if the trait values of the two groups coincide, then the union of the two groups behaves as a monomorphic system. A pair of a monomorphic system and a dimorphic system satisfying such requirements is called a 2-hierarchical system. Similarly, a triple of a monomorphic system, a dimorphic system and a trimorphic system satisfying natural consistency conditions is called a 3-hierarchical system. We think of 2-hierarchical systems and 3-hierarchical systems as the systems of ODEs meeting a minimal set of requirements as models of competition within a single species. On the other hand, some authors have studied models based on the notion of generating functions. For example, this was the case in the proof of the IIS principle in Dercole and Rinaldi (2008). There exists an associated generating function for the model in Geritz (2005) as well. By our results, given a 2-hierarchical system, we have found that the following two conditions are equivalent:

- There exists an associated generating function.

- The global stabilities of the resident dominant state and the mutant dominant state can be determined by the local gradient of invasion fitness, i.e. the invasion implies substitution principle can be applied.

At the same time, establishing an equivalent condition for the existence of an associated generating function in Theorem 3.9, we have shown that

- There exists a 2-hierarchical system which does not have any associated generating function.

- Any 3-hierarchical system has an associated generating function, but the converse is not true.

The existence of a 2-hierarchical system which does not have any associated generating function is not just an unrealistic exception. As we have seen at the end of the introduction, such 2-hierarchical systems make up most of the small perturbations of a system having an associated generating function. Hence, in order to consider the stability of the global behavior of the system under small perturbations, one has to investigate 2-hierarchical systems which are outside the scope of the invasion implies substitution principle and of invasion fitness.

To study 2-hierarchical systems without an associated generating function, we have introduced the notion of raw invasion fitness as a natural extension of invasion fitness. In our main result, Theorem 3.2, the general condition for the shift of global stability between the resident dominant state and the mutant dominant state has been given in terms of uniform positiveness/negativeness of the gradient of raw invasion fitness. Note that our general condition can be applied to a dimorphic system having no associated generating function.

As a showcase example, in Sect. 3.3, we have given a class of dimorphic systems where the growth rate is quadratic in nature. For this class, we can apply our main result to analyze the global behavior of solutions regardless of the presence or absence of generating functions. Moreover, in this class, there exists a dimorphic system that has an attractor at which two different traits can coexist, even if the corresponding resident trait value is not evolutionarily singular in the conventional sense, i.e. the selection gradient at the trait value does not vanish. Such a phenomenon cannot occur in the presence of a generating function as a small perturbation of a resident dominant monomorphic system.

References

Brännström Å, Johansson J, von Festenberg N (2013) The hitchhiker’s guide to adaptive dynamics. Games 4(3):304–328

Brown J, Vincent T (1987) A theory for the evolutionary game. Theor Popul Biol 31(1):140–166

Dercole F, Geritz S (2016) Unfolding the resident-invader dynamics of similar strategies. J Theor Biol 394:231–254

Dercole F, Rinaldi S (2008) Analysis of evolutionary processes. Princeton Series in Theoretical and Computational Biology

Dieckmann U, Law R (1996) The dynamical theory of coevolution: a derivation from stochastic ecological processes. J Math Biol 34(5–6):579–612

Diekmann O (2004) A beginner’s guide to adaptive dynamics. Mathematical Modelling of Population Dynamics, Banach Center Publications, Vol. 63, Institute of Mathematics, Polish Academy of Sciences, Warszawa

Eshel I (1983) Evolutionary and continuous stability. J Theor Biol 103(1):99–111

Geritz S, Kisdi É, Meszéna G, Metz J (1998) Evolutionarily singular strategies and the adaptive growth and branching of the evolutionary tree. Evol Ecol 12(1):35–57

Geritz S (2005) Resident-invader dynamics and the coexistence of similar strategies. J Math Biol 50(1):67–82

Geritz S, Gyllenberg M, Jacobs F, Parvinen K (2002) Invasion dynamics and attractor inheritance. J Math Biol 44:548–560

Hofbauer J, Sigmund K (1998) Evolutionary games and population dynamics. Cambridge University Press, Cambridge

Metz J, Geritz S, Meszéna G, Jacobs F, van Heerwaarden J (1996) Adaptive dynamics, a geometrical study of the consequences of nearly faithful reproduction. In: Stochastic and spatial structures of dynamical systems (Amsterdam, 1995), Konink. Nederl. Akad. Wetensch. Verh. Afd. Natuurk. Eerste Reeks, 45, North-Holland, Amsterdam, pp 183–231

Smith J (1982) Evolution and the theory of games. Cambridge University Press, Cambridge

Smith J, Price G (1973) The logic of animal conflict. Nature 246:15

Takada T, Kigami J (1991) The dynamical attainability of ess in evolutionary games. J Math Biol 29(6):513–529

Taylor P (1979) Evolutionarily stable strategies with two types of player. J Appl Probab 16(1):76–83

Taylor P (1989) Evolutionary stability in one-parameter models under weak selection. Theor Popul Biol 36(2):125–143

Taylor P, Jonker L (1978) Evolutionary stable strategies and game dynamics. Math Biosci 40(1):145–156

Vincent T, Brown J (2005) Evolutionary game theory, natural selection, and darwinian dynamics. Cambridge University Press, Cambridge

Acknowledgements

We thank Professors Takenori Takada, Joe Yuichiro Wakano, Hisashi Otsuki and Hans Metz for many fruitful discussions and constructive comments to the original manuscript.


Appendices

Appendix A: Proof of Theorem 3.2

In this appendix, we are going to prove Theorem 3.2. As a reminder, our system is a 2-hierarchical system, i.e. a dimorphic system (2.3) and a monomorphic system (2.1) satisfying Assumption 3.1 and the consistency conditions (2.5) and (2.6). We fix \(x_* \in \mathbb {R}\) throughout this appendix. If no confusion may occur, an element of \(\mathbb {R}^2\) is regarded as either a row vector \((a_1, a_2)\) or a column vector \(\begin{pmatrix} a_1 \\ a_2 \end{pmatrix}\), as convenient, in the rest of this paper. For simplicity, we define vector fields \(V_1\) on \(\mathcal {U}_1\) and \(V_2\) on \(\mathcal {U}_2\) as follows:

Lemma A.1

Set \(v_* = (\widehat{n}_*, \widehat{N}_*)\). There exist a positive definite quadratic form \(p:\mathbb {R}^2 \rightarrow \mathbb {R}_+\), \(\epsilon _0 > 0\) and \(a > 0\) such that, if \(v \in B_{\epsilon _0}(v_*)\), then

where

for a smooth function \(f(s_1, s_2)\) and \(\left||\cdot \right||\) is the standard Euclidean norm of \(\mathbb {R}^2\).

Proof

Without loss of generality, we may assume that \(v_* = (0, 0)\). Let \(J_1\) be the Jacobi matrix of vector field \(V_1\) at \(v_*\). Let P be a \(2 \times 2\) real regular matrix transforming \(J_1\) into the real Jordan normal form, i.e. \(PJ_1P^{-1}\) is the real Jordan normal form of \(J_1\). According to the Jordan normal form of \(J_1\), we have three cases. Namely, \(PJ_1P^{-1} = A_1\) or \(A_2\) or \(A_3\), where

with \(t_1, t_2, \alpha < 0\) and \(\beta \in \mathbb {R}\). For convenience of notation, we write \(A = PJ_1P^{-1}\).

Set \(\tilde{p}_c(s_1,s_2):= {s_1}^2 + c{s_2}^2\), where the constant \(c > 0\) will be determined eventually in accordance with our purpose.

Claim 1

There exist \(c > 0\) and \(a' > 0\) such that

for any \(u \in \mathbb {R}^2\).

Proof of Claim 1

Suppose \(A=A_2\). Then

for any \(u = (u_1, u_2) \in \mathbb {R}^2\). Since \(\alpha < 0\), it follows that the right-hand side of the above equality is negative definite if \(c > 1/(4\alpha ^2)\). Therefore, we have verified Claim 1 in this case. A similar argument works for the remaining cases as well. \(\square \)
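
The threshold \(c > 1/(4\alpha ^2)\) can be checked numerically. The sketch below assumes that \(A_2\) is the \(2 \times 2\) Jordan block with eigenvalue \(\alpha \) and a 1 above the diagonal, which is an assumption about a matrix whose display is not reproduced here. Under that assumption, \(\nabla \tilde{p}_c(u)\cdot A_2u = 2\alpha u_1^2 + 2u_1u_2 + 2c\alpha u_2^2\), and this quadratic form is negative definite exactly when \(4c\alpha ^2 > 1\). The function names and the sampling resolution are illustrative.

```python
import math

def lyapunov_rate(u1, u2, alpha, c):
    # grad p_c(u) . (A_2 u) with p_c(u) = u1^2 + c*u2^2 and
    # A_2 = [[alpha, 1], [0, alpha]] (assumed Jordan block).
    du1 = alpha * u1 + u2
    du2 = alpha * u2
    return 2 * u1 * du1 + 2 * c * u2 * du2

def max_rate_on_unit_circle(alpha, c, samples=3600):
    # A quadratic form is negative definite iff it is negative on the unit circle.
    return max(
        lyapunov_rate(math.cos(t), math.sin(t), alpha, c)
        for t in (2 * math.pi * k / samples for k in range(samples))
    )

alpha = -0.5
threshold = 1 / (4 * alpha**2)  # equals 1.0 for alpha = -0.5
print(threshold)
print(max_rate_on_unit_circle(alpha, 2.0) < 0)   # c above the threshold
print(max_rate_on_unit_circle(alpha, 0.5) < 0)   # c below the threshold
```

For \(\alpha = -1/2\) and \(c = 1/2\), the form takes the value \(1/2\) at \(u = (1, 1)\), so it is not negative definite, in agreement with the threshold.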

Since P is an invertible matrix, there exists \(a'' > 0\) such that \(-a'\left||Pu\right||^2 \le -a''\left||u\right||^2\) for any \(u \in \mathbb {R}^2\). Set \(p_c(u) = \tilde{p}_c(Pu)\). Then \(\nabla p_c(u) = {}^t\!{P}\nabla \tilde{p}_c(Pu)\), where \({}^t\!{P}\) is the transpose of P. Now, since

as \(\left||v\right|| \rightarrow 0\), we verify that

as \(\left||v\right|| \rightarrow 0\). At the same time, by Claim 1, it follows that

for any \(v \in \mathbb {R}^2\). Combining (A.3) and (A.4), we see that there exist \(\epsilon _0 > 0\) and \(a > 0\) such that

for any \(v \in B_{\epsilon _0}(v_*)\). \(\square \)

Let p be the positive definite quadratic form obtained in Lemma A.1. For \(r > 0\), we define an r-tube \(T_r\) by

Note that \(T_{r}\) is a tubular neighborhood of \(\{(n_1, n_2, N) | (n_1, n_2, N, x_*, x_*) \in L(x_*)\}\).

We say that a domain \(U \subseteq \mathcal {U}_2\) is invariant if every solution \((n_1(t),n_2(t),N(t))\) of (2.3) starting from U stays in U for any \(t>0\).

Lemma A.2

There exists \(\epsilon _1 > 0\) such that, for any \(\epsilon < \epsilon _1\), one may choose \(\delta > 0\) so that \(T_{\epsilon }\) is invariant if \(|x_* - x_2| < \delta \).

Remark A.3

This lemma corresponds to the tube theorem in Geritz et al. (2002).

Proof

Let \(\epsilon _0\) be the constant obtained in Lemma A.1. Then, there exists \(\epsilon _1 > 0\) such that \(T_{\epsilon _1} \subseteq \{(n_1, n_2, N) | (n_1 + n_2, N) \in B_{\epsilon _0}(v_*)\}\). Let \((n_1, n_2, N) \in T_{\epsilon _1}\) and let \(v(t) = (n_1(t), n_2(t), N(t))\) be the solution of (2.3) satisfying \((n_1(0), n_2(0), N(0)) = (n_1, n_2, N)\). Then

where

In case \(x_2 = x_*\), since \(\widetilde{V} = V_1(n_1 + n_2, N, x_*)\), Lemma A.1 yields

where \(v = (n_1 + n_2, N)\). Set

Since \(\partial _1T_{\epsilon }\) is compact, by (A.6), there exists \(M > 0\) such that

for any \((n_1, n_2, N) \in \partial _1T_{\epsilon }\). As \(\widetilde{V}\) is continuous on the compact set \(\partial _1T_{\epsilon } \times \{x_*\} \times [x_* - \delta , x_* + \delta ]\), if \(\delta > 0\) is sufficiently small, then

for any \((n_1, n_2, N, x_*, x_2) \in \partial _1T_{\epsilon } \times \{x_*\} \times [x_* - \delta , x_* + \delta ]\). This means that the solution v(t) cannot escape \(T_{\epsilon }\) through \(\partial _1T_{\epsilon }\).

The rest of the boundary \(\partial {T_{\epsilon }}\) of \(T_{\epsilon }\) as a subset of \(\mathbb {R}^3\) is included in the two planes \(\{(n_1, n_2, N)| n_1, n_2 \ge 0, n_1 = 0\,\,\text {or}\,\,n_2 = 0\}\). Hence one easily sees that the solution v(t) cannot escape \(T_{\epsilon }\) through this part either. Thus \(T_{\epsilon }\) is invariant if \(|x_* - x_2| \le \delta \). \(\square \)

Now, let us complete the proof of Theorem 3.2.

Proof of Theorem 3.2

Let p and \(T_r\) be the same as before. Assume that there exists \(c>0\) such that

for any \((n_1,n_2,N, x_1, x_2)\in L(x_*)\). Since \(\frac{\partial \Theta }{\partial {x_2}}\) is continuous on the compact set \(T_{\epsilon } \times \{x_*\} \times [x_* - \epsilon , x_* + \epsilon ]\), if \(\epsilon > 0\) is sufficiently small, then

for any \((n_1, n_2, N, x_1, x_2) \in T_{\epsilon } \times \{x_*\} \times [x_* - \epsilon , x_* + \epsilon ]\). In addition, we let \(0< \epsilon < \epsilon _1\), where \(\epsilon _1\) is the constant appearing in Lemma A.2. Furthermore choose \(\delta _0 > 0\) so that \(\delta _0 \le \min \{\epsilon , \delta \}\), where \(\delta \) is the constant appearing in Lemma A.2. Consequently, what we have shown so far is

(1) \(T_{\epsilon }\) is invariant if \(|x_* - x_2| \le \delta _0\).

(2) For any \((n_1, n_2, N, x_1, x_2) \in T_{\epsilon } \times \{x_*\} \times [x_* - \delta _0, x_* + \delta _0]\),

We assume that \(|x_* - x_2| \le \delta _0\) hereafter in this proof. By elementary calculation, if \(R(t) = n_1(t)/(n_1(t) + n_2(t))\) for a solution \((n_1(t), n_2(t), N(t))\) of (2.3) starting from an interior point of \(T_{\epsilon }\), then

Assume \(x_2 \in (x_*, x_* + \delta _0)\). As \(T_{\epsilon }\) is invariant, \((n_1(t), n_2(t), N(t))\) stays in \(T_{\epsilon }\) for any \(t > 0\). Moreover by (2), it follows that

for any \(t > 0\). Thus \(R(t) \rightarrow 0\) as \(t \rightarrow \infty \) and hence \(n_1(t) \rightarrow 0\) as \(t \rightarrow \infty \).
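
The elementary calculation behind the evolution of \(R(t)\) can be illustrated as follows. The sketch assumes only that each population obeys an equation of per-capita form, \(\dot{n}_i = n_ig_i\), where \(g_1\) and \(g_2\) are placeholders for the growth rates of residents and mutants; it does not reproduce the displayed formula in terms of \(\Theta \). Under this assumption the quotient rule gives \(\dot{R} = R(1 - R)(g_1 - g_2)\), so R decreases whenever the mutant growth rate exceeds the resident one. The function names are illustrative.

```python
def ratio_derivative(n1, n2, g1, g2):
    # dR/dt for R = n1 / (n1 + n2), computed by the quotient rule
    # from dn1/dt = n1*g1 and dn2/dt = n2*g2 (assumed per-capita form).
    dn1 = n1 * g1
    dn2 = n2 * g2
    total = n1 + n2
    return (dn1 * total - n1 * (dn1 + dn2)) / total**2

def ratio_derivative_closed_form(n1, n2, g1, g2):
    # Closed form R * (1 - R) * (g1 - g2) of the same derivative.
    R = n1 / (n1 + n2)
    return R * (1 - R) * (g1 - g2)

sample = (0.3, 0.2, 0.1, 0.4)  # n1, n2, g1, g2 with g2 > g1
print(ratio_derivative(*sample))
print(ratio_derivative_closed_form(*sample))
```

In the sample, \(R = 0.6\) and \(g_1 - g_2 = -0.3\), so both expressions give \(0.6 \times 0.4 \times (-0.3) = -0.072\) up to rounding.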

Set \(\mathcal {U}_{21} = \{(n_1, n_2, N)| (n_1, n_2, N) \in \mathcal {U}_2, n_1 = 0\}\) and \(D_2 = T_{\epsilon } \cap \mathcal {U}_{21}\).

Claim 1

The fixed point \((0, \widehat{n}_{x_2}, \widehat{N}_{x_2})\) belongs to \(D_2\).

Proof of Claim 1

As both \(T_{\epsilon }\) and \(\mathcal {U}_{21}\) are invariant, we see that \(D_2\) is invariant. Moreover, the system (2.3) is a monomorphic system (2.1) with the parameter \(x_2\) on \(\mathcal {U}_{21}\) and this system restricted on \(\mathcal {U}_{21}\) has the unique attractive fixed point \((0, \widehat{n}_{x_2}, \widehat{N}_{x_2})\). Considering that \(D_2\) is invariant, we conclude that the fixed point \((0, \widehat{n}_{x_2}, \widehat{N}_{x_2})\) belongs to \(D_2\). \(\square \)

Claim 2

The fixed point \((0, \widehat{n}_{x_2}, \widehat{N}_{x_2})\) is attractive.

Proof of Claim 2

Direct calculation shows that the linearization of the dimorphic system (2.3) at \((0, \widehat{n}_{x_2}, \widehat{N}_{x_2})\) is given by

where \(\begin{pmatrix} a_{22} &{} a_{23}\\ a_{32} &{} a_{33}\end{pmatrix}\) equals (3.1) with \(x = x_2\) whose eigenvalues have negative real parts by Assumption 3.1. The remaining eigenvalue is

Since \((0, \widehat{n}_{x_2}, \widehat{N}_{x_2}, x_*, x_2) \in T_{\epsilon }\), the same argument as in (A.8) implies that \(\Theta (0, \widehat{n}_{x_2}, \widehat{N}_{x_2}, x_*, x_2) > 0\). \(\square \)

Now since \(n_1(t) \rightarrow 0\) as \(t \rightarrow \infty \), if \(u_* \in \mathcal {U}_2\) is an \(\omega \)-limit point of the orbit \((n_1(t), n_2(t), N(t))\), then \(u_* \in D_2\). In other words, there exists a sequence \(\{t_m\}_{m \ge 1}\) such that \(0< t_1< t_2 < \ldots \) and \((n_1(t_m), n_2(t_m), N(t_m)) \rightarrow u_* \in D_2\) as \(m \rightarrow \infty \). Now let u(t) be the solution of (2.3) starting from \(u_* \in D_2\). As \(D_2\) is invariant and \((0, \widehat{n}_{x_2}, \widehat{N}_{x_2})\) is an attractor on \(D_2\), \(u(t) \rightarrow (0, \widehat{n}_{x_2}, \widehat{N}_{x_2})\) as \(t \rightarrow \infty \). Note that \((n_1(t_m + s), n_2(t_m + s), N(t_m + s)) \rightarrow u(s)\) as \(m \rightarrow \infty \). This implies that \((n_1(t), n_2(t), N(t))\) will eventually enter the basin of attraction of \((0, \widehat{n}_{x_2}, \widehat{N}_{x_2})\). Therefore, we conclude that any solution \((n_1(t), n_2(t), N(t))\) of (2.3) starting from the interior of \(T_{\epsilon }\) converges to \((0, \widehat{n}_{x_2}, \widehat{N}_{x_2})\) as \(t \rightarrow \infty \).

By a similar argument, one shows that if \(x_2 \in [x_* - \delta _0, x_*)\), then any solution \((n_1(t), n_2(t), N(t))\) of (2.3) starting from the interior of \(T_{\epsilon }\) converges to \((\widehat{n}_{x_*}, 0, \widehat{N}_{x_*})\) as \(t \rightarrow \infty \). Since \(S_{\epsilon '}(x_*) \subseteq T_{\epsilon }\) for sufficiently small \(\epsilon ' > 0\), we have shown that SU-shift occurs in this case.

Analogous arguments yield the occurrence of US-shift if there exists \(c > 0\) such that

for any \((n_1,n_2,N, x_1, x_2)\in L(x_*)\). \(\square \)

Appendix B: Proof of Theorem 3.11

In this appendix, we are going to prove Theorem 3.11. Let

and define \(C^{\infty }(\mathcal {U}_k)\) as the collection of functions on \(\mathcal {U}_k\) which are \(C^{\infty }\) on neighborhoods of \(\mathcal {U}_k\); more precisely,

To define the explicit notion of symmetry, we introduce the k-dimensional permutation group \(\mathfrak {S}_k\) as

For \(\sigma \in \mathfrak {S}_k\), we define \(\iota _{\sigma }: \mathcal {U}_k \rightarrow \mathcal {U}_k\) by

where \(n = (n_1, \ldots , n_k)\) and \(x = (x_1, \ldots , x_k)\).

Let \(i \in \{1, \ldots , k\}\). Define \(d^k_i: \mathcal {U}_k \rightarrow \mathcal {U}_{k -1}\) by

Let \(1 \le i < j \le k\). Define \(d_{i, j}^k: \mathcal {U}_k \rightarrow \mathcal {U}_{k -1}\) by

for any \(n = (n_1, \ldots , n_k)\), N and \(x = (x_1, \ldots , x_k)\).

Definition B.1

A function \(f: \mathcal {U}_k \rightarrow \mathbb {R}\) is said to have \(\mathfrak {S}_{k - 1}\)-symmetry if and only if

for any \(n = (n_1, \ldots , n_k)\), \(N \in \mathbb {R}\) and \(x = (x_1, \ldots , x_k)\) whenever \(\sigma \in \mathfrak {S}_k\) and \(\sigma (1) = 1\).

The above condition of having \(\mathfrak {S}_{k - 1}\)-symmetry is the generalization of the symmetry condition (3.8) to an arbitrary number of trait values.

Next we give definitions corresponding to the properties (ET) and (CT) in the definition of trimorphic systems in Definition 3.4.

Definition B.2

Let \(j \in \mathbb {N}\).

(1) Let \(2 \le i \le j\). A pair \((f_j, f_{j - 1}) \in C^{\infty }(\mathcal {U}_j) \times C^{\infty }(\mathcal {U}_{j - 1})\) is said to satisfy the property \(\mathrm{(ET)}_i^j\) if and only if

$$\begin{aligned} f_j(n, N, x) = f_{j - 1}(d_i^j(n, N, x)), \end{aligned}$$

where \(n = (n_1, \ldots , n_j)\), \(N \in \mathbb {R}\) and \(x = (x_1, \ldots , x_j)\), provided \(n_i = 0\).

(2) Let \(1 \le i < m \le j\). A pair \((f_j, f_{j - 1}) \in C^{\infty }(\mathcal {U}_j) \times C^{\infty }(\mathcal {U}_{j - 1})\) is said to satisfy the property \(\mathrm{(CT)}_{i, m}^j\) if and only if

$$\begin{aligned} f_j(n, N, x) = f_{j - 1}(d^j_{i, m}(n, N, x)), \end{aligned}$$

where \(n = (n_1, \ldots , n_j)\), \(N \in \mathbb {R}\) and \(x = (x_1, \ldots , x_j)\), provided \(x_i = x_m\).

The expressions (ET) and (CT) in the above definition represent “Extinction of a Trait value” and “Coincidence of Trait values” respectively. For example, the properties \(\mathrm{(ET)}^3_3\), \(\mathrm{(CT)}^3_{1, 2}\), \(\mathrm{(CT)}^3_{2, 3}\) correspond to (3.10), (3.12) and (3.13) respectively. Moreover, the properties \(\mathrm{(ET)}^2_2\) and \(\mathrm{(CT)}^2_{1, 2}\) correspond to (2.5) and (2.6) respectively. In fact, if we study systems of ODEs representing a general multi-morphic system

for \(k = 1, \ldots , m\), the natural condition for the consistency of the systems with different values of k is that, for any \(k = 2, \ldots , m\), \(f_k\) has \(\mathfrak {S}_{k - 1}\)-symmetry and \((f_k, f_{k - 1})\) satisfies \(\mathrm{(ET)}^k_i\) for \(i = 2, \ldots , k\) and \(\mathrm{(CT)}^k_{i, j}\) for any \(1 \le i < j \le k\). In such a case, the sequence \(((f_i, q_i))_{i = 1, \ldots , k}\) should be called a k-hierarchical system, (kHS) for short, as a natural extension of the notions of a 2-hierarchical system and a 3-hierarchical system.

Under a weaker set of properties, the functions \(f_1, \ldots , f_m\) are shown to have special forms in the next lemma.

Lemma B.3

Let \(m \ge 1\). For \((f_1, \ldots , f_m) \in C^{\infty }(\mathcal {U}_1) \times \ldots \times C^{\infty }(\mathcal {U}_m)\), the following conditions (a) and (b) are equivalent:

(a) For each \(k \in \{2, \ldots , m\}\), \(f_k\) has \(\mathfrak {S}_{k- 1}\)-symmetry and the pair \((f_k, f_{k - 1})\) satisfies \(\mathrm{(ET)}_i^k\) and \(\mathrm{(CT)}_{1, i}^k\) for any \(i = 2, 3, \ldots , k\).

(b) There exists a sequence \(\{h_k\}_{k = 1, \ldots , m}\) such that for any \(k = 1, \ldots , m\), \(h_k \in C^{\infty }(\mathcal {U}_k)\) and for any \(k = 2, \ldots , m\), \(h_k\) has \(\mathfrak {S}_{k - 1}\)-symmetry and

$$\begin{aligned} f_k(n, N, x) = \sum _{j =0}^{k - 1}\sum _{2 \le i_1< \ldots < i_j \le k} \Bigg (\prod _{l = 1}^j n_{i_l}(x_1 - x_{i_l})\Bigg ) h_{j + 1, k}^{(i_1, \ldots , i_j)}(n, N, x) \end{aligned} \tag{B.1}$$

for any \((n, N, x) \in \mathcal {U}_k\), where \(n = (n_1, \ldots , n_k)\), \(x = (x_1, \ldots , x_k)\) and

$$\begin{aligned} h_{j + 1, k}^{(i_1, \ldots , i_j)}(n, N, x) = h_{j + 1}\Big (\sum _{i = 1}^k n_i - \sum _{l = 1}^j n_{i_l}, n_{i_1}, \ldots , n_{i_j}, N, x_1, x_{i_1}, \ldots , x_{i_j}\Big ). \end{aligned}$$

Note that in the above lemma, the property \(\mathrm{(CT)}^k_{i, j}\) is assumed only if \(i = 1\).

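For \(k = 2, 3\), (B.1) can be written as

$$\begin{aligned} f_2(n_1, n_2, N, x_1, x_2) = h_1(n_1 + n_2, N, x_1) + n_2(x_1 - x_2)h_2(n_1, n_2, N, x_1, x_2) \end{aligned} \tag{B.2}$$

and

$$\begin{aligned} f_3(n, N, x) &= h_1(n_1 + n_2 + n_3, N, x_1) + n_2(x_1 - x_2)h_2(n_1 + n_3, n_2, N, x_1, x_2) \\ &\quad + n_3(x_1 - x_3)h_2(n_1 + n_2, n_3, N, x_1, x_3) \\ &\quad + n_2n_3(x_1 - x_2)(x_1 - x_3)h_3(n_1, n_2, n_3, N, x_1, x_2, x_3). \end{aligned} \tag{B.3}$$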

Proof

For simplicity, we do not write the variable N explicitly in this proof.

We use induction on m. If \(m = 1\), the statement is obvious.

Let \(m \ge 2\). Assume that the statement holds for \(1, \ldots , m - 1\). Define \(\psi _m: \mathcal {U}_m \rightarrow \mathbb {R}\) by

where \(n = (n_1, \ldots , n_m)\) and \(x = (x_1, \ldots , x_m)\).

Assume \(n_i = 0\) for some \(i \ge 2\). Set \((\tilde{n}, \tilde{x}) = d_i^m(n, x)\). Then by \(\mathrm{(ET)}^m_i\), we have

On the other hand, since \(n_i = 0\), we see that

Note that (B.1) holds for \(k = m - 1\) by the induction hypothesis. Hence the right-hand side of the above equality coincides with \(f_{m - 1}(\tilde{n}, \tilde{x})\). Therefore it follows that

if \(n_i = 0\) for some \(i \ge 2\).

Next assume that \(x_i = x_1\) for some \(i \ge 2\). Let \((\hat{n}, \hat{x}) = d_{1, i}^m(n, x)\). Then by \(\mathrm{(CT)}^m_{1, i}\),

At the same time, since \(x_1 - x_i = 0\), we see that

Note that (B.1) holds for \(k = m - 1\) by the induction hypothesis. Hence the right-hand side of the above equality coincides with \(f_{m - 1}(\hat{n}, \hat{x})\). Thus we have shown that

Hence if \(x_1 - x_i = 0\) for some \(i \ge 2\), then

Thus it follows that \(f_m(n, x) - \psi _m(n, x)\) is divisible by \(\prod _{i = 2}^m n_i(x_1 - x_i)\). Therefore, there exists \(h_m: \mathcal {U}_m \rightarrow \mathbb {R}\) such that \(h_m\) has \(\mathfrak {S}_{m - 1}\)-symmetry and

Thus the desired statement is true for m.
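
The structure of (B.1) can also be explored numerically. The following Python sketch evaluates the right-hand side of (B.1) for arbitrary sample functions \(h_1, h_2, h_3\) and checks on a few points that the resulting \(f_3\) and \(f_2\) are related by \(\mathrm{(ET)}^3_i\) and \(\mathrm{(CT)}^3_{1, i}\). It rests on two assumptions that are not quoted from the text: \(d^k_i\) is taken to delete the i-th population and trait value, and \(d^k_{1, i}\) is taken to add \(n_i\) to \(n_1\) and delete \(x_i\). The sample functions and all names are hypothetical, and indices in the code are 0-based.

```python
from itertools import combinations
from math import prod

def f_from_h(h, n, N, x):
    # Right-hand side of (B.1); h[j + 1] takes (n_args, N, x_args) of length j + 1.
    k = len(n)
    total = 0.0
    for j in range(k):
        # idx runs over i_1 < ... < i_j in {2, ..., k} (0-based: 1, ..., k - 1).
        for idx in combinations(range(1, k), j):
            coeff = prod(n[i] * (x[0] - x[i]) for i in idx)
            rest = sum(n) - sum(n[i] for i in idx)
            n_args = (rest,) + tuple(n[i] for i in idx)
            x_args = (x[0],) + tuple(x[i] for i in idx)
            total += coeff * h[j + 1](n_args, N, x_args)
    return total

def drop(n, x, i):
    # Assumed form of d_i^k: remove population i and its trait value.
    return n[:i] + n[i + 1:], x[:i] + x[i + 1:]

def merge_into_first(n, x, i):
    # Assumed form of d_{1,i}^k: add n_i to n_1 and remove x_i.
    return (n[0] + n[i],) + n[1:i] + n[i + 1:], x[:i] + x[i + 1:]

# Arbitrary smooth sample functions; h[3] is symmetric in its last two slots.
h = {
    1: lambda n, N, x: 1.0 - n[0] + N * x[0],
    2: lambda n, N, x: n[0] + 2.0 * n[1] + x[0] * x[1],
    3: lambda n, N, x: n[0] + n[1] * n[2] + x[1] + x[2],
}

def et_gap(n, N, x, i):
    # |f_3 - f_2 o d_i| at a point with n_i = 0.
    return abs(f_from_h(h, n, N, x) - f_from_h(h, *drop(n, x, i)[:1], N, drop(n, x, i)[1]))

def ct_gap(n, N, x, i):
    # |f_3 - f_2 o d_{1,i}| at a point with x_i = x_1.
    m, y = merge_into_first(n, x, i)
    return abs(f_from_h(h, n, N, x) - f_from_h(h, m, N, y))

print(et_gap((0.3, 0.2, 0.0), 0.8, (1.0, 1.5, 0.7), 2))
print(et_gap((0.3, 0.0, 0.4), 0.8, (1.0, 1.5, 0.7), 1))
print(ct_gap((0.3, 0.2, 0.4), 0.8, (1.0, 1.0, 0.7), 1))
print(ct_gap((0.3, 0.2, 0.4), 0.8, (1.0, 1.5, 1.0), 2))
```

All four gaps vanish up to rounding because a term of (B.1) whose index set contains i carries the factor \(n_i(x_1 - x_i)\), while the remaining terms see \(n_i\) only through the sum in the first argument.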

As a corollary of Lemma B.3, we have the following lemma.

Lemma B.4

\(f \in C^{\infty }(\mathcal {U}_2)\) is a part of a 2-hierarchical system if and only if there exist \(f_* \in C^{\infty }(\mathcal {U}_1)\) and \(\varphi \in C^{\infty }(\mathcal {U}_2)\) such that

for any \((n_1, n_2, N, x_1, x_2) \in \mathcal {U}_2\).

Proof

Assume that f is a part of a 2-hierarchical system. Let \(((f_1, q_1), (f_2, q_2))\) be a 2-hierarchical system satisfying \(f = f_2\). Then the condition (a) in Lemma B.3 holds for \((f_2, f_1)\). Hence we obtain (B.4) from (B.2). Conversely, suppose that (B.4) holds. Define \(f_2 = f, f_1 = f_*, q_1 \equiv 0\) and \(q_2 \equiv 0\). Then \(((f_1, q_1), (f_2, q_2))\) is a 2-hierarchical system and f is a part of a 2-hierarchical system. \(\square \)

Assuming the full properties of \(\mathrm{(ET)}^k_i\) and \(\mathrm{(CT)}^k_{i, j}\) for \(k = 2, 3\), we obtain the following theorem, which includes the restatement of Proposition 3.11. In fact, (B.5) is exactly (3.24).

Theorem B.5

Let \((f_1, f_2, f_3) \in C^{\infty }(\mathcal {U}_1) \times C^{\infty }(\mathcal {U}_2) \times C^{\infty }(\mathcal {U}_3)\). The following conditions (c) and (d) are equivalent.

(c) For \(k = 2, 3\), \(f_k\) is \(\mathfrak {S}_{k - 1}\)-symmetric and the pair \((f_k, f_{k - 1})\) satisfies \(\mathrm{(ET)}_i^k\) for any \(2 \le i \le k\) and \(\mathrm{(CT)}_{i, j}^k\) for any \(1 \le i < j \le k\).

(d) There exist \(\xi \in C^{\infty }(\mathcal {U}_1)\), \(\rho \in C^{\infty }(\mathcal {U}_2)\) and \(F \in C^{\infty }(\mathcal {U}_3)\) such that, for any \((n_1, n_2, n_3, N, x_1, x_2, x_3) \in \mathcal {U}_3\),

and

where \(n = (n_1, n_2, n_3)\) and \(x = (x_1, x_2, x_3)\).

Proof

As in the proof of Lemma B.3, we do not write N explicitly in what follows.

(c) \(\Rightarrow \) (d): By Lemma B.3, we have (B.2) and (B.3). Let \(x_2 = x_3\) in (B.3). By \(\mathrm{(CT)}_{2, 3}^3\), we have

Define \(H(X, Y, x_1) = Yh_2(X - Y, Y, x_1, x_1)\). Then by (B.6), we obtain

This implies

for any \(t, s_1, s_2 \ge 0\) with \(s_1 + s_2 \le t\). Since H is \(C^{\infty }\), there exists \(\xi \in C^{\infty }(\mathcal {U}_1)\) such that \(H(t, s, x_1) = \xi (t, x_1)s\) if \(0 \le s \le t\). Recalling the definition of H, we have

Since \(h_2(n_1, n_2, x_1, x_2) - h_2(n_1, n_2, x_1, x_1) \equiv 0\) if \(x_1 = x_2\), there exists \(\rho \in C^{\infty }(\mathcal {U}_2)\) such that

This immediately yields (B.5). Next, define

Then, by (B.5),

By \(\mathrm{(ET)}_2^3\), it follows that \(\eta (n_1, 0, n_3, x) = f_3(n_1, 0, n_3, x)\). Similar arguments show that \(\eta (n_1, n_2, 0, x) = f_3(n_1, n_2, 0, x)\). Moreover, by (B.5),

By \(\mathrm{(CT)}_{1, 2}^3\), it follows that \(\eta (n, x_1, x_1, x_3) = f_3(n, x_1, x_1, x_3)\). In the same manner, using \(\mathrm{(CT)}_{1, 3}^3\) and \(\mathrm{(CT)}_{2, 3}^3\), we see that \(\eta (n, x_1, x_2, x_1) = f_3(n, x_1, x_2, x_1)\) and \(\eta (n, x_1, x_2, x_2) = f_3(n, x_1, x_2, x_2)\). Thus, there exists \(F \in C^{\infty }(\mathcal {U}_3)\) such that

Since \(f_3\) has \(\mathfrak {S}_2\)-symmetry, it follows that

(d) \(\Rightarrow \) (c): This is straightforward. \(\square \)
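
The passage from the relation on H to the representation \(H(t, s, x_1) = \xi (t, x_1)s\) in the part (c) \(\Rightarrow \) (d) can be unpacked as follows. This is only a sketch, under the assumption that the relation stated for \(t, s_1, s_2 \ge 0\) with \(s_1 + s_2 \le t\) is additivity in the second variable. Here \(\frac{\partial H}{\partial s}\) denotes the derivative of H in its second variable, taken one-sided at \(s = 0\), and \(H(t, 0, x_1) = 0\) follows from the definition \(H(X, Y, x_1) = Yh_2(X - Y, Y, x_1, x_1)\).

$$\begin{aligned} H(t, s_1 + s_2, x_1)&= H(t, s_1, x_1) + H(t, s_2, x_1) \quad \text {(assumed additivity)},\\ \frac{\partial H}{\partial s}(t, s_1 + s_2, x_1)&= \frac{\partial H}{\partial s}(t, s_1, x_1) \quad \text {(differentiating in } s_1\text {)},\\ \frac{\partial H}{\partial s}(t, s_2, x_1)&= \frac{\partial H}{\partial s}(t, 0, x_1) =: \xi (t, x_1) \quad \text {(letting } s_1 = 0\text {)},\\ H(t, s, x_1)&= H(t, 0, x_1) + \int _0^s \xi (t, x_1)\,d\sigma = \xi (t, x_1)s. \end{aligned}$$

Under this assumption, the smoothness of \(\xi \) is inherited from that of H.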

Appendix C: Proof of Theorem 3.9

Throughout “Appendix C”, \(((f_1, q_1), (f_2, q_2))\) is assumed to be a 2-hierarchical system. There exists \(\varphi \in C^{\infty }(\mathcal {U}_2)\), by Lemma B.4, such that

for any \((n_1, n_2, N, x_1, x_2) \in \mathcal {U}_2\). Then we have the following assertion which includes the claim of Theorem 3.9.

Theorem C.1

The following conditions are equivalent:

(1) \(f_2\) has an associated generating function.

(2) \(\frac{\partial \Theta }{\partial {x_2}}(n_1, n_2, N, x_1, x_1)\) depends only on the values \(n_1 + n_2\), N and \(x_1\).

(3) Set

$$\begin{aligned} H(n_1, n_2, N, x_1) = n_2\varphi (n_1, n_2, N, x_1, x_1) + n_1\varphi (n_2, n_1, N, x_1, x_1). \end{aligned}$$

Then H depends only on the values \(n_1 + n_2\), N and \(x_1\).

(4) Define

$$\begin{aligned} G_*(n_1, n_2, N, x_1, x_2, y)&= f_1(n_1 + n_2, N, y)\\&\quad + n_2(x_2 - y)\varphi (n_1, n_2, N, x_1, x_2)\\&\quad + n_1(x_1 - y)\varphi (n_2, n_1, N, x_2, x_1). \end{aligned}$$ (C.2)

Then \(G_*\) is a generating function associated with \(f_2\).

The function \(G_*\) defined in (C.2) may be thought of as the canonical generating function associated with \(f_2\). Note that there exist infinitely many generating functions associated with \(f_2\) because

is also a generating function associated with \(f_2\) for any \(c \in \mathbb {R}\) if \(G_*\) is so.

Proof of Theorem C.1

(1) \(\Rightarrow \) (2): Let G be a generating function associated with \(f_2\). Then by (G3),

$$\begin{aligned} -\Theta (n_1, n_2, N, x_1, x_2)&= G(n_1, n_2, N, x_1, x_2, x_1) - G(n_2, n_1, N, x_2, x_1, x_2)\\&= G(n_1, n_2, N, x_1, x_2, x_1) - G(n_1, n_2, N, x_1, x_2, x_2). \end{aligned}$$

Differentiating with respect to \(x_2\) and setting \(x_2 = x_1\), the derivatives of G in its fifth variable cancel, and we have

$$\begin{aligned} -\frac{\partial \Theta }{\partial {x_2}}(n_1, n_2, N, x_1, x_1) = -\frac{\partial {G}}{\partial {y}}(n_1, n_2, N, x_1, x_1, x_1). \end{aligned}$$

On the other hand, by (G2), \(G(n_1, n_2, N, x_1, x_1, y)\) depends only on the values \(n_1 + n_2, N, x_1\) and y, and hence so does \(\frac{\partial {G}}{\partial {y}}(n_1, n_2, N, x_1, x_1, y)\). Therefore, we have (2).

(2) \(\Rightarrow \) (3): By (C.1),

$$\begin{aligned} -\frac{\partial \Theta }{\partial {x_2}}(n_1, n_2, N, x_1, x_1) = -\frac{\partial {f_1}}{\partial {x_1}}(n_1 + n_2, N, x_1) + H(n_1, n_2, N, x_1). \end{aligned}$$

Hence by (2), one sees that \(H(n_1, n_2, N, x_1)\) depends only on the values \(n_1 + n_2\), N and \(x_1\).

(3) \(\Rightarrow \) (4): It is easy to see that \(G_*\) satisfies (G3). Since \(G_*(n_1, 0, N, x_1, x_2, x_1) = f_1(n_1, N, x_1)\), we have (G1). The fact that

$$\begin{aligned} G_*(n_1, n_2, N, x_1, x_1, y) = f_1(n_1 + n_2, N, y) + (x_1 - y)H(n_1, n_2, N, x_1) \end{aligned}$$

immediately implies that (G2) holds if (3) is satisfied. Moreover,

$$\begin{aligned} G_*(n_1, n_2, N, x_1, x_2, x_1)&= f_1(n_1 + n_2, N, x_1)\\&\quad + n_2(x_2 - x_1)\varphi (n_1, n_2, N, x_1, x_2)\\&= f_2(n_1, n_2, N, x_1, x_2). \end{aligned}$$

Thus we have shown that \(G_*\) is a generating function associated with \(f_2\).

(4) \(\Rightarrow \) (1): This is obvious. \(\square \)
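
The computation behind (2) \(\Rightarrow \) (3) can be written out as follows. This is a sketch under two assumptions suggested by the displays in the proof: that (C.1) reads \(f_2(n_1, n_2, N, x_1, x_2) = f_1(n_1 + n_2, N, x_1) + n_2(x_2 - x_1)\varphi (n_1, n_2, N, x_1, x_2)\), and that \(-\Theta (n_1, n_2, N, x_1, x_2) = f_2(n_1, n_2, N, x_1, x_2) - f_2(n_2, n_1, N, x_2, x_1)\). The symbol \(\partial _3 f_1\), introduced only for this sketch, denotes the derivative of \(f_1\) in its third (trait) variable.

$$\begin{aligned} -\Theta (n_1, n_2, N, x_1, x_2)&= f_1(n_1 + n_2, N, x_1) - f_1(n_1 + n_2, N, x_2)\\&\quad + n_2(x_2 - x_1)\varphi (n_1, n_2, N, x_1, x_2)\\&\quad + n_1(x_2 - x_1)\varphi (n_2, n_1, N, x_2, x_1),\\ -\frac{\partial \Theta }{\partial {x_2}}(n_1, n_2, N, x_1, x_1)&= -\partial _3 f_1(n_1 + n_2, N, x_1)\\&\quad + n_2\varphi (n_1, n_2, N, x_1, x_1) + n_1\varphi (n_2, n_1, N, x_1, x_1)\\&= -\partial _3 f_1(n_1 + n_2, N, x_1) + H(n_1, n_2, N, x_1). \end{aligned}$$

Since the first term on the right depends only on \(n_1 + n_2\), N and \(x_1\), condition (2) holds exactly when H has the same property, which is condition (3).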

Appendix D: Proof of Theorem 3.13

In this appendix, we prove Theorem 3.13. The equation in question is (2.11). Note that Lemma A.2 is still true in this example. Let I be the \(2 \times 2\) identity matrix. Using the same notation as in “Appendix A”, we see that \(v_* = (1, 1)\), \(L(x_*) = \{(n_1, n_2, 1, x_*, x_*)| n_1 \ge 0, n_2 \ge 0, n_1 + n_2 = 1\}\), \(J_1 = I\) and \(P = I\), where \(J_1\) and P have appeared in the proof of Lemma A.1. Consequently one sees that

Since the variable N does not appear in the equations for \(n_1\) and \(n_2\), we only consider the first two equations, those for \(n_1\) and \(n_2\), in this appendix. Furthermore, for ease of notation, we write \(x = n_1\), \(y = n_2\) and \(\alpha = x_2 - x_1\). As a result, our equation is

If \(\widehat{T}_{\epsilon }\) and \(\widehat{L}(x_*)\) are the projections of \(T_{\epsilon }\) and \(L(x_*)\) to (x, y)-plane respectively, then \(\widehat{L}(x_*) = \{(x, y)| x \ge 0, y \ge 0, x + y = 1\}\) and

By Lemma A.2, for sufficiently small \(\epsilon \), there exists \(\delta > 0\) such that if \(|\alpha | \le \delta \), then \(\widehat{T}_{\epsilon }\) is invariant with respect to the system of ODE’s (D.1).

In case \((b - c)(a + b - 2c) > 0\), using (3.23), we can easily verify either (3.2) or (3.3) of Theorem 3.2. So, it is enough to consider the case where \((b - c)(a + b - 2c) < 0\). This case is subdivided into

and

Since both cases can be dealt with by analogous methods, we study only one of them. Namely, we fix \(a, b, c \in \mathbb {R}\) satisfying (Case 2) from now on.

Definition D.1

Set \(U = \{(x, y)| x + y > 0\}\). Define \(\tau : U \rightarrow \mathbb {R}^2\) by

Using the polar coordinates \((x, y) = (r\cos t, r\sin t)\), where \((r, t) \in (0, \infty ) \times (-\frac{\pi }{4}, \frac{3\pi }{4})\), it follows that

Set \(U_{\lambda } = \{(r\cos {t}, r\sin {t})| r > 0, t \in (\frac{\pi }{4} - \lambda , \frac{\pi }{4} + \lambda )\}\) for \(\lambda \in (0, \frac{\pi }{2}]\). Then one can immediately verify the next lemma by direct calculation.

Lemma D.2

For any \(\lambda \in (0, \frac{\pi }{2})\), \(\tau \) is a diffeomorphism between \(U_{\lambda }\) and \((0, \infty ) \times (-\tan {\lambda }, \tan {\lambda })\).
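
To make the direct calculation behind Lemma D.2 concrete, here is a sketch for one map that is consistent with the statements of this appendix, namely \(\tau (x, y) = \big (x + y, \frac{x - y}{x + y}\big )\). This explicit form is an assumption made for illustration only. It is chosen because it sends the resident dominant point \((x, y) = (1, 0)\) to (1, 1), the mutant dominant point (0, 1) to \((1, -1)\), and the segment \(x + y = 1\) to \(X = 1\).

$$\begin{aligned} X&= x + y = r(\cos t + \sin t) = \sqrt{2}\,r\cos \Big (t - \frac{\pi }{4}\Big ),\\ Y&= \frac{x - y}{x + y} = \frac{\cos t - \sin t}{\cos t + \sin t} = \tan \Big (\frac{\pi }{4} - t\Big ),\\ x&= \frac{1}{2}X(1 + Y), \quad y = \frac{1}{2}X(1 - Y). \end{aligned}$$

Under this assumption, for \(t \in (\frac{\pi }{4} - \lambda , \frac{\pi }{4} + \lambda )\) with \(\lambda < \frac{\pi }{2}\), the factor \(\cos (t - \frac{\pi }{4})\) is positive, so X ranges over \((0, \infty )\) while Y ranges over \((-\tan \lambda , \tan \lambda )\). The last line gives a smooth inverse, in agreement with Lemma D.2.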

Now if \((X, Y) = \tau (x, y)\), then the system of ODE’s on (x, y), (D.1), is transformed into

where

Define

Then \(F_1(X, Y, \alpha ) = XG_1(X, Y, \alpha )\) and \(F_2(X, Y, \alpha ) = \frac{1}{4}{\alpha }X(1 - Y^2)G_2(X, Y)\).

From now on, we are going to study (D.2) on \((0, \infty ) \times \mathbb {R}\). In particular, the original domain \([0, \infty ) \times [0, \infty )\) of (D.1) corresponds to \([0, \infty ) \times [-1, 1]\). Furthermore, since \(\tau (\widehat{T}_{\epsilon }) = [1 - \epsilon , 1 + \epsilon ] \times [-1, 1]\), for sufficiently small \(\epsilon \), there exists \(\delta \) such that if \(|\alpha | \le \delta \), then \([1 - \epsilon , 1 + \epsilon ] \times [-1, 1]\) is invariant with respect to the system of ODE’s (D.2). Hereafter in this appendix, we choose \(\epsilon \) and \(\delta \) in this manner. In addition, we are going to adapt the values of \(\epsilon \) and \(\delta \) to the circumstances several times in the course of our discussion.

Note that (1, 1) (resp. \((1, -1)\)) corresponds to the resident dominant (resp. the mutant dominant) equilibrium point. The Jacobian of the right-hand side of (D.2) at \((1, \pm 1)\) is

Hence if \(\alpha > 0\) (resp. \(\alpha < 0\)), then (1, 1) is locally unstable (resp. stable) and \((1, -1)\) is locally stable (resp. unstable).

Set \(I_{\epsilon } = [1 - \epsilon , 1 + \epsilon ] \times [-1, 1]\) and \(I_{\epsilon }^o = (1 - \epsilon , 1 + \epsilon ) \times (-1, 1)\). Next we are going to search for equilibrium points of (D.2) inside \(I_{\epsilon }\).

Lemma D.3

and

Then, for sufficiently small \(\epsilon > 0\), there exists \(\delta > 0\) such that the following statements (1), (2) and (3) hold.

(1) \(\Lambda _{\pm }\) are well-defined as functions on \([1 - \epsilon , 1 + \epsilon ]\) and, for \((X, Y) \in I_{\epsilon }^o\), \(F_2(X, Y, \alpha ) = 0\) if and only if \(Y = \Lambda _{\pm }(X)\).

(2) \(\Gamma \) is well-defined as a function on \([-1, 1] \times (-\delta , \delta )\) and \(\Gamma ([-1, 1] \times (-\delta , \delta )) \subseteq [1 - \epsilon , 1 + \epsilon ]\). Moreover, for \((X, Y) \in I_{\epsilon }^o\), \(F_1(X, Y, \alpha ) = 0\) if and only if \(X = \Gamma (Y, \alpha )\).

(3) For any \(\alpha \in (-\delta , \delta )\), there exists a unique \(\Psi _+(\alpha ) \in (0, 1)\) such that

$$\begin{aligned} \Psi _+(\alpha ) = \Lambda _+(\Gamma (\Psi _+(\alpha ), \alpha )) \end{aligned}$$

and a unique \(\Psi _-(\alpha ) \in (-1, 0)\) such that

$$\begin{aligned} \Psi _-(\alpha ) = \Lambda _-(\Gamma (\Psi _-(\alpha ), \alpha )). \end{aligned}$$

Moreover, define \(\Phi _{\pm }(\alpha ) = \Gamma (\Psi _{\pm }(\alpha ), \alpha )\) and set \(p_{\pm }(\alpha ) = (\Phi _{\pm }(\alpha ), \Psi _{\pm }(\alpha ))\). Then

(3a) The function \(p_{\pm }: (-\delta , \delta ) \rightarrow I_{\epsilon }^o\) is of class \(C^1\).

(3b)

$$\begin{aligned} p_{\pm }(0) = \Bigg (1, \pm \sqrt{\frac{a + b - 2c}{a - b}}\Bigg ). \end{aligned}$$

(3c) If \(\alpha \ne 0\), then \(p_+(\alpha )\) and \(p_-(\alpha )\) are all the equilibrium points of (D.2) in \(I_{\epsilon }^o\).

(3d) For any \(\alpha \in (-\delta , \delta )\),

$$\begin{aligned} (\Phi _-(-\alpha ), \Psi _-(-\alpha )) = (\Phi _+(\alpha ), -\Psi _+(\alpha )). \end{aligned}$$

(3e) \(\frac{d\Phi _+}{d\alpha }(\alpha ) > 0\) for any \(\alpha \in (-\delta , \delta )\).

Proof

(1) Note that \(\Lambda _{\pm }\) do not depend on \(\alpha \). Since

$$\begin{aligned} -1< \Lambda _-(1)< 0< \Lambda _+(1) < 1, \end{aligned}$$

choosing sufficiently small \(\epsilon > 0\), we see that \(\Lambda _-([1 - \epsilon , 1 + \epsilon ]) \subseteq (-1, 0)\) and \(\Lambda _+([1 - \epsilon , 1 + \epsilon ]) \subseteq (0, 1)\). Furthermore, for \((X, Y) \in I_{\epsilon }^o\), \(F_2(X, Y, \alpha ) = 0\) if and only if \(G_2(X, Y) = 0\). Since \(G_2(X, Y) = 0\) if and only if \(Y = \Lambda _{\pm }(X)\), the desired conclusion is verified.

(2) Since \(\Gamma (Y, 0) = 1\) for any Y, it follows that \(\Gamma ([-1, 1] \times (-\delta , \delta )) \subseteq [1 - \epsilon , 1 + \epsilon ]\) for sufficiently small \(\delta > 0\). For \((X, Y) \in I_{\epsilon }^o\), \(F_1(X, Y, \alpha ) = 0\) if and only if \(G_1(X, Y, \alpha ) = 0\). Since \(G_1(X, Y, \alpha ) = 0\) if and only if \(X = \Gamma (Y, \alpha )\), we obtain the desired conclusion.

(3) Note that

$$\begin{aligned} \frac{\partial \Gamma }{\partial {Y}} = \frac{\alpha (b - a)(1 - 3Y^2)}{(1 + \sqrt{1 - \alpha (b - a)(1 - Y^2)Y})^2\sqrt{1 - \alpha (b - a)(1 - Y^2)Y}} \end{aligned}$$ (D.3)

and

$$\begin{aligned} \frac{d\Lambda _+}{dX} = -\frac{c}{(b - a)\Lambda _+(X)X^2}. \end{aligned}$$ (D.4)

Since

$$\begin{aligned} \frac{\partial \Lambda _+\circ \Gamma }{\partial {Y}}(Y, \alpha ) = \frac{d\Lambda _+}{dX}(\Gamma (Y, \alpha ))\frac{\partial \Gamma }{\partial {Y}}(Y, \alpha ), \end{aligned}$$

due to (D.3), we can choose \(\delta > 0\) so that