Abstract

This paper presents theoretical results on the dynamical behavior of complex-valued neural networks with discontinuous neuron activations. Firstly, we introduce Filippov differential inclusions for complex-valued differential equations with discontinuous right-hand sides and define the Filippov solution for discontinuous complex-valued neural networks. Secondly, by separating complex-valued neural networks into their real and imaginary parts, we study the existence of equilibria using the Leray–Schauder alternative theorem for set-valued maps. Thirdly, by constructing an appropriate Lyapunov function, we derive sufficient conditions that ensure global asymptotic stability of the equilibria and convergence in finite time. Numerical examples are given to show the effectiveness and merits of the obtained results.

1 Introduction

As applications of neural networks spread more widely, neural network models that can deal with complex numbers are desired in various fields, such as optoelectronics, remote sensing, quantum devices, signal processing and electromagnetics [1,2,3,4]. Compared with real-valued neural networks, complex-valued neural networks can offer new capabilities and higher performance. For example, as Chakravarthy points out in [5], a complex-valued Hopfield neural network can operate in both fixed-point and oscillatory modes. In the fixed-point mode, the complex-valued neural network is similar to a continuous-time Hopfield network. In the oscillatory mode, when multiple patterns are stored, the network wanders chaotically among the patterns. On the other hand, in a real-valued neural network, the activation function is usually chosen to be a smooth, bounded analytic function such as the sigmoid function. However, in the complex domain, such a condition is not suitable for a complex-valued activation function because of Liouville’s theorem, which says that every function that is bounded and analytic on the whole complex plane must be constant. In place of analytic functions, discontinuous complex-valued functions become an important choice when designing complex-valued neural networks.

In recent years, researchers have paid more attention to the dynamics of complex-valued neural networks. To study the dynamical behavior, many methods have been proposed, such as the Lyapunov method, the synthesis method and the matrix measure method [7,8,9,10,11,12]. In [7], the authors studied the existence of an energy function for complex-valued Hopfield neural networks and proposed a stability condition using the Lyapunov method. Based on the delta differential operator, the global stability of complex-valued neural networks on time scales is studied using the Lyapunov method in [8, 9]. Stability criteria for discrete complex-valued neural networks are derived in [10] using the synthesis method. In [11, 12], the stability of complex-valued neural networks is studied based on matrix measure theory. Comparing these three methods, the Lyapunov method is easier to understand, but constructing a Lyapunov function is difficult, especially for discontinuous complex-valued neural networks. The synthesis method is usually used to analyze discrete neural networks. The advantage of the matrix measure method is that it avoids constructing a Lyapunov function, but the resulting conditions are difficult to verify. As time delay is inevitable in real applications, the dynamics of complex-valued neural networks with time delay have attracted many researchers’ interest [13,14,15,16,17,18,19,20,21,22,23,24,25]. The stability and the bifurcation set in the parameter plane for first-order complex delayed differential equations are studied in [13]. Stability conditions for complex-valued recurrent neural networks with time delay are derived in [14], and the stability of equilibria is further studied in [15] by separating the real and imaginary parts. In [16], the authors improved the results in [14, 15] using a complex-valued Lyapunov function. In [17, 18], the authors investigated global stability and boundedness for complex-valued recurrent neural networks on time scales. The exponential stability of delayed complex-valued neural networks is studied in [23,24,25]. However, all these results require the activation function to satisfy a Lipschitz condition or its real and imaginary parts to have bounded partial derivatives. These assumptions are very restrictive in real applications. As Hopfield points out in [26], the discontinuous character of the activation function cannot be neglected when we consider the dynamics of neural networks.

As far as we know, there are few results on the dynamics of complex-valued neural networks with discontinuous activation functions [27,28,29,30]. In [27], the multistability of complex-valued recurrent neural networks with real–imaginary-type activation functions is studied. In [28], the author studied the multiple \(\mu \)-stability of complex-valued neural networks. Dissipativity, passivity and passification conditions for memristor-based complex-valued recurrent neural networks are studied in terms of LMIs in [29, 30]. However, none of the above results considers the dynamical behavior caused by the discontinuity. If the equilibria are located on the discontinuity boundaries of the activation functions, the methods in [27,28,29,30] cannot be used to analyze the dynamics of discontinuous complex-valued neural networks.

In the past decades, real-valued neural networks with discontinuous activation functions have been extensively studied via differential inclusion theory [31,32,33,34,35,36,37,38,39], which can analyze the dynamical behavior of discontinuous neural networks successfully. However, the stability analysis of discontinuous complex-valued neural networks is not a simple task, and effective analysis methods are still lacking. Such dynamical behavior analysis faces the following three difficulties:

(1) How to define Filippov differential inclusions and the Filippov solution of a discontinuous complex-valued differential equation?

(2) How to ensure the existence of a Filippov solution and an equilibrium point for complex-valued Hopfield neural networks with discontinuous activation functions?

(3) How to obtain sufficient conditions that ensure the stability and convergence of equilibria for discontinuous complex-valued Hopfield neural networks?

As far as we know, results on the dynamics of discontinuous complex-valued neural networks based on complex differential inclusion theory are not yet available. Motivated by the above discussion, we study the dynamical behavior of discontinuous complex-valued Hopfield neural networks in this paper. The structure of this paper is as follows. In Sect. 2, we introduce the Filippov differential inclusions for complex-valued differential equations with discontinuous right-hand side and give some preliminaries and lemmas. Section 3 presents the main results, including the existence and uniqueness of equilibria, global asymptotic stability and convergence in finite time. Numerical examples are given in Sect. 4 and some concluding remarks are made in Sect. 5.

Notation. Throughout this paper, R and C denote the set of real numbers and the set of complex numbers, respectively. \(R^{n}\) and \(C^n\) denote, respectively, the n-dimensional Euclidean space and the n-dimensional unitary space. \(R^{n\times n}\) and \(C^{n\times n}\) are, respectively, the set of all \(n\times n\) real matrices and the set of all \(n\times n\) complex matrices. \(\Vert \cdot \Vert \) stands for the Euclidean vector norm or the induced matrix 2-norm. If \(A\in R^{n\times n},\) then \(A^{\mathrm{T}}\) denotes the transpose of \(A,\,A>0\, (A<0)\) means that A is positive definite (negative definite), and \(\lambda _{M}(A)\) \((\lambda _{m}(A))\) denotes the maximum (minimum) eigenvalue of A. Given a set \(Q\subset C^{n}\,(Q\subset R^{n}),\,K[Q]\) denotes the closure of the convex hull of Q. I denotes an identity matrix.

2 Problem Description and Some Preliminaries

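Let us consider the following complex-valued Hopfield neural network

\[\dot{z}(t)=-Dz(t)+Af(z(t))+u \qquad (2.1)\]

or, in component form,

\[\dot{z}_{i}(t)=-d_{i}z_{i}(t)+\sum _{j=1}^{n}a_{ij}f_{j}(z_{j}(t))+u_{i},\quad i=1,\,2,\ldots ,n, \qquad (2.2)\]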

where \(z(t)=[z_{1}(t),\,z_{2}(t),\ldots ,z_{n}(t)]^{\mathrm{{T}}}\in C^{n}\) is the state vector, \(D=\mathrm{diag}\{d_{1},\,d_{2},\ldots ,d_{n}\}\) with \(d_{i}>0\) is the self-feedback connection weight matrix, \(A=[a_{ij}]_{n\times n}\in C^{n\times n}\) is the feedback connection weight matrix, \(f(z(t))=[f_{1}(z_{1}(t)),\,f_{2}(z_{2}(t)),\ldots ,f_{n}(z_{n}(t))]^{\mathrm{{T}}}\in C^{n}\) denotes the complex-valued vector activation function and \(u=[u_{1},\, u_{2},\ldots ,u_{n}]^{\mathrm{T}}\in C^{n}\) is the vector of constant neuron inputs.
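To make the roles of \(D,\,A,\,f\) and u concrete, the following is a minimal sketch of how the right-hand side of the network could be evaluated. It assumes the model has the form \(\dot{z}=-Dz+Af(z)+u,\) which is inferred from the parameter descriptions above; the step-type activation and all function names are hypothetical choices made only for illustration.

```python
# Sketch only. Assumes the network has the form dz/dt = -D z + A f(z) + u.
# The activation is a hypothetical discontinuous example, not one taken from the paper.

def step(v: float) -> float:
    """Scalar nonlinearity with a jump discontinuity at v = 0."""
    if v > 0:
        return 1.0
    if v < 0:
        return -1.0
    return 0.0


def activation(z: complex) -> complex:
    """Hypothetical activation acting separately on Re(z) and Im(z)."""
    return complex(step(z.real), step(z.imag))


def rhs(z, d, A, u):
    """Evaluate -D z + A f(z) + u for a state z in C^n.

    z, u : lists of complex numbers (length n)
    d    : list of positive reals, the diagonal of D
    A    : n x n nested list of complex connection weights
    """
    n = len(z)
    fz = [activation(zj) for zj in z]
    return [
        -d[i] * z[i] + sum(A[i][j] * fz[j] for j in range(n)) + u[i]
        for i in range(n)
    ]
```

A call such as `rhs([1+1j, -1-1j], [1.0, 1.0], A, u)` returns the instantaneous velocity of each neuron state away from the discontinuity set; on that set the Filippov framework introduced below replaces the single value \(f(z)\) by a set.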

The discontinuous neuron activations \(f_{i}(z_{i})\) are assumed to satisfy the following properties.

A(1) For each \(i=1,\,2,\ldots ,n,\, f_{i}(z_{i})\) is continuous in finitely many open domains \(G_{k}\) and discontinuous on the boundary of \(G_{k},\) which is composed of finitely many smooth curves. The domains \(G_{k},\, k=1,\,2,\ldots ,s,\) satisfy \(\bigcup \nolimits _{k=1}^{s}(G_{k}\bigcup \partial G_{k})=C\) and \(G_{l}\bigcap G_{k}=\emptyset \) for \(1\leqslant l\ne k\leqslant s.\)

A(2) For every \(k=1,\,2,\ldots ,s,\) the limit \(\lim \nolimits _{z\rightarrow z_{0},z\in G_{k}}f_{i}(z)\) exists, where \(z_{0}\in \partial G_{k}.\)

A(3) There exist two nonnegative constants \(\alpha _{i}\) and \(\beta _{i}\) such that

Remark 1

According to Liouville’s theorem [6], every bounded entire function must be constant, that is to say, if f(z) is bounded and analytic for all \(z\in C,\) then f(z) is a constant function. When we design a complex-valued neural network model, the choice of activation function therefore becomes an important issue. Since a discontinuous complex-valued function is necessarily non-analytic, discontinuous activation functions are very useful in constructing complex-valued neural networks.
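A quick numerical illustration of this obstruction, offered as a sketch rather than as part of the paper's argument: the hyperbolic tangent is bounded on the real axis, but its analytic extension has a pole at \(z=\mathbf{i }\pi /2,\) so it is unbounded in the complex plane, as Liouville's theorem requires of any non-constant analytic candidate. The helper name `tanh_near_pole` is hypothetical.

```python
import cmath
import math


def tanh_near_pole(eps: float) -> float:
    """Return |tanh(z)| at z = i*(pi/2 - eps), a distance eps from the pole at i*pi/2."""
    return abs(cmath.tanh(complex(0.0, math.pi / 2 - eps)))


if __name__ == "__main__":
    # On the real axis tanh stays inside (-1, 1) ...
    print(abs(cmath.tanh(complex(2.0, 0.0))))
    # ... but along the imaginary axis it grows roughly like 1/eps near the pole.
    for eps in (1e-1, 1e-3, 1e-5):
        print(eps, tanh_near_pole(eps))
```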

Remark 2

Although the activation functions are discontinuous in [27, 28], the authors did not consider the case in which the equilibria of the neural networks are located on the boundaries of the discontinuous complex-valued activation functions. In this paper, we analyze the existence and uniqueness of equilibria and study the dynamical behavior under the framework of Filippov differential inclusions.

Remark 3

Assumption A(3) is less conservative than those in the existing results. If \(\beta _{i}=0,\) assumption A(3) plays a role similar to the Lipschitz condition needed in [14,15,16]. If \(\alpha _{i}=0,\) then \(f_{i}\) is a bounded function, which is required in [27].

Before giving the main results, we introduce the Filippov differential inclusions for complex-valued differential equations with discontinuous right-hand side. Consider the following autonomous complex-valued differential equation:

\[\frac{\mathrm{d}z}{\mathrm{d}t}=f(z), \qquad (2.3)\]

where \(f{\text {:}}\,C^{n}\mapsto C^{n}\) is measurable and essentially locally bounded.

Definition 2.1

Let \(E\subset C^{n}.\) A map \(z\mapsto F(z)\) is called a set-valued map from E to \(C^{n}\) if to each point \(z\in E\) there corresponds a nonempty set \(F(z)\subset C^{n}.\) A set-valued map F with nonempty values is said to be upper semi-continuous at \(z_{0}\in E\) if for any open set N containing \(F(z_{0}),\) there exists a neighborhood M of \(z_{0}\) such that \(F(M)\subset N,\) where \(F(M)=\bigcup \nolimits _{y\in M}F(y).\) \(F(z)\) is said to have a closed (convex, compact) image if for each \(z\in E,\, F(z)\) is closed (convex, compact).

Definition 2.2

For system (2.3) with discontinuous right-hand side, the set-valued map \(z\mapsto F(z)\) on \(C^{n}\) is defined as follows

where K(E) denotes the closure of the convex hull of the set \(E,\, f(B(z,\,\delta ) \backslash {\mathcal {N}})=\bigcup \nolimits _{y\in (B(z,\delta )\backslash {\mathcal {N}})}f(y),\, B(z,\,\delta )=\{y|\Vert y-z\Vert \le \delta \}\) is the ball with center z and radius \(\delta ,\) and \(\mu ({\mathcal {N}})\) is the Lebesgue measure of the set \({\mathcal {N}}\subset C^{n}.\) A solution in the sense of Filippov (or Filippov solution) of Eq. (2.3) with initial condition \(z(t_{0})=z_{0}\) is a vector-valued function z(t) that is absolutely continuous on any compact subinterval of \([t_{0},\,T)\) and satisfies \(z(t_{0})=z_{0}\) and the differential inclusion:

\(z(t)=z^{*}\) is called an equilibrium of system (2.3) if \(0\in F(z^{*})\) holds for any \(t\in R.\)
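As a scalar illustration of the Filippov set-valued map, consider a real step function with a single jump at the origin. For a piecewise-continuous scalar function with isolated jumps, the closed convex hull over shrinking balls, ignoring sets of measure zero, reduces to the interval spanned by the one-sided limits. The sketch below approximates those limits by sampling at a small offset; the function names and the offset `delta` are assumptions made for illustration, and the approach is not a general-purpose implementation of Definition 2.2.

```python
def step(v: float) -> float:
    """Step function with a jump at v = 0; the value at 0 itself is irrelevant."""
    if v > 0:
        return 1.0
    if v < 0:
        return -1.0
    return 0.0


def filippov_interval(f, x: float, delta: float = 1e-6):
    """Approximate the Filippov set of a scalar piecewise-continuous f at x.

    The one-sided limits are approximated by f(x - delta) and f(x + delta).
    The value f(x) is deliberately not used, because a single point has
    Lebesgue measure zero and is discarded in the Filippov construction.
    Returns the interval as a (lower, upper) tuple.
    """
    left, right = f(x - delta), f(x + delta)
    return (min(left, right), max(left, right))
```

At the jump, `filippov_interval(step, 0.0)` gives the whole interval from -1 to 1, so 0 belongs to the set and the origin can be an equilibrium of \(\dot{x}\in -K[\mathrm{step}(x)]\) even though no single-valued selection is continuous there. Away from the jump the interval collapses to a point.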

Remark 4

By analogy with the definition of absolute continuity for real functions, we define absolute continuity for complex-valued functions as follows. Suppose \(z(t){\text {:}}\,[a,\,b]\mapsto C\) is a complex-valued function defined on \([a,\,b].\) Then z(t) is said to be absolutely continuous on \([a,\,b]\) if for any \(\varepsilon >0\) there exists \(\delta >0\) such that for any finite collection of pairwise disjoint open intervals \((a_{i},\,b_{i})\subset [a,\,b],\,i=1,\,2,\ldots ,n,\) satisfying \(\sum \nolimits _{i=1}^{n}(b_{i}-a_{i})<\delta ,\) the inequality \(\sum \nolimits _{i=1}^{n}\Vert z(b_{i})-z(a_{i})\Vert <\varepsilon \) holds. The absolute continuity of z(t) on \([a,\,b]\) is equivalent to the absolute continuity of both x(t) and y(t) on \([a,\,b],\) where x(t) and y(t) are the real and imaginary parts of z(t), respectively.
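The equivalence stated in this remark follows from an elementary two-sided estimate, sketched here with \(z(t)=x(t)+\mathbf{i }y(t)\) and the intervals \((a_{i},\,b_{i})\) of the definition:

```latex
\[
\max \{|x(b_{i})-x(a_{i})|,\,|y(b_{i})-y(a_{i})|\}
\le \Vert z(b_{i})-z(a_{i})\Vert
\le |x(b_{i})-x(a_{i})|+|y(b_{i})-y(a_{i})| .
\]
```

Summing the left inequality over i shows that absolute continuity of z implies that of x and y with the same \(\delta ;\) summing the right inequality shows the converse once \(\delta \) is chosen so that the sums for x and y are each below \(\varepsilon /2.\)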

Remark 5

A complex set-valued map \(F{\text {:}}\,[a,\,b]\rightarrow P_{f}(C^{n})\) is said to be measurable if, for any \(z\in C^{n},\) the \(R_{+}\)-valued function \(t\mapsto \mathrm{dist}(z,\,F(t))=\inf \{\Vert z-v\Vert ,\,v\in F(t)\}\) is measurable.

Definition 2.3

A vector function \(z(t)=[z_1(t),\,z_2(t),\ldots ,z_n(t)]^{\mathrm{T}}\) is a state solution of the discontinuous system (2.1) on \([t_{0},\,T)\) if z(t) is absolutely continuous on any subinterval \([t_1,\,t_2]\) of \([t_{0},\,T)\) and there exists a measurable function \(\gamma (t)=[\gamma _1(t),\,\gamma _2(t),\ldots ,\gamma _n(t)]^{\mathrm{T}}\) with \(\gamma _j(t)\in K[f_{j}(z_{j}(t))]\) for a.e. \(t\in [t_{0},\,T)\) such that:

The measurable function \(\gamma (t)\) which satisfies (2.5) is called an output solution associated with the state solution z(t). With this definition, it turns out that the state z(t) is a solution of system (2.1) in the sense of Filippov since it satisfies:

For an initial value problem (IVP) associated to the complex-valued neural networks model (2.1), we give the following definition.

Definition 2.4

An absolutely continuous function \(z(t)=[z_1(t),\,z_2(t),\ldots ,z_n(t)]^{\mathrm{T}}\) associated with a measurable function \(\gamma (t)=[\gamma _1(t),\,\gamma _2(t),\ldots ,\gamma _n(t)]^{\mathrm{T}}\) is said to be a solution of the IVP for system (2.1) on \([t_{0},\,T)\) with initial value \(z(t_0)=z_0,\) if the following condition holds for all \(i=1,\,2,\ldots ,n,\)

Definition 2.5

\(z^{*}\) is said to be an equilibrium point of the set-valued map F(z) if \(0\in F(z^{*}).\) In particular, \(z^{*}\) is said to be an equilibrium of system (2.1) if there exists \(\gamma ^{*}\in K[f(z^{*})]\) such that

and \(\gamma ^{*}\) is said to be an output equilibrium point of system (2.1) corresponding to \(z^{*}.\)

Definition 2.6

Assume \(z^{*}\) is an equilibrium point of system (2.1). \(z^{*}\) is said to be globally asymptotically stable if it is stable in the sense of Lyapunov and globally attractive, where global attractiveness means that every trajectory z(t) tends to \(z^{*}\) as \(t\rightarrow \infty .\)

Definition 2.7

If \(\gamma {\text {:}}\,[a,\,+\infty )\mapsto C^{n}\) is a measurable function, \(\gamma ^{*}\) is said to be the limit of \(\gamma (t)\) in measure as \(t\rightarrow +\infty \) if \(\forall \varepsilon>0,\, \exists t_{\varepsilon }>0\) such that \(\mu \{t\in [t_{\varepsilon },\,+\infty ){\text {:}}\,\Vert \gamma (t)-\gamma ^{*}\Vert >\varepsilon \}<\varepsilon .\) In this case, we write \(\mu -\lim \nolimits _{t\rightarrow +\infty }\gamma (t)=\gamma ^{*},\) i.e., the output solution \(\gamma (t)\) converges to \(\gamma ^{*}\) in measure.

Definition 2.8

The system (2.1) is said to be globally convergent in finite time if the following conditions hold,

(i) The discontinuous system (2.1) has a unique equilibrium point \(z^{*}\) and a unique corresponding output equilibrium point \(\gamma ^{*};\)

(ii) For each \(z_{0}\in C^{n}\) and any solution z(t) of system (2.1) with \(z(t_{0})=z_{0},\) there exists \({\tilde{t}}>0\) such that \(z(t)=z^{*}\) for \(t>{\tilde{t}}.\)
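A toy instance may help to see why discontinuous activations allow convergence in finite time. The sketch below assumes the model form \(\dot{z}=-Dz+Af(z)+u\) with \(n=1,\,d_{1}=1,\,a_{11}=-1,\,u=0\) and a hypothetical step activation acting on the real and imaginary parts separately, so that each part v obeys \(\dot{v}\in -v-K[\mathrm{sign}(v)].\) For this scalar inclusion the Filippov solution is available in closed form: \(|v|\) decays as \((|v_{0}|+1)e^{-t}-1\) until it reaches zero at \(t=\ln (1+|v_{0}|)\) and stays there, because \(0\in -0-[-1,\,1].\) The example is chosen for transparency and is not claimed to satisfy the assumptions of the theorems in Sect. 3.

```python
import math


def part(v0: float, t: float) -> float:
    """Filippov solution at time t of dv/dt in -v - K[sign(v)], with v(0) = v0."""
    t_stop = math.log(1.0 + abs(v0))  # time at which |v| reaches zero
    if t >= t_stop:
        return 0.0  # the state stays at the equilibrium afterwards
    return math.copysign((abs(v0) + 1.0) * math.exp(-t) - 1.0, v0)


def z_of_t(z0: complex, t: float) -> complex:
    """State of the toy complex neuron; real and imaginary parts decouple."""
    return complex(part(z0.real, t), part(z0.imag, t))


def settling_time(z0: complex) -> float:
    """Smallest time after which z(t) equals the equilibrium z* = 0."""
    return math.log(1.0 + max(abs(z0.real), abs(z0.imag)))
```

For the initial state `3 - 1j` the settling time is \(\ln 4,\) and `z_of_t(3 - 1j, 1.5)` is exactly zero, in contrast with the merely asymptotic decay of the linear system \(\dot{v}=-v.\)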

In this paper, as in [12, 17, 21, 27], we choose the following real–imaginary-type functions as the activation functions in system (2.1),

where \(f^{R}_{i}(\mathrm{Re}(z_{i}))\) and \(f^{I}_{i}(\mathrm{Im}(z_{i}))\) are discontinuous functions. In order to study the stability of system (2.1), we separate it into its real and imaginary parts. Let \(z_{i}(t)=x_{i}(t)+\mathbf{i }y_{i}(t),\, a_{ij}=a_{ij}^{R}+\mathbf{i }a_{ij}^{I},\, f_{i}(z_{i}(t))=f_{i}^{R}(x_{i}(t))+\mathbf{i }f_{i}^{I}(y_{i}(t))\) and \(u=u^{R}+\mathbf{i }u^{I},\) where \(\mathbf{i }\) denotes the imaginary unit, i.e., \(\mathbf{i }=\sqrt{-1}.\) Denote \(x=[x_{1},\,x_{2},\ldots ,x_{n}]^{\mathrm{T}}\in R^{n}\) and \(y=[y_{1},\,y_{2},\ldots ,y_{n}]^{\mathrm{T}}\in R^{n};\) then system (2.1) can be separated into its real and imaginary parts as follows:

where \(A^{R}=[a^{R}_{ij}],\, A^{I}=[a^{I}_{ij}],\, f^{R}(x)=[f_{1}^{R}(x_{1}),\,f_{2}^{R}(x_{2}),\ldots ,f_{n}^{R}(x_{n})]^\mathrm{T},\, u^{R}=[u_{1}^{R},\,u_{2}^{R},\,\ldots ,u_{n}^{R}]^{\mathrm{T}},\, f^{I}(y)=[f_{1}^{I}(y_{1}),\,f_{2}^{I}(y_{2}),\ldots ,f_{n}^{I}(y_{n})]^\mathrm{T}\) and \(u^{I}=[u_{1}^{I},\,u_{2}^{I},\ldots ,u_{n}^{I}]^{\mathrm{T}}.\)
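The coupling structure of the separated system comes from complex multiplication: for \(A=A^{R}+\mathbf{i }A^{I}\) and an output vector \(\gamma =\gamma ^{R}+\mathbf{i }\gamma ^{I},\) one has \(\mathrm{Re}(A\gamma )=A^{R}\gamma ^{R}-A^{I}\gamma ^{I}\) and \(\mathrm{Im}(A\gamma )=A^{I}\gamma ^{R}+A^{R}\gamma ^{I}.\) The following sketch checks this identity numerically; the function names are hypothetical and the matrices in the usage note are arbitrary illustrative values.

```python
def complex_matvec(A, g):
    """Compute A g for a complex matrix A (nested lists) and a complex vector g."""
    return [sum(a * gj for a, gj in zip(row, g)) for row in A]


def separated_matvec(AR, AI, gR, gI):
    """Compute the real and imaginary parts of (AR + i AI)(gR + i gI) with real arithmetic."""
    n = len(gR)
    real = [
        sum(AR[i][j] * gR[j] - AI[i][j] * gI[j] for j in range(n))
        for i in range(n)
    ]
    imag = [
        sum(AI[i][j] * gR[j] + AR[i][j] * gI[j] for j in range(n))
        for i in range(n)
    ]
    return real, imag
```

With, for instance, `A = [[1+2j, -1j], [0.5, 3-1j]]` and `g = [2-1j, -1+4j]`, the two routines agree entry by entry, which is why a statement about the complex system can be transferred to a real system of dimension 2n.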

From A(1) and A(2), it is easy to see that \(f^{R}_{i}(x_{i})\) is discontinuous at some points \(x^{k}_{i}\) and \(f^{I}_{i}(y_{i})\) is discontinuous at some points \(y^{k}_{i}.\) According to A(2), for every \(i=1,\,2,\ldots ,n,\) the limits \(\lim \nolimits _{x\rightarrow x_{0}}f_{i}^{R}(x)=f_{i}^{R}(x_{0})\) and \(\lim \nolimits _{y\rightarrow y_{0}}f_{i}^{I}(y)=f_{i}^{I}(y_{0})\) exist, where \((x,\,y)\in {\bar{G}}_{k}\) and \((x_{0},\,y_{0})\in \partial {\bar{G}}_{k}.\) Based on the above analysis and real-valued set-valued maps, we can define the complex set-valued maps for (2.6) in the following special way:

where \(f^R(B(x,\,\delta )\backslash \mathcal {N})=\bigcup \nolimits _{{\bar{x}}\in (B(x,\,\delta )\backslash \mathcal {N})}f^{R}({\bar{x}}),\, B(x,\,\delta )=\{{\bar{x}}|\Vert {\bar{x}}-x\Vert \le \delta \}\) is the ball with center x and radius \(\delta ,\, f^I(B(y,\,\delta )\backslash {\mathcal {N}})=\bigcup \nolimits _{{\bar{y}}\in (B(y,\,\delta )\backslash {\mathcal {N}})}f^{I}({\bar{y}}),\, B(y,\,\delta )=\{{\bar{y}}|\Vert {\bar{y}}-y\Vert \le \delta \}\) is the ball with center y and radius \(\delta ,\) and \(\mu ({\mathcal {N}})\) is the Lebesgue measure of the set \({\mathcal {N}}\subset R^{n}.\)

Remark 6

Compared with Definition 2.2, it follows that

where \(f(z)=f^{R}(x)+\mathbf{i }f^{I}(y).\) For example, let

\(\bigcap \nolimits _{\delta >0}\bigcap \nolimits _{\mu (\mathcal {N})=0}K[f(B(z,\,\delta )\backslash {\mathcal {N}})]\) and \(\bigcap \nolimits _{\delta>0}\bigcap \nolimits _{\mu (\mathcal {N})=0}K[f^{R}(B(x,\,\delta ) \backslash \mathcal {N})]+\mathbf{i }\bigcap \nolimits _{\delta >0}\bigcap \nolimits _{\mu (\mathcal {N})=0}K[f^{I}(B(y,\,\delta )\backslash {\mathcal {N}})]\) at the point \(z=0\) are shown in Figs. 1 and 2, respectively. This illustrates the correctness of formula (2.9).

Fig. 1 Complex differential inclusion of \(f(\cdot )\) at \(z=0\) in Definition 2.2

Fig. 2 Complex differential inclusion of \(f(\cdot )\) at \(z=0\) in the special way of (2.7)

According to A(3), there exist \(\alpha ^{R}_{i},\,\eta ^{R}_{i}\) and \(\alpha ^{I}_{i},\,\eta ^{I}_{i}\) satisfying

where

and

According to Definition 2.3, \(z(t)=x(t)+\mathbf{i }y(t)\) is a solution of system (2.1) if and only if \([x^\mathrm{T}(t),\,y^{\mathrm{T}}(t)]^{\mathrm{T}}\) is a solution of system (2.7), i.e.,

Now denote

Equation (2.11) can be rewritten as

According to Definition 2.4, we can give the definition of the IVP associated to system (2.7) as follows.

Definition 2.9

An absolutely continuous function \([x^{\mathrm{T}},\,y^{\mathrm{T}}]^{\mathrm{T}}\) associated with a measurable function \([(\gamma ^{\mathrm{R}})^\mathrm{T},\,(\gamma ^{\mathrm{I}})^{\mathrm{T}}]^{\mathrm{T}}\) is said to be a solution of the IVP for system (2.7) on \([t_{0},\,T)\) with initial value \(x(t_0)=x_0,\, y(t_0)=y_0,\) if the following condition holds,

where \(x(t)=[x_1(t),\,x_2(t),\ldots ,x_n(t)]^{\mathrm{T}},\, y(t)=[y_1(t),\,y_2(t),\ldots ,y_n(t)]^{\mathrm{T}},\, \gamma ^{R}(t)=[\gamma ^{\mathrm{R}}_1(t),\,\gamma ^{\mathrm{R}}_2(t),\ldots ,\gamma ^{\mathrm{R}}_n(t)]^{\mathrm{T}}\) and \(\gamma ^{I}(t)=[\gamma ^{\mathrm{I}}_1(t),\,\gamma ^\mathrm{I}_2(t),\ldots ,\gamma ^{\mathrm{I}}_n(t)]^{\mathrm{T}}.\)

According to Definition 2.5, \(z^{*}=x^{*}+\mathbf{i }y^{*}\) is an equilibrium point of system (2.1) if and only if \([(x^{*})^{\mathrm{T}},\,(y^{*})^{\mathrm{T}}]^{\mathrm{T}}\) is an equilibrium point of system (2.7). That is to say, there exist \(\gamma ^{R^{*}}=[\gamma ^{R^{*}}_{1},\,\gamma ^{R^{*}}_{2},\ldots ,\gamma ^{R^{*}}_{n}]^\mathrm{T}\) and \(\gamma ^{I^{*}}=[\gamma ^{I^{*}}_{1},\,\gamma ^{I^{*}}_{2},\ldots ,\gamma ^{I^{*}}_{n}]^\mathrm{T},\) such that

holds, where \(\gamma ^{R^{*}}_{i}\in K[f_{i}^{R}(x_{i}^{*})]\) and \(\gamma ^{I^{*}}_{i}\in K[f_{i}^{I}(y^{*}_{i})].\) Therefore, we can analyze the existence and global stability of equilibrium point for system (2.7) through analyzing the equilibrium point of (2.12).

Before giving the stability analysis on the equilibrium point of system (2.1), we present four important lemmas, which will be used later.

Lemma 2.10

(Leray–Schauder alternative theorem [40]) If X is a Banach space, \(E\subset X\) is nonempty and convex with \(0\in E,\) and \(G{\text {:}}\,E\mapsto P_{kc}(E)\) is an upper semi-continuous multifunction which maps bounded sets into relatively compact sets, then one of the following statements is true:

(i) the set \(\Gamma =\{x\in E{\text {:}}\,x\in \lambda G(x),\,\lambda \in (0,\,1)\}\) is unbounded;

(ii) \(G(\cdot )\) has a fixed point in E, i.e., there exists \(x\in E\) such that \(x\in G(x).\)

Here \(P_{kc}(E)\) denotes the collection of all nonempty, compact, convex subsets of E.

Lemma 2.11

[31, 33] If \(V(x){\text {:}}\, R^{n}\rightarrow R\) is C-regular and x(t) is absolutely continuous on any compact subinterval of \([0,\,{+}\infty ),\) then x(t) and \(V(x(t)){\text {:}}\,[0,\,{+}\infty )\rightarrow R\) are differentiable for a.a. \(t\in [0,\,{+}\infty )\) and

where \(\partial _{c}V(x(t))\) is the Clarke generalized gradient of V at x(t) and \(\gamma (t)\) is a measurable function.

Lemma 2.12

[41] Assume that there exists a continuous, differentiable almost everywhere, positive definite and radially unbounded function \(V{\text {:}}\,R^{n}\mapsto R,\) such that:

(i) \({\dot{V}}\le 0\) for all \(x\in R^{n};\)

(ii) The origin is the only invariant subset of the set \(E=\{x\in R^{n}{\text {:}}\,{\dot{V}}=0\}.\)

Then the equilibrium \(x=0\) of system (2.1) is globally asymptotically stable on \(R^{n}.\)

Lemma 2.13

[14] For any vectors \(x,\,y\in R^m\) and any positive definite matrix \(P\in R^{m\times m},\) the following matrix inequality holds:

3 Dynamical Behavior Analysis

The goal of this section is to investigate the dynamical behavior of system (2.1). Firstly, we investigate viability, namely, that there exists at least one solution of system (2.1) on \([0,\,{+}\infty ).\)

Lemma 3.1

[36] If a set-valued map \(F{\text {:}}\, E\mapsto R^{n}\,(E\subset R^{n})\) is upper semi-continuous in \(R^{n}\) with nonempty bounded closed convex values and there exist nonnegative constants p and q such that \(\Vert F(x)\Vert \le p\Vert x\Vert +q,\) then the maximal interval of existence of each solution with initial condition \(x(t_{0})=x_{0}\) of the differential inclusion \({\dot{x}}\in F(x)\) is \([t_{0},\,{+}\infty ).\)

Theorem 3.2

Suppose A(1)–A(3) are satisfied. Then system (2.1) with initial value \(z(0)=z_0\) has at least one solution z(t) on \([0,\,{+}\infty ).\)

Proof

Based on the discussion in Sect. 2 and formula (2.12), the set-valued map \(w(t)\mapsto -{\bar{D}}w(t)+{\bar{A}}K[f(w(t))]+{\bar{u}}\) is upper semi-continuous with nonempty compact convex values, and the local existence of a solution w(t) of system (2.12) can be guaranteed [37].

From A(3) and formula (2.10), there exist two nonnegative constants \({\bar{\alpha }},\,{\bar{\beta }}\) satisfying

It follows that

where \(\bar{{\bar{\alpha }}}=\Vert {\bar{D}}\Vert +{\bar{\alpha }}\Vert {\bar{A}}\Vert \) and \(\bar{{\bar{\beta }}}={\bar{\beta }}\Vert {\bar{A}}\Vert +\Vert {\bar{u}}\Vert .\)

According to (2.12), for the fixed w, we have

It follows that

By the Gronwall inequality, one obtains

Hence, since w(t) remains bounded for positive times, it is defined on \([0,\,{+}\infty ),\) i.e., x(t) and y(t) exist on \([0,\,{+}\infty ).\) Let \(z(t)=x(t)+\mathbf{i }y(t);\) then z(t) exists on \([0,\,{+}\infty )\) and satisfies:

This completes the proof of Theorem 3.2. \(\square \)
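The Gronwall step used in the proof above can be sketched in a generic form. The symbols a and b below are placeholders for the growth constants of the proof (they play the roles of \(\bar{{\bar{\alpha }}}\) and \(\bar{{\bar{\beta }}}\)), and the integral inequality is assumed to take the stated shape, since it follows from a linear growth bound \(\Vert {\dot{w}}(t)\Vert \le a\Vert w(t)\Vert +b\) for a.e. t:

```latex
\[
\Vert w(t)\Vert \le \Vert w(0)\Vert +\int _{0}^{t}\bigl(a\Vert w(s)\Vert +b\bigr)\,\mathrm{d}s
\quad \Longrightarrow \quad
\Vert w(t)\Vert \le \bigl(\Vert w(0)\Vert +bt\bigr)e^{at},\qquad t\ge 0 .
\]
```

The right-hand side is finite for every finite t, so the solution cannot blow up in finite time and can be continued to \([0,\,{+}\infty ).\)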

Theorem 3.3

There exists at least one equilibrium of system (2.1) if A(1)–A(3) are satisfied and the following two assumptions hold

A(4) For any \((u_{1},\,v_{1})\in R^{2}\) and \((u_{2},\,v_{2})\in R^{2},\) there exist two constants \(L_{i}^{R}\) and \(L_{i}^{I}\) such that

for \(\forall \gamma ^{R}_{i}\in K[f_{i}^{R}(u_{1})],\,\zeta ^{R}_{i}\in K[f_{i}^{R}(u_{2})],\,\gamma ^{I}_{i}\in K[f_{i}^{I}(v_{1})]\) and \(\zeta ^{I}_{i}\in K[f_{i}^{I}(v_{2})].\)

A(5) There exists \(P=\mathrm{diag}\{p_{1},\,p_{2},\ldots ,p_{n}\}\) with \(p_{i}>0\) such that \(PA^{I}=(A^{I})^{\mathrm{T}}P,\, PA^{R}+(A^{R})^\mathrm{T}P<0,\) and

where \(d_{m}=\min \nolimits _{1\le i\le n}\{d_{i}\},\, \lambda _{m}={-}\lambda _{M}\{PA^{R}+(A^{R})^{\mathrm{T}}P\}\) and \(L_{i}=\max \{L_{i}^{R},\,L_{i}^{I}\}.\)
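The two structural requirements of A(5) that are stated in the text, namely \(PA^{I}=(A^{I})^{\mathrm{T}}P\) and \(PA^{R}+(A^{R})^\mathrm{T}P<0,\) are easy to test numerically for given data. The sketch below does so with a hand-written Cholesky test for definiteness. It does not check the additional scalar inequality of A(5), whose display is not reproduced here; the function names and the matrices in the usage note are illustrative assumptions.

```python
import math


def is_positive_definite(S) -> bool:
    """Return True if the symmetric matrix S admits a Cholesky factorization."""
    n = len(S)
    L = [[0.0] * n for _ in range(n)]
    for i in range(n):
        for j in range(i + 1):
            s = sum(L[i][k] * L[j][k] for k in range(j))
            if i == j:
                pivot = S[i][i] - s
                if pivot <= 0.0:
                    return False  # a non-positive pivot means S is not positive definite
                L[i][i] = math.sqrt(pivot)
            else:
                L[i][j] = (S[i][j] - s) / L[j][j]
    return True


def check_a5_structure(p, AR, AI, tol: float = 1e-12):
    """Check the two matrix conditions of A(5) for P = diag(p).

    Returns (symmetry_ok, negative_definite_ok), where
    symmetry_ok          tests P A^I = (A^I)^T P, i.e. p_i a^I_ij = p_j a^I_ji,
    negative_definite_ok tests P A^R + (A^R)^T P < 0.
    """
    n = len(p)
    symmetry_ok = all(
        abs(p[i] * AI[i][j] - p[j] * AI[j][i]) <= tol
        for i in range(n)
        for j in range(n)
    )
    # Negated matrix -(P A^R + (A^R)^T P), which must be positive definite.
    neg_S = [
        [-(p[i] * AR[i][j] + p[j] * AR[j][i]) for j in range(n)]
        for i in range(n)
    ]
    return symmetry_ok, is_positive_definite(neg_S)
```

For example, with `p = [1.0, 2.0]`, `AR = [[-2.0, 0.5], [0.25, -1.0]]` and `AI = [[0.3, 0.4], [0.2, -0.1]]`, both checks pass. Because P is diagonal, the symmetry condition reduces to the entrywise relation \(p_{i}a^{I}_{ij}=p_{j}a^{I}_{ji},\) which is the form used in the code.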

Proof

Let \(\Phi (w)=w-{\bar{D}}w+{\bar{A}}K[f(w)]+{\bar{u}}.\) Then \(w^{*}\) is an equilibrium of (2.12) if and only if \(w^{*}\) is a fixed point of \(\Phi (\cdot ),\) i.e., \(w^{*}\in \Phi (w^{*}).\) It is clear that \(\Phi {\text {:}}\,R^{2n}\mapsto P_{kc}(R^{2n})\) is an upper semi-continuous multifunction which maps bounded sets into relatively compact sets under the assumptions A(1)–A(3). In order to solve the fixed point problem \(w^{*}\in \Phi (w^{*}),\) it is sufficient to show that the set \(\Gamma =\{w\in R^{2n}{\text {:}}\,w\in \theta \Phi (w),\,\theta \in (0,\,1)\}\) is bounded. Let

then \(\Gamma =\{w\in R^{2n}{\text {:}}\,0\in \Phi (w,\,\theta ),\theta \in (0,\,1)\}.\) Rewrite (3.5) as

where \({\tilde{f}}(w)=f(w)-{\tilde{\eta }},\, {\tilde{\eta }}\in K[f(0)]\) is a constant vector, and \(\tilde{{\bar{u}}}={\bar{A}}{\tilde{\eta }}+{\bar{u}}.\)

From A(5), if \(L_{i}>0,\) we can choose a positive number c such that

If \(L_{i}<0,\) we can choose a positive number c such that

For any \(s\in \Phi (w,\,\theta ),\) there exists \({\hat{\gamma }}\in K[{\tilde{f}}(w)]\) such that \(s={-}(1-\theta )w+\theta [-{\bar{D}}w+{\bar{A}}{\hat{\gamma }}+\tilde{{\bar{u}}}],\) where \({\hat{\gamma }}=[({\hat{\gamma }}^{R})^\mathrm{T},\,({\hat{\gamma }}^{I})^{\mathrm{T}}]^{\mathrm{T}},\, {\hat{\gamma }}^{R}\in K[{\tilde{f}}^{R}(x)],\, {\tilde{f}}^{R}(x)=f^{R}(x)-{\tilde{\eta }}^{R},\, {\tilde{\eta }}^{R}\in K[f^{R}(0)]\) and \({\hat{\gamma }}^{I}\in K[{\tilde{f}}^{I}(y)],\, {\tilde{f}}^{I}(y)=f^{I}(y)-{\tilde{\eta }}^{I},\, {\tilde{\eta }}^{I}\in K[f^{I}(0)].\) From assumption A(4), for any \((x_{i},\,y_{i})\in R^{2}\) and \({\hat{\gamma }}_{i}^{R}\in K[{\tilde{f}}_{i}^{R}(x_{i})],\, {\hat{\gamma }}_{i}^{I}\in K[{\tilde{f}}_{i}^{I}(y_{i})],\) it follows that

Therefore,

Since \(d_i>0,\) one obtains

It follows that

where \(\varepsilon =\min \nolimits _{1\le i\le n}(1-2cp_{i}L_{i})>0.\)

Since \(PA^{I}=(A^{I})^{\mathrm{T}}P,\) it is easy to get \(({\hat{\gamma }}^{R})^\mathrm{T}PA^{I}{\hat{\gamma }}^{I}=({\hat{\gamma }}^{I})^\mathrm{T}PA^{I}{\hat{\gamma }}^{R},\) which will be used in (3.13).
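The identity just stated can be verified in one line, using only that a scalar equals its own transpose, that P is diagonal (hence \(P^{\mathrm{T}}=P\)), and the assumption \(PA^{I}=(A^{I})^{\mathrm{T}}P\) from A(5):

```latex
\[
({\hat{\gamma }}^{R})^{\mathrm{T}}PA^{I}{\hat{\gamma }}^{I}
=\bigl(({\hat{\gamma }}^{R})^{\mathrm{T}}PA^{I}{\hat{\gamma }}^{I}\bigr)^{\mathrm{T}}
=({\hat{\gamma }}^{I})^{\mathrm{T}}(A^{I})^{\mathrm{T}}P\,{\hat{\gamma }}^{R}
=({\hat{\gamma }}^{I})^{\mathrm{T}}PA^{I}{\hat{\gamma }}^{R}.
\]
```

In other words, A(5) makes \(PA^{I}\) a symmetric matrix, so the bilinear form it defines does not depend on the order of its two arguments.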

Note that for any \(s\in \Phi (w,\,\theta ),\)

where \(\alpha =\min \{\varepsilon ,\,\varepsilon d_{m}\},\, M=2(1+cp_{M}{\bar{\alpha }})\Vert \tilde{{\bar{u}}}\Vert ,\, N=2cp_{M}{\bar{\beta }}\Vert \tilde{{\bar{u}}}\Vert \) and \(p_{M}=\max \nolimits _{1\le i\le n}\{p_{i}\}.\)

If \(R_{0}\) is sufficiently large, then

for \(\Vert w\Vert >R_{0},\) which means that \(0\not \in \Phi (w,\,\theta )\) when \(\Vert w\Vert >R_{0},\) i.e., \(0\in \Phi (w,\,\theta )\) only if \(\Vert w\Vert \le R_{0},\) so \(\Gamma \) is bounded. According to Lemma 2.10, there exists \(w^{*}\in R^{2n}\) such that \(w^{*}\in \Phi (w^{*}).\) By Definition 2.2 in [34], \(w^{*}\) is an equilibrium of system (2.12), i.e., \(w^{*}=[(x^{*})^{\mathrm{T}},\,(y^{*})^\mathrm{T}]^{\mathrm{T}}\) is an equilibrium of system (2.7). From (2.13), we can obtain the existence of an output equilibrium \([(\gamma ^{R^{*}})^{\mathrm{T}},\,(\gamma ^{I^{*}})^{\mathrm{T}}]^{\mathrm{T}}\) corresponding to \([(x^{*})^{\mathrm{T}},\,(y^{*})^{\mathrm{T}}]^{\mathrm{T}}.\) Let \(z^{*}=x^{*}+\mathbf{i }y^{*}\) and \(\gamma ^{*}=\gamma ^{R^{*}}+\mathbf{i }\gamma ^{I^{*}};\) then \(z^{*}\) is an equilibrium of system (2.1) and \(\gamma ^{*}\) is an output equilibrium corresponding to \(z^{*}.\) \(\square \)

Remark 7

In many papers, such as [14, 21, 24, 25], the activation functions are assumed to satisfy a Lipschitz condition. In this paper, this assumption is removed. Since the continuity of the activation function implies assumption A(4), our result is less conservative.

To simplify the proof of global stability of system (2.1), we shall shift the equilibrium point \(z^{*}=x^{*}+\mathbf{i }y^{*}\) of (2.1) to the origin. Let \({\tilde{z}}=z-z^{*}\) and \({\tilde{\gamma }}=\gamma -\gamma ^{*}.\) Then we have

where \({\tilde{\gamma }}\in K[{\tilde{f}}(z(t))]\) and \({\tilde{f}}_{i}(z_{i}(t))=f_{i}({\tilde{z}}_{i}(t)+z_{i}^{*}),\, i=1,\,2,\ldots ,n.\) Obviously, \({\tilde{f}}\) satisfies A(1)–A(3) and \(0\in K[{\tilde{f}}(0)].\) Separating (3.15) into its real and imaginary parts, it follows that

where \({\tilde{x}}=x-x^{*},\,{\tilde{y}}=y-y^{*},\, {\tilde{\gamma }}^{R}\in K[{\tilde{f}}^{R}(x)],\, {\tilde{f}}_{i}^{R}(x_{i})=f_{i}^{R}(x_{i}^{*}+{\tilde{x}}_{i})\) and \({\tilde{\gamma }}^{I}\in K[{\tilde{f}}^{I}(y)],\, {\tilde{f}}_{i}^{I}(y_{i})=f_{i}^{I}(y_{i}^{*}+{\tilde{y}}_{i}).\) Clearly, from assumption A(4), for any \(({\tilde{x}}_{i},\,{\tilde{y}}_{i})\in R^{2}\) and \({\tilde{\gamma }}_{i}^{R}\in K[{\tilde{f}}_{i}^{R}({\tilde{x}}_{i})],\, {\tilde{\gamma }}_{i}^{I}\in K[{\tilde{f}}_{i}^{I}({\tilde{y}}_{i})],\) it follows that

Theorem 3.4

(Global Asymptotic Stability) If assumptions A(1)–A(5) are satisfied, then for any input vector \(u\in C^{n},\) system (2.1) has a unique equilibrium point \(z^{*},\) which is globally asymptotically stable.

Proof

Let us consider the Lyapunov function

where c is defined in (3.7) or (3.8).

From (3.16) and by an analysis similar to that in [38], it is easy to obtain \(V[{\tilde{z}},\,{\tilde{\gamma }}]\ge 0,\,V(0)=0.\) In addition, \(V[{\tilde{z}},\,{\tilde{\gamma }}]\) is C-regular, continuous, positive definite, and radially unbounded.

By Lemma 2.11, we calculate and estimate the derivative along system (3.16) as follows

where \(\rho =c \lambda _{m}-\frac{2\Vert (A^{R})^{\mathrm{T}}A^{R}+(A^{I})^\mathrm{T}A^{I}\Vert }{d_{m}}>0.\) Therefore, \({\dot{V}}\) is negative definite. From Lemma 2.12, it follows that the equilibrium point 0 of (3.15) is globally asymptotically stable. Therefore, the equilibrium \(z^{*}=x^{*}+\mathbf{i }y^{*}\) of system (2.1) is globally asymptotically stable. From (3.20), there exists exactly one equilibrium \(z^{*}\) of system (2.1). \(\square \)

From (3.20), we have \({\dot{V}}[{\tilde{z}},\,{\tilde{\gamma }}]\le -\rho ({\tilde{\gamma }}^{I})^{\mathrm{T}}{\tilde{\gamma }}^{I}\) and \({\dot{V}}[{\tilde{z}},\,{\tilde{\gamma }}]\le -\rho ({\tilde{\gamma }}^{R})^{\mathrm{T}}{\tilde{\gamma }}^{R}.\) It follows that

and

Therefore, one obtains \(\int _{0}^{t}({\tilde{\gamma }}^{R}(s))^\mathrm{T}{\tilde{\gamma }}^{R}(s)\mathrm{d}s\le \frac{V[{\tilde{z}},\,{\tilde{\gamma }}](0)}{\rho }\) and \(\int _{0}^{t}({\tilde{\gamma }}^{I}(s))^\mathrm{T}{\tilde{\gamma }}^{I}(s)\mathrm{d}s\le \frac{V[{\tilde{z}},\,{\tilde{\gamma }}](0)}{\rho }.\)
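These integral bounds can be checked directly. As a sketch, assuming that \(V[{\tilde{z}},\,{\tilde{\gamma }}](t)\) is absolutely continuous along solutions (so that it can be recovered by integrating its derivative), integrating \({\dot{V}}\le -\rho ({\tilde{\gamma }}^{R})^{\mathrm{T}}{\tilde{\gamma }}^{R}\) over \([0,\,t]\) and using \(V\ge 0\) gives

$$\begin{aligned} \rho \int _{0}^{t}\left( {\tilde{\gamma }}^{R}(s)\right) ^{\mathrm{T}}{\tilde{\gamma }}^{R}(s)\,\mathrm{d}s\le V[{\tilde{z}},\,{\tilde{\gamma }}](0)-V[{\tilde{z}},\,{\tilde{\gamma }}](t)\le V[{\tilde{z}},\,{\tilde{\gamma }}](0), \end{aligned}$$

and the same computation with \({\tilde{\gamma }}^{I}\) in place of \({\tilde{\gamma }}^{R}\) gives the second bound. Dividing by \(\rho >0\) yields the stated inequalities, which hold uniformly in \(t.\)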

For any \(\sigma >0,\) let \(E^{R}_{\sigma }=\left\{ t\in [0,\,{+}\infty ){\text {:}}\,\Vert {\tilde{\gamma }}^{R}(t)\Vert >\frac{\sigma }{\sqrt{2}}\right\} \) and \(E^{I}_{\sigma }=\big \{t\in [0,\,{+}\infty ){\text {:}}\,\Vert {\tilde{\gamma }}^{I}(t)\Vert >\frac{\sigma }{\sqrt{2}}\big \},\) we have

Therefore, \(\mu (E^{R}_{\sigma })<\infty \) and \(\mu (E^{I}_{\sigma })<\infty .\) Since \(E_{\sigma }=\{t\in [0,\,{+}\infty ){\text {:}}\,\Vert {\tilde{\gamma }} (t)\Vert>\sigma \}\subseteq \left\{ t\in [0,\,{+}\infty ){\text {:}}\,\Vert {\tilde{\gamma }}^{R}(t)\Vert>\frac{\sigma }{\sqrt{2}}\right\} \bigcup \left\{ t\in [0,\,{+}\infty ){\text {:}}\,\Vert {\tilde{\gamma }}^{I}(t)\Vert >\frac{\sigma }{\sqrt{2}}\right\} ,\) where \({\tilde{\gamma }}={\tilde{\gamma }}^{R}+\mathbf{i }{\tilde{\gamma }}^{I},\) we have \(\mu (E_{\sigma })<\infty .\) From Proposition 2 in [31], one can see that \({\tilde{\gamma }}(t)\) converges to zero in measure, that is, \(\mu -\lim \nolimits _{t\rightarrow \infty }{\tilde{\gamma }}(t)=0\) and \(\mu -\lim \nolimits _{t\rightarrow \infty }\gamma (t)=\gamma ^{*}.\) In view of Definition 2.7, the output solution \(\gamma (t)=\gamma ^{R}(t)+\mathbf{i }\gamma ^{I}(t)\) of system (2.1) converges to \(\gamma ^{*}=\gamma ^{R^{*}}+\mathbf{i }\gamma ^{I^{*}}\) in measure.
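The finiteness of these measures follows from a Chebyshev-type estimate. As a sketch, assuming \(\Vert \cdot \Vert \) is the Euclidean norm, the integrand exceeds \(\sigma ^{2}/2\) on \(E^{R}_{\sigma },\) so the integral bound on \({\tilde{\gamma }}^{R}\) (taken in the limit \(t\rightarrow \infty \)) gives

$$\begin{aligned} \frac{\sigma ^{2}}{2}\,\mu (E^{R}_{\sigma })\le \int _{E^{R}_{\sigma }}\Vert {\tilde{\gamma }}^{R}(s)\Vert ^{2}\,\mathrm{d}s\le \int _{0}^{\infty }\Vert {\tilde{\gamma }}^{R}(s)\Vert ^{2}\,\mathrm{d}s\le \frac{V[{\tilde{z}},\,{\tilde{\gamma }}](0)}{\rho }, \end{aligned}$$

and likewise for \(E^{I}_{\sigma }.\) The inclusion for \(E_{\sigma }\) holds because \(\Vert {\tilde{\gamma }}\Vert ^{2}=\Vert {\tilde{\gamma }}^{R}\Vert ^{2}+\Vert {\tilde{\gamma }}^{I}\Vert ^{2},\) so \(\Vert {\tilde{\gamma }}(t)\Vert >\sigma \) forces at least one of the two parts to exceed \(\sigma /\sqrt{2}.\) Combining the two estimates,

$$\begin{aligned} \mu (E_{\sigma })\le \mu (E^{R}_{\sigma })+\mu (E^{I}_{\sigma })\le \frac{4V[{\tilde{z}},\,{\tilde{\gamma }}](0)}{\rho \sigma ^{2}}. \end{aligned}$$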

If we let the imaginary parts of all the variables and parameters be zero, system (2.1) becomes the neural network model in [39], which can be described as follows

By a similar analysis, we obtain the following corollary, which recovers Theorem 2 of [39].

Corollary 3.5

Suppose the following assumptions are satisfied:

- \(A^{\prime }(1)\): For each \(i=1,\,2,\ldots ,n,\, f_{i}(x_{i})\) is continuous except at a finite number of points \(x^{k}_{i},\,k=1,\,2,\ldots ,s.\)

- \(A^{\prime }(2)\): For every \(k=1,\,2,\ldots ,s,\) the one-sided limits \(\lim \nolimits _{x\rightarrow x^{k+}_{i}}f_{i}(x)=f_{i}(x^{k+}_{i})\) and \(\lim \nolimits _{x\rightarrow x^{k-}_{i}}f_{i}(x)=f_{i}(x^{k-}_{i})\) exist.

- \(A^{\prime }(3)\): For each \(i=1,\,2,\ldots ,n,\) there exist nonnegative constants \(\alpha _{i}\) and \(\beta _{i}\) such that

$$\begin{aligned} \left\| f_{i}\left( x_{i}(t)\right) \right\| \leqslant \alpha _{i}\left\| x_{i}(t)\right\| +\beta _{i}. \end{aligned}$$

- \(A^{\prime }(4)\): For each \(i=1,\,2,\ldots ,n,\) there exists a constant \(L_{i}\) such that for any \(u\in R\) and \(v\in R\) with \(u\ne v,\) and for all \(\gamma _{i}\in K[f_{i}(u)],\, \zeta _{i}\in K[f_{i}(v)],\)

$$\begin{aligned} \frac{\gamma _{i}-\zeta _{i}}{u-v}\ge -L_{i}. \end{aligned}$$

- \(A^{\prime }(5)\): There exists \(P=\mathrm{diag}\{p_{1},\,p_{2},\ldots ,p_n\}\) with \(p_{i}>0\) such that \(PA+A^{\mathrm{T}}P<0\) and

$$\begin{aligned} \frac{L_{i}p_{i}\Vert A^{\mathrm{T}}A\Vert }{d_{m}\lambda _{m}}< \frac{1}{4}, \end{aligned}$$

where \(d_{m}=\min \nolimits _{1\le i\le n}\{d_{i}\},\, \lambda _{m}={-}\lambda _{M}\{PA+A^{\mathrm{T}}P\}.\)

Then there exists at least one equilibrium of system (3.21). Furthermore, the equilibrium point is globally asymptotically stable.
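Condition \(A^{\prime }(5)\) can be checked numerically for a given network. The sketch below does so for \(n=2\) in plain Python. The function name `check_a5_prime` and the values of \(A,\) \(p_{i},\) \(d_{i}\) and \(L_{i}\) in the usage example are hypothetical, chosen only for illustration and not taken from the paper, and \(\Vert \cdot \Vert \) is assumed to be the spectral norm.

```python
import math


def sym2_eigs(m):
    """Return (min, max) eigenvalues of a symmetric 2x2 matrix [[a, b], [b, c]]."""
    a, b, c = m[0][0], m[0][1], m[1][1]
    mid = (a + c) / 2.0
    rad = math.hypot((a - c) / 2.0, b)
    return mid - rad, mid + rad


def matmul2(x, y):
    """Product of two 2x2 matrices given as nested lists."""
    return [[sum(x[i][k] * y[k][j] for k in range(2)) for j in range(2)]
            for i in range(2)]


def transpose2(x):
    """Transpose of a 2x2 matrix."""
    return [[x[j][i] for j in range(2)] for i in range(2)]


def check_a5_prime(A, p, d, L):
    """Check A'(5) for n = 2 (assumption: ||.|| is the spectral norm).

    Returns (holds, worst_ratio); worst_ratio is None when PA + A^T P
    is not negative definite.
    """
    P = [[p[0], 0.0], [0.0, p[1]]]
    PA = matmul2(P, A)
    # P is diagonal, so (PA)^T = A^T P and S = PA + A^T P is symmetric.
    S = [[PA[i][j] + PA[j][i] for j in range(2)] for i in range(2)]
    lam_max = sym2_eigs(S)[1]
    if lam_max >= 0.0:
        return False, None
    lam_m = -lam_max
    # A^T A is symmetric positive semidefinite: its spectral norm is its
    # largest eigenvalue.
    norm_ata = sym2_eigs(matmul2(transpose2(A), A))[1]
    d_m = min(d)
    worst = max(L[i] * p[i] * norm_ata / (d_m * lam_m) for i in range(2))
    return worst < 0.25, worst


if __name__ == "__main__":
    # Hypothetical data, for illustration only.
    A = [[-0.2, 0.05], [0.05, -0.2]]
    print(check_a5_prime(A, p=[1.0, 1.0], d=[1.0, 1.0], L=[1.0, 1.0]))
```

For this illustrative matrix, \(\lambda _{m}=0.3\) and \(\Vert A^{\mathrm{T}}A\Vert =0.0625,\) so the ratio is about 0.208 and the condition holds. Scaling \(A\) by 5 raises the ratio above 1 and the check fails.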

In Theorems 3.3 and 3.4, the condition \(PA^{I}=(A^{I})^{\mathrm{T}}P\) is not satisfied by many complex-valued neural networks. Therefore, we propose the following theorem.

Theorem 3.6

Suppose A(1)–A(4) are satisfied and the following assumption holds: \({\bar{A}}(5)\) There exists \(P=\mathrm{diag}\{p_{1},\,p_{2},\ldots ,p_n\}\) with \(p_{i}>0\) such that \(Q=PA^{R}+(A^{R})^{\mathrm{T}}P+P+(A^{I})^\mathrm{T}PA^{I}<0\) and

where \(d_{m}=\min \nolimits _{1\le i\le n}\{d_{i}\},\, \lambda _{m}={-}\lambda _{M}\{Q\}\) and \(L_{i}=\max \{L_{i}^{R},\,L_{i}^{I}\}.\)

Then system (2.1) has a unique equilibrium point \(z^{*}.\) Moreover, \(z^{*}\) is globally asymptotically stable and the output solution \(\gamma \) converges to \(\gamma ^{*}\) in measure.

Proof

Since \(\theta >0\) and \(c>0,\) using Lemma 2.13, one obtains

and

Substituting the above inequalities into (3.13) and (3.20), we get

and

The rest of the proof is the same as that of Theorems 3.3 and 3.4. \(\square \)

Theorem 3.7

(Convergence in Finite Time) Suppose the assumptions of Theorem 3.3 and the assumption A(6) below are satisfied:

A(6) \(z_{i}^{*}=x_{i}^{*}+\mathbf{i }y_{i}^{*}\) is a discontinuous point of \(f_{i}\) and \(f_{i}^{R-}(x_{i}^{*})-\gamma ^{R^{*}}<0<f_{i}^{R+}(x_{i}^{*})-\gamma ^{R^{*}}\) and \(f_{i}^{I-}(y_{i}^{*})-\gamma ^{I^{*}}<0<f_{i}^{I+}(y_{i}^{*})-\gamma ^{I^{*}},\) where \(z^{*}=[z_{1}^{*},\,z_{2}^{*},\ldots ,z_{n}^{*}]^{\mathrm{T}}\) is an equilibrium of system (2.1).

Then, for each solution of system (2.1) with initial condition \(z(t_0)=z_{0},\) there exists \(t_{f}>0\) such that \(z(t)=z^{*}\) for \(t\ge t_{f},\) i.e., z(t) converges to the equilibrium \(z^{*}\) in finite time \(t_{f}.\)

Proof

Let us define \(\Delta _{i}^{R-}=\gamma ^{R^{*}}-f_{i}^{R-}(x_{i}^{*}),\, \Delta _{i}^{R+}=f_{i}^{R+}(x_{i}^{*})-\gamma ^{R^{*}},\, \Delta _{i}^{I-}=\gamma ^{I^{*}}-f_{i}^{I-}(y_{i}^{*}),\, \Delta _{i}^{I+}=f_{i}^{I+}(y_{i}^{*})-\gamma ^{I^{*}}\) and \(\Delta =\min \nolimits _{i=1,2,\ldots ,n}\{\min \{\Delta _{i}^{R-},\,\Delta _{i}^{R+},\,\Delta _{i}^{I-},\,\Delta _{i}^{I+}\}\}.\)

Since \(\lim \nolimits _{\rho \rightarrow 0^{-}} {\tilde{f}}^{R}_{i}(\rho )\le -\Delta \) and \(\lim \nolimits _{\rho \rightarrow 0^+} {\tilde{f}}^{R}_{i}(\rho )\ge \Delta ,\, \lim \nolimits _{\rho \rightarrow 0^{-}} {\tilde{f}}^{I}_{i}(\rho )\le -\Delta \) and \(\lim \nolimits _{\rho \rightarrow 0^+} {\tilde{f}}^{I}_{i}(\rho )\ge \Delta ,\) there exists a sufficiently small positive constant \(\delta \) such that

Note that the equilibrium point \(z^*\) of system (2.1) is globally asymptotically stable. Thus, for each solution z(t) of (2.1) with initial condition \(z(t_0)=z_{0},\) there exists \(t_{\delta }>0\) such that \(\Vert {\tilde{x}}(t)\Vert \le \delta \) and \(\Vert {\tilde{y}}(t)\Vert \le \delta \) for \(t>t_{\delta }.\)

Performing similar estimates as in the proof of Theorem 3.4, we get \({\dot{V}}[{\tilde{z}},\,{\tilde{\gamma }}]\le {-}\rho ({\tilde{\gamma }}^{I})^{\mathrm{T}}{\tilde{\gamma }}^{I}\le {-}\rho \Delta ^{2}\) and \({\dot{V}}[{\tilde{z}},\,{\tilde{\gamma }}]\le -\rho ({\tilde{\gamma }}^{R})^{\mathrm{T}}{\tilde{\gamma }}^{R}\le -\rho \Delta ^{2}.\) It is easy to get \(V[{\tilde{z}},\,{\tilde{\gamma }}](t)\le 0\) for \(t\ge \frac{V[{\tilde{z}},\,{\tilde{\gamma }}](0)}{\rho \Delta ^{2}}.\) It follows that \(x(t)=x^{*}\) and \(y(t)=y^{*}\) for \(t\ge \frac{V[{\tilde{z}},\,{\tilde{\gamma }}](0)}{\rho \Delta ^{2}}+t_{\delta }.\) This implies that there exists a positive constant \(t_{f}=\frac{V[{\tilde{z}},\,{\tilde{\gamma }}](0)}{\rho \Delta ^{2}}+t_{\delta }>0\) such that \(z(t)=z^{*}\) for \(t>t_{f}.\) \(\square \)
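The settling-time bound can be spelled out as follows. This is a sketch which assumes that \(V\) is nonincreasing on \([0,\,t_{\delta }]\) and that \({\dot{V}}\le -\rho \Delta ^{2}\) holds for almost all \(t\ge t_{\delta }\) at which \(z(t)\ne z^{*}{\text {:}}\)

$$\begin{aligned} V[{\tilde{z}},\,{\tilde{\gamma }}](t)\le V[{\tilde{z}},\,{\tilde{\gamma }}](t_{\delta })-\rho \Delta ^{2}(t-t_{\delta })\le V[{\tilde{z}},\,{\tilde{\gamma }}](0)-\rho \Delta ^{2}(t-t_{\delta }),\quad t\ge t_{\delta }. \end{aligned}$$

The right-hand side reaches zero at \(t=t_{\delta }+\frac{V[{\tilde{z}},\,{\tilde{\gamma }}](0)}{\rho \Delta ^{2}}.\) Since \(V\) is nonnegative and positive definite, it must vanish no later than this time, which forces \({\tilde{z}}(t)=0\) and is consistent with the expression for \(t_{f}.\)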

4 Numerical Examples

In this section, we will give two examples to demonstrate the above results.

Example 4.1

Consider a two-neuron complex-valued neural network described as follows:

where

We choose the real and imaginary parts of the discontinuous complex-valued activation functions to be the same as the functions in [33], that is,

where \(s\in C\) and \(i=1,\,2.\) It is obvious that the activation function \(f(z)=[f_{1}(z_{1}),\,f_{2}(z_{2})]^{\mathrm{T}}\) is discontinuous and unbounded on the complex domain \(C^{2},\) and that \(f_{i}(z_{i})\) satisfies A(4) with \(L^{R}_{i}=L_{i}^{I}=1.\)

Now take \(P=I,\) where I is the unit matrix. Then it is easy to get \(PA^{I}=(A^{I})^{\mathrm{T}}P,\, PA^{R}+(A^{R})^{\mathrm{T}}P<0\) and \(d_{m}=1.\) Through simple computation, we obtain \(\Vert A^{R}(A^{R})^{\mathrm{T}}+A^{I}(A^{I})^{\mathrm{T}}\Vert =0.0725,\, \lambda _{m}=1/5,\) and

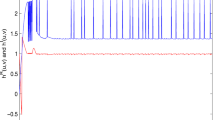

According to Theorem 3.4, the equilibrium \(z^{*}=(0.65+0.23\mathbf{i },\,0.14+0.83\mathbf{i })\) is unique and globally asymptotically stable. The output solution \(\gamma (t)\) of system (4.1) converges to \(\gamma ^{*}=(1.65-1.23\mathbf{i },\,1.14-1.83\mathbf{i })\) in measure. According to Theorem 3.7, the trajectories of z(t) converge to \(z^{*}\) in finite time. For the IVP of system (4.1) with the initial condition \(z_0=[2-\mathbf{i },\,1-2\mathbf{i }]^{\mathrm{T}},\) the time responses of the real and imaginary parts of \(z_{1},\,z_{2}\) and \(\gamma _{1}(t),\,\gamma _{2}(t)\) are shown in Figs. 3 and 4, respectively. The trajectories of \(z_{1},\,z_{2}\) and of the output solution \(\gamma _1(t),\,\gamma _2(t)\) in the complex domain are shown in Figs. 5 and 6.

Fig. 5 Phase plane behavior of the state variables \(z_{1}\) and \(z_{2}\) in complex domain C in Example 4.1

Fig. 6 Phase plane behavior of the state variables \(\gamma _{1}\) and \(\gamma _{2}\) in complex domain C in Example 4.1

Fig. 7 Time responses for the real part and imaginary part of the variables \(z_{1}\) and \(z_{2}\) in Example 4.2

Fig. 8 Phase plane behavior of the state variables \(z_{1}\) and \(z_{2}\) in complex domain C in Example 4.2

Remark 8

If the imaginary parts of all the variables and parameters are set to zero, system (4.1) becomes

where \(f_i(x)=(x+1)\mathrm{sign}(x)\) for \(i=1,\,2.\) From Fig. 3, we can see that the equilibrium point of system (4.2) differs from the real part of the equilibrium point of system (4.1). This is because the imaginary part of the system affects the equilibrium point. On the other hand, system (4.1) has four equilibrium points while system (4.2) has two, which indicates that complex-valued neural networks can offer greater capacity than real-valued neural networks of the same dimension, as reported in [10].

Example 4.2

Consider a two-neuron complex-valued neural network described as follows:

where

and \(f_{i}(s)=(\mathrm{Re}(s)+0.2)\mathrm{sign}(\mathrm{Re}(s))+\mathbf{i }(\mathrm{Im}(s)+0.1)\mathrm{sign}(\mathrm{Im}(s))\) for any \(s\in C\) and \(i=1,\,2.\) It is clear that the activation function \(f(z)=[f_{1}(z_{1}),\,f_{2}(z_{2})]^{\mathrm{T}}\) is discontinuous on the complex domain \(C^{2},\) and that \(f_{i}(z_{i})\) satisfies A(4) with \(L^{R}_{i}=L_{i}^{I}=1.\) According to Definition 2.5, \(z^*=[0,\,0]^{\mathrm{T}}\) is an equilibrium point of system (4.3).

Now take \(P=I.\) It is easy to get \(Q=\mathrm {diag}\{-11/16,\,-11/16\}\) and \((A^{R})^{\mathrm{T}}A^{R}+(A^{I})^{\mathrm{T}}A^{I}=\mathrm {diag}\{ 11/8,\,11/8\}.\) Therefore, we obtain \(\Vert (A^{R})^{\mathrm{T}}(A^{R})+(A^{I})^\mathrm{T}(A^{I})\Vert =11/8,\, \lambda _{m}=11/16\) and

According to Theorem 3.6, the equilibrium \(z^*=[0,\,0]^{\mathrm{T}}\) is unique and globally asymptotically stable. We choose the initial value \(z(0)=[2-\mathbf{i },\,1-2\mathbf{i }]^{\mathrm{T}}.\) The time responses of the real and imaginary parts of \(z_{1},\,z_{2}\) are shown in Fig. 7. Figure 8 shows the trajectories of \(z_{1},\,z_{2}\) in the complex domain.

Remark 9

It can be seen that \([0,\,0]^{\mathrm{T}}\) is a discontinuous point of the activation function f(z) and \(K[f(0)]=[-0.2,\,0.2]+\mathbf{i }[-0.1,\,0.1],\) which is shown in Fig. 2. This stability problem cannot be studied by the methods proposed in [27, 28]. In this paper, we can analyze the stability of the equilibria under the framework of Filippov differential inclusions.
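The set \(K[f(0)]\) and the constant \(L^{R}_{i}=1\) in A(4) can be sanity-checked numerically for the activation of Example 4.2. The following is a minimal sketch in plain Python. The helper names (`filippov_box_at_zero`, `min_difference_quotient`, `f_re`) and the sampling grid are assumptions made for illustration, and the grid test only samples single values of \(f,\) so it is a plausibility check rather than a proof of A(4).

```python
def sign(x: float) -> int:
    """Signum function with sign(0) = 0."""
    return (x > 0) - (x < 0)


def f(s: complex) -> complex:
    """Activation of Example 4.2, applied to the real and imaginary parts."""
    re = (s.real + 0.2) * sign(s.real)
    im = (s.imag + 0.1) * sign(s.imag)
    return complex(re, im)


def filippov_box_at_zero(eps: float = 1e-9):
    """Approximate the one-sided limits of f at 0 along each axis.

    The Filippov set K[f(0)] is the box spanned by these limits.
    """
    re_lo = f(complex(-eps, 0.0)).real
    re_hi = f(complex(eps, 0.0)).real
    im_lo = f(complex(0.0, -eps)).imag
    im_hi = f(complex(0.0, eps)).imag
    return (re_lo, re_hi), (im_lo, im_hi)


def f_re(x: float) -> float:
    """Real part of the activation as a function of a real argument."""
    return (x + 0.2) * sign(x)


def min_difference_quotient(g, points):
    """Smallest value of (g(u) - g(v)) / (u - v) over distinct sample points."""
    return min((g(u) - g(v)) / (u - v)
               for u in points for v in points if u != v)


if __name__ == "__main__":
    print(filippov_box_at_zero())          # close to (-0.2, 0.2), (-0.1, 0.1)
    grid = [k / 10 for k in range(-30, 31)]
    print(min_difference_quotient(f_re, grid))  # close to -1, i.e. L = 1
```

The one-sided limits reproduce the box \([-0.2,\,0.2]+\mathbf{i }[-0.1,\,0.1],\) and the smallest sampled difference quotient is attained on the negative half-line, where the real part of the activation has slope \(-1.\)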

5 Conclusion

In this paper, we introduced Filippov differential inclusions for complex differential equations with discontinuous right-hand sides. The dynamical behavior of complex-valued Hopfield neural networks, including global asymptotic stability, convergence in measure of the output solution, and convergence in finite time, was studied based on differential inclusion theory and generalized Lyapunov stability theory. Numerical simulations were given to show the effectiveness and correctness of our results. It would be interesting to investigate whether the results extend to more general discontinuous complex-valued neural network systems with time-varying and distributed delays.

References

1. Hirose A (2012) Complex-valued neural networks. Springer, Berlin

2. Nitta T (2003) Orthogonality of decision boundaries of complex-valued neural networks. Neural Comput 16:73–79

3. Tanaka G, Aihara K (2009) Complex-valued multistate associative memory with nonlinear multilevel functions for gray-level image reconstruction. IEEE Trans Neural Netw 20:1463–1473

4. Song R, Xiao W (2014) Adaptive dynamic programming for a class of complex-valued nonlinear systems. IEEE Trans Neural Netw Learn Syst 20(9):1733–1739

5. Chakravarthy V (2008) Complex-valued neural networks: utilizing high-dimensional parameters. Hershey, New York

6. Rudin W (1987) Real and complex analysis. Academic, New York

7. Ozdemir N, Iskender B, Ozgur N (2011) Complex-valued neural network with Mobius activation function. Commun Nonlinear Sci Numer Simul 16:4698–4703

8. Bohner M, Rao V, Sanyal S (2011) Global stability of complex-valued neural networks on time scales. Differ Equ Dyn Syst 19(1–2):3–11

9. Yasuaki K, Mitsuo Y (2003) On activation functions for complex-valued neural networks existence of energy functions. Lect Notes Comput Sci 2714:985–992

10. Liu X, Fang K, Liu B (2009) A synthesis method based on stability analysis for complex-valued Hopfield neural networks. In: Proceedings of the 7th Asian control conference, p 1245–1250

11. Fang T, Sun J (2013) Stability analysis of complex-valued nonlinear delay differential systems. Syst Control Lett 62:910–914

12. Gong W, Liang J, Cao J (2015) Matrix measure method for global exponential stability of complex-valued recurrent neural networks with time-varying delays. Neural Netw 70:81–89

13. Wei J, Zhang C (2004) Stability analysis in a first-order complex differential equations with delay. Nonlinear Anal 59:657–671

14. Hu J, Wang J (2012) Global stability of complex-valued recurrent neural networks with time-delays. IEEE Trans Neural Netw Learn Syst 23(6):853–865

15. Zhang Z, Lin C, Chen B (2014) Global stability criterion for delayed complex-valued recurrent neural networks. IEEE Trans Neural Netw Learn Syst 25(9):1704–1708

16. Fang T, Sun J (2014) Further investigation on the stability of complex-valued recurrent neural networks with time delays. IEEE Trans Neural Netw Learn Syst 25(9):1709–1713

17. Chen X, Song Q (2013) Global stability of complex-valued neural networks with both leakage time delay and discrete time delay on time scales. Neurocomputing 121:254–264

18. Zou B, Song Q (2013) Boundedness and complete stability of complex-valued neural networks with time delay. IEEE Trans Neural Netw Learn Syst 24(8):1227–1238

19. Rakkiyappan R, Cao J, Velmurugan G (2014) Existence and uniform stability analysis of fractional-order complex-valued neural networks with time delays. IEEE Trans Neural Netw Learn Syst 26:84–97

20. Zhang Z, Yu S (2015) Global asymptotic stability for a class of complex-valued Cohen–Grossberg neural networks with time delay. Neurocomputing 171:1158–1166

21. Velmurugan G, Cao J (2015) Further analysis of global \(\mu \)-stability of complex-valued neural networks with unbounded time-varying delays. Neural Netw 67:14–27

22. Hu J, Wang J (2015) Global exponential periodicity and stability of discrete-time complex-valued recurrent neural networks with time-delays. Neural Netw 66:119–130

23. Pan J, Liu X (2015) Global exponential stability for complex-valued recurrent neural networks with asynchronous time delays. Neurocomputing 164:293–299

24. Liu X, Chen T (2016) Exponential stability of a class of complex-valued neural networks with time-varying delays. IEEE Trans Neural Netw Learn Syst 27:593–606

25. Wang H, Huang T, Wang L (2015) Exponential stability of complex-valued memristive recurrent neural networks. IEEE Trans Neural Netw Learn Syst. doi:10.1109/TNNLS.2015.2513001

26. Hopfield J (1984) Neurons with graded response have collective computational properties like those of two-state neurons. Proc Natl Acad Sci USA 81:3088–3092

27. Huang Y, Zhang H (2014) Multistability of complex-valued recurrent neural networks with real–imaginary-type activation functions. Appl Math Comput 229:187–200

28. Rakkiyappan R, Cao J (2014) Multiple \(\mu \)-stability analysis of complex-valued neural networks with unbounded time-varying delays. Neurocomputing 149:594–607

29. Rakkiyappan R, Sivaranjani K, Velmurugan G (2014) Passivity and passification of memristor based complex-valued recurrent neural networks with interval time varying delays. Neurocomputing 144:391–407

30. Li X, Rakkiyappan R, Velmurugan G (2014) Dissipativity analysis of memristor based complex-valued neural networks with time-varying delays. Inf Sci 294:645–665

31. Forti M, Nistri P (2003) Global convergence of neural networks with discontinuous neuron activations. IEEE Trans Circuits Syst I 50(11):1421–1435

32. Guo Z, Huang L (2009) Generalized Lyapunov method for discontinuous systems. Nonlinear Anal 71(7–8):3083–3092

33. Forti M, Papini D (2005) Global exponential stability and global convergence in finite time of delayed neural network with infinite gain. IEEE Trans Neural Netw 16:1449–1463

34. Guo Z, Huang L (2009) LMI conditions for global robust stability of delayed neural networks with discontinuous neuron activations. Appl Math Comput 215:889–900

35. Guo Z, Huang L (2009) Global output convergence of a class of recurrent delayed neural networks with discontinuous neuron activations. Neural Process Lett 30:213–227

36. Wang J, Huang L, Guo Z (2009) Dynamical behavior of delayed Hopfield neural networks with discontinuous activations. Appl Math Model 33:1793–1802

37. Filippov A (1988) Differential equations with discontinuous right-hand side. Kluwer Academic, Boston

38. Huang L, Guo Z (2009) Global convergence of periodic solution of neural networks with discontinuous activation functions. Chaos Solitons Fractals 42:2351–2356

39. Wang J, Huang L, Guo Z (2009) Global asymptotic stability of neural networks with discontinuous activations. Neural Netw 22:931–937

40. Dugundji J, Granas A (2013) Fixed point theory I. Springer, Berlin

41. Miller R, Michel A (1982) Ordinary differential equations. Academic, Orlando

Acknowledgements

This work was supported by National Natural Science Foundation of China (1573003, 11601143), Natural Science Foundation of Hunan Province of China (13JJ4111, 14JJ3141), a key Project supported by Scientific Research Fund of Hunan Provincial Education Department (15k026, 15A038) and Aid Program for Science and Technology Innovative Research Team in Higher Educational Institution of Hunan Province.

Cite this article

Wang, Z., Guo, Z., Huang, L. et al. Dynamical Behavior of Complex-Valued Hopfield Neural Networks with Discontinuous Activation Functions. Neural Process Lett 45, 1039–1061 (2017). https://doi.org/10.1007/s11063-016-9563-5

DOI: https://doi.org/10.1007/s11063-016-9563-5