Abstract

Because of successful implementations and high intensity, metaheuristic research has been extensively reported in literature, which covers algorithms, applications, comparisons, and analysis. Though, little has been evidenced on insightful analysis of metaheuristic performance issues, and it is still a “black box” that why certain metaheuristics perform better on specific optimization problems and not as good on others. The performance related analyses performed on algorithms are mostly quantitative via performance validation metrics like mean error, standard deviation, and co-relations have been used. Moreover, the performance tests are often performed on specific benchmark functions—few studies are those which involve real data from scientific or engineering optimization problems. In order to draw a comprehensive picture of metaheuristic research, this paper performs a survey of metaheuristic research in literature which consists of 1222 publications from year 1983 to 2016 (33 years). Based on the collected evidence, this paper addresses four dimensions of metaheuristic research: introduction of new algorithms, modifications and hybrids, comparisons and analysis, and research gaps and future directions. The objective is to highlight potential open questions and critical issues raised in literature. The work provides guidance for future research to be conducted more meaningfully that can serve for the good of this area of research.

Similar content being viewed by others

Explore related subjects

Discover the latest articles, news and stories from top researchers in related subjects.Avoid common mistakes on your manuscript.

1 Introduction

Optimization is everywhere, be it engineering design or industrial design, business planning or holiday planning, etc. We use optimization techniques to solve problems intelligently by choosing the best from larger number of available options. Metaheuristics have earned more popularity over exact methods in solving optimization problems because of simplicity and robustness of results produced while implemented in widely varied fields including engineering, business, transportation, and even social sciences. There is established extensive research by metaheuristic community, which involves introduction of new methods, applications, and performance analysis. However, Srensen et al. (2017) believes that the field of metaheuristics has still to reach maturity as compared to physics, chemistry, or mathematics. There is immense room of research to appear on various issues faced by metaheuristic computing. This paper aims at determining the volume of research conducted in this particular discipline, as well as, highlight some of the open questions and critical concerns that need further attention by researchers. Additionally, this survey work presents complete overview of body of knowledge in order to present current status of this particular field and suggest potential future directions. In this context, we present a systematic study of research work published between the years 1983 and 2016. In order to lay the foundation of the idea of this paper, we took guidance from recent survey study conducted by Uddin et al. (2016). It is worth mentioning here that the terms optimization algorithm, method, algorithm, and technique refer to metaheuristic algorithm.

The remainder of this paper is structured as follows. First, in the following section, the systematic mapping process for conducting this survey is explained. Section 3 answers the research questions by analyzing the synthesizing results of the collected data (publications). In Sect. 4 some of the related work is highlighted. The significant gaps in metaheuristic research identified in this survey are presented in Sect. 5. Finally, Sect. 6 duly concludes the paper and presents a summary of the present work and potential directions for the future research.

2 Systematic mapping process

Generally, systematic review of existing literature is performed based on the guidelines provided by Petersen et al. (2008) and Keele (2007). The guidelines adopted in this paper are from Keele (2007) whereas the study mapping process is taken from Petersen et al. (2008) after modification. The modified process is depicted in Fig. 1 and explained later in this paper. For greater detail on the original process, the relevant study mentioned previously can be referred.

Research questions guide and create strong bond of ideas pivoting the main objective of the study. Therefore, this research revolves around a vivid central point by clearly defining the research questions. It helps in screening and selecting the desired papers. Additionally, keywords are identified from abstracts of some of the primary literature already in hand. These keywords are used to search publications including proceedings, papers, articles, and book chapters.

2.1 Preliminary study

This is where the systematic literature review commences. For better idea about the scope of search, it is necessary to perform some preliminary study earlier. This draws the reason behind authors motivation for conducting a comprehensive review, systematically. The primary study can be performed by random search over the internet via common search engines (for instance Google Scholar) using one or two keywords strictly relevant to the area of study. After finding few papers, these papers can be used to select more relevant keywords from abstracts, and can also be used to determine initial search venues; later on, few more significant search sources may be added as the search furthers. That said, we performed our initial search with the keyword “Metaheuristic” on Google Scholar. From this early stage, we finalized keywords and search venues mentioned in Table 1. For this, we listed down all the keywords from the papers in hand, selected most commonly used keywords for our further search. Table 1 also serves as the guide for the reader to know the useful keywords while searching the related literature.

After initial search and study of some of the papers, the idea about designing research questions was to be more logical. Hence, the following research questions were well designed at this stage.

2.2 Research questions

The main question which derives the motivation behind this study is: How can we draw a comprehensive picture of metaheuristic research? For answering this, we formulated four different research questions (RQs) to be injected into the collected literature. These questions are:

-

RQ-1

What are the basic concepts related to metaheuristics?

Rationale The answer to this question establishes foundation and provides preliminary knowledge to comprehend the research.

-

RQ-2

What is the intensity of publications in the field of metaheuristics?

Rationale This question investigates the potential of metaheuristic algorithms in solving optimization problems. Here, conference proceedings, journals, papers, book chapters and articles are taken into account.

-

RQ-3

What are the most frequently used metaphors or design patterns used to develop metaheuristic algorithms?

Rationale This question investigates the metaphors or frameworks adopted from different disciplines to design metaheuristic methods.

-

RQ-4

What analytical techniques have been used for validating metaheuristic performances?

Rationale This question lists approaches to performance validation of metaheuristic algorithms. These techniques are critically analyzed to determine the authentication of the approaches used in comparisons and performance analysis.

-

RQ-5

What are the potential future directions in the field of metaheuristics?

Rationale This question highlights some of the important topics or sub-areas in the field of metaheuristics as future directions. Here, some of the critical issues and open questions that call for significant amount of future research are presented.

2.3 Conduct search

There are two ways to conduct search for systematic literature review: automatic (Petersen et al. 2008) and manual (Keele 2007). This study prefers the later strategy, as the prior approach poses few drawbacks since currently available automatic search engines are not feasible for this kind of study (Brereton et al. 2007). The manual search is generally used for searching primary studies from the most relevant sources. Table 1 shows the potential venues that have been used as publication sources. These sources have vast variety of publications including conference proceeding, journal papers, articles and book chapters.

The search keywords mentioned in Table 1 were keyed in on the search panel of the publication websites, with default search settings, but list order was set to descending order of publication date (latest first). Averagely, 25 items were listed in every search page, and we browsed through only first three pages, which make 75 search items for each keyword on each venue. We went through all these 75 items and selected the most relevant publications for our review database. Following are the inclusion and exclusion criteria defined:

-

Inclusion Most relevant publications including journal papers, conference proceedings, articles, and book chapters focus on introduction of new metaheuristic algorithm, proposing modification or hybrid of metaheuristic methods, reviews, surveys, comparisons and analysis, or applications of metaheuristics in solving any real-world or benchmark optimization problem.

-

Exclusion The short papers with less than 5 pages, and publications not related to metaheuristics.

2.4 Screening of publications

It is a two iterations process. Initially, total 1222 publications were identified through search on selected venues. After this, the literature was reviewed and assessed by the project team in order to judge the relevance. Here, we reviewed abstracts and conclusions, also put a glance on the main body if the research deemed potential and close to our research theme. This way, the inclusion and exclusion was performed to create our search database.

2.5 Data extraction

A spreadsheet was designed to record different characteristics of a publication to address the research questions and objectives of the study. The information covered in this sheet included paper number, title, authors, publication year, venue, publication type (conference, journal, or book chapter), research type (new method, modification, hybrid, comparisons and analysis, or survey).

3 Analysis and synthesis of data

This section answers the research questions designed in this study. In order to better understand the data collected, the study is divided into following research questions.

3.1 RQ1: what are the basic concepts related to metaheuristics?

This section explains the basic concepts of metaheuristic computing, terms used in this area, and generalized mathematical representation of a metaheuristic algorithm. This section adequately builds preliminary knowledge for readers to comprehend this particular area of research, hence it starts with Optimization.

3.1.1 Optimization

Optimization is performed to maximize outcome with limited resources by selecting the best solution, from a set of available solutions (Greenberg 2004). Yang (2012) elaborates optimization with an example of searching diamond in a large forest (search domain). Looking into the search area inch-by-inch will consume enormous amount of energy and time. In such scenario, any optimization technique may be applied to focus only on the potential spots where the possibility of finding diamond is higher. This way, the problem will be solved intelligently rather than laboriously.

Every optimization problem comes with few decision variables (e.g., which search mode to use for searching diamond and in which order to visit the forest), certain objective function (e.g., searching maximum diamond), and some constraints (e.g., searching diamond with less time and effort) (Srensen et al. 2012). Optimization techniques are employed to obtain the values of decision variables that optimize an objective function subject to certain constraints.

Definition 1

Formally, optimization problem, P, is defined as:

where, S is the search space defined over a finite set of decision variables \(X_{i},i=1,\ldots ,n\); \({\varOmega }\) is a set of constraints among the decision variables; f is an objective function that needs to be optimized.

To solve P, an optimal solution \(s^*\) of P is to be found with minimum objective function value \(f(s^*) \le f(s),\forall s \in S\), or \(f(s^*) \ge f(s),\forall s \in S \), in case the objective function is to be maximized.

Optimization problems vary by structure: single or multi-objective, constrained or unconstrained, or combinatorial optimization problems.

Single versus multi-objective optimization Optimization problems that rely on one specific objective which is to be maximized or minimized are supposed to be single-objective optimization problems. Such problems may also have several objectives combined together to form single main objective. The purpose of single-objective optimization technique is to find a single solution (best solution) that best lumps a number of objectives into one. On the other hand, many complex industry and scientific optimization problems are multi-objective in nature. These problems involve multiple contradictory criteria or objectives that must be satisfied simultaneously. Multi-objective optimization problem contains more than one contradicting objectives that need to be satisfied based on certain constraints (Tan et al. 2013).

Constrained versus un-constrained optimization Many real-life optimization problems have certain constraints/rules that cannot be violated while finding an optimized solution. Therefore, the problem in this category involves objective function f(x) that is to be minimized/maximized based on certain constraints. Whereas, unconstrained optimization problems do not involve any restrictions or limitations, and they depend on real variables. Such problems have objective function that minimizes or maximizes the value of f (Bae et al. 2012).

Combinatorial optimization In combinatorial optimization problems, solutions are encoded in discrete variables (Blum and Roli 2003). Unlike multi-objective optimization, where the optimal values of variables are to be found in order to maximize/minimize the objective function, many real-world problems need to select or arrange (combination or permutation) a set of objects in a way that objective function is maximized/minimized. Combinatorial optimization is optimization derived from discrete mathematics, where we try different combinations of unordered collection of distinct elements, of size k, taken from a set of S elements (Yu and Gen 2010).

Since the background of optimization has been established, the next section introduces basic concepts and mathematical representation of a metaheuristic algorithm, moreover, the subsections discuss different categories of the algorithms.

3.1.2 Metaheuristic algorithm

Metaheuristics are used to solve optimization problems by the process of searching optimal solutions to a particular problem of interest. The process of searching can be carried out using multiple agents which essentially form a system of evolving solutions using a set of rules or mathematical equations during multiple iterations. These iterations carry until the solution found meets some predefined criterion. This final solution (near optimal solution) is said to be an optimal solution and a system is deemed to have reached a converged state (Yang and Deb 2014).

As opposite to exact methods that find optimal solution at the expense of high computational time, heuristic methods find near optimal solution rather quickly. But, these methods are mostly problem specific. As the term meta in metaheuristics suggests, metaheuristics are one level higher than heuristic approaches. Metaheuristic techniques have seen a great amount of success as they are likely to provide solutions at an acceptable computational cost. Very good solutions can be obtained by hybridizing good heuristics with classical metaheuristics for many real-world problems.

In order to build theoretical foundations of metaheuristic, it is important to analyze the fundamental terms in metaheuristic computing which implements adaptive intelligent behavior. The definitions given by Wang (2010) serve the purpose:

Definition 2

A heuristic is a reasoning methodology in problem solving that enables a solution to a problem is driven by trial-and-error.

Definition 3

A metaheuristic is a generic or higher-level heuristic that is more general in problem solving.

Definition 4

Metaheuristic computing is an adaptive computing that applies general heuristic rules in solving a category of computational problems.

On the basis of above definitions, the generalized mathematical formulation of a metaheuristic can be defined as below (Wang 2010):

Definition 5

A metaheuristic (MH) can be described as:

where, O is a set of metaheuristic methodologies (i.e. metaheuristic, adaptive, automotive, trial-and-error, cognitive, etc.); A is a set of generic algorithms (e.g., genetic algorithm, particle swarm optimization, evolutionary algorithm, ant colony optimization, etc.); \(R^c=O\times A\), is a set of internal relations; \(R{^i} \subseteq A^{\prime } \times A, A^{\prime } \wedge A \sqsubseteq \), is a set of input relations; where \(C^{\prime }\) is a set of external concepts and c is concept environment. For convenience, \(R^i = A^{\prime } \times A\) may be simply denoted as \(R^i = C^{\prime } \times c\). \(R^o\subseteq c \times C^{\prime }\) is a set of output relations.

Other than the concepts mentioned above, it is also mandatory to comprehend additional key factors; such as neighborhood search, diversification or exploration, intensification or exploitation, local minima versus global minima, escaping local minima, local search versus global search, evolutionary computing, and swarm intelligence, etc. Beside these terms, there include some fundamental strategies used in metaheuristics; such as, keeping balance between exploration and exploitation, searching for most promising or potential neighbors, avoiding inappropriate or inefficient neighbors, limiting search from entering into unpromising neighbors, etc.

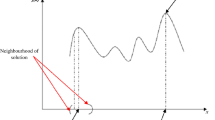

Exploration versus exploitation Exploration and exploitation (also referred to as diversification and intensification, divergence and convergence, respectively) are two common and fundamental features of any optimization method. However, it is highly dependent on the search philosophy adopted by each metaheuristic. These two features are considered as cornerstones of solving an optimization problem successfully (Črepinšek 2013). Exploration is the ability to expand search in wide spread domain to explore unvisited areas, whilst exploitation, via accumulated search experience, allows to focus promising regions (high quality solutions) to utilize and converge optimally (Khajehzadeh et al. 2011). For mastering the two features, an efficient algorithm spreads new solutions, via randomization techniques and random walks, far from current area of search so that explorative move should reach all the regions within search space accessed at least once. On the other hand, using intensive local search information about the landscape and past search experience, the algorithm tries to converge quickly without wasting too many moves (Yang et al. 2015).

Local versus global search metaheuristics Local search optimization algorithms are generally more exploitative methods [e.g., tabu search (TS) (Glover 1989), greedy randomized adaptive search procedure (GRASP) (Feo and Resende 1989), and iterated local search (ILS) (Sttzle 1998), etc.], while global search methods are more explorative in nature [e.g., ant colony optimization (ACO) (Dorigo et al. 2006), genetic algorithms (GAs) (Holland 1992), particle swarm optimization (PSO) Eberhart and Kennedy (1995), etc.]. There are also many hybrid methods which combine local search capability of local search algorithms as an improvement mechanism in global search or population based metaheuristics (Li and Tian 2015; Pham and Huynh 2015; Sahli et al. 2014).

Single versus population based metaheuristics The number of solutions to be carried in search process determines whether the metaheuristic is a single-solution (trajectory) or population-based algorithm. In order to select a metaheuristic for a specific optimization problem, it is first decided to whether use a trajectory or population based algorithm. Usually, basic single-solution based algorithms are more exploitation oriented, whereas basic population-based metaheuristics are more explorative in nature (Boussad et al. 2013). Trajectory methods use one solution at a time and start with a single initial solution. During the course of iterations, these algorithms create a trajectory in the search space. Here, it is noteworthy that the solution may or may not belong to neighborhood of the current solution. For a population-based algorithm, a population of multiple solutions is generated initially. Then, in every iteration, a set of solutions are manipulated in order to find solutions toward better search areas. These algorithms either do recombinations of multiple solutions or modify each via the strategy adopted to enforce exploration and exploitation of the search area (Blum and Roli 2003).

Now that the above discussion has already established preliminary knowledge about metaheuristics, the upcoming sections explore the depth of literature through following research questions.

3.2 RQ2: what is the intensity of publications in the field of metaheuristics?

The primary data extracted from the collected publications suggest that an extensive research has been conducted in the field of metaheuristics. Although, the related research has started as early as after WW-II when operational research was in its infancy, the introduction of simulated annealing (SA) (Kirkpatrick et al. 1983) in 1983 formally kick-started research specifically in the field of metaheuristics.

A total of 1222 publications were reported in this study, which were published during the period of 1983–2016; this does not necessarily imply that all publications in the literature have been found, there remains much more to be explored. The intensity of publications year-wise is illustrated in Fig. 2. It is evident from the graph that metaheuristic research attracted researchers more effectively after 2005, and that until 2010 it had steady growth in the number of publications. After a jerk in 2011, there was a significant surge in the metaheuristic research. This, along with experiments, applications, and analysis of metaheuristic methods, the trend of inventing “nove” metaheuristics shot high till 2015. Meanwhile this period, some of the authors highlighted the issue of metaphor-based methods, and according to them such researches hardly presented any scientific contribution to the field of metaheuristic research (Srensen 2015). As a result of critical publications, until mid of 2016 the factory of novel metaheuristics reduced its production and real analytical and critical research raised its pillars. While collecting primary data for this study, it was witnessed that currently most of the authors have endorsed the issues highlighted in some of the recent critical studies (Yang 2012). The trend is now moving towards actual scientific contribution that involves mathematical analysis of metaheuristic performance instead of just measuring squared errors for performance comparisons.

It is obvious from Table 2 and Fig. 3 that the most focused avenue for publishing metaheuristic literature was Elsevier followed by Springer with highest number of reviews, surveys, chapters, and articles among other avenues. Whereas, Hindawi published the most number of modified metaheuristics. Besides being top contributor to metaheuristic research, Elsevier was also top priority by researchers for introducing their novel metaheuristic techniques.

The primary data collected in this study revealed around 140 metaheuristics (listed in “Appendix A”) applied in diversified range of fields broadly in the area of science and technology, economics, and daily life. Fig. 4 infers that mostly metaheuristics have been applied and tested on numerical problems which include continuous and discrete, constrained and unconstrained, single and multi-objective optimization problems, etc. Data mining is another field of interest mostly chosen by metaheuristic researchers, which includes optimization tasks involved in classification, prediction, clustering, and system modeling, etc. Among top practical applications, metaheursitics have been utilized to find optimum solutions for power generation and distribution, and electronics board designing in the field of electrical and electronics. Many industrial applications require scheduling jobs to be assigned on sequential or parallel processes in order to optimized cost. Another promising area of metaheuristic applications is combinatorial optimization problems ranging from facility location problems to set-covering, to more difficult multi-agent task allocation in extreme teams, etc. Other applications among top ten areas include communications (networking, telecommunication, antennas and radar design, etc.), transportation (traveling salesman problem, routing, shortest path, etc.), engineering (mechanical designs, aircraft and ship components design, etc.), civil engineering (structural design, buildings and bridges, construction, etc.), and information and communications technology—ICT (cloud and grid computing, swarm robotics, security, software development, etc.). The applications of metaheuristics need to be explored in the areas of mining, traffic control, manufacturing and production, etc.

The metaheuristic-wise applications in different areas are presented in Table 3. The acronyms of the abbreviations in this table can be found in “Appendix A”. Overall, Fig. 5 provides evidence of metaheuristics that are popular according to the number of publications; here only those which had 10 or more publications in our database are shown. Among other metaheuristic methods, PSO was the most attractive technique. There seems significant distinction between PSO and the rest of the methods. PSO has gained immense popularity amongst researchers due to simplicity and effectiveness in plenty of scientific and industrial applications. After this method, artificial bee colony (ABC) (Karaboga 2005), ant colony optimization (ACO) (Dorigo et al. 2006), and GA have been extensively applied by majority of researchers. On the other hand, in classic metaheuristics, TS, differential evolution (DE) (Storn and Price 1997), SA, variable neighborhood search (VNS) (Mladenovi and Hansen 1997), and ILS are still being widely published due to their robustness in variety of optimization problems. Among modern methods, harmony search (HS) (Geem et al. 2001), cuckoo search (CS) (Yang and Deb 2009), bat algorithm (BA) (Yang 2010), firefly algorithm (FA) (Yang 2008), and fireworks algorithm (FWA) (Tan and Zhu 2010) have shown efficiency of producing quality solutions. Some of the latest metaheuristics, such as teaching learning based optimization (TLBO) (Rao et al. 2011), biogeography-based optimization (BBO) (Simon 2008), and bacterial foraging optimization algorithm (BFOA) (Zhao and Wang 2016) have also generated convincing results.

After being aware of metaheuristic techniques, the following question dives deeper for determining the inspirations fascinated researchers to invent the new methods.

3.3 RQ3: what are the most frequently used metaphors or design patterns to develop new metaheuristic algorithms?

According to the primary data collected in this study, the metaphors of the metaheuristics available today are taken from nine disciplines considerably are Biology, Physics, Computation, Psychology, and Chemistry (see Fig. 6 for complete list of disciplines). Most of the metaheuristics are bio-based, and other than this, there also exist significant number of methods adopted from Physics, for example, law of motion or gravitation (Rashedi et al. 2009), electromagnetics (Abedinpourshotorban et al. 2016), etc. The researchers have also used metaphors from our daily life; such as, interior design (Gandomi 2014), sports (Osaba et al. 2014), music (Geem et al. 2001), and vocational skills (Qin 2009), etc. Interestingly, some of the metaphors have also been adopted from the disciplines that deal with how humans rule territories and run economic systems, including Economics (Atashpaz-Gargari and Lucas 2007) and Military (Sun et al. 2016). Some of the methods were so confusing in terms of putting them in one group, so we just grouped them into Computation category.

Generally, metaheuristic methods have been designed mostly mimicking the living and survival systems of insects, animals, and birds. Fig. 7 shows top ten leading metaphors mostly preferred by researchers. Among these, insects is the most favorite metaphor for mimicking the social behavior in order to design efficient optimization methods, and among insects, bees is the top trend followed by ants. Other than these species, the biological behaviors of fireflies, spiders, and bacteria have also been explored in the hunt of producing powerful metaheuristics. The second most popular trend is natural evolution the Darwin theory of survival. Some of the animals, such as bats, fish, cat, and monkeys have also attracted metaheuristic designers. Other than these mentioned previously, birds, human, plant, water, ecosystem, electromagnetic force, and gravitation have been interestingly expressed metaphorically in the designs of metaheuristic methods.

When designed a new metaheuristic algorithm, the inventor is supposed to prove its validity through employing some performance validation criteria. These criteria are then compared with counterpart methods to prove effectiveness of an algorithm. The commonly used performance validation criteria are investigated in the surveyed literature in the following question.

3.4 RQ4: what analytical techniques have been used for validating performance of metaheuristics?

Through this question we have tried to determine about the validating techniques used to investigate performance of metaheuristics. In order to analyze the performance of metaheuristic algorithms, researchers have most commonly used benchmark test functions, such as those used in Civicioglu (2013), Doan and lmez (2015), and in Salimi (2015), etc. To name a few, among the wide variety of test functions are Sphere, Rastrigin, Ackley, etc. A detail about these functions is available in Karaboga and Akay (2009). Other than the test functions, some benchmark engineering design problems have also been solved by using metaheuristic methods to measure and compare performances. Some example problems are pressure vessel design problem, compression spring design problem, welded beam design problem (Li et al. 2016), truss structure problems of multi-story braced frames (Kaveh and Farhoudi 2016), and gear train design problem (Mirjalili 2015). Rest of the problems which have been used to solve via metaheuristics include classification and clustering problems (Eberhart and Kennedy 1995; Marinakis et al. 2009), multiple knapsack problems (Arasomwan and Adewumi 2014), traveling salesman problem (TSP) and vehicle routing problems (Osaba et al. 2013; Iordache 2010), (Meignan et al. 2010), scheduling problems (Bandieramonte et al. 2010; Zheng 2015; Li et al. 2015), and prediction problems (Pan 2012; Gonçalves et al. 2008). Authors in Civicioglu (2013) have used maximum number of test functions (75) while introducing backtracking search optimization algorithm (BSOA). At least, 1 test function is used in Wei (2013), Bouhmala (2015), and Yang and Wang (2007) when measuring performances of metaheuristic algorithms raindrop algorithm (RA), variable depth search algorithm (VDSA), and water flow-like algorithm (WFA), respectively.

In order to analyze the performance of metaheuristics, some validation criteria have been considered. These include best, worst, mean, and standard deviation of objective function values obtained over specific number of runs. Other than these methods, some of the statistical analysis techniques have also been utilized such as, p value obtained by Wilcoxon Signed-Rank test, z test, and NOVA test, etc. (e.g., Uymaz et al. 2015; Caraveo et al. 2015; and Salimi 2015, etc.).

While comparing performances of metaheuristic methods, the inventors have compared their new methods with existing techniques. Mostly, researchers have compared their newly introduced metaheuristics with PSO, GA, and ABC or their variants (James and Li 2015; Abedinia et al. 2014; Chen et al. 2015). Other than these popular methods, ACO, DE, and evolutionary strategies (ES) and their variants have also been used for comparative analysis of results, see for example (Salcedo-Sanz et al. 2014; Kaveh and Farhoudi 2016), and (Li et al. 2015).

4 Related work

Due to enormous success of metaheuristic algorithms in effectively solving wide variety of optimization problems, extensive literature has been published until today. This section specifically focuses on highlighting research performed on metaheuristics techniques by considering only those survey studies that are conducted purely on evolution of metaheuristic methods. The survey papers have been carefully selected and studied so that a summary of current picture of metaheuristic research shall be drawn.

In Mahdavi et al. (2015), Mahdavi and Rahnamayan reinforced the importance of metaheuristic research as there have been specialized conferences, journals, websites, and research groups established. Creating a hierarchical classification, this study identifies two main approaches in solving optimization problems more efficiently: cooperative coevolution (CC) algorithms and non-decomposition methods. According to the authors, the earlier approach divides optimization problems into subcomponents and solves these components independently, and later on merging together to form an aggregated solution. Non-decomposition methods, on the other hand, solve any optimization problem as whole. Highlighting some of the crucial challenges to metaheuristics, the study contends that metaheuristic methods suffer from loss of efficiency and add up computational cost when the dimensions of the problem in hand increase significantly. The curse of dimensionality increases with problem size and landscape complexity making the exploration of potential solutions sterner. Some of the gaps and future directions have also been presented in this study, which are discussed in the subsequent sections.

Revisiting history of evolutionary and swarm intelligence algorithms, Zelinka in Zelinka (2015) officiated the beginning of evolutionary algorithms back in 1960s and 1970s when genetic algorithms, evolutionary programming and evolutionary strategy were introduced (Holland 1992; Fogel and Corne 2002; Schwefel 1977; Rechenberg 1994), respectively. Subsequently, the field of metaheuristics properly kicked off by the introduction of SS&PR (Glover 1997), PSO (Eberhart and Kennedy 1995), DE (Storn and Price 1997), and ACO (Dorigo 1992). The main objective of the study is to answer the question whether the dynamics of swarm and evolutionary algorithms can be visualized in order to improve their performance. The authors argue that since complex network structure is hidden behind these metaheuristics, it is possible to control their behavior through mapping a complex network structure of solutions (individuals or search agents). For detailed explanation and illustration of the argument, this paper has also depicted the concept in graphs. The study also claims that swarm and evolutionary algorithms can solve wide class of complex optimization problems if equipped with chaotic dynamics.

The two contradictory but important building blocks of any metaheuristic algorithm: exploration and exploitation have been surveyed in Črepinšek (2013) using nearly one hundred papers. The core motivation behind this study is the two appealing concerns: (a) components controlling exploration and exploitation of a metaheuristic; (b) how to bring balance between them. The authors contend that there exists limited understanding on how these two factors affect performance of any specific method; therefore, strong drip on exploration and exploitation can help metaheuristic researchers develop efficient methods instead of just relying on oversimplified concepts. Having said that, in evolutionary algorithms (i.e. GA, ES, EP), mutation, crossover, and selection are the main sources of controlling exploration and exploitation yet it is life-and-death for an algorithm to decide the types and approaches of these sources. Although, the survey reported significantly varying approaches to maintaining the tradeoff balance between exploration and exploitation in evolutionary algorithms; overall, some of the prominent approaches are parameter tuning, population size control, and diversity maintenance through deterministic, adaptive, and self-adaptive techniques. Authors in Boussad et al. (2013) surveyed some of the popular metaheuristics in the groups of single-solution (i.e TS, SA, and GRASP etc.) and population-based methods (e.g., GA, DE, ACO, and PSO, etc.), and found certain similarities: inspired by nature, rely on randomization instead of gradient information, and maintain several parameters to be tuned according to the problem being solved. Moreover, the study also contends that basic single-solution based methods are mainly exploitation oriented, whereas population-based methods are often exploration oriented. However, the study terms the fundamental approach adopted by all such algorithms as adaptive memory programming where the once visited search locations are maintained in a memory, and based on it, new locations are visited. The memory is updated with the information about the latest locations visited. Furthermore, this survey also determines that the number of parameters in a metaheuristic algorithm is directly related to its complexity. Finding good initial parameters is also a tedious job. Therefore, the more suitable approach to combat this problem is emerged as adaptive metaheuristics that self-tune the parameters based on objective function values. Interesting gaps and future directions have been proposed by this study, which are discussed in the later section.

In an interesting study, Yang in (2011) gave an overview of metaheuristic convergence and efficiency analysis as well as presents some open questions that need to be answered. According to the study, even though metaheuristics have been observed to have successfully solved wide range of NP hard problems, this field is still younger than combinatorial and continuous optimization in terms of convergence, complexity, and runtime analysis. In fact, there is lack of mathematical analysis, and mostly ad-hoc approaches have been adopted in literature to measure performance of metaheuristic algorithms. It is observed that mean and standard deviation of objective function values on well-known optimization problems have been examined. If these values stay better than counterpart algorithms, the method in hand is said to be efficient. That is, convergence and efficiency analysis are still challenging. This is due to the fact that the components of metaheuristic algorithms are highly non-linear, complex, and stochastic in behavior. To address this, some of the studies with convergence analysis have been reviewed in order to provide a framework for analyzing convergence and efficiency of metaheuristics. The framework provided by the study can be a useful research direction for developing tools that may help analyze randomization and convergence. The study also contends that randomization, other than exploration and exploitation, is an important component of any metaheuristic technique, which helps get out of local optima positions. The randomization techniques range from the simple uniform distributions to complex methods like Monte Carlo (Gamerman and Lopes 2006), or from Brownian Random Walk (Spitzer 2013) to Levy Flight (Gutowski 2001). The work suggests that all the metaheuristics with multiple agents with interacting paths can be analyzed using general framework of Markov Chain Monte Carlo. The study also raises significant open questions yet to be answered in metaheuristic literature; discussed in the later section.

Optimization problems, in real-world, are highly complex and computationally expensive demand extra efforts (in terms of computation as well as time) from metaheuristics to evaluate the fitness of a group of candidate solutions. With the advancements in surrogate modeling, it has been proved that fitness function of such problems can be approximated to reduce computational cost to a limited budget when metaheuristic algorithm is evaluating a population of solutions. Highlighting the progress made in this particular area of metaheuristc research, Yaochu in Jin (2011) surveyed literature of successful applications of surrogate assisted evolutionary algorithm and suggested useful future directions. According to the survey, the major challenge in surrogate assisted methods is to avoid any possible misapproximation of surrogate that may mislead a metaheuristic algorithm by false optimum. To address this, various methods have been proposed which include learning mechanisms of neural networks, etc. Since, this area of research lags behind strong theoretical foundations, much work needs to be done on metaheuristic properties; such as, convergence analysis, in relation with managing surrogate related issues.

Based on newly emerging research domain quantum-inspired evolutionary algorithms, the work (Zhang 2011) revisited existing but limited literature; as only three categories of algorithms found: EDQAs, QEAs, and QIEAs. This study provides comprehensive details about this potential area of metaheuristic research. Researchers interested in this area may refer to the mentioned paper. According to the survey, authors (Alba 2005) revealed the usefulness of quantum computing into evolutionary algorithms. QIEAs employ the concepts of quantum inspired bits and gates to represent and generate offspring. Moreover, these algorithms are highly exploitative to search global optimum solutions even with tiny population. Summarizing the literature reviewed and experiments conducted on benchmark functions, the study states that QIEAs produce superior results as compared to other canonical EAs. Furthermore, advancement in this area may reveal robust algorithms for highly complex problems through quantum parallelism. The study also highlighted considerable gaps that need significant future work.

Another interesting but not yet fully unfolded area of operational research is parallel metaheuristics employed on parallel computing paradigm of grids and clouds. Authors (Alba 2005) investigated recent advances in literature related to parallel metaheuristics that employ parallelism in order to reduce search time, and at the same time, produce high quality solutions. According to the study, there are three models based on trajectory algorithms: parallel exploration and evaluation of the neighborhood, parallel multi-start, and parallel evaluation of single solution. On the other hand, population-based algorithms have also been applied on parallel computing through two approaches: computational parallelization and population parallelization. In the prior approach, operations on individuals are performed in parallel, whereas the later approach divides population into subpopulations to be executed in parallel. The study reports that parallel metaheuristics have not only been tested on popular benchmark problems including TSP, routing, and scheduling, but also have found applications in the domains of science, business, and industry. These parallel implementations employed PSO, ACO, and other EAs. There are specific software and hardware platforms to be used for parallel implementations of algorithms which have been discussed in the survey. According to authors, mostly, object oriented platform (C++ and Java) for software engineering has been utilized for parallel metaheuristics; and among the developed frameworks, MALLBA and ParadisEO are the most comprehensive tools to be considered while implementing parallel metaheuristics. There are some theoretical developments regarding this work, the study has mentioned few studies which can be referred for more information.

A more detailed analysis of new trends and potential research lines has been discussed in the subdomains of technology, algorithms, methodology, and challenges, and discussed in the following section.

5 Research gaps and future work

Despite success of metaheuristic methods on diversified areas of science, engineering and technology, there remains sufficient gap that needs to be filled in order to reach maturity level as compared to other established fields of research. This section helps identify some of the related but potential areas of research that may build future literature.

Mahdavi et al. (2015) foresee the design of decomposition methods, with great performance and accuracy, as potential research area while solving real-world imbalanced problems with large decision variables. Theoretical foundations for investigating metaheuristic characters are still lagging behind. According to the authors, it will help improve performance of metaheuristics. Although, benchmark test functions were developed to represent imbalance in large scale optimization problems, however there is a need of theoretical evidence that proves this fact. Moreover, this study also raises an important question “how these common benchmark test set and evaluation criteria reflect the characteristics of real-world problems?” Another future potential research area is highlighted in this study; that is, scalability of metaheuristic methods for solving optimization problems with dimensions greater than 1000.

Zelinka (2015) highlights some of the gaps in terms of unanswered questions that are raised based on papers surveyed in the study. Some of the questions can be rephrased into one as: can control (like chaotification) of swarm and evolutionary algorithms dynamics significantly improve performance and diversity in search operation? As future directions, the study proposes some potential research areas that range from swarm robotics to evolvable hardware to breaking terrorists communication.

Focusing specifically the two important factors in the design of metaheuristic: exploration and exploitation in evolutionary algorithms, Črepinšek (2013) identified three main gaps as opportunities for further research. Broadly, we can list them as (a) formal definition of exploration and exploitation; (b) more deep theoretical understanding on which parts of evolutionary algorithms contribute to exploration and exploitation, as well as, how and when to control or balance the ratio between these two elements; and (c) some direct measures are required to gage the influence of different approaches and techniques of manipulating exploration and exploitation in evolutionary algorithms.

Boussad et al. (2013) advocate that in the absence of theoretical foundations, the analysis of metaheuristic performance is performed based on experiments with mean and standard deviation as validation criteria. More statistical analysis is required for authentic comparisons. As interesting future work, the survey intensifies the need of software framework for metaheuristics that may help develop, hybrid, and use methods without building from scratch. The framework should also be able to provide analysis and comparison facilities. Another potential research area is complex large scale optimization problems. The optimization problems with high number of dimensions can be solved through parallel metaheuristic execution approach hyper-heuristics may help in this. It is often observed that different instances of the same optimization problem corresponds to different landscape structure. Therefore, the third potential research line identified by this study is the landscape structure for developing better metaheuristic methods.

Despite huge success in solving tough optimization problems, Yang (2011) asserts that it is hard to affirm mathematically why metaheuristic algorithms are that efficient. Mathematical analysis of rate of convergence and efficiency help obtain in-depth information about the behavior of an algorithm on a specific problem. This will help effectively modify existing or develop new method with authentic (not ad-hoc) results. Few efforts can be witnessed in literature trying to address this gap, however to reach maturity in this area, metaheuristic researchers need a lot of work in future. Another open area in metaheuristic research identified by this work is measuring the balance between exploration and exploitation. On part of comparative performance measurement, the study urges any agreed criteria instead of just comparing objective function values and number of function evaluations. The authors of this research foresee more intelligent, self-adaptive, or in other words self-optimizing next-generation metaheuristics in future. These algorithms will be smart enough to tune their parameters in order to find optimum quality solution with minimum computational cost. In another article (Yang 2012), the same author maintains the challenge of large-scale problems to be solved by metaheuristics; as mostly these algorithms are implemented and tested on small benchmark test problems with number of design variables ranging from few to hundred. Many engineering design, business and industry problems involve thousands and even millions of variables. Moreover, the researcher also predicts the next eight to ten years to be significant in addressing this open problem residing both in theory and practice.

As a potential and emerging research area of evolutionary algorithms inspired by quantum computing, there are few gaps that are highlighted by Zhang (2011). Like in the case of other metaheuristics, this area also needs theoretical basis with reference to searching optimal solutions, searching global optimality, and convergence. Additionally, more advanced characteristics related to quantum computing such as, quantum registers, entanglement, interference, controlled quantum-inspired gates, etc. are to be explored for developing efficient algorithms. As QIEAs are mostly compared with EAs only, these algorithms should be further compared with other popular metaheuristics like PSO and ACO.

Another key area of research that needs attention by metaheuristic community is intelligent sampling and surrogate modeling. Through intelligent sampling, the bounds of problem space are reduced for restricted searching to best neighborhoods, whereas surrogate methods assist metaheuristics in function evaluations of highly computationally expensive functions through approximating the actual objective function. The limited work in this direction has shown significant potential. For example, Mann and Singh (2017) improved the performance of ABC by incorporating a sampling technique called Student’s-t distribution. Hu et al. (2008) implemented wheel neighborhood relation (one of the topologies for population distribution) with PSO for using fewer samples with better optimality for designing the model in hand.

A promising but not fully explored direction is to combine exact algorithms and metaheuristics to solve optimization problems. Different approaches have been introduced in this regard (Jourdan et al. 2009; Puchinger and Raidl 2005), which are mainly aimed at achieving better and efficient solutions early in the iterations. This was illustrated by Rossel and Jahuira in Jahuira (2002) by combining GA with exact techniques Branch and Bound, Minimal Spanning Tree, and Backtracking Algorithms for solving combinatorial optimization problem Traveling Salesman Problem (TSP). The paper achieved optimal solutions in few generations and small population size. Another example is (Khabzaoui et al. 2008) combined exact method with evolutionary algorithm GA for solving data mining problem of rule discovery. This combination accelerated the convergence of the algorithm to best solutions.

Utilizing modern technology of parallel computing, metaheuristics have also been implemented in parallel or distributed computation. Exploring metaheuristics in this particular area, Alba (2005) highlights some important research lines to further strengthen outcomes. Since the focus of this current study is metaheuristics, therefore, we only extract from the paper the key issues related to parallel metaheuristics. Just like the gaps mentioned above, this area also deals with the same issues like theoretical foundations, convergence analysis, statistical measurements, and exploration versus exploration altogether totally in a different paradigm of parallelism. Efficient use of population in distributed architecture will lead to powerful metaheuristics for big problems as compared to sequential ones.

6 Discussion and concluding remarks

It is ascertained in literature written in recent decades that metaheuristics have solved optimization problems with ample efficiency and reasonable cost of computation as compared to exact methods. The success of metaheuristics is topped by the concerns about some important gaps and enormous research to be held to reach maturity level as of Physics, Mathematics, and other optimization fields in terms of strong theoretical and mathematical foundations as well as convergence analysis.

The literature surveyed in this research ranges from introduction of new methods, hybrid of two or more metaheuristic or heuristic techniques, surveys, comparisons and performance analysis, and wider range of applications including engineering, business, transportation, and social sciences, etc. This systematic literature review produced a database of 1222 publications appeared from the year 1983 to mid-2016. The designed five research questions laid the foundation this study, and helped extract meaningful information from the database to draw a comprehensive picture of current status of metaheuristic research. More importantly, this study provides a platform for new metaheuristic researchers including new PhD. fellows for commencing their research by finding potential research topics highlighted here.

The idea of solving optimization problems through heuristic approach was envisioned more than forty years ago when Operations Research was in its infancy during WWII. The formal kick off of metaheuristic research took place when initial metaheuristic methods like Tabu Search and Simulated Annealing were introduced in 1680s. However, the boom of this field of research was witnessed in 1990s after the wider applications of PSO, ACO and GAs. Past twenty years have been flooded with “novel” metaheuristics due to attractive approach of mimicking one or the other metaphor from the disciplines of Biology, Chemistry, and Physics, etc. Despite of enormous success in wide variety of applications, however, according to (Koziel and Yang 2011) this has harmed research in its true sense of scientific findings. Moreover, author in Srensen et al. (2017) is optimistic about the future of this field as the more research is to be conducted based on theoretical and mathematical foundations. To list down future potential research lines, the gaps identified in this study are summarized as follows:

-

It is observed that performance analysis of metaheuristic methods have been mostly performed based on simple mean of objective function values, standard deviation, and some basic statistical tests on certain test functions; which is an ad-hoc approach. More well-established and commonly agreed performance validation criteria are required in order to establish firm conclusions about the efficiency of any method being introduced.

-

Theoretical and mathematical foundations are required for different components of metaheuristics; such as, exploration versus exploitation, local optimum versus global optimum search ability, and convergence, etc.

-

Scalable metaheuristics to be designed that are able to self-adopt, self-tune, or self-evolve in order to cope with complex and highly imbalanced landscapes of large optimization problems with massive decision variables.

References

Aarts EHL, Lenstra JK (1997) Local search in combinatorial optimization. Princeton University Press, Princeton

Abdechiri M, Meybodi MR, Bahrami H (2013) Gases brownian motion optimization: an algorithm for optimization (GBMO). Appl Soft Comput 13(5):2932–2946

Abdullahi M, Ngadi A et al (2016) Symbiotic organism search optimization based task scheduling in cloud computing environment. Future Gener Comput Syst 56:640–650

Abedinia O, Amjady N, Ghasemi A (2014) A new metaheuristic algorithm based on shark smell optimization. Complexity 21:97–116

Abedinpourshotorban H, Shamsuddin SM, Beheshti Z, Jawawi DNA (2016) Electromagnetic field optimization: a physics-inspired metaheuristic optimization algorithm. Swarm Evolut Comput 26:8–22

Al Rifaie MM, Bishop MJ, Blackwell T (2011) An investigation into the merger of stochastic diffusion search and particle swarm optimisation. In: Proceedings of the 13th annual conference on genetic and evolutionary computation, ACM pp 37–44

Alba E (2005) Parallel metaheuristics: a new class of algorithms, vol 47. Wiley, Hoboken

Ali MZ, Awad NH, Suganthan PN, Duwairi RM, Reynolds RG (2016) A novel hybrid cultural algorithms framework with trajectory-based search for global numerical optimization. Inf Sci 334:219–249

Amudhavel J, Kumarakrishnan S, Anantharaj B, Padmashree D, Harinee S, Kumar KP (2015) A novel bio-inspired krill herd optimization in wireless ad-hoc network (WANET) for effective routing. In: Proceedings of the 2015 international conference on advanced research in computer science engineering & technology (ICARCSET 2015), ACM p 28

Angeline PJ, Saunders GM, Pollack JB (1994) An evolutionary algorithm that constructs recurrent neural networks. IEEE Trans Neural Networks 5(1):54–65

Arasomwan AM, Adewumi AO (2014) An investigation into the performance of particle swarm optimization with various chaotic maps. Math Prob Eng 2014:14

Askarzadeh A (2016) A novel metaheuristic method for solving constrained engineering optimization problems: crow search algorithm. Comput Struct 169:1–12

Atashpaz-Gargari E, Lucas C (2007) Imperialist competitive algorithm: an algorithm for optimization inspired by imperialistic competition. In: 2007 IEEE congress on evolutionary computation, IEEE, pp 4661–4667

Bae C, Yeh W-C, Wahid N, Chung YY, Liu Y (2012) A new simplified swarm optimization (SSO) using exchange local search scheme. Int J Innov Comput Inf Control 8(6):4391–4406

Bandieramonte M, Di Stefano A, Morana G (2010) Grid jobs scheduling: the alienated ant algorithm solution. Multiagent Grid Syst 6(3):225–243

Barresi KM (2014) Foraging agent swarm optimization with applications in data clustering. In: International conference on swarm intelligence, Springer, pp 230–237

Baykasoğlu A, Akpinar Ş (2015) Weighted superposition attraction (WSA): a swarm intelligence algorithm for optimization problems-part 2: constrained optimization. Appl Soft Comput 37:396–415

Bayraktar Z, Komurcu M, Werner DH (2010) Wind driven optimization (WDO): a novel nature-inspired optimization algorithm and its application to electromagnetics. In: IEEE antennas and propagation society international symposium (APSURSI), 2010, IEEE, pp 1–4

Beyer H-G, Schwefel H-P (2002) Evolution strategies–a comprehensive introduction. Nat Comput 1(1):3–52

Blum C, Roli A (2003) Metaheuristics in combinatorial optimization: overview and conceptual comparison. ACM Comput Surv (CSUR) 35(3):268–308

Boussaïd I, Julien L, Siarry P (2013) A survey on optimization metaheuristics. Inf Sci 237:82–117

Brabazon A, Cui W, ONeill M (2016) The raven roosting optimisation algorithm. Soft Comput 20(2):525–545

Brereton P, Kitchenham BA, Budgen D, Turner M, Khalil M (2007) Lessons from applying the systematic literature review process within the software engineering domain. J Syst Softw 80(4):571–583

Canayaz M, Karcı A (2015) Investigation of cricket behaviours as evolutionary computation for system design optimization problems. Measurement 68:225–235

Caraveo C, Valdez F, Castillo O (2015) Bio-inspired optimization algorithm based on the self-defense mechanism in plants. In: Mexican international conference on artificial intelligence, Springer, pp 227–237

Chen CC, Tsai YC, Liu II, Lai CC, Yeh YT, Kuo SY, Chou YH (2015) A novel metaheuristic: Jaguar algorithm with learning behavior. In: 2015 IEEE international conference on systems, man, and cybernetics (SMC), IEEE, pp 1595–1600

Chen MR, Lu YZ, Yang G (2006) Population-based extremal optimization with adaptive lévy mutation for constrained optimization. In: 2006 International conference on computational intelligence and security, vol 1, IEEE pp 258–261

Chetty S, Adewumi AO (2015) A study on the enhanced best performance algorithm for the just-in-time scheduling problem. Discret Dyn Nature Soc 2015:12

Civicioglu P (2013) Backtracking search optimization algorithm for numerical optimization problems. Appl Math Comput 219(15):8121–8144

Crawford B, Soto R, Berríos N, Johnson F, Paredes F, Castro C, Norero E (2015) A binary cat swarm optimization algorithm for the non-unicost set covering problem. Math Probl Eng, 2015

Črepinšek M, Liu S-H, Mernik M (2013) Exploration and exploitation in evolutionary algorithms: a survey. ACM Comput Surv (CSUR) 45(3):35

Cuevas E, Cienfuegos M, Zaldívar D, Pérez-Cisneros M (2013) A swarm optimization algorithm inspired in the behavior of the social-spider. Expert Syst Appl 40(16):6374–6384

Cuevas E, González A, Fausto F, Zaldívar D, Pérez-Cisneros M (2015) Multithreshold segmentation by using an algorithm based on the behavior of locust swarms. Math Probl Eng 2015:25

Dash T, Sahu PK (2015) Gradient gravitational search: an efficient metaheuristic algorithm for global optimization. J Comput Chem 36(14):1060–1068

Deb S, Fong S, Tian Z (2015) Elephant search algorithm for optimization problems. In: 2015 Tenth international conference on digital information management (ICDIM), IEEE, pp 249–255

Djenouri Y, Drias H, Habbas Z, Mosteghanemi H (2012) Bees swarm optimization for web association rule mining. In: 2012 IEEE/WIC/ACM international conferences on web intelligence and intelligent agent technology (WI-IAT), vol 3, IEEE, pp 142–146

Doan B, lmez T (2015) A new metaheuristic for numerical function optimization: vortex search algorithm. Inf Sci 293:125–145

Dorigo Marco (1992) Optimization, learning and natural algorithms. Ph. D. Thesis, Politecnico di Milano, Italy

Dorigo M, Gambardella LM (1997) Ant colony system: a cooperative learning approach to the traveling salesman problem. IEEE Trans Evol Comput 1(1):53–66

Dorigo M, Birattari M, Stutzle T (2006) Ant colony optimization. IEEE Comput Intell Mag 1(4):28–39

Duan QY, Gupta VK, Sorooshian S (1993) Shuffled complex evolution approach for effective and efficient global minimization. J Optim Theory Appl 76(3):501–521

Duman E, Uysal M, Alkaya AF (2012) Migrating birds optimization: a new metaheuristic approach and its performance on quadratic assignment problem. Inf Sci 217:65–77

Eberhart RC, Kennedy J (1995) A new optimizer using particle swarm theory. In: Proceedings of the sixth international symposium on micro machine and human science, vol 1, pp 39–43. New York, NY

Erol OK, Eksin I (2006) A new optimization method: big bang-big crunch. Adv Eng Softw 37(2):106–111

Eusuff M, Lansey K, Pasha F (2006) Shuffled frog-leaping algorithm: a memetic meta-heuristic for discrete optimization. Eng Optim 38(2):129–154

Faisal M, Mathkour H, Alsulaiman M (2016) AntStar: enhancing optimization problems by integrating an Ant system and A* algorithm. Sci Prog 2016:2

Feng X, Lau FCM, Gao D (2009) A new bio-inspired approach to the traveling salesman problem. In: International conference on complex sciences, Springer, pp 1310–1321

Feo TA, Resende MGC (1989) A probabilistic heuristic for a computationally difficult set covering problem. Oper Res Lett 8(2):67–71

Filipović V, Kartelj A, Matić D (2013) An electromagnetism metaheuristic for solving the maximum betweenness problem. Appl Soft Comput 13(2):1303–1313

Fogel GB, Corne DW (2002) Evolutionary computation in bioinformatics. Morgan Kaufmann, Burlington

Gamerman D, Lopes HF (2006) Markov chain Monte Carlo: stochastic simulation for Bayesian inference. CRC Press, Boca Raton

Gandomi AH (2014) Interior search algorithm (ISA): a novel approach for global optimization. ISA Trans 53(4):1168–1183

Gao-Ji Sun (2010) A new evolutionary algorithm for global numerical optimization. In: International conference on machine learning and cybernetics (ICMLC), 2010, vol 4, IEEE, pp 1807–1810

Geem ZW, Kim JH, Loganathan GV (2001) A new heuristic optimization algorithm: harmony search. Simulation 76(2):60–68

Glover F (1997) A template for scatter search and path relinking. In: European conference on artificial evolution, Springer, p 1–51

Glover F (1989) Tabu search–part I. ORSA J Comput 1(3):190–206

Gonçalves R, Goldbarg MC, Goldbarg EF, Delgado MR (2008) Warping search: a new metaheuristic applied to the protein structure prediction. In: Proceedings of the 10th annual conference on genetic and evolutionary computation, ACM, pp 349–350

Gonçalves MS, Lopez RH, Miguel LFF (2015) Search group algorithm: a new metaheuristic method for the optimization of truss structures. Comput Struct 153:165–184

Gonzalez-Fernandez Y, Chen S (2015) Leaders and followers–a new metaheuristic to avoid the bias of accumulated information. In: IEEE congress on evolutionary computation (CEC), 2015, IEEE, pp 776–783

Greenberg HJ (2004) Mathematical programming glossary. Greenberg, New York

Gupta K, Deep K (2016) Tournament selection based probability scheme in spider monkey optimization algorithm. In: Harmony search algorithm, Springer, pp 239–250

Gutowski M (2001) Lévy flights as an underlying mechanism for global optimization algorithms. arXiv preprint arXiv:math-ph/0106003v1

Hajipour H, Khormuji HB, Rostami H (2016) ODMA: a novel swarm-evolutionary metaheuristic optimizer inspired by open source development model and communities. Soft Comput 20(2):727–747

Haldar V, Chakraborty N (2017) A novel evolutionary technique based on electrolocation principle of elephant nose fish and shark: fish electrolocation optimization. Soft Comput 21(14):3827–3848

Hasançebi O, Azad SK (2015) Adaptive dimensional search: a new metaheuristic algorithm for discrete truss sizing optimization. Comput Struct 154:1–16

He S, Wu QH, Saunders JR (2006) A novel group search optimizer inspired by animal behavioural ecology. In: IEEE congress on evolutionary computation, 2006. CEC 2006, IEEE, pp 1272–1278

Holland JH (1992) Genetic algorithms. Sci Am 267(1):66–72

Huang Z, Chen Y (2015) Log-linear model based behavior selection method for artificial fish swarm algorithm. Comput Intell Neurosci 2015:10

Iordache S (2010) Consultant-guided search: a new metaheuristic for combinatorial optimization problems. In: Proceedings of the 12th annual conference on genetic and evolutionary computation, ACM, pp 225–232

Jahuira CAR (2002) Hybrid genetic algorithm with exact techniques applied to TSP. In: Second international workshop on intelligent systems design and application, Dynamic Publishers, Inc, pp 119–124

James JQ, Li VOK (2015) A social spider algorithm for global optimization. Appl Soft Comput 30:614–627

Jin Y (2011) Surrogate-assisted evolutionary computation: recent advances and future challenges. Swarm Evolut Comput 1(2):61–70

Jourdan L, Basseur M, Talbi E-G (2009) Hybridizing exact methods and metaheuristics: a taxonomy. Eur J Oper Res 199(3):620–629

Karaboga D, Akay B (2009) A comparative study of artificial bee colony algorithm. Appl Math Comput 214(1):108–132

Karaboga D, An idea based on honey bee swarm for numerical optimization. Report, technical report-tr06, Erciyes University, Engineering Faculty, Computer Engineering Department, 2005

Karami H, Sanjari MJ, Gharehpetian GB (2014) Hyper-spherical search (HSS) algorithm: a novel meta-heuristic algorithm to optimize nonlinear functions. Neural Comput Appl 25(6):1455–1465

Karimkashi S, Kishk AA (2010) Invasive weed optimization and its features in electromagnetics. IEEE Trans Antennas Propag 58(4):1269–1278

Kashani AR, Gandomi AH, Mousavi M (2016) Imperialistic competitive algorithm: a metaheuristic algorithm for locating the critical slip surface in 2-dimensional soil slopes. Geosci Front 7(1):83–89

Kaveh A, Bakhshpoori T (2016) A new metaheuristic for continuous structural optimization: water evaporation optimization. Struct Multidiscip Optim 54(1):23–43

Kaveh A, Farhoudi N (2016) Dolphin monitoring for enhancing metaheuristic algorithms: layout optimization of braced frames. Comput Struct 165:1–9

Kaveh A, Khayatazad M (2012) A new meta-heuristic method: ray optimization. Comput Struct 112:283–294

Kaveh A, Mahdavi VR (2014) Colliding bodies optimization: a novel meta-heuristic method. Comput Struct 139:18–27

Kaveh A, Talatahari S (2010) A novel heuristic optimization method: charged system search. Acta Mech 213(3):267–289

Kaveh A, Motie MA, Share MM (2013) Magnetic charged system search: a new meta-heuristic algorithm for optimization. Acta Mech 224(1):85–107

Keele S (2007) Guidelines for performing systematic literature reviews in software engineering. In: Technical report, Ver. 2.3 EBSE Technical Report. EBSE. sn

Khabzaoui M, Dhaenens C, Talbi E-G (2008) Combining evolutionary algorithms and exact approaches for multi-objective knowledge discovery. RAIRO-Oper Res 42(1):69–83

Khajehzadeh M, Taha MR, Elshafie AHKAN, Eslami M (2011) A survey on meta-heuristic global optimization algorithms. Res J Appl Sci Eng Technol 3(6):569–578

Kirkpatrick SC, Gelatt D, Vecchi MP et al (1983) Optimization by simulated annealing. Science 220(4598):671–680

Kiruthiga G, Krishnapriya S, Karpagambigai V, Pazhaniraja N, Paul P Victer (2015) A novel bio-inspired algorithm based on the foraging behaviour of the bottlenose dolphin. In: 2015 International conference on computation of power, energy information and commuincation (ICCPEIC), IEEE, pp 0209–0224

Koziel S, Yang X-S (2011) Computational optimization, methods and algorithms, vol 356. Springer, New York

Kuo RJ, Zulvia FE (2015) The gradient evolution algorithm: a new metaheuristic. Inf Sci 316:246–265

Li SX, Wang JS (2015) Dynamic modeling of steam condenser and design of pi controller based on grey wolf optimizer. Math Probl Eng 2015:9

Li Z-Y, Li Z, Nguyen TT, Chen SM (2015) Orthogonal chemical reaction optimization algorithm for global numerical optimization problems. Expert Syst Appl 42(6):3242–3252

Li MD, Zhao H, Weng XW, Han T (2016) A novel nature-inspired algorithm for optimization: virus colony search. Adv Eng Softw 92:65–88

Lianbo M, Kunyuan H, Yunlong Z, Hanning C, Maowei H (2014) A novel plant root foraging algorithm for image segmentation problems. Math Probl Eng 2014:16

Liang X, Li W, Liu PP, Zhang Y, Agbo AA (2015) Social network-based swarm optimization algorithm. In: IEEE 12th international conference on networking, sensing and control (ICNSC), 2015, IEEE, pp 360–365

Li K, Tian H (2015) A de-based scatter search for global optimization problems. Discret Dyn Nat Soc, 2015:303125

Liu Y, Tian P (2015) A multi-start central force optimization for global optimization. Appl Soft Comput 27:92–98

Li W, Wang L, Yao Q, Jiang Q, Yu L, Wang B, Hei X (2015) Cloud particles differential evolution algorithm: a novel optimization method for global numerical optimization. Math Probl Eng 2015:3242–3252

Mahdavi S, Shiri ME, Rahnamayan S (2015) Metaheuristics in large-scale global continues optimization: a survey. Inf Sci 295:407–428

Mann PS, Singh S (2017) Improved metaheuristic based energy-efficient clustering protocol for wireless sensor networks. Eng Appl Artif Intell 57:142–152

Marinakis Y, Marinaki M (2014) A bumble bees mating optimization algorithm for the open vehicle routing problem. Swarm Evolut Comput 15:80–94

Marinakis Y, Marinaki M (2011) A honey bees mating optimization algorithm for the open vehicle routing problem. In: Proceedings of the 13th annual conference on genetic and evolutionary computation, ACM, pp 101–108

Marinakis Y, Marinaki M, Matsatsinis N (2009) A hybrid bumble bees mating optimization-GRASP algorithm for clustering. In: International conference on hybrid artificial intelligence systems, Springer, pp 549–556

Meignan D, Koukam A, Crput J-C (2010) Coalition-based metaheuristic: a self-adaptive metaheuristic using reinforcement learning and mimetism. J Heuristics 16(6):859–879

Meng X, Liu Y, Gao X, Zhang H (2014) A new bio-inspired algorithm: chicken swarm optimization. In: International conference in swarm intelligence, Springer, pp 86–94

Merrikh-Bayat F (2015) The runner-root algorithm: a metaheuristic for solving unimodal and multimodal optimization problems inspired by runners and roots of plants in nature. Appl Soft Comput 33:292–303

Mirjalili S (2015) Moth-flame optimization algorithm: a novel nature-inspired heuristic paradigm. Knowl-Based Syst 89:228–249

Mirjalili S (2016) SCA: a sine cosine algorithm for solving optimization problems. Knowl-Based Syst 96:120–133

Mladenovi N, Hansen P (1997) Variable neighborhood search. Comput Oper Res 24(11):1097–1100

Munoz MA, López JA, Caicedo E (2009) An artificial beehive algorithm for continuous optimization. Int J Intell Syst 24(11):1080–1093

Muthiah-Nakarajan V, Noel MM (2016) Galactic swarm optimization: a new global optimization metaheuristic inspired by galactic motion. Appl Soft Comput 38:771–787

Narayanan A, Moore M (1996) Quantum-inspired genetic algorithms. In: Proceedings of IEEE International conference on evolutionary computation, 1996, IEEE, pp 61–66

Nasir ANK, Raja Ismail RMT, Tokhi MO (2016) Adaptive spiral dynamics metaheuristic algorithm for global optimisation with application to modelling of a flexible system. Appl Math Model 40(9):5442–5461

Niu B, Wang H (2012) Bacterial colony optimization. Discret Dyn Nature Soc 2012:28

Nourddine B (2015) A variable depth search algorithm for binary constraint satisfaction problems. Math Probl Eng, 2015

Odili JB, Kahar MNM (2016) Solving the traveling salesman’s problem using the african buffalo optimization. Comput Intell Neurosci 2016:3

Osaba E, Diaz F, Carballedo R, Onieva E, Perallos A (2014) Focusing on the golden ball metaheuristic: an extended study on a wider set of problems. Sci World J 2014:1–17

Osaba E, Diaz F, Onieva E (2013) A novel meta-heuristic based on soccer concepts to solve routing problems. In: Proceedings of the 15th annual conference companion on genetic and evolutionary computation, ACM, pp 1743–1744

Pan W-T (2012) A new fruit fly optimization algorithm: taking the financial distress model as an example. Knowl-Based Syst 26:69–74

Petersen K, Feldt R, Mujtaba S, Mattsson M (2008) Systematic mapping studies in software engineering. In: 12th international conference on evaluation and assessment in software engineering, vol 17

Pham DT, Huynh TTB (2015) An effective combination of genetic algorithms and the variable neighborhood search for solving travelling salesman problem. In: 2015 Conference on technologies and applications of artificial intelligence (TAAI), IEEE, pp 142–149

Puchinger J, Raidl GR (2005) Combining metaheuristics and exact algorithms in combinatorial optimization: a survey and classification. In: International work-conference on the interplay between natural and artificial computation, Springer, pp 41–53

Qin J (2009) A new optimization algorithm and its application key cutting algorithm. In: 2009 IEEE international conference on grey systems and intelligent services (GSIS 2009), IEEE, pp 1537–1541

Rahmani R, Yusof R (2014) A new simple, fast and efficient algorithm for global optimization over continuous search-space problems: radial movement optimization. Appl Math Comput 248:287–300

Rao RV, Savsani VJ, Vakharia DP (2011) Teaching learning-based optimization: a novel method for constrained mechanical design optimization problems. Comput Aided Des 43(3):303–315

Rashedi E, Nezamabadi-Pour H, Saryazdi S (2009) GSA: a gravitational search algorithm. Inf Sci 179(13):2232–2248

Rechenberg I (1994) Evolutionsstrategie: Optimierung technischer systeme nach prinzipien der biologischen evolution. frommann-holzbog, stuttgart, 1973. Step-size adaptation based on non-local use of selection information. In: Parallel problem solving from nature (PPSN3)

Rodzin SI (2014) Smart dispatching and metaheuristic swarm flow algorithm. J Comput Syst Sci Int 53(1):109–115

Sadollah A, Bahreininejad A, Eskandar H, Hamdi M (2013) Mine blast algorithm: a new population based algorithm for solving constrained engineering optimization problems. Appl Soft Comput 13(5):2592–2612

Sadollah A, Eskandar H, Kim JH (2015) Water cycle algorithm for solving constrained multi-objective optimization problems. Appl Soft Comput 27:279–298

Sahli Z, Hamouda A, Bekrar A, Trentesaux D (2014) Hybrid PSO-tabu search for the optimal reactive power dispatch problem. In: IECON 2014-40th annual conference of the IEEE industrial electronics society, IEEE, pp 3536–3542

Salcedo-Sanz S, Del Ser J, Landa-Torres I, Gil-Lpez S, Portilla-Figueras JA (2014) The coral reefs optimization algorithm: a novel metaheuristic for efficiently solving optimization problems. Sci World J, 2014

Salimi H (2015) Stochastic fractal search: a powerful metaheuristic algorithm. Knowl-Based Syst 75:1–18

Savsani P, Savsani V (2016) Passing vehicle search (PVS): A novel metaheuristic algorithm. Appl Math Model 40(5):3951–3978

Schwefel H-P (1977) Numerische optimierung von computer-modellen mittels der evolutionsstrategie, vol 1. Birkhuser, Basel