Abstract

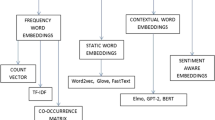

Today, vast amounts of unstructured text data are constantly at our disposal. Owing to the rapid growth of user-generated content on the one hand and powerful, ready-to-use machine learning algorithms on the other, automated text analysis can now be carried out in ways that would have been unimaginable a few years ago. However, because many of these algorithms require numerical input, textual data must first be transformed before it can be analyzed further: features have to be extracted and the text converted into numerical values. Accordingly, this chapter presents the basics of vectorizing text, beginning with the simplest approaches to text representation and increasing in complexity and performance with each subsequent algorithm. The aim is to convey the intuition behind each approach in a straightforward manner and to focus on the elements that are relevant for practical application.
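The abstract does not name the representations the chapter opens with, so, as an assumption, the sketch below illustrates two of the simplest and most widely used ones: a bag-of-words count matrix and a TF-IDF reweighting of it, written with the Python standard library only. The whitespace tokenizer, the helper names (`tokenize`, `build_vocabulary`, `bag_of_words`, `tf_idf`) and the sample review snippets are hypothetical, and the `tf * log(N / df)` weighting is only one of several TF-IDF variants in use.

```python
import math
from collections import Counter


def tokenize(text: str) -> list[str]:
    """Lowercase and split on whitespace (a deliberately naive, hypothetical tokenizer)."""
    return text.lower().split()


def build_vocabulary(docs: list[str]) -> list[str]:
    """Sorted list of every distinct token across all documents."""
    return sorted({tok for doc in docs for tok in tokenize(doc)})


def bag_of_words(docs: list[str], vocab: list[str]) -> list[list[int]]:
    """One row per document; each column counts how often a vocabulary term occurs."""
    rows = []
    for doc in docs:
        counts = Counter(tokenize(doc))
        rows.append([counts[term] for term in vocab])
    return rows


def tf_idf(bow: list[list[int]]) -> list[list[float]]:
    """Reweight raw counts as tf(t, d) * log(N / df(t)), one common TF-IDF variant."""
    n_docs = len(bow)
    n_terms = len(bow[0]) if bow else 0
    # Document frequency: in how many documents each term appears at least once.
    df = [sum(1 for row in bow if row[j] > 0) for j in range(n_terms)]
    return [
        [row[j] * math.log(n_docs / df[j]) if df[j] else 0.0 for j in range(n_terms)]
        for row in bow
    ]


# Hypothetical sample "reviews" used only for illustration.
docs = [
    "the hotel was clean",
    "the beach was beautiful",
    "clean beach clean water",
]
vocab = build_vocabulary(docs)
bow = bag_of_words(docs, vocab)
weights = tf_idf(bow)

print(vocab)  # ['beach', 'beautiful', 'clean', 'hotel', 'the', 'was', 'water']
print(bow)    # [[0, 0, 1, 1, 1, 1, 0], [1, 1, 0, 0, 1, 1, 0], [1, 0, 2, 0, 0, 0, 1]]
```

Each document becomes a fixed-length vector over the shared vocabulary, which is the kind of numerical input many algorithms expect. TF-IDF then down-weights terms that occur in many documents; a term present in every document receives a weight of zero. These vectors are sparse and as long as the vocabulary, which is one of the limitations that denser word-embedding representations address.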

Further Readings and Other Sources

- Manning, Chris (2019) NLP with Deep Learning. Introduction and Word Vectors: https://www.youtube.com/watch?v=8rXD5-xhemo
- Manning, Chris (2017) Word Vector Representations: Word2vec: https://www.youtube.com/watch?v=ERibwqs9p38
- Bornstein, Aron (2018) Beyond Word Embeddings
- Sieg, Adrien (2019) From Pre-trained Word Embeddings To Pre-trained Language Models — Focus on BERT: https://towardsdatascience.com/from-pre-trained-word-embeddings-to-pre-trained-language-models-focus-on-bert-343815627598

Copyright information

© 2022 The Author(s), under exclusive license to Springer Nature Switzerland AG

About this chapter

Cite this chapter

Egger, R. (2022). Text Representations and Word Embeddings. In: Egger, R. (ed.) Applied Data Science in Tourism. Tourism on the Verge. Springer, Cham. https://doi.org/10.1007/978-3-030-88389-8_16

DOI: https://doi.org/10.1007/978-3-030-88389-8_16

Publisher Name: Springer, Cham

Print ISBN: 978-3-030-88388-1

Online ISBN: 978-3-030-88389-8

eBook Packages: Business and Management; Business and Management (R0)