Abstract

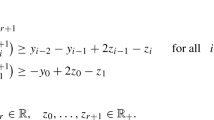

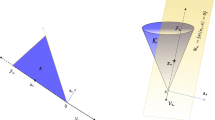

This paper describes a method for unconstrained optimization that combines quasi-Newton methods with conic functions. A conic function is constructed so that the local nonquadratic model interpolates two function values and one gradient value of the objective function at the last two iterates, which is a natural extension of existing quasi-Newton methods. The new method is shown to converge Q-superlinearly under standard assumptions on the objective function and, for a good choice of parameters, to reduce the number of line searches. Numerical experiments confirm the efficiency of the method.

Summary

The paper describes a method for unconstrained optimization that uses conic functions within the quasi-Newton method. A conic function is constructed so that the local model interpolates two function values and one gradient value of the objective function at the last two iterates, which represents a natural extension of existing quasi-Newton methods. Under standard assumptions on the objective function, a Q-superlinear rate of convergence is shown, along with a reduction in the number of line searches for a good choice of parameters. Numerical experiments confirm the efficiency of the method.
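The interpolation idea in the abstract can be illustrated with a minimal one-dimensional sketch. It assumes the standard conic form c(t) = f_k + g_k t / (1 - b t) + (1/2) A t^2 / (1 - b t)^2, which matches the function value and gradient at the current iterate for any b, and then picks the horizon parameter b so that the model also matches the function value at the previous iterate. The names `conic_model` and `fit_horizon`, the fixed curvature `A`, and the root-selection rule are illustrative assumptions, not the parameter choices made in the paper.

```python
import math


def conic_model(t, f_k, g_k, curvature, b):
    """Evaluate the 1-D conic model at step t from the current iterate."""
    denom = 1.0 - b * t
    if denom <= 0.0:
        raise ValueError("step reaches or crosses the horizon of the conic model")
    return f_k + g_k * t / denom + 0.5 * curvature * t * t / (denom * denom)


def fit_horizon(f_k, f_prev, g_k, curvature, s):
    """Return b such that the model equals f_prev at t = -s, where s = x_k - x_prev.

    Substituting u = 1 / (1 + b*s), the condition c(-s) = f_prev becomes
        0.5*curvature*s^2 * u^2 - g_k*s * u - (f_prev - f_k) = 0.
    Returns 0.0 (a plain quadratic model) when no admissible root exists.
    """
    qa = 0.5 * curvature * s * s
    qb = -g_k * s
    qc = -(f_prev - f_k)
    disc = qb * qb - 4.0 * qa * qc
    if qa == 0.0 or disc < 0.0:
        return 0.0  # assumption: fall back to the quadratic model
    roots = [(-qb + sign * math.sqrt(disc)) / (2.0 * qa) for sign in (1.0, -1.0)]
    positive = [u for u in roots if u > 0.0]
    if not positive:
        return 0.0
    # Assumption: prefer the root closest to u = 1, i.e. the mildest rescaling.
    u = min(positive, key=lambda r: abs(r - 1.0))
    return (1.0 / u - 1.0) / s


# Usage: data generated from a known conic function, so b should be recovered.
f_k, g_k, A, b_true, s = 1.0, -1.0, 2.0, 0.3, 0.5
f_prev = conic_model(-s, f_k, g_k, A, b_true)
b = fit_horizon(f_k, f_prev, g_k, A, s)
```

With b = 0 the model reduces to the usual quadratic model of a quasi-Newton method, which is the sense in which the conic model extends it. The extra parameter is what allows the second function value to be interpolated.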

Additional information

The project was supported by the National Natural Science Foundation of China.

Cite this article

Sheng, S. Interpolation by conic model for unconstrained optimization. Computing 54, 83–98 (1995). https://doi.org/10.1007/BF02238081
