Abstract

We reconsider a classical problem, namely how the (1+1) evolutionary algorithm optimizes the LeadingOnes function. We prove that if a mutation probability of p is used and the problem size is n, then the optimization time is

For the standard value of p = 1/n, this is approximately 0.86 n 2. As our bound shows, this mutation probability is not optimal: For p ≈ 1.59/n, the optimization time drops by more than 16% to approximately 0.77 n 2.

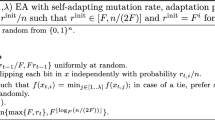
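The constants quoted above can be checked numerically. The following is a minimal sketch, assuming the expected optimization time has the closed form $\frac{1}{2p^2}\left((1-p)^{-n+1} - (1-p)\right)$; for $p = c/n$ and large $n$ this behaves like $\frac{e^c - 1}{2c^2}\,n^2$. The function names and the ternary search are illustrative choices, not part of the paper.

```python
import math


def expected_time(n: int, p: float) -> float:
    """Assumed closed form: ((1-p)^(1-n) - (1-p)) / (2 p^2)."""
    return ((1.0 - p) ** (1 - n) - (1.0 - p)) / (2.0 * p * p)


def limit_constant(c: float) -> float:
    """Leading constant of expected_time(n, c/n) / n^2 as n grows."""
    return (math.exp(c) - 1.0) / (2.0 * c * c)


def best_c(lo: float = 0.5, hi: float = 3.0, iters: int = 200) -> float:
    """Ternary search for the minimizer of limit_constant.

    The function has a single minimum on (0, inf), so shrinking the
    bracket [lo, hi] by one third per step converges to it.
    """
    for _ in range(iters):
        m1 = lo + (hi - lo) / 3.0
        m2 = hi - (hi - lo) / 3.0
        if limit_constant(m1) < limit_constant(m2):
            hi = m2
        else:
            lo = m1
    return (lo + hi) / 2.0


if __name__ == "__main__":
    c_star = best_c()
    print(f"constant at c = 1:      {limit_constant(1.0):.4f}")
    print(f"minimizing c:           {c_star:.4f}")
    print(f"constant at that c:     {limit_constant(c_star):.4f}")
    print(f"finite n = 1000, p=1/n: {expected_time(1000, 1 / 1000) / 1000**2:.4f}")
```

Under this assumption, `limit_constant(1.0)` and `limit_constant(best_c())` should round to the 0.86 and 0.77 quoted in the abstract, with the minimizer near $c \approx 1.59$.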

Our method also allows us to analyze mutation probabilities that depend on the current fitness (as used in artificial immune systems). Again, we derive an exact expression. Analyzing it, we find a fitness-dependent mutation probability that yields an expected optimization time of approximately $0.68\,n^2$, another 12% improvement over the optimal fixed mutation rate. In particular, this is the first example where an adaptive mutation rate provably reduces the optimization time. In a general context, these results suggest that the final word on mutation probabilities in evolutionary computation has not yet been spoken.
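To make the fixed versus fitness-dependent comparison concrete, here is a small sketch of the (1+1) EA on LeadingOnes with a pluggable mutation-rate function. Two things in it are assumptions rather than statements from this abstract: the level-wise formula $\frac{1}{2}\sum_{i=0}^{n-1} \frac{1}{(1-p_i)^i\,p_i}$ for the expected time when rate $p_i$ is used at fitness $i$, and the illustrative adaptive choice $p_i = 1/(i+1)$. All function names are hypothetical.

```python
import random


def leading_ones(x):
    """Number of consecutive 1-bits at the start of x."""
    count = 0
    for bit in x:
        if bit != 1:
            break
        count += 1
    return count


def run_one_plus_one_ea(n, rate, rng):
    """Run the (1+1) EA once; return the number of iterations to the optimum.

    rate(i, n) gives the per-bit mutation probability at fitness i.
    """
    x = [rng.randint(0, 1) for _ in range(n)]
    fx = leading_ones(x)
    steps = 0
    while fx < n:
        p = rate(fx, n)
        # Standard bit mutation: flip each bit independently with prob. p.
        y = [b ^ 1 if rng.random() < p else b for b in x]
        fy = leading_ones(y)
        if fy >= fx:  # accept offspring that are at least as good
            x, fx = y, fy
        steps += 1
    return steps


def level_sum(n, rate):
    """Assumed exact expectation: 0.5 * sum_i 1 / ((1 - p_i)^i * p_i)."""
    total = 0.0
    for i in range(n):
        p = rate(i, n)
        total += 1.0 / ((1.0 - p) ** i * p)
    return 0.5 * total


def static_rate(i, n):
    """Standard fixed rate p = 1/n."""
    return 1.0 / n


def adaptive_rate(i, n):
    """Hypothetical fitness-dependent rate p_i = 1/(i+1) (an assumption)."""
    return 1.0 / (i + 1)


if __name__ == "__main__":
    rng = random.Random(0)
    n, runs = 50, 200
    for name, rate in [("static", static_rate), ("adaptive", adaptive_rate)]:
        mean = sum(run_one_plus_one_ea(n, rate, rng) for _ in range(runs)) / runs
        print(f"{name:8s} simulated {mean / n**2:.3f} n^2, "
              f"formula {level_sum(n, rate) / n**2:.3f} n^2")
```

With these assumptions, `level_sum(n, adaptive_rate) / n**2` comes out close to 0.68 for `n` in the hundreds, and the simulated averages should scatter around the formula values; the simulation is a sanity check, not a proof.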

Copyright information

© 2010 Springer-Verlag Berlin Heidelberg

About this paper

Cite this paper

Böttcher, S., Doerr, B., Neumann, F. (2010). Optimal Fixed and Adaptive Mutation Rates for the LeadingOnes Problem. In: Schaefer, R., Cotta, C., Kołodziej, J., Rudolph, G. (eds) Parallel Problem Solving from Nature, PPSN XI. PPSN 2010. Lecture Notes in Computer Science, vol 6238. Springer, Berlin, Heidelberg. https://doi.org/10.1007/978-3-642-15844-5_1

DOI: https://doi.org/10.1007/978-3-642-15844-5_1

Publisher Name: Springer, Berlin, Heidelberg

Print ISBN: 978-3-642-15843-8

Online ISBN: 978-3-642-15844-5

eBook Packages: Computer Science (R0)