Abstract

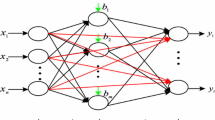

The graphics processing unit (GPU) has evolved over the years into a powerful resource for general-purpose computing. In this article we present an implementation of the extended Kalman filter for training recurrent neural networks, in which the most computationally intensive tasks are performed on the GPU. This approach achieves a significant speedup of the training process for larger networks.
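As an illustration of the kind of computation involved, the following is a minimal NumPy sketch of one extended Kalman filter step that treats the network weights as the state to be estimated. It is not the authors' implementation: the function name `ekf_weight_update`, the argument names, and the use of a Cholesky factorization to solve for the Kalman gain are assumptions made for this example, and the code runs on the CPU. The dense matrix products and the factorization are the operations one would expect to dominate the cost as the number of weights grows, and therefore the natural candidates for offloading to a GPU.

```python
import numpy as np

def ekf_weight_update(w, P, H, y, d, R, Q):
    """One EKF step with the network weights w as the state (illustrative sketch).

    w: (n,) weights            P: (n, n) weight error covariance
    H: (m, n) Jacobian of the m network outputs with respect to the weights
    y: (m,) network output     d: (m,) desired output
    R: (m, m) measurement noise covariance
    Q: (n, n) process noise covariance
    """
    HP = H @ P                      # (m, n) dense product
    S = HP @ H.T + R                # innovation covariance, symmetric positive definite
    L = np.linalg.cholesky(S)       # S = L L^T
    # Solve S X = H P using the Cholesky factor (two solves instead of an inverse).
    # np.linalg.solve is a general solver; a dedicated triangular solver would be faster.
    X = np.linalg.solve(L.T, np.linalg.solve(L, HP))
    K = X.T                         # Kalman gain P H^T S^{-1}, valid because P and S are symmetric
    w_new = w + K @ (d - y)         # correct the weights by the weighted output error
    P_new = P - K @ HP + Q          # covariance update
    P_new = 0.5 * (P_new + P_new.T) # re-symmetrize to limit round-off drift
    return w_new, P_new
```

In a recurrent network the Jacobian `H` would come from a procedure such as backpropagation through time or real-time recurrent learning; here it is simply taken as an input.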

Keywords

- Graphics Processing Unit

- Hidden Neuron

- Extended Kalman Filter

- Recurrent Neural Network

- Cholesky Factorization

These keywords were added by machine and not by the authors. This process is experimental and the keywords may be updated as the learning algorithm improves.

Copyright information

© 2008 Springer-Verlag Berlin Heidelberg

About this paper

Cite this paper

Trebatický, P., Pospíchal, J. (2008). Neural Network Training with Extended Kalman Filter Using Graphics Processing Unit. In: Kůrková, V., Neruda, R., Koutník, J. (eds) Artificial Neural Networks - ICANN 2008. ICANN 2008. Lecture Notes in Computer Science, vol 5164. Springer, Berlin, Heidelberg. https://doi.org/10.1007/978-3-540-87559-8_21

DOI: https://doi.org/10.1007/978-3-540-87559-8_21

Publisher Name: Springer, Berlin, Heidelberg

Print ISBN: 978-3-540-87558-1

Online ISBN: 978-3-540-87559-8

eBook Packages: Computer Science, Computer Science (R0)