Summary

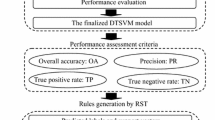

Innovative storage technology and the rising popularity of the Internet have generated an ever-growing amount of data. Much valuable knowledge lies hidden in this vast amount of data. The Support Vector Machine (SVM) is a state-of-the-art classification technique that generally provides accurate models, as it is able to capture non-linearities in the data. However, this strength is also its main weakness, as the resulting non-linear models are typically regarded as incomprehensible black boxes. By extracting rules that mimic the black box as closely as possible, we can provide some insight into the logic of the SVM model. This explanation capability is crucial in any domain where the model must be validated before it is implemented, such as credit scoring (loan default prediction) and medical diagnosis. If the SVM is regarded as the current state of the art, SVM rule extraction can be the state of the art of the (near) future. This chapter provides an overview of recently proposed SVM rule extraction techniques, complemented with the pedagogical Artificial Neural Network (ANN) rule extraction techniques that are also suitable for SVMs. Three issues related to this topic are discussed: the different rule outputs and their corresponding expressiveness; the focus on high-dimensional data, as SVM models typically perform well on such data; and the requirement that the extracted rules be in line with existing domain knowledge. These issues are explained and further illustrated with a credit scoring case, in which we extract a Trepan tree and a RIPPER rule set from the generated SVM model. The benefit of decision tables in a rule extraction context is also demonstrated. Finally, some interesting alternatives to SVM rule extraction are listed.
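The pedagogical idea mentioned above, treating the trained SVM as a black box and fitting a comprehensible model to its predictions, can be illustrated with a minimal sketch. This is not the chapter's experimental setup: it assumes scikit-learn is available, uses synthetic data instead of a credit scoring data set, and substitutes an ordinary CART-style decision tree for Trepan and RIPPER, which scikit-learn does not provide. The helper names `extract_tree_from_svm` and `fidelity` are hypothetical.

```python
from sklearn.datasets import make_classification
from sklearn.metrics import accuracy_score
from sklearn.model_selection import train_test_split
from sklearn.svm import SVC
from sklearn.tree import DecisionTreeClassifier, export_text


def extract_tree_from_svm(X_train, y_train, max_depth=3, random_state=0):
    """Train an RBF SVM, then fit a shallow tree to the SVM's own predictions."""
    svm = SVC(kernel="rbf", gamma="scale", random_state=random_state)
    svm.fit(X_train, y_train)
    # Pedagogical step: replace the true labels by the black box's labels,
    # so the tree learns to mimic the SVM rather than the original target.
    svm_labels = svm.predict(X_train)
    tree = DecisionTreeClassifier(max_depth=max_depth, random_state=random_state)
    tree.fit(X_train, svm_labels)
    return svm, tree


def fidelity(svm, tree, X):
    """Fraction of instances on which the tree agrees with the SVM."""
    return accuracy_score(svm.predict(X), tree.predict(X))


# Synthetic stand-in data (an assumption; not a credit scoring data set).
X, y = make_classification(
    n_samples=600, n_features=6, n_informative=4, random_state=0
)
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.3, random_state=0
)

svm, tree = extract_tree_from_svm(X_train, y_train)
fid = fidelity(svm, tree, X_test)
rules = export_text(tree, feature_names=[f"x{i}" for i in range(X.shape[1])])

print(rules)
print(f"SVM test accuracy:  {accuracy_score(y_test, svm.predict(X_test)):.3f}")
print(f"Tree test accuracy: {accuracy_score(y_test, tree.predict(X_test)):.3f}")
print(f"Fidelity to SVM:    {fid:.3f}")
```

Fidelity (agreement with the SVM) is reported separately from accuracy (agreement with the true labels), because an extracted rule set is meant to explain the black box, not only to predict well. The `max_depth` limit is one simple way to trade fidelity for comprehensibility.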

© 2008 Springer-Verlag Berlin Heidelberg

About this chapter

Cite this chapter

Martens, D., Huysmans, J., Setiono, R., Vanthienen, J., Baesens, B. (2008). Rule Extraction from Support Vector Machines: An Overview of Issues and Application in Credit Scoring. In: Diederich, J. (eds) Rule Extraction from Support Vector Machines. Studies in Computational Intelligence, vol 80. Springer, Berlin, Heidelberg. https://doi.org/10.1007/978-3-540-75390-2_2

DOI: https://doi.org/10.1007/978-3-540-75390-2_2

Publisher Name: Springer, Berlin, Heidelberg

Print ISBN: 978-3-540-75389-6

Online ISBN: 978-3-540-75390-2

eBook Packages: Engineering, Engineering (R0)