Abstract

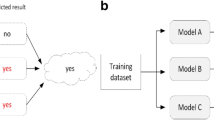

Combined classification is an important area of machine learning, and there is a plethora of methods for constructing efficient ensembles. The most popular approaches rely on voting, where the final decision of a compound classifier is a combination of the discrete outputs of the individual classifiers, i.e., class labels. At the same time, some classifiers in the committee contribute little to the collective decision and should be discarded. This paper discusses how to design an effective ensemble pruning and combination rule based on continuous classifier outputs, i.e., support functions. Because many real-life problems do not offer an abundance of training objects, we focus on aggregation methods that do not require training. We concentrate on weighted aggregation, with weights depending on both the classifier and the class label. We propose a new untrained method for simultaneous ensemble pruning and weighted combination of support functions, which uses a Gaussian function to assign these weights. An experimental analysis carried out on a set of benchmark datasets and backed up by a statistical analysis shows the usefulness of the proposed method, especially when the number of class labels is high.
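The abstract does not give the weighting formula, so the following is only an illustrative sketch of what an untrained, Gaussian-weighted combination of support functions with pruning could look like. Everything specific here is an assumption and not taken from the paper: the Gaussian is centred on the mean support the ensemble gives to a class, `sigma` controls its width, and a weight below the hypothetical threshold `prune_below` is set to zero, which removes that classifier for that class.

```python
import math


def gaussian_weights(supports, sigma=0.2, prune_below=0.05):
    """Assign one weight per (classifier, class) pair without any training.

    supports[k][c] is the support classifier k gives to class c.
    ASSUMPTION: the Gaussian is centred on the mean support for class c;
    the paper's actual centring and width are not stated in the abstract.
    """
    n_classifiers = len(supports)
    n_classes = len(supports[0])
    weights = [[0.0] * n_classes for _ in range(n_classifiers)]
    for c in range(n_classes):
        column = [supports[k][c] for k in range(n_classifiers)]
        center = sum(column) / n_classifiers
        for k in range(n_classifiers):
            w = math.exp(-((column[k] - center) ** 2) / (2 * sigma ** 2))
            # Pruning step (assumed rule): drop weights that are too small.
            weights[k][c] = w if w >= prune_below else 0.0
    return weights


def combine(supports, weights):
    """Weighted average of the supports for each class."""
    n_classes = len(supports[0])
    combined = []
    for c in range(n_classes):
        total_w = sum(w[c] for w in weights)
        weighted = sum(w[c] * s[c] for w, s in zip(weights, supports))
        # If every classifier was pruned for this class, fall back to 0.
        combined.append(weighted / total_w if total_w > 0 else 0.0)
    return combined


def predict(supports, sigma=0.2, prune_below=0.05):
    """Return the index of the class with the highest combined support."""
    weights = gaussian_weights(supports, sigma, prune_below)
    combined = combine(supports, weights)
    return max(range(len(combined)), key=combined.__getitem__)


if __name__ == "__main__":
    # Three classifiers, three classes; the third classifier disagrees.
    example = [
        [0.7, 0.2, 0.1],
        [0.6, 0.3, 0.1],
        [0.1, 0.1, 0.8],
    ]
    print(predict(example))
```

Because the weights are computed from the supports themselves, no separate training set is needed, which is the property the abstract emphasises. Raising `prune_below` prunes more aggressively; how the authors actually set the pruning criterion is described in the full chapter, not here.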

© 2014 Springer International Publishing Switzerland

About this paper

Cite this paper

Krawczyk, B., Woźniak, M. (2014). Untrained Method for Ensemble Pruning and Weighted Combination. In: Zeng, Z., Li, Y., King, I. (eds) Advances in Neural Networks – ISNN 2014. ISNN 2014. Lecture Notes in Computer Science(), vol 8866. Springer, Cham. https://doi.org/10.1007/978-3-319-12436-0_40

DOI: https://doi.org/10.1007/978-3-319-12436-0_40

Publisher Name: Springer, Cham

Print ISBN: 978-3-319-12435-3

Online ISBN: 978-3-319-12436-0

eBook Packages: Computer Science, Computer Science (R0)