Abstract

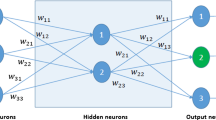

When feedforward neural networks of multi-layer perceptron (MLP) type are used as black-box models of complex processes, a common problem is how to select relevant inputs from a large set of potential variables that affect the outputs to be modeled. If, furthermore, the observations of the input-output tuples are scarce, the degrees of freedom may not allow for the use of a fully connected layer between the inputs and the hidden nodes. This paper presents a systematic method for selection of both input variables and a constrained connectivity of the lower-layer weights in MLPs. The method, which can also be used as a means to provide initial guesses for the weights prior to the final training phase of the MLPs, is illustrated on a class of test problems.

Copyright information

© 2005 Springer-Verlag/Wien

About this paper

Cite this paper

Saxén, H., Pettersson, F. (2005). A simple method for selection of inputs and structure of feedforward neural networks. In: Ribeiro, B., Albrecht, R.F., Dobnikar, A., Pearson, D.W., Steele, N.C. (eds) Adaptive and Natural Computing Algorithms. Springer, Vienna. https://doi.org/10.1007/3-211-27389-1_3

DOI: https://doi.org/10.1007/3-211-27389-1_3

Publisher Name: Springer, Vienna

Print ISBN: 978-3-211-24934-5

Online ISBN: 978-3-211-27389-0

eBook Packages: Computer Science, Computer Science (R0)