Abstract

A simple step-stress accelerated life testing plan with two stress variables is considered, where the failure times at each stress level follow the lognormal distribution. The lognormal distribution is commonly used to model data that arise in several fields of engineering, for example, various types of lifetime data or coefficients of wear and friction. The problem of choosing the optimal times to change the stress levels is investigated under two criteria: minimizing the asymptotic variance of the reliability estimate and maximizing the determinant of the Fisher information matrix. We obtain the optimal bivariate step-stress accelerated life test under both criteria. Because the problem is nonlinear and complex, a particle swarm optimization algorithm is used to calculate the optimal hold times; this method searches quickly and has good optimization ability. A sensitivity analysis is performed to illustrate the effect of the initial estimates on the optimal values. Finally, numerical studies illustrate the proposed criteria. Simulation results show that the proposed optimum plan is robust.


1 Introduction

When the units being tested are of high reliability, some traditional life tests result in no or very few failures by the end of the test. In such cases, one approach is to do life testing at higher than the usual use conditions to obtain failures quickly. The data collected under stresses are used to estimate the life distribution at normal use conditions (Ismail and Sarhan 2009). Tests that are done in this way are called accelerated life tests (ALTs). In accelerated life tests, the engineer is interested in predicting the life of the product (or more specifically, life characteristics, such as MTTF, etc.) at normal use conditions, from the data obtained in an accelerated life test. By analyzing the product’s response to such tests, engineers can make predictions about the service life and maintenance intervals of a product.

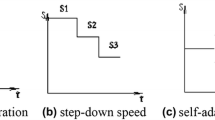

Step-stress accelerated life testing (SSALT) is an advanced case of ALT. In SSALT, the stress levels of the experiment are increased during the test period at some pre-specified times. If only one change of the stress level is done, it is called a simple SSALT. The step-stress procedure was first introduced, with the cumulative exposure model, by Nelson (1980).

In this paper, the lognormal distribution is considered for the lifetime of this product. This distribution is especially important in reliability. The lognormal life distribution is a very flexible model that can empirically fit many types of failure data. The lognormal distribution is important in the description of natural phenomena. As may be surmised from the name, the lognormal distribution has certain similarities to the normal distribution. A random variable is lognormally distributed, if the logarithm of the random variable is normally distributed. Because of this, there are many mathematical similarities between the two distributions. For example, the mathematical reasoning for the construction of the probability plotting scales and the bias of parameter estimators is very similar for these two distributions. The lognormal distribution is commonly used to model the lives of units, whose failure modes are of a fatigue–stress nature.

Recently, the problem of optimal scheduling of the step-stress test has attracted great attention in the reliability literature. In this problem, the times at which the stress levels are raised are optimized. The main objective of this paper is to optimize such a test.

Miller and Nelson (1983) provided the optimum simple step-stress plans for accelerated life testing, where products are assumed to have exponentially distributed lifetimes. Khamis and Higgins (1996, 1998) obtained the stress change time that minimizes the asymptotic variance of the maximum likelihood estimate of the log mean life at the design condition. Alhadeed and Yang (2002) discussed the optimal simple step-stress plan for the Khamis–Higgins model.

In those studies, only one accelerating stress variable is used. In many applications, it is desirable to use more than one accelerating stress variable. For example, an ALT of capacitors could include two accelerating variables, such as temperature and voltage. Since using more than one stress variable yields more failure data and a better understanding of the simultaneous effects of the stress variables, this paper presents an SSALT model with two stress variables. We assume that each stress variable has two stress levels. In the test, the first stress variable is increased at time \(\tau _1\) and the second variable is increased at time \(\tau _2\). So, in this scheme, there are two different stress change times that need to be optimized.

Li and Fard (2007) studied the SSALT for two stress variables with Weibull failure times under Type-I censoring. Ling et al. (2011) presented an SSALT for two stress variables to obtain optimal hold times under a Type-I hybrid censoring scheme.

In this paper, the optimal bivariate SSALT is considered. The choice of an optimality criterion is not straightforward in this case. Several criteria have been studied for this purpose, and each has different properties. We use two different optimality criteria. The first criterion minimizes the asymptotic variance (AV) of the reliability estimate at time \(\xi\) under usual operating conditions, and the second criterion maximizes the determinant of the Fisher information matrix.

As mentioned, the two times must be optimized. For the optimization, we use the particle swarm optimization (PSO) method. PSO is a simple algorithm that is effective for optimizing a wide range of functions. We believe that two key aspects explain why PSO has become so popular:

1. The main algorithm of PSO is relatively simple.

2. PSO has been found to be very effective in a wide variety of applications, being able to produce very good results at a very low computational cost (Engelbrecht 2005; Kennedy et al. 2001).

The paper is organized as follows. Section 2 describes the test method and the assumptions. The maximum likelihood estimators (MLEs) are given in Sect. 3. The problem of choosing the optimal hold times using the two criteria is addressed in Sect. 4. The Fisher information matrix is given in Sect. 5. Finally, the simulation study and the sensitivity analysis are given in Sect. 6.

2 Model and Assumption

We consider the SSALT with two stress variables, where each stress variable has two test levels. Let \(S_{lk}\) be the kth stress level of variable l, where \(l = 1, 2\) and \(k = 0, 1, 2\). The levels \(S_{10}, S_{20}\) are the stress levels at typical operating conditions.

Suppose we have n independent and identically distributed items that are initially put on test at the first step with stress levels \((S_{11},S_{21})\). The first stress variable is increased from \(S_{11}\) to \(S_{12}\) at time \(\tau _1\). The test is continued until time \(\tau _{2}\), when the second stress variable is increased from \(S_{21}\) to \(S_{22}\). The test then continues until all the units fail. Let \(n_i\) be the number of failures in step i, observed at times \(t_{ij}\), where \(j=1,2,\ldots ,n_i\) and \(i=1,2,3\). Here, \(\tau _1\) and \(\tau _2\) are the times of increasing the first and second stress variables, respectively. To optimize the described test, \(\tau _1\) and \(\tau _2\) should be selected appropriately. The optimization of the test plan is studied in Sect. 4.

The basic assumptions are:

1. Under any constant stress, the lifetime of a test unit follows a base-e lognormal distribution with cumulative distribution function (CDF)

$$F(t)=\Phi \left( \frac{\log (t)-u}{\sigma }\right) ,\quad t\ge 0$$

where u and \(\sigma\) are, respectively, the mean and standard deviation of the distribution of the log life time of the unit under life testing and \(\Phi (.)\) is standard normal CDF.

2. The mean of log life time u is a linear function of the stress level. Thus, we proposed the following life-stress relationship:

$$\begin{aligned} \text{Step }1{:} \log \left( u_1\right)&= \beta _0 + \beta _1 S_{11} +\beta _2 S_{21}, \\ \text{Step }2{:} \log \left( u_2\right)&= \beta _0 + \beta _1 S_{12} +\beta _2 S_{21}, \\ \text{Step }3{:} \log \left( u_3\right)&= \beta _0 + \beta _1 S_{12} +\beta _2 S_{22}, \end{aligned}\tag{1}$$

where \(\beta _0\), \(\beta _1\), and \(\beta _2\) are the unknown parameters depending on the nature of the product, and the method of test.

3. A cumulative exposure model holds, i.e., the remaining life of a test product depends only on the cumulative exposure (CE) it has seen (Khamis and Higgins 1998).

4. The constant \(\sigma\) does not depend on the stress level.

The CDF of test units under bivariate SSALT and CE model is:
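Under the CE assumption, each step starts from an exposure-equivalent age chosen so that the CDF is continuous at the change times. A reconstruction consistent with the terms \(A_j\) and \(B_j\) of Sect. 3, and presumably the expression cited later as Eq. (2), is sketched here; it treats \(u_1\), \(u_2\), \(u_3\) as the log-life means of the three steps:

$$F(t)=\begin{cases} \Phi \left( \dfrac{\log t-u_1}{\sigma }\right) , & 0\le t<\tau _1,\\ \Phi \left( \dfrac{\log \left( t-\tau _1+\tau _1 {\mathrm{e}}^{u_2-u_1}\right) -u_2}{\sigma }\right) , & \tau _1\le t<\tau _2,\\ \Phi \left( \dfrac{\log \left( t-\tau _2+C\, {\mathrm{e}}^{u_3-u_2}\right) -u_3}{\sigma }\right) , & t\ge \tau _2, \end{cases}$$

with \(C=\tau _2-\tau _1+\tau _1 {\mathrm{e}}^{u_2-u_1}\), as in Appendix 1.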

The corresponding PDF is

Let \(Y=\log T\); the probability density function of Y under the CE model becomes
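The CE-model distribution of the lifetime T can be evaluated numerically. The following is a minimal sketch, assuming the standard cumulative exposure construction whose shifted ages match \(A_j\) and \(B_j\) in Sect. 3; the function names (`equivalent_age`, `ce_cdf`, `ce_pdf`) are illustrative and not from the paper, and the parameter values are those of the numerical example in Sect. 6.

```python
import math


def std_normal_cdf(z):
    """Standard normal CDF via the error function."""
    return 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))


def std_normal_pdf(z):
    """Standard normal density."""
    return math.exp(-0.5 * z * z) / math.sqrt(2.0 * math.pi)


def equivalent_age(t, tau1, tau2, u1, u2, u3):
    """Return (exposure-adjusted age, log-life mean) for the step active at t."""
    if t < tau1:
        return t, u1
    s1 = tau1 * math.exp(u2 - u1)  # age at step 2 equivalent to tau1 at step 1
    if t < tau2:
        return t - tau1 + s1, u2
    s2 = (tau2 - tau1 + s1) * math.exp(u3 - u2)  # equivalent age at step 3
    return t - tau2 + s2, u3


def ce_cdf(t, tau1, tau2, u1, u2, u3, sigma):
    """CDF of the lifetime under the bivariate step-stress CE model."""
    if t <= 0.0:
        return 0.0
    age, u = equivalent_age(t, tau1, tau2, u1, u2, u3)
    return std_normal_cdf((math.log(age) - u) / sigma)


def ce_pdf(t, tau1, tau2, u1, u2, u3, sigma):
    """Density of the lifetime (derivative of ce_cdf with respect to t)."""
    if t <= 0.0:
        return 0.0
    age, u = equivalent_age(t, tau1, tau2, u1, u2, u3)
    return std_normal_pdf((math.log(age) - u) / sigma) / (sigma * age)


# Values taken from the numerical example in Sect. 6 (Criterion I hold times).
params = (1.1470, 2.3251, 0.0975, 0.1115, 0.1415, 1.0)
for t in (0.5, 1.5, 3.0):
    print(t, ce_cdf(t, *params), ce_pdf(t, *params))
```

The equivalent ages are what make the CDF continuous at \(\tau _1\) and \(\tau _2\), which is the defining property of the CE model.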

3 The Likelihood Function and Fisher Information Matrix

The log likelihood function for complete data from the log of the observed lifetimes is,

where \(A_{j}={\mathrm{e}}^{y_{2j}}-\tau _1+\tau _1 {\mathrm{e}}^{u_2-u_1}\) and \(B_{j}={\mathrm{e}}^{y_{3j}}-\tau _2+(\tau _2-\tau _1 +\tau _1 {\mathrm{e}}^{u_2-u_1}) {\mathrm{e}}^{u_3-u_2}\). In addition, \(y_{1j}\), \(y_{2j}\) and \(y_{3j}\) are the logarithm of the failure times in three steps of the test.

With the placement of (1) in the above equation, the log likelihood function can be written as,

On maximizing the above function, the MLEs of \(\beta _0\), \(\beta _1\), \(\beta _2\) and \(\sigma\) can be obtained. With \(\hat{\beta }_0\), \(\hat{\beta }_1\), \(\hat{\beta }_2\) and \(\hat{\sigma }\) and by the invariance property, we can obtain \(\hat{u}_1\), \(\hat{u}_2\) and \(\hat{u}_3\). Setting the first-order partial derivatives of the log likelihood function with respect to \(\beta _0\), \(\beta _1\), \(\beta _2\) and \(\sigma\) to zero gives the likelihood equations,

Because it is difficult to obtain a closed-form solution to the nonlinear Eqs. (4)–(7), a numerical method is used to solve these equations.

4 Optimization Criteria

We can obtain the preliminary estimates of the parameters \(\beta _0\), \(\beta _1\), \(\beta _2\) and \(\sigma\) from the previous experiments of similar products, or from some target mean time to failure (MTTF) values or from a small sample experiment. These estimates are then used to obtain the optimal test design.

In this section, we consider the problem of optimal designing using two criteria.

4.1 Criterion I

The first criterion (Criterion I) is based on minimizing the asymptotic variance of the MLE of the log of the mean life or of some percentile life at a specified stress level. Since the MTTF is related to the reliability function through \(\text{MTTF}=\int _{0}^{\infty } R(t) {\mathrm{d}}t\), the optimization criterion can also be defined as a function of reliability. Reliability prediction is an important factor in product design and during the developmental testing process. To estimate the product reliability accurately, the test design criterion is defined to minimize the AV of the reliability estimate at time \(\xi\) under normal operating conditions.

Let \(x_i=(S_{i1}-S_{i0})/(S_{i2}-S_{i0})\), \(i=1,2\); then \(S_{i0}=(S_{i1}-x_i S_{i2})/(1-x_i)\), \(i=1,2\). As previously mentioned, \(S_{10}, S_{20}\) are the stress levels at typical operating conditions. Similarly, \(u_0\) is the mean of the log lifetime at typical operating conditions. From assumption 2, we can obtain \(\log (u_0)\) as follows:

Thus, the reliability under typical operating conditions at predetermined time \(\xi\) is

With (8) substituted into (9), the MLE of \(R_{(S_{10},S_{20})}(\xi )\) is
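The extrapolation to use conditions can be checked numerically. The sketch below assumes that the log-life mean is itself linear in the stresses, so that \(u_0\) is the combination of \(u_1\), \(u_2\), \(u_3\) that appears inside the term A of this section; the stress levels used in the check are hypothetical, since the paper does not list them, and the function names are illustrative.

```python
import math


def std_normal_cdf(z):
    """Standard normal CDF via the error function."""
    return 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))


def log_mean_at_use(u1, u2, u3, x1, x2):
    """Extrapolate the step log-life means to use conditions (the u_0 inside A)."""
    return (u1 / (1.0 - x1)
            + (x2 - x1) / ((1.0 - x1) * (1.0 - x2)) * u2
            - x2 / (1.0 - x2) * u3)


def reliability_at_use(xi, u0, sigma):
    """R(xi) = 1 - Phi((log xi - u0) / sigma) for a lognormal lifetime."""
    return 1.0 - std_normal_cdf((math.log(xi) - u0) / sigma)


# Hypothetical stress levels (not from the paper); betas as in Sect. 6.
b0, b1, b2 = 0.025, 0.05, 0.075
s10, s11, s12 = 0.5, 1.0, 2.0
s20, s21, s22 = 0.2, 0.6, 1.0

u1 = b0 + b1 * s11 + b2 * s21
u2 = b0 + b1 * s12 + b2 * s21
u3 = b0 + b1 * s12 + b2 * s22
x1 = (s11 - s10) / (s12 - s10)
x2 = (s21 - s20) / (s22 - s20)

u0_extrapolated = log_mean_at_use(u1, u2, u3, x1, x2)
u0_direct = b0 + b1 * s10 + b2 * s20  # the two values should agree
print(u0_extrapolated, u0_direct, reliability_at_use(9.0, u0_extrapolated, 1.0))
```

Agreement between `u0_extrapolated` and `u0_direct` confirms that the coefficients of \(\hat{u}_1\), \(\hat{u}_2\), \(\hat{u}_3\) in A are those of a linear extrapolation back to \((S_{10},S_{20})\).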

The AV of the reliability estimate at a predetermined time \(\xi\) under typical operating conditions can be obtained as follows, using the delta theorem:

where F is the Fisher information matrix, and H is the row vector of the first derivatives of \(\widehat{R}_{(S_{10},S_{20})}(\xi )\) with respect to \(\hat{\beta }_0\), \(\hat{\beta }_1\), \(\hat{\beta }_2\) and \(\hat{\sigma }\), i.e.,

where

where \(A=\frac{1}{\hat{\sigma }}(\log \xi -\frac{1}{1-x_1} \hat{u}_1+\frac{x_2-x_1}{(1-x_1)(1-x_2)}\hat{u}_2-\frac{x_2}{1-x_2}\hat{u}_3)\) and \(\phi (.)\) is the standard normal probability density function.

The values \(\tau _1^{*}\) and \(\tau _2^{*}\) that minimize \(\text{AV} [\widehat{R}_{S_{10},S_{20}}(\xi )]\), given by Eq. (10), give the optimal SSALT plan.
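The delta-method computation behind Criterion I can be sketched as the quadratic form \(H F^{-1} H^{T}\). The code below is an illustration under stated assumptions: the gradient is derived from \(R=1-\Phi (A)\) with \(u_0=\beta _0+\beta _1 S_{10}+\beta _2 S_{20}\), and the Fisher matrix passed in is a hypothetical placeholder, not the matrix derived in Sect. 5; all function names are illustrative.

```python
import math


def std_normal_pdf(z):
    """Standard normal density."""
    return math.exp(-0.5 * z * z) / math.sqrt(2.0 * math.pi)


def solve_linear(matrix, rhs):
    """Solve matrix * x = rhs by Gaussian elimination with partial pivoting."""
    n = len(rhs)
    aug = [list(matrix[i]) + [rhs[i]] for i in range(n)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(aug[r][col]))
        if abs(aug[pivot][col]) < 1e-14:
            raise ValueError("matrix is singular")
        aug[col], aug[pivot] = aug[pivot], aug[col]
        for r in range(col + 1, n):
            factor = aug[r][col] / aug[col][col]
            for c in range(col, n + 1):
                aug[r][c] -= factor * aug[col][c]
    x = [0.0] * n
    for i in range(n - 1, -1, -1):
        tail = sum(aug[i][j] * x[j] for j in range(i + 1, n))
        x[i] = (aug[i][n] - tail) / aug[i][i]
    return x


def reliability_gradient(xi, beta, sigma, s10, s20):
    """Gradient of R(xi) = 1 - Phi(A) w.r.t. (beta0, beta1, beta2, sigma)."""
    b0, b1, b2 = beta
    u0 = b0 + b1 * s10 + b2 * s20
    a = (math.log(xi) - u0) / sigma
    scale = std_normal_pdf(a) / sigma
    return [scale, scale * s10, scale * s20, scale * a]


def asymptotic_variance(h, fisher):
    """Delta-method variance H F^{-1} H^T for a gradient h."""
    w = solve_linear(fisher, h)  # w = F^{-1} H^T
    return sum(hi * wi for hi, wi in zip(h, w))


# Hypothetical use-level stresses and a placeholder Fisher matrix.
h = reliability_gradient(9.0, (0.025, 0.05, 0.075), 1.0, 0.5, 0.2)
fisher = [[40.0, 10.0, 5.0, 0.0],
          [10.0, 30.0, 4.0, 0.0],
          [5.0, 4.0, 20.0, 0.0],
          [0.0, 0.0, 0.0, 80.0]]
print(asymptotic_variance(h, fisher))
```

In an actual design search, `fisher` would be recomputed for each candidate \((\tau _1,\tau _2)\), and the pair giving the smallest variance would be retained.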

4.2 Criterion II

Another approach (Criterion II) is based on maximizing the determinant of Fisher information matrix. Maximizing this determinant is equivalent to minimizing the generalized asymptotic variance (GAV) of the MLE of the model parameters at normal use condition. The GAV is the reciprocal of the determinant of Fisher information matrix; see Bai et al. (1993). That is,
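Written out from the verbal definition just given (a restatement of that sentence, not a new result), the relationship is:

$$\text{GAV}\left( \hat{\beta }_0,\hat{\beta }_1,\hat{\beta }_2,\hat{\sigma }\right) =\left| F^{-1}\right| =\frac{1}{|F|},$$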

where F is the Fisher information matrix, which is obtained in the next section.

Therefore, the optimal hold times are chosen so that |F| is maximized and hence the GAV is minimized. It can be shown that maximizing the determinant of the Fisher information matrix is the same as minimizing the determinant of the asymptotic covariance matrix. Therefore, the optimal SSALT plan that maximizes the determinant of the Fisher information matrix will provide the smallest standard error and it is called a D-optimal plan. Moreover, the determinant of the Fisher information matrix is proportional to the reciprocal of the volume of the asymptotic joint confidence region for the parameters, so that maximizing the determinant is equivalent to minimizing the volume of the confidence region (Arefi and Razmkhah 2013).

The values \(\tau _1^{+}\) and \(\tau _2^{+}\) maximize the determinant of the Fisher information matrix.

5 Fisher Information Matrix

To optimize the test plan, the Fisher information matrix F must be obtained first. The Fisher information matrix plays a key role in parameter estimation. It is a measure of the information content of the data relative to the parameters being estimated. Its elements are obtained by taking the expectation of the negative second partial and mixed partial derivatives of \(\ell (\beta _0, \beta _1, \beta _2, \sigma )\) with respect to the parameters \(\beta _0\), \(\beta _1\), \(\beta _2\) and \(\sigma\). Then, the Fisher information matrix F is obtained as follows:

Details of the calculations are presented in Appendices 1 and 2. Therefore, the elements of F are given as,

where the detailed calculation for \(J_1\) to \(J_{24}\), and \(e_1\), \(e_2\) and \(e_3\) in the formulas above are in Appendices 1 and 2, respectively.

This work focuses on the lognormal distribution for the lifetime of the items. The study can be generalized to a larger family of distributions. For example, Hong et al. (2010) proposed an approach for computing the approximate variance of MLEs of quantiles of the log-location-scale family of distributions. They examined the results for the Weibull and lognormal distributions.

The most widely applied statistical distributions are either members of the log-location-scale family of distributions or closely related to this class (e.g., lognormal and loglogistic). To generalize our method, assume that at any step of the test, the failure time follows a log-location-scale distribution with the following CDF and PDF,

and

where \(\psi\), \(\varphi\), \(\mu\) and \(\sigma\) are CDF, PDF, the location and the scale parameters of the location-scale family of the distributions, respectively.
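In the usual log-location-scale notation, and assuming the symbols are used exactly as just defined, these two functions take the form:

$$F(t)=\psi \left( \frac{\log t-\mu }{\sigma }\right) ,\qquad f(t)=\frac{1}{\sigma t}\,\varphi \left( \frac{\log t-\mu }{\sigma }\right) ,\quad t>0.$$

The lognormal case of Sect. 2 corresponds to \(\psi =\Phi\), \(\varphi =\phi\) and \(\mu =u\).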

Under the assumptions considered in Sect. 2, the CDF of test units under bivariate SSALT and CE model is:

The corresponding PDF under bivariate SSALT and CE model is:

In this paper, all of the analyses are performed using the PDF. So, we can use the above functions for the analysis of the model and the optimization of the test plan.

6 Simulation Study

In this section, we first present a simulation study to illustrate the proposed procedure in obtaining the optimal plan. Then, we perform a sensitivity analysis.

Because the objective function under both criteria is nonlinear and complex, PSO is an appropriate optimization method and can be employed to find a near-optimal solution. PSO is originally attributed to Eberhart and Kennedy (1995) and Shi and Eberhart (1998) and was first intended for simulating social behavior, as a stylized representation of the movement of organisms in a bird flock or fish school. The algorithm was later simplified, and it was observed to perform optimization.

PSO has been found to be successful in a wide variety of optimization tasks (Kennedy et al. 2001). The mechanism used to search the solution space in the PSO differs from the evolutionary computations. The simplicity and the applicability of the PSO method have added to the popularity of this method for solving a large number of engineering and management optimization problems (Khalili-Damghani et al. 2013).

Compared with other methods, the advantages of PSO are that it has good optimization ability, is easy to implement, and searches quickly.

In Appendix 3, the PSO algorithm is described.

Numerical examples are given for calculating the optimal hold times of the bivariate SSALT under both criteria. We propose a simple bivariate SSALT for lognormal data. For the given values of \(n=40\), \(u_1=0.0975\), \(u_2=0.1115\), \(u_3=0.1415\) and \(\sigma =1\), the parameters \(\beta _i\) in assumption 2, for \(i = 0, 1, 2\), are obtained as \(\beta _0=0.025\), \(\beta _1=0.05\) and \(\beta _2=0.075\). We now determine the optimal hold times using the PSO algorithm under both criteria. Using criterion I and assuming \(\xi =9\), the optimal hold times are obtained as \(\tau _1^*=1.1470\) and \(\tau _2^*=2.3251\). Using criterion II, the optimal hold times are obtained as \(\tau _1^+=1.8140\) and \(\tau _2^+=2.1988\).
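A data set of the kind such a plan would produce can be simulated by inverse-transform sampling. The sketch below assumes the CE-model CDF with the shifted ages that appear in \(A_j\) and \(B_j\) of Sect. 3; the function name `simulate_step_stress` and the seed are illustrative choices, and the parameter values are those quoted above for Criterion I.

```python
import math
import random


def simulate_step_stress(n, tau1, tau2, u1, u2, u3, sigma, seed=0):
    """Draw n failure times under the CE model and group them by test step."""
    rng = random.Random(seed)
    s1 = tau1 * math.exp(u2 - u1)                # equivalent age at start of step 2
    s2 = (tau2 - tau1 + s1) * math.exp(u3 - u2)  # equivalent age at start of step 3
    steps = {1: [], 2: [], 3: []}
    for _ in range(n):
        z = rng.gauss(0.0, 1.0)                  # standard normal score of the unit
        t, step = math.exp(u1 + sigma * z), 1
        if t >= tau1:                            # unit survives step 1
            t, step = tau1 - s1 + math.exp(u2 + sigma * z), 2
            if t >= tau2:                        # unit survives step 2
                t, step = tau2 - s2 + math.exp(u3 + sigma * z), 3
        steps[step].append(t)
    return {k: sorted(v) for k, v in steps.items()}


data = simulate_step_stress(40, 1.1470, 2.3251, 0.0975, 0.1115, 0.1415, 1.0)
for step, times in data.items():
    print("step", step, "failures:", len(times))
```

Each unit keeps the same normal score z across steps, which is what the CE model implies: only the time scale changes when the stress is raised.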

6.1 Sensitivity Analysis

To examine the effect of changes in the initial parameters \(\beta _0\), \(\beta _1\), \(\beta _2\) and \(\sigma\) on the optimal values, a sensitivity analysis is performed. Its objective is to identify the sensitive parameters, which need to be estimated with special care to minimize the risk of obtaining an erroneous optimal solution. To this end, the optimal values are derived when one of the parameters \(\beta _0\), \(\beta _1\), \(\beta _2\) and \(\sigma\) changes and the others are held fixed.

Tables 1, 2, 3 and 4 present the sensitivity analysis for different values of the parameters \(\beta _0\), \(\beta _1\), \(\beta _2\) and \(\sigma\), respectively, with respect to criterion I. From these tables, we can see that as these parameters increase, the optimal stress change times increase very slightly.

Tables 5, 6, 7 and 8 present the optimal hold times for the specified values of the parameters \(\beta _0\), \(\beta _1\), \(\beta _2\) and \(\sigma\), respectively, with respect to criterion II. These tables also show that, as the parameters increase, the optimal stress change times increase very slightly. In addition, under both criteria, these parameters have a very small effect on the hold times. According to these two criteria, the optimal hold times are not too sensitive to the initial parameter values.

7 Conclusion

In this paper, a simple SSALT plan with two stress variables was considered for products whose failure times at each stress level are lognormally distributed. To find the optimal stress change times, two different criteria were considered. The first criterion determined the optimal hold times so that the AV of the reliability estimate under normal operating conditions was minimized. The second criterion was based on maximizing the determinant of the Fisher information matrix. Since each criterion serves a different purpose, the two criteria can give quite different results in some situations. It was seen that criterion II was less influenced by changes in the initial parameters than criterion I. The PSO algorithm was used for the optimization. Furthermore, the simulation studies show that the optimal hold times are not too sensitive to the model parameters, so we anticipate that the proposed design is robust.

References

Alhadeed AA, Yang SS (2002) Optimal simple step-stress plan for Khamis–Higgins model. IEEE Trans Reliab 51(2):212–215

Arefi A, Razmkhah M (2013) Optimal simple step stress plan for type-I censored data from geometric distribution. J Iran Stat Soc 12(2):193–210

Bai DS, Chung SW, Chun YR (1993) Optimal design of partially accelerated life tests for the Lognormal distribution under type-I censoring. Reliab Eng Syst Saf 40:85–92

Eberhart R, Kennedy J (1995) Particle swarm optimization. In: Proceedings of the 1995 IEEE international conference on neural networks, pp 1942–1948

Engelbrecht AP (2005) Fundamentals of computational swarm intelligence. Wiley, New York

Hong Y, Ma H, Meeker WQ (2010) A tool for evaluating time-varying-stress accelerated life test plans with log-location-scale distributions. IEEE Trans Reliab 59:620–627

Ismail AA, Sarhan AM (2009) Optimal design of step-stress life test with progressively type-II censored exponential data. Int Math Forum 4(40):1963–1976

Kennedy JF, Kennedy J, Eberhart RC (2001) Swarm intelligence. Morgan Kaufmann, San Francisco

Khalili-Damghani K, Abtahi AR, Tavana M (2013) A new multi-objective particle swarm optimization method for solving reliability redundancy allocation problems. Reliab Eng Syst Saf 111:58–75

Khamis IH, Higgins JJ (1996) An alternative to the Weibull cumulative exposure model. In: 1996 Proceeding section on quality and productivity. American Statistical Association, Chicago

Khamis IH, Higgins JJ (1998) A new model for step-stress testing. IEEE Trans Reliab 47:131–134

Li C, Fard N (2007) Optimum bivariate step-stress accelerated life test for censored data. IEEE Trans Reliab 56(1):77–84

Ling L, Xu W, Li M (2011) Optimal bivariate step-stress accelerated life test for type-I hybrid censored data. J Stat Comput Simul 81(9):1175–1186

Miller R, Nelson WB (1983) Optimum simple step-stress plans for accelerated life testing. IEEE Trans Reliab 32:59–65

Mu AQ, Cao DX, Wang XH (2009) A modified particle swarm optimization algorithm. Nat Sci 1(2):151–155

Nelson WB (1980) Accelerated life testing-step-stress models and data analyses. IEEE Trans Reliab 29:103–108

Shi Y, Eberhart R (1998) A modified particle swarm optimizer. In: Evolutionary computation proceedings, 1998. IEEE World Congress on computational intelligence. The 1998 IEEE international conference, pp 69–73

Acknowledgements

The author would like to thank the Editor, the Associate Editor and the two referees for carefully reading the paper and for their constructive comments which greatly improved the paper.


Appendices

Appendix 1

The Fisher information matrix can be obtained by taking the expected values of the negative second partial, and mixed partial derivatives with respect to \(\beta _0\), \(\beta _1\), \(\beta _2\) and \(\sigma\). The results of these derivatives are given in the following which are used to develop the Fisher information matrix:

where \(C=\tau _2-\tau _1+\tau _1 {\mathrm{e}}^{u_2-u_1}\).

The results of the above equations are then used to develop the Fisher information matrix. To simplify the second partial and mixed partial derivatives, the following definitions are made:

Appendix 2

The calculation details of \(e_i=E\left[ \frac{n_i}{n} \right]\), \(i=1,2,3\) are demonstrated through the following three steps:

At the first step, n new products are tested at stress levels \((S_{11}, S_{21})\) until the time \(\tau _1\), where the test units are assumed independent and identically distributed. The life of items follows the CDF of t in Eq. (2). The number of failures \(n_1\) in the time \(\tau _1\) is a binomial random variable with parameters n and \(p_1\). From the Eq. (2), we have:

The second step starts with \(n-n_1\) unfailed items, tested at stress levels \((S_{12}, S_{21})\) until the time \(\tau _2\). The life of items follows the CDF of t given by the Eq. (2), where the number of failures \(n_2\) follows a binomial distribution with parameters \(n-n_1\) and \(p_2\). Then, from the Eq. (2), we have:

For the third step, the \(n-n_1- n_2\) i.i.d. surviving items are placed at stresses \((S_{12}, S_{22})\) until all the units fail. The lifetime of any item follows the CDF in Eq. (2). Since \(n_3\) is the number of failures in the final stage and \(n_3=n-n_1-n_2\), its expected value is obtained from the following equation:
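The three expectations can be evaluated directly from the CE-model CDF. The sketch below assumes that \(p_1=F(\tau _1)\) and that \(p_2\) is the conditional probability of failing in \((\tau _1,\tau _2]\) given survival past \(\tau _1\), so that \(e_1=F(\tau _1)\), \(e_2=F(\tau _2)-F(\tau _1)\) and \(e_3=1-F(\tau _2)\); the function name is illustrative and the inputs are the Sect. 6 values.

```python
import math


def std_normal_cdf(z):
    """Standard normal CDF via the error function."""
    return 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))


def expected_failure_fractions(tau1, tau2, u1, u2, sigma):
    """Return (e1, e2, e3), the expected fractions of units failing in each step.

    u3 is not needed: every unit that survives tau2 fails in step 3.
    """
    f_tau1 = std_normal_cdf((math.log(tau1) - u1) / sigma)
    age2 = tau2 - tau1 + tau1 * math.exp(u2 - u1)  # exposure-adjusted age at tau2
    f_tau2 = std_normal_cdf((math.log(age2) - u2) / sigma)
    return f_tau1, f_tau2 - f_tau1, 1.0 - f_tau2


e1, e2, e3 = expected_failure_fractions(1.1470, 2.3251, 0.0975, 0.1115, 1.0)
print(e1, e2, e3, 40 * e1, 40 * e2, 40 * e3)
```

Multiplying each fraction by n = 40 gives the expected number of failures per step for the example plan.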

Appendix 3

The PSO algorithm is an intelligent optimization algorithm that imitates the behavior of bird swarms. Compared with other optimization algorithms, PSO is easy to implement and has been applied in many fields.

In the PSO algorithm, each individual is called a “particle” and represents a potential solution. The algorithm reaches the best solution through the movement of the particles in the search space. The particles search the solution space by following the best particle, repeatedly updating their positions and fitness values; the flying direction and velocity are determined by the objective function (Mu et al. 2009).

To improve the convergence performance of PSO, the inertia factor w is used to control the influence of a particle's previous velocity on its current velocity. The PSO algorithm has better global search ability when w is relatively large; conversely, its local search ability improves when w is smaller. We now describe the PSO algorithm.

Assume that \(X_i=(x_{i1},x_{i2},\ldots ,x_{iD})\) is the position of the ith particle in a D-dimensional space and \(V_i=(v_{i1},v_{i2},\ldots ,v_{iD})\) is its velocity, which represents its search direction. During the iterations, each particle keeps the best position pbest it has found itself, also knows the best position gbest found by the whole swarm, and changes its velocity according to these two best positions. The standard formula of PSO is as follows:
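In the inertia-weight form of Shi and Eberhart (1998), which is assumed here to be the intended one because it uses the symbols \(w\), \(c_1\), \(c_2\), \(r_1\), \(r_2\), \(p_{id}\) and \(p_{gd}\) of this appendix, the velocity and position updates read:

$$\begin{aligned} v_{id}^{k+1}&= w\,v_{id}^{k}+c_1 r_1\left( p_{id}-x_{id}^{k}\right) +c_2 r_2\left( p_{gd}-x_{id}^{k}\right) ,\\ x_{id}^{k+1}&= x_{id}^{k}+v_{id}^{k+1}. \end{aligned}$$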

Here, \(i=1,2,\ldots ,N\), where N is the number of particles in the swarm; \(d=1,2,\ldots ,D\); k is the iteration index, which runs up to the maximum number of iterations; \(r_1\) and \(r_2\) are random values in [0, 1], which are used to keep the diversity of the swarm; \(c_1\) and \(c_2\) are the learning coefficients, also called acceleration coefficients; \(v_{id}^k\) is the dth component of the velocity of particle i at the kth iteration; \(x_{id}^k\) is the dth component of the position of particle i at the kth iteration; \(p_{id}\) is the dth component of the best position particle i has ever found; \(p_{gd}\) is the dth component of the best position the swarm has ever found.

The procedure of PSO is as follows:

1. Initialize the position and velocity of each particle in the swarm.

2. Calculate the fitness value of each particle.

3. For each particle, compare its fitness value with the fitness value of pbest; if the current value is better, replace pbest with the current position and update its fitness value.

4. Determine the particle with the best fitness value in the swarm; if its fitness value is better than that of gbest, update gbest and its fitness value with that particle's position.

5. Check the stopping criterion; if it is satisfied, stop the iteration; otherwise, return to step 2.
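The five steps above can be sketched as a short program. This is a minimal illustration, not the implementation used for the results in Sect. 6: it assumes the inertia-weight velocity update, a fixed iteration count as the stopping criterion, zero initial velocities, clipping to box bounds, and typical parameter values (w = 0.7, c1 = c2 = 1.5). The quadratic `toy_objective` is a hypothetical stand-in for the AV or -|F| objective.

```python
import random


def pso_minimize(objective, bounds, n_particles=20, n_iter=100,
                 w=0.7, c1=1.5, c2=1.5, seed=0):
    """Minimize objective over a box with inertia-weight PSO."""
    rng = random.Random(seed)
    dim = len(bounds)
    # Step 1: random positions inside the box, zero initial velocities.
    pos = [[rng.uniform(lo, hi) for lo, hi in bounds] for _ in range(n_particles)]
    vel = [[0.0] * dim for _ in range(n_particles)]
    # Step 2: initial fitness values define pbest and gbest.
    pbest = [p[:] for p in pos]
    pbest_val = [objective(p) for p in pos]
    g = min(range(n_particles), key=lambda i: pbest_val[i])
    gbest, gbest_val = pbest[g][:], pbest_val[g]
    # Step 5: the stopping criterion here is a fixed number of iterations.
    for _ in range(n_iter):
        for i in range(n_particles):
            for d in range(dim):
                r1, r2 = rng.random(), rng.random()
                vel[i][d] = (w * vel[i][d]
                             + c1 * r1 * (pbest[i][d] - pos[i][d])
                             + c2 * r2 * (gbest[d] - pos[i][d]))
                lo, hi = bounds[d]
                pos[i][d] = min(max(pos[i][d] + vel[i][d], lo), hi)
            val = objective(pos[i])
            if val < pbest_val[i]:        # Step 3: update the personal best.
                pbest[i], pbest_val[i] = pos[i][:], val
                if val < gbest_val:       # Step 4: update the global best.
                    gbest, gbest_val = pos[i][:], val
    return gbest, gbest_val


def toy_objective(tau):
    """Hypothetical stand-in objective with its minimum at (1.0, 2.0)."""
    return (tau[0] - 1.0) ** 2 + (tau[1] - 2.0) ** 2


best_tau, best_val = pso_minimize(toy_objective, [(0.0, 5.0), (0.0, 5.0)])
print(best_tau, best_val)
```

For the step-stress problem, the ordering constraint \(\tau _1<\tau _2\) would also have to be enforced, for instance by adding a penalty to the objective when it is violated; that choice is left open here. To maximize |F|, the objective can return its negative.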


Cite this article

Hakamipour, N. Optimal Plan for Step-Stress Accelerated Life Test with Two Stress Variables for Lognormal Data. Iran J Sci Technol Trans Sci 42, 2259–2271 (2018). https://doi.org/10.1007/s40995-017-0391-x


DOI: https://doi.org/10.1007/s40995-017-0391-x