Abstract

Objective

The present study aimed to assess the consistencies and performances of deep learning (DL) models in the diagnosis of condylar osteoarthritis (OA) among patients with dentofacial deformities using panoramic temporomandibular joint (TMJ) projection images.

Methods

A total of 68 TMJs with or without condylar OA in dentofacial deformity patients were tested to verify the consistencies and performances of DL models created using 252 TMJs with or without OA in TMJ disorder and dentofacial deformity patients; these models were used to diagnose OA on conventional panoramic (Con-Pa) images and open (Open-TMJ) and closed (Closed-TMJ) mouth TMJ projection images.

The GoogLeNet and VGG-16 networks were used to create the DL models. For comparison, two dental residents with < 1 year of experience interpreting radiographs evaluated the same condyle data that had been used to test the DL models.

Results

On Open-TMJ images, the DL models showed moderate to very good consistency, whereas the residents demonstrated fair consistency on all images. The areas under the curve (AUCs) of both DL models on Con-Pa (0.84 for GoogLeNet and 0.75 for VGG-16) and Open-TMJ images (0.89 for both models) were significantly higher than the residents’ AUCs (p < 0.01). The AUCs of the DL models on Open-TMJ images (0.89 for both models) were higher than the AUCs on Closed-TMJ images (0.72 for both models).

Conclusions

The DL models created in this study could help residents to interpret Con-Pa and Open-TMJ images in the diagnosis of condylar OA.

Introduction

Dentofacial deformity patients with malocclusion who plan to undergo orthodontic treatments or orthognathic surgery have a substantially higher incidence of temporomandibular disorders (TMDs) compared with subjects who lack deformities [1]. Osteoarthritis (OA), classified as a degenerative disease with osseous changes according to the diagnostic criteria for TMD (DC/TMD) [2], is frequently observed in skeletal Class II and III malocclusion patients [3].

Although computed tomography (CT) or magnetic resonance (MR) examinations are recommended to confirm osseous changes in the temporomandibular joint (TMJ) [2], panoramic radiography maintains a role in long-term follow-up examinations because of its convenience and low radiation exposure for patients. As an alternative to conventional panoramic (Con-Pa) radiography, panoramic TMJ projections are preferentially used for patients with suspected TMDs, especially in Japan [4,5,6,7]. In panoramic machines equipped with this function, the main X-ray beam is designed to be introduced parallel to the mean long axis of the condylar head (as determined on axial sectional images showing the maximum condylar area) [4,5,6]. Moreover, images can be obtained in open-mouth (Open-TMJ) and closed-mouth (Closed-TMJ) positions. Open-TMJ projections can avoid overlap with surrounding structures. Therefore, the images are more distinct than the results of Con-Pa radiography, in which the beam is projected with inclination relative to the condylar long axis and typically is performed in the closed-mouth position.

Recent advances in deep learning (DL) techniques have attracted considerable attention regarding diagnostic imaging of the TMJ [8,9,10,11,12,13,14]. A DL system using Con-Pa radiography showed relatively good diagnostic performances for condylar OA compared with the diagnostic performances of inexperienced observers [8], but its performances were worse than those of a CT image-based DL system [9]. Only one study has addressed the performance of panoramic images in the open-mouth position [14]; to our knowledge, no studies of DL models using panoramic TMJ projection images in the open- and closed-mouth positions have been conducted in patients with dentofacial deformities.

The aim of the present study was to evaluate the performances of DL models in the diagnosis of condylar OA among patients with dentofacial deformities using panoramic TMJ projection images.

Materials and methods

This study protocol was approved by the ethics committee of our university (Approval No. 496), and the study was performed in accordance with the tenets of the Declaration of Helsinki. Due to the retrospective and noninvasive nature of the study, which used anonymized images stored in our hospital’s clinical database, the ethics committee waived the requirement for informed consent. Patients were given the opportunity to refuse participation in the study through an opt-out method.

Patients

This study included 194 TMJs in 106 patients (74 women and 32 men, mean age: 25.8 years) with dentofacial deformities who were scheduled for orthognathic surgery; all underwent CT, Con-Pa, and panoramic TMJ projection examinations between April 2021 and March 2022 (Table 1). Among these TMJs, condylar OA was observed in 34 based on the DC/TMD [2], whereas 160 did not exhibit OA. Thus, all 34 TMJs with condylar OA and 34 randomly selected TMJs without OA were regarded as test data. The remaining 126 TMJs were used as training and validation data, along with 126 TMJs in 83 TMD patients (68 women and 15 men, mean age: 54.2 years) who had OA. These TMD patients had undergone CT, Con-Pa, and panoramic TMJ projection examinations between May 2007 and September 2019. For most patients, the Con-Pa and panoramic TMJ projection images were obtained on the same day; CT images were obtained within 2 months of radiograph acquisition. The presence or absence of osseous changes was determined by two experienced radiologists using CT images, and disagreements were resolved by discussion.
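The allocation described above can be checked with a short sketch. It is illustrative only: the joint identifiers, the function name `split_test_set`, and the fixed random seed are assumptions, not details reported in the study.

```python
import random

def split_test_set(oa_ids, non_oa_ids, seed=0):
    """Test set = all OA joints plus an equal number of randomly drawn non-OA joints."""
    rng = random.Random(seed)  # seed is a hypothetical choice for reproducibility
    test_non_oa = rng.sample(non_oa_ids, len(oa_ids))
    chosen = set(test_non_oa)
    remaining = [j for j in non_oa_ids if j not in chosen]
    return list(oa_ids) + test_non_oa, remaining

# Placeholder identifiers for the 194 joints of the dentofacial deformity patients
oa = [f"dd_oa_{i}" for i in range(34)]
non_oa = [f"dd_non_{i}" for i in range(160)]
test_ids, remaining_non_oa = split_test_set(oa, non_oa)

# Learning data: remaining non-OA joints plus 126 OA joints from TMD patients
tmd_oa = [f"tmd_oa_{i}" for i in range(126)]
learning_ids = remaining_non_oa + tmd_oa

print(len(test_ids), len(remaining_non_oa), len(learning_ids))  # 68 126 252
```

The counts reproduce the 68 test joints and 252 learning joints reported in the text.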

CT images were obtained using an Aquilion PRIME or Asterion TSX scanner (Canon Medical Systems, Otawara, Japan) with a tube voltage of 120 kV, tube current exposure time product value of 100 mAs, slice thickness of 0.5 mm, pitch of 0.3 mm, and field of view of 20 cm. Con-Pa radiography was performed using a Veraviewepocs (J. Morita Mfg. Corp., Kyoto, Japan) with a tube voltage of 75 kV, tube current of 8 mA, and irradiation time of 16.2 s. Panoramic TMJ projection images were obtained with an AZ 3000 panoramic machine (Asahi Roentgen Ind. Co. Ltd, Kyoto, Japan) with a tube voltage of 75 kV, tube current of 6 mA, and irradiation time of 10 s. CT images were viewed on a high-resolution monitor (RadiForce RX240 monitor, EIZO Co., Hakusan, Japan) using image processing software (AquariusNET; TeraRecon Inc., Foster City, CA, USA).

Image patch preparation

Con-Pa and panoramic TMJ projection images (900 × 900 pixels) were downloaded from our hospital’s imaging database in tagged image file (TIF) format (Fig. 1). Square image patches of 100 × 100 pixels were manually cropped to align the centers of patches approximately with the center of the condylar head using Pictcutter ver. 2.0.0 (https://hp.vector.co.jp/authors/VA020302/). These patches were labeled, classified into two categories (with or without OA), and used as supervised data for the training process and ground truth data for the testing process (Fig. 2).
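In the study the patches were cropped manually. As a rough illustration of the geometry only, the following sketch computes the bounding box of a 100 × 100 pixel patch centered on a given condylar head position in a 900 × 900 pixel image. The function name `crop_box` and the shifting of patches at the image border are assumptions made for this example.

```python
def crop_box(center_x, center_y, patch=100, width=900, height=900):
    """Return (left, top, right, bottom) of a square patch centred on the given
    point, shifted where necessary so that it stays inside the image."""
    half = patch // 2
    left = min(max(center_x - half, 0), width - patch)
    top = min(max(center_y - half, 0), height - patch)
    return left, top, left + patch, top + patch

print(crop_box(450, 300))  # (400, 250, 500, 350)
print(crop_box(20, 880))   # (0, 800, 100, 900): shifted to stay inside the image
```

A box of this form could be passed to any image library's crop routine; the coordinates above are made-up examples rather than measured condylar positions.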

Fig. 1 Downloaded images (900 × 900 pixels). Image patches were cropped into squares of 100 × 100 pixels for the right and left sides in the open- and closed-mouth positions. The center of each patch was set to the center of the condylar head. A Conventional panoramic image, B panoramic TMJ projection image

Fig. 2 Example image patches of condyles with (A and B) or without (C) OA. A Erosion is evident on an Open-TMJ image without superimposition of surrounding structures. B Osteophytes are observed on both Open- and Closed-TMJ images despite limited condylar movement on an Open-TMJ image. C Condyle without OA. The condyle is clearly observed on an Open-TMJ image

Deep learning architectures

Using two networks, GoogLeNet and VGG-16, classification models were created with the DIGITS library version 5.0 (NVIDIA; https://developer.nvidia.com/digits) on Ubuntu OS version 16.04.2 with an 11 GB graphics processing unit (NVIDIA GeForce GTX 1080 Ti; NVIDIA, Santa Clara, CA, USA) and 128 GB memory. GoogLeNet consists of 22 layers, including 1 × 1, 3 × 3, and 5 × 5 convolutional layers arranged to reduce the number of CNN model parameters [15]. VGG-16 consists of 13 convolutional layers and 3 fully connected layers. The version of this CNN available in the DIGITS library was a fine-tuned network [16].
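As a small check on the VGG-16 layer counts mentioned above, the sketch below lists the standard configuration from the original VGG publication and counts its weight layers. The fully connected sizes are those of the original ImageNet network; the exact output layer used in the DIGITS models of this study is not stated, so it is shown only as an assumption.

```python
# Standard VGG-16 layout: integers are output channels of 3 x 3 convolutions,
# "M" marks a 2 x 2 max-pooling step (pooling has no trainable weights).
VGG16_CONV = [64, 64, "M", 128, 128, "M", 256, 256, 256, "M",
              512, 512, 512, "M", 512, 512, 512, "M"]
# Fully connected sizes of the original ImageNet model (assumed here; a
# two-class OA / non-OA model would typically end in 2 outputs instead of 1000).
VGG16_FC = [4096, 4096, 1000]

def count_weight_layers(conv_cfg, fc_cfg):
    """Count convolutional and fully connected layers in a VGG-style config."""
    n_conv = sum(1 for v in conv_cfg if v != "M")
    return n_conv, len(fc_cfg)

n_conv, n_fc = count_weight_layers(VGG16_CONV, VGG16_FC)
print(n_conv, n_fc, n_conv + n_fc)  # 13 3 16
```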

For each network, the training process was performed twice for each of three image types: Con-Pa images, Open-TMJ projection images, and Closed-TMJ projection images. The hyperparameters for both networks were an initial learning rate of 0.001, stochastic gradient descent (SGD) as the solver, and 100 training epochs. A total of 252 image patches for the learning process were divided into 189 (75%) training and 63 (25%) validation datasets. The training data were augmented 20 times by horizontal flipping and by changes in brightness and contrast using image processing software (IrfanView version 4.44; https://www.Irfanview.com). Consequently, six models were created for each network. These models were tested on test data consisting of 68 condyles with and without OA, which had been obtained from dentofacial deformity patients.
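The split and augmentation steps can be sketched in plain Python as follows. This is not the authors' pipeline (the split was made by DIGITS and the augmentation in IrfanView); the function names, the random seed, the brightness and contrast ranges, and the reading of "20 times" as 20 variants per patch are all assumptions for illustration.

```python
import random

def split_learning(ids, train_fraction=0.75, seed=0):
    """Shuffle the patch IDs and cut them into training and validation lists."""
    rng = random.Random(seed)
    shuffled = list(ids)
    rng.shuffle(shuffled)
    n_train = round(len(shuffled) * train_fraction)
    return shuffled[:n_train], shuffled[n_train:]

def hflip(img):
    """Mirror a greyscale image (list of pixel rows) left to right."""
    return [row[::-1] for row in img]

def adjust(img, brightness=0.0, contrast=1.0):
    """Scale pixel values around mid-grey, add an offset, and clip to 0..255."""
    return [[min(255, max(0, round((p - 128) * contrast + 128 + brightness)))
             for p in row] for row in img]

def augment(img, n_variants=20, seed=0):
    """Return n_variants randomly flipped, brightness- and contrast-shifted copies."""
    rng = random.Random(seed)
    variants = []
    for _ in range(n_variants):
        base = hflip(img) if rng.random() < 0.5 else img
        variants.append(adjust(base,
                               brightness=rng.uniform(-20, 20),  # hypothetical range
                               contrast=rng.uniform(0.8, 1.2)))  # hypothetical range
    return variants

train_ids, val_ids = split_learning(list(range(252)))
print(len(train_ids), len(val_ids))  # 189 63

patch = [[0, 64, 128], [192, 255, 32]]  # toy 2 x 3 stand-in for a 100 x 100 patch
print(hflip(patch))                     # [[128, 64, 0], [32, 255, 192]]
print(len(augment(patch)))              # 20
```

Only the training portion is augmented here, matching the description that the training data (not the validation data) were augmented.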

Assessments of inter-evaluator consistency

Inter-evaluator consistencies were assessed using Cohen’s kappa statistic. For DL models, kappa values between two estimation results were determined. The results for test patches were expressed as the probability of a positive (condylar OA) or negative (non-OA condyle) evaluation. An estimated probability of > 0.5 for estimation of condylar OA was considered positive.
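For readers unfamiliar with the statistic, a minimal sketch of Cohen's kappa for two sets of binarized estimates follows. The probabilities are invented example values, not study data, and the function name `cohen_kappa` is chosen for this illustration.

```python
def cohen_kappa(probs_a, probs_b, threshold=0.5):
    """Cohen's kappa between two raters after binarising at the threshold."""
    a = [p > threshold for p in probs_a]
    b = [p > threshold for p in probs_b]
    n = len(a)
    observed = sum(x == y for x, y in zip(a, b)) / n
    pos_a, pos_b = sum(a) / n, sum(b) / n
    # Agreement expected by chance from each rater's marginal positive rate
    expected = pos_a * pos_b + (1 - pos_a) * (1 - pos_b)
    if expected == 1.0:  # both raters gave one identical constant label
        return 1.0
    return (observed - expected) / (1 - expected)

# Made-up probabilities from two training runs on the same eight patches
run1 = [0.9, 0.8, 0.3, 0.2, 0.6, 0.1, 0.7, 0.4]
run2 = [0.8, 0.4, 0.2, 0.3, 0.7, 0.2, 0.9, 0.6]
print(cohen_kappa(run1, run2))  # 0.5
```

In this toy case the two runs agree on 6 of 8 patches (observed agreement 0.75) against a chance agreement of 0.5, giving a kappa of 0.5.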

For comparison, two dental residents with < 1 year of experience interpreting radiographs evaluated the same 68 image patches that had been used to test the DL models. Before the evaluations, calibration was performed using ten images each of condyles with and without OA; these images had been prepared specifically for calibration and were not used to create or test the models. In the actual evaluation, the residents were asked to rate the probability of a positive evaluation (condylar OA) on a visual analog scale with scores ranging from 0 to 100. Kappa values between the two residents were calculated, with a score of > 50 considered positive.

The degree of consistency was defined as follows [17]: kappa value < 0.2, poor consistency; 0.2 ≤ kappa value < 0.4, fair consistency; 0.4 ≤ kappa value < 0.6, moderate consistency; 0.6 ≤ kappa value < 0.8, good consistency; and 0.8 ≤ kappa value ≤ 1.0, very good consistency.
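The thresholds above translate directly into a lookup function. The sketch below is illustrative (the function name is an assumption) and is applied to three kappa values reported in this article.

```python
def consistency_label(kappa):
    """Map a kappa value to the consistency category defined in the text."""
    if kappa < 0.2:
        return "poor"
    if kappa < 0.4:
        return "fair"
    if kappa < 0.6:
        return "moderate"
    if kappa < 0.8:
        return "good"
    return "very good"

for k in (0.21, 0.69, 0.91):
    print(k, consistency_label(k))
# 0.21 fair
# 0.69 good
# 0.91 very good
```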

Assessments of performance

Two sets of test results from the DL models were combined for each type of image; these sets were used to calculate sensitivity, specificity, and accuracy, with an estimated probability of > 0.5 for condylar OA regarded as positive. The two residents’ results were combined in the same manner; sensitivity, specificity, and accuracy were calculated, with a probability of > 50 considered positive. These probabilities were used to perform receiver operating characteristic (ROC) analysis and determine the area under the ROC curve (AUC). Diagnostic performances were evaluated according to the AUC.
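The performance measures can be illustrated with a minimal sketch. The labels and probabilities are invented, the function names are assumptions, and the AUC is computed here through its rank-based (Mann-Whitney) definition, which may differ from the software the authors used.

```python
def binary_metrics(labels, probs, threshold=0.5):
    """Sensitivity, specificity, and accuracy with probability > threshold as positive."""
    tp = sum(1 for y, p in zip(labels, probs) if y == 1 and p > threshold)
    fn = sum(1 for y, p in zip(labels, probs) if y == 1 and p <= threshold)
    tn = sum(1 for y, p in zip(labels, probs) if y == 0 and p <= threshold)
    fp = sum(1 for y, p in zip(labels, probs) if y == 0 and p > threshold)
    return {
        "sensitivity": tp / (tp + fn),
        "specificity": tn / (tn + fp),
        "accuracy": (tp + tn) / len(labels),
    }

def auc(labels, probs):
    """Probability that a random positive case scores higher than a random
    negative case, counting ties as one half."""
    pos = [p for y, p in zip(labels, probs) if y == 1]
    neg = [p for y, p in zip(labels, probs) if y == 0]
    wins = sum(1.0 if p > q else 0.5 if p == q else 0.0 for p in pos for q in neg)
    return wins / (len(pos) * len(neg))

# Made-up example: 1 = condylar OA on CT, 0 = no OA
labels = [1, 1, 1, 1, 0, 0, 0, 0]
probs = [0.9, 0.7, 0.6, 0.4, 0.8, 0.3, 0.2, 0.1]
print(binary_metrics(labels, probs))
# {'sensitivity': 0.75, 'specificity': 0.75, 'accuracy': 0.75}
print(auc(labels, probs))  # 0.8125
```

To mirror the pooling described above, the labels and probabilities from the two test runs (or the two residents) would be concatenated before calling these functions; for the residents' 0 to 100 scale, the threshold would be 50.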

Statistical method

Differences in AUCs were tested by the chi-square test with a significance threshold of p < 0.05.

Results

Inter-evaluator consistencies are presented in Table 2. In both models, Open-TMJ images exhibited the highest kappa values: 0.69 (good consistency) and 0.91 (very good consistency) for GoogLeNet and VGG-16, respectively. The values for residents were lower, and all indicated fair consistency.

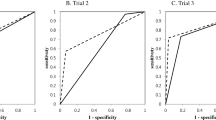

The performances and comparisons of AUCs are shown in Table 3 and Figs. 3 and 4. When performances were compared among evaluators (Fig. 3), the AUCs of both DL models were significantly higher than those of residents for Con-Pa and Open-TMJ images (p < 0.01) (Fig. 3A and B); there were no differences in Closed-TMJ image performances between evaluators (Fig. 3C). Regarding Con-Pa images, the AUC of GoogLeNet differed from the AUC of VGG-16 (p < 0.05) (Fig. 3A). Comparison of AUCs among the three types of images (Con-Pa, Open-TMJ, and Closed-TMJ) (Fig. 4) revealed that Open-TMJ images had the highest AUC in both DL models (Table 3). In the GoogLeNet model, the AUC of Open-TMJ images was higher than the AUC of Closed-TMJ images (p < 0.05) (Fig. 4A). In the VGG-16 model, AUCs did not differ between the Con-Pa and Closed-TMJ images (Fig. 4B). No differences in image AUCs were identified between the residents (Fig. 4C).

Fig. 3 Receiver operating characteristic curves for inter-evaluator comparisons. A Conventional panoramic image, B panoramic TMJ projection in the open-mouth position, C panoramic TMJ projection in the closed-mouth position. Con-Pa: conventional panoramic image, Open-TMJ: panoramic TMJ projection image in the open-mouth position, Closed-TMJ: panoramic TMJ projection image in the closed-mouth position, * Significant difference between AUCs (p < 0.05), ** Significant difference between AUCs (p < 0.01)

Fig. 4 Receiver operating characteristic curves for comparisons among panoramic images. A GoogLeNet, B VGG-16, C residents. Con-Pa: conventional panoramic image, Open-TMJ: panoramic TMJ projection image in the open-mouth position, Closed-TMJ: panoramic TMJ projection image in the closed-mouth position, * Significant difference between AUCs (p < 0.05), ** Significant difference between AUCs (p < 0.01)

Discussion

According to Krisjane et al. [3], the prevalences of condylar OA are 43% and 20% in the joints of patients with Class II and Class III malocclusion, respectively. In the present study, 34 of 194 (17.5%) condyles in dentofacial deformity patients were diagnosed with OA. This lower prevalence compared with the previous study may be related to a different distribution of malocclusion classes.

When data features differ between learning (i.e., training and validation) and test data, DL model performances may be affected [11, 18]. In the present study, the learning data regarded as positive evaluations, namely condylar OA, were collected from TMD patients who lacked dentofacial deformities and had a substantially higher mean age compared with the test data; these differences arose from specific characteristics among patients visiting our hospital. Despite these differences, the DL models demonstrated sufficiently high performances, especially for Open-TMJ images. These results suggest that the differences in data characteristics had little influence on performance in the present study. However, in a previous study, the use of data from different hospitals affected model performances even when the same imaging procedure was used [11]. Thus, caution is required when applying the models created in the present study to data from other institutions.

Differences were found between the two estimation results even for the DL models, indicating that consistency was not perfect. This might be related to differences in training and validation data between the two learning processes because these data were randomly allocated by the system. The inter-resident consistencies of Con-Pa and Open-TMJ images were low (both 0.21), consistent with the results of a previous study concerning Con-Pa image consistency [8]. In the same report, high consistency (kappa value: 0.84) among Con-Pa images was achieved using AlexNet. In the present study, relatively high consistencies were achieved by VGG-16, especially for Open-TMJ images (kappa value: 0.91). This high consistency may facilitate effective use of DL models in clinical practice.

Comparison of performances among the evaluators (Fig. 3) showed that the GoogLeNet AUC for Con-Pa images differed significantly from those achieved by VGG-16 (p < 0.05) and the residents (p < 0.01). The AUCs for Open-TMJ images did not differ between DL models, but they were significantly higher than the AUCs achieved by residents. These results indicated that the DL models could help inexperienced observers to interpret Con-Pa and Open-TMJ images in the diagnosis of condylar OA.

Comparisons of performance among the three images (Fig. 4) revealed that, excluding the residents’ results, Open-TMJ images had the highest AUCs, as expected. There were no differences in AUCs achieved by VGG-16 and the residents between Con-Pa and Closed-TMJ images, likely because of the similar condylar position on these images.

The diagnostic performances of Con-Pa radiography for condylar OA are reportedly insufficient, with accuracies slightly better than 0.7 achieved even by experts, such as TMD treatment specialists and experienced oral and maxillofacial radiologists [8]. Several researchers have applied DL systems to Con-Pa images for the diagnosis of condylar OA. Nozawa et al. reported an AUC of 0.76 using AlexNet with CT images as the gold standard; they concluded that this AUC was superior to the AUC achieved by residents and equivalent to the AUC achieved by experts [8]. Choi et al. reported similar results. They evaluated the performance of ResNet on Con-Pa images, using CT images as the gold standard, and reported an accuracy of 0.80; this was equivalent to the accuracy achieved by experts (0.81) [12]. Although their gold standard was not definitively described, Kim et al. reported an AUC of 0.82 when a refined VGG-16 was applied to Con-Pa images [10]. In the present study, application of GoogLeNet to Con-Pa images yielded an AUC (0.84) higher than those reported in these previous studies.

Regarding the performances of panoramic TMJ projection for condylar OA, Araki et al. reported that an accuracy of 0.82 was achieved by experienced radiologists [5]. Using cone-beam CT images as the gold standard, they evaluated the presence of OA by combining Open- and Closed-TMJ images. Their accuracy was superior to the accuracies achieved by residents (0.63 for both Open- and Closed-TMJ images) in the present study. In another study, Jung et al. [14] applied DL models created by ResNet-152 and EfficientNet-B7 to panoramic images in the open-mouth position, which corresponded to Open-TMJ images in the present study. They used a panoramic machine equipped with the function of panoramic TMJ projection and reported AUCs of 0.94 and 0.95 for ResNet and EfficientNet, respectively. These AUCs were higher than the AUCs achieved in the present study (0.89 for both GoogLeNet and VGG-16). This difference may be related to the characteristics of the test data. The condylar shape and position in dentofacial deformity patients differ among malocclusion classes [19, 20], resulting in changes in the angle of X-ray projection relative to the condylar long axis. Moreover, superimposition of surrounding structures may have reduced performance, even on Open-TMJ images, because the present test data included condyles with limited movement.

This study had some limitations. First, the number of images required to develop a model can vary depending on factors such as the complexity of the task, the quality of the images, and the variability in the dataset. Therefore, although good performances were observed, the learning data were insufficient to confirm these performance outcomes. Further data collection is needed to verify the findings. Second, these data were collected in a single hospital, and external validity was not evaluated. The model performances should be verified in a prospective study in our hospital, and the models should subsequently be applied to data from other institutions. Third, the types of dentofacial deformities were not classified; the classes of deformities may have affected model performances. Fourth, the use of the models as a diagnostic aid was verified only for inexperienced observers. It should also be tested with experienced observers before actual clinical use can be established.

Conclusion

Compared with residents, the DL models created in the present study achieved significantly better performances (AUCs) in Con-Pa and Open-TMJ images. Among the three types of images evaluated, Open-TMJ images had the highest AUCs in both DL models. These results indicated that the DL models could help residents to interpret Con-Pa and Open-TMJ images in the diagnosis of condylar OA.

Data availability

The dataset used in this study will be available from the corresponding author upon reasonable request.

References

Al-Moraissi EA, Perez D, Ellis E 3rd. Do patients with malocclusion have a higher prevalence of temporomandibular disorders than controls both before and after orthognathic surgery? a systematic review and meta-analysis. J Craniomaxillofac Surg. 2017;45:1716–23. https://doi.org/10.1016/j.jcms.2017.07.015.

Schiffman E, Ohrbach R, Truelove E, Look J, Anderson G, Goulet JP, List T, Svensson P, Gonzalez Y, Lobbezoo F, Michelotti A, Brooks SL, Ceusters W, Drangsholt M, Ettlin D, Gaul C, Goldberg LJ, Haythornthwaite JA, Hollender L, Jensen R, John MT, De Laat A, de Leeuw R, Maixner W, van der Meulen M, Murray GM, Nixdorf DR, Palla S, Petersson A, Pionchon P, Smith B, Visscher CM, Zakrzewska J, Dworkin SF. Diagnostic criteria for temporomandibular disorders (DC/TMD) for clinical and research applications: recommendations of the international RDC/TMD consortium network and orofacial pain special interest group. J Oral Fac Pain Headache. 2014;28:6–27.

Krisjane Z, Urtane I, Krumina G, Neimane L, Ragovska I. The prevalence of TMJ osteoarthritis in asymptomatic patients with dentofacial deformities: a cone-beam CT study. Int J Oral Maxillofac Surg. 2012;41:690–5.

Kudoh T, Maeda N, Kawaguchi S, Takeuchi T, Sugawara C, Shimomura M, Hosoki H, Iwasaki Y, Uemura S. Panoramic radiography of the temporomandibular joint part 4: detectability of outline of the temporomandibular joint in lateral panoramic temporomandibular joint projection (in Japanese). Dent Radiol. 2000;40:237–41.

Araki K, Honda K, Okano T, Endo A, Kaneko N, Funahashi I, Kataoka R. Diagnostic accuracy of the detection of bone change using panoramic TMJ projection: comparative study with limited cone-beam CT (in Japanese). Dent Radiol. 2007;47:121–5.

Igarashi C. Image diagnosis of osteoarthrosis of the temporomandibular joint using the panoramic TMJ (temporomandibular joint) radiograph (in Japanese). J Jpn Soc TMJ. 2017;29:85–91.

Nozawa M, Ogi N, Ariji Y, Kise Y, Nakayama M, Nishiyama M, Naitoh M, Kurita K, Ariji E. Reliability of diagnostic imaging for degenerative diseases with osseous changes in the temporomandibular joint with special emphasis on subchondral cyst. Oral Radiol. 2020;36:156–62. https://doi.org/10.1007/s11282-019-00392-3.

Nozawa M, Ariji Y, Fukuda M, Kise Y, Naitoh M, Nishiyama M, Ogi N, Katsumata A, Ariji E. Use of a deep learning system for diagnosis of degenerative disease of the temporomandibular joint on panoramic radiographs (in Japanese). J Jpn Soc TMJ. 2020;32:55–64.

Lee KS, Kwak HJ, Oh JM, Jha N, Kim YJ, Kim W, Bail UB, Ryu JJ. Automated detection of TMJ osteoarthritis based on artificial intelligence. J Dent Res. 2020;99:1363–7. https://doi.org/10.1177/0022034520936950.

Kim D, Choi E, Jeong HG, Chang J, Youm S. Expert system for mandibular condyle detection and osteoarthritis classification in panoramic imaging using R-CNN and CNN. Appl Sci. 2020;10:7464. https://doi.org/10.3390/app10217464.

Nishiyama M, Ishibashi K, Ariji Y, Fukuda M, Nishiyama W, Umemura M, Katsumata A, Fujita H, Ariji E. Performance of deep learning models constructed using panoramic radiographs from two hospitals to diagnose fractures of the mandibular condyle. Dentomaxillofac Radiol. 2021;50:20200611. https://doi.org/10.1259/dmfr.20200611.

Nozawa M, Ito H, Ariji Y, Fukuda M, Igarashi C, Nishiyama M, Ogi N, Katsumata A, Kobayashi K, Ariji E. Automatic segmentation of the temporomandibular joint disc on magnetic resonance images using a deep learning technique. Dentomaxillofac Radiol. 2021;50:20210185. https://doi.org/10.1259/dmfr.20210185.

Choi E, Kim D, Lee JY, Park HK. Artificial intelligence in detecting temporomandibular joint osteoarthritis on orthopantomogram. Sci Rep. 2021;11:10246. https://doi.org/10.1038/s41598-021-8942-y.

Jung W, Lee KE, Suh BJ, Seok H, Lee DW. Deep learning for osteoarthritis classification in temporomandibular joint. Oral Dis. 2023;29:1050–9.

Szegedy C, Liu W, Jia Y, et al. Going deeper with convolutions. In: Proceedings of the 2015 IEEE Conference on Computer Vision and Pattern Recognition. Boston, MA: IEEE; 2015. p. 1–9.

Simonyan K, Zisserman A. Very deep convolutional networks for large-scale image recognition. In: Proceedings of the 2015 3rd IAPR Asian Conference on Pattern Recognition (ACPR). Kuala Lumpur, Malaysia: ICLR; 2015. p. 730–4.

Altman DG. Practical statistics for medical research. London, U. K.: Chapman and Hall; 1991. p. 404.

Ariji Y, Mori M, Fukuda M, Katsumata A, Ariji E. Automatic visualization of the mandibular canal in relation to an impacted mandibular third molar on panoramic radiographs using deep learning segmentation and transfer learning techniques. Oral Surg Oral Med Oral Pathol Oral Radiol. 2022;134:749–57.

Rodrigues AF, Fraga MR, Vitral RWF. Computed tomography evaluation of the temporomandibular joint in class I malocclusion patients: condylar symmetry and condyle-fossa relationship. Am J Orthod Dentofacial Orthop. 2009;136:192–8.

Rodrigues AF, Fraga MR, Vitral RWF. Computed tomography evaluation of the temporomandibular joint in class II division 1 and class III malocclusion patients: condylar symmetry and condyle-fossa relationship. Am J Orthod Dentofacial Orthop. 2009;136:199–206.

Acknowledgements

We thank Ryan Chastain-Gross, Ph.D., from Edanz (https://jp.edanz.com/ac) for editing a draft of this manuscript.

Funding

Not applicable.

Author information

Contributions

Y.I, T.S and Y.K were responsible for conceptualization, methodology, formal analysis, and data analysis and interpretation, and were major contributors in writing the manuscript. M.S, M.H, M.F and Y.A processed the image data and evaluated the performance of the learning models. E.A supervised the whole experiment and provided important instructions and advice. All authors reviewed the manuscript.

Ethics declarations

Conflict of interest

Not applicable.

Ethics approval

All procedures followed were in accordance with the ethical standards of the responsible committee on human experimentation (institutional and national) and with the Helsinki Declaration of 1975, as revised in 2008.

Informed consent

This was a non-invasive, observational study using only anonymized images from a database. By using opt-out consent, subjects were given the opportunity to refuse to participate in the study. The Ethics Committee of our university waived the requirement for informed consent from all participants.

About this article

Cite this article

Iwase, Y., Sugiki, T., Kise, Y. et al. Deep learning classification performance for diagnosing condylar osteoarthritis in patients with dentofacial deformities using panoramic temporomandibular joint projection images. Oral Radiol 40, 538–545 (2024). https://doi.org/10.1007/s11282-024-00768-0

DOI: https://doi.org/10.1007/s11282-024-00768-0