Abstract

In this paper, we introduce and study fused lasso nearly-isotonic signal approximation, which is a combination of fused lasso and generalized nearly-isotonic regression. We show how these three estimators relate to each other and derive the solution to the general problem. Our estimator is computationally feasible and provides a trade-off between monotonicity, block sparsity, and goodness-of-fit. Next, we prove that fusion and near-isotonisation in the one-dimensional case can be applied interchangeably, and that this step-wise procedure gives the solution to the original optimization problem. This property is important because it provides a direct way to construct a path solution when one of the penalization parameters is fixed. We also derive an unbiased estimator of the degrees of freedom of the estimator.


1 Introduction

This work is motivated by recent papers on nearly-constrained estimation in several dimensions, and by papers on generalised penalized least squares regression. The subject of penalized estimators starts with \(L_{1}\)-penalisation, cf. Tibshirani (1996), which is called lasso signal approximation, and \(L_{2}\)-penalisation, which is usually referred to as ridge regression (Hoerl and Kennard 1970) or sometimes as Tikhonov–Phillips regularization (Phillips 1962; Tikhonov et al. 1995). The first generalisation of the lasso is \(L_{1}\)-penalisation imposed on the successive differences of the coefficients. For a given sequence of data points \({\varvec{y}} \in {\mathbb {R}}^{n}\), the fusion approximator (cf. Rinaldo 2009) is given by

\[\hat{{\varvec{\beta }}}^{F}({\varvec{y}}, \lambda _{F}) = \underset{{\varvec{\beta }} \in {\mathbb {R}}^{n}}{\arg \min } \; \frac{1}{2} \sum _{i=1}^{n} (y_{i} - \beta _{i})^{2} + \lambda _{F}\sum _{i=1}^{n-1}|\beta _{i} - \beta _{i+1}|. \tag{1}\]

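The combination of the fusion approximator and the lasso is called the fused lasso estimator and is given by:

\[\hat{{\varvec{\beta }}}^{FL}({\varvec{y}}, \lambda _{F}, \lambda _{L}) = \underset{{\varvec{\beta }} \in {\mathbb {R}}^{n}}{\arg \min } \; \frac{1}{2} \sum _{i=1}^{n} (y_{i} - \beta _{i})^{2} + \lambda _{F}\sum _{i=1}^{n-1}|\beta _{i} - \beta _{i+1}| + \lambda _{L}\sum _{i=1}^{n}|\beta _{i}|. \tag{2}\]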

The fused lasso was introduced in Tibshirani et al. (2005), and its asymptotic properties were studied in detail in Rinaldo (2009). It is also worth noting that in Tibshirani and Taylor (2011) the estimator in (1) is called the fused lasso, while the estimator in (2) is referred to as the sparse fused lasso.

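In the area of constrained inference, the basic and simplest problem is isotonic regression in one dimension. For a given sequence of data points \({\varvec{y}} \in {\mathbb {R}}^{n}\), the isotonic regression is the following approximation

\[{\varvec{\beta }}^{I} = \underset{{\varvec{\beta }} \in {\mathbb {R}}^{n}}{\arg \min } \sum _{i=1}^{n} (y_{i} - \beta _{i})^{2} \quad \text{subject to} \quad \beta _{1} \le \beta _{2} \le \dots \le \beta _{n}, \tag{3}\]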

i.e. it is the \(\ell ^{2}\)-projection of the vector \({\varvec{y}}\) onto the set of non-decreasing vectors in \({\mathbb {R}}^{n}\). The term isotonic “regression” in this context might be confusing. Nevertheless, it is standard in this subject, cf., for example, Best and Chakravarti (1990); Stout (2013), where the term “isotonic regression” is used for the isotonic projection of a general vector. Also, in this paper we use the terms “regression”, “estimator” and “approximator” interchangeably. A general introduction to isotonic regression can be found, for example, in Robertson et al. (1988).
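The one-dimensional isotonic projection described above can be computed with the pool adjacent violators algorithm (PAVA). The following is a minimal sketch, assuming the non-decreasing order \(\beta _{1} \le \dots \le \beta _{n}\) and equal weights; the function name `isotonic_regression_1d` is hypothetical and this is a textbook routine, not necessarily the implementation used for this paper.

```python
import numpy as np

def isotonic_regression_1d(y):
    """Least-squares projection of y onto non-decreasing vectors (PAVA)."""
    # Each block stores [sum of values, number of points].
    blocks = []
    for value in np.asarray(y, dtype=float):
        blocks.append([value, 1])
        # Merge backwards while the last two block means violate monotonicity.
        while len(blocks) > 1 and (
            blocks[-2][0] / blocks[-2][1] > blocks[-1][0] / blocks[-1][1]
        ):
            total, count = blocks.pop()
            blocks[-1][0] += total
            blocks[-1][1] += count
    # Expand the block means back to a vector of the original length.
    return np.concatenate([np.full(c, s / c) for s, c in blocks])
```

For example, the input (3, 1, 2) is pooled into a single block with mean 2, while an already non-decreasing input is returned unchanged.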

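The nearly-isotonic regression, introduced in Tibshirani et al. (2011) and studied in detail in Minami (2020), is a less restrictive version of isotonic regression, and it is given by the following optimization problem

\[\hat{{\varvec{\beta }}}^{NI}({\varvec{y}}, \lambda _{NI}) = \underset{{\varvec{\beta }} \in {\mathbb {R}}^{n}}{\arg \min } \; \frac{1}{2} \sum _{i=1}^{n} (y_{i} - \beta _{i})^{2} + \lambda _{NI}\sum _{i=1}^{n-1}(\beta _{i} - \beta _{i+1})_{+}, \tag{4}\]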

where \(x_{+} = x \cdot 1\{x > 0 \}\).

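In this paper we combine the fused lasso estimator with nearly-isotonic regression and call the resulting estimator the fused lasso nearly-isotonic signal approximator, i.e. for a given sequence of data points \({\varvec{y}} \in {\mathbb {R}}^{n}\), the problem in the one-dimensional case is the following optimization:

\[\hat{{\varvec{\beta }}}^{FLNI}({\varvec{y}}, \lambda _{F}, \lambda _{L}, \lambda _{NI}) = \underset{{\varvec{\beta }} \in {\mathbb {R}}^{n}}{\arg \min } \; \frac{1}{2} \sum _{i=1}^{n} (y_{i} - \beta _{i})^{2} + \lambda _{F}\sum _{i=1}^{n-1}|\beta _{i} - \beta _{i+1}| + \lambda _{L}\sum _{i=1}^{n}|\beta _{i}| + \lambda _{NI}\sum _{i=1}^{n-1}(\beta _{i} - \beta _{i+1})_{+}. \tag{5}\]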

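Also, in the case of \(\lambda _{F} \ne 0\) and \(\lambda _{NI} \ne 0\) with \(\lambda _{L} = 0\) we call the estimator fused nearly-isotonic regression, i.e.

\[\hat{{\varvec{\beta }}}^{FNI}({\varvec{y}}, \lambda _{F}, \lambda _{NI}) = \underset{{\varvec{\beta }} \in {\mathbb {R}}^{n}}{\arg \min } \; \frac{1}{2} \sum _{i=1}^{n} (y_{i} - \beta _{i})^{2} + \lambda _{F}\sum _{i=1}^{n-1}|\beta _{i} - \beta _{i+1}| + \lambda _{NI}\sum _{i=1}^{n-1}(\beta _{i} - \beta _{i+1})_{+}. \tag{6}\]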

This generalisation of nearly-isotonic regression in (6) was proposed in the conclusion of Tibshirani et al. (2011). Subsequently, one-dimensional fused nearly-isotonic regression was considered and numerically solved in Yu et al. (2022) with time complexity \({\mathcal {O}}(n)\). The present paper differs in two respects. First, we consider and solve the problem in general dimensions. Second, for fixed penalisation parameters in the one-dimensional case we also provide a solution with linear complexity, and an exact partial path solution (when one of the parameters is fixed and the path is with respect to the other one) with complexity \({\mathcal {O}}(n\log (n))\).
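To make the three penalties concrete, the sketch below evaluates a one-dimensional fused lasso nearly-isotonic objective for a candidate vector. It assumes the usual form with a factor \(\frac{1}{2}\) on the squared error, absolute successive differences for fusion, absolute values for the lasso, and positive parts \((\beta _{i} - \beta _{i+1})_{+}\) for near-isotonisation; the function name and argument names are hypothetical.

```python
import numpy as np

def flni_objective_1d(beta, y, lam_f, lam_l, lam_ni):
    """Value of the assumed 1-D fused lasso nearly-isotonic objective."""
    beta = np.asarray(beta, dtype=float)
    y = np.asarray(y, dtype=float)
    diffs = beta[:-1] - beta[1:]                        # beta_i - beta_{i+1}
    fit = 0.5 * np.sum((y - beta) ** 2)                 # goodness-of-fit
    fusion = lam_f * np.sum(np.abs(diffs))              # block sparsity
    lasso = lam_l * np.sum(np.abs(beta))                # sparsity
    near_iso = lam_ni * np.sum(np.maximum(diffs, 0.0))  # monotonicity violations
    return fit + fusion + lasso + near_iso
```

Setting `lam_l = 0` gives the fused nearly-isotonic objective, and setting additionally `lam_f = 0` gives the nearly-isotonic one, so the same function can be used to compare candidate solutions of all three problems.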

It is also worth mentioning Gómez et al. (2022), where the authors studied the nearly-isotonic approximator with an extra penalisation term

with an additional lasso penalty. Also, in Gaines et al. (2018) the authors compared algorithms for solving the lasso with linear constraints, which is called the constrained lasso.

In the next step we state the problem defined in (5) for the general case of isotonic constraints with respect to a general partial order. First, we have to introduce the notation.

1.1 Notation

We start with basic definitions of partial order and isotonic regression. Let \({\mathcal {I}} = \{{\varvec{i}}_{1}, \dots , {\varvec{i}}_{n}\}\) be some index set. Next, we define the following binary relation \(\preceq \) on \({\mathcal {I}}\).

A binary relation \(\preceq \) on \({\mathcal {I}}\) is called partial order if

- it is reflexive, i.e. \({\varvec{j}}\preceq {\varvec{j}}\) for all \({\varvec{j}} \in {\mathcal {I}}\);

- it is transitive, i.e. \({\varvec{j}}_{1}, {\varvec{j}}_{2}, {\varvec{j}}_{3} \in {\mathcal {I}}\), \({\varvec{j}}_{1} \preceq {\varvec{j}}_{2}\) and \({\varvec{j}}_{2} \preceq {\varvec{j}}_{3}\) imply \({\varvec{j}}_{1} \preceq {\varvec{j}}_{3}\);

- it is antisymmetric, i.e. \({\varvec{j}}_{1}, {\varvec{j}}_{2} \in {\mathcal {I}}\), \({\varvec{j}}_{1} \preceq {\varvec{j}}_{2}\) and \({\varvec{j}}_{2} \preceq {\varvec{j}}_{1}\) imply \({\varvec{j}}_{1} = {\varvec{j}}_{2}\).

Further, a vector \({\varvec{\beta }}\in {\mathbb {R}}^{n}\) indexed by \({\mathcal {I}}\) is called isotonic with respect to the partial order \(\preceq \) on \({\mathcal {I}}\) if \({\varvec{j}}_{1} \preceq {\varvec{j}}_{2}\) implies \(\beta _{{\varvec{j}}_{1}} \le \beta _{{\varvec{j}}_{2}}\). We denote the set of all isotonic vectors in \({\mathbb {R}}^{n}\) with respect to the partial order \(\preceq \) on \({\mathcal {I}}\) by \({\varvec{{\mathcal {B}}}}^{is}\); it is a closed convex cone in \({\mathbb {R}}^{n}\) and is also called the isotonic cone. Next, a vector \({\varvec{\beta }}^{I}\in {\mathbb {R}}^{n}\) is the isotonic regression of an arbitrary vector \({\varvec{y}} \in {\mathbb {R}}^{n}\) over the partially ordered index set \({\mathcal {I}}\) if

\[{\varvec{\beta }}^{I} = \underset{{\varvec{\beta }} \in {\varvec{{\mathcal {B}}}}^{is}}{\arg \min } \sum _{{\varvec{j}} \in {\mathcal {I}}} (\beta _{{\varvec{j}}} - y_{{\varvec{j}}})^{2}. \tag{7}\]

For any partial order relation \(\preceq \) on \({\mathcal {I}}\) there exists a directed graph \(G = (V,E)\), with \(V = {\mathcal {I}}\) and E is the minimal set of edges such that

and an arbitrary vector \({\varvec{\beta }} \in {\mathbb {R}}^{n}\) is isotonic with respect to \(\preceq \) iff \(\beta _{{\varvec{l_{1}}}} \le \beta _{{\varvec{l_{2}}}}\), given that E contains the chain of edges from \({\varvec{l}}_{1} \in V\) to \({\varvec{l}}_{2} \in V\).

Now we can generalise the estimators discussed above. First, equivalent to the definition in (7), a vector \({\varvec{\beta }}^{I}\in {\mathbb {R}}^{n}\) is the isotonic regression of an arbitrary vector \({\varvec{y}} \in {\mathbb {R}}^{n}\) indexed by the partially ordered index set \({\mathcal {I}}\) if

\[{\varvec{\beta }}^{I} = \underset{{\varvec{\beta }} \in {\mathbb {R}}^{n}}{\arg \min } \sum _{{\varvec{j}} \in {\mathcal {I}}} (\beta _{{\varvec{j}}} - y_{{\varvec{j}}})^{2}, \tag{9}\]

subject to \(\beta _{{\varvec{l_{1}}}} \le \beta _{{\varvec{l_{2}}}}\), whenever E contains the chain of edges from \({\varvec{l}}_{1} \in V\) to \({\varvec{l}}_{2} \in V\).

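Second, for the directed graph \(G = (V, E)\), which corresponds to the partial order \(\preceq \) on \({\mathcal {I}}\), the nearly-isotonic regression of \({\varvec{y}}\in {\mathbb {R}}^{n}\) indexed by \({\mathcal {I}}\) is given by

\[\hat{{\varvec{\beta }}}^{NI}({\varvec{y}}, \lambda _{NI}) = \underset{{\varvec{\beta }} \in {\mathbb {R}}^{n}}{\arg \min } \; \frac{1}{2} \sum _{{\varvec{i}} \in {\mathcal {I}}} (y_{{\varvec{i}}} - \beta _{{\varvec{i}}})^{2} + \lambda _{NI}\sum _{({\varvec{i}}, {\varvec{j}}) \in E}(\beta _{{\varvec{i}}} - \beta _{{\varvec{j}}})_{+}. \tag{10}\]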

This generalisation of nearly-isotonic regression was introduced and studied in Minami (2020).

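Next, the fused and fused lasso approximators for a general directed graph \(G = (V, E)\) are given by

\[\hat{{\varvec{\beta }}}^{F}({\varvec{y}}, \lambda _{F}) = \underset{{\varvec{\beta }} \in {\mathbb {R}}^{n}}{\arg \min } \; \frac{1}{2} \sum _{{\varvec{i}} \in {\mathcal {I}}} (y_{{\varvec{i}}} - \beta _{{\varvec{i}}})^{2} + \lambda _{F}\sum _{({\varvec{i}}, {\varvec{j}}) \in E}|\beta _{{\varvec{i}}} - \beta _{{\varvec{j}}}| \tag{11}\]

and

\[\hat{{\varvec{\beta }}}^{FL}({\varvec{y}}, \lambda _{F}, \lambda _{L}) = \underset{{\varvec{\beta }} \in {\mathbb {R}}^{n}}{\arg \min } \; \frac{1}{2} \sum _{{\varvec{i}} \in {\mathcal {I}}} (y_{{\varvec{i}}} - \beta _{{\varvec{i}}})^{2} + \lambda _{F}\sum _{({\varvec{i}}, {\varvec{j}}) \in E}|\beta _{{\varvec{i}}} - \beta _{{\varvec{j}}}| + \lambda _{L}\sum _{{\varvec{i}} \in {\mathcal {I}}}|\beta _{{\varvec{i}}}|. \tag{12}\]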

These optimization problems were introduced and solved for a general graph in Friedman et al. (2007); Hoefling (2010); Tibshirani and Taylor (2011).

Further, let D denote the oriented incidence matrix for the directed graph \(G = (V, E)\), corresponding to \(\preceq \) on \({\mathcal {I}}\). We choose the orientation of D in the following way. Assume that the graph G with n vertices has m edges, and that we label the vertices by \(\{1, \dots , n\}\) and the edges by \(\{1, \dots , m\}\). Then D is the \(m\times n\) matrix with

\[D_{i,j} = \begin{cases} 1, & \text{if vertex } j \text{ is the source of edge } i,\\ -1, & \text{if vertex } j \text{ is the target of edge } i,\\ 0, & \text{otherwise.}\end{cases}\]

In order to clarify the notations we consider the following examples of partial order relation. First, let us consider the monotonic order relation in the one-dimensional case. Let \({\mathcal {I}} = \{1, \dots , n\}\), and for \(j_{1} \in {\mathcal {I}}\) and \(j_{2} \in {\mathcal {I}}\) we naturally define \(j_{1}\preceq j_{2}\) if \(j_{1} \le j_{2}\). Further, if we let \(V = {\mathcal {I}}\) and \(E = \{(i, i+1): i = 1, \dots , n-1 \}\), then \(G = (V, E)\) is the directed graph which corresponds to the one-dimensional order relation on \({\mathcal {I}}\). Figure 1 displays the graph and the incidence matrix for the graph.

Next, we consider the two-dimensional case with bimonotonic constraints. The notion of bimonotonicity was first introduced in Beran and Dümbgen (2010) and it means the following. Let us consider the index set

\[{\mathcal {I}} = \{{\varvec{j}} = (j^{(1)}, j^{(2)}): \, j^{(1)} = 1, \dots , n_{1}, \; j^{(2)} = 1, \dots , n_{2}\}\]

with the following order relation \(\preceq \) on it: for \({\varvec{j}}_{1}, {\varvec{j}}_{2}\in {\mathcal {I}}\) we have \({\varvec{j}}_{1} \preceq {\varvec{j}}_{2}\) iff \(j^{(1)}_{1} \le j^{(1)}_{2}\) and \(j^{(2)}_{1} \le j^{(2)}_{2}\). Then, a vector \({\varvec{\beta }}\in {\mathbb {R}}^{n}\), with \(n=n_{1}n_{2}\), indexed by \({\mathcal {I}}\) is called bimonotone if it is isotonic with respect to the bimonotone order \(\preceq \) defined on its index set \({\mathcal {I}}\). Further, we define the directed graph \(G = (V, E)\) with vertices \(V = {\mathcal {I}}\), and the edges

\[E = \{((l, k), (l, k+1)): \, 1 \le l \le n_{1}, \, 1 \le k \le n_{2}-1\} \cup \{((l, k), (l+1, k)): \, 1 \le l \le n_{1}-1, \, 1 \le k \le n_{2}\}.\]

The labeled directed graph for bimonotone constraints and its incidence matrix are displayed in Fig. 2.
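The two example graphs can be turned into incidence matrices with a few lines of code. The sketch below assumes the orientation in which the row of an edge has \(+1\) at its source and \(-1\) at its target, so that \((D{\varvec{\beta }})_{e}\) equals \(\beta _{source} - \beta _{target}\), in line with the one-dimensional penalty on \(\beta _{i} - \beta _{i+1}\). The function names, the 0-based labels and the row-major labelling of grid vertices are choices made here for illustration.

```python
import numpy as np

def chain_incidence(n):
    """Oriented incidence matrix for the edges (i, i+1) of a path with n vertices."""
    D = np.zeros((n - 1, n))
    for e in range(n - 1):
        D[e, e] = 1.0        # source of edge e
        D[e, e + 1] = -1.0   # target of edge e
    return D

def grid_incidence(n1, n2):
    """Oriented incidence matrix for the bimonotone order on an n1 x n2 grid."""
    def vertex(a, b):
        return a * n2 + b    # row-major vertex label (an assumption of this sketch)

    edges = []
    for a in range(n1):
        for b in range(n2):
            if b + 1 < n2:   # edge to the neighbour in the second coordinate
                edges.append((vertex(a, b), vertex(a, b + 1)))
            if a + 1 < n1:   # edge to the neighbour in the first coordinate
                edges.append((vertex(a, b), vertex(a + 1, b)))
    D = np.zeros((len(edges), n1 * n2))
    for e, (src, dst) in enumerate(edges):
        D[e, src] = 1.0
        D[e, dst] = -1.0
    return D
```

With this convention the chain matrix has full row rank, whereas the grid matrix has more rows than its rank, because a connected graph with n vertices has an incidence matrix of rank n - 1.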

1.2 General statement of the problem

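Now we can state the general problem studied in this paper. Let \({\varvec{y}} \in {\mathbb {R}}^{n}\) be a signal indexed by the index set \({\mathcal {I}}\) with the partial order relation \(\preceq \) defined on \({\mathcal {I}}\). Next, let \(G=(V,E)\) be the directed graph corresponding to \(\preceq \) on \({\mathcal {I}}\). The fused lasso nearly-isotonic signal approximation with respect to \(\preceq \) on \({\mathcal {I}}\) (or, equivalently, to the directed graph \(G = (V, E)\), corresponding to \(\preceq \)) is given by

\[\hat{{\varvec{\beta }}}^{FLNI}({\varvec{y}}, \lambda _{F}, \lambda _{L}, \lambda _{NI}) = \underset{{\varvec{\beta }} \in {\mathbb {R}}^{n}}{\arg \min } \; \frac{1}{2} \sum _{{\varvec{i}} \in {\mathcal {I}}} (y_{{\varvec{i}}} - \beta _{{\varvec{i}}})^{2} + \lambda _{F}\sum _{({\varvec{i}}, {\varvec{j}}) \in E}|\beta _{{\varvec{i}}} - \beta _{{\varvec{j}}}| + \lambda _{L}\sum _{{\varvec{i}} \in {\mathcal {I}}}|\beta _{{\varvec{i}}}| + \lambda _{NI}\sum _{({\varvec{i}}, {\varvec{j}}) \in E}(\beta _{{\varvec{i}}} - \beta _{{\varvec{j}}})_{+}. \tag{14}\]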

Therefore, the estimator in (14) is a combination of the estimators in (10) and (12).

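Equivalently, we can rewrite the problem in the following way:

\[\hat{{\varvec{\beta }}}^{FLNI}({\varvec{y}}, \lambda _{F}, \lambda _{L}, \lambda _{NI}) = \underset{{\varvec{\beta }} \in {\mathbb {R}}^{n}}{\arg \min } \; \frac{1}{2} \Vert {\varvec{y}} - {\varvec{\beta }}\Vert _{2}^{2} + \lambda _{F}\Vert D{\varvec{\beta }}\Vert _{1} + \lambda _{L}\Vert {\varvec{\beta }}\Vert _{1} + \lambda _{NI}\Vert (D{\varvec{\beta }})_{+}\Vert _{1}, \tag{15}\]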

where D is the oriented incidence matrix of the graph \(G =(V, E)\). Here, we clarify that in the case of penalisation with the incidence matrix D we assume that \({\varvec{\beta }}\) is indexed according to the labelling of the vertices in the graph \(G =(V, E)\). Analogously to the definition in the one-dimensional case, if \(\lambda _{L} = 0\) we call the estimator the fused nearly-isotonic approximator and denote it by \(\hat{{\varvec{\beta }}}^{FNI}({\varvec{y}}, \lambda _{F}, \lambda _{NI})\).

Here, it is worth mentioning recent papers in constrained estimation (Deng and Zhang 2020; Han et al. 2019; Han and Zhang 2020), where the authors studied the asymptotic properties of isotonic regression in general dimensions. Also, in Wang et al. (2015) \(\ell _{1}\)-trend filtering was generalised to the case of a general graph.

1.3 Organisation of the paper

The rest of the paper is organized as follows. In Sect. 2 we provide the numerical solution to the fused lasso nearly-isotonic signal approximator. Section 3 is dedicated to the theoretical properties of the estimator. We show how the solutions to the fused lasso nearly-isotonic regression, fused lasso, and nearly-isotonic regression are related to each other. Also, we prove that in the one-dimensional case the new estimator has the agglomerative property and that the procedures of near-isotonisation and fusion can be swapped while still providing the solution to the original problem. Next, in Sect. 4 we derive an unbiased estimator of the degrees of freedom of the estimator. Furthermore, in Sect. 5 we discuss the computational aspects, carry out a simulation study and show that the estimator is computationally feasible for moderately large data sets. Also, we illustrate the use of the estimator on a real data set. The article closes with a conclusion and a discussion of possible generalisations in Sect. 6. The proofs of all results are given in the Appendix. The R and Python implementations of the estimator are available upon request.

2 Solution to the fused lasso nearly-isotonic signal approximator

First, we consider fused nearly-isotonic regression, i.e. in (15) we assume that \(\lambda _{L} = 0\).

Theorem 1

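For a fixed data vector \({\varvec{y}} \in {\mathbb {R}}^{n}\) indexed by the index set \({\mathcal {I}}\) with the partial order relation \(\preceq \) defined on \({\mathcal {I}}\), the solution to the fused nearly-isotonic problem in (15) is given by

\[\hat{{\varvec{\beta }}}^{FNI}({\varvec{y}}, \lambda _{F}, \lambda _{NI}) = {\varvec{y}} - D^{T}\hat{{\varvec{\nu }}}({\varvec{y}}, \lambda _{F}, \lambda _{NI})\]

with

\[\hat{{\varvec{\nu }}}({\varvec{y}}, \lambda _{F}, \lambda _{NI}) = \underset{{\varvec{\nu }} \in {\mathbb {R}}^{m}}{\arg \min } \; \frac{1}{2} \Vert {\varvec{y}} - D^{T}{\varvec{\nu }}\Vert _{2}^{2} \quad \text{subject to} \quad -\lambda _{F}{\varvec{1}} \le {\varvec{\nu }} \le (\lambda _{F} + \lambda _{NI}){\varvec{1}},\]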

where D is the incidence matrix of the directed graph \(G = (V, E)\) with n vertices and m edges corresponding to \(\preceq \) on \({\mathcal {I}}\), \({\varvec{1}}\in {\mathbb {R}}^{m}\) is the vector whose all elements are equal to 1 and the notation \({\varvec{a}} \le {\varvec{b}}\) for vectors \({\varvec{a}},{\varvec{b}} \in {\mathbb {R}}^{m}\) means \(a_{i} \le b_{i}\) for all \(i = 1, \dots , m\).
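The dual characterisation can be turned into a small numerical routine. The sketch below assumes that the fused nearly-isotonic solution has the form \({\varvec{y}} - D^{T}\hat{{\varvec{\nu }}}\), where \(\hat{{\varvec{\nu }}}\) minimises \(\frac{1}{2}\Vert {\varvec{y}} - D^{T}{\varvec{\nu }}\Vert ^{2}\) over the box \(-\lambda _{F}{\varvec{1}} \le {\varvec{\nu }} \le (\lambda _{F} + \lambda _{NI}){\varvec{1}}\), and solves this box-constrained problem by plain projected gradient descent. Projected gradient is a stand-in chosen here for brevity; it is not the solver used in this paper, and the function name and the iteration count are hypothetical.

```python
import numpy as np

def fused_nearly_isotonic(y, D, lam_f, lam_ni, n_iter=5000):
    """Projected-gradient sketch for the assumed box-constrained dual problem."""
    y = np.asarray(y, dtype=float)
    lower, upper = -lam_f, lam_f + lam_ni
    # Step size 1 / L, where L is the largest eigenvalue of D D^T.
    step = 1.0 / np.linalg.norm(D, 2) ** 2
    nu = np.zeros(D.shape[0])
    for _ in range(n_iter):
        grad = -D @ (y - D.T @ nu)                 # gradient of 0.5 * ||y - D^T nu||^2
        nu = np.clip(nu - step * grad, lower, upper)  # projection onto the box
    return y - D.T @ nu                            # primal solution from the dual one
```

With `lam_f = 0` and a large `lam_ni` the output coincides with the isotonic regression of `y`, which gives a simple sanity check on a path graph. For large graphs a dedicated sparse QP solver is the more sensible choice.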

Next, we provide the solution to the fused lasso nearly-isotonic regression.

Theorem 2

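For a given vector \({\varvec{y}}\) indexed by \({\mathcal {I}}\), the solution to the fused lasso nearly-isotonic signal approximator \(\hat{{\varvec{\beta }}}^{FLNI}({\varvec{y}},\lambda _{F}, \lambda _{L},\lambda _{NI})\) is given by soft thresholding the fused nearly-isotonic regression \(\hat{{\varvec{\beta }}}^{FNI}({\varvec{y}},\lambda _{F}, \lambda _{NI})\), i.e.

\[{\hat{\beta }}^{FLNI}_{{\varvec{i}}}({\varvec{y}},\lambda _{F}, \lambda _{L},\lambda _{NI}) = \begin{cases} {\hat{\beta }}^{FNI}_{{\varvec{i}}}({\varvec{y}},\lambda _{F}, \lambda _{NI}) - \lambda _{L}, & \text{if } {\hat{\beta }}^{FNI}_{{\varvec{i}}}({\varvec{y}},\lambda _{F}, \lambda _{NI}) \ge \lambda _{L},\\ 0, & \text{if } |{\hat{\beta }}^{FNI}_{{\varvec{i}}}({\varvec{y}},\lambda _{F}, \lambda _{NI})| < \lambda _{L},\\ {\hat{\beta }}^{FNI}_{{\varvec{i}}}({\varvec{y}},\lambda _{F}, \lambda _{NI}) + \lambda _{L}, & \text{if } {\hat{\beta }}^{FNI}_{{\varvec{i}}}({\varvec{y}},\lambda _{F}, \lambda _{NI}) \le -\lambda _{L},\end{cases}\]

for \({{\varvec{i}}}\in {\mathcal {I}}\).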

From this result we can conclude that adding lasso penalisation does not add much to the computational complexity of the solution. The computational aspects of fused nearly-isotonic approximator will be discussed in Sect. 5 below. In the next section we discuss properties of the fused lasso nearly-isotonic regression.
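The soft-thresholding step is a one-line elementwise operation, which is why the lasso penalty adds little to the cost. A minimal sketch, assuming the standard soft-thresholding operator and a hypothetical function name:

```python
import numpy as np

def soft_threshold(beta_fni, lam_l):
    """Shrink every coordinate towards zero by lam_l, setting small ones to zero."""
    beta_fni = np.asarray(beta_fni, dtype=float)
    return np.sign(beta_fni) * np.maximum(np.abs(beta_fni) - lam_l, 0.0)
```

Applied to a fused nearly-isotonic fit, this gives the fit with the lasso penalty in a single pass over the n coordinates.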

3 Properties of the fused lasso nearly-isotonic signal approximator

We start with a proposition which shows how the solutions to the optimization problems (11), (10) and (15) are related to each other. This result will be used in the next section to derive degrees of freedom of the fused lasso nearly-isotonic signal approximator.

Proposition 3

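For a fixed data vector \({\varvec{y}}\) indexed by \({\mathcal {I}}\) and penalisation parameters \(\lambda _{NI}\) and \(\lambda _{F}\) the following relations between estimators \(\hat{{\varvec{\beta }}}^{F}\), \(\hat{{\varvec{\beta }}}^{NI}\) and \(\hat{{\varvec{\beta }}}^{FNI}\) hold

\[\hat{{\varvec{\beta }}}^{FNI}({\varvec{y}}, \lambda _{F}, \lambda _{NI}) = \hat{{\varvec{\beta }}}^{NI}({\varvec{y}} + \lambda _{F}D^{T}{\varvec{1}}, \, \lambda _{NI} + 2\lambda _{F})\]

and

\[\hat{{\varvec{\beta }}}^{FNI}({\varvec{y}}, \lambda _{F}, \lambda _{NI}) = \hat{{\varvec{\beta }}}^{F}({\varvec{y}} - \tfrac{1}{2}\lambda _{NI}D^{T}{\varvec{1}}, \, \lambda _{F} + \tfrac{1}{2}\lambda _{NI}),\]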

where D is the oriented incidence matrix for the graph \(G = (V, E)\) corresponding to the partial order relation \(\preceq \) on \({\mathcal {I}}\).
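The relations between the three estimators can be motivated by a change of variables in the dual problems. The following sketch assumes that each estimator has the dual form \({\varvec{y}} - D^{T}\hat{{\varvec{\nu }}}\) with a box constraint on \({\varvec{\nu }}\): \([0, \lambda _{NI}]\) for nearly-isotonic regression, \([-\lambda _{F}, \lambda _{F}]\) for fusion, and \([-\lambda _{F}, \lambda _{F} + \lambda _{NI}]\) for the fused nearly-isotonic problem. It is an illustration under these assumptions and not a substitute for the proof in the Appendix.

```latex
% Assumed dual form: \hat\beta = y - D^T \hat\nu, with \hat\nu minimising
% (1/2) ||y - D^T \nu||^2 over a box; only the box differs between estimators.
\begin{aligned}
\text{FNI box:}\quad & -\lambda_F \mathbf{1} \le \nu \le (\lambda_F + \lambda_{NI})\mathbf{1}. \\[4pt]
\text{Shift } \nu = u - \lambda_F \mathbf{1}:\quad
 & y - D^T\nu = (y + \lambda_F D^T\mathbf{1}) - D^T u,
 \qquad 0 \le u \le (2\lambda_F + \lambda_{NI})\mathbf{1}, \\
 & \Longrightarrow\ \hat\beta^{FNI}(y, \lambda_F, \lambda_{NI})
   = \hat\beta^{NI}\big(y + \lambda_F D^T\mathbf{1},\ 2\lambda_F + \lambda_{NI}\big). \\[4pt]
\text{Shift } \nu = w + \tfrac12\lambda_{NI}\mathbf{1}:\quad
 & y - D^T\nu = (y - \tfrac12\lambda_{NI} D^T\mathbf{1}) - D^T w,
 \qquad |w| \le (\lambda_F + \tfrac12\lambda_{NI})\mathbf{1}, \\
 & \Longrightarrow\ \hat\beta^{FNI}(y, \lambda_F, \lambda_{NI})
   = \hat\beta^{F}\big(y - \tfrac12\lambda_{NI} D^T\mathbf{1},\ \lambda_F + \tfrac12\lambda_{NI}\big).
\end{aligned}
```

In both cases the shift only moves the box and adds a fixed vector to the data, so the minimiser of the shifted problem yields the same primal vector.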

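Further, let us introduce two “naive” versions of \(\hat{{\varvec{\beta }}}^{FNI}\). Instead of simultaneously penalising by fusion and isotonisation, we consider the following two-step procedures:

\[\hat{{\varvec{\beta }}}^{F\rightarrow NI}({\varvec{y}}, \lambda _{F}, \lambda _{NI}) = \hat{{\varvec{\beta }}}^{NI}\big (\hat{{\varvec{\beta }}}^{F}({\varvec{y}}, \lambda _{F}), \lambda _{NI}\big )\]

and

\[\hat{{\varvec{\beta }}}^{NI\rightarrow F}({\varvec{y}}, \lambda _{NI}, \lambda _{F}) = \hat{{\varvec{\beta }}}^{F}\big (\hat{{\varvec{\beta }}}^{NI}({\varvec{y}}, \lambda _{NI}), \lambda _{F}\big ).\]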

Below we prove that both “naive” methods in the one-dimensional case with a simple monotonic restriction defined above are not only equivalent, but both methods provide the solution to the fused nearly-isotonic regression.

First, we have to prove that, analogously to fused lasso and nearly-isotonic regression, as one of the penalization parameters increases, the constant regions in the solution \(\hat{{\varvec{\beta }}}^{FLNI}\) can only be joined together and not split apart. In the paper Minami (2020) this property of the estimator was called agglomerative property. We prove this result only for the one-dimensional monotonic order, and the general case is an open question.

Proposition 4

(Agglomerative property of FLNI estimator) Let \({\mathcal {I}} = \{1, \dots , n\}\) with the natural order for integers defined on it. Next, let \({\varvec{\lambda }} = (\lambda _{F}, \lambda _{L}, \lambda _{NI})\) and \({\varvec{\lambda }}^{*}= (\lambda _{F}^{*}, \lambda _{L}^{*}, \lambda _{NI}^{*})\) be triples of penalisation parameters such that one of the elements of \({\varvec{\lambda }}^{*}\) is greater than the corresponding element of \({\varvec{\lambda }}\), while the two others are the same. Next, assume that for some i the solution \(\hat{{\varvec{\beta }}}^{FLNI}({\varvec{y}},{\varvec{\lambda }})\) satisfies

\[{\hat{\beta }}^{FLNI}_{i}({\varvec{y}},{\varvec{\lambda }}) = {\hat{\beta }}^{FLNI}_{i+1}({\varvec{y}},{\varvec{\lambda }}).\]

Then for \({\varvec{\lambda }}^{*}\) we have

\[{\hat{\beta }}^{FLNI}_{i}({\varvec{y}},{\varvec{\lambda }}^{*}) = {\hat{\beta }}^{FLNI}_{i+1}({\varvec{y}},{\varvec{\lambda }}^{*}).\]

Now we can prove the commutability property of the “naive” estimators and the equivalence of the approach to the fused nearly-isotonic regression.

Theorem 5

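(Commutability property of FNI estimator) Let \(\hat{{\varvec{\beta }}}^{F\rightarrow NI}\!({\varvec{y}}, \lambda _{F}, \lambda _{NI})\) and \(\hat{{\varvec{\beta }}}^{NI\rightarrow F}\!({\varvec{y}}, \lambda _{NI}, \lambda _{F})\) be the “naive” versions of the fused nearly-isotonic approximator, defined in (22) and (23), in the case of one-dimensional monotonic constraint. Then, we have

\[\hat{{\varvec{\beta }}}^{F\rightarrow NI}({\varvec{y}}, \lambda _{F}, \lambda _{NI}) = \hat{{\varvec{\beta }}}^{NI\rightarrow F}({\varvec{y}}, \lambda _{NI}, \lambda _{F}) = \hat{{\varvec{\beta }}}^{FNI}({\varvec{y}}, \lambda _{F}, \lambda _{NI}).\]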

One of the first conclusions of Theorem 5 is commutability of strict isotonisation (which corresponds to the large values of \(\lambda _{NI}\)) and fusion. For big values of \(\lambda _{NI}\), fused lasso nearly-isotonic signal approximation is, in principle, analogous to the approach studied in Gao et al. (2020), where the authors studied estimation of isotonic piecewise constant signals solving the following optimization problem

where

and pen(n, k) is a penalization term which depends on n and k but not on \({\varvec{y}}\). Therefore, the result of Theorem 5 provides an alternative approach to obtaining an exact solution in the estimation of isotonic piecewise constant signals.
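The commutability stated in Theorem 5 can be checked numerically on a small one-dimensional example. The sketch below assumes that fusion, nearly-isotonic regression and their combination all have the dual form \({\varvec{y}} - D^{T}\hat{{\varvec{\nu }}}\) with box constraints \([-\lambda _{F}, \lambda _{F}]\), \([0, \lambda _{NI}]\) and \([-\lambda _{F}, \lambda _{F} + \lambda _{NI}]\), respectively, and solves each by projected gradient descent. The helper names, the random test signal and the parameter values are hypothetical.

```python
import numpy as np

def box_dual_solve(y, D, lower, upper, n_iter=5000):
    """Return y - D^T nu, with nu minimising 0.5*||y - D^T nu||^2 over a box."""
    y = np.asarray(y, dtype=float)
    step = 1.0 / np.linalg.norm(D, 2) ** 2   # 1 / largest eigenvalue of D D^T
    nu = np.zeros(D.shape[0])
    for _ in range(n_iter):
        nu = np.clip(nu + step * (D @ (y - D.T @ nu)), lower, upper)
    return y - D.T @ nu

def fusion(y, D, lam_f):
    return box_dual_solve(y, D, -lam_f, lam_f)

def nearly_isotonic(y, D, lam_ni):
    return box_dual_solve(y, D, 0.0, lam_ni)

def fused_nearly_isotonic(y, D, lam_f, lam_ni):
    return box_dual_solve(y, D, -lam_f, lam_f + lam_ni)

n = 8
D = np.eye(n - 1, n) - np.eye(n - 1, n, k=1)   # rows e_i - e_{i+1} of a path graph
rng = np.random.default_rng(0)
y = rng.normal(size=n)
lam_f, lam_ni = 0.3, 0.4

direct = fused_nearly_isotonic(y, D, lam_f, lam_ni)
f_then_ni = nearly_isotonic(fusion(y, D, lam_f), D, lam_ni)
ni_then_f = fusion(nearly_isotonic(y, D, lam_ni), D, lam_f)
max_gap = max(np.max(np.abs(direct - f_then_ni)),
              np.max(np.abs(direct - ni_then_f)))
```

On the path graph the three vectors agree up to numerical precision, so `max_gap` is at the level of rounding error. The same experiment on a grid graph is a quick way to explore the open question of whether commutability extends beyond one dimension.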

4 Degrees of freedom

In this section we discuss the estimation of the degrees of freedom for the fused nearly-isotonic regression and the fused lasso nearly-isotonic signal approximator. Let us consider the following nonparametric model

\[{\varvec{Y}} = {\varvec{\mathring{\beta }}} + {\varvec{\varepsilon }},\]

where \({\varvec{\mathring{\beta }}}\in {\mathbb {R}}^{n}\) is an unknown signal, and the error term \({\varvec{\varepsilon }}\sim {\mathcal {N}}({\varvec{0}}, \sigma ^{2}{\varvec{I}})\).

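The degrees of freedom is a measure of the complexity of an estimator, and following Efron (1986), for fixed values of \(\lambda _{F}\), \(\lambda _{L}\) and \(\lambda _{NI}\) the degrees of freedom of \(\hat{{\varvec{\beta }}}^{FNI}\) and \(\hat{{\varvec{\beta }}}^{FLNI}\) are given by

\[df(\hat{{\varvec{\beta }}}^{FNI}) = \frac{1}{\sigma ^{2}}\sum _{i=1}^{n}\textrm{Cov}[{\hat{\beta }}^{FNI}_{i}({\varvec{Y}}, \lambda _{F}, \lambda _{NI}), Y_{i}], \qquad df(\hat{{\varvec{\beta }}}^{FLNI}) = \frac{1}{\sigma ^{2}}\sum _{i=1}^{n}\textrm{Cov}[{\hat{\beta }}^{FLNI}_{i}({\varvec{Y}}, \lambda _{F}, \lambda _{L}, \lambda _{NI}), Y_{i}].\]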

The next theorem provides the unbiased estimators of the degrees of freedom \(df(\hat{{\varvec{\beta }}}^{FNI})\) and \(df(\hat{{\varvec{\beta }}}^{FLNI})\).

Theorem 6

For fixed values of \(\lambda _{F}\), \(\lambda _{L}\) and \(\lambda _{NI}\) let

and

Then we have

and

We can potentially use the estimate of degrees of freedom for an unbiased estimation of the true risk \({\mathbb {E}}[\sum _{i=1}^{n}(\mathring{\beta }_{i}- {\hat{\beta }}^{FLNI}_{i}({\varvec{Y}}, \lambda _{F}, \lambda _{L}, \lambda _{NI}))^2]\), which is given by the \({\hat{C}}_{p}\) statistic

We note, however, that in real applications the variance \(\sigma ^{2}\) is unknown. A variance estimator for the case of one-dimensional isotonic regression was introduced in Meyer and Woodroofe (2000). To the authors’ knowledge, a variance estimator even for one-dimensional nearly-isotonic regression is an open problem.

In order to illustrate the performance of the degrees of freedom estimator, we generate \(M = 3000\) independent samples from the following signal on a grid

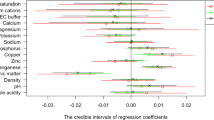

where \(i,j= 1,\dots , 5\) and \({\varvec{\varepsilon }}\sim {\mathcal {N}}(0, 0.25)\). Using Monte-Carlo simulations, we estimate \(\textrm{Cov}[{\hat{\beta }}^{FLNI}_{i}, Y_{i}]\) and compare the resulting Monte-Carlo value of df with the estimator defined in Theorem 6. The result for different values of penalisation parameters is given in Fig. 3.
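The Monte-Carlo side of this comparison can be sketched generically. The code below assumes the covariance definition of degrees of freedom, \(\frac{1}{\sigma ^{2}}\sum _{i}\textrm{Cov}[{\hat{\beta }}_{i}({\varvec{Y}}), Y_{i}]\), and estimates it for any estimator passed in as a function. The function name, the default number of samples and the two toy estimators in the usage note are hypothetical and are not the simulation code behind Fig. 3.

```python
import numpy as np

def monte_carlo_df(estimator, beta0, sigma, n_samples=20000, seed=0):
    """Monte-Carlo estimate of (1 / sigma^2) * sum_i Cov[estimator(Y)_i, Y_i]."""
    rng = np.random.default_rng(seed)
    beta0 = np.asarray(beta0, dtype=float)
    # Each row is one sample Y = beta0 + noise with i.i.d. N(0, sigma^2) errors.
    Y = beta0 + sigma * rng.normal(size=(n_samples, beta0.size))
    fits = np.array([estimator(row) for row in Y])
    # Empirical covariance between fitted value and observation, per coordinate.
    cov = np.mean((fits - fits.mean(axis=0)) * (Y - Y.mean(axis=0)), axis=0)
    return cov.sum() / sigma ** 2
```

Two simple checks are available: the identity map `lambda y: y` should give a value close to n, and the constant fit `lambda y: np.full_like(y, y.mean())` should give a value close to 1. Any fused nearly-isotonic solver can be plugged in as `estimator` in the same way.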

5 Computational aspects, simulation study and application to a real data set

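First of all, recall that the solution to the fused lasso nearly-isotonic approximator is given by

\[{\hat{\beta }}^{FLNI}_{{\varvec{i}}}({\varvec{y}},\lambda _{F}, \lambda _{L},\lambda _{NI}) = \begin{cases} {\hat{\beta }}^{FNI}_{{\varvec{i}}}({\varvec{y}},\lambda _{F}, \lambda _{NI}) - \lambda _{L}, & \text{if } {\hat{\beta }}^{FNI}_{{\varvec{i}}}({\varvec{y}},\lambda _{F}, \lambda _{NI}) \ge \lambda _{L},\\ 0, & \text{if } |{\hat{\beta }}^{FNI}_{{\varvec{i}}}({\varvec{y}},\lambda _{F}, \lambda _{NI})| < \lambda _{L},\\ {\hat{\beta }}^{FNI}_{{\varvec{i}}}({\varvec{y}},\lambda _{F}, \lambda _{NI}) + \lambda _{L}, & \text{if } {\hat{\beta }}^{FNI}_{{\varvec{i}}}({\varvec{y}},\lambda _{F}, \lambda _{NI}) \le -\lambda _{L},\end{cases}\]

for \({{\varvec{i}}}\in {\mathcal {I}}\), with

\[\hat{{\varvec{\beta }}}^{FNI}({\varvec{y}}, \lambda _{F}, \lambda _{NI}) = {\varvec{y}} - D^{T}\hat{{\varvec{\nu }}}({\varvec{y}}, \lambda _{F}, \lambda _{NI}),\]

where

\[\hat{{\varvec{\nu }}}({\varvec{y}}, \lambda _{F}, \lambda _{NI}) = \underset{{\varvec{\nu }} \in {\mathbb {R}}^{m}}{\arg \min } \; \frac{1}{2} \Vert {\varvec{y}} - D^{T}{\varvec{\nu }}\Vert _{2}^{2} \quad \text{subject to} \quad -\lambda _{F}{\varvec{1}} \le {\varvec{\nu }} \le (\lambda _{F} + \lambda _{NI}){\varvec{1}},\]

and D is the incidence matrix displayed in Fig. 1 (a) for the one-dimensional case. In this case the matrix D has full row rank and, therefore, the dual problem is strictly convex. Next, we have box-type constraints similar to those in the \(L_{1}\)-trend filtering problem, and we can solve the problem with \({\mathcal {O}}(n)\) time complexity.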

Second, note that in the one-dimensional case the time complexities of the path solution algorithms for the nearly-isotonic regression and the fusion approximator are equal to \({\mathcal {O}}(n\log (n))\), cf. Tibshirani et al. (2011); Hoefling (2010); Bento et al. (2018) and the references therein. Therefore, if we have \(\lambda _{F}\) fixed, then using the result of Theorem 5 we can get the solution path with respect to \(\lambda _{NI}\) with time complexity \({\mathcal {O}}(n\log (n))\). Further, if we fix \(\lambda _{NI}\) then, again using Theorem 5, we can obtain the solution path with respect to \(\lambda _{F}\) with complexity \({\mathcal {O}}(n\log (n))\). In Yu et al. (2022) the one-dimensional fused nearly-isotonic regression was solved for fixed values of the penalisation parameters. Since the one-dimensional fused lasso and nearly-isotonic regression have thus been studied in detail, in this paper we focus on the two-dimensional case.

The case of several dimensions is more complicated. Note that, for example, even in the case of two dimensions the matrix D, displayed in Fig. 2, does not have full row rank. Therefore, the dual problem is not strictly convex. At the same time one can see that the matrix D is sparse diagonal. Therefore, we apply the recently developed OSQP algorithm, cf. Stellato et al. (2020). The time complexity of the solution is linear with respect to the number of edges in the graph, i.e. it is \({\mathcal {O}}(|E|)\).

The exact solution for fixed values of penalisation parameters can be obtained using the results of the paper Minami (2020), where the author proposed the algorithm for a general graph with computational complexity \({\mathcal {O}}(n|E|\log (\frac{n^{2}}{|E|}))\). Therefore, in principle, using the relation between fused nearly-isotonic regression and nearly-isotonic regression proved in Proposition 3 it is possible to obtain the exact solution to the fused nearly-isotonic approximation for a general graph.

First, recall that from Theorem 2 it follows that the solution with \(\lambda _{L} \ne 0\) is given by soft-thresholding of the solution with \(\lambda _{L} = 0\). Therefore, lasso penalization does not add much to the complexity, and we concentrate on the case with \(\lambda _{L} = 0\). Following Minami (2020), we use the following bi-monotone functions (bisigmoid and bicubic) to test the performance of the fused nearly-isotonic approximator:

where \(x^{(1)} \in [0,1)\) and \(x^{(2)} \in [0,1)\).

The simulation experiment is performed in the following way. First, we generate homogeneous grid \(k\times k\):

for \(k = 1, \dots , d\). The size of the side d varies in \(\{ 2\times 10^{2}, 4\times 10^{2}, 6\times 10^{2}, 8\times 10^{2}, 10^{3} \}\). Next, we uniformly generate penalisation parameters \(\lambda _{F}\) and \(\lambda _{NI}\) from U(0, 5). We perform 10 runs and record the computation times for each d. Analogously to Stellato et al. (2020), we consider two settings of the OSQP algorithm: a low-precision case with \(\varepsilon _{abs} = \varepsilon _{rel} = 10^{-3}\), and a high-precision case with \(\varepsilon _{abs} = \varepsilon _{rel} = 10^{-5}\) (for the details of the settings in OSQP we refer to Stellato et al. (2020)). Figure 4 below shows these computation times. All computations were performed on a MacBook Air (Apple M1 chip) with 16 GB RAM. From these results we can conclude that the estimator is computationally feasible for moderately sized data sets (i.e. for the grids with millions of nodes).

Next, Fig. 5 visualizes the fused nearly-isotonic approximator. We use the Adult data set, available from the UCI Machine Learning repository (Becker and Kohavi 1996). The target variable in this data set is whether a person’s salary is greater than 50 000 dollars per year or not. We use two features (education number and working hours per week), and each bar in the figure is the proportion of people earning more than this amount. This data set was used, for example, in Wang et al. (2022).

From Fig. 5 we can see that fused nearly-isotonic regression provides a trade-off between monotonicity, block sparsity and goodness-of-fit.

6 Conclusion and discussion

In this paper we introduced and studied the fused lasso nearly-isotonic signal approximator in general dimensions. The main result is that the estimator is computationally feasible and it provides interplay between fusion and monotonisation. Also, we proved that the properties of the new estimator are very similar to the properties of the fusion estimator and the nearly-isotonic regression.

In our opinion, one of the most important results is Theorem 5, where we proved the commutability property of fusion and nearly-isotonisation, because for a fixed value of one of the penalisation parameters we can immediately obtain the path solution with respect to the other one. Path algorithms for the fused lasso exist (Hoefling 2010; Tibshirani and Taylor 2011). At the same time, to the authors’ knowledge, a path algorithm for nearly-isotonic regression in general dimensions has not been developed yet. Therefore, a further direction could be to develop a path solution for nearly-isotonic regression and, next, to establish whether commutability holds in the general-dimensional case.

One of the other possible directions is to study the asymptotic properties. In particular, it is interesting to understand the rate of convergence for different model selection and cross-validation procedures of choosing penalisation parameters.

Another direction is to study properties of the solution when \(\lambda _{F}\) and \(\lambda _{NI}\) are not the same for each vertex. An example where one must use different penalisation parameters is the case when the data points are measured along a non-homogeneously spaced grid. It is important to note that, as discussed in Minami (2020), this case is different and even in the one-dimensional case the estimator will behave differently. In particular, the agglomerative property of the nearly-isotonic regression holds if the penalisation parameters satisfy a certain relation, cf. Proposition A.1. in Minami (2020), which is crucial for the solution path.

Finally, in our opinion, it is interesting to study different combinations of penalisation estimators, even though, practically, in this case one needs more data, because there will be more penalisation parameters to estimate.

References

Becker, B., Kohavi, R.: UCI Machine Learning Repository (1996). http://archive.ics.uci.edu/ml

Bento, J., Furmaniak, R., Ray, S.: On the complexity of the weighted fused lasso. IEEE Signal Process. Lett. 25(10), 1595–1599 (2018)

Beran, R., Dümbgen, L.: Least squares and shrinkage estimation under bimonotonicity constraints. Stat. Comput. 20(2), 177–189 (2010)

Best, M.J., Chakravarti, N.: Active set algorithms for isotonic regression; a unifying framework. Math. Program. 47(1), 425–439 (1990)

Deng, H., Zhang, C.-H.: Isotonic regression in multi-dimensional spaces and graphs. Ann. Stat. 48(6), 3672–3698 (2020)

Efron, B.: How biased is the apparent error rate of a prediction rule? J. Am. Stat. Assoc. 81(394), 461–470 (1986)

Friedman, J., Hastie, T., Höfling, H., Tibshirani, R.: Pathwise coordinate optimization. Ann. Appl. Stat. 1(2), 302–332 (2007)

Gaines, B.R., Kim, J., Zhou, H.: Algorithms for fitting the constrained lasso. J. Comput. Graph. Stat. 27(4), 861–871 (2018)

Gao, C., Han, F., Zhang, C.-H.: On estimation of isotonic piecewise constant signals. Ann. Stat. 48(2), 629–654 (2020)

Gómez, A., He, Z., Pang, J.-S.: Linear-step solvability of some folded concave and singly-parametric sparse optimization problems. Math. Program. 198, 1–42 (2022)

Han, Q., Zhang, C.-H.: Limit distribution theory for block estimators in multiple isotonic regression. Ann. Stat. 48(6), 3251–3282 (2020)

Han, Q., Wang, T., Chatterjee, S., Samworth, R.J.: Isotonic regression in general dimensions. Ann. Stat. 47(5), 2440–2471 (2019)

Hoefling, H.: A path algorithm for the fused lasso signal approximator. J. Comput. Graph. Stat. 19(4), 984–1006 (2010)

Hoerl, A.E., Kennard, R.W.: Ridge regression: Biased estimation for nonorthogonal problems. Technometrics 12(1), 55–67 (1970)

Kim, S.-J., Koh, K., Boyd, S., Gorinevsky, D.: \(\ell _1\) trend filtering. SIAM Rev. 51(2), 339–360 (2009)

Meyer, M., Woodroofe, M.: On the degrees of freedom in shape-restricted regression. Ann. Stat. 28(4), 1083–1104 (2000)

Minami, K.: Estimating piecewise monotone signals. Electron. J. Stat. 14(1), 1508–1576 (2020)

Phillips, D.L.: A technique for the numerical solution of certain integral equations of the first kind. J. ACM 9(1), 84–97 (1962)

Rinaldo, A.: Properties and refinements of the fused lasso. Ann. Stat. 37(5B), 2922–2952 (2009)

Robertson, T., Wright, F.T., Dykstra, R.L.: Order Restricted Statistical Inference. Wiley, New York (1988)

Stellato, B., Banjac, G., Goulart, P., Bemporad, A., Boyd, S.: OSQP: an operator splitting solver for quadratic programs. Math. Progr. Comput. 12(4), 637–672 (2020)

Stout, Q.F.: Isotonic regression via partitioning. Algorithmica 66(1), 93–112 (2013)

Tibshirani, R.: Regression shrinkage and selection via the lasso. J. Roy. Stat. Soc.: Ser. B (Methodol.) 58(1), 267–288 (1996)

Tibshirani, R.J., Taylor, J.: The solution path of the generalized lasso. Ann. Stat. 39(3), 1335–1371 (2011)

Tibshirani, R., Saunders, M., Rosset, S., Zhu, J., Knight, K.: Sparsity and smoothness via the fused lasso. J. R. Stat. Soc. Ser. B (Stat. Methodol.) 67(1), 91–108 (2005)

Tibshirani, R.J., Hoefling, H., Tibshirani, R.: Nearly-isotonic regression. Technometrics 53(1), 54–61 (2011)

Tikhonov, A.N., Goncharsky, A., Stepanov, V., Yagola, A.G.: Numerical Methods for the Solution of Ill-posed Problems, vol. 328. Springer, Dordrecht (1995)

Wang, Y.-X., Sharpnack, J., Smola, A., Tibshirani, R.: Trend filtering on graphs. In: Artificial Intelligence and Statistics, pp. 1042–1050. PMLR (2015)

Wang, X., Ying, J., Cardoso, J.V.M., Palomar, D.P.: Efficient algorithms for general isotone optimization. In: Proceedings of the AAAI Conference on Artificial Intelligence, vol. 36, pp. 8575–8583 (2022)

Yu, Z., Chen, X., Li, X.: A dynamic programming approach for generalized nearly isotonic optimization. Math. Program. Comput. 15, 1–31 (2022)

Acknowledgements

This work was partially supported by the Wallenberg AI, Autonomous Systems and Software Program (WASP) funded by the Knut and Alice Wallenberg Foundation.

Funding

Open access funding provided by University of Vienna.

Author information

Contributions

I am the only author of this paper.

Ethics declarations

Competing interests

The authors declare no competing interests.

Additional information

Publisher's Note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Appendix A Proofs of the results

Proof of Theorem 1

First, following the derivations of \(\ell _{1}\) trend filtering and generalised lasso in Kim et al. (2009) and Tibshirani and Taylor (2011), respectively, we can write the optimization problem in (6) in the following way

Further, the Lagrangian is given by

where \({\varvec{\nu }} \in {\mathbb {R}}^{m}\) is a dual variable.

Note that

and

Next, the dual function is given by

and, therefore, the dual problem is

which is equivalent to

Lastly, taking first derivative of Lagrangian \(L({\varvec{\beta }}, {\varvec{z}}, {\varvec{\nu }})\) with respect to \({\varvec{\beta }}\) we get the following relation between \(\hat{{\varvec{\beta }}}^{FNI}(\lambda _{F}, \lambda _{NI})\) and \(\hat{{\varvec{\nu }}}({\varvec{y}}, \lambda _{F}, \lambda _{NI})\)

\(\Box \)
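The dual argument above can be illustrated numerically. The following is a minimal sketch, not the author's implementation: it assumes a one-dimensional chain with \((D{\varvec{\beta }})_{i} = \beta _{i} - \beta _{i+1}\), the loss \(\frac{1}{2}\Vert {\varvec{y}} - {\varvec{\beta }}\Vert ^{2}\), and that the dual reduces to a box-constrained least squares problem with \(-\lambda _{F} \le \nu _{i} \le \lambda _{F} + \lambda _{NI}\) and \(\hat{{\varvec{\beta }}} = {\varvec{y}} - D^{T}\hat{{\varvec{\nu }}}\). The function names and the choice of projected gradient descent are ours.

```python
import numpy as np


def chain_difference_matrix(n):
    """Rows e_i - e_{i+1}, so that (D @ beta)[i] = beta[i] - beta[i+1]."""
    D = np.zeros((n - 1, n))
    idx = np.arange(n - 1)
    D[idx, idx] = 1.0
    D[idx, idx + 1] = -1.0
    return D


def fused_nearly_isotonic(y, lam_f, lam_ni, n_iter=20000):
    """Projected-gradient sketch for the assumed box-constrained dual.

    Assumed dual: minimise 0.5 * ||y - D^T nu||^2
                  subject to -lam_f <= nu <= lam_f + lam_ni,
    with the primal solution recovered as beta = y - D^T nu.
    """
    y = np.asarray(y, dtype=float)
    D = chain_difference_matrix(y.size)
    nu = np.zeros(D.shape[0])
    # On a chain the eigenvalues of D D^T are below 4, so 1/4 is a safe step.
    step = 0.25
    for _ in range(n_iter):
        residual = y - D.T @ nu
        # Gradient step on the dual objective, then projection onto the box.
        nu = np.clip(nu + step * (D @ residual), -lam_f, lam_f + lam_ni)
    return y - D.T @ nu
```

With \(\lambda _{F} = 0\) and a large \(\lambda _{NI}\) the sketch should approach the isotonic regression of the data, and with \(\lambda _{NI} = 0\) and a large \(\lambda _{F}\) it should return the sample mean in every coordinate. The fixed iteration count is chosen for short signals only.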

Proof of Theorem 2

The proof is similar to the derivation of the solution of the fused lasso in Friedman et al. (2007). Nevertheless, for completeness of the paper we provide the proof for \(\hat{{\varvec{\beta }}}^{FLNI}({\varvec{y}},\lambda _{F}, \lambda _{L},\lambda _{NI})\).

The subgradient equations (which are necessary and sufficient conditions for the solution of (5)) for \(\beta _{{\varvec{i}}}\), with \({\varvec{i}}\in {\mathcal {I}}\), are

where

Next, let \(q_{{\varvec{i}}, {\varvec{j}}}(\lambda _{L})\), \(t_{{\varvec{i}}, {\varvec{j}}}(\lambda _{L})\) and \(s_{{\varvec{i}}}(\lambda _{L})\) denote the values of the parameters defined above at some value of \(\lambda _{L}\). Note, the values of \(\lambda _{NI}\) and \(\lambda _{F}\) are fixed. Therefore, if \({\hat{\beta }}_{{\varvec{i}}}^{FLNI}({\varvec{y}},\lambda _{F}, 0, \lambda _{NI}) \ne 0\) for \(s_{{\varvec{i}}}(0)\) we have

and for \({\hat{\beta }}_{{\varvec{i}}}^{FLNI}({\varvec{y}}, \lambda _{F}, 0, \lambda _{NI}) = 0\) we can set \(s_{{\varvec{i}}}(0) = 0\).

Next, let \(\hat{{\varvec{\beta }}}^{ST}(\lambda _{L})\) denote the soft thresholding of \(\hat{{\varvec{\beta }}}^{FLNI}({\varvec{y}}, \lambda _{F}, 0, \lambda _{NI})\), i.e.

The goal is to prove that \(\hat{{\varvec{\beta }}}^{ST}(\lambda _{L})\) provides the solution to (14).

Note, analogously to the proof for the fused lasso estimator in Lemma A.1 of Friedman et al. (2007), if either \({\hat{\beta }}_{{\varvec{i}}}^{ST}(\lambda _{L}) \ne 0\) or \({\hat{\beta }}_{{\varvec{j}}}^{ST}(\lambda _{L}) \ne 0\), and \({\hat{\beta }}_{{\varvec{i}}}^{ST}(\lambda _{L}) < {\hat{\beta }}_{{\varvec{j}}}^{ST}(\lambda _{L})\) or \({\hat{\beta }}_{{\varvec{i}}}^{ST}(\lambda _{L}) > {\hat{\beta }}_{{\varvec{j}}}^{ST}(\lambda _{L})\), then we also have \({\hat{\beta }}_{{\varvec{i}}}^{ST}(0) < {\hat{\beta }}_{{\varvec{j}}}^{ST}(0)\) or \({\hat{\beta }}_{{\varvec{i}}}^{ST}(0) > {\hat{\beta }}_{{\varvec{j}}}^{ST}(0)\), respectively. Therefore, soft thresholding of \(\hat{{\varvec{\beta }}}^{FLNI}({\varvec{y}}, \lambda _{F}, 0, \lambda _{NI})\) does not change the ordering of these pairs and we have \(q_{{\varvec{i}}, {\varvec{j}}}(\lambda _{L}) = q_{{\varvec{i}},{\varvec{j}}}(0)\) and \(t_{{\varvec{i}}, {\varvec{j}}}(\lambda _{L}) = t_{{\varvec{i}},{\varvec{j}}}(0)\). Next, if for some \(({\varvec{i}}, {\varvec{j}})\in E\) we have \({\hat{\beta }}_{{\varvec{i}}}^{ST}(\lambda _{L}) = {\hat{\beta }}_{{\varvec{j}}}^{ST}(\lambda _{L}) = 0\), then \(q_{{\varvec{i}}, {\varvec{j}}} \in [0,1]\) and \(t_{{\varvec{i}}, {\varvec{j}}} \in [-1, 1]\), and, again, we can set \(t_{{\varvec{i}}, {\varvec{j}}}(\lambda _{L}) = t_{{\varvec{i}}, {\varvec{j}}}(0)\), and \(q_{{\varvec{i}}, {\varvec{j}}}(\lambda _{L}) = q_{{\varvec{i}}, {\varvec{j}}}(0)\).

Now let us insert \({\hat{\beta }}_{{\varvec{i}}}^{ST}(\lambda _{L})\) into the subgradient equations (A2) and show that we can find \(s_{{\varvec{i}}}(\lambda _{L}) \in [-1,1]\), for all \({\varvec{i}}\in {\mathcal {I}}\).

First, assume that for some \({\varvec{i}}\) we have \({\hat{\beta }}_{{\varvec{i}}}^{FLNI}({\varvec{y}}, \lambda _{F}, 0, \lambda _{NI}) \ge \lambda _{L}\). Then

Note that

because \(\hat{{\varvec{\beta }}}^{FNI}({\varvec{y}}, \lambda _{F}, \lambda _{NI}) \equiv \hat{{\varvec{\beta }}}^{FLNI}({\varvec{y}},\lambda _{F}, 0, \lambda _{NI})\).

Therefore, if \(s_{i}(\lambda _{L}) = \textrm{sign}{{\hat{\beta }}_{{\varvec{i}}}^{ST}(\lambda _{L})} = 1\), then \(g_{{\varvec{i}}}(\lambda _{L}) = 0\).

The proof for the case when \({\hat{\beta }}_{{\varvec{i}}}^{FLNI}({\varvec{y}}, \lambda _{F}, 0, \lambda _{NI}) \le -\lambda _{L}\) is similar and one can show that \(g_{{\varvec{i}}}(\lambda _{L}) = 0\) if \(s_{{\varvec{i}}}(\lambda _{L}) = \textrm{sign}{{\hat{\beta }}_{{\varvec{i}}}^{ST}(\lambda _{L})} = -1\).

Second, assume that \(|{\hat{\beta }}_{{\varvec{i}}}^{FLNI}({\varvec{y}}, \lambda _{F}, 0, \lambda _{NI})| < \lambda _{L}\). Then, \({\hat{\beta }}_{{\varvec{i}}}^{ST}(\lambda _{L}) = 0\), and

Next, if we let \(s_{{\varvec{i}}}(\lambda _{L}) = {\hat{\beta }}_{{\varvec{i}}}^{FLNI}({\varvec{y}},\lambda _{F}, 0, \lambda _{NI})/\lambda _{L}\), then, again, we have

Therefore, we have proved that \(\hat{{\varvec{\beta }}}^{FLNI}({\varvec{y}},\lambda _{F}, \lambda _{L},\lambda _{NI}) = \hat{{\varvec{\beta }}}^{ST}(\lambda _{L})\). \(\Box \)
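The statement just proved says that the lasso penalty can be handled as a post-processing step. As a minimal sketch, the coordinate-wise soft-thresholding operator can be written as follows; the function name and the NumPy-based signature are illustrative choices, and the input is assumed to be an already computed solution \(\hat{{\varvec{\beta }}}^{FLNI}({\varvec{y}}, \lambda _{F}, 0, \lambda _{NI})\).

```python
import numpy as np


def soft_threshold(beta_fni, lam_l):
    """Coordinate-wise soft thresholding at level lam_l >= 0.

    Entries with |beta| <= lam_l are set to zero; all other entries
    are moved towards zero by lam_l.
    """
    beta_fni = np.asarray(beta_fni, dtype=float)
    return np.sign(beta_fni) * np.maximum(np.abs(beta_fni) - lam_l, 0.0)
```

The three cases treated in the proof correspond to entries above \(\lambda _{L}\), below \(-\lambda _{L}\), and inside \([-\lambda _{L}, \lambda _{L}]\).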

Proof of Proposition 3

First, from Tibshirani et al. (2011) the solution to the nearly-isotonic problem is given by

with

and from Tibshirani and Taylor (2011) it follows

with

Second, let us introduce a new variable \({\varvec{v}}^{*} = {\varvec{v}} - \frac{\lambda _{NI}}{2} {\varvec{1}}\). Then

where

Therefore, we have proved that \(\hat{{\varvec{\beta }}}^{NI}({\varvec{y}}, \lambda _{NI}) = \hat{{\varvec{\beta }}}^{F}({\varvec{y}} - \frac{\lambda _{NI}}{2} D^{T}{\varvec{1}}, \frac{1}{2}\lambda _{NI})\).
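The identity above can also be seen at the level of objective functions, since \((x)_{+} = \frac{1}{2}(|x| + x)\). The sketch below checks numerically that the nearly-isotonic objective and the fusion objective with shifted data differ only by a term that does not depend on \({\varvec{\beta }}\), so both have the same minimiser. It assumes the loss \(\frac{1}{2}\Vert {\varvec{y}} - {\varvec{\beta }}\Vert ^{2}\) and the penalties \(\lambda _{NI}\sum (D{\varvec{\beta }})_{+}\) and \(\lambda _{F}\Vert D{\varvec{\beta }}\Vert _{1}\); the function names are ours.

```python
import numpy as np


def ni_objective(beta, y, D, lam_ni):
    """Assumed nearly-isotonic objective: squared loss plus positive parts of D beta."""
    return 0.5 * np.sum((y - beta) ** 2) + lam_ni * np.sum(np.maximum(D @ beta, 0.0))


def fusion_objective(beta, y, D, lam_f):
    """Assumed fusion objective: squared loss plus the l1 norm of D beta."""
    return 0.5 * np.sum((y - beta) ** 2) + lam_f * np.sum(np.abs(D @ beta))


def objective_gap(beta, y, D, lam_ni):
    """NI objective at (y, lam_ni) minus fusion objective at the shifted data.

    The shifted data are y - (lam_ni / 2) D^T 1 and the fusion penalty is lam_ni / 2.
    """
    ones = np.ones(D.shape[0])
    y_shifted = y - 0.5 * lam_ni * (D.T @ ones)
    return ni_objective(beta, y, D, lam_ni) - fusion_objective(beta, y_shifted, D, 0.5 * lam_ni)
```

Evaluating `objective_gap` at several different vectors `beta` with the same `y`, `D` and `lam_ni` should return the same number each time, which is the numerical counterpart of the change of variable used in the proof.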

The proof for the fused lasso nearly-isotonic estimator is the same with the change of variable \({\varvec{u}}^{*} = {\varvec{u}} + D^{T}\lambda _{F} {\varvec{1}}\) in (16) and (17) for the proof of the first equality in (20) and with \({\varvec{u}}^{*} = {\varvec{u}} - \frac{\lambda _{NI}}{2} {\varvec{1}}\) for the second equality.

Next, we prove the result for the fused lasso nearly-isotonic approximator. From Theorem 2 we have

for \({\varvec{i}} \in {\mathcal {I}}\).

Further, using (20) we have

if \({\hat{\beta }}^{F}_{{\varvec{i}}}({\varvec{y}} - \frac{\lambda _{NI}}{2} D^{T}{\varvec{1}}, \frac{1}{2}\lambda _{NI} + \lambda _{F}) \ge \lambda _{L}\),

if \(|{\hat{\beta }}^{F}_{{\varvec{i}}}({\varvec{y}} - \frac{\lambda _{NI}}{2} D^{T}{\varvec{1}}, \frac{1}{2}\lambda _{NI} + \lambda _{F})| \le \lambda _{L}\),

if \({\hat{\beta }}^{F}_{{\varvec{i}}}({\varvec{y}} - \frac{\lambda _{NI}}{2} D^{T}{\varvec{1}}, \frac{1}{2}\lambda _{NI} + \lambda _{F}) \le -\lambda _{L}\).

Therefore, we obtain

\(\Box \)

Proof of Proposition 4

Let us consider the following cases separately.

Case 1: \(\lambda _{NI}\) and \(\lambda _{F}\) are fixed and \(\lambda _{L}^{*} > \lambda _{L}\). The result of the proposition for this case follows directly from Theorem 2.

Case 2: \(\lambda _{F}\) and \(\lambda _{L}\) are fixed and \(\lambda _{NI}^{*} > \lambda _{NI}\). Let us consider the fused nearly-isotonic regression and write the subgradient equations

where \(q_{i}\) and \(t_{i}\), with \(i = 1, \dots , n\), are defined in (A3), and, analogously to the proof of Theorem 2, \(q(\lambda _{NI})\), \(t(\lambda _{NI})\) denote the values of the parameters defined above at some value of \(\lambda _{NI}\).

Assume that for \(\lambda _{NI}\) in the solution \(\hat{{\varvec{\beta }}}^{FNI}({\varvec{y}}, \lambda _{F}, \lambda _{NI})\) we have the following constant region

and in the same way as in Tibshirani et al. (2011) for \(\lambda _{NI}^{*}\) we consider the subset of the subgradient equations

with \(i = j, \dots , k\), and show that there exists the solution for which (A4) holds, \(q_{i} \in [0, 1]\) and \(t_{i} \in [-1, 1]\).

Note first that as \(\lambda _{NI}\) increases, (A4) holds until the merge with other groups happens, which means that \(q_{j-1}, q_{j+k} \in \{0, 1\}\) and \(t_{j-1}, t_{j+k} \in \{-1, 1\}\) will not change their values until the merge of this constant region. Also, as it follows from (A3), for \(i \in [j, j+ k]\) the value of \(t_{i}\) is in \([-1,1]\). Therefore, without any violation of the restrictions on \(t_{i}\) we can assume that \(t_{i}(\lambda _{NI}^{*}) = t_{i}(\lambda _{NI})\) for any \(i \in [j, j + k - 1]\).

Next, taking pairwise differences between subgradient equations for \(\lambda _{NI}\) we have

where D is displayed in Fig. 1,

and

Since A is invertible we have

and, since \(\tilde{{\varvec{q}}}(\lambda _{NI})\) and \(\tilde{{\varvec{t}}}(\lambda _{NI})\) provide the solution to the subgradient equations (A5), then

Next, as pointed out in Friedman et al. (2007) and Tibshirani et al. (2011)

then, one can show that

Further, let us consider the case of \(\lambda _{NI}^{*} > \lambda _{NI}\). Then we have

Recall that above we set \(\tilde{{\varvec{t}}}(\lambda _{NI}^{*}) = \tilde{{\varvec{t}}}(\lambda _{NI})\), and that \(q_{j-1}, q_{j+k}, t_{j-1}\) and \(t_{j+k}\) do not change their values until the merge, which means that \({\varvec{c}}(\lambda _{NI}^{*}) = {\varvec{c}}(\lambda _{NI})\), and \({\varvec{d}}(\lambda _{NI}^{*}) = {\varvec{d}}(\lambda _{NI})\).

Therefore, the subgradient equations for \(\lambda _{NI}^{*}\) can be written as

Next, since the term \(A^{-1}D\tilde{{\varvec{y}}}\) is not changed, \(-\lambda _{F} \le \lambda _{F}\tilde{{\varvec{t}}}(\lambda _{NI}) \le \lambda _{F}\), and

then we have

Therefore we proved that \({\hat{\beta }}_{i}^{FNI}({\varvec{y}},{\varvec{\lambda }}^{*}) = {\hat{\beta }}_{i+1}^{FNI}({\varvec{y}},{\varvec{\lambda }}^{*})\). Since \({\hat{\beta }}_{i}^{FLNI}({\varvec{y}},{\varvec{\lambda }}^{*})\) is given by soft thresholding of \({\hat{\beta }}_{i}^{FNI}({\varvec{y}},{\varvec{\lambda }}^{*})\), then \({\hat{\beta }}_{i}^{FLNI}({\varvec{y}},{\varvec{\lambda }}^{*}) = {\hat{\beta }}_{i+1}^{FLNI}({\varvec{y}},{\varvec{\lambda }}^{*})\) for \(i \in [j, k]\).

Case 3: \(\lambda _{NI}\) and \(\lambda _{L}\) are fixed and \(\lambda _{F}^{*} > \lambda _{F}\). The proof for this case is virtually identical to the proof for Case 2. In this case we assume that \(q_{i}(\lambda _{F}^{*}) = q_{i}(\lambda _{F})\) for any \(i \in [j, j + k-1]\). Next, \(q_{j-1}, q_{j+k}, t_{j-1}\) and \(t_{j+k}\) do not change their values until the merge, which, again, means that \({\varvec{c}}(\lambda _{F}^{*}) = {\varvec{c}}(\lambda _{F})\), and \({\varvec{d}}(\lambda _{F}^{*}) = {\varvec{d}}(\lambda _{F})\). Finally, we can show that

\(\Box \)

Proof of Theorem 5

For some fixed \(\lambda _{F}\) and \(\lambda _{NI}\), let us write subgradient equations for the fused lasso nearly-isotonic approximator:

for \(i = 1, \dots , n\), where \(q_{i}\) and \(t_{i}\), with \(i = 1, \dots , n-1\), are given by

and \(q_{0} = q_{n} = t_{0} = t_{n} = 0\).

Second, assume that in the solution \(\hat{{\varvec{\beta }}}^{FNI}({\varvec{y}}, \lambda _{F}, \lambda _{NI})\) there are K distinct constant regions \({\mathcal {A}}(\lambda _{F}, \lambda _{NI}) = \{ A_{1}, \dots , A_{K} \}\), and \(f_{j}\) and \(l_{j}\) are the first and last indices, respectively, in the region \(A_{j}\). Therefore, using the telescoping sums, for \(k\in A_{j}\) the solution \(\hat{{\varvec{\beta }}}^{FNI}({\varvec{y}}, \lambda _{F}, \lambda _{NI})\) can be written as

with \(|A_{j}| = \#\{j: y_{j}\in A_{j} \}\).

We, first, prove that

Let us fix some \(\lambda _{F}\), and take \(\lambda _{NI}^{*} > \lambda _{NI}\) such that \(\hat{{\varvec{\beta }}}^{FNI}({\varvec{y}}, \lambda _{F}, \lambda _{NI}^{*})\) has the same constant regions as \(\hat{{\varvec{\beta }}}^{FNI}({\varvec{y}}, \lambda _{F}, \lambda _{NI})\). Therefore, analogously to the case of one-dimensional nearly-isotonic regression in Tibshirani et al. (2011), for a fixed \(\lambda _{F}\) the solution is a linear function of \(\lambda _{NI}\) between the values of \(\lambda _{NI}\) (which are called knots) at which some constant regions merge.

Assume now that \(\lambda _{NI} = 0\). Next, assume that in the solution \(\hat{{\varvec{\beta }}}^{FNI}({\varvec{y}}, \lambda _{F}, 0)\) there are K(0) distinct constant regions \({\mathcal {A}}(\lambda _{F}, 0) = \{ A_{1}, \dots , A_{K} \}\), and \(f_{j}\) and \(l_{j}\) are the first and last indices, respectively, in the region \(A_{j}\).

Next, we increase the value of \(\lambda _{NI}^{*} > \lambda _{NI}\) and assume that we still have the same constant regions as for \(\lambda _{F}\) and \(\lambda _{NI}\), i.e.

i.e. at the value \(\lambda _{NI}^{*}\) no merge has happened, which means that

if k and \(k'\) are not in the same \(A_{j}\in {\mathcal {A}}(\lambda _{F}, 0)\). Next, recall that for any \(k \in A_{j}\) we have

Therefore, \({\hat{\beta }}^{F}_{k}({\varvec{y}}, \lambda _{F})\) has the same constant regions as \({\hat{\beta }}^{FNI}_{k}({\varvec{y}}, \lambda _{F}, 0)\).

Then, recall that

Next, let us choose \(\lambda '_{NI} < \lambda _{NI}^{*} \) such that, again, the constant regions of \(\hat{{\varvec{\beta }}}^{NI}({\hat{\beta }}^{F}_{k}({\varvec{y}}, \lambda _{F}), \lambda '_{NI})\) are the same as for \({\hat{\beta }}^{F}_{k}({\varvec{y}}, \lambda _{F})\) and \({\hat{\beta }}^{FNI}_{k}({\varvec{y}}, \lambda _{F}, \lambda _{NI})\). Then, for \(k\in A_{j}\) the solution is given by

and using (A10) we get

which means that the solution is a linear function of \(\lambda '_{NI}\) until some constant regions merge.

Note now

and, obviously, this equality holds at least until constant regions merge. Let \(\lambda _{NI}^{(1)}\) be the first value of \(\lambda _{NI}\) when the first merge happens. At the value \(\lambda _{NI}^{(1)}\) the equality

holds, since \(\hat{{\varvec{\beta }}}^{NI}\) is continuous in \(\lambda _{NI}\).

Assume for simplicity of notation that at \(\lambda _{NI} = \lambda _{NI}^{(1)}\) the constant region \(A_{j}\) merges with constant region \(A_{j+1}\). Therefore, for \(k\in A_{j}\cup A_{j+1}\) we have

and for \(k \in A_{m} \ne A_{j}\cup A_{j+1}\):

Further, for \(\hat{{\varvec{\beta }}}^{F\rightarrow NI}({\varvec{y}}, \lambda _{F}, \lambda _{NI}^{(1)})\) for \(k\in A_{j}\cup A_{j+1}\) we have

and for \(k \in A_{m} \ne A_{j}\cup A_{j+1}\):

Next, let us increase \(\lambda _{NI}^{(1)}\) by \(\delta \lambda \) so that no merge in \(\hat{{\varvec{\beta }}}^{FNI}({\varvec{y}}, \lambda _{F}, \lambda _{NI} + \delta \lambda )\) happens. Then, for \(k\in A_{j}\cup A_{j+1}\) we have

and for \(k \in A_{m} \ne A_{j}\cup A_{j+1}\):

Further, in the case of \(\hat{{\varvec{\beta }}}^{F\rightarrow NI}({\varvec{y}}, \lambda _{F}, \lambda _{NI}^{(1)})\) we increase \(\lambda _{NI}^{(1)}\) by \(\delta \lambda ' < \delta \lambda \) and, therefore, we have for \(k\in A_{j}\cup A_{j+1}\):

and for \(k \in A_{m} \ne A_{j}\cup A_{j+1}\):

Therefore, before the next merge happens we have the following relation between the estimators \( \hat{{\varvec{\beta }}}^{F\rightarrow NI}({\varvec{y}}, \lambda _{F}, \lambda _{NI})\) and \(\hat{{\varvec{\beta }}}^{FNI}({\varvec{y}}, \lambda _{F}, \lambda _{NI})\)

if \(k\in A_{j}\cup A_{j+1}\), and

for \(k \in A_{m} \ne A_{j}\cup A_{j+1}\).

We have proved that before the second merge we have

and at the value of \(\lambda _{NI}^{(2)}\) when the second merge of some constant regions happens we have

by the continuity.

We can continue this process until the last knot point in the path. Therefore we proved the equality of the estimators. The proof of

is virtually the same with \(q_{j}\) suitably changed to \(t_{j}\) and \(\lambda _{NI}\) to \(\lambda _{F}\) and using the properties of fused lasso from Hoefling (2010). \(\Box \)
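The interchangeability of fusion and near-isotonisation in one dimension can be checked numerically on a short signal. The sketch below is illustrative only: it assumes the loss \(\frac{1}{2}\Vert {\varvec{y}} - {\varvec{\beta }}\Vert ^{2}\), a chain with \((D{\varvec{\beta }})_{i} = \beta _{i} - \beta _{i+1}\), and a box-constrained dual with bounds \(-\lambda _{F}\) and \(\lambda _{F} + \lambda _{NI}\), solved by projected gradient descent. The helper `fni_chain`, the data and the penalty values are hypothetical choices of ours.

```python
import numpy as np


def fni_chain(y, lam_f, lam_ni, n_iter=20000):
    """Fused nearly-isotonic fit on a chain via the assumed box-constrained dual."""
    y = np.asarray(y, dtype=float)
    n = y.size
    # Rows e_i - e_{i+1}: (D @ beta)[i] = beta[i] - beta[i+1].
    D = np.eye(n - 1, n) - np.eye(n - 1, n, k=1)
    nu = np.zeros(n - 1)
    for _ in range(n_iter):
        # Projected gradient step with step size 1/4 (safe on a chain).
        nu = np.clip(nu + 0.25 * (D @ (y - D.T @ nu)), -lam_f, lam_f + lam_ni)
    return y - D.T @ nu


y = np.array([1.0, 3.0, 2.0, 5.0, 4.0, 4.5])
lam_f, lam_ni = 0.3, 0.8

# Joint estimator, and the two step-wise procedures.
joint = fni_chain(y, lam_f, lam_ni)
fuse_then_ni = fni_chain(fni_chain(y, lam_f, 0.0), 0.0, lam_ni)
ni_then_fuse = fni_chain(fni_chain(y, 0.0, lam_ni), lam_f, 0.0)

print(np.max(np.abs(joint - fuse_then_ni)))
print(np.max(np.abs(joint - ni_then_fuse)))
```

Up to the numerical tolerance of the solver, both printed differences should be negligible, in agreement with the equality of the estimators proved above.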

Proof of Theorem 6

First, for the fused estimator \(\hat{{\varvec{\beta }}}^{F}({\varvec{y}}, \lambda _{F})\) let

Then, as it follows from Tibshirani and Taylor (2011), for \(\hat{{\varvec{\beta }}}^{F}({\varvec{y}}, \lambda _{F})\) we have

Next, from Proposition 3, it follows

Therefore, using the property of covariance we have

where \([{\varvec{a}}]_{i}\) denotes i-th element in the vector \({\varvec{a}}\in {\mathbb {R}}^{n}\).

Next, we prove the result for the fused lasso nearly-isotonic approximator. From Proposition 3 we have

Next, for the fused lasso \(\hat{{\varvec{\beta }}}^{FL}({\varvec{y}}, \lambda _{F}, \lambda _{L})\) defined in (2) let

and from Tibshirani and Taylor (2011) it follows

Further, again, using the property of the covariance, we have

Lastly, we note that the proof for the unbiased estimator of the degrees of freedom for nearly-isotonic regression, given in Tibshirani et al. (2011), can be done in the same way as in the current proof, using the relation (19) and, again, the result of the paper Tibshirani and Taylor (2011) for the fusion estimator \(\hat{{\varvec{\beta }}}^{F}({\varvec{Y}}, \lambda _{F})\). \(\Box \)
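In practice the estimate of the degrees of freedom is read off the fitted vector. The sketch below assumes, following the fused lasso results of Tibshirani and Taylor (2011) used in the proof, that in one dimension the estimate equals the number of constant regions of the fit, and the number of nonzero constant regions once the lasso penalty is active. Both function names and the tolerance `tol` are our own illustrative choices, and the general-dimensional case (connected fused groups on a graph) is not covered.

```python
import numpy as np


def count_constant_regions(beta, tol=1e-8):
    """Number of maximal runs of (numerically) equal consecutive entries."""
    beta = np.asarray(beta, dtype=float)
    if beta.size == 0:
        return 0
    return 1 + int(np.sum(np.abs(np.diff(beta)) > tol))


def count_nonzero_constant_regions(beta, tol=1e-8):
    """Number of constant regions whose common value is not (numerically) zero."""
    beta = np.asarray(beta, dtype=float)
    if beta.size == 0:
        return 0
    # A region starts at index 0 and wherever consecutive entries differ.
    starts = np.concatenate(([True], np.abs(np.diff(beta)) > tol))
    return int(np.sum(starts & (np.abs(beta) > tol)))
```

The tolerance matters when the fit comes from an iterative solver, because fused coordinates are then equal only up to numerical error.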

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution 4.0 International License, which permits use, sharing, adaptation, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if changes were made. The images or other third party material in this article are included in the article's Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article's Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by/4.0/.

About this article

Cite this article

Pastukhov, V. Fused lasso nearly-isotonic signal approximation in general dimensions. Stat Comput 34, 120 (2024). https://doi.org/10.1007/s11222-024-10432-6

DOI: https://doi.org/10.1007/s11222-024-10432-6