Abstract

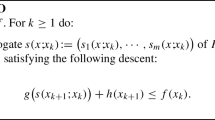

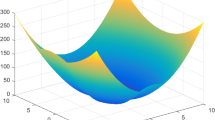

In this paper, we propose a Barzilai-Borwein (BB) type method for minimizing the sum of a smooth function and a convex, possibly nonsmooth, function. At each iteration, the method minimizes an approximation of the objective function and takes the difference between this minimizer and the current iterate as the search direction. A nonmonotone strategy is used to determine the step length and accelerate convergence. We prove that the method converges to a stationary point even when the objective function is nonconvex; consequently, when the objective function is convex, it converges to a global solution. For convex objectives we further establish a sublinear convergence rate, and when the objective function is strongly convex, the rate is R-linear. Preliminary numerical experiments show that the proposed method is promising.
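The abstract describes the iteration only in words. As a hedged illustration, the Python sketch below instantiates one common version of such a scheme for the ℓ1-regularized least-squares problem F(x) = ½‖Ax − b‖² + λ‖x‖₁. It is not the authors' algorithm. The following are all assumptions made for this example: the quadratic approximation with a BB1 curvature scalar α_k = sᵀy / sᵀs, the resulting soft-thresholding subproblem, the max-type nonmonotone sufficient-decrease test over the last M objective values (in the spirit of Grippo, Lampariello and Lucidi), and every name and parameter value (`bb_composite_lasso`, `M`, `sigma`, `tol`). Plain lists keep the block dependent on the standard library only.

```python
import math


def soft_threshold(v, tau):
    """Proximal map of tau*||.||_1, applied componentwise."""
    return [math.copysign(max(abs(vi) - tau, 0.0), vi) for vi in v]


def bb_composite_lasso(A, b, lam, max_iter=500, M=5, sigma=1e-4, tol=1e-8):
    """Hypothetical sketch: minimize F(x) = 0.5*||Ax - b||^2 + lam*||x||_1.

    Assumed (not from the paper): quadratic model
    f(x_k) + grad^T (z - x_k) + (alpha_k/2)||z - x_k||^2 + g(z),
    BB1 scalar alpha_k, and a max-type nonmonotone sufficient-decrease test.
    """
    m, n = len(A), len(A[0])

    def matvec(x):
        return [sum(A[i][j] * x[j] for j in range(n)) for i in range(m)]

    def grad(x):  # gradient of the smooth part: A^T (Ax - b)
        r = [ri - bi for ri, bi in zip(matvec(x), b)]
        return [sum(A[i][j] * r[i] for i in range(m)) for j in range(n)]

    def f(x):
        return 0.5 * sum((ri - bi) ** 2 for ri, bi in zip(matvec(x), b))

    def g(x):
        return lam * sum(abs(xi) for xi in x)

    x = [0.0] * n
    gx = grad(x)
    alpha = 1.0  # initial curvature estimate (hypothetical choice)
    history = [f(x) + g(x)]
    for _ in range(max_iter):
        # Minimizer of the approximation: one soft-thresholding step.
        z = soft_threshold([xi - gi / alpha for xi, gi in zip(x, gx)], lam / alpha)
        d = [zi - xi for zi, xi in zip(z, x)]  # search direction z - x_k
        if math.sqrt(sum(di * di for di in d)) < tol:
            break
        # Predicted change in F; strictly negative whenever d != 0.
        delta = sum(gi * di for gi, di in zip(gx, d)) + g(z) - g(x)
        F_ref = max(history[-M:])  # nonmonotone reference value
        t = 1.0
        while True:
            x_new = [xi + t * di for xi, di in zip(x, d)]
            F_new = f(x_new) + g(x_new)
            if F_new <= F_ref + sigma * t * delta or t < 1e-12:
                break
            t *= 0.5  # backtrack
        g_new = grad(x_new)
        s = [p - q for p, q in zip(x_new, x)]
        y = [p - q for p, q in zip(g_new, gx)]
        sts = sum(si * si for si in s)
        sty = sum(si * yi for si, yi in zip(s, y))
        # BB1 scalar, safeguarded to stay within [1e-10, 1e10].
        alpha = min(max(sty / sts, 1e-10), 1e10) if sts > 0 else 1.0
        x, gx = x_new, g_new
        history.append(F_new)
    return x
```

For instance, `bb_composite_lasso([[1.0, 0.0], [0.0, 1.0]], [3.0, 0.5], 1.0)` returns `[2.0, 0.0]`, the soft-thresholded right-hand side, after a single accepted step. The reference value `max(history[-M:])` is what makes the rule nonmonotone: a BB step may be accepted even if it temporarily increases F, provided it improves on the worst of the last M values.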

References

Barzilai, J., Borwein, J.M.: Two-point step size gradient methods. IMA J. Numer. Anal. 8(1), 141–148 (1988)

Beck, A., Teboulle, M.: A fast iterative shrinkage-thresholding algorithm for linear inverse problems. SIAM J. Imaging Sci. 2(1), 183–202 (2009)

Becker, S., Fadili, M.J.: A quasi-Newton proximal splitting method. In: Advances in Neural Information Processing Systems, pp. 1–9 (2012)

Bioucas-Dias, J., Figueiredo, M.: A new TwIST: two-step iterative shrinkage/thresholding algorithms for image restoration. IEEE Trans. Image Process. 16(12), 2992–3004 (2007)

Bioucas-Dias, J., Figueiredo, M., Oliveira, J.P.: Total variation-based image deconvolution: a majorization-minimization approach. In: IEEE International Conference on Acoustics, Speech and Signal Processing, pp. 861–864 (2006)

Birgin, E.G., Martínez, J.M., Raydan, M.: Nonmonotone spectral projected gradient methods on convex sets. SIAM J. Optim. 10(4), 1196–1211 (2000)

Birgin, E.G., Martínez, J.M., Raydan, M.: Inexact spectral projected gradient methods on convex sets. IMA J. Numer. Anal. 23(4), 539–559 (2003)

Birgin, E.G., Martínez, J.M., Raydan, M.: Spectral projected gradient methods: Review and perspectives. http://www.ime.usp.br/~egbirgin/ (2013)

Bredies, K., Lorenz, D.A.: Linear convergence of iterative soft-thresholding. J. Fourier Anal. Appl. 14(5–6), 813–837 (2008)

Candès, E., Romberg, J., Tao, T.: Robust uncertainty principles: Exact signal reconstruction from highly incomplete frequency information. IEEE Trans. Inf. Theory 52(2), 489–509 (2006)

Candès, E.J., Romberg, J.: Quantitative robust uncertainty principles and optimally sparse decompositions. Found. Comput. Math. 6(2), 227–254 (2006)

Candès, E.J., Tao, T.: Near-optimal signal recovery from random projections: Universal encoding strategies. IEEE Trans. Inf. Theory 52(12), 5406–5425 (2006)

Chambolle, A., DeVore, R.A., Lee, N.Y., Lucier, B.J.: Nonlinear wavelet image processing: Variational problems, compression, and noise removal through wavelet shrinkage. IEEE Trans. Image Process. 7(3), 319–335 (1998)

Chaux, C., Combettes, P., Pesquet, J.C., Wajs, V.: A variational formulation for frame-based inverse problems. Inverse Probl. 23(4), 1495 (2007)

Chen, S.S., Donoho, D.L., Saunders, M.A.: Atomic decomposition by basis pursuit. SIAM J. Sci. Comput. 20(1), 33–61 (1998)

Combettes, P.L., Wajs, V.R.: Signal recovery by proximal forward-backward splitting. SIAM Multiscale Model. Simul. 4(4), 1168–1200 (2005)

Cores, D., Escalante, R., González-Lima, M., Jimenez, O.: On the use of the spectral projected gradient method for support vector machines. Comput. Appl. Math. 28(3), 327–364 (2009)

Dai, Y.H., Fletcher, R.: Projected Barzilai-Borwein methods for large-scale box-constrained quadratic programming. Numer. Math. 100(1), 21–47 (2005)

Dai, Y.H., Liao, L.Z.: R-linear convergence of the Barzilai and Borwein gradient method. IMA J. Numer. Anal. 22(1), 1–10 (2002)

Dai, Y.H., Zhang, H.: Adaptive two-point stepsize gradient algorithm. Numer. Algor. 27(4), 377–385 (2001)

Donoho, D.L.: De-noising by soft-thresholding. IEEE Trans. Inf. Theory 41(3), 613–627 (1995)

Donoho, D.L.: Compressed sensing. IEEE Trans. Inf. Theory 52(4), 1289–1306 (2006)

Figueiredo, M., Nowak, R., Wright, S.: Gradient projection for sparse reconstruction: Application to compressed sensing and other inverse problems. IEEE J. Sel. Top. Signal Process. 1(4), 586–597 (2007)

Fletcher, R.: On the Barzilai-Borwein method. In: Qi, L.Q., Teo, K.L., Yang, X.Q., Pardalos, P.M., Hearn, D.W. (eds.) Optimization and Control with Applications. Applied Optimization 96, 235–256 (2005)

Fuchs, J.J.: On sparse representations in arbitrary redundant bases. IEEE Trans. Inf. Theory 50(6), 1341–1344 (2004)

Gonzaga, C.C., Karas, E.W.: Fine tuning Nesterov’s steepest descent algorithm for differentiable convex programming. Math. Program. 138(1–2), 141–166 (2013)

Grippo, L., Lampariello, F., Lucidi, S.: A nonmonotone line search technique for Newton’s method. SIAM J. Numer. Anal. 23(4), 707–716 (1986)

Grippo, L., Sciandrone, M.: Nonmonotone globalization techniques for the Barzilai-Borwein gradient method. Comput. Optim. Appl. 23(2), 143–169 (2002)

Guerrero, J., Raydan, M., Rojas, M.: A hybrid-optimization method for large-scale non-negative full regularization in image restoration. Inverse Probl. Sci. Eng. 21(5), 741–766 (2013)

Hager, W.W., Phan, D.T., Zhang, H.: Gradient-based methods for sparse recovery. SIAM J. Imaging Sci. 4(1), 146–165 (2011)

Hale, E.T., Yin, W., Zhang, Y.: Fixed-point continuation for ℓ1-minimization: methodology and convergence. SIAM J. Optim. 19(3), 1107–1130 (2008)

Hale, E.T., Yin, W., Zhang, Y.: Fixed-point continuation applied to compressed sensing: implementation and numerical experiments. J. Comput. Math. 28(2), 170–194 (2010)

Huang, Y.K., Liu, H.W., Zhou, S.: A Barzilai-Borwein type method for stochastic linear complementarity problems. Numer. Algor. (2013). doi:10.1007/s11075-013-9803-y

Kim, S., Koh, K., Lustig, M., Boyd, S., Gorinevsky, D.: An interior-point method for large-scale ℓ1-regularized least squares. IEEE J. Sel. Top. Signal Process. 1(4), 606–617 (2007)

Lee, J., Sun, Y., Saunders, M.: Proximal Newton-type methods for convex optimization. In: Advances in Neural Information Processing Systems, pp. 836–844 (2012)

Loris, I., Bertero, M., De Mol, C., Zanella, R., Zanni, L.: Accelerating gradient projection methods for ℓ1-constrained signal recovery by steplength selection rules. Appl. Comput. Harm. Anal. 27(2), 247–254 (2009)

Nesterov, Y.: Gradient methods for minimizing composite functions. Math. Program. 140(1), 125–161 (2013)

Raydan, M.: The Barzilai and Borwein gradient method for the large scale unconstrained minimization problem. SIAM J. Optim. 7(1), 26–33 (1997)

Wang, Y., Ma, S.: Projected Barzilai-Borwein method for large-scale nonnegative image restoration. Inverse Probl. Sci. Eng. 15(6), 559–583 (2007)

Wen, Z., Yin, W., Goldfarb, D., Zhang, Y.: A fast algorithm for sparse reconstruction based on shrinkage, subspace optimization, and continuation. SIAM J. Sci. Comput. 32(4), 1832–1857 (2010)

Wright, S., Nowak, R., Figueiredo, M.: Sparse reconstruction by separable approximation. IEEE Trans. Signal Process. 57(7), 2479–2493 (2009)

Yin, W., Osher, S., Goldfarb, D., Darbon, J.: Bregman iterative algorithms for ℓ1-minimization with applications to compressed sensing. SIAM J. Imaging Sci. 1(1), 143–168 (2008)

Cite this article

Huang, Y., Liu, H. A Barzilai-Borwein type method for minimizing composite functions. Numer Algor 69, 819–838 (2015). https://doi.org/10.1007/s11075-014-9927-8
