Abstract

We consider Arnoldi-like processes to obtain symplectic subspaces for Hamiltonian systems. Large dimensional systems are locally approximated by ones living in low dimensional subspaces, and we especially consider Krylov subspaces and some of their extensions. These subspaces can be utilized in two ways: by solving numerically local small dimensional systems and then mapping back to the large dimension, or by using them for the approximation of necessary functions in exponential integrators applied to large dimensional systems. In the former case one can expect an excellent energy preservation and in the latter this is so for linear systems. We consider second order exponential integrators which solve linear systems exactly and for which these two approaches are in a certain sense equivalent. We also consider the time symmetry preservation properties of the integrators. In numerical experiments these methods combined with symplectic subspaces show promising behavior also when applied to nonlinear Hamiltonian problems.

Similar content being viewed by others

Avoid common mistakes on your manuscript.

1 Introduction

Symplectic methods have shown to be very effective in long time integration of Hamiltonian systems (see [11]). Many of them are implicit and necessitate the solution of systems of equations. If the differential equation system is large and sparse, a natural approach is to use Krylov subspace techniques to approximate the solution of the algebraic equations. A related approach is to use Krylov approximations of the matrix exponential in exponential integrators (see [13]). This has turned out to be a superior technique for several classes of problems.

Krylov subspace techniques can be viewed as local low dimensional approximations of the large system. For Hamiltonian systems standard Arnoldi-type iterations produce low dimensional systems that are no longer Hamiltonian. In this paper special attention is paid to produce symplectic bases for Krylov subspaces.

This is an extended version of the slides by Eirola, presented at the Workshop on Exponential Integrators in Innsbruck in 2004 (see [7]). Some of the results were introduced in the master’s thesis of the second author [15] which was supervised by Eirola. The original ideas of Eirola came from considering linear Hamiltonian systems in \({\mathbb {R}}^{2n}\) as \({\mathbb {R}}\)-linear systems in \({{\mathbb {C}}^n}\) (see [8]). The slides [7] were written using that language, but the present version is written in a more standard form.

2 Hamiltonian systems

Given a smooth function \(H\;:\;{\mathbb {R}}^{2n}\rightarrow {\mathbb {R}}\), consider the Hamiltonian system

where \({{\varvec{J}}}_n= \left[ {\begin{matrix} 0&{}I\\ -I&{}0 \end{matrix}}\right] \in \mathbb {R}^{2n \times 2n}\). A matrix \({{\varvec{A}}}\in {\mathbb {R}}^{2n\times 2n}\) is called Hamiltonian, if \({{\varvec{A}}}^T={{\varvec{JAJ}}}\), and symplectic, if \({{\varvec{A}}}^T{{\varvec{J}}}_n{{\varvec{A}}}={{\varvec{J}}}_n\). The Jacobian of \(\nabla H({{\varvec{x}}})\) is a symmetric matrix at every point. Thus \(D \,{{\varvec{J}}}_n^{-1}\,\nabla H({{\varvec{x}}})\) is a Hamiltonian matrix.

The system (2.1) has a unique solution and we write it \({{\varvec{x}}}(t)={\varvec{\varphi }}^t({{\varvec{x}}}_0)\). It holds:

-

Energy is preserved: \(H({{\varvec{x}}}(t))\) is constant in t.

-

For every t the mapping \({\varvec{\varphi }}^t\;:\;{\mathbb {R}}^{2n}\rightarrow {\mathbb {R}}^{2n}\) is symplectic (or canonical), that is, its derivative is a symplectic matrix at every point.

-

The mapping \({\varvec{\varphi }}^t\) is time symmetric, i.e. \({\varvec{\varphi }}^{-t}({{\varvec{x}}}(t))={{\varvec{x}}}_0\) for every t.

Symplectic integrators produce symplectic one step maps for Hamiltonian systems (see [11]). For example, the implicit midpoint rule

is such. For linear systems, i.e., when H is of the form \(H({{\varvec{x}}})=\tfrac{1}{2}\,{{\varvec{x}}}^T{{\varvec{S}}}\,{{\varvec{x}}}+{{\varvec{c}}}^T{{\varvec{x}}}\), \({{\varvec{S}}}\) symmetric, the energy is also preserved in the numerical solution with this and many other symplectic methods. One step methods are called symmetric the map given by the integrator is time symmetric, i.e. changing h to \(-h\) is equivalent to switching \({{\varvec{x}}}_j\) and \({{\varvec{x}}}_{j+1}\). The implicit midpoint rule, for example, is symmetric.

For large systems implicit methods may become expensive. In this paper we consider several low dimensional Hamiltonian approximations and the use of exponential integrators for these.

3 Symplectic subspaces and low dimensional approximations

Recall some basic definitions and properties in \({\mathbb {R}}^{2n}\) (see e.g. [1]). Denote the non-degenerate skew-symmetric bilinear form \(\omega ({{\varvec{x}}},{{\varvec{y}}}):={{\varvec{x}}}^T{{\varvec{J}}}_n{{\varvec{y}}}\). A subspace V is isotropic, if \(\omega ({{\varvec{x}}},{{\varvec{y}}})=0\) for all \({{\varvec{x}}},{{\varvec{y}}}\in V\), and a subspace W is symplectic, if for every nonzero \({{\varvec{x}}}\in W\) there exists \({{\varvec{y}}}\in W\) such that \(\omega ({{\varvec{x}}},{{\varvec{y}}})\not =0\). Then the dimension of W is even. A basis \({{\varvec{e}}}_1,\ldots ,{{\varvec{e}}}_k,{{\varvec{f}}}_1,\ldots ,{{\varvec{f}}}_k\in W\) is called symplectic, or a Darboux basis, if for all \(i,j=1,\ldots ,k\) holds \(\omega ({{\varvec{e}}}_i,{{\varvec{e}}}_j)=\omega ({{\varvec{f}}}_i,{{\varvec{f}}}_j)=0\) and \(\omega ({{\varvec{e}}}_i,{{\varvec{f}}}_j)=\delta _{i,j}\).

If V is an isotropic subspace with an orthonormal basis \({{\varvec{e}}}_1,\ldots ,{{\varvec{e}}}_k\), then \({{\varvec{e}}}_1,\ldots ,{{\varvec{e}}}_k\) are also \(\omega \)–orthogonal and \(W=V\oplus {{\varvec{J}}}_n V\) is a symplectic subspace and \({{\varvec{e}}}_1,\ldots ,{{\varvec{e}}}_k,\)\({{\varvec{J}}}_n^{-1}{{\varvec{e}}}_1,\ldots ,{{\varvec{J}}}_n^{-1}{{\varvec{e}}}_k\) is a symplectic basis of W.

We call also a matrix \({{\varvec{U}}}\in {\mathbb {R}}^{2n\times 2k}\) symplectic, if \({{\varvec{U}}}^T{{\varvec{J}}}_n {{\varvec{U}}}={{\varvec{J}}}_k\), where \({{\varvec{J}}}_k = \left[ {\begin{matrix} 0&I{{\varvec{I}}}&0 \end{matrix}}\right] \in \mathbb {R}^{2k \times 2k}\). Then \({{\varvec{U}}}^\dagger ={{\varvec{J}}}_k^{-1}{{\varvec{U}}}^T{{\varvec{J}}}_n\) is a left inverse of \({{\varvec{U}}}\) if and only if \({{\varvec{U}}}\) is symplectic.

We will consider local approximations of the Hamiltonian system

Assume that at a point \({{\varvec{x}}}_0\in {\mathbb {R}}^{2n}\) we are given a symplectic matrix \({{\varvec{U}}}\in {\mathbb {R}}^{2n\times 2k}\). Consider the Hamiltonian system in \({\mathbb {R}}^{2k}\) corresponding to the function \(\eta ({\varvec{\xi }})=H({{\varvec{x}}}_0+{{\varvec{U}}}{\varvec{\xi }})\). Then we get

which is Hamiltonian in \({\mathbb {R}}^{2k}\). Denote \({{\varvec{U}}}^\dagger ={{\varvec{J}}}_k^{-1}{{\varvec{U}}}^T{{\varvec{J}}}_n\). Then

One strategy is to solve (3.2) numerically from \({\varvec{\xi }}_0=0\) up to \({\varvec{\xi }}_1\approx {\varvec{\xi }}(t_1)\) and set \({{\varvec{x}}}_1={{\varvec{x}}}_0+{{\varvec{U}}}{\varvec{\xi }}_1\). Clearly, if we use an energy preserving scheme for the system (3.2), we will conserve the energy of the large system too, i.e. \(H({{\varvec{x}}}_1)=H({{\varvec{x}}}_0)\).

Note that if the sets of constant energy of the original system are bounded, then they are such for the small dimensional approximations too. This implies that the approximations inherit stability of equilibria in a natural way.

We will consider also another strategy: instead of solving low-dimensional systems, we approximate the matrix functions appearing in exponential integrators in the low dimensional space \({\mathbb {R}}^{2k}\).

The idea of approximating a Hamiltonian system by another of smaller dimension is not new. See, for example the discussion in [16]. A novelty here is to use local (later Krylov subspace) approximations.

If \({{\varvec{U}}}\) is symplectic and does not depend on \({{\varvec{x}}}_0\), then using a symplectic method for (3.2) induces a map \({\varvec{\psi }}\;:\;{{\varvec{x}}}_0\rightarrow {{\varvec{x}}}_1\) that is symplectic in \(R({{\varvec{U}}})\), that is

But in order to get efficient algorithms we let \({{\varvec{U}}}\) to depend on \({{\varvec{x}}}_0\) and then this approach generally does not produce a symplectic map.

4 Exponential integrators

The use of approximations of the matrix exponential as a part of time propagation methods for differential equations has turned out to be very effective (see e.g. [13]). We consider application of three second order exponential integrators to the Hamiltonian system (2.1). In what follows, the matrix \({{\varvec{H}}}\) denotes the Jacobian of the right hand side of (3.1) at \({{\varvec{x}}}_0\), i.e., \({{\varvec{H}}}= D {{\varvec{f}}}({{\varvec{x}}}_0)\). The methods can be seen as exponential integrators applied to semilinear equations which are local linearizations of (2.1). In the literature methods of this type are also called exponential Rosenbrock methods [14].

4.1 Exponential Euler method

As a first method we consider the exponential Euler method (EE)Footnote 1

where \(\phi (z)=\int _0^1 e^{tz}\,dt=z^{-1}\,(e^z-1)\) and \({{\varvec{H}}}= D{{\varvec{f}}}({{\varvec{x}}})\).

Note that if \({{\varvec{f}}}\) is linear, then \({{\varvec{x}}}_+={\varvec{\varphi }}^h({{\varvec{x}}})\), i.e., the method gives exact values of the solution.

Assume now that the system \({{\varvec{x}}}'={{\varvec{f}}}({{\varvec{x}}})\) is Hamiltonian in \({\mathbb {R}}^{2n}\) and \({{\varvec{U}}}\in {\mathbb {R}}^{2n\times 2k}\) is symplectic. Then \({{\varvec{H}}}=D{{\varvec{f}}}({{\varvec{x}}})\) is a Hamiltonian matrix as well as \({{\varvec{F}}}={{\varvec{U}}}^\dagger {{\varvec{H}}}{{\varvec{U}}}\).

If we use the exponential Euler method (4.1) for the low dimensional system (3.2) we produce

For linear problems this will preserve energy exactly:

Lemma 4.1

Assume the system is of the form \( \,{{\varvec{f}}}({{\varvec{x}}})={{\varvec{J}}}_n^{-1}\,\nabla H({{\varvec{x}}})={{\varvec{H}}}{{\varvec{x}}}+{{\varvec{c}}}\, \), where \({{\varvec{H}}}\) is a constant Hamiltonian matrix. Then the exponential Euler method (4.2) preserves energy, i.e., \(H({{\varvec{x}}}_+)=H({{\varvec{x}}})\).

Proof

The local problem now is (see (3.2))

Then \({\varvec{\xi }}_+={\varvec{\xi }}(h)=h\,\phi (h\,{{\varvec{F}}})\,{{\varvec{U}}}^\dagger \,({{\varvec{H}}}{{\varvec{x}}}+{{\varvec{c}}})\), i.e., the exponential Euler approximation gives the exact solution for the problem in \({\mathbb {R}}^{2k}\). Hence the energy is preserved in the small system and consequently also for \({{\varvec{x}}}_+={{\varvec{x}}}+{{\varvec{U}}}\,{\varvec{\xi }}(h)\). \(\square \)

4.2 Explicit exponential midpoint rule

We consider next the explicit exponential midpoint rule (EEMP)

where \({{\varvec{H}}}=D{{\varvec{f}}}({{\varvec{x}}})\) (see also [13]). For linear Hamiltonian problems \({{\varvec{f}}}({{\varvec{x}}})={{\varvec{H}}}{{\varvec{x}}}+{{\varvec{c}}}\) this gives

i.e., \({\frac{1}{2}}({{\varvec{x}}}_++{{\varvec{x}}})=e^{h{{\varvec{H}}}}{\frac{1}{2}}({{\varvec{x}}}+{{\varvec{x}}}_-)+h\,\phi (h{{\varvec{H}}})\,{{\varvec{c}}}=\widetilde{{{\varvec{x}}}}(h)\), where \(\widetilde{{{\varvec{x}}}}\) is the solution of \(\widetilde{{{\varvec{x}}}}'(t)={{\varvec{J}}}_n^{-1}\,\nabla H(\widetilde{{{\varvec{x}}}}(t))\), \(\widetilde{{{\varvec{x}}}}(0)=\tfrac{1}{2}({{\varvec{x}}}+{{\varvec{x}}}_-)\). Hence the energy of the averages is preserved:

Remark 4.1

In [9] it was noticed that the explicit midpoint rule (for the homogeneous problem)

preserves another quantity: \(\omega ({{\varvec{H}}}{{\varvec{x}}},{{\varvec{x}}}_+)=\omega ({{\varvec{H}}}{{\varvec{x}}}_-,{{\varvec{x}}})\). Equation (4.4) implies this, too.

Again we approximate (4.3) with

For this we have the following.

Theorem 4.1

Let the right hand side \({{\varvec{f}}}\) of the Hamiltonian system be linear, \({{\varvec{U}}}\in {\mathbb {R}}^{2n\times 2k}\) symplectic, and assume that \({{\varvec{x}}}-{{\varvec{x}}}_-\) is in the range of \({{\varvec{U}}}\). Then (4.4) holds for the scheme (4.5).

Proof

Now \({{\varvec{U}}}^\dagger {{\varvec{U}}}={{\varvec{I}}}\). Write \(\widehat{{{\varvec{x}}}}_+={\frac{1}{2}}({{\varvec{x}}}_++{{\varvec{x}}}),\ \widehat{{{\varvec{x}}}}={\frac{1}{2}}({{\varvec{x}}}+{{\varvec{x}}}_-)\). By the assumption there exists \({\varvec{\zeta }}\in {\mathbb {R}}^{2k}\) such that \({\frac{1}{2}}({{\varvec{x}}}-{{\varvec{x}}}_-)={{\varvec{U}}}{\varvec{\zeta }}\). Then \({{\varvec{x}}}=\widehat{{{\varvec{x}}}}+{{\varvec{U}}}{\varvec{\zeta }}\). From (4.5) and \(z\phi (z)=e^z-1\) we get

Thus the \(\widehat{{{\varvec{x}}}}\)-vectors propagate according to the exponential Euler method (4.2) and we get the result by Lemma 4.1. \(\square \)

We also have the following.

Lemma 4.2

Assume that \({{\varvec{U}}}\) is a full rank matrix at \({{\varvec{x}}}\) with a left inverse \({{\varvec{U}}}^\dagger \), and that \(R({{\varvec{U}}})\) contains \({{\varvec{x}}}_--{{\varvec{x}}}\). Then, the approximate explicit exponential midpoint rule is symmetric.

Proof

Multiplying (4.5) with \({{\varvec{U}}}\,e^{-h{{\varvec{F}}}}\,{{\varvec{U}}}^\dagger \) gives

Since \(e^{-z}\phi (z)=\phi (-z)\) we get

Thus the steps backward can be taken by replacing h with \(-h\). \(\square \)

4.3 Implicit exponential midpoint rule

As a third exponential integrator, we consider the implicit exponential midpoint rule (IEMP)

(see [4]). This gives a symmetric method when the linear part \( {{\varvec{H}}}\) of \( {{\varvec{f}}}\) is fixed. When \({{\varvec{H}}}\) comes from a linearization of a nonlinear Hamiltonian system (2.1), the method is symmetric if \( {{\varvec{H}}}= D{{\varvec{f}}}(\widehat{{{\varvec{x}}}})\), where \(\widehat{{{\varvec{x}}}}\) satisfies (4.6).

For linear systems of the form \( {{\varvec{f}}}({{\varvec{x}}}) = {{\varvec{H}}}{{\varvec{x}}}+ {{\varvec{c}}} \), the second equation of (4.6) can be written equivalently as \( {{\varvec{x}}}_+ = {{\varvec{x}}}+ 2 h\,\phi (2 h{{\varvec{H}}})\,{{\varvec{f}}}({{\varvec{x}}})\, \). Then, \({{\varvec{x}}}_+\) propagates according to the exponential Euler method and the energy is preserved in case \({{\varvec{U}}}\) is symplectic (Lemma 4.1).

When we apply (4.6) to the local system, the total approximation is symmetric if \({{\varvec{H}}}\) is evaluated at the midpoint \(\widehat{{\varvec{x}}}\).

Lemma 4.3

Assume that \({{\varvec{U}}}\) is a full rank matrix with a left inverse \( {{\varvec{U}}}^\dagger \). Suppose \( {{\varvec{H}}}\) is evaluated at \(\widehat{{{\varvec{x}}}}\), i.e., \( {{\varvec{H}}}\, = D{{\varvec{f}}}( \widehat{{{\varvec{x}}}} )\), where \(\widehat{{\varvec{x}}}\) satisfies (4.6). Consider the approximation

where \({\varvec{\xi }}\) is obtained from applying (4.6) to the local system. Then, (4.7) gives a symmetric method.

Proof

Applying (4.6) to the local system (3.2) gives

We show that (4.8) leads to a symmetric approximation of the full system. Multiplying the upper equation of (4.8) by \( e^{h{{\varvec{F}}}}\), and using the relation \(e^z \phi (z) = 2\phi (2z) - \phi (z)\) gives

Combining this and both equations of (4.8) gives

Multiplying this from left by \( {{\varvec{U}}}\) and adding \( {{\varvec{x}}}\) gives

where \( \widehat{{\varvec{x}}}= {{\varvec{x}}}+ {{\varvec{U}}}{\varvec{\xi }}\) and \( {{\varvec{x}}}_+ = {{\varvec{x}}}+ {{\varvec{U}}}{\varvec{\xi }}_+ \). Replacing here h with \(-h\) and multiplying from the left by \( \, {{\varvec{U}}}e^{h {{\varvec{F}}}} {{\varvec{U}}}^\dagger \) shows the symmetry. \(\square \)

The IEMP is symmetric if the Jacobian \({{\varvec{H}}}\) and the basis \( {{\varvec{U}}}\) are the same when considering stepping from \( {{\varvec{x}}}\) to \( {{\varvec{x}}}_+ \) and vice versa. This is the case if \( {{\varvec{H}}}\) is evaluated at \( \widehat{{{\varvec{x}}}} \), and if \( {{\varvec{U}}}\) is generated at \( \widehat{{{\varvec{x}}}} \) using the Krylov subspace methods described in Sect. 5.

Our numerical strategy is to perform one time step h using the exponential Euler method from \( {{\varvec{x}}}\) to \( \widetilde{{\varvec{x}}}\, \) in order to approximate the midpoint \( \widehat{{\varvec{x}}}\). Then after evaluating the Jacobian \( {{\varvec{H}}}\) and forming the basis \( {{\varvec{U}}}\) at \( \widetilde{{\varvec{x}}}\, \) using Krylov subspace methods, we solve the implicit equation using fixed point iteration and perform the step of size 2h to obtain \( {\varvec{\xi }}_+ \) and \( {{\varvec{x}}}_+ = {{\varvec{x}}}+ {{\varvec{U}}}{\varvec{\xi }}_+ \).

5 Forming the local basis using Krylov subspace methods

We discuss next the approximation of matrix valued \(\phi \) functions using Krylov subspace methods and show how they are naturally connected to the local approximation discussed in Sect. 4.

When matrix \({{\varvec{A}}}\) is large but the operation \({{\varvec{v}}}\rightarrow {{\varvec{A}}}{{\varvec{v}}}\) inexpensive, it is reasonable to use Krylov subspace methods. These work in Krylov subspaces

Then we have \({{\varvec{A}}}\,K_k({{\varvec{A}}},{{\varvec{v}}})\subset K_{k+1}({{\varvec{A}}},{{\varvec{v}}})\). The Arnoldi iteration uses the Gram-Schmidt process and produces an orthonormal basis \({{\varvec{q}}}^1,\ldots ,{{\varvec{q}}}^k\) for \(K_k({{\varvec{A}}},{{\varvec{v}}})\). Denote \({{\varvec{Q}}}_k=[{{\varvec{q}}}^1 \ldots {{\varvec{q}}}^k]\) and \({{\varvec{F}}}_k={{\varvec{Q}}}_k^T{{\varvec{A}}}{{\varvec{Q}}}_k\), which is a Hessenberg matrix.

If the iteration stops, i.e. \({{\varvec{A}}}^k{{\varvec{v}}}\in K_k({{\varvec{A}}},{{\varvec{v}}})\), then

-

a)

\({{\varvec{A}}}K_k({{\varvec{A}}},{{\varvec{v}}})\subset K_k({{\varvec{A}}},{{\varvec{v}}})\) and \( K_j({{\varvec{A}}},{{\varvec{v}}})= K_k({{\varvec{A}}},{{\varvec{v}}})\) for all \(j \ge k\),

-

b)

\({{\varvec{A}}}{{\varvec{Q}}}_k={{\varvec{Q}}}_k{{\varvec{F}}}_k\) and for the spectra we have \(\Lambda ({{\varvec{F}}}_k)\subset \Lambda ({{\varvec{A}}})\),

-

c)

If \(\varphi (z)=\sum _ja_j\,z^j\) has convergence radius larger than the spectral radius of \({{\varvec{A}}}\) and \({{\varvec{w}}}\in R({{\varvec{Q}}}_k)\), then

$$\begin{aligned} \varphi ({{\varvec{A}}}){{\varvec{v}}}={{\varvec{Q}}}_k\,\varphi ({{\varvec{F}}}_k)\,{{\varvec{Q}}}_k^T{{\varvec{v}}}. \end{aligned}$$

The effectivity of Krylov subspace methods is based on the fact that if the component of \({{\varvec{A}}}^k{{\varvec{v}}}\) orthogonal to \( K_k({{\varvec{A}}},{{\varvec{v}}})\) is small, then things are approximately as above and this can happen already for a reasonable size k. This motivates us to consider the approximation

(see [6] and [10]). We refer to [12] for a detailed error analysis.

In case \({{\varvec{Q}}}_k\) gives a symplectic basis for \(K_k({{\varvec{A}}},{{\varvec{v}}})\), one easily verifies that things are as above for \({{\varvec{F}}}_k = {{\varvec{Q}}}_k^\dagger {{\varvec{A}}}{{\varvec{Q}}}_k\) and with \({{\varvec{Q}}}_k^T\) replaced by \({{\varvec{Q}}}_k^\dagger \). In this case we use the approximation

We show next how the Krylov approximation (5.1) is naturally connected to the strategy of applying exponential integrators to the local system (3.2).

5.1 Equivalence of the Krylov and the local system approximations

Consider the local system (3.2) corresponding to the basis \({{\varvec{U}}}\) which gives a symplectic basis for \(K_k({{\varvec{H}}}, \, {{\varvec{f}}}({{\varvec{x}}}))\). Recall from Sect. 4 the strategy of solving the local system

numerically from \({\varvec{\xi }}(0)=0\) up to \( {\varvec{\xi }}(h) = {\varvec{\xi }}_1 \) and setting \({{\varvec{x}}}_+={{\varvec{x}}}+{{\varvec{U}}}{\varvec{\xi }}_1\). As shown in Sect. 4.1, applying the exponential Euler method to the local system gives the approximation

We immediately see from (5.1) that this is the Krylov subspace approximation of the exponential Euler step (4.1).

As shown in Sect. 4.2, applying the exponential explicit midpoint rule to the local system gives

This can be seen as a Krylov subspace approximation of the EEMP step (4.3). Here the vector \({{\varvec{x}}}_- - {{\varvec{x}}}\) has to be in the range of \({{\varvec{U}}}\). This is discussed in Sect. 5.2.1.

Similarly, if we perform a Krylov approximation of the IEMP step (4.6), and denote \({\varvec{\xi }}= \widehat{{\varvec{x}}}- {{\varvec{x}}}\) and \({\varvec{\xi }}_+ = {{\varvec{x}}}_+ - {{\varvec{x}}}\), we get the small dimensional system (4.8).

In case the basis matrix \({{\varvec{U}}}\) has orthonormal columns, we get the equivalence of the Krylov and local systems approximations by replacing \({{\varvec{U}}}^\dagger \) by \({{\varvec{U}}}^T\) above.

We next consider iterations which produce a symplectic basis for the Krylov subspace \(K_k({{\varvec{H}}}, \, {{\varvec{f}}}({{\varvec{x}}}_0))\), where \({{\varvec{H}}}\) is Hamiltonian.

5.2 Symplectic Krylov processes

In order to obtain good local approximations for a Hamiltonian system with linear part \({{\varvec{H}}}\) we would like to have

-

a)

A symplectic subspace \({{\varvec{W}}}\) with a corresponding basis.

-

b)

\(K_k({{\varvec{H}}},{{\varvec{f}}})\subset {{\varvec{W}}}\) in order to have polynomials of \({{\varvec{H}}}\) applied to \({{\varvec{f}}}\) represented in \({{\varvec{W}}}\). We expect this to be worth pursuing for approximations of \(\varphi ({{\varvec{H}}}){{\varvec{f}}}\).

Consider first the Krylov subspace corresponding to \({{\varvec{H}}}\) and \({{\varvec{v}}}\):

and set \(W_k=K_k({{\varvec{H}}},{{\varvec{v}}})+{{\varvec{J}}}_n\,K_k({{\varvec{H}}},{{\varvec{v}}})\).

-

Now \({{\varvec{H}}}\,W_k({{\varvec{H}}},{{\varvec{v}}})\not \subset W_{k+1}({{\varvec{H}}},{{\varvec{v}}})\), generally.

-

If p is a degree \(k-1\) polynomial, then \(p({{\varvec{H}}}){{\varvec{v}}}\in K_k({{\varvec{H}}},{{\varvec{v}}})\subset W_k\).

The construction of a symplectic basis for \( W_k\) is slightly more complicated than the standard Arnoldi process. We consider the following three processes.

Symplectic Arnoldi

In the first approach we simply reorthogonalize with respect to \(\left\langle \,\cdot ,\,\cdot \, \right\rangle \) and \(\omega (\,\cdot ,\,\cdot \,)\) the \(\left\langle \,\cdot ,\,\cdot \, \right\rangle \)—orthogonal vectors provided by the standard Arnoldi. The result is a symplectic and orthonormal matrix.

Here the columns of \({{\varvec{Q}}}\) form an orthonormal basis for \( K_k({{\varvec{H}}},{{\varvec{v}}})\) and those of \({{\varvec{U}}}\) a symplectic basis for \(W_k\).

Remark 5.1

There is a way to construct matrix \({{\varvec{F}}}\) more economically from the computations of step 2. But anyway the reorthogonalization stays the costly part of this approach.

Isotropic Arnoldi

Mehrmann and Watkins [17] suggest the isotropic Arnoldi process, which is a direct \(\left\langle \,\cdot ,\,\cdot \, \right\rangle \) and \(\omega (\,\cdot ,\,\cdot \,)\)—orthogonalization in the Arnoldi process:

Here we obtain a symplectic matrix with orthonormal columns. However its range does not necessarily contain the Krylov subspace \(K_k({{\varvec{H}}},{{\varvec{v}}})\). Thus, generally, this iteration does not have the property that \(p({{\varvec{H}}}){{\varvec{v}}}\) is in the span of \({{\varvec{u}}}^1,\ldots ,{{\varvec{u}}}^k\) for every polynomial p of degree \(k-1\). Since our present aim is to approximate matrix functions, this iteration can be expected to be less effective for our purposes.Footnote 2 We will see this in the numerical tests.

Also there is a possibility of a breakdown: after orthogonalization we may get \({{\varvec{r}}}=0\) without obtaining any useful information about \(K_k({{\varvec{H}}},{{\varvec{v}}})\).

Hamiltonian Lanczos

Benner, Faßbender, and Watkins have several versions of Hamiltonian Lanczos processes (see [2, 18]). The following is a promising one from Watkins (Algorithm 4 of [18]):

Due to short recursion this is an economic iteration. But it has similar problems as the usual biorthogonal Lanczos, e.g., near breakdowns and loss of orthogonality. These can be partly circumvented. For small k this may be a good choice.

By the very construction of these symplectic maps we get the following:

Proposition 5.1

Combining any of the symplectic Krylov processes with a method that preserves energy for the small dimensional system (3.2) will preserve the energy of the original system, too.

The costs of these algorithms are approximately as follows. To produce a basis of dimension k, the Hamiltonian Lanczos requires k matvecs (matrix vector multiplications) and 2k inner products, the Arnoldi iteration k matvecs and \(k^2/2\) inner products, the Isotropic Arnoldi k / 2 matvecs and \(k^2\) inner products, and the Symplectic Arnoldi k / 2 matvecs and \(2 k^2\) inner products. However, only the Hamiltonian Lanczos and the Arnoldi iteration provide then also a basis for a Krylov subspace of dimension k. Thus the Hamiltonian Lanczos process is the cheapest alternative to obtain a basis for a Krylov subspace of a given dimension.

The main weaknesses of each of these algorithms are

-

Arnoldi in \({\mathbb {R}}^{2n}\): the approximation is not Hamiltonian.

-

Hamiltonian Lanczos: breakdown, early loss of symplecticity.

-

Isotropic Arnoldi: does not include a Krylov subspace.

-

Symplectic Arnoldi: expensive.

5.2.1 Symplectic reorthogonalization of a vector to the basis

When using the EEMP method (4.3), the vector \({{\varvec{x}}}_--{{\varvec{x}}}\) needs to be added to the basis \({{\varvec{U}}}_k\) at each time step. For orthogonal and/or isotropic basis this is straightforward. For the symplectic basis, a symplectic version of the Gram–Schmidt algorithm adds \({{\varvec{x}}}\) and \({{\varvec{J}}}_n {{\varvec{x}}}\) to the basis \({{\varvec{U}}}= [{{\varvec{V}}}\,\, {{\varvec{W}}}]\). This algorithm is shown in the following pseudocode. Here the symplectic orthogonalization can also be performed in a modified Gram–Schmidt manner, one vector at a time. Notice also that in the second step the vector \(\widehat{{{\varvec{x}}}}\) could be scaled with any constant.

-

1.

\( \widehat{{{\varvec{x}}}} = {{\varvec{x}}}- \sum _{\ell =1}^{n} \omega ({{\varvec{w}}}_\ell ,{{\varvec{x}}}) {{\varvec{v}}}_\ell + \sum _{\ell =1}^{n} \omega ({{\varvec{v}}}_\ell ,{{\varvec{x}}}) {{\varvec{w}}}_\ell \)

-

2.

\( {{\varvec{v}}}_{k+1} \leftarrow \widehat{{{\varvec{x}}}}\)

-

3.

\( {{\varvec{x}}}\leftarrow {{\varvec{J}}}_n \widehat{{{\varvec{x}}}}\)

-

4.

\( \widetilde{{{\varvec{x}}}} = {{\varvec{x}}}- \sum _{\ell =1}^{n} \omega ({{\varvec{w}}}_\ell ,{{\varvec{x}}}) {{\varvec{v}}}_\ell + \sum _{\ell =1}^{n} \omega ({{\varvec{v}}}_\ell ,{{\varvec{x}}}) {{\varvec{w}}}_\ell \)

-

5.

\( {{\varvec{w}}}_{k+1} \leftarrow - \frac{\widetilde{{{\varvec{x}}}}}{\omega ({{\varvec{v}}}_{k+1}, \widetilde{{{\varvec{x}}}})}\)

6 Numerical tests

We compare numerically the three exponential time integrators of Sect. 4 and the four Arnoldi like processes of Sect. 5 to produce the local basis \({{\varvec{U}}}_k\). We apply the methods to large sparse Hamiltonian systems which are obtained from finite difference discretizations of one dimensional nonlinear wave equations. For ease of presentation, we first illustrate by an example our approach of deriving large sparse Hamiltonian systems from Hamiltonian PDEs. For further examples we refer to [5].

6.1 Spatial discretization of Hamiltonian PDEs

As an example consider the nonlinear Klein–Gordon equation in one dimension,

where u(x, t) is a scalar valued periodic function \((u(0,t) = u(L,t)\) for some \(L>0\)) and f is a smooth function. To obtain a Hamiltonian system approximating the equation (6.1), we perform a discretization with respect to x on an equidistant grid with an interval \(\varDelta x = L/n\), \(n \in \mathbb {N}\), and denote by \(q_i(t)\) and \(p_i(t)\) the approximations to \(u(i\varDelta x,t)\) and \(u_t(i\varDelta x,t)\). For the second derivative \(u_{xx}\) we use the central difference approximation. Expressing the approximations as vectors \({{\varvec{q}}}(t)\) and \({{\varvec{p}}}(t)\) (i.e. \(( {{\varvec{q}}})_i = q_i \) and \(( {{\varvec{p}}})_i = p_i \)), we get the approximation of the PDE in a matrix form as

where \(\{{{\varvec{f}}}({{\varvec{q}}})\}_i = f(q_i)\) and \(\varvec{\varDelta }_n \in \mathbb {R}^{n \times n}\) is the discretized Laplacian with periodic boundary conditions,

Defining the Hamiltonian function

where \(F'(u) = f(u)\), we see that

Setting \({{\varvec{x}}}(t) = \left[ {\begin{matrix} {{\varvec{q}}}(t) \\ {{\varvec{p}}}(t) \end{matrix}}\right] \), we have the Hamiltonian system in \(\mathbb {R}^{2n}\)

where \({{\varvec{x}}}_0 = \left[ {\begin{matrix} {{\varvec{q}}}_0 \\ {{\varvec{p}}}_0 \end{matrix}}\right] \) comes from the discretization of the initial values of (6.1).

6.2 Linear wave equation

As a first numerical example we consider the linear wave equation with the Dirichlet boundary conditions,

where \(x\in [0,L]\), \(t\in [0,T]\), and

Performing spatial discretization on an equidistant grid of size n using central differences leads to a Hamiltonian system of the form (6.4) with the Hamiltonian (6.3), where \({{\varvec{F}}}({{\varvec{q}}}) = - {{\varvec{c}}}^T {{\varvec{q}}}\), \(({{\varvec{c}}})_i = g(x_i)\). Here the initial data \( {{\varvec{x}}}_0 = \left[ {\begin{matrix} {{\varvec{q}}}_0 \\ {{\varvec{p}}}_0 \end{matrix}}\right] \), where \(({{\varvec{q}}}_0)_i = u_0(x_i)\), \(({{\varvec{p}}}_0)_i = v_0(x_i)\), \(x_i = i \varDelta x\), and \(\varDelta x = L/(n+1)\). Here the discretized Laplacian is given by

We set \(L=2\), \(n = 400\), and we integrate up to \(T=50\) with the step size \(h = T/n_t\), where \(n_t=2000\).

Using this linear example we illustrate the differences between the iterative processes of Sect. 5 to produce the basis \({{\varvec{U}}}_k \in \mathbb {R}^{2n \times 2k}\). We apply the exponential Euler method (4.2) to the small dimensional system (3.2) obtained from the projection using \({{\varvec{U}}}_k\).

As illustrated in Fig. 1, the approximation obtained using the Arnoldi iteration results generally in a linear growth of the energy error, whereas the symplectic basis gives a bounded energy error. Figure 2 shows that, as opposed to the Hamiltonian Lanczos approximation, the energy error of the Arnoldi approximation is dependent on the accuracy of the approximation. Notice that in both cases

and \({{\varvec{f}}}_0\) is the right hand side of (6.4) evaluated at x(0). Property (6.5) means that these processes give a polynomial approximation of degree \(\ell \) for the exponential Euler step which gives the exact solution at \(t=h\). This effect is also seen in Fig. 3, which depicts the solution errors for the Arnoldi iteration and the Hamiltonian Lanczos process. When \(\dim ({{\varvec{U}}}_k) = 16\), the methods give errors not far from each other, however for smaller basis size the symplectic alternative gives more accurate results.

When increasing the basis size also the isotropic Arnoldi and the symplectic Arnoldi start to perform better (see Fig. 4). Need for a larger dimension is expected for the symplectic Arnoldi since instead of (6.5), only \(K_{\ell /2}({{\varvec{H}}}, {{\varvec{f}}}_0) \subset \mathrm {Range}({{\varvec{U}}}_k)\), where \(\ell = \dim ({{\varvec{U}}}_k)\). Isotropic Arnoldi performs worse as expected due to its poor polynomial approximation properties. However, both processes give bounded energy errors as in both cases \({{\varvec{U}}}_k\) is symplectic (Fig. 5).

6.3 Nonlinear Schrödinger equation

Consider next a one dimensional nonlinear Schrödinger equation (NLS) on \([-4\pi ,4\pi ]\) with periodic boundary conditions (see [3]),

The initial value is given by

where the phase function \(\theta (x)\) satisfies

(see Fig. 6). We set \(V_0 = B = 1.0\) which gives a stable soliton solution (see [3]).

The phase function \(\theta (x)\) of the initial data (6.6)

We first carry out a spatial discretization on an equidistant grid with grid size \(\varDelta x = 8 \pi /n\) and denote by \(q_i(t)\) and \(p_i(t)\) the approximations to \(\mathrm {Re} \, \psi (i \varDelta x,t)\) and \(\mathrm {Im} \, \psi (i \varDelta x,t)\). This leads to a Hamiltonian system with the energy functional

where \( \varDelta _n\) is a discretized Laplacian of the form (6.2). We get from (6.7) the Hamiltonian system

where \(\{\overline{{{\varvec{x}}}}\}_i = i \varDelta x\), \(\circ \) denotes the Hadamard product, \(({{\varvec{u}}}\circ {{\varvec{v}}})_i = u_iv_i\), and \(\sin ^2(\overline{{{\varvec{x}}}})_i = \sin ^2(x_i).\) The initial condition \( \left[ {\begin{matrix} {{\varvec{q}}}_0 \\ {{\varvec{p}}}_0 \end{matrix}}\right] \) comes from the discretization of \(\psi _0(x)\).

We set \(n=500\) and integrate first from 0 to \(T=40 \pi \) with the step size \(h=T/n_t\), \(n_t = 8000\). The benefits obtained from the symmetry properties of the EEMP (Lemma 4.2) are illustrated by Figs. 7 and 8 which depict the relative energy errors and solution errors given by the EE and the EEMP, when \(\dim ({{\varvec{U}}}_k) = 20\). The nonsymmetric EE shows a linear growth in energy error and quadratic growth in solution error whereas the EEMP gives a bounded energy error and a linear growth in solution error.

We then integrate from 0 to \(T=80 \pi \) with the step size \(h=T/n_t\), \(n_t = 10000\), which implies that the norm of \(h \, {{\varvec{H}}}\) is now bigger and thus larger dimension for \({{\varvec{U}}}_k\) is required. Differences resulting from the symplecticity of the basis \({{\varvec{U}}}_k\) can be seen in Fig. 9 where we compare the IEMP when \(\dim ( {{\varvec{U}}}_k ) = 24\) and \({{\varvec{U}}}_k\) is given by the Arnoldi iteration and the Hamiltonian Lanczos process. The Arnoldi iteration shows a growth of energy error whereas the Hamiltonian Lanczos iteration shows bounded energy error.

6.4 Nonlinear Klein–Gordon equation

As a last numerical example we consider the nonlinear Klein–Gordon equation with periodic boundary conditions,

After a spatial discretization on the interval [0, L] using the finite differences with the grid size \(\varDelta x = L/n\), we get a Hamiltonian system of the form (6.4) with the Hamiltonian (6.3), where \(F(q_i) = \tfrac{m^2}{2} q_i^2 + \tfrac{g}{4} q_i^4\). We set the initial data

Set \(A=1\), \(m=0.5\), \(L=1\), \(g=1\) and \(n=400\). We integrate from 0 to \(T=180\) with the step size \(h = \frac{T}{n_t}\), \(n_t = 9000\).

Here applying the EEMP combined with the Arnoldi iteration results in an unstable method. However, the Hamiltonian Lanczos process gives a stable alternative. Figure 10 show the relative energy and solution errors for the EEMP combined with the Hamiltonian Lanczos, and for the EE combined with the Arnoldi iteration. For the energy error the EEMP combined with a symplectic basis gives a bounded energy error and also a smaller solution error than the EE combined with the Arnoldi iteration.

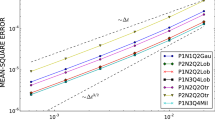

When applying the IEMP, the effect of the symplecticity of \({{\varvec{U}}}_k\) shows up. Figure 11 shows the relative energy errors when \(\dim ({{\varvec{U}}}_k) = 22\) and \({{\varvec{U}}}_k\) is produced using the Arnoldi iteration and the Hamiltonian Lanczos process.

7 Conclusions and outlook

The theoretical background of this research was the following. By backward error analysis it can be shown that applying a symplectic integrator to an integrable Hamiltonian systems gives a symplectic map \({{\varvec{x}}}_j\rightarrow {{\varvec{x}}}_{j+1}\) and also

for exponentially long times (see [11, Ch. X]). Behavior \((\#)\) can also be shown for symmetric time integrators when applied to integrable Hamiltonian systems (see [11, Ch. XI]).

Here we have considered exponential integration methods which give symplectic maps when applied to linear Hamiltonian systems. When using the approximations to nonlinear systems the resulting maps are not symplectic, but \((\#)\) can anyway be observed numerically. This is especially true when using the exponential explicit midpoint rule

in such a way that the range of \({{\varvec{U}}}\) contains

Then the method becomes symmetric. The effect of symplecticity of \({{\varvec{U}}}\) can be seen numerically when applying the implicit exponential midpoint rule (Sect. 4.3) to nonlinear Hamiltonian problems (see e.g. Fig. 11).

The numerical experiments clearly show that both preserving the Hamiltonian structure and the time symmetry are important when applying exponential integrators with Krylov approximations to large scale Hamiltonian systems. The Hamiltonian Lanczos method appears to be the most efficient method to produce a symplectic basis \({{\varvec{U}}}_k\) among those alternatives that give a Krylov subspace of a given dimension. However, further study is needed to find iterations with short \(\omega \)-orthogonalization recursions that are more efficient and numerically stable for the approximation of the \(\phi \) functions.

Notes

We use the shorthand notation \({{\varvec{x}}}={{\varvec{x}}}_j,\ {{\varvec{x}}}_+={{\varvec{x}}}_{j+1}\) etc. for the rest of the manuscript.

Mehrmann and Watkins use the iteration for computing eigenpairs of skew-Hamiltonian/Hamiltonian pencils.

References

Abraham, R., Marsden, J.E.: Foundations of Mechanics, 2nd edn. Benjamin/Cummings Publishing Company, Reading (1978)

Benner, P., Fassbender, H.: An implicitly restarted symplectic Lanczos method for the Hamiltonian eigenvalue problem. Linear Algebra Appl. 263, 75–111 (1997)

Bronski, J.C., Carr, L.D., Deconinck, B., Kutz, J.N.: Bose–Einstein condensates in standing waves: the cubic nonlinear Schrödinger equation with a periodic potential. Phys. Rev. Lett. 86(8), 1402–1405 (2001)

Celledoni, E., Cohen, D., Owren, B.: Symmetric exponential integrators with an application to the cubic Schrödinger equation. Found. Comput. Math. 8, 303–317 (2008)

Celledoni, E., Grimm, V., McLachlan, R.I., McLaren, D.I., O’Neale, D., Owren, B., Quispel, G.R.W.: Preserving energy resp. dissipation in numerical PDEs using the “Average Vector Field” method. J. Comput. Phys. 231(20), 6770–6789 (2012)

Druskin, V.L., Knizhnerman, L.A.: Two polynomial methods of calculating functions of symmetric matrices. USSR Comput. Math. Math. Phys. 29, 112–121 (1989)

Eirola, T.: Krylov integrators for Hamiltonian systems. Workshop on Exponential Integrators, October 20–23 (2004) Innsbruck, Austria. https://numerical-analysis.uibk.ac.at/images/Conference-Bilder/Workshop-Exponential-Integrators/pdfs/timo.pdf

Eirola, T., Huhtanen, M., von Pfaler, J.: Solution methods for \(\mathbb{R}\)-linear problems in \(\mathbb{C}^n\). SIAM J. Matrix Anal. Appl. 25(3), 804–828 (2003)

Eirola, T., Sanz-Serna, J.M.: Conservation of integrals and symplectic structure in the integration of differential equations by multistep methods. Numer. Math. 61, 281–290 (1992)

Gallopoulos, E., Saad, Y.: Efficient solution of parabolic equations by Krylov approximation methods. SIAM J. Sci. Stat. Comput. 13, 1236–1264 (1992)

Hairer, E., Lubich, C., Wanner, G.: Geometric Numerical Integration: Structure Preserving Algorithms for Ordinary Differential Equations. Springer Series in Computational Mathematics, vol. 31, 2nd edn. Springer, Berlin (2006)

Hochbruck, M., Lubich, C.: On Krylov subspace approximations to the matrix exponential operator. SIAM J. Numer. Anal. 34, 1911–1925 (1997)

Hochbruck, M., Lubich, C., Selhofer, H.: Exponential integrators for large systems of differential equations. SIAM J. Sci. Comput. 19, 1552–1574 (1998)

Hochbruck, M., Ostermann, A.: Exponential integrators. Acta Numer. 19, 209–286 (2010)

Koskela, A.: Structure preserving Krylov integrators for Hamiltonian systems. Master’s thesis, Aalto University, Finland (2010)

Lubich, C.: A variational splitting integrator for quantum molecular dynamics. Appl. Numer. Math. 48, 355–368 (2008)

Mehrmann, V., Watkins, D.: Structure-preserving methods for computing eigenpairs of large sparse skew-Hamiltonian/Hamiltonian pencils. SIAM J. Sci. Comput. 22, 1905–1925 (2001)

Watkins, D.S.: On Hamiltonian and symplectic Lanczos processes. Linear Algebra Appl. 385, 23–45 (2004)

Author information

Authors and Affiliations

Corresponding author

Additional information

Communicated by Christian Lubich.

In memory of Timo Eirola (1951–2016).

Rights and permissions

Open Access This article is distributed under the terms of the Creative Commons Attribution 4.0 International License (http://creativecommons.org/licenses/by/4.0/), which permits unrestricted use, distribution, and reproduction in any medium, provided you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons license, and indicate if changes were made.

About this article

Cite this article

Eirola, T., Koskela, A. Krylov integrators for Hamiltonian systems. Bit Numer Math 59, 57–76 (2019). https://doi.org/10.1007/s10543-018-0732-y

Received:

Accepted:

Published:

Issue Date:

DOI: https://doi.org/10.1007/s10543-018-0732-y

Keywords

- Hamiltonian systems

- Exponential integrators

- Krylov subspace methods

- Symplectic integrators

- Symmetric integrators

- Hamiltonian Lanczos algorithm