Abstract

We consider an even probability distribution on the d-dimensional Euclidean space with the property that it assigns measure zero to any hyperplane through the origin. Given N independent random vectors with this distribution, under the condition that they do not positively span the whole space, the positive hull of these vectors is a random polyhedral cone (and its intersection with the unit sphere is a random spherical polytope). It was first studied by Cover and Efron. We consider the expected face numbers of these random cones and describe a threshold phenomenon when the dimension d and the number N of random vectors tend to infinity. In a similar way we treat the solid angle, and more generally the Grassmann angles. We further consider the expected numbers of k-faces and of Grassmann angles of index \(d-k\) when also k tends to infinity.

1 Introduction

The following is a literal quotation from [7]: “Recent work has exposed a phenomenon of abrupt phase transitions in high-dimensional geometry. The phase transitions amount to a rapid shift in the likelihood of a property’s occurrence when a dimension parameter crosses a critical level (a threshold).” Two early observations in high-dimensional random geometry of such distinctly different behavior below and above a threshold were published in 1992. Dyer et al. [10] considered the convex hull of \(N=N(d)\) points chosen independently at random (with equal chances) from the vertices of the unit cube in \({{\mathbb {R}}}^d\). Let \(V_{d,N}\) denote the volume of this random polytope. Then, for every \(\varepsilon >0\),

Here \({{\mathbb {E}}}\) denotes mathematical expectation. The paper [10] has a similar result for the convex hull of i.i.d. uniform random points from the interior of the unit cube. We have quoted this example as an illustration of what we have in mind: for instance, a d-dimensional random polytope with its number N of vertices depending on d, where a small change of this dependence causes an abrupt change of some property as \(d\rightarrow \infty \). In the work of Vershik and Sporyshev [20], a d-dimensional random polytope is obtained as a uniform random orthogonal projection of a fixed regular simplex with N vertices in a higher-dimensional space, and threshold phenomena are exhibited for the expected numbers of k-faces, under the assumption of a linearly coordinated growth of the parameters d, N, k. Similar models, also with the regular simplex replaced by the regular cross-polytope, and random projections extended to more general random linear mappings, have found important applications in the work of Donoho and collaborators. We refer to the article of Donoho and Tanner [8], where also earlier work of these authors is cited and explained. The paper [9] of the same authors treats random projections of the cube and the positive orthant in a similar way. Generally in stochastic geometry, threshold phenomena have been investigated for face numbers, neighborliness properties, volumes, intrinsic volumes, more general measures, and for several different models of random polytopes. Different phase transitions were exhibited. We mention that [11] has extended the model of [10] by introducing more general distributions for the random points. The paper [17] considers convex hulls of i.i.d. random points with either Gaussian distribution or uniform distribution on the unit sphere. In [3, 4], the points have a beta or beta-prime distribution. The paper [5] studies facet numbers of convex hulls of random points on the unit sphere in different regimes. 
The papers [3, 13, 17] also deal with polytopes generated by intersections of random closed halfspaces. An important role is played by phase transitions in convex programs with random data. We quote from the work of Amelunxen et al. [1], which discovers and describes several of these phenomena: “This paper provides the first rigorous analysis that explains why phase transitions are ubiquitous in random convex optimization problems \(\dots \) The applied results depend on foundational research in conic geometry.”

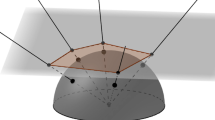

In this paper, we consider a model of random polyhedral convex cones (or, equivalently, of random spherical polytopes) that was introduced by Cover and Efron [6] (and more closely investigated in [14]). Let \(\phi \) be a probability measure on the Euclidean space \({{\mathbb {R}}}^d\) that is even (invariant under reflection in the origin o) and assigns measure zero to each hyperplane through the origin. For \(n\in {{\mathbb {N}}}\), the \((\phi ,n)\)-Cover–Efron cone \(C_n\) is defined as the positive hull of n independent random vectors \(X_1,\dots ,X_n\) with distribution \(\phi \), under the condition that this positive hull is different from \({{\mathbb {R}}}^d\). The intersection \(C_n\cap {{\mathbb {S}}}^{d-1}\) with the unit sphere \({{\mathbb {S}}}^{d-1}\) is a spherical random polytope, contained in some closed hemisphere. In the following it will be convenient to work with polyhedral cones instead of spherical polytopes.

For \(k\in \{1,\dots ,d-1\}\), let \(f_k(C_n)\) denote the number of k-dimensional faces of the cone \(C_n\) (equivalently, the number of \((k-1)\)-dimensional faces of the spherical polytope \(C_n\cap {{\mathbb {S}}}^{d-1}\)). We are interested in the asymptotic behavior of the expectation \({{\mathbb {E}}}f_k(C_n)\), as d tends to infinity and n grows suitably with d.

Convention

In the following, \(C_N\) is a \((\phi ,N)\)-Cover–Efron cone in \({{\mathbb {R}}}^d\), and \(N=N(d)\ge d\) is an integer depending on the dimension d, but we will omit the dimension d in the notation.

Any k-face of the cone \(C_N\) is a.s. the positive hull of k vectors from \(X_1,\dots ,X_N\), and there are \(\left( {\begin{array}{c}N\\ k\end{array}}\right) \) possible choices. Therefore, we consider the quotient \({{\mathbb {E}}}f_k(C_N)/\left( {\begin{array}{c}N\\ k\end{array}}\right) \). For this, we claim the following behavior above and below a threshold.

Theorem 1.1

Suppose that

with a number \(\delta \in [0,1]\). Let \(k\in {{\mathbb {N}}}\) be fixed. Then the Cover–Efron cone \(C_N\) satisfies

We have assumed here that the number k is constant. But if in the case where \(0\le \delta < 1/2\) we let also k depend on d, in such a way that

(thus, k grows with d, but sublinearly) then

This is easily read off from the proof of the second part of Theorem 1.1 given in Sect. 4.

The case \(1/2<\delta \le 1\) of Theorem 1.1 follows from the stronger Theorem 1.2 below, but we have formulated Theorem 1.1 in this way to illuminate the different behavior if \(\delta \) is below 1/2 or above 1/2. Partial information on the case \(\delta =1/2\) is contained in Theorem 1.2. In this theorem, also the number k is allowed to depend on d.

Theorem 1.2

Let \(k=k(d)\in \{1,\dots ,d-1\}\). Suppose that

with some real constant a. Then

The same conclusion is obtained, if \(k=k(d)\) is bounded (as \(d\rightarrow \infty \)) and

with constants \(a>0\) and \(\alpha \ge 0\).

The assumptions of this theorem are, in particular, satisfied if k is constant and

(which holds, for instance, if \(N-2d\) is bounded from above); the latter holds if \({d}/{N}\rightarrow \delta >{1}/{2}\). We do not have complete information in the case where \(d/N\rightarrow 1/2\), but there is a precise result if \(d/N=1/2\).

Theorem 1.3

If \(N=2d\) and \(k\in {{\mathbb {N}}}\) is fixed, then

On the other hand, Theorem 1.7 below implies that if

then

As another functional of a closed convex cone \(C\subset {{\mathbb {R}}}^d\), we consider the solid angle \(v_d(C)\). This is the normalized spherical Lebesgue measure of \({C\cap {{\mathbb {S}}}^{d-1}}\). (We avoid the notation \(V_d(C)\) used in [14], since \(V_d\) is often used for the volume of a convex body. The reader is warned that what we denote here by \(v_d(C)\) was denoted by \({v_{d-1}(C\cap {{\mathbb {S}}}^{d-1})}\) in [18, Sect. 6.5].)

More generally, we consider the Grassmann angles. For a closed convex cone \(C\subset {{\mathbb {R}}}^d\) which is not a subspace, the jth Grassmann angle of C, for \(j\in \{1,\dots ,d-1\}\), is defined by

where \({{\mathcal {L}}}\) is a random \((d-j)\)-dimensional subspace with distribution \(\nu _{d-j}\). The latter is the unique Haar probability measure on \(G(d,d-j)\), the Grassmannian of \((d-j)\)-dimensional linear subspaces of \({{\mathbb {R}}}^d\). Thus,

Grassmann angles were introduced by Grünbaum [12], in a slightly different, though equivalent way. Grünbaum’s Grassmann angles are given by \(\gamma ^{d-j,d}=1-2U_j\). We note that \(v_d=U_{d-1}\), and that \(U_j(C)\le 1/2\), with equality if C is a halfspace.

Theorem 1.4

Suppose that

with a number \(\delta \in [0,1]\). Let \(k\in {{\mathbb {N}}}\) be fixed. Then the \((\phi ,N)\)-Cover–Efron cone \(C_N\) satisfies

We have assumed here that the number k is constant. But if in the case where \(0\le \delta < 1/2\) we let also k depend on d, in such a way that

(thus, k grows with d, but sublinearly) then

This can be seen from the second part of the proof of Theorem 1.4 given in Sect. 5.

Again, the first part of this theorem has a stronger version, given by the following theorem.

Theorem 1.5

Let \(k=k(d)\in \{1,\dots ,d-1\}\). Suppose that

with some real constant a. Then

The same conclusion is obtained, if \(k=k(d)\) is bounded (as \(d\rightarrow \infty )\) and

with constants \(a>0\) and \(\alpha \ge 0\).

And similarly as above, there is a more precise asymptotic relation if \(N=2d\).

Theorem 1.6

If \(N=2d\) and \(k\in {{\mathbb {N}}}\) is fixed, then

On the other hand, Theorem 1.9 below implies that if

then

Clearly, in Theorem 1.1 (and similarly in Theorem 1.4), the change when passing a threshold is not so abrupt as in the examples from [9], where both parameters, N and k, grow linearly with the dimension: below the threshold \(\delta =1/2\), the limit in question increases (decreases) with the parameter to an extremal value, above the threshold, it remains constant. The situation changes if also the number k increases sublinearly in the dimension. Then indeed we also have a sharp threshold as pointed out above. Now we consider the case where k increases proportionally to the dimension; then a more subtle phase transition is observed. Under a linearly coordinated growth, for k-faces we find the same threshold as established by Donoho and Tanner [9] in their investigation of random linear images of orthants. This may seem unexpected, since the random cones considered in [9] and here have different distributions (see, however, the appendix).

As in [9], we define

$$\begin{aligned} \rho _W(\delta ):=\max \{0,2-\delta ^{-1}\} \end{aligned}$$

(the index W stands for ‘weak’ threshold).

Theorem 1.7

Let \(0<\delta <1\), \(0\le \rho <1\) be given. Let \(k(d)=k<d<N=N(d)\) be integers such that

Then

We note that the first assumption of this theorem, \(\rho <\rho _W(\delta )\), implies that for large d we have \(N+k<2d\).

Adapting an argument of Donoho and Tanner [9] to the present situation, we can also replace the convergence of an expectation in the first part of Theorem 1.7 by the convergence of a probability, at the cost of a smaller threshold.

Theorem 1.8

Let \(0<\delta ,\rho <1\) be given, where \(\delta >1/2\). Let \(k(d)=k<d<N=N(d)\) be integers such that

Then there exists a positive number \(\rho _S(\delta )\) such that, for \(\rho <\rho _S(\delta )\),

There is also a counterpart to Theorem 1.7 for Grassmann angles.

Theorem 1.9

Let \(0<\delta <1\), \(0\le \rho <1\) be given. Let \(k(d)=k<d<N=N(d)\) be integers such that

Then

After some preliminaries in the next section, we collect a number of auxiliary results about sums of binomial coefficients in Sect. 3. Then we prove the first three theorems in Sect. 4, Theorems 1.4 to 1.6 in Sect. 5, Theorems 1.7 and 1.8 in Sect. 6, and Theorem 1.9 in Sect. 7.

2 Preliminaries

First we recall two classical facts. For \(n\in {{\mathbb {N}}}\), let \(H_1,\dots ,H_n\in G(d,d-1)\). Assume that these hyperplanes are in general position, that is, the intersection of any \(m\le d\) of them is of dimension \(d-m\). Then the number of d-dimensional cones in the tessellation of \({{\mathbb {R}}}^d\) induced by these hyperplanes is given by

From this result of Steiner (in dimension three) and Schläfli, Wendel has deduced the following. If \(X_1,\dots ,X_n\) are i.i.d. random vectors in \({{\mathbb {R}}}^d\) with distribution \(\phi \) (enjoying the properties mentioned above), then

where \({{\mathbb {P}}}\) stands for probability and \({{\,\mathrm{pos}\,}}\) denotes the positive hull. For references and proofs, we refer to [18, Sect. 8.2.1]. Now we can write down the distribution of the \((\phi ,n)\)-Cover–Efron cone \(C_n\), namely

for \(B\in {\mathcal {B}}({{\mathcal {C}}}^d)\), where \({{\mathcal {C}}}^d\) denotes the space of closed convex cones in \({{\mathbb {R}}}^d\) (with the topology of closed convergence) and \({\mathcal {B}}({{\mathcal {C}}}^d)\) is its Borel \(\sigma \)-algebra.
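As a numerical companion to the two classical facts recalled above, the following Python sketch evaluates the Schläfli count and the Wendel probability exactly. It assumes the standard forms \(C(n,d)=2\sum _{i=0}^{d-1}\left( {\begin{array}{c}n-1\\ i\end{array}}\right) \) for the number of d-cones and \(C(n,d)/2^n\) for the probability that the positive hull differs from \({{\mathbb {R}}}^d\); the function names are ours and purely illustrative.

```python
from fractions import Fraction
from math import comb


def schlaefli_count(n: int, d: int) -> int:
    """Number of d-cones cut out by n hyperplanes through o in general position.

    Assumed form: C(n, d) = 2 * sum_{i=0}^{d-1} binom(n-1, i).
    """
    return 2 * sum(comb(n - 1, i) for i in range(d))


def wendel_probability(n: int, d: int) -> Fraction:
    """Probability that pos{X_1, ..., X_n} differs from R^d.

    Assumed form (Wendel): C(n, d) / 2^n.
    """
    return Fraction(schlaefli_count(n, d), 2 ** n)


# Three lines through the origin divide the plane into six sectors.
sectors = schlaefli_count(3, 2)

# For n = 2d the probability is exactly 1/2, whatever the dimension.
balanced = [wendel_probability(2 * d, d) for d in range(1, 6)]
```

For \(n\le d\) the function `wendel_probability` returns 1, in accordance with the fact that fewer than \(d+1\) vectors cannot positively span \({{\mathbb {R}}}^d\).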

There is an equivalent representation of \(C_n\). For this, we denote by \(\phi ^*\) the image measure of \(\phi \) under the mapping \(x\mapsto x^\perp \) from \({{\mathbb {R}}}^d\setminus \{o\}\) to \(G(d,d-1)\). Let \({\mathcal {H}}_1,\dots ,{\mathcal {H}}_n\) be i.i.d. random hyperplanes with distribution \(\phi ^*\). They are almost surely in general position. The \((\phi ^*,n)\)-Schläfli cone \(S_n\) is obtained by picking at random (with equal chances) one of the d-dimensional cones from the tessellation induced by \({\mathcal {H}}_1,\dots ,{\mathcal {H}}_n\). Its distribution is given by

for \(B\in {\mathcal {B}}({{\mathcal {C}}}^d)\), where \({{\mathcal {F}}}_d(H_1,\dots ,H_n)\) is the set of d-cones in the tessellation induced by \(H_1,\dots ,H_n\). We have (see [14, Thm. 3.1])

where \(S_n^\circ \) denotes the polar cone of \(S_n\).

For the expectations appearing in our theorems, explicit representations are available. The proofs of Theorems 1.1, 1.2, 1.3, and 1.7 are based on the formula

\(k\in \{0,\ldots ,d-1\}\) (see [6, (3.3)] or [14, (27)]). For the proofs of Theorems 1.4, 1.5, 1.6, and 1.9, we use the explicit formula

for \(k\in \{1,\ldots ,d-1\}\) (see [14, (29)]). It is sometimes useful to write this in the form

(where an empty sum is zero, by definition).
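To make the face-number formula (1) concrete, we add a small Python sketch. It assumes that (1) has the Cover–Efron form \({{\mathbb {E}}}f_k(C_n)=2^k\left( {\begin{array}{c}n\\ k\end{array}}\right) C(n-k,d-k)/C(n,d)\) with \(C(n,d)=2\sum _{i=0}^{d-1}\left( {\begin{array}{c}n-1\\ i\end{array}}\right) \); the names `cone_count` and `face_ratio` are hypothetical helpers, not notation from the paper.

```python
from fractions import Fraction
from math import comb


def cone_count(n: int, d: int) -> int:
    """Assumed Schlaefli count C(n, d) = 2 * sum_{i=0}^{d-1} binom(n-1, i)."""
    return 2 * sum(comb(n - 1, i) for i in range(d))


def face_ratio(N: int, d: int, k: int) -> Fraction:
    """E f_k(C_N) / binom(N, k), assuming (1) reads 2^k C(N-k, d-k) / C(N, d)."""
    return Fraction(2 ** k * cone_count(N - k, d - k), cone_count(N, d))


# Planar sanity check: a cone in R^2 different from R^2 has two bounding rays,
# so E f_1(C_N) should equal 2 for every N >= 2.
planar = [face_ratio(N, 2, 1) * comb(N, 1) for N in range(2, 8)]

# Fixed k = 2 on both sides of the threshold d/N = 1/2.
below = float(face_ratio(1000, 200, 2))  # d/N = 0.2, to be compared with (2 * 0.2)^2
above = float(face_ratio(300, 200, 2))   # d/N = 2/3, to be compared with 1
```

Exact rational arithmetic is used so that the planar check is an identity rather than a floating-point approximation; the two large examples are only meant to indicate the different behavior below and above the threshold for finite d.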

3 Auxiliary Results on Binomial Coefficients

First we collect some information on the Wendel probabilities

Let \(\xi _n\) be a random variable having the binomial distribution with parameters n and 1/2, i.e.,

Thus we can write

and therefore, by (1),

Similarly, by (2) we have

The following two lemmas concern the Wendel probabilities and are therefore stated here, although they are not needed before the proof of Theorem 1.8.

Lemma 3.1

For \(k\in \{1,\dots ,d-1\}\),

with

Proof

Writing \(\left( {\begin{array}{c}N-1\\ i\end{array}}\right) = \left( {\begin{array}{c}N-2\\ i-1\end{array}}\right) +\left( {\begin{array}{c}N-2\\ i\end{array}}\right) \) for \(i=1,\dots ,d-1\), we obtain

This and induction can be used to prove that for \(k\in \{1,\dots ,d-1\}\),

For \(j\in \{1,\dots ,k\}\) we have

This gives

and thus the assertion. \(\square \)

Lemma 3.2

If \(N-2d+k-1<0\), the sum is zero, by convention.

Proof

Let M, p be integers. If \(1\le p\le {M}/{2}\), then

thus

If \(p>{M}/{2}\), we have

Hence, for arbitrary \(p\ge 1\) we may write

with the convention that the last sum is zero if \(2p> M\). The choice \(M= N-k-1\) and \(p=d-k\) now gives the assertion. \(\square \)

Below some information on binomial coefficients is required. First we note Stirling’s formula

It implies, in particular, that

(where \(a_n\sim b_n\) as \(n\rightarrow \infty \) means that \(\lim _{n\rightarrow \infty }a_n/b_n=1\)).
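The quality of Stirling's approximation is easy to inspect numerically. The sketch below assumes the classical form \(n!\approx \sqrt{2\pi n}\,(n/e)^n\) with a logarithmic error between 0 and \(1/(12n)\), and it also checks one standard consequence, \(\left( {\begin{array}{c}2m\\ m\end{array}}\right) \sim 4^m/\sqrt{\pi m}\), which is not necessarily the relation displayed in the text. The helper names are ours.

```python
from math import comb, factorial, log, pi, sqrt


def stirling_log_error(n: int) -> float:
    """log(n!) - log(sqrt(2*pi*n) * (n/e)^n), using the exact integer n!."""
    return log(factorial(n)) - (0.5 * log(2 * pi * n) + n * log(n) - n)


def central_binomial_ratio(m: int) -> float:
    """binom(2m, m) divided by its Stirling approximation 4^m / sqrt(pi * m)."""
    return comb(2 * m, m) / 4 ** m * sqrt(pi * m)


# The logarithmic error is positive and at most 1/(12n).
log_errors = {n: stirling_log_error(n) for n in (1, 5, 10, 50)}

# The ratio approaches 1 as m grows.
ratio_1000 = central_binomial_ratio(1000)
```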

The following lemma gives upper and lower bounds for the expressions appearing in (3). For the proof of the upper bound, we adjust and slightly refine the argument for [15, Prop. 1(c)], in the current framework. The improved lower bound in (12) will be crucial in the following.

Lemma 3.3

Let \(n\in {{\mathbb {N}}}\), \(m\in {{\mathbb {N}}}_0\), and \(2m\le n+1\).

(a) If \(2m<n+1\), then

$$\begin{aligned} \frac{1}{\left( {\begin{array}{c}n\\ m+1\end{array}}\right) }\sum _{j=0}^{m}\left( {\begin{array}{c}n\\ j\end{array}}\right) \le \frac{m+1}{n-m} \cdot \frac{n-m+1}{n-2m+1} \biggl (1-\biggl (\frac{m}{n-m+1}\biggr )^{m+1}\biggr ). \end{aligned}$$(10)

If \(2m=n+1\), then

$$\begin{aligned} \frac{1}{\left( {\begin{array}{c}n\\ m+1\end{array}}\right) }\sum _{j=0}^{m}\left( {\begin{array}{c}n\\ j\end{array}}\right) \le \frac{(m+1)^2}{n-m}. \end{aligned}$$(11)

(b) If \(2\le \ell \le m\), then

$$\begin{aligned} \frac{m-\ell +1}{n-2m+2\ell -1}\biggl (1-\biggl (\frac{m-\ell +1}{n-m+\ell }\biggr )^{\ell +1}\biggr )\le \frac{1}{\left( {\begin{array}{c}n\\ m+1\end{array}}\right) }\sum _{j=0}^{m}\left( {\begin{array}{c}n\\ j\end{array}}\right) . \end{aligned}$$(12)

Moreover,

$$\begin{aligned} \frac{m+1}{n-m}\le \frac{m+1}{n-m}\cdot \frac{n+1}{n+1-m}\le \frac{1}{\left( {\begin{array}{c}n\\ m+1\end{array}}\right) }\sum _{j=0}^{m}\left( {\begin{array}{c}n\\ j\end{array}}\right) . \end{aligned}$$(13)

Proof

(a) The cases \(n=1\), \(m\in \{0,1\}\) and \(n\ge 2\), \(m=0\) are easy to check. Now let \(n\ge 2\), \(m\ge 1\), and hence also \(m\le n-1\). If \(j\in \{0,\ldots ,m-1\}\), then

since

Therefore, if \(2m<n+1\), then \(0<q_0:=m/(n-m+1)<1\) and

which implies (10). If \(2m=n+1\), then \(m/(n-m+1)=1\), and (11) follows similarly.

(b) Note that \(m\le ({n+1})/{2}\le n\), and \(m\le n-1\) if \(n\ge 2\). Hence, if \( 2\le \ell \le m\), then \(m\le n-1\), \(n-2m+2\ell -1\ge 2\), \((n+1)/(m+1)>1\), and \(0<q_1:=(m-\ell +1)/(n-m+\ell )<1\). Then, for \(j\in \{m-\ell ,\ldots ,m\}\) we obtain

and hence

which yields (12). If \(m=n\), then (13) holds trivially, since \(\left( {\begin{array}{c}n\\ n+1\end{array}}\right) =0\). It also holds for \(m=0\). In the remaining cases, we have

This completes the proof of (b). \(\square \)
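The bounds (10) to (13) of Lemma 3.3 can be confirmed by brute force for small parameters. The following Python sketch does this in exact rational arithmetic for all admissible pairs \((n,m)\) with \(2\le n\le 40\); it is only a sanity check and no substitute for the proof. The function names are ours, and pairs with \(\left( {\begin{array}{c}n\\ m+1\end{array}}\right) =0\) are skipped because the normalized sum is then undefined.

```python
from fractions import Fraction
from math import comb


def normalized_sum(n: int, m: int) -> Fraction:
    """sum_{j=0}^{m} binom(n, j) divided by binom(n, m+1)."""
    return Fraction(sum(comb(n, j) for j in range(m + 1)), comb(n, m + 1))


def upper_bound(n: int, m: int) -> Fraction:
    """Right-hand side of (10) if 2m < n+1, and of (11) if 2m = n+1."""
    if 2 * m < n + 1:
        q0 = Fraction(m, n - m + 1)
        return (Fraction(m + 1, n - m) * Fraction(n - m + 1, n - 2 * m + 1)
                * (1 - q0 ** (m + 1)))
    return Fraction((m + 1) ** 2, n - m)


def lower_bound_12(n: int, m: int, ell: int) -> Fraction:
    """Left-hand side of (12), valid for 2 <= ell <= m."""
    q1 = Fraction(m - ell + 1, n - m + ell)
    return Fraction(m - ell + 1, n - 2 * m + 2 * ell - 1) * (1 - q1 ** (ell + 1))


def lower_bound_13(n: int, m: int) -> Fraction:
    """Middle term of (13)."""
    return Fraction(m + 1, n - m) * Fraction(n + 1, n + 1 - m)


def bounds_hold(max_n: int = 40) -> bool:
    """Check (10)-(13) for all 2 <= n <= max_n and 0 <= m with 2m <= n+1."""
    for n in range(2, max_n + 1):
        for m in range((n + 1) // 2 + 1):
            if m + 1 > n:  # binom(n, m+1) = 0, quotient undefined
                continue
            s = normalized_sum(n, m)
            if not (Fraction(m + 1, n - m) <= lower_bound_13(n, m) <= s):
                return False
            if s > upper_bound(n, m):
                return False
            if any(lower_bound_12(n, m, ell) > s for ell in range(2, m + 1)):
                return False
    return True
```

Equality occurs in small cases; for instance, \(n=3\), \(m=1\) gives the value 4/3 on both sides of (10) and of the second inequality in (13).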

From (10) and (13) with \(n=N-1\) and \(m=d-2\), we deduce that

if \(N>2d-4\).

Lemma 3.4

If \(d/N \rightarrow \delta \) as \(d\rightarrow \infty \), with \(0\le \delta <1/2\), then

Proof

Assume that \(d/N\rightarrow \delta \) as \(d\rightarrow \infty \), with a number \(0\le \delta <1/2\). We write \(N=\alpha d\), where \(\alpha \) depends on d and satisfies \(\alpha \rightarrow \delta ^{-1}\) as \(d\rightarrow \infty \). If \(\delta =0\), this means that \(\alpha \rightarrow \infty \). We assume that d is so large that \(\alpha >2\). From (14) we have

We conclude that

Lemma 3.3(b) provides the lower bound

if \(2\le \ell _1\le d-2\). From this, we obtain

for each fixed \(\ell _1\ge 2\). Letting \(\ell _1\rightarrow \infty \), we find that

Together with (15) this completes the proof. \(\square \)

We state another simple lemma.

Lemma 3.5

Let \(m\in {{\mathbb {N}}}\). Then

Proof

We use \(\left( {\begin{array}{c}n\\ \ell \end{array}}\right) = \left( {\begin{array}{c}n\\ n-\ell \end{array}}\right) \). If \(x:= \sum _{i=0}^m\left( {\begin{array}{c}2m\\ i\end{array}}\right) \), then

which gives the first relation. If \(y:= \sum _{i=0}^{m-1}\left( {\begin{array}{c}2m-1\\ i\end{array}}\right) \), then

which gives the second relation. \(\square \)
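The symmetry argument of this proof leads to two closed forms which are easily tested. The Python sketch below assumes that the relations of Lemma 3.5 are \(\sum _{i=0}^m\left( {\begin{array}{c}2m\\ i\end{array}}\right) =2^{2m-1}+\frac{1}{2}\left( {\begin{array}{c}2m\\ m\end{array}}\right) \) and \(\sum _{i=0}^{m-1}\left( {\begin{array}{c}2m-1\\ i\end{array}}\right) =2^{2m-2}\), which is what the symmetry of the binomial coefficients yields for the sums x and y defined above; the function names are illustrative.

```python
from math import comb


def half_row_sum_even(m: int) -> int:
    """x = sum_{i=0}^{m} binom(2m, i): lower half of row 2m, middle term included."""
    return sum(comb(2 * m, i) for i in range(m + 1))


def half_row_sum_odd(m: int) -> int:
    """y = sum_{i=0}^{m-1} binom(2m-1, i): lower half of row 2m-1."""
    return sum(comb(2 * m - 1, i) for i in range(m))


# By symmetry, 2x counts the full row plus the middle term once more,
# while 2y is exactly the full row sum 2^(2m-1).
even_ok = all(2 * half_row_sum_even(m) == 4 ** m + comb(2 * m, m)
              for m in range(1, 31))
odd_ok = all(2 * half_row_sum_odd(m) == 2 ** (2 * m - 1)
             for m in range(1, 31))
```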

4 Proofs of Theorems 1.1 to 1.3

Proof of Theorem 1.1

As already mentioned, the first part of Theorem 1.1 follows from Theorem 1.2, which will be proven below. To prove the second part of Theorem 1.1, we assume that \(d/N\rightarrow \delta \) as \(d\rightarrow \infty \), where \(0\le \delta <1/2\). We write (1) in the form

and here

Since also \((d-k)/(N-k)\rightarrow \delta \), we deduce from Lemma 3.4 that the normalized sums in the numerator and denominator of (16) tend to the same finite limit. It follows that \(\lim _{d\rightarrow \infty }{{\mathbb {E}}}f_k(C_N)/\left( {\begin{array}{c}N\\ k\end{array}}\right) =(2\delta )^k\). \(\square \)

Proof of Theorem 1.2

As in Sect. 3, we denote by \(\xi _n\) a random variable having the binomial distribution with parameters \(n\in {{\mathbb {N}}}\) and \(p=1/2\). Let \(\xi _n^*\) denote the standardized version of \(\xi _n\), that is, \(\xi _n^*=(\xi _n-{{\mathbb {E}}}\xi _n)/\sqrt{{{\mathbb {V}}}\xi _n}\) with \({{\mathbb {E}}}\xi _n=n/2\) and \({{\mathbb {V}}}\xi _n=n/4\). Then (5) implies that

by the Berry–Esseen Theorem (see, e.g., Shiryaev [19, p. 426]), where \(\Phi \) is the distribution function of the standard normal distribution. We have

Since

we get for \(({k+1})/{N}\le {1}/{2}\) (which holds if d is large enough) that

thus

with \(\theta \in [1,4]\). This shows that

We define

hence \(a(d)\ge 2a\), \(b(d)\rightarrow 0\) as \(d\rightarrow \infty \), and

In the same way we get

with

where \({\overline{\theta }}\in [1,4]\). Thus we arrive at

with intermediate values \(z_1,z_2\in {{\mathbb {R}}}\). Since the derivative \(\Phi '\) is bounded, further \(b(d),c(d)\rightarrow 0\) as \(d\rightarrow \infty \), and \(\Phi (a(d))\ge \Phi (2a)>0\), we conclude that the quotient tends to 1 as \(d\rightarrow \infty \).

For the remaining assertion, we assume that k(d) is bounded. Then we have \(b(d)=O(1/{\sqrt{N}})\). Since the case where a(d) is bounded from below has been settled above, we can assume that \(a(d)\le -1\). Thus,

In view of (17), it is sufficient to show that

To verify this, we use the fact that

(the difference of the function on the left-hand side and the right-hand side converges to zero, as \(x\rightarrow \infty \), the derivative of this difference is non-positive for \(x\ge 1\)). Applying this inequality with \(x=-a(d)\), we get

Since

as \(d\rightarrow \infty \), the assertion follows. \(\square \)

Proof of Theorem 1.3

Suppose that \(N=2d\). Then by (1) we have

We distinguish two cases. If k is odd, say \(k=2\ell -1\) with \(\ell \in {{\mathbb {N}}}\), then

where Lemma 3.5 was used. Since Stirling’s formula (8) yields

it follows (again using Lemma 3.5) that

If k is even, say \(k=2\ell \) with \(\ell \in {{\mathbb {N}}}\), then

by Lemma 3.5. By Stirling’s approximation (8),

and hence we get

Thus in both cases the asymptotic relation is proven. \(\square \)

5 Proofs of Theorems 1.4 to 1.6

Proof of Theorem 1.4

The first part of Theorem 1.4 follows from Theorem 1.5, which will be proven below. For the second part of the proof, we assume that \(d/N\rightarrow \delta \) as \(d\rightarrow \infty \), with \(0\le \delta <1/2\). We note that relation (2) shows that

Let

Then

and here

Together with \(d/N\rightarrow \delta \) we have \((d-k+1)/N\rightarrow \delta \) and \((d+1)/N\rightarrow \delta \), hence Lemma 3.4 yields that

The assertion follows. \(\square \)

Proof of Theorem 1.5

The random variables \(\xi _n\) and \(\xi _n^*\) are defined as in the proof of Theorem 1.2. Using (6), we proceed as in that proof and obtain

The rest of the proof follows that for Theorem 1.2. \(\square \)

Proof of Theorem 1.6

Let \(N=2d\). Using Lemma 3.5, we get

By Stirling’s approximation (8), we obtain for \(j\in \{0,\ldots ,k-1\}\) that

Hence we get

Thus we arrive at

which completes the proof. \(\square \)

6 Linearly Growing Face Dimensions

In this section and the next one, we also allow the number k to grow linearly with the dimension d. In the present section, we are interested in a phase transition for the expectation \({{\mathbb {E}}}f_k(C_N)/\left( {\begin{array}{c}N\\ k\end{array}}\right) \). It turns out that it appears at the same threshold as was observed earlier by Donoho and Tanner [9] for a different, but closely related class of random polyhedral cones. These authors considered a real random \(d\times N\) matrix \(\mathsf{A}\) of rank d, where \(d<N\), the nonnegative orthant

of \({{\mathbb {R}}}^N\), and its image \(\mathsf{A}{{\mathbb {R}}}^N_+\) in \({{\mathbb {R}}}^d\). Considering the column vectors of \(\mathsf{A}\) as random vectors in \({{\mathbb {R}}}^d\), the image \(\mathsf{A}{{\mathbb {R}}}^N_+\) is the positive hull of these vectors. For a suitable distribution, the random cone \(\mathsf{A}{{\mathbb {R}}}^N_+\) is obtained in a similar way as the Cover–Efron cone, just by omitting the condition that the cone is different from \({{\mathbb {R}}}^d\). Imposing this condition leads, of course, to different distributions of the random cones. Comparing formula (13) of [9] with our formula (1), where the right-hand side can be written as

we see that it results in an additional denominator in the expression for the expected number of k-faces, thus increasing this expectation. Therefore some of our estimates, though leading to the same threshold, require more effort.

Proof of Theorem 1.7

First we assume that \(0\le \rho <\rho _W(\delta )\). For this part of the proof, we reproduce an argument which was suggested by an anonymous referee (our original proof can be found at arXiv:2004.11473v1). We use the representation

given by (5), where \(\xi _n\) is a random variable with binomial distribution with parameters n and 1/2. Since \(\xi _n\) is in distribution equal to the sum of n i.i.d. Bernoulli random variables with parameter 1/2, the weak law of large numbers gives

for each \(\varepsilon >0\). If now \(d/N\rightarrow \delta >{1}/{2}\) and \({k}/{d}\rightarrow \rho <2-\delta ^{-1}\), then

Therefore,

and hence

as stated.
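The law-of-large-numbers argument can be followed numerically. The Python sketch below assumes that the representation given by (5) amounts to writing \({{\mathbb {E}}}f_k(C_N)/\left( {\begin{array}{c}N\\ k\end{array}}\right) \) as the quotient \({{\mathbb {P}}}(\xi _{N-k-1}\le d-k-1)/{{\mathbb {P}}}(\xi _{N-1}\le d-1)\) of two binomial distribution function values; the names `binomial_cdf` and `face_ratio` are ours. For \(d/N=3/4\) the critical value of \(k/d\) is \(2-\delta ^{-1}=2/3\), and we evaluate the quotient on either side of it.

```python
from math import comb


def binomial_cdf(n: int, m: int) -> float:
    """P(xi_n <= m) for xi_n binomial with parameters n and 1/2 (exact sum, then rounded)."""
    if m < 0:
        return 0.0
    return sum(comb(n, i) for i in range(min(m, n) + 1)) / 2 ** n


def face_ratio(N: int, d: int, k: int) -> float:
    """Assumed form of E f_k(C_N) / binom(N, k) as a quotient of binomial probabilities."""
    return binomial_cdf(N - k - 1, d - k - 1) / binomial_cdf(N - 1, d - 1)


N, d = 400, 300                    # d/N = 3/4, critical ratio k/d = 2/3
below = face_ratio(N, d, 100)      # k/d = 1/3 < 2/3: quotient close to 1
above = face_ratio(N, d, 280)      # k/d = 14/15 > 2/3: quotient close to 0
```

In the first case both \(d-k-1\) and \(d-1\) lie well above the means of the respective binomial variables, so both probabilities are close to 1; in the second case \(d-k-1\) lies far below \((N-k-1)/2\), and the numerator is negligible.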

For the second part of the proof, we use (16) and show first that for increasing d the terms in brackets remain between two positive constants. The asymptotic behavior of the remaining quotient is then determined with the aid of Stirling’s formula.

We assume that \(\rho >\rho _W(\delta )\). Then \(\rho >2-\delta ^{-1}\), irrespective of whether \(\delta \ge 1/2\) or not. For sufficiently large d (which we assume in the following), we then have \(N-2d+k>0\). Thus, we can apply (14) to the normalized sum in the numerator of (16). This yields

Here,

where the last denominator is positive. It follows that

for all sufficiently large d. Here and below we denote by \(c_i\) a positive constant that is independent of d.

In view of (16), we now determine the asymptotic behavior of

Here,

To treat the remaining terms, we use the Stirling formula (8). Define

then \(\delta _d\rightarrow \delta \) and \(\rho _d\rightarrow \rho \) as \(d\rightarrow \infty \). We obtain

where \(\varphi \) is contained in a fixed interval independent of d, and

We define

Note that for \(a,b\in (0,1)\) we have \(b>2-a^{-1}\) if and only if \(a<1/(2-b)\). Let \(H_a(b):=H(a,b)\). Differentiation yields

Hence \(H_a'(b)<0\) for \(b>2-a^{-1}\), since

If \(a\le 1/2\), then

for \(b\in (0,1)\). On the other hand, if \(a>1/2\) and \(b>2-a^{-1}\), we have

Since the function g defined by (21) satisfies \(g(1/2)=1\) and \(g'(a)>0\) for \(1/2<a<1\), we also have

Now we distinguish two cases.

(i) Let \(\delta \le 1/2\). Then (16), (19), and (20) yield

$$\begin{aligned} \limsup _{d\rightarrow \infty }\frac{{{\mathbb {E}}}f_k(C_N)}{\left( {\begin{array}{c}N\\ k\end{array}}\right) }\le & {} \frac{1-\rho }{1-\rho \delta }\cdot \frac{\sqrt{1-\rho \delta }}{\sqrt{1-\rho }} \limsup _{d\rightarrow \infty } H(\delta _d,\rho _d)^N\frac{1+c_2}{1}\\\le & {} (1+c_2) \limsup _{d\rightarrow \infty }c_3^N=0, \end{aligned}$$

where \(H(\delta _d,\rho _d)\le c_3<1\), since \(H(\delta _d,\rho _d)\rightarrow H(\delta ,\rho )<H(\delta ,0)=1\).

(ii) Let \(\delta > 1/2\). Then we can assume that \(N/d<c_4<2\). We have

$$\begin{aligned} \sum _{i=0}^{d-2}\left( {\begin{array}{c}N-1\\ i\end{array}}\right) =2^{N-1}-\sum _{j=0}^{N-d}\left( {\begin{array}{c}N-1\\ j\end{array}}\right) . \end{aligned}$$

Since \(2(N-d)<N\), Lemma 3.3 yields

$$\begin{aligned} \left( {\begin{array}{c}N-1\\ N-d+1\end{array}}\right) ^{-1}\,\sum _{j=0}^{N-d}\left( {\begin{array}{c}N-1\\ j\end{array}}\right) \le \frac{{N}/({d-1})-1}{2-{N}/{d}}, \end{aligned}$$

and hence

$$\begin{aligned} \left( {\begin{array}{c}N-1\\ d-1\end{array}}\right) ^{-1}\,\sum _{i=0}^{d-2}\left( {\begin{array}{c}N-1\\ i\end{array}}\right) \ge \left( {\begin{array}{c}N-1\\ d-1\end{array}}\right) ^{-1}2^{N-1}-\frac{1}{2-{N}/{d}}. \end{aligned}$$

To estimate the last binomial coefficient, we use Stirling’s approximation (8) together with (22). Thus, we get for large d the lower bound

$$\begin{aligned} \left( {\begin{array}{c}N-1\\ d-1\end{array}}\right) ^{-1}\,\sum _{i=0}^{d-2}\left( {\begin{array}{c}N-1\\ i\end{array}}\right) \ge c_5 H(\delta _d,2-\delta _d^{-1})^N-c_6. \end{aligned}$$

Combining these estimates and starting again from (16), we finally obtain

$$\begin{aligned}&\limsup _{d\rightarrow \infty }\frac{{{\mathbb {E}}}f_k(C_N)}{\left( {\begin{array}{c}N\\ k\end{array}}\right) } \le c_7 \limsup _{d\rightarrow \infty }\, H(\delta _d,\rho _d)^N\frac{1+c_2}{ 1+ c_5H(\delta _d,2-\delta _d^{-1})^N -c_6}\\&\qquad \qquad =c_8\limsup _{d\rightarrow \infty }\,\biggl (\frac{H(\delta _d,\rho _d)}{H(\delta _d,2-\delta _d^{-1})}\biggr )^{N}\frac{1}{(1-c_6) H(\delta _d,2-\delta _d^{-1})^{-N}+c_5}=0. \end{aligned}$$

Here we have used that

$$\begin{aligned} \frac{H(\delta ,\rho )}{H(\delta ,2-\delta ^{-1})}<1\quad \ \ \text {for }\ \rho >2-\delta ^{-1} \end{aligned}$$

by (22) and that

$$\begin{aligned} H(\delta ,2-\delta ^{-1})>1\ \quad \text{ for } \ \delta >\frac{1}{2} \end{aligned}$$

by (23). This completes the proof also in the case \(\rho >\rho _W(\delta )\).

\(\square \)

Remark

Under the assumption \(d/N\rightarrow \delta >1/2\), \(d\rightarrow \infty \), we have seen in the first part of the preceding proof that \({{\mathbb {P}}}({{\,\mathrm{pos}\,}}{\{X_1,\dots ,X_{N-1}\}}\,{\ne }\,{{\mathbb {R}}}^d)={{\mathbb {P}}}(\xi _{N-1}\le d-1)\rightarrow 1\) as \(d\rightarrow \infty \), which is a simple consequence of the weak law of large numbers. In this situation, an application of a large deviation (concentration) result for the binomial distribution in fact shows that the convergence is exponentially fast. For this, we choose d sufficiently large so that \({d}/({N-1})\ge 1/2\). Then

by Okamoto’s inequality (see [16, Thm. 2 (i)]), which applies as \({d}/({N-1})-1/2\ge 0\) (if d is large enough). Hence, if d is sufficiently large so that \({d}/({N-1})- 1/2\ge (\delta -1/2)/{\sqrt{2}}>0\), we obtain

as asserted. On the other hand, if \(({d-1})/({N-1})\le {1}/{2}\) then [16, Thm. 2 (ii)] yields

Hence, if \({d}/{N}\rightarrow \delta <{1}/{2}\) and d is large enough so that \(1/2-{d}/({N-1})\ge (1/2-\delta )/{\sqrt{2}}>0\), then

In this case, a finer analysis of the ratio (18) with corresponding lower bounds for the involved probabilities is required.
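The exponential rate mentioned in this remark can be compared with exact binomial tails. The Python sketch below assumes that the bound from [16] is used in the Hoeffding-type form \({{\mathbb {P}}}(\xi _n/n-1/2\ge c)\le \exp (-2nc^2)\) for \(c\ge 0\); this is an assumption about the displayed inequality, which we do not reproduce. With \(n=N-1\) and \(c=d/(N-1)-1/2\), the left-hand side is \({{\mathbb {P}}}(\xi _{N-1}\ge d)=1-{{\mathbb {P}}}(\xi _{N-1}\le d-1)\). The function names are ours.

```python
from math import comb, exp


def upper_tail(n: int, m: int) -> float:
    """P(xi_n >= m) for xi_n binomial with parameters n and 1/2."""
    return sum(comb(n, i) for i in range(max(m, 0), n + 1)) / 2 ** n


def tail_bound(n: int, c: float) -> float:
    """Assumed Hoeffding-type bound exp(-2 n c^2) for P(xi_n / n - 1/2 >= c)."""
    return exp(-2.0 * n * c * c)


def compare(N: int, d: int) -> tuple[float, float]:
    """Return (exact P(xi_{N-1} >= d), bound) for d / (N-1) >= 1/2."""
    n = N - 1
    c = d / n - 0.5
    if c < 0:
        raise ValueError("the bound is applied only when d/(N-1) >= 1/2")
    return upper_tail(n, d), tail_bound(n, c)


pairs = [compare(N, d) for N, d in [(51, 26), (101, 60), (201, 150), (401, 300)]]
```

In each pair the exact tail stays below the bound, and both decay quickly once \(d/(N-1)\) is bounded away from 1/2.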

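We prepare the proof of Theorem 1.8 by a lemma, which serves to establish the threshold \(\rho _S\) and to provide an upper estimate for it. By \(\mathsf{H}\) we denote the binary entropy function with base e, that is

$$\begin{aligned} \mathsf{H}(x):=-x\log x-(1-x)\log (1-x),\quad x\in [0,1] \end{aligned}$$

(with \(0\log 0:=0\)). We note that \(\mathsf{H}(0)=\mathsf{H}(1)=0\) and that \(\mathsf{H}\) attains its unique maximum, \(\log 2\), at the point 1/2. As in [9], we consider the function defined by

$$\begin{aligned} G(\delta ,\rho ):=\mathsf{H}(\delta )+\delta \,\mathsf{H}(\rho )-(1-\rho \delta )\log 2. \end{aligned}$$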

For a later application, we remark that

Lemma 6.1

For \(\delta \in (1/2,1)\), the function \(G_\delta \) defined by \(G_\delta (x):=G(\delta ,x)\) has a unique zero \(x_0\in [0,1]\). Moreover, \(x_0\in (0,\min {\{2/3,2-\delta ^{-1}\}})\).

Proof

Clearly, \(G_\delta (0)=\mathsf{H}(\delta )-\log 2<0\) since \(\delta \ne 1/2\). We have

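Hence \(2/3\) is the unique zero of \(G_\delta '\) in (0, 1), and \(G_\delta '>0\) in (0, 2/3) and \(G_\delta '<0\) in (2/3, 1). We will show that

$$\begin{aligned} \text {(a)}\ \ G_\delta (2/3)>0,\qquad \text {(b)}\ \ G_\delta (1)>0,\qquad \text {(c)}\ \ G_\delta (2-\delta ^{-1})>0, \end{aligned}$$

which then implies that \(G_\delta \) has a unique zero \(x_0\) in [0, 1] and \(x_0<2/3\) as well as \(x_0<2-\delta ^{-1}\).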

For (a) we define \(\kappa _1(\delta ):=G_\delta (2/3)\) for \(\delta \in [1/2,1]\). Then a simple calculation shows that \(\kappa _1(1/2)=1/2\log 3>0\), \(\kappa _1(1)=\log 3-\log 2>0\), and

Hence \(\kappa _1'(3/4)=0\), \(\kappa _1'>0\) on (1/2, 3/4), and \(\kappa _1'<0\) on (3/4, 1). This shows that \(\kappa _1(\delta )=G_\delta (2/3)>0\) for \(\delta \in (1/2,1)\) (with maximal value \(\kappa _1(3/4)=\log 2\)).

For (b) we consider \(\kappa _2(\delta ):=G_\delta (1)\) for \(\delta \in [1/2,1]\). We have \(\kappa _2(1/2)=\tfrac{1}{2}\log 2>0\), \(\kappa _2(1)=0\), and

Hence, \(\kappa _2'(2/3)=0\), \(\kappa _2'>0\) on (1/2, 2/3), and \(\kappa _2'<0\) on (2/3, 1). In particular, this yields \(G_\delta (1)=\kappa _2(\delta )>0\) for \(\delta \in (1/2,1)\).

For (c) we consider \(\kappa _3(\delta ):=G_\delta (2-\delta ^{-1})\) for \(\delta \in [1/2,1]\). Then

and

We have \(\kappa _3(1/2)=\kappa _3(1)=0\), \(\kappa _3'(3/4)=0\), \(\kappa _3'>0\) on (1/2, 3/4), and \(\kappa _3'<0\) on (3/4, 1). But then \(\kappa _3>0\) on (1/2, 1) and therefore \(G_\delta (2-\delta ^{-1})=\kappa _3(\delta )>0\) for \(\delta \in (1/2,1)\). \(\square \)

Now we denote the zero \(x_0\) of the function \(G_\delta \) provided by Lemma 6.1 by \(\rho _S(\delta )\). This defines the function \(\rho _S\) appearing in Theorem 1.8.
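The threshold \(\rho _S(\delta )\) can be approximated numerically by bisection. The sketch below rests on an assumption: the display defining G is not reproduced above, and the code takes \(G(\delta ,x)=\mathsf{H}(\delta )+\delta \mathsf{H}(x)-(1-\delta x)\log 2\), a form that agrees with every value stated in the proof of Lemma 6.1 (for instance \(G_\delta (0)=\mathsf{H}(\delta )-\log 2\), \(\kappa _1(1/2)=\tfrac{1}{2}\log 3\), \(\kappa _2(1)=0\), and the zero of \(G_\delta '\) at 2/3). The function names `H`, `G` and `rho_S` are chosen for illustration.

```python
from math import log


def H(x: float) -> float:
    """Binary entropy with base e, using the convention 0 log 0 := 0."""
    if x <= 0.0 or x >= 1.0:
        return 0.0
    return -x * log(x) - (1.0 - x) * log(1.0 - x)


def G(delta: float, x: float) -> float:
    """Assumed form of G; it matches the values quoted in the proof of Lemma 6.1."""
    return H(delta) + delta * H(x) - (1.0 - delta * x) * log(2.0)


def rho_S(delta: float, tol: float = 1e-12) -> float:
    """Approximate the unique zero of G(delta, .) in (0, min{2/3, 2 - 1/delta})."""
    if not 0.5 < delta < 1.0:
        raise ValueError("delta must lie in (1/2, 1)")
    lo, hi = 0.0, min(2.0 / 3.0, 2.0 - 1.0 / delta)
    # By Lemma 6.1, G is negative at lo, positive at hi, and increasing between.
    while hi - lo > tol:
        mid = 0.5 * (lo + hi)
        if G(delta, mid) < 0.0:
            lo = mid
        else:
            hi = mid
    return 0.5 * (lo + hi)


if __name__ == "__main__":
    for delta in (0.55, 0.6, 0.75, 0.9):
        print(delta, rho_S(delta))
```

Bisection is sufficient here because Lemma 6.1 guarantees a sign change and monotonicity on the bracketing interval; no derivative information is needed.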

Proof of Theorem 1.8

Again we define

Let \(\delta >1/2\) and \(0<\rho <\rho _S(\delta )\). Then \(G(\delta ,\rho )<0\). For sufficiently large d, we have \(G(\delta _d,\rho _d)<0\) as well as \(\delta _d>1/2\) and \(\rho _d<\rho _S(\delta _d)\). We assume that d is large enough in this sense. Since \(\rho _S(\delta _d)<\rho _W(\delta _d)\), we have \(N-2d+k<0\).
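The last inequality is a restatement of \(\rho _d<\rho _W(\delta _d)\). A short derivation follows, under two assumptions that are not displayed above: the normalizations \(\delta _d=d/N\) and \(\rho _d=k/d\), and the form \(\rho _W(\delta )=2-\delta ^{-1}\) for \(\delta >1/2\), which is the form used in Section 7.

```latex
\[
\rho_d<\rho_W(\delta_d)
\iff \frac{k}{d}<2-\frac{N}{d}
\iff k<2d-N
\iff N-2d+k<0 .
\]
```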

Let \(X_1,\dots ,X_N\) be i.i.d. unit vectors with distribution \(\phi \). By definition, \(C_N\) is the positive hull of \(X_1,\dots ,X_N\) under the condition that this positive hull is different from \({{\mathbb {R}}}^d\). For \(k\in \{1,\dots ,d-1\}\), choose \(1\le i_1<\ldots <i_k\le N\) and let \(M=\{X_{i_1},\dots ,X_{i_k}\}\). Then the probability that \({{\,\mathrm{pos}\,}}M\) is a face of \(C_N\), under the condition that \({{\,\mathrm{pos}\,}}{\{X_1,\dots ,X_N\}}\ne {{\mathbb {R}}}^d\), is independent of the choice of \(i_1,\dots ,i_k\), hence

Therefore,

by (7) (and with the notation used there). By Boole’s inequality,

and thus

Here,

Since \(N-2d+k-1< 0\) for all d, the sum in the last numerator of (25) is zero, hence

Using this and the identities

together with (8), we get

where (24) was used and where \(\varphi \) is in a fixed interval. Since \(G(\delta _d,\rho _d)\rightarrow G(\delta ,\rho )<0\) as \(d\rightarrow \infty \), it follows that

from which the assertions follow. \(\square \)

7 Proof of Theorem 1.9

We use the representations

and show the convergence of the quotients, under different assumptions.

To prove the first part of the theorem, we assume that \(0\le \rho <\rho _W(\delta )/2\); then \(0\le \rho <1-(2\delta )^{-1}\), and \(\delta (1-\rho )>1/2\) and \(\delta >1/2\). Hence

Using the weak law of large numbers, as in the proof of Theorem 1.7, we obtain

which completes this part of the argument.

Now we deal with the second part of the proof. Our argument requires distinguishing whether \(\rho >\rho _W(\delta )\) or not. We begin with the case \(\rho >\rho _W(\delta )\); then \(\rho \ge 2-\delta ^{-1}\). Clearly,

Since (a fortiori) \(\rho >1-(2\delta )^{-1}\), we have \(N-2d+2k>0\) for sufficiently large d, hence Lemma 3.3 yields

as \(d\rightarrow \infty \), and the last denominator is positive. Hence, if d is large enough (which is always assumed in the following), there are constants \(C_1,C_2\), independent of d, such that

where

We have \(K(a,0)=1\), and also \(K(a,2-a^{-1})=1\) if \(a\ge 1/2\). We write \(K_a(b):=K(a,b)\). Then

Thus, for any \(a\in (0,1)\), we have \(K(a,b)<1\) for all \(b>2-a^{-1}\) in (0, 1). Since \(\rho >2-\delta ^{-1}\), we deduce that \(K(\delta ,\rho )<1\) and hence \(K(\delta _d,\rho _d)\le c <1\) for all sufficiently large d, with c independent of d. It follows that

Now we suppose that \(\rho _W(\delta )/2 < \rho \le \rho _W(\delta )\); then \(1-(2\delta )^{-1}<\rho \le 2-\delta ^{-1}\). Since \(\rho >0\), we have \(\delta >1/2\), and furthermore \(\delta (1-\rho )<1/2\). We use repeatedly that

We note that still \(N-2d+2k>0\) for sufficiently large d, so that (28) can be applied. It yields

Here and below, \(C_m\) denotes a positive constant independent of d.

To estimate the last denominator, we can again use Lemma 3.3, since \(\delta >1/2\) and hence \(2(N-d-1)<N\), if d is large enough, to get

with g defined by (21). As already observed, \(g(1/2)=1\) and \(g(a)>1\) for \(a\in [0,1]\setminus \{1/2\}\). Since \(\delta >1/2\), we have \(g(\delta )>1\) and hence \(g(\delta _d)\ge c>1\) for sufficiently large d, with c independent of d. It follows that \(g(\delta _d)^{-N}\rightarrow 0\) as \(d\rightarrow \infty \). Finally, we observe that

since \(\delta _d(1-\rho _d)\rightarrow \delta (1-\rho )<1/2\) and \(g(\delta (1-\rho ))>1\). Thus, (29) is obtained again.

Data availability

Data sharing not applicable to this article as no datasets were generated or analysed during the current study.

References

1. Amelunxen, D., Lotz, M., McCoy, M.B., Tropp, J.A.: Living on the edge: phase transitions in convex programs with random data. Inf. Inference 3(3), 224–294 (2014)

2. Baryshnikov, Yu.M., Vitale, R.A.: Regular simplices and Gaussian samples. Discrete Comput. Geom. 11(2), 141–147 (1994)

3. Bonnet, G., Chasapis, G., Grote, J., Temesvari, D., Turchi, N.: Threshold phenomena for high-dimensional random polytopes. Commun. Contemp. Math. 21(5), #1850038 (2019)

4. Bonnet, G., Kabluchko, Z., Turchi, N.: Phase transition for the volume of high-dimensional random polytopes. Random Struct. Algorithms 58(4), 648–663 (2021)

5. Bonnet, G., O’Reilly, E.: Facets of spherical random polytopes (2019). arXiv:1908.04033

6. Cover, T.M., Efron, B.: Geometrical probability and random points on a hypersphere. Ann. Math. Statist. 38, 213–220 (1967)

7. Donoho, D., Tanner, J.: Observed universality of phase transitions in high-dimensional geometry, with implications for modern data analysis and signal processing. Philos. Trans. R. Soc. Lond. Ser. A Math. Phys. Eng. Sci. 367(1906), 4273–4293 (2009)

8. Donoho, D.L., Tanner, J.: Counting faces of randomly projected polytopes when the projection radically lowers dimension. J. Am. Math. Soc. 22(1), 1–53 (2009)

9. Donoho, D.L., Tanner, J.: Counting the faces of randomly-projected hypercubes and orthants, with applications. Discrete Comput. Geom. 43(3), 522–541 (2010)

10. Dyer, M.E., Füredi, Z., McDiarmid, C.: Volumes spanned by random points in the hypercube. Random Struct. Algorithms 3(1), 91–106 (1992)

11. Gatzouras, D., Giannopoulos, A.: Threshold for the volume spanned by random points with independent coordinates. Isr. J. Math. 169, 125–153 (2009)

12. Grünbaum, B.: Grassmann angles of convex polytopes. Acta Math. 121, 293–302 (1968)

13. Hörrmann, J., Hug, D., Reitzner, M., Thäle, C.: Poisson polyhedra in high dimensions. Adv. Math. 281, 1–39 (2015)

14. Hug, D., Schneider, R.: Random conical tessellations. Discrete Comput. Geom. 56(2), 395–426 (2016)

15. Klar, B.: Bounds on tail probabilities of discrete distributions. Probab. Eng. Inf. Sci. 14(2), 161–171 (2000)

16. Okamoto, M.: Some inequalities relating to the partial sum of binomial probabilities. Ann. Inst. Stat. Math. 10, 29–35 (1958)

17. Pivovarov, P.: Volume thresholds for Gaussian and spherical random polytopes and their duals. Stud. Math. 183(1), 15–34 (2007)

18. Schneider, R., Weil, W.: Stochastic and Integral Geometry. Probability and its Applications (New York). Springer, Berlin (2008)

19. Shiryaev, A.N.: Probability. vol. 1. Graduate Texts in Mathematics, vol. 95. Springer, New York (2016)

20. Vershik, A.M., Sporyshev, P.V.: Asymptotic behavior of the number of faces of random polyhedra and the neighborliness problem. Selecta Math. Soviet. 11(2), 181–201 (1992)

Acknowledgements

We are very grateful to the anonymous referees, whose hints allowed us in some cases to give shorter proofs and to obtain stronger results.

Funding

Open Access funding enabled and organized by Projekt DEAL.

Additional information

Editor in Charge: Kenneth Clarkson

Publisher's Note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Appendix

Before Theorem 1.7, we claimed that the occurrence of the same threshold in the work [9] and in our Theorem 1.7 may be unexpected. An anonymous referee suggested the following explanation. We quote it verbatim (but adding bibliographic information): “Here is an attempt of explanation of this coincidence. Donoho and Tanner [9] consider projections of orthants on random uniform subspaces. By the same argument as in the paper of Baryshnikov and Vitale [2], the expected number of faces does not change if random uniform projection is replaced by applying a Gaussian random matrix. Since the orthant is the positive hull of the standard basis, it follows that instead of the Donoho–Tanner cones one may consider positive hulls of N i.i.d. standard Gaussian random variables in \({{\mathbb {R}}}^d\). Thus, the difference between the Cover–Efron cones studied here and the Donoho–Tanner cones is the conditioning on the event that the cone is not equal to \({{\mathbb {R}}}^d\). (...) Now let us look at the case \(\delta >1/2\) in Theorem 1.7. Then, N is between d and \((2-\varepsilon )d\), which means that the probability that the positive hull of the vectors \(X_1,\dots ,X_N\) is \({{\mathbb {R}}}^d\) goes to 0 exponentially fast. So, both models of cones differ just on an event of exponentially small probability and are equal otherwise. Moreover, the events of exponentially small probability make no contribution to the expected f-vector because on this event the Donoho–Tanner cone is \({{\mathbb {R}}}^d\) and \(f_k=0\) for all \(k<d\). The case \(\delta <1/2\) is more difficult to explain.” (end of quotation) The referee then sketches an argument for this case which, in the referee's opinion, is not rigorous, but makes the result quite natural.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution 4.0 International License, which permits use, sharing, adaptation, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if changes were made. The images or other third party material in this article are included in the article’s Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by/4.0/.

About this article

Cite this article

Hug, D., Schneider, R. Threshold Phenomena for Random Cones. Discrete Comput Geom 67, 564–594 (2022). https://doi.org/10.1007/s00454-021-00323-2

DOI: https://doi.org/10.1007/s00454-021-00323-2