Abstract

We present new consistent goodness-of-fit tests for exponential distribution, based on the Desu characterization. The test statistics represent the weighted \(L^2\) and \(L^{\infty }\) distances between appropriate V-empirical Laplace transforms of random variables that appear in the characterization. In addition, we perform an extensive comparison of Bahadur efficiencies of different recent and classical exponentiality tests. We also present the empirical powers of new tests.

Similar content being viewed by others

Avoid common mistakes on your manuscript.

1 Introduction

To justify the use of more complicated models for lifetime data, one of the first steps is to reject the most simple one, the exponential. For this purpose numerous tests have been developed and are available in the literature.

The classical, and most commonly used procedure, is to apply one of universal time-honored goodness-of-fit tests based on empirical distribution function, such as Kolmogorov–Smirnov, Cramér–von Mises, Anderson–Darling. To make them applicable to the case of a composite null hypothesis, the Lilliefors modification with estimated rate parameter is frequently used.

Another approach is to use tests tailor-made for testing exponentiality. Such tests usually employ some special properties of the exponential distribution. Various integral transform related properties have been exploited: characteristic functions (see e.g. [17, 19, 20]); Laplace transforms (see e.g. [18, 24, 30]); and other integral transforms (see e.g. [25, 29]). Other possible properties include maximal correlations (see [15, 16, 48]), entropy (see [1]), etc.

An important type of such properties are the characterizations of the exponential distribution. Many of them, being relatively simple, are very suitable for construction of goodness-of-fit tests. This is especially true for the equidistribution-type characterizations. Since the equality in distribution can be expressed in many ways (equality of distribution functions, densities, integral transforms, etc.), many different types of test statistics are available. Tests that use U-empirical and V-empirical distribution functions, of integral-type (integrated difference) and supremum-type, can be found in Nikitin and Volkova [42], Volkova [53], Jovanović et al. [23], Milošević and Obradović [33], Milošević [31], Nikitin and Volkova [43]. Weighted integral-type and \(L^2\)-type tests that use U- or V-empirical Laplace transforms are presented in Milošević and Obradović [32] and in Cuparić et al. [11].

The common approach to explore the quality of tests is to find their power against different alternatives. Several papers are devoted to comparative power studies of exponentiality tests (see e.g. [3, 20, 51]).

Another popular choice for the quality assessment is the asymptotic efficiency. In this regard, however, no extensive study has been done. In this paper our aim is to compare the exponentiality tests using the approximate Bahadur efficiency (see [5]).

We opt for the approximate Bahadur efficiency since it is applicable to asymptotically non-normally distributed test statistics, and moreover it can distinguish tests better than some other types of efficiencies like Pitman or Hodges–Lehmann (see [36]).

Consider the setting of testing the null hypothesis \(H_0:\theta \in \varTheta _0\) against the alternative \(H_1:\theta \in \varTheta _1\). Let us suppose that for a test statistic \(T_n\), under \(H_0\), the limit \(\lim _{n\rightarrow \infty }P\{T_n\le t\}=F(t)\), where F is non-degenerate distribution function, exists. Further, suppose that \(\lim _{t\rightarrow \infty }t^{-2}\ln (1-F(t))=-\frac{a_T}{2}\), and that the limit in probability \(P_\theta \) \(\lim _{n\rightarrow \infty }T_n=b_T(\theta )>0\), exists for \(\theta \in \varTheta _1\). The relative approximate Bahadur efficiency with respect to another test statistic \(V_n\) is

where

is the approximate Bahadur slope of \(T_n\). Its limit when \(\theta \rightarrow 0\) is called the local approximate Bahadur efficiency.

The tests we consider may be classified into three groups according to their limiting distributions: asymptotically normal ones; those whose asymptotic distribution coincides with the supremum of some Gaussian process; and those whose limiting distribution is an infinite linear combination of independent and identically distributed (i.i.d.) chi-squared random variables.

For the first group of tests, the coefficient \(a_T\) is the inverse of the limiting variance. For the second, it is the inverse of the supremum of the covariance function of the limiting process (see [28]). For the third group, \(a_T\) is the inverse of the largest coefficient in the corresponding linear combination (see [55]), which is also equal to the largest eigenvalue of some integral operator.

The goal of this paper is twofold. First, we propose two new classes of characterization based exponentiality tests. One of them is of weighted \(L^2\)-type, and the other, for the first time, is based on \(L^{\infty }\) distance between two V-empirical Laplace transforms of the random variables that appear in the characterization.

Secondly, we perform an extensive efficiency comparison. Unlike for the remaining two, for the third group of tests, the efficiencies have not been calculated so far. This is due to the fact that the largest eigenvalue in question usually cannot be obtained analytically. We overcome this problem using a recently proposed approximation procedure from Božin et al. [9].

The rest of the paper is organized as follows. In Sect. 2 we propose new tests and explore their asymptotic properties. In Sect. 3 we give a partial review of test statistics for testing exponentiality, together with their Bahadur slopes. Section 4 is devoted to the comparison of efficiencies. In Sect. 5 we present the powers of new tests. Appendices A and B contain all the proofs, while the tables with Bahadur efficiencies are given in Appendix C.

2 New test statistics

In this section we present two new exponentiality tests based on the following characterization from Desu [12].

Characterization 1

Let \(X_1,...,X_m\) be independent copies of a random variable X with pdf f(x). Then for each m, X and \(m\cdot \min (X_1,..., X_m)\) have the same distribution if and only if for some \(\lambda >0\) \(f(x)=\lambda e^{-\lambda x},\) for \(x\ge 0\).

It is accustomed for goodness-of-fit testing purposes to use this characterization for \(m=2\) (see e.g. [37, 52]). Although some distributions different from exponential for which \(2\min (X_1,X_2)\) and \(X_1\) are equally distributed exist and can be constructed (see [21]), they are of no practical interest and this special case is often referred to as Desu’s characterization (see [38, Theorem 3]).

Let \(X_1,X_2,...,X_n\) be a random sample from a non-negative continuous distribution. To test the null hypothesis that the sample comes from the exponential distribution \({\mathcal {E}}(\lambda )\), with an unknown \(\lambda >0\), we examine the difference \({\mathcal {L}}^{(1)}_n(t)-{\mathcal {L}}^{(2)}_n(t)\), of V-empirical Laplace transforms of X and \(2\min (X_1,X_2)\).

Clearly, if null hypothesis is true, the difference \({\mathcal {L}}^{(1)}_n(t)-{\mathcal {L}}^{(2)}_n(t)\) will be small for each t. Taking this into account we propose the following two classes of test statistics, with their large values considered significant:

where \(Y_i=\frac{X_i}{{\bar{X}}_n}\), \(i=1,2,\ldots ,n\), is the scaled sample.

The sample is scaled to make the test statistic ancillary for the parameter \(\lambda \) and the purpose of the tuning parameter a is to magnify different types of deviations from the null distribution.

Remark 1

The supremum in the expression for test statistic \(L^{{\mathcal {D}}}_{n,a}\) is calculated using greed search over fixed interval [0, A] with suitably chosen large value of A.

2.1 Asymptotic properties under \(H_0\)

Notice that \(M^{{\mathcal {D}}}_{n,a}\) is a V-statistic with estimated parameter \({\hat{\lambda }}\), i.e. it can be represented in the form

where H is

where \(\varPi (4)\) is the set of all permutations of \(\{1,2,3,4\}\), and \({\hat{\lambda }}_n\) is the reciprocal sample mean.

Similarly, for a fixed t, the expression in the absolute parenthesis of the statistics \(L^{{\mathcal {D}}}_{n,a}\) is a V-statistics that can be represented as

where

is a symmetric function of its arguments.

From the law of large number for V-statistics with estimated parameters (see [22]), both statistics converge in probability to zero if and only if the equidistribution in the Desu’s characterization is satisfied, which happens under \(H_0\). Under \(H_1\), when the equidistribution does not hold, both test statistics converge to a positive number, from where follows their consistency.

The asymptotic behaviour of \(M^{{\mathcal {D}}}_{n,a}\) is given in the following theorem.

Theorem 2

Let \(X_1,..., X_n\) be i.i.d. with exponential distribution. Then

where \(\delta _k, k=1,2,...,\) is the sequence of eigenvalues of the integral operator A defined by \(Aq(x)=\int _{0}^{\infty }{\tilde{h}}_2(x,y;a)q(y)dF(y)\), with \({\tilde{h}}_2(x,y)=E(H(\cdot )|X_1=x,X_2=y)\) being the second projection of kernel \(H(X_1,X_2,X_3,X_4;a,\lambda )\), and \(W_{k},\;k=1,2,...,\) are independent standard normal variables.

The asymptotic behaviour of \(L^{{\mathcal {D}}}_{n,a}\) is given in the following theorem.

Theorem 3

Let \(X_1,..., X_n\) be i.i.d. with exponential distribution. Then

where \(\eta (t)\) is a centered Gaussian process with the covariance function

2.2 Approximate Bahadur slope

Let \({\mathcal {G}}=\{G(x;\theta ),\;\theta >0\}\) with corresponding densities \(\{g(x;\theta )\}\) be a family of alternative distribution functions with finite expectations, such that \(G(x,\theta )=1-e^{-\lambda x}\), for some \(\lambda >0\), if and only if \(\theta =0\), and the regularity conditions for V-statistics with weakly degenerate kernels from Nikitin and Peaucelle [39, Assumptions WD] are satisfied.

The approximate local Bahadur slopes of \(M^{{\mathcal {D}}}_{n,a}\) and \(L_{n,a}^{{\mathcal {D}}}\), for close alternatives, are derived in the following theorem.

Theorem 4

For the statistics \(M^{{\mathcal {D}}}_{n,a}\) and \(L^{{\mathcal {D}}}_{n,a}\) and a given alternative density \(g(x,\theta )\) from \({\mathcal {G}},\) the local Bahadur approximate slopes are given by

-

(1)

$$\begin{aligned} c^*_M(\theta )=\delta _1^{-1}\int \limits _{0}^{\infty }\int \limits _{0}^{\infty }{\tilde{h}}_2(x,y)g'_{\theta }(x;0)g'_{\theta }(y;0)dxdy\cdot \theta ^2+o(\theta ^2), \theta \rightarrow 0, \end{aligned}$$

where \(\delta _1\) is the largest eigenvalue of the integral operator A with kernel \({\tilde{h}}_2\);

-

(2)

$$\begin{aligned} c^*_L(\theta )=\frac{1}{\sup _tK(t,t)}\sup \Big ({2}\int _{0}^{\infty }{\tilde{\varphi }}_1(x;t)g'_{\theta }(x;0)dx\Big )^2\cdot \theta ^2+o(\theta ^2), \theta \rightarrow 0, \end{aligned}$$

where \({\tilde{\varphi }}_1(x;t)=E(\varPhi (\cdot )|X_1=x)\) with \(\varPhi \) being defined in (2).

Proof

See Appendix A. \(\square \)

To calculate the slope of \(M^{{\mathcal {D}}}_{n,a}\), one needs to find the largest eigenvalue \(\delta _1\). Since it cannot be obtained analytically, we use the approximation introduced in Božin et al. [9]. The procedure utilizes the fact that \(\delta _1\) is the limit of the sequence of the largest eigenvalues of linear operators defined by \((m+1)\times (m+1)\) matrices \(M^{(m)}=||m_{i,j}^{(m)}||,\; 0\le i\le m, 0\le j\le m\), where

when m tends to infinity and B is constant chosen in such way that F(B) approaches 1.

3 Other exponentiality tests—a partial review

In this section we present test statistics of some classical and some recent goodness-of-fit tests for the exponential distribution, along with their Bahadur local approximate slopes. For some of the test statistics, the Bahadur local approximate slope (or exact slope which locally coincides with the approximate one) is available in the literature and for the others we derive them in Appendix B.

As indicated in Introduction, we classify the tests according to their asymptotic distribution. The first group contains asymptotically normally distributed statistics.

-

The test proposed by Epps and Pulley [13] based on the expected value of the exponential density, with test statistic

$$\begin{aligned} EP_n=\sqrt{48}\bigg (\frac{1}{n}\sum \limits _{j=1}^ne^{-\frac{X_j}{{\overline{X}}_n}}-\frac{1}{2}\bigg ). \end{aligned}$$Its approximate Bahadur slope is

$$\begin{aligned} c^*_{EP}(\theta )=3\Bigg (\int \limits _{0}^\infty \Big (4e^{-x}+x\Big )g'_{\theta }(x;0)dx\Bigg )^2\cdot \theta ^2+o(\theta ^2) \end{aligned}$$ -

The score test for the Weibull shape parameter proposed by Cox and Oakes [10]

$$\begin{aligned} CO_n=1+\frac{1}{n}\displaystyle \sum _{i=1}^n\bigg (1-\frac{X_i}{{\overline{X}}_n}\bigg )\ln \frac{X_i}{{\overline{X}}_n}. \end{aligned}$$Its approximate slope is

$$\begin{aligned} c^*_{CO}(\theta )=\frac{6}{\pi ^2}\Bigg (\int \limits _{0}^\infty \Big ((1-x)\ln x+(1-\gamma )x\Big )g'_{\theta }(x;0)dx\Bigg )^2\cdot \theta ^2+o(\theta ^2) \end{aligned}$$ -

A test based on Gini coefficient from Gail and Gastwirth [14]

$$\begin{aligned} G^*_n=\Big |\frac{1}{2n(n-1){\overline{X}}_n}\sum _{i,j=1}^n|X_i-X_j|-\frac{1}{2}\Big |. \end{aligned}$$The approximate slope is (see [40])

$$\begin{aligned} c^*_{G}(\theta )=12\Bigg (\int \limits _0^\infty \Big (2e^{-x}+\frac{x}{2}\Big )g'_{\theta }(x;0)dx\Bigg )^2\theta ^2+o(\theta ^2). \end{aligned}$$ -

The score test for the gamma shape parameter proposed by Moran [35] and Tchirina [49]

$$\begin{aligned} MO_n=\Big |\gamma +\frac{1}{n}\sum _{i=1}^n\ln \frac{X_i}{{\overline{X}}_n}\Big |. \end{aligned}$$Its approximate slope is (see [49])

$$\begin{aligned} c^*_{MO}(\theta )=\Big (\frac{\pi ^2}{6}-1\Big )^{-1}\Bigg (\int \limits _0^\infty (\ln x-x)g'_{\theta }(x;0)dx\Bigg )^2\theta ^2+o(\theta ) \end{aligned}$$ -

Characterization based integral-type tests Let the relation

$$\begin{aligned} \omega _1(X_1,...,X_m)\overset{d}{=}\omega _2(X_1,...,X_p), \end{aligned}$$(5)where \(X_1,\ldots ,X_{\max \{m,p\}}\) are i.i.d. random variables, characterize the exponential distribution. Then the following types of test statistics have been proposed:

$$\begin{aligned} I_n=\int _{0}^{\infty }\Big (H_n^{(\omega _1)}(t)-H_n^{(\omega _2)}(t)\Big )dF_n(t), \end{aligned}$$where \(H_n^{(\omega _1)}(t)\) and \(H_n^{(\omega _2)}(t)\) are V-empirical distribution functions of \(\omega _1\) and \(\omega _2\), respectively, and \(F_n\) is the empirical distribution function, and

$$\begin{aligned} J_{n,a}=\int _{0}^{\infty }\Big (L_n^{(\omega _1)}(t)-L_n^{(\omega _2)}(t)\Big )e^{-at}dt, \end{aligned}$$(6)where \(L_n^{(\omega _1)}(t)\) and \(L_n^{(\omega _2)}(t)\) are V-empirical Laplace transforms of \(\omega _1\) and \(\omega _2\), respectively, applied to the scaled sample, and \(a>0\) is the tuning parameter.

From these groups of tests we take the following representatives

-

\(I_{n,k}^{(1)}\), proposed in Jovanović et al. [23], based on the Arnold and Villasenor characterization, where \(\omega _1(X_1,...,X_k)=\max (X_1,...,X_k)\) and

\(\omega _2(X_1,...,X_k)=X_1+\frac{X_2}{2}+\cdots \frac{X_k}{k}\) (see [4, 34]);

-

\(I_{n}^{(2)}\), proposed in Milošević and Obradović [33], based on the Milošević-Obradović characterization, where \(\omega _1(X_1,X_2)=\max (X_1,X_2)\) and

\(\omega _2(X_1,X_2,X_3)=X_1+\min (X_2,X_3)\) (see [34]);

-

\(I_n^{(3)}\), proposed in Milošević [31], based on the Obradović characterization, where \(\omega _1(X_1,X_2,X_3)=\max (X_1,X_2,X_3)\) and

\(\omega _2(X_1,X_2,X_3,X_4)=X_1+\mathrm{med}(X_2,X_3,X_4)\) (see [45]);

-

\(I_n^{(4)}\), proposed in Volkova [53], based on the Yanev-Chakraborty characterization, where \(\omega _1(X_1,X_2,X_3)=\max (X_1,X_2,X_3)\) and

\(\omega _2(X_1,X_2,X_3)=\frac{X_1}{3}+\max (X_2,X_3)\) (see [54]);

-

\(I_n^{{\mathcal {D}}}\) based on the Desu characterization 1;

-

\(I_n^{{\mathcal {P}}}\) based on the Puri–Rubin characterization, where \(\omega _1(X_1)=X_1\) and

\(\omega _2(X_1,X_2)=|X_1-X_2|\) (see [46]);

-

\(J_{n,a}^{{\mathcal {D}}}\), proposed in Milošević and Obradović [32], based on the Desu characterization 1;

-

\(J_{n,a}^{{\mathcal {P}}}\), proposed in Milošević and Obradović [32], based on the Puri–Rubin characterization.

Since these statistics are very similar, we give general expressions for their Bahadur approximate slopes.

Statistics \(I_n\) are non-degenerate V-statistics with some kernel \(\varPsi \) and their approximate slope is (see [39])

$$\begin{aligned} c^{*}_I(\theta )=\frac{1}{{\mathrm{Var}}\psi ({\mathrm{X}}_1)}\Big (\int \psi (x)g'_{\theta }(x;0)dx\Big )^2 \cdot \theta ^2 + o(\theta ^2), \end{aligned}$$(7)where \(\psi (x)=E\varPsi (\cdot |X_1=x)\).

Statistics \(J_{n,a}\) are, due to the sample scaling, non-degenerate V-statistics with estimated parameters. Nevertheless, the formula (7) is applicable here also, with \(\varPsi \) being the kernel of the test statistic as if the scaling were done using the real value of \(\lambda \) (see [32] for details).

-

The second group contains statistics whose limiting distribution is the supremum of some centered Gaussian process.

-

Lilliefors modification of the Kolmogorov–Smirnov test

$$\begin{aligned} KS_n=\sup |F_n(t)-(1-e^{-\frac{t}{{\bar{X}}_n}})|. \end{aligned}$$The approximate slope is (see [41])

$$\begin{aligned} \begin{aligned} c^*_{KS}(\theta )\!&=\!\frac{1}{\sup \limits _{x\ge 0}(e^{-2x}(e^x-x^2-1))}\sup \limits _{x\ge 0}\Bigg (xe^x\int \limits _0^\infty G'_{\theta }(u;0)du-G(x;0)dx\Bigg )^2\!\cdot \! \theta ^2\\&\quad +o(\theta ^2). \end{aligned} \end{aligned}$$ -

Characterization based supremum-type tests

Using the characterizations of the type (5), another proposed type of test statistics is

$$\begin{aligned} D_n=\sup _{t>0}\Big |H_n^{(\omega _1)}(t)-H_n^{(\omega _2)}(t)\Big |. \end{aligned}$$From this group of tests we take the following representatives:

\(D_{n,k}^{(1)}\), proposed in Jovanović et al. [23]; \(D_{n}^{(2)}\), proposed in Milošević and Obradović [33]; \(D_n^{(3)}\), proposed in Milošević [31]; \(D_n^{(4)}\), proposed in Volkova [53], based on the same characterizations as for the respective integral-type statistics, \(D_{n}^{{\mathcal {D}}}\) based on Desu characterization 1 and \(D_{n}^{{\mathcal {P}}}\) based on Puri–Rubin characterization [46]. Statistics from this group are asymptotically distributed as a supremum of some non-degenerate V-empirical processes, and the expression in the absolute parenthesis, for a fixed t is a V-statistic with some kernel \(\varPsi (X_1,\ldots ,X_{\max \{m,p\}};t)\). Their approximate slopes is (see [37])

$$\begin{aligned} c^{\star }_D(\theta )=\frac{1}{\sup _{t>0}\mathrm{Var}\psi (X_1;t)}\sup _{t>0}\Big (\int \psi (x;t)g'_{\theta }(x;0)dx\Big )^2 \cdot \theta ^2 + o(\theta ^2), \end{aligned}$$where \(\psi (x;t)=E\varPsi (\cdot ;t |X_1=x)\).

The third group contains statistics whose limiting distribution is an infinite linear combination of i.i.d. chi-squared random variables. Each of the presented statistics, except the last one, is of the form

where \(U_n(t;{\hat{\mu }})\) is an empirical process of order 1 with estimated parameter. It also can be viewed as a weakly degenerate V-statistics with estimated parameters, with some kernel \(\varPhi (X_1,X_2;{\hat{\mu }})\), where \(\mu =E_{\theta }X_1\). Then, the Bahadur approximate slope of such statistic is

where \(\delta _\varPhi \) is the largest eigenvalue of the integral operator

where \(K(s,t)=\lim _{n\rightarrow \infty }n\mathrm{Cov}(U_n(t),U_n(s))\) is the limiting covariance function.

Hence it suffices to present only the kernels and limiting covariance functions of test statistics. We consider the following tests:

-

Lilliefors modification of the Cramér–von Mises test

$$\begin{aligned} \omega ^2_n=\int _{0}^{\infty }(F_n(t)-(1-e^{-\frac{t}{{\bar{X}}_n}}))^2\frac{1}{{\bar{X}_n}}e^{-\frac{t}{{\bar{X}_n}}}dt. \end{aligned}$$Its kernel is

$$\begin{aligned} \varPhi _{\omega ^2}(x,y;\mu (\theta ))=e^{-\max (\frac{x}{\mu (\theta )},\frac{y}{\mu (\theta )})}-e^{-\frac{x}{\mu {\theta }}}-e^{-\frac{y}{\mu (\theta )}}+\frac{1}{2}(e^{-2\frac{x}{\mu (\theta )}}+e^{-2\frac{y}{\mu (\theta )}})+\frac{1}{3}; \end{aligned}$$and the covariance function is

$$\begin{aligned} K_{\omega ^2}(s,t)&=e^{-\frac{3}{2}s-\frac{3}{2}t}(e^{\min (s,t)}-1-st). \end{aligned}$$ -

Lilliefors modification of the Anderson–Darling test

$$\begin{aligned} {\mathrm{AD}}_n=\int _{0}^{\infty }\frac{(F_n(t)-(1-e^{-\frac{t}{{\bar{X}}_n}}))^2}{{\bar{X}}_n(1-e^{-\frac{t}{{\bar{X}}_n}})}dt. \end{aligned}$$Its kernel is

$$\begin{aligned} \varPhi _{\mathrm{AD}}(x,y;\mu (\theta ))=\frac{x}{\mu (\theta )}+\frac{y}{\mu (\theta )}-1-\ln (e^{\max (\frac{x}{\mu (\theta )},\frac{y}{\mu (\theta )})}-1). \end{aligned}$$and the covariance function is

$$\begin{aligned} K_{AD}(s,t)&=\frac{e^{-s-t}(e^{\min (s,t)}-1-st)}{\sqrt{(1-e^{-s})(1-e^{-t})}}. \end{aligned}$$ -

A test proposed by Baringhaus and Henze [7]

$$\begin{aligned} {\mathrm{BH}}_n=\int _0^\infty \Big ((1+t)\psi '_n(t)+\psi _n(t)\Big )^2e^{-at}dt, \end{aligned}$$where \(\psi _n(t)\) is the empirical Laplace transform. Its kernel is

$$\begin{aligned} \varPhi _{\mathrm{BH}}(x,y;\mu (\theta ))=\frac{(1-x)(1-y)}{x+y+a\mu (\theta )}-\frac{x\mu (\theta )+y\mu (\theta )-2xy}{(a\mu (\theta )+x+y)^2}+\frac{2xy\mu (\theta )}{(a\mu (\theta )+x+y)^3}; \end{aligned}$$and the covariance function is

$$\begin{aligned} K_{BH}(s,t)&=\frac{1+s+t+2st}{(1+s+t)^3}-\frac{1}{(1+s)^2(1+t)^2}. \end{aligned}$$ -

The test proposed by Henze [17]

$$\begin{aligned} {\mathrm{HE}}_n=\int _0^\infty \Big (\psi _n(t)-\frac{1}{1+t}\Big )^2e^{-at}dt; \end{aligned}$$Its kernel is

$$\begin{aligned} \varPhi _{\mathrm{HE}}(x,y;\mu (\theta ))&\!=\!1\!+\!\frac{\mu (\theta )}{a\mu (\theta )+x+y}\!+\!ae^aEi(-a)\!+\!e^{a+\frac{x}{\mu (\theta )}}Ei\left( -\left( a\!+\!\frac{x}{\mu (\theta )}\right) \right) \\&\quad +e^{a+\frac{y}{\mu (\theta )}}Ei\left( -\left( a+\frac{y}{\mu (\theta )}\right) \right) ; \end{aligned}$$and the covariance function is

$$\begin{aligned} K_{HE}(s,t)&=\frac{s^2t^2}{(s+t+1)(s+1)^2(t+1)^2}. \end{aligned}$$ -

The test proposed by Henze and Meintanis [18]

$$\begin{aligned} W_n=\int _0^\infty \Big (\psi _n(t)-\frac{1}{1+t}\Big )^2(1+t)^2e^{-at}dt. \end{aligned}$$Its kernel is

$$\begin{aligned} \varPhi _{W}(x,y;\mu (\theta ))&=\frac{1}{a}-\frac{\mu (\theta )(\mu (\theta )(1+a)+x)}{(a\mu (\theta )+x)^2}- \frac{\mu (\theta )(\mu (\theta )(1+a)+y)}{(a\mu (\theta )+y)^2}\\&\quad +\frac{2\mu ^3(\theta )}{(a\mu (\theta )+x+y)^3}+\frac{2\mu ^2(\theta )}{(a\mu (\theta )+x+y)^2}+\frac{\mu (\theta )}{a\mu (\theta )+x+y}; \end{aligned}$$and the covariance function is

$$\begin{aligned} K_{W}(s,t)&=\frac{s^2t^2}{(s+t+1)(s+1)(t+1)}. \end{aligned}$$ -

Two tests proposed by Henze and Meintanis [19]

$$\begin{aligned} {\mathrm{HM}}_n=\int _0^\infty (s_n(t)-tc_n(t))^2\omega _{i}(t)dt, \;\;i=1,2, \end{aligned}$$where \(\omega _1(t)=e^{-at}\) and \(\omega _2(t)=e^{-at^2}\). Their kernels are

$$\begin{aligned} \begin{aligned} \varPhi _{\mathrm{HM1}}(x,y;\mu (\theta ))&=\frac{a\mu ^2(\theta )}{2(a^2\mu ^2(\theta )+(x-y)^2)}-\frac{a\mu ^2(\theta )}{2(a^2\mu ^2(\theta )+ (x+y)^2)}\\&\quad +\frac{a^2\mu ^2(\theta )-3(x-y)^2}{(a^2\mu ^2(\theta )+(x-y)^2)^3}+\frac{a^2\mu ^2(\theta )-3(x+y)^2}{(a^2\mu ^2(\theta )+(x+y)^2)^3} \\&\quad - \frac{2a\mu ^3(\theta )(x + y)}{(a^2\mu ^2(\theta ) +(x + y)^2)^2}, \end{aligned} \end{aligned}$$and

$$\begin{aligned} \begin{aligned} \varPhi _{\mathrm{HM2}}(x,y;\mu (\theta ))&=\frac{\sqrt{\pi }}{4\sqrt{a}}\Bigg (\bigg (\frac{1}{2a}-\frac{x+y}{a\mu (\theta )}-\frac{(x-y)^2}{4a^2\mu ^2(\theta )}-1\bigg )e^{-\frac{(x+y)^2}{4a\mu ^2(\theta )}}\\&\quad +\bigg (1+\frac{1}{2a}-\frac{(x-y)^2}{4a^2\mu ^2(\theta )}\bigg )e^{-\frac{(x-y)^2}{4a\mu ^2(\theta )}}\Bigg ); \end{aligned} \end{aligned}$$and the covariance function is

$$\begin{aligned} K_{HM}(s,t)&=\frac{st(s^2+t^2+1)}{(1+(s-t)^2)(1+(s+t)^2)}-\frac{st}{(1+s^2)(1+t^2)}. \end{aligned}$$ -

Characterization based \(L^2\)-type test proposed by Cuparić et al. [11].

$$\begin{aligned} M^{{\mathcal {P}}}_{n,a}=\int _{0}^{\infty }\Big (L_n^{(1)}(t)-L_n^{(2)}(t)\Big )^2e^{-at}dt. \end{aligned}$$(9)Its slope is

$$\begin{aligned} \begin{aligned} c^*_{M}(\theta )&=(2\delta _1)^{-1}\int \limits _{0}^{\infty }\int \limits _{0}^{\infty }\bigg [\frac{1}{6}e^{a-x-y}\text {Ei}(-a) \Big (a (e^x-2)(e^y-2)-e^x-e^y+4\Big )\\&\quad +\frac{1}{6}e^{-a-x-y}\Big (\text {Ei}(a) (4 a+e^x+e^y-4)-(\text {Ei}(a+x) (4 (a+x-1)+e^y)\\&\quad +\text {Ei}(a+y) (4 (a+y-1)+e^x)-4 (a+x+y-1) \text {Ei}(a+x+y))\Big )\!-\!\frac{1}{2}\\&\quad +\frac{1}{3}(e^{-x}+e^{-y})+\frac{1}{6(a+x+y)}\bigg ]g'(x;0)g'(y;0)dxdy\cdot \theta ^2+o(\theta ^2). \end{aligned} \end{aligned}$$

4 Comparison of efficiencies

In this section we calculate approximate local relative Bahadur efficiencies of test statistics introduced in Sects. 2 and 3 with respect to the likelihood ratio test (see [6]). Likelihood ratio tests are known to have optimal Bahadur efficiencies and they are therefore used as benchmark for comparison.

The alternatives we consider are the following:

-

a Weibull distribution with density

$$\begin{aligned} g(x,\theta )=e^{-x^{1+\theta }}(1+\theta )x^\theta ,\theta >0,x\ge 0; \end{aligned}$$(10) -

a gamma distribution with density

$$\begin{aligned} g(x,\theta )=\frac{x^\theta e^{-x}}{\varGamma (\theta +1)},\theta >0,x\ge 0; \end{aligned}$$(11) -

a linear failure rate (LFR) distribution with density

$$\begin{aligned} g(x,\theta )=e^{-x-\theta \frac{x^2}{2}}(1+\theta x),\theta >0,x\ge 0; \end{aligned}$$(12) -

a mixture of exponential distributions with negative weights (EMNW(\(\beta \))) with density

$$\begin{aligned} g(x,\theta )=(1+\theta )e^{-x}-\theta \beta e^{-\beta x},\theta \in \left( 0,\frac{1}{\beta -1}\right] ,x\ge 0; \end{aligned}$$

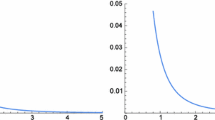

On Figs. 1, 2, 3 and 4, there are plots of local approximate Bahadur efficiencies as a function of the tuning parameter. For tests with no such parameter straight lines are drawn. To avoid too many lines on the same plot, there are three separate plots given for each alternative, each corresponding to one of the classes of tests from Sect. 3. The exact values of local Bahadur efficiencies are available in Table 3 in Appendix C.

As a rule we can notice that, in the class of supremum-type statistics, new test \(L^{{\mathcal {D}}}_{n,a}\) is by far the most efficient. On the other hand, supremum-type test based on characterizations that use U-empirical distribution functions, are the least efficient among all considered tests.

The impact of the tuning parameter, for the tests that have got it, is also visible in all the figures. It is interesting to note that this impact is different for various tests in terms of monotonicity of the plotted functions.

Another general conclusion is that the ordering of the tests depends on the alternative and that there is no most efficient test to be recommended in any situation.

The CO and MO tests are known to be locally optimal for Weibull and gamma alternatives, respectively, so they are the most efficient in these cases. However, there are quite a few other tests that perform very well in there cases. In the case of the LFR alternative, the most efficient are EP and \(\hbox {HM}^{(1)}_{n,a}\) and \(\hbox {HM}^{(2)}_{n,a}\). It it interesting that for other alternatives the latter two tests are among the least efficient.

In the case of the EMNW alternative, the integral and supremum-type tests based on the characterizations via Laplace transforms, as well as most of the \(L^2\) test reach, for some value of the tuning parameter, an efficiency close to one.

5 Powers of new tests

In this section we present the simulated powers of our new tests against different alternatives. The list of alternatives is chosen to be in concordance with the papers with extensive power comparison studies. The alternatives are:

-

a Weibull \(W(\theta -1)\) distribution with density (10);

-

a gamma \(\varGamma (\theta -1)\) distribution with density (11);

-

a half-normal HN distribution with density

$$\begin{aligned} g(x)=\sqrt{\frac{2}{\pi }}e^{-\frac{x^2}{2}}, x\ge 0; \end{aligned}$$ -

a uniform U distribution with density

$$\begin{aligned} g(x)=1, 0\le x\le 1; \end{aligned}$$ -

a Chen’s \(CH(\theta )\) distribution with density

$$\begin{aligned} g(x,\theta )=2\theta x^{\theta -1}e^{x^\theta -2(1-e^{x^\theta })}, x\ge 0; \end{aligned}$$ -

a linear failure rate \(LF(\theta )\) distribution with density (12);

-

a modified extreme value \(EV(\theta )\) distributions with density

$$\begin{aligned} g(x,\theta )=\frac{1}{\theta }e^{\frac{1-e^x}{\theta }+x}, x\ge 0; \end{aligned}$$ -

a log-normal \(LN(\theta )\) distribution with density

$$\begin{aligned} g(x,\theta )=\frac{1}{x\sqrt{2\pi \theta ^2}}e^{-\frac{(\ln x)^2}{2\theta ^2}}, x\ge 0; \end{aligned}$$ -

a Dhillon \(DL(\theta )\) distribution with density

$$\begin{aligned} g(x,\theta )=\frac{\theta +1}{x+1}(\ln (x+1))^\theta e^{-(\ln (x+1))^{\theta +1}}, x\ge 0. \end{aligned}$$

The powers, for aforementioned alternatives, and different choices of the tuning parameter are estimated using the Monte Carlo procedure with 10000 replicates at the level of significance 0.05.

The results are presented in Tables 1 and 2. In addition, we provide the bootstrap expected power estimate for data-driven optimal value of the tuning parameter (see [2] for details). Some steps to overcome "random nature" of selected parameters are made in Tenreiro [50], but some questions still remain open and are planned for future research.

We can see from tables that all the sizes of our tests are equal to the level of significance, and that the powers range from reasonable to high. In comparison to the other exponentiality tests (see [11, 51]) we can conclude that our tests are serious competitors to the most powerful classical and recent exponentiality tests.

6 Conclusion

In this paper we proposed two new consistent scale-free tests for the exponential distribution. In addition, we performed an extensive comparison of efficiency of recent and classical exponentiality tests.

We showed that our tests are very efficient and powerful and can be considered as serious competitors to other high quality exponentilaity tests.

From the comparison study, the general conclusion is that there is no uniformly best test, since the performance is different for different alternatives. However, the tests based on integral transforms, due to their flexibility because of the tuning parameter, generally tend to have higher efficiency, and they are recommended to use.

References

Alizadeh Noughabi, H., Arghami, N.R.: Testing exponentiality based on characterizations of the exponential distribution. J. Stat. Comput. Simul. 81(11), 1641–1651 (2011)

Allison, J., Santana, L.: On a data-dependent choice of the tuning parameter appearing in certain goodness-of-fit tests. J. Stat. Comput. Simul. 85(16), 3276–3288 (2015)

Allison, J., Santana, L., Smit, N., Visagie, I.: An ‘apples to apples’ comparison of various tests for exponentiality. Comput. Stat. 32(4), 1241–1283 (2017)

Arnold, B.C., Villasenor, J.A.: Exponential characterizations motivated by the structure of order statistics in samples of size two. Stat. Prob. Lett. 83(2), 596–601 (2013)

Bahadur, R.R.: On the asymptotic efficiency of tests and estimates. Sankhyā Indian J. Stat. 22(3/4), 229–252 (1960)

Bahadur, R.R.: Rates of convergence of estimates and test statistics. Ann. Math. Stat. 38(2), 303–324 (1967)

Baringhaus, L., Henze, N.: A class of consistent tests for exponentiality based on the empirical Laplace transform. Ann. Inst. Stat. Math. 43(3), 551–564 (1991)

Billingsley, P.: Convergence of Probability Measures. Wiley (1968)

Božin, V., Milošević, B., Nikitin, Ya.. Yu.., Obradović, M.: New characterization based symmetry tests. Bull. Malays. Math. Sci. Soc. 43(1), 297–320 (2020)

Cox, D., Oakes, D.: Analysis of Survival Data. Chapman and Hall, New York (1984)

Cuparić, M., Milošević, B., Obradović, M.: New \({L}^2\)-type exponentiality tests. SORT-Stat. Oper. Res. Trans. 43(1), 25–50 (2019)

Desu, M.M.: A characterization of the exponential distribution by order statistics. Ann. Math. Stat. 42(2), 837–838 (1971)

Epps, T., Pulley, L.: A test of exponentiality vs. monotone-hazard alternatives derived from the empirical characteristic function. J. R. Stat. Soc. Ser. B (Methodol.) 48(2), 206–213 (1986)

Gail, M., Gastwirth, J.: A scale-free goodness-of-fit test for the exponential distribution based on the Gini statistic. J. R. Stat. Soc. Ser. B (Methodol.) 40(3), 350–357 (1978)

Grané, A., Fortiana, J.: A location-and scale-free goodness-of-fit statistic for the exponential distribution based on maximum correlations. Statistics 43(1), 1–12 (2009)

Grané, A., Fortiana, J.: A directional test of exponentiality based on maximum correlations. Metrika 73(2), 255–274 (2011)

Henze, N.: A new flexible class of omnibus tests for exponentiality. Commun. Stat. Theory Methods 22(1), 115–133 (1992)

Henze, N., Meintanis, S.: Tests of fit for exponentiality based on the empirical Laplace transform. Stat. J. Theor. Appl. Stat. 36(2), 147–161 (2002)

Henze, N., Meintanis, S.G.: Goodness-of-fit tests based on a new characterization of the exponential distribution. Commun. Stat. Theory Methods 31(9), 1479–1497 (2002)

Henze, N., Meintanis, S.G.: Recent and classical tests for exponentiality: a partial review with comparisons. Metrika 61(1), 29–45 (2005)

Huang, J.S.: On a theorem of Ahsanullah and Rahman. J. Appl. Probab. 11(1), 216–218 (1974)

Iverson, H., Randles, R.: The effects on convergence of substituting parameter estimates into U-statistics and other families of statistics. Probab. Theory Relat. Fields 81(3), 453–471 (1989)

Jovanović, M., Milošević, B., Nikitin, Ya.. Yu.., Obradović, M., Volkova, KYu.: Tests of exponentiality based on Arnold–Villasenor characterization and their efficiencies. Comput. Stat. Data Anal. 90, 100–113 (2015)

Klar, B.: On a test for exponentiality against Laplace order dominance. Statistics 37(6), 505–515 (2003)

Klar, B.: Tests for exponentiality against the M and LM-Classes of life distributions. TEST 14(2), 543–565 (2005)

Korolyuk, V.S., Borovskikh, Y.V.: Theory of U-statistics. Kluwer, Dordrecht (1994)

Ledoux, M., Talagrand, M.: Probability in Banach Spaces: Isoperimetry and Processes. Springer Science and Business Media (2013)

Marcus, M.B., Shepp, L.: Sample behavior of Gaussian processes. In: Proc. of the Sixth Berkeley Symposium on Math. Statist. and Prob, vol. 2, pp. 423–421 (1972)

Meintanis, S.G.: Tests for generalized exponential laws based on the empirical Mellin transform. J. Stat. Comput. Simul. 78(11), 1077–1085 (2008)

Meintanis, S.G., Nikitin, Ya.. Yu.., Tchirina, A.: Testing exponentiality against a class of alternatives which includes the RNBUE distributions based on the empirical Laplace transform. J. Math. Sci. 145(2), 4871–4879 (2007)

Milošević, B.: Asymptotic efficiency of new exponentiality tests based on a characterization. Metrika 79(2), 221–236 (2016)

Milošević, B., Obradović, M.: New class of exponentiality tests based on U-empirical Laplace transform. Stat. Pap. 57(4), 977–990 (2016)

Milošević, B., Obradović, M.: Some characterization based exponentiality tests and their Bahadur efficiencies. Publications de L’Institut Mathematique 100(114), 107–117 (2016)

Milošević, B., Obradović, M.: Some characterizations of the exponential distribution based on order statistics. Appl. Anal. Discrete Math. 10(2), 394–407 (2016)

Moran, P.: The random division of an interval—Part II. J. R. Stat. Soc. Ser. B (Methodol.) 13(1), 147–150 (1951)

Nikitin, Y.Y.: Asymptotic Efficiency of Nonparametric Tests. Cambridge University Press, New York (1995)

Nikitin, Y.Y.: Large deviations of U-empirical Kolmogorov–Smirnov tests and their efficiency. J. Nonparametr. Stat. 22(5), 649–668 (2010)

Nikitin, Y.Y.: Tests based on characterizations, and their efficiencies: a survey. Acta et Commentationes Universitatis Tartuensis de Mathematica 21(1), 4–24 (2017)

Nikitin, Y.Y., Peaucelle, I.: Efficiency and local optimality of nonparametric tests based on U- and V-statistics. Metron 62(2), 185–200 (2004)

Nikitin, Y.Y., Tchirina, A.V.: Bahadur efficiency and local optimality of a test for the exponential distribution based on the Gini statistic. J. Ital. Stat. Soc. 5(1), 163–175 (1996)

Nikitin, Y.Y., Tchirina, A.V.: Lilliefors test for exponentiality: large deviations, asymptotic efficiency, and conditions of local optimality. Math. Methods Stat. 16(1), 16–24 (2007)

Nikitin, Y.Y., Volkova, K.Y.: Asymptotic efficiency of exponentiality tests based on order statistics characterization. Georgian Math. J. 17(4), 749–763 (2010)

Nikitin, YY. and K. Y. Volkova,: Efficiency of exponentiality tests based on a special property of exponential distribution. Math. Methods Stat. 25(1), 54–66 (2016)

Novoa-Muñoz, F., Jiménez-Gamero, M.D.: Testing for the bivariate Poisson distribution. Metrika 77(6), 771–793 (2014)

Obradović, M.: Three characterizations of exponential distribution involving median of sample of size three. J. Stat. Theory Appl. 14(3), 257–264 (2015)

Puri, P.S., Rubin, H.: A characterization based on the absolute difference of two iid random variables. Ann. Math. Stat. 41(6), 2113–2122 (1970)

Serfling, R.: Approximation Theorems of Mathematical Statistics, vol. 162. Wiley, New York (2009)

Strzalkowska-Kominiak, E., Grané, A.: Goodness-of-fit test for randomly censored data based on maximum correlation. SORT Stat. Oper. Res. Trans. 41(1), 119–138 (2017)

Tchirina, A.: Bahadur efficiency and local optimality of a test for exponentiality based on the Moran statistics. J. Math. Sci. 127(1), 1812–1819 (2005)

Tenreiro, C.: On the automatic selection of the tuning parameter appearing in certain families of goodness-of-fit tests. J. Stat. Comput. Simul. 89(10), 1780–1797 (2019)

Torabi, H., Montazeri, N.H., Grané, A.: A wide review on exponentiality tests and two competitive proposals with application on reliability. J. Stat. Comput. Simul. 88(1), 108–139 (2018)

Volkova, K.Y.: Tests of the exponentiality based on properties of order statistics 1. In: 6th St. Petersburg Workshop on Simulation, pp. 761–764 (2009)

Volkova, K.Y.: Goodness-of-fit tests for exponentiality based on Yanev–Chakraborty characterization and their efficiencies. In: Proceedings of the 19th European Young Statisticians Meeting, Prague, pp. 156–159 (2015)

Yanev, G.P., Chakraborty, S.: Characterizations of exponential distribution based on sample of size three. Pliska Studia Mathematica Bulgarica 22(1), 237p–244p (2013)

Zolotarev, V.M.: Concerning a certain probability problem. Theory Probab. Appl. 6(2), 201–204 (1961)

Acknowledgements

We would like to thank the anonymous reviewer for his useful remarks.

Author information

Authors and Affiliations

Corresponding author

Additional information

Publisher's Note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

The work of M. Cuparić and B. Milošević is supported by the Ministry of Education, Science and Technological Development of the Republic of Serbia.

Appendices

Appendix A: Proofs of theorems

Proof (Theorem 2)

Our statistic \(M^{{\mathcal {D}}}_{n,a}({\widehat{\lambda }}_n)\) can be rewritten as

Here \(V_n(t;{\widehat{\lambda }}_n)\), for each \(t>0\), is a V-statistic of order 2 with an estimated parameter, and kernel

Since the function \(\xi (x_{1},x_{2},t;a,\gamma )\) is continuously differentiable with respect to \(\gamma \) at the point \(\gamma =\lambda \) we may apply the mean value theorem. We have

for some \(\lambda ^*\) between \(\lambda \) and \({\widehat{\lambda }}_n\). From the Law of large numbers for V-statistics [47, 6.4.2.], the partial derivative \(\frac{\partial V_n(t;\gamma )}{\partial \gamma }\) converges to

Since \(\sqrt{n}({\widehat{\lambda }}_n-\lambda )\) is stochastically bounded, it follows that statistics \(\sqrt{n}V_n(t;{\widehat{\lambda }}_n)\) and \(\sqrt{n}V_n(t;1)\) are asymptotically equally distributed. Therefore, \(nM^{{\mathcal {D}}}_{n,a}({\widehat{\lambda }}_n)\) and \(nM^{{\mathcal {D}}}_{n,a}(\lambda )\) will have the same limiting distribution. Hence we need to derive limiting distribution of \(nM^{{\mathcal {D}}}_{n,a}(\lambda )\).

First notice that \(M^{{\mathcal {D}}}_{n,a}(\lambda )\) is a V-statistic with symmetric kernel H. Also, since the distribution of \(M^{{\mathcal {D}}}_{n,a}(\lambda )\) does not depend on \(\lambda \) we may assume that \(\lambda =1.\)

It is easy to show that its first projection of kernel H on \(X_1\) is equal to zero. After some calculations, we obtain that its second projection on \((X_1,X_2)\) is given by

where \(\text {Ei}(x)=-\int _{-x}^\infty \frac{e^{-t}}{t}dt\) is the exponential integral. The function \({\widetilde{h}}_2\) is non-constant for any \(a>0\). Hence, kernel h is degenerate with degree 2.

Since the kernel H is bounded and degenerate, from the theorem on asymptotic distribution of U-statistics with degenerate kernels [26, Corollary 4.4.2], and the Hoeffding representation of V-statistics, we get that, \(M^{{\mathcal {D}}}_{n,a}(1)\), being a V-statistic of degree 2, has the following asymptotic distribution

where \(\{\delta _k\}\) are the eigenvalues of the integral operator \({\mathcal {M}}_a\) defined by

and \(\{W_{k}\}\) is the sequence of i.i.d. standard Gaussian random variables. \(\square \)

Proof (Theorem 3)

The test statistic can be represented as

where \(\{V_n(t;{\widehat{\lambda }}_n)\}\) is a V-empirical process introduced in the proof of Theorem 2.

Substituting \(s=e^{-t}\) in (17) we can express our statistic as

thus obtaining, as a core of the statistic, the process \(V_{n}(-\ln s;{\hat{\lambda }}_n)\), \(s\in (0,1)\), defined on C[0, 1] equipped with supremum norm.

Next we show that the difference between \(\sqrt{n}L^{{\mathcal {D}}}_{n,a}\) and \(\sqrt{n}\sup _{s\in (0,1)}|V_{n}(-\ln s;\lambda )s^a|\) (for a fixed \(\lambda \)) tends uniformly to zero and proceed finding the limiting distribution of the latter.

This is, taking into account (13), equivalent to \(\lim _{n\rightarrow \infty }\sup _{s\in (0,1)}R_n(s)=0\), where

From (14) we know that the expression in the absolute parentheses tends to zero for each s, and using [44, Lemma 1] we get that its supremum also tends to zero. Since \(-\ln s\cdot s^a\) is bounded function, \(\sup _s R_n(s)\) tends also to zero.

Since, for a fixed s, \(\sqrt{n}V_n(-\ln s;\lambda )\) is a non-degenerate V-statistic, \(\sqrt{n}V_n(-\ln s;\lambda )\) is asymptotically normally distributed. The same holds for finite-dimensional distributions of the process \(\sqrt{n}V_n(-\ln s;\lambda )\). In addition, it can be shown that \(\sqrt{n}V_n(-\ln s;\lambda )\) satisfies conditions from Billingsley [8, Theorem 12.3], and is, therefore, tight in C[0, 1]. Therefore it converges weakly to a zero mean Gaussian process \(\{\eta ^\star (s)\}\) with covariance function

where K is defined in (3).

Since the supremum is continuous on C[0, 1], using the continuous mapping theorem we get that \(\sqrt{n}\sup _{s\in (0,1)}|V_{n}(-\ln s;\lambda )s^a|\) converges to \(\sup _{s\in (0,1)}|\eta ^\star (s)s^a|\), which is the same as \(\sup _{t>0}|\eta (t)e^{-at}|\). This completes the proof. \(\square \)

Proof (Lemma 4)

Using the result of Zolotarev [55], the logarithmic tail behavior of limiting distribution function of \({\widetilde{M}}_{n,a}^{{\mathcal {D}}}({\widehat{\lambda }}_n)=\sqrt{nM^{{\mathcal {D}}}_{n,a}({\widehat{\lambda }}_n)}\) is

Therefore, \(a_{{\widetilde{M}}_a}=\frac{1}{6\delta _1}.\) The limit in probability \(P_{\theta }\) of \({\widetilde{M}}_{n,a}({\widehat{\lambda }}_n)/\sqrt{n}\) is

The expression for \(b_M(\theta )\) is derived in the following lemma.

Lemma A1

For a given alternative density \(g(x;\theta )\) whose distribution belongs to \({\mathcal {G}}\), we have that the limit in probability of the statistic \(M^{{\mathcal {D}}}_{n,a}({\widehat{\lambda }}_n)\) is

Proof

For brevity, denote \({\varvec{x}}=(x_1,x_2,x_3,x_{4})\) and \({\varvec{G}}({\varvec{x}};\theta )=\prod _{i=1}^{4}G(x_i;\theta )\). Since \({\overline{X}}_n\) converges almost surely to its expected value \(\mu (\theta )\), using the Law of large numbers for V-statistics with estimated parameters (see [22]), \(M^{{\mathcal {D}}}_{n,a}({\widehat{\lambda }}_n)\) converges to

We may assume that \(\mu (0)=1\) since the test statistic is ancillary for \(\lambda \) under the null hypothesis. After some calculations we get that \(b'_M(0)=0\) and that

Expanding \(b_M(\theta )\) into the Maclaurin series

we complete the proof. \(\square \)

Now we pass to the statistic \(L^{{\mathcal {D}}}_n.\) The tail behaviour of the random variable \(\sup _{t>0}|n_{t}|\) is equal to the inverse of supremum of its covariance function, i.e. the \(a_L=\frac{1}{\sup _{t>0}K(t,t)}\) (see [27, 36]).

Similarly like before, since \({\overline{X}}_n\) converges almost surely to its expected value \(\mu (\theta )\), using the Law of large numbers for V-statistics with estimated parameters [22], \(V_n(t,a;{\hat{\lambda }})e^{-at}\) converges to

Expanding \(b_L(\theta ;t)\) in the Maclaurin series we obtain

where \({\widetilde{\varphi }}_1(x,t;a)=E(\varPhi (X_1,X_2,t;a,1)|X_1=x_1).\)

Since V-empirical Laplace transforms are monotonous functions, a Glivenko-Cantelli-type theorem holds, see Novoa-Muñoz and Jiménez-Gamero [44, Lemma 1]. Hence, the limit in probability under the alternative for statistics \(L_{n,a}^{{\mathcal {D}}}\) is equal to \(\sup _{t\ge 0}|b_L(\theta ;t)|\). Inserting this into the expression for the Bahadur slope completes the proof. \(\square \)

Appendix B: Bahadur approximate slopes

Proof

Approximate local Bahadur slope of statistics EP and CO

Those statistics can be represented as

where \(\varPhi (x;\gamma )\) is continuously differentiable with respect to \(\gamma \) at point \(\gamma =\mu .\) It was shown that the limiting distribution of \(\sqrt{n}T_n\) is zero mean normal with variance \(\sigma ^2_{\varPhi }\) (see [10, 13]). Hence, the coefficient \(a_T\) is equal to \(\frac{1}{\sigma ^2_{\varPhi }}.\)

Further, we have

Then it holds that

From this we obtain the expression for \(c_T(\theta ).\) \(\square \)

Proof

Approximate local Bahadur slope of statistics BH, HE, Wn, HM, \(\omega ^2\) and AD

Let T be the one of considered statistics. It was shown that the limiting distribution of \(nT_n\) is \(\sum _{i=1}^{\infty }\delta _iW_i^2,\) where \(\{W_i\}\) is the sequence of i.i.d. standard normal variables and \(\{\delta _i\}\) the sequence of eigenvalues of certain covariance operator. Using the result of Zolotarev in [55], we have that the logarithmic tail behavior of limiting distribution function of \({\widetilde{T}}_n=\sqrt{n T_n}\) is

Next, the limit in probability of \({\widetilde{T}}_n/\sqrt{n}\) is \(b_{{\widetilde{T}}}(\theta )=\sqrt{b_T(\theta )}\). Statistic \(T_n\) can be represented as

As before, we may assume that \(\mu (0)=1\). Since the sample mean converges almost surely to its expected value, by using the Law of large numbers for V-statistics with estimated parameters (see [22]), we can conclude that the limit in the probability of statistic \(T_n\) is equal to the one of

We get that \(b_T'(0)=0\) and that

Expanding \(b_T(\theta )\) into Maclaurin series we obtain expression for \(b_T\). \(\square \)

Appendix C: Tables of efficiencies

Rights and permissions

About this article

Cite this article

Cuparić, M., Milošević, B. & Obradović, M. New consistent exponentiality tests based on V-empirical Laplace transforms with comparison of efficiencies. RACSAM 116, 42 (2022). https://doi.org/10.1007/s13398-021-01184-3

Received:

Accepted:

Published:

DOI: https://doi.org/10.1007/s13398-021-01184-3