Abstract

In this paper, a class of Caputo-type fractional-order neural networks with mixed delay is introduced. By employing known inequalities, such as the Hölder inequality, the Cauchy–Schwarz inequality and the Gronwall inequality, sufficient conditions are presented to ensure that such a neural network is quasi-uniformly stable. Finally, a numerical example is presented to illustrate the theoretical results.

1 Introduction

The origins of fractional calculus date back to 1695, more than 300 years ago; it deals with derivatives and integrals of arbitrary order. Owing to its complexity and lack of application background, it did not draw much attention for a long time. Recently, however, it has attracted increasing interest from researchers and has become a valuable modeling tool in various fields of engineering, physics, and so on [1–4], with examples including the fractional model of viscoelastic liquids [5], the diffusion and transmission model of waves [6], and the fractional model of colored noise [7].

It is well known that, compared with classical integer-order models, fractional-order models possess heredity and memory, so they provide an excellent instrument for describing the behavior of a system. For instance, fractional-order models of happiness [8] and love [9] have been developed and are claimed to give a better representation than integer-order dynamical approaches. Recently, some researchers have applied fractional calculus to neural networks to form fractional-order neural models, which can depict the complex relationship between the input and output signals more accurately and flexibly. Ref. [10] first introduced a cellular neural network with fractional-order cells. In Ref. [11], it was suggested that fractional derivatives provide neurons with a fundamental and general computation ability that can contribute to efficient information processing, stimulus anticipation and frequency-independent phase shifts of oscillatory neuronal firing. Furthermore, in Refs. [12–15], it was noted that fractional-order neural networks might play a significant role in parameter estimation. Therefore, it is essential to incorporate fractional calculus into neural network models, which can be said to be an important improvement.

Currently, the analysis of fractional-order artificial neural networks has received much attention, and some results about fractional-order neural networks have been obtained, especially about stability. For example, in Ref. [16], the stability of fractional-order neural networks of Hopfield type is proved by means of energy-like function analysis. Besides, by employing the stability theory of fractional-order systems, the asymptotic stability of fractional-order neural networks of Hopfield type is analyzed in Refs. [17, 18]. The author of Ref. [19] establishes \(\alpha \)-stability and \(\alpha \)-synchronization for fractional-order neural networks. In Ref. [20], it was pointed out that chaotic behaviors can emerge in a fractional network. Moreover, Refs. [21–23] proposed chaos control and synchronization of some simple fractional networks, mainly using Laplace transformation theory and numerical simulations. Discrete-time neural networks have also been investigated. For example, the stability and bifurcation of a discrete-time Cohen–Grossberg neural network are studied in Ref. [24], and the Hopf bifurcation and stability analysis of a discrete-time Hopfield neural network with delay is studied in Ref. [25].

Much work has been done on the stability of fractional-order neural networks, such as exponential stability, Lyapunov stability, asymptotic stability and so on. In Ref. [26], the uniform stability of fractional-order neural networks was demonstrated. Owing to their complexity, fractional-order neural networks may be affected by many factors, so it is meaningful to study fractional-order neural networks with mixed delay. However, to the best of our knowledge, no paper has yet described the quasi-uniform stability of fractional-order neural networks with mixed delay, so establishing sufficient criteria for such neural networks is both necessary and challenging. Motivated by the above discussion, this paper is devoted to presenting sufficient criteria for the quasi-uniform stability of a class of fractional-order neural networks with mixed delay.

The rest of the paper is organized as follows. The fractional-order network model is introduced and some necessary definitions and lemmas are given in Sect. 2. Sufficient conditions ensuring the quasi-uniform stability of the fractional-order neural networks are presented in Sect. 3. A numerical simulation is given in Sect. 4.

2 Model description and preliminaries

In this section, we present some definitions and lemmas and recall some well-known results about fractional differential equations.

Definition 2.1

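[1] The Riemann–Liouville fractional integral of non-integer order \(\beta \in R^{+}\) of a function f(t) is given as follows:

\[ D^{-\beta }_{t_{0},t}f(t)=\frac{1}{\Gamma (\beta )}\int ^{t}_{t_{0}}(t-s)^{\beta -1}f(s)ds, \tag{1} \]

where \(\Gamma (\cdot )\) is the Gamma function \(\Gamma (\xi )=\int ^{+\infty }_{0}s^{\xi -1}e^{-s}ds\).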

Definition 2.2

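[1] The Riemann–Liouville derivative of fractional order \(\beta >0\) of a function f(t) is defined as

\[ {}_{RL}D^{\beta }_{t_{0},t}f(t)=\frac{d^{n}}{dt^{n}}\left[ D^{-(n-\beta )}_{t_{0},t}f(t)\right] =\frac{1}{\Gamma (n-\beta )}\frac{d^{n}}{dt^{n}}\int ^{t}_{t_{0}}(t-s)^{n-\beta -1}f(s)ds, \tag{2} \]

where \(\beta \) is a positive number such that \(n-1<\beta <n\in Z^{+}\).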

Definition 2.3

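[1] The Caputo derivative of fractional order \(\beta >0\) of a function f(t) is given by

\[ {}_{C}D^{\beta }_{t_{0},t}f(t)=D^{-(n-\beta )}_{t_{0},t}\frac{d^{n}}{dt^{n}}f(t)=\frac{1}{\Gamma (n-\beta )}\int ^{t}_{t_{0}}(t-s)^{n-\beta -1}f^{(n)}(s)ds, \tag{3} \]

where \(n-1<\beta <n\in Z^{+}\).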
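As a numerical illustration of the Caputo derivative for the case \(0<\beta <1\) used in this paper, the following minimal Python sketch approximates it with the standard L1 finite-difference scheme. The scheme is not part of the original paper; the function name `caputo_l1`, the uniform grid and the test function \(f(t)=t^{2}\) are assumptions chosen for illustration. For this f, the exact Caputo derivative is \(2t^{2-\beta }/\Gamma (3-\beta )\).

```python
import math
import numpy as np


def caputo_l1(f_vals, h, beta):
    """L1 approximation of the Caputo derivative of order 0 < beta < 1.

    f_vals holds samples f(0), f(h), ..., f(n*h); the derivative is
    approximated at the last grid point t_n = n*h (lower limit t_0 = 0).
    """
    f_vals = np.asarray(f_vals, dtype=float)
    n = f_vals.size - 1
    k = np.arange(n, dtype=float)
    # Weights b_k = (k+1)^(1-beta) - k^(1-beta) of the L1 scheme.
    b = (k + 1.0) ** (1.0 - beta) - k ** (1.0 - beta)
    idx = np.arange(n)
    # Backward differences f(t_{n-k}) - f(t_{n-k-1}).
    diffs = f_vals[n - idx] - f_vals[n - idx - 1]
    return h ** (-beta) / math.gamma(2.0 - beta) * float(np.dot(b, diffs))


if __name__ == "__main__":
    beta = 0.7                      # illustrative order, as in the example section
    t = np.linspace(0.0, 1.0, 1001)
    h = t[1] - t[0]
    approx = caputo_l1(t ** 2, h, beta)
    exact = 2.0 / math.gamma(3.0 - beta)   # value at t = 1
    print("L1 approximation:", approx, "exact:", exact)
```

The sum runs over the whole history of f, which is the memory property of fractional derivatives in computational form.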

These three definitions are in general non-equivalent. From the definition of the integer-order derivative and expressions (2) and (3), it is recognized that the integer-order derivative of a function depends only on nearby points, while the fractional derivative depends on the whole history of the function. That is, the next state of a system depends not only on its current state but also on its historical states starting from the initial time. As a result, a model described by fractional-order equations possesses memory, which makes it more precise in describing the state of a neuron. On the other hand, as the Laplace transform of the fractional derivative shows, the main advantage of the Caputo derivative is that it only requires initial conditions given in terms of integer-order derivatives, which represent well-understood features of physical situations and thus make it more applicable to real-world problems. So throughout this paper, we deal with fractional-order neural networks involving the Caputo derivative, and the notation D\(^{\beta }\) denotes the Caputo fractional derivative operator D\(^{\beta }_{0,t}\). The following properties of the operator D\(^{\beta }\) are provided.

Lemma 2.1

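[27] If \(x(t)\in C^{n}[0,\infty )\), and \(n-1<\alpha ,\beta <n\in Z^{+}\), then

\[ \begin{aligned} &(1)\quad D^{-\alpha }D^{-\beta }x(t)=D^{-(\alpha +\beta )}x(t);\\ &(2)\quad D^{\beta }D^{-\beta }x(t)=x(t);\\ &(3)\quad D^{-\beta }D^{\beta }x(t)=x(t)-\sum ^{n-1}_{k=0}\frac{t^{k}}{k!}x^{(k)}(0). \end{aligned} \tag{4} \]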

Lemma 2.2

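[28] (Hölder inequality). Suppose that \(p,q>1\) and \(1/p+1/q=1\). If \(\mid f(\cdot )\mid ^{p}, \mid h(\cdot )\mid ^{q}\in L^{1}(E)\), then \(f(\cdot )h(\cdot )\in L^{1}(E)\) and

\[ \int _{E}\mid f(x)h(x)\mid dx\le \left( \int _{E}\mid f(x)\mid ^{p}dx\right) ^{1/p}\left( \int _{E}\mid h(x)\mid ^{q}dx\right) ^{1/q}, \tag{5} \]

where \(L^{1}(E)\) is the Banach space of all Lebesgue measurable functions \(f: E\longrightarrow R\) with \(\int _{E}\mid f(x) \mid dx < \infty \). Letting \(p=q=2\), it reduces to the Cauchy–Schwarz inequality:

\[ \int _{E}\mid f(x)h(x)\mid dx\le \left( \int _{E}\mid f(x)\mid ^{2}dx\right) ^{1/2}\left( \int _{E}\mid h(x)\mid ^{2}dx\right) ^{1/2}. \tag{6} \]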

Lemma 2.3

[29] Let \(k\in N\), and let \(x_{1}, x_{2},\ldots ,x_{k}\) be non-negative real numbers. Then, for \(\eta >1\),

\[ \left( \sum ^{k}_{i=1}x_{i}\right) ^{\eta }\le k^{\eta -1}\sum ^{k}_{i=1}x^{\eta }_{i}. \tag{7} \]
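A quick numerical sanity check of this lemma can be sketched in Python, assuming it takes the usual power-sum form \((\sum _{i=1}^{k}x_{i})^{\eta }\le k^{\eta -1}\sum _{i=1}^{k}x_{i}^{\eta }\). The helper name `power_sum_gap` and the random test data are illustrative assumptions; the choice \(k=5\), \(\eta =2\) mirrors the values used later in the proofs.

```python
import numpy as np


def power_sum_gap(x, eta):
    """Return k^(eta-1) * sum(x_i^eta) - (sum x_i)^eta for non-negative x.

    The lemma (in the assumed form) states that this gap is non-negative
    whenever eta > 1.
    """
    x = np.asarray(x, dtype=float)
    k = x.size
    return float(k ** (eta - 1.0) * np.sum(x ** eta) - np.sum(x) ** eta)


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    x = rng.uniform(0.0, 3.0, size=5)   # k = 5 non-negative numbers
    print("gap for eta = 2:", power_sum_gap(x, 2.0))
    # Equality holds when all entries coincide.
    print("gap for equal entries:", power_sum_gap(np.ones(5), 2.0))
```

In the proofs the lemma is used to split the power of a five-term sum into a sum of powers, at the cost of the factor \(k^{\eta -1}\).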

Lemma 2.4

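[30] (Gronwall inequality). If

\[ x(t)\le f(t)+\int ^{t}_{0}g(s)x(s)ds,\quad 0\le t<T, \]

where all the functions involved are continuous on [0, T), \(T\le \infty \), and \(g(t)\ge 0\), then x(t) satisfies

\[ x(t)\le f(t)+\int ^{t}_{0}f(s)g(s)\exp \left[ \int ^{t}_{s}g(u)du\right] ds,\quad 0\le t<T. \tag{8} \]

If, in addition, f(t) is nondecreasing, then

\[ x(t)\le f(t)\exp \left( \int ^{t}_{0}g(s)ds\right) ,\quad 0\le t<T. \tag{9} \]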
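The two Gronwall bounds can be compared numerically. The sketch below is only an illustration under stated assumptions: it takes the standard form of the lemma (hypothesis \(x(t)\le f(t)+\int _{0}^{t}g(s)x(s)ds\), general bound \(f(t)+\int _{0}^{t}f(s)g(s)\exp [\int _{s}^{t}g(u)du]ds\), and bound \(f(t)\exp (\int _{0}^{t}g(s)ds)\) for nondecreasing f), and the names `trapezoid` and `gronwall_bounds` as well as the test functions \(f(t)=1+t\), \(g(t)=2\) are hypothetical. For this choice the equality case \(x(t)=1.5e^{2t}-0.5\) is known in closed form.

```python
import numpy as np


def trapezoid(y, x):
    """Composite trapezoidal rule for samples y on the grid x."""
    return float(np.sum((y[1:] + y[:-1]) * np.diff(x)) / 2.0)


def gronwall_bounds(f, g, t, n=2001):
    """Evaluate both Gronwall bounds at time t.

    f and g must accept NumPy arrays. Returns (general, monotone), where
    `general` is the bound valid for any continuous f and `monotone` is
    the simpler bound valid when f is nondecreasing.
    """
    s = np.linspace(0.0, t, n)
    fs, gs = f(s), g(s)
    # Cumulative integral G(s) = int_0^s g(u) du on the grid.
    G = np.concatenate(([0.0], np.cumsum((gs[1:] + gs[:-1]) * np.diff(s) / 2.0)))
    general = fs[-1] + trapezoid(fs * gs * np.exp(G[-1] - G), s)
    monotone = fs[-1] * np.exp(G[-1])
    return float(general), float(monotone)


if __name__ == "__main__":
    f = lambda s: 1.0 + s            # nondecreasing forcing term
    g = lambda s: 2.0 + 0.0 * s      # constant kernel g = 2
    general, monotone = gronwall_bounds(f, g, 1.0)
    print("general bound:", general, "monotone bound:", monotone)
    print("equality-case solution:", 1.5 * np.exp(2.0) - 0.5)
```

The general bound is the sharper of the two, which matters when the bound is later inverted to obtain a time horizon T.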

3 Main results

In this section, two sufficient conditions are derived for a class of fractional-order neural networks with mixed delay, one for order \(0<\beta <0.5\) and one for order \(0.5\le \beta <1\). The dynamic behavior of a continuous fractional-order neural network with mixed delay can be described by the following differential equation.

or equivalently

where \(0<\beta <1\), \(i=1,2,\ldots ,n\), and n is the number of units in the neural network; \(x(t)=(x_{1}(t), x_{2}(t),\ldots , x_{n}(t))^{T}\) is the state vector at time t; \(F(x(t))=(f_{1}(x_{1}(t)), f_{2}(x_{2}(t)),\ldots , f_{n}(x_{n}(t)))^{T}\), \(G(x(t))=(g_{1}(x_{1}(t)), g_{2}(x_{2}(t)),\ldots , g_{n}(x_{n}(t)))^{T}\) and \(H(x(t))=(h_{1}(x_{1}(t)), h_{2}(x_{2}(t)),\ldots , h_{n}(x_{n}(t)))^{T}\) denote the activation functions of the neurons; \(\mathscr {C}=diag(c_{i})\) with \(c_{i}>0\), \(\mathscr {A}=(a_{ij})\), \(\mathscr {B}=(b_{ij})\) and \(\mathscr {M}=(m_{ij})\) are constant matrices; \(c_{i}\) denotes the rate with which the ith unit resets its potential to the resting state in isolation when disconnected from the network; \(a_{ij}\), \(b_{ij}\) and \(m_{ij}\) are the connection weights from the jth neuron to the ith neuron at times t, \(t-\tau \) and \(t-\sigma \), respectively, where the transmission delays \(\tau \) and \(\sigma \) are nonnegative constants; and \(I=(I_{1}(t), I_{2}(t),\ldots , I_{n}(t))^{T}\) is an external bias vector.

For the initial conditions associated with system (10), it is usually assumed that \(\psi _{i}(s)\in C([-\gamma ,0],R)\), \(i\in N\), and the norm on \(C([-\gamma ,0],R)\) is denoted by \(\parallel \psi \parallel =\sup _{s\in [-\gamma ,0]}\parallel \psi (s)\parallel \).

Suppose that x(t) and y(t) are any two solutions of (11) with different initial functions \(\psi \in C\) and \(\phi \in C\), \(\psi (0)=\phi (0)=0\). Letting \(x(t)-y(t)=e(t)=(e_{1}(t), e_{2}(t),\ldots , e_{n}(t))^{T}\) and \(\varphi =\psi -\phi \), one obtains the error system

where \(\varphi \in C\), \(\varphi (0)=0\), is the initial function of system (11), with the norm \(\parallel \varphi \parallel =\sup _{s\in [-\gamma ,0]}\parallel \varphi (s)\parallel \).

Definition 3.1

System (11) is said to be quasi-uniformly stable if, for any \(\varepsilon >0\), there exist two constants \(0<\delta <\varepsilon \) and \(T>0\) such that \(\parallel e(t_{0})\parallel <\delta \) implies \(\parallel e(t)\parallel <\varepsilon \) for all \(t\in J=[t_{0},t_{0}+T]\), where \(t_{0}\) is the initial time of observation.

To obtain the main results, the following assumptions are made:

(1) Denote \(\parallel x\parallel =\Sigma _{i=1}^{n}\mid x_{i}\mid \) and \(\parallel \mathscr {A}\parallel =\max _{1\le j\le n}\Sigma _{i=1}^{n}\mid a_{ij}\mid \), which are the vector 1-norm and the matrix norm it induces (the maximum absolute column sum), respectively; \(x_{i}\) and \(a_{ij}\) are the elements of the vector x and the matrix \(\mathscr {A}\), respectively. Let \(C=\parallel \mathscr {C}\parallel \), \(A=\parallel \mathscr {A}\parallel \), \(B=\parallel \mathscr {B}\parallel \), and \(M=\parallel \mathscr {M}\parallel \).

(2) The neuron activation functions F(x), G(x) and H(x) are Lipschitz continuous, namely, there exist positive constants F, G and H such that, for all \(x,y\in R^{n}\),

\[ \parallel F(x)-F(y)\parallel \le F\parallel x-y\parallel ,\quad \parallel G(x)-G(y)\parallel \le G\parallel x-y\parallel ,\quad \parallel H(x)-H(y)\parallel \le H\parallel x-y\parallel . \]
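The norms in assumption (1) are straightforward to compute. The following Python sketch is an illustration only: the weight matrices `C_mat`, `A_mat`, `B_mat` and `M_mat` are hypothetical, chosen so that their column-sum norms equal the values \(C=0.1\), \(A=0.3\), \(B=0.7\), \(M=0.5\) quoted in the example section; they are not the matrices used by the authors.

```python
import numpy as np


def vector_norm(x):
    """Sum of absolute entries, i.e. the norm ||x|| of assumption (1)."""
    return float(np.sum(np.abs(x)))


def matrix_norm(a):
    """Maximum absolute column sum, i.e. the norm ||A|| of assumption (1)."""
    return float(np.max(np.sum(np.abs(a), axis=0)))


# Hypothetical 2x2 weights (assumption: NOT taken from the paper).
C_mat = np.diag([0.1, 0.1])
A_mat = np.array([[0.2, -0.1], [0.1, 0.2]])
B_mat = np.array([[0.4, -0.3], [0.3, 0.4]])
M_mat = np.array([[0.3, 0.2], [-0.2, 0.3]])

C, A, B, M = (matrix_norm(m) for m in (C_mat, A_mat, B_mat, M_mat))

if __name__ == "__main__":
    print("C, A, B, M =", C, A, B, M)
    x = np.array([1.0, -2.0])
    # Compatibility of the two norms: ||A x|| <= ||A|| ||x||.
    print(vector_norm(A_mat @ x), "<=", A * vector_norm(x))
```

The compatibility inequality \(\parallel \mathscr {A}x\parallel \le \parallel \mathscr {A}\parallel \parallel x\parallel \) is what allows the matrix terms in the error system to be bounded by the scalars C, A, B and M.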

Theorem 3.1

When \(1/2\le \beta <1\), if Assumptions (1) and (2) hold and

where

then system (11) is quasi-uniformly stable.

Proof

Let the initial time be \(t_{0}=0\) and let \(e_{0}=\varphi (0)\) be the initial condition of system (12). By Lemma 2.1, the solution of system (12) can be expressed in the following form:

From Assumptions (1) and (2) and the properties of the norm \(\parallel \cdot \parallel \), one obtains

\(\square \)

According to the Cauchy–Schwarz inequality (6), one gets

Since

Substituting (16) into (15), one obtains

From Lemma 2.3 with \(k=5\) and \(\eta =2\), it follows from (17) that

let

since

which is equivalent to

According to the Gronwall inequality (8), one obtains

let

then

so

therefore

It follows that when \(\parallel \varphi \parallel <\delta \), if the condition of Theorem 3.1 is satisfied, then \(\parallel e(t)\parallel <\varepsilon \). By Definition 3.1, system (11) is quasi-uniformly stable.

Theorem 3.2

When \(0<\beta <1/2\), if Assumptions (1) and (2) hold and

where

\(p=1+\beta ,q=1+1/\beta \), then system (11) is quasi-uniformly stable.

Proof

As in the proof of Theorem 3.1, we have the following estimate of the solution of system (12).

Let \(p=1+\beta \) and \(q=1+1/\beta \); then \(p,q>1\) and \(1/p+1/q=1\). By using the Hölder inequality (5), one obtains
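For completeness, the conjugacy of this pair of exponents can be checked directly, since \(1/q=\beta /(1+\beta )\):

\[ \frac{1}{p}+\frac{1}{q}=\frac{1}{1+\beta }+\frac{\beta }{1+\beta }=1. \]

A further remark, offered as a reading of the proof rather than a statement from the original text: this choice of p keeps the p-th power of the fractional kernel \((t-s)^{\beta -1}\) integrable on [0, t], because

\[ p(\beta -1)=(1+\beta )(\beta -1)=\beta ^{2}-1>-1\quad \text{for } 0<\beta <1. \]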

Note that

\(\square \)

Substituting (21) into (20), we have

Then, from Lemma 2.3 with \(k=5\) and \(\eta =q\), one gets

let

then

let

one gets

then

From the Gronwall inequality (8), it follows that

let

then

so

therefore

It follows that when \(\parallel \varphi \parallel <\delta \), if (19) is satisfied, then \(\parallel e(t)\parallel <\varepsilon \). By Definition 3.1, system (11) is quasi-uniformly stable.

4 An illustrative example

In this section, a numerical example is presented to illustrate the results. Let us consider the following two-state fractional-order neural network model with mixed delay.

where the activation functions are \(f_{i}(x_{i}(t))=g_{i}(x_{i}(t))=h_{i}(x_{i}(t))=\tanh (x_{i}(t))\) \((i=1,2)\), so \(F=G=H=1\).

Obviously, \(C=0.1\), \(A=0.3\), \(B=0.7\), \(M=0.5\). Next, we verify the quasi-uniform stability. Let \(t_{0}=0\), \(\delta =0.1\), \(\varepsilon =1\), \(\sigma =0.05\).

On the one hand, when \(\beta =0.7\), using MATLAB, it can be verified that \(P=4.9938\), \(Q=0.2278\), \(L=2.7994\), \(N=0.6878\), \(W(t)=3.0884\). From the inequality \(\sqrt{P+Qe^{2t}+W(t)e^{(W(t)+2)t}(P\frac{1-e^{-(2+W(t))t}}{2+W(t)}+Q\frac{1-e^{-W(t)t}}{W(t)})}<\frac{\varepsilon }{\delta }\), one obtains \(T=0.6690\). On the other hand, when \(\beta =0.3\), using MATLAB, one finds \(\tilde{E}=2.4949\), \(\tilde{P}=213.7470\), \(\tilde{Q}=9.2269\), \(\tilde{L}=83.7659\), \(\tilde{N}=16.9440\), \(\tilde{W}(t)=84.1261\). From the inequality \(\sqrt[q]{\tilde{P}+\tilde{Q}e^{qt}+\frac{\tilde{W}(t)\tilde{P}(e^{(\tilde{W}(t)+q)t}-1)}{q+\tilde{W}(t)}+\frac{\tilde{W}(t)\tilde{Q}e^{(\tilde{W}(t)+q)t}(1-e^{-\tilde{W}(t)t})}{\tilde{W}(t)}}<\frac{\varepsilon }{\delta }\), one gets \(T=0.0522\).
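The two values of T can be recovered from the stated inequalities by a simple root search. The Python sketch below is a hedged reconstruction, not the authors' MATLAB code: it plugs in the constants reported above, treats \(W(t)\) and \(\tilde{W}(t)\) as the constant values quoted, and uses bisection on the assumption that each bound increases with t. The function names `bound_beta_ge_half`, `bound_beta_lt_half` and `horizon` are hypothetical.

```python
import math


def bound_beta_ge_half(t, P, Q, W):
    """Left-hand side of the inequality used for beta = 0.7."""
    inner = (P + Q * math.exp(2.0 * t)
             + W * math.exp((W + 2.0) * t)
             * (P * (1.0 - math.exp(-(2.0 + W) * t)) / (2.0 + W)
                + Q * (1.0 - math.exp(-W * t)) / W))
    return math.sqrt(inner)


def bound_beta_lt_half(t, P, Q, W, q):
    """Left-hand side of the inequality used for beta = 0.3 (q-th root).

    The factor W in the last fraction of the stated inequality cancels.
    """
    inner = (P + Q * math.exp(q * t)
             + W * P * (math.exp((W + q) * t) - 1.0) / (q + W)
             + Q * math.exp((W + q) * t) * (1.0 - math.exp(-W * t)))
    return inner ** (1.0 / q)


def horizon(bound, ratio, t_max=1.0, tol=1e-10):
    """Largest t in [0, t_max] with bound(t) < ratio, found by bisection.

    Assumes bound is increasing and bound(0) < ratio < bound(t_max).
    """
    lo, hi = 0.0, t_max
    if not (bound(lo) < ratio < bound(hi)):
        raise ValueError("ratio is not bracketed on [0, t_max]")
    while hi - lo > tol:
        mid = 0.5 * (lo + hi)
        if bound(mid) < ratio:
            lo = mid
        else:
            hi = mid
    return lo


if __name__ == "__main__":
    ratio = 1.0 / 0.1                 # epsilon / delta
    T1 = horizon(lambda t: bound_beta_ge_half(t, 4.9938, 0.2278, 3.0884), ratio)
    q = 1.0 + 1.0 / 0.3
    T2 = horizon(lambda t: bound_beta_lt_half(t, 213.7470, 9.2269, 84.1261, q), ratio)
    print("T for beta = 0.7:", T1)
    print("T for beta = 0.3:", T2)
```

The much shorter horizon for \(\beta =0.3\) reflects the far larger constants \(\tilde{P}\) and \(\tilde{W}\), so the bound reaches \(\varepsilon /\delta \) sooner.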

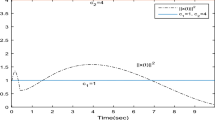

Then, with the order \(\beta \) fixed, we show the trajectories of \(x_{1}\) for different initial values. Here we choose \(\tau =0.1\), \(\sigma =0.05\), \(\beta =0.7\) and plot the time evolution of the state for the initial values \(x_{1}(t)=0.8, 0.5, 0.1, -0.1, -0.5, -0.8\) (see Fig. 1). Under the same conditions, the trajectories of \(x_{2}\) are shown for the initial values \(x_{2}(t)=0.8, 0.5, 0.1, -0.1, -0.5, -0.8\) (see Fig. 2).

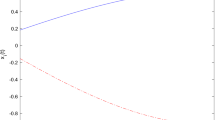

On the other hand, with the order fixed at \(\beta =0.3\), the trajectories of \(x_{1}\) and \(x_{2}\) under the same conditions as above are shown in Figs. 3 and 4.

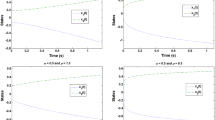

Furthermore, with fixed delay \(\sigma =0.1\), fixed order \(\beta =0.7\) and fixed initial values \(x_{1}(t)=0.5\), \(x_{2}(t)=0.5\), the trajectories of \(x_{1}\) and \(x_{2}\) for different values of the other delay, \(\tau =0.1, 0.5, 0.8\), are shown in Figs. 5 and 6.

Next, with fixed delay \(\tau =1\), fixed order \(\beta =0.7\) and fixed initial values \(x_{1}(t)=0.5\), \(x_{2}(t)=0.5\), the trajectories of \(x_{1}\) and \(x_{2}\) for different values of the other delay, \(\sigma =0.05, 0.1, 0.2\), are shown in Figs. 7 and 8.

From the above numerical results, we can see that the neural network in this example is quasi-uniformly stable, and we can also observe how varying the initial values and delays influences the quasi-uniform stability.
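Simulations of this kind could be sketched as follows. Everything model-specific here is an assumption, since the system equations and weight matrices are not reproduced in this text: the sketch assumes the common mixed-delay form \(D^{\beta }x(t)=-\mathscr {C}x(t)+\mathscr {A}\tanh (x(t))+\mathscr {B}\tanh (x(t-\tau ))+\mathscr {M}\int _{t-\sigma }^{t}\tanh (x(s))ds\) with zero bias and constant history, hypothetical matrices whose column-sum norms match \(C=0.1\), \(A=0.3\), \(B=0.7\), \(M=0.5\), and an explicit product-integration (fractional Euler) rule in place of whatever solver the authors used.

```python
import math
import numpy as np

# Hypothetical weights (assumption: NOT the matrices of the paper).
C_MAT = np.diag([0.1, 0.1])
A_MAT = np.array([[0.2, -0.1], [0.1, 0.2]])
B_MAT = np.array([[0.4, -0.3], [0.3, 0.4]])
M_MAT = np.array([[0.3, 0.2], [-0.2, 0.3]])


def simulate(beta, tau, sigma, x0, t_end=2.0, h=0.01):
    """Explicit fractional Euler scheme for the assumed mixed-delay model.

    Uses x(t_n) = x0 + h^beta / Gamma(beta+1) * sum_j b_{n-1-j} f_j with
    b_k = (k+1)^beta - k^beta, and a constant history x(s) = x0 for s <= 0.
    """
    x0 = np.asarray(x0, dtype=float)
    n_steps = int(round(t_end / h))
    d_tau = int(round(tau / h))      # discrete delay in steps
    d_sig = int(round(sigma / h))    # distributed-delay window in steps
    x = np.zeros((n_steps + 1, x0.size))
    x[0] = x0
    f = np.zeros((n_steps, x0.size))
    j = np.arange(n_steps, dtype=float)
    b = (j + 1.0) ** beta - j ** beta
    coef = h ** beta / math.gamma(beta + 1.0)

    def state(k):
        # Constant initial function for non-positive times.
        return x[k] if k >= 0 else x0

    for n in range(n_steps):
        # Rectangle rule for the distributed-delay integral over [t-sigma, t].
        dist = np.zeros_like(x0)
        for k in range(d_sig):
            dist += h * np.tanh(state(n - k))
        f[n] = (-C_MAT @ x[n] + A_MAT @ np.tanh(x[n])
                + B_MAT @ np.tanh(state(n - d_tau)) + M_MAT @ dist)
        weights = b[n::-1]           # b_n, ..., b_0 paired with f_0, ..., f_n
        x[n + 1] = x0 + coef * (weights[:, None] * f[: n + 1]).sum(axis=0)
    return h * np.arange(n_steps + 1), x


if __name__ == "__main__":
    t, x = simulate(0.7, 0.1, 0.05, [0.5, 0.5])
    _, y = simulate(0.7, 0.1, 0.05, [0.45, 0.45])
    err = np.sum(np.abs(x - y), axis=1)   # ||e(t)|| in the norm of assumption (1)
    print("initial error:", err[0], "largest error on [0, 2]:", err.max())
```

Comparing `err` with \(\varepsilon \) over a window \([0,T]\) gives a direct numerical reading of Definition 3.1 for this assumed model; a higher-order predictor-corrector scheme would be a natural refinement.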

5 Conclusions

In this paper, the quasi-uniform stability of a class of fractional-order neural networks with mixed delay is investigated. Unlike the integer-order delayed case, it is very difficult to construct Lyapunov functions for the fractional-order case. Therefore, using only inequality scaling techniques, sufficient conditions ensuring quasi-uniform stability are derived. Finally, the numerical example and the corresponding simulations indicate that the fractional-order neural networks with mixed delay considered in this paper are quasi-uniformly stable.

References

Chua LO (1971) Memristor-the missing circuit element. IEEE Trans Circuit Theory 18:507–519

Strukov DB, Snider GS, Stewart DR, Williams RS (2008) The missing memristor found. Nature 453:80–83

Tour JM, He T (2008) Electronics: the fourth element. Nature 453:42–43

Podlubny I (1999) Fractional differential equations. Academic, New York

Butzer PL, Westphal U (2000) An introduction to fractional calculus. World Scientific, Singapore

Hilfer R (2001) Applications of fractional calculus in physics. World Scientific, Hackensack

Kilbas AA, Srivastava HM, Trujillo JJ (2006) Theory and application of fractional differential equations. Elsevier, Amsterdam

Song L, Xu SY, Yang JY (2010) Dynamical models of happiness with fractional order. Commun Nonlinear Sci Numer Simul 15(3):616–628

Gu RC, Xu Y (2011) Chaos in a fractional-order dynamical model of love and its control. In: Li SM, Wang X, Okazaki Y, Kawabe J, Murofushi T, Li G (eds) Nonlinear mathematics for uncertainty and its applications. AISC, Springer, pp 349–356, 881–886

Arena P, Caponetto R, Fortuna L, Porto D (1998) Bifurcation and chaos in noninteger order cellular neural networks. Int J Bifurc Chaos 8(7):1527–1539

Lundstrom BN, Higgs MH, Spain WJ, Fairhall AL (2008) Fractional differentiation by neocortical pyramidal neurons. Nat Neurosci 11(11):1335–1342

Chon KH, Hoyer D, Armoundas AA (1999) Robust nonlinear autoregressive moving average model parameter estimation using stochastic recurrent artificial neural networks. Ann Biomed Eng 27(4):538–547

Raol JR (1995) Parameter estimation of state space models by recurrent neural networks. IET Control Theory A 142(2):114–118

Beer RD (2006) Parameter space structure of continuous-time recurrent neural networks. Neural Comput 18(12):3009–3051

Huang H, Huang TW, Chen XP (2013) A mode-dependent approach to state estimation of recurrent neural networks with Markovian jumping parameters and mixed delays. Neural Netw 46:50–61

Wu A, Zhang J, Zeng Z (2011) Dynamic behaviors of a class of memristor-based Hopfield networks. Phys Lett A 375:1661–1665

Wen S, Zeng Z, Huang T Dynamic behaviors of memristor based delayed recurrent networks. Neural Comput Appl. doi:10.1007/s00521-012-0998-y

Zhang G, Shen Y (2013) Global exponential periodicity and stability of a class of memristor-based recurrent neural networks with multiple delays. Inform Sci 232:386–396

Wu H, Zhang L (2013) Almost periodic solution for memristive neural networks with time-varying delays. J Appl Math, V, Article ID 716172, p 12. http://dx.doi.org/10.1155/2013/716172

Zhang R, Qi D, Wang Y (2010) Dynamics analysis of fractional order three-dimensional Hopfield neural network. In: International conference on natural computation, pp 3037–3039

Zhou S, Li H, Zhu Z (2008) Chaos control and synchronization in a fractional neuron network system. Chaos Solitons Fractals 36:973–984

Zhu H, Zhou S, Zhang W (2008) Chaos and synchronization of time-delayed fractional neuron network system. In: The 9th international conference for Young computer scientists, pp 2937–2941

Zhou S, Lin X, Zhang L, Li Y (2010) Chaotic synchronization of a fractional neurons network system with two neurons. In: International conference on communications, circuits and systems, pp 773–776

Zhao HY, Wang L, Ma CX (2008) Hopf bifurcation and stability analysis on discrete-time Hopfield neural network with delay. Nonlinear Anal Real World Appl 9:103–113

Zhao HY, Wang L (2006) Stability and bifurcation for discrete-time Cohen–Grossberg neural network. Appl Math Comput 179:787–798

Chen L, Chai Y, Wu R, Ma T, Zhai H (2013) Dynamic analysis of a class of fractional-order neural networks with delay. Neurocomputing 111:190–194

Li CP, Deng WH (2007) Remarks on fractional derivatives. Appl Math Comput 187:777–784

Mitrinovic DS (1970) Analytic inequalities. Springer, New York

Kuczma M (2009) An introduction to the theory of functional equations and inequalities: Cauchy’s equation and Jensen’s inequality. Birkhäuser, Switzerland

Corduneanu C (1971) Principles of differential and integral equations. Allyn and Bacon, USA

Acknowledgments

The authors are extremely grateful to the anonymous reviewers for their careful reading of the manuscript and insightful comments, which helped to enrich the content. We would also like to acknowledge the valuable comments and suggestions from the editors, which greatly contributed to improving the presentation of this paper.

Additional information

This work was jointly supported by the National Natural Science Foundation of China (61573306), the Postgraduate Innovation Project of Hebei province of China (00302-6370019) and High level talent support project of Hebei province of China (C2015003054).

Cite this article

Wu, H., Zhang, X., Xue, S. et al. Quasi-uniform stability of Caputo-type fractional-order neural networks with mixed delay. Int. J. Mach. Learn. & Cyber. 8, 1501–1511 (2017). https://doi.org/10.1007/s13042-016-0523-1
