Abstract

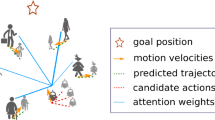

Although deep reinforcement learning has recently achieved some success in robot navigation, unsolved problems remain. In particular, a robot guided only by a distant ultimate goal can easily become trapped in dangerous situations or collide with obstacles in dynamic crowded environments because it lacks a long-term perspective. This paper proposes SG-HDRL, a novel subgoal-guided approach based on two-level hierarchical deep reinforcement learning with spatial-temporal graph attention networks (ST-GANets), for a robot navigating in a dynamic crowded environment with autonomous obstacles, e.g., a crowd. Specifically, the high-level policy uses the designed high-level ST-GANet to model the spatial-temporal relations between the robot and the obstacles from the obstacles’ trajectories, and generates intermediate subgoals from a longer-term perspective at a higher temporal scale. The subgoals provide a favorable, collision-free direction that lets the robot avoid danger and collisions while approaching the ultimate goal. The low-level policy similarly uses the designed low-level ST-GANet to implicitly predict the obstacles’ motions, and takes the subgoals as short-term guidance, through an intrinsic reward, to generate primitive actions for the robot. Simulation results demonstrate that SG-HDRL with ST-GANets outperforms state-of-the-art baselines. Furthermore, SG-HDRL is deployed on an experimental platform based on omnidirectional cars, and the experimental results validate its effectiveness and practicality.
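
The two-level control flow described above can be illustrated with a minimal sketch. It is not the authors' implementation: the learned policies and the ST-GANets are replaced by simple hand-written stand-ins, obstacles are omitted entirely, and every name and constant (`SUBGOAL_INTERVAL`, `SUBGOAL_RANGE`, `STEP`, the distance-reduction form of the intrinsic reward) is an assumption made only for illustration. It shows just the structure: a subgoal is refreshed on a slower time scale, and the low level is rewarded for progress toward that subgoal.

```python
import math

# All constants below are assumptions for illustration; the abstract gives no values.
SUBGOAL_INTERVAL = 5   # low-level steps between two high-level decisions
SUBGOAL_RANGE = 1.0    # maximum distance of a subgoal from the robot
STEP = 0.25            # maximum displacement of one primitive action


def propose_subgoal(robot, goal):
    """Stand-in for the high-level policy: a point at most SUBGOAL_RANGE toward the goal."""
    dx, dy = goal[0] - robot[0], goal[1] - robot[1]
    dist = math.hypot(dx, dy)
    if dist <= SUBGOAL_RANGE:
        return goal
    scale = SUBGOAL_RANGE / dist
    return (robot[0] + dx * scale, robot[1] + dy * scale)


def primitive_action(robot, subgoal):
    """Stand-in for the low-level policy: a bounded step toward the current subgoal."""
    dx, dy = subgoal[0] - robot[0], subgoal[1] - robot[1]
    dist = math.hypot(dx, dy)
    if dist <= STEP:
        return (dx, dy)
    return (dx * STEP / dist, dy * STEP / dist)


def intrinsic_reward(prev, curr, subgoal):
    """Assumed reward form: reduction in distance to the subgoal over one step."""
    return math.dist(prev, subgoal) - math.dist(curr, subgoal)


def navigate(start, goal, max_steps=200, tol=1e-6):
    """Run the two-level loop; return final position, steps taken, summed intrinsic reward."""
    robot, subgoal, total_intrinsic = start, None, 0.0
    for t in range(max_steps):
        # High level acts on the slower time scale.
        if t % SUBGOAL_INTERVAL == 0:
            subgoal = propose_subgoal(robot, goal)
        # Low level acts at every step, guided by the current subgoal.
        ax, ay = primitive_action(robot, subgoal)
        nxt = (robot[0] + ax, robot[1] + ay)
        total_intrinsic += intrinsic_reward(robot, nxt, subgoal)
        robot = nxt
        if math.dist(robot, goal) <= tol:
            return robot, t + 1, total_intrinsic
    return robot, max_steps, total_intrinsic


if __name__ == "__main__":
    position, steps, reward = navigate((0.0, 0.0), (3.0, 0.0))
    print(position, steps, reward)
```

In a learned version, `propose_subgoal` and `primitive_action` would each be a trained policy conditioned on obstacle trajectories, and the intrinsic reward would be one term of the low-level training signal rather than a quantity that is merely accumulated.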

Author information

Additional information

Tianle Zhang received his B.Eng. degree in mechanical engineering and automation from University of Science and Technology Beijing, Beijing, China, in 2018. He is currently pursuing a Ph.D. degree in control theory and control engineering with the Institute of Automation, Chinese Academy of Sciences, Beijing, China. His current research interests include planning and decision-making in mobile robots, multiagent deep reinforcement learning (MDRL), and swarm intelligence.

Zhen Liu received his B.Eng. degree in automation from the Nanjing University of Aeronautics and Astronautics, Nanjing, China, in 2010, and a Ph.D. degree in control theory and control engineering from the Institute of Automation, Chinese Academy of Sciences, Beijing, China, in 2015. He is currently an Associate Professor with the Integrated Information System Research Center, Institute of Automation, Chinese Academy of Sciences. His research interests include robust adaptive control, fuzzy control, and applications of aerospace systems.

Zhiqiang Pu received his B.Eng. degree in automation from Wuhan University, Wuhan, China, in 2009, and a Ph.D. degree in control theory and control engineering from the Institute of Automation, Chinese Academy of Sciences, Beijing, China, in 2014. He is currently a Professor in the Integrated Information System Research Center, Institute of Automation, Chinese Academy of Sciences. He has been supported by the Talent Program of Youth Innovation Promotion Association CAS since 2017. His research interests include decision intelligence, collective intelligence, and applications of unmanned autonomous systems.

Jianqiang Yi received his B.Eng. degree in mechanical engineering from Beijing Institute of Technology, Beijing, China, in 1985, and his M.Eng. and Ph.D. degrees in automation from the Kyushu Institute of Technology, Kitakyushu, Japan, in 1989 and 1992, respectively. From 1992 to 1994, he worked as a Research Fellow with the Computer Software Development Company, Tokyo, Japan. From 1994 to 2001, he was with MYCOM, Inc., Kyoto, Japan. Since 2001, he has been a Full Professor with the Institute of Automation, Chinese Academy of Sciences, Beijing, China. He has authored or co-authored over 100 international journal papers and 270 international conference papers. His research interests mainly include intelligent control, adaptive control, robot systems, and collective intelligence.

Yanyan Liang received his B.S. degree from the Chongqing University of Posts and Telecommunications, Chongqing, China, in 2004, and his M.S. and Ph.D. degrees from the Macau University of Science and Technology (MUST), Taipa, Macau, in 2006 and 2009, respectively. He is currently an Associate Professor with MUST. He has published more than 40 papers related to pattern recognition, image processing, and computer vision in IEEE Transactions and international conferences, including IEEE Transactions on Image Processing, IEEE Transactions on Cybernetics, IEEE Transactions on Multimedia, IEEE Transactions on Information Forensics and Security, IJCAI, CVPR, ICPR, and FG. He is also working on smart city applications with computer vision and big data. His research interests include computer vision, image processing, and machine learning.

Du Zhang received his M.S. degree from Nanjing University, Nanjing, China, in 1982, and a Ph.D. degree from the University of Illinois, USA, in 1987. He is currently a Chair Professor with the Faculty of Innovation Engineering, Macau University of Science and Technology, Macau. His research interests cover machine learning (STEP perpetual learning), knowledge-based systems, big data analytics, software engineering, and high-level Petri net applications.

Publisher’s Note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

This work was supported in part by the National Key Research and Development Program of China under Grant 2018AAA0101005, in part by the Strategic Priority Research Program of Chinese Academy of Sciences under Grant No. XDA27030204, in part by the External Cooperation Key Project of Chinese Academy of Sciences (No. 173211KYSB20200002), in part by the Foundation under Grant 2019-JCJQ-ZD-049, and in part by the National Natural Science Foundation of China (62073323).

Cite this article

Zhang, T., Liu, Z., Pu, Z. et al. Robot Subgoal-guided Navigation in Dynamic Crowded Environments with Hierarchical Deep Reinforcement Learning. Int. J. Control Autom. Syst. 21, 2350–2362 (2023). https://doi.org/10.1007/s12555-022-0171-z

DOI: https://doi.org/10.1007/s12555-022-0171-z