Abstract

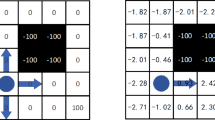

Like control systems, reinforcement learning can capture notions of optimal behavior from natural interaction experience. In reinforcement learning, the temporal difference error of the generated experience measures how well the learner responds to the system. In particular, the sequential difference of the accumulated temporal difference error can indicate learning performance. In this paper, we exploit the error-correcting property of closed-loop systems by mapping a representation error to the step-size component. The proposed cyclic step-size can better control how new estimates are iteratively blended together over time; the new estimates guide the action selection process, which in turn influences the value distribution. To guide more promising action decisions, we propose an ensemble action selector that incorporates the idea of the ensemble wisdom of the weak. Experimental results on a gridworld mobile robot navigation task demonstrate the validity, fast learning capacity, and easy plug-in implementation of the derived algorithm, which increases its applicability to real-life problems.
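To make the ideas in the abstract concrete, the following is a minimal Python sketch of tabular Q-learning on a small gridworld in which the step size is recomputed after every episode from the change in accumulated absolute temporal difference error, and actions are chosen by a vote among three simple selectors. It is an illustration under stated assumptions, not the algorithm derived in the paper: the mapping in `step_size_from_error`, the choice of voters in `ensemble_action`, the reward values, and all function and parameter names are hypothetical.

```python
import math
import random
from collections import Counter


def td_error(q, s, a, r, s_next, gamma, done):
    """One-step Q-learning temporal difference error."""
    target = r if done else r + gamma * max(q[s_next])
    return target - q[s][a]


def step_size_from_error(prev_acc, curr_acc, lo=0.05, hi=0.9):
    """Hypothetical mapping (not the paper's formula): the relative change in
    accumulated |TD error| between two episodes is scaled into [lo, hi]."""
    change = abs(curr_acc - prev_acc)
    scale = max(abs(prev_acc), abs(curr_acc), 1e-12)
    return lo + (hi - lo) * min(1.0, change / scale)


def ensemble_action(q_row, rng, epsilon=0.1, temperature=0.5):
    """Majority vote among greedy, epsilon-greedy and softmax selectors
    (an assumed ensemble; ties fall back to the softmax vote)."""
    n = len(q_row)
    greedy = max(range(n), key=lambda a: q_row[a])
    eps = rng.randrange(n) if rng.random() < epsilon else greedy
    top = max(q_row)
    weights = [math.exp((v - top) / temperature) for v in q_row]
    soft = rng.choices(range(n), weights=weights)[0]
    best, count = Counter([greedy, eps, soft]).most_common(1)[0]
    return best if count > 1 else soft


def train(episodes=200, size=4, gamma=0.95, seed=0):
    """Run Q-learning on a size x size grid; return the Q-table and the
    step size used in each episode."""
    rng = random.Random(seed)
    moves = [(-1, 0), (1, 0), (0, -1), (0, 1)]  # up, down, left, right
    goal = (size - 1, size - 1)
    q = {(r, c): [0.0] * 4 for r in range(size) for c in range(size)}
    alpha, prev_acc, alphas = 0.5, 0.0, []
    for _ in range(episodes):
        s, acc = (0, 0), 0.0
        alphas.append(alpha)
        for _ in range(200):  # cap on steps per episode
            a = ensemble_action(q[s], rng)
            row = min(max(s[0] + moves[a][0], 0), size - 1)
            col = min(max(s[1] + moves[a][1], 0), size - 1)
            s_next = (row, col)
            done = s_next == goal
            reward = 1.0 if done else -0.01  # assumed reward scheme
            delta = td_error(q, s, a, reward, s_next, gamma, done)
            q[s][a] += alpha * delta  # blend the new estimate into the old one
            acc += abs(delta)
            s = s_next
            if done:
                break
        # Feed the episode-to-episode error change back into the step size.
        alpha = step_size_from_error(prev_acc, acc)
        prev_acc = acc
    return q, alphas
```

Calling `train()` returns the learned Q-table together with the per-episode step sizes, which makes it easy to inspect how the feedback from the accumulated error shapes the learning rate over time. Under this assumed mapping the step size shrinks toward `lo` as the accumulated error stabilizes; other mappings are equally plausible.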

Additional information

Recommended by Associate Editor Huaping Liu under the direction of Editor Fuchun Sun. This work is supported by the National Natural Science Foundation of China under Grant No. 61273327.

Rongkuan Tang received his B.S. degree in Automation from Changshu Institute of Technology in 2013. He received his M.S. degree in Control Science and Engineering from Tongji University in 2016. His research interests include reinforcement learning and its application to robots.

Hongliang Yuan received his B.S. degree in Automatic Control from University of Science and Technology of China in 2002. He received his M.S. and Ph.D. degrees in Electrical Engineering from University of Central Florida, USA, in 2006 and 2009, respectively. He is currently an associate professor at the School of Electronics and Information Engineering, Tongji University, P.R. China. His research interests include robotics, intelligent control, optimization, and vehicle dynamics and control.

Cite this article

Tang, R., Yuan, H. Cyclic error correction based Q-learning for mobile robots navigation. Int. J. Control Autom. Syst. 15, 1790–1798 (2017). https://doi.org/10.1007/s12555-015-0392-5

DOI: https://doi.org/10.1007/s12555-015-0392-5