Abstract

A competitive neural network model was proposed to describe the dynamics of cortical maps in which two memories coexist: long-term and short-term. In this paper, we investigate, for the first time, the existence and the exponential stability of the pseudo-almost periodic solution of a system of equations modeling the dynamics of neutral-type competitive neural networks with mixed delays on time-space scales. The mixed delays include time-varying delays and continuously distributed ones. Based on the contraction principle and the theory of calculus on time-space scales, some new criteria ensuring the convergence of all solutions of the networks toward the unique pseudo-almost periodic solution are derived by using an ad hoc Lyapunov–Krasovskii functional. Finally, a numerical example with graphical illustrations is given to confirm our main results.


1 Introduction

The human brain is made up of a large number of cells called neurons and their synapses. An artificial neural network is an information processing system that has certain characteristics in common with biological neural networks. Recently, many researchers have devoted their attention to the stability analysis, periodic oscillations and pseudo-almost periodic solutions of different classes of neutral-type neural networks (see [1, 3, 15, 16]) due to their wide range of applications, such as associative memory, pattern recognition, signal processing, image processing, fault diagnosis, automatic control engineering, combinatorial optimization, and so on (see [7, 10, 12, 14]). Furthermore, over the last twenty years, Anke Meyer-Baese et al. have proposed the so-called competitive neural networks with different time scales [2], which are used to model the dynamics of cortical cognitive maps with unsupervised synaptic modifications. Unlike the traditional neural networks with first-order interactions, the target model considered in this paper has two different state variables: the short-term memory (STM) variable, describing the fast neural activity, and the long-term memory (LTM) variable, describing the slow unsupervised synaptic modifications. In addition, it has been reported that if the parameters and time delays are appropriately chosen, competitive neural networks with delays in the leakage term can exhibit complicated behaviors, even strange chaotic attractors (see [13]). Based on the aforementioned arguments, the study of delayed competitive neural networks and their analogous equations has attracted worldwide interest (see [3, 5, 15]). In fact, it is natural and important that systems contain some information about the derivative of the past state in order to further describe and model the dynamics of such complex neural reactions. Many researchers have studied the dynamics of various classes of neutral-type neural networks (see [1, 3, 15]).
In the real world, mixed time-varying delays and leakage delays should be taken into account when modeling realistic neural networks.

Recently, the concept of pseudo-almost periodicity on time-space scales has been introduced in [8]; it generalizes almost periodicity on time-space scales, and the authors gave some properties of the space of pseudo-almost periodic functions on time-space scales. Before moving on, let us first explain this notion: we say that an ld-continuous function f is pseudo-almost periodic on time-space scales if

$$f=g+\phi ,$$

where g is almost periodic on time-space scales and \(\phi \) is an ergodic function, in the sense that, for \(r\in {\varPi }\) with \(r>0\),

(where \(t_{0}\in \mathbb {T}\) and \({\varPi }:=\left\{ \tau \in \mathbb {R}{:}\, t\pm \tau \in \mathbb {T}, \forall t\in \mathbb {T}\right\} \ne \{0\}\)). It is known that the existence and stability of almost periodic solutions play an important role in characterizing the behavior of a dynamical system. To the best of our knowledge, there are few works on the existence of almost periodic and periodic solutions for neutral-type competitive neural networks (CNNs) [5, 15], especially for discrete-time CNNs. Besides, little has been done on pseudo-almost periodic solutions on time-space scales for competitive neural networks with mixed time-varying and leakage delays. Therefore, this is a challenging and important problem in both theory and applications.

On the other hand, continuous-time and discrete-time neural networks play equally important roles in various implementations and applications. However, it is troublesome to study the dynamical properties of continuous-time and discrete-time systems separately. We should mention that, in many references, the networks are described either by difference equations or by differential equations, that is, the dynamical systems are defined on \(\mathbb {Z}\) or \(\mathbb {R}\). In his PhD thesis in 1988, Stefan Hilger proposed the theory of dynamic equations on time-space scales, which has been extensively studied and developed in recent years. In addition, pseudo-almost periodicity is more general than periodicity. Moreover, pseudo-almost periodic solutions on time scales were introduced in [8]. This theory represents a powerful tool for applications in economics, biological models, quantum physics, neural networks and Lotka–Volterra models, among others.

From the time-scale point of view, it is known that \(\mathbb {R}\) and \(\mathbb {Z}\) are classical periodic time scales that can be unified by the following periodic time scale:

We can obtain \({\varPi }_{0}=\{n(a+b), n\in \mathbb {Z}\}\) as the periodicity set for the time scale (1). Obviously, if \(a=b=0\), then \(\mathbb {T}=\mathbb {R}\); if \(a=0\), \(b=1\), then \(\mathbb {T}=\mathbb {Z}\).

The remainder of this paper is organized as follows. In Sect. 2, we present the model of CNNs. In Sect. 3, we introduce the necessary notations, definitions and fundamental properties of the space \(PAP(\mathbb {T},\mathbb {R}^{n})\) that will be used in the paper. In Sect. 4, some sufficient conditions are derived ensuring the existence of the pseudo-almost periodic solution on time-space scales. Section 5 is devoted to the exponential stability of the pseudo-almost periodic solution on time-space scales of the CNN model, and to the convergence of all solutions to its unique pseudo-almost periodic solution. Finally, one illustrative numerical example is given.

2 Model Description

In this paper, we consider a new class of n-neuron neutral-type competitive neural networks with mixed time-varying delays and leakage delays on time-space scales, defined as follows:

where \(j=1,\ldots ,n\); \(\mathbb {T}\) is an almost periodic time scale; \(x_{i}(t)\) is the neuron current activity level (\(i=1,\ldots ,n\)); \(\alpha _{i}(t), c_{i}(t)\) are the time variables of neuron i (where \(i=1,\ldots ,n\)); \(f_{j}(x_{j}(t))\) is the output of the neurons; \(m_{ij}(t)\) is the synaptic efficiency; \(y_{i}\) is the constant external stimulus; \(D_{ij}(t)\), \(D_{ij}^{\tau }(t)\), \(\overline{D}_{ij}(t)\), \(\widetilde{D}_{ij}(t)\) represent the connection weights and the synaptic weights of delayed feedback between the ith neuron and the jth neuron, respectively; \(B_{i}(t)\) is the strength of the external stimulus; \(E_{i}(t)\) denotes the disposable scale; \(I_{i}(t)\), \(J_{i}(t)\) denote the external inputs on the ith neuron at time t; \(\eta _{i}(t)\) and \(\varsigma _{i}(t)\) are leakage delays and satisfy \(t-\eta _{i}(t)\in \mathbb {T}\), \(t-\varsigma _{i}(t)\in \mathbb {T}\) for \(t\in \mathbb {T}\); \(\tau _{ij}(t)\), \(\sigma _{ij}(t)\) and \(\xi _{ij}(t)\) are transmission delays and satisfy \(t-\tau _{ij}(t)\in \mathbb {T}\), \(t-\sigma _{ij}(t)\in \mathbb {T}\) and \(t-\xi _{ij}(t)\in \mathbb {T}\) for \(t\in \mathbb {T}\).

The neural network is modeled by a system of deterministic equations with a time-dependent input vector rather than a source emitting input signals with a prescribed probability distribution. Setting \(S_{i}(t)=\sum \nolimits _{j=1}^{n}m_{ij}(t)y_{j}=y^{T}m_{i}(t)\), where \(y=(y_{1},y_{2},\ldots ,y_{n})^{T}\) and \(m_{i}=(m_{i1},m_{i2},\ldots ,m_{in})^{T}\), assuming without loss of generality that the input stimulus y is normalized with unit magnitude \(|y|^{2}=1\), and summing up the LTM equations over j, the above networks are simplified and we obtain a state-space representation of the LTM and STM equations of the networks:

For convenience, we introduce the following notations:

We denote \([a,b]_{\mathbb {T}}=\{t{:}\, t\in [a,b]\cap \mathbb {T}\}\). The initial conditions associated with system (2) are of the form:

where \(\varphi _{i}(.)\) and \(\phi _{i}(.)\) are real-valued bounded \(\nabla \)-differentiable functions defined on \([-\theta ,0]_{\mathbb {T}}\), \(\theta =\max \{\eta ,\tau ,\sigma ,\xi ,\varsigma \}\), \(\eta =\max \limits _{1\le i\le n}\eta _{i}^{+}\), \(\tau =\max \limits _{1\le i,j\le n}\tau _{ij}^{+}\), \(\sigma =\max \limits _{1\le i,j\le n}\sigma _{ij}^{+}\), \(\xi =\max \limits _{1\le i,j\le n}\xi _{ij}^{+}\) and \(\varsigma =\max \limits _{1\le i\le n}\varsigma _{i}^{+}\), \(i,j=1,\ldots ,n\).

Remark 1

This is the first time that the pseudo-almost periodic solutions of system (2) are studied for both the continuous and the discrete cases. Besides, although paper [15] deals with \(\nabla \)-dynamic systems on time scales, it studies the almost periodic problem, so its results cannot be applied to (2).

Remark 2

In [13], the authors only consider a constant delay and a constant leakage term:

If the leakage delays are constant and the coefficients \(\overline{D}_{ij}(t)=\widetilde{D}_{ij}(t)\equiv 0\), it is clear that the model in [13] (Liu et al. 2014) is a special case of the system investigated in this work. Hence, model (2) is more general than the model in [13].

3 Preliminaries and Function Spaces

In this section, we introduce some basic definitions on time scales that will be used in the proofs of our results.

A time scale \(\mathbb {T}\) is a nonempty closed subset of \(\mathbb {R}\). The forward and backward jump operators \(\sigma ,\rho :\mathbb {T}\longrightarrow \mathbb {T}\) are defined by \(\sigma (t)=\inf \{s\in \mathbb {T}{:}s>t\}\) and \(\rho (t)=\sup \{s\in \mathbb {T}{:}s<t\}\) for all \(t\in \mathbb {T}\). A point \(t\in \mathbb {T}\) is called left-dense if \(t>\inf \mathbb {T}\) and \(\rho (t)=t\), left-scattered if \(\rho (t)<t\), right-dense if \(t<\sup \mathbb {T}\) and \(\sigma (t)=t\), and right-scattered if \(\sigma (t)>t\). If \(\mathbb {T}\) has a right-scattered minimum m, define \(\mathbb {T}_{k}{:}=\mathbb {T}\backslash \{m\}\); otherwise, set \(\mathbb {T}_{k}=\mathbb {T}\). The notations \([a,b]_{\mathbb {T}}\), \([a,b)_{\mathbb {T}}\) and so on denote time scale intervals, for instance

$$[a,b]_{\mathbb {T}}=\{t\in \mathbb {T}{:}\, a\le t\le b\},$$

where \(a,b\in \mathbb {T}\) with \(a<\rho (b)\).

Finally, the graininess function \(\nu {:}\mathbb {T}\longrightarrow [0,+\infty )\) is defined by \(\nu (t)=t-\rho (t)\), for all \(t\in \mathbb {T}\).
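To make the jump operators and the graininess concrete, the following minimal sketch evaluates \(\rho \), \(\sigma \) and \(\nu \) on a finite sample of a time scale stored as a sorted list. The function names and the sample points are illustrative assumptions, and the conventions \(\rho (\min \mathbb {T})=\min \mathbb {T}\) and \(\sigma (\max \mathbb {T})=\max \mathbb {T}\) are assumed for the endpoints.

```python
from bisect import bisect_left, bisect_right

def rho(ts, t):
    """Backward jump sup{s in ts : s < t}; assumed convention: rho(min ts) = min ts."""
    i = bisect_left(ts, t)          # number of sample points strictly below t
    return ts[i - 1] if i > 0 else t

def sigma(ts, t):
    """Forward jump inf{s in ts : s > t}; assumed convention: sigma(max ts) = max ts."""
    i = bisect_right(ts, t)         # index of the first sample point strictly above t
    return ts[i] if i < len(ts) else t

def nu(ts, t):
    """Backward graininess nu(t) = t - rho(t)."""
    return t - rho(ts, t)

def is_left_scattered(ts, t):
    return rho(ts, t) < t

# Hypothetical finite sample of a time scale (sorted, no repeated points).
ts = [0.0, 1.0, 2.0, 2.5, 3.0, 5.0]
for t in ts:
    print(t, rho(ts, t), sigma(ts, t), nu(ts, t), is_left_scattered(ts, t))
```

On such a finite sample every point except the minimum is left-scattered; dense points only appear when the time scale contains intervals, which a finite list cannot represent.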

Definition 1

([4]). The function \(f{:}\mathbb {T}\longrightarrow \mathbb {R}\) is called ld-continuous provided that it is continuous at each left-dense point and has a right-sided limit at each right-dense point; we write \(f\in C_{ld}(\mathbb {T})=C_{ld}(\mathbb {T},\mathbb {R})\). Let \(t\in \mathbb {T}_{k}\). The nabla derivative of f at t, denoted \(f^{\nabla }(t)\), is the number (provided it exists) with the property that, given any \(\epsilon >0\), there is a neighborhood U of t (i.e., \(U=(t-\delta ,t+\delta )\cap \mathbb {T}\) for some \(\delta >0\)) such that

$$\left| f(\rho (t))-f(s)-f^{\nabla }(t)(\rho (t)-s)\right| \le \epsilon \left| \rho (t)-s\right| \quad \text {for all } s\in U.$$

A function F is called an antiderivative of \(f{:}\mathbb {T}\longrightarrow \mathbb {R}\) provided \(F^{\nabla }(t)=f(t)\) for each \(t\in \mathbb {T}_{k}\). In this case, the nabla integral is defined by

$$\int _{a}^{t}f(s)\nabla s=F(t)-F(a),\quad a,t\in \mathbb {T}.$$

A function \(p{:}\mathbb {T}\longrightarrow \mathbb {R}\) is called \(\nu \)-regressive if \(1-\nu (t)p(t)\ne 0\) for all \(t\in \mathbb {T}_{k}\). The set of all \(\nu \)-regressive and left-dense continuous functions \(p{:}\mathbb {T}\longrightarrow \mathbb {R}\) will be denoted by \(\mathcal {R}_{\nu }= \mathcal {R}_{\nu }(\mathbb {T})=\mathcal {R}_{\nu }(\mathbb {T},\mathbb {R})\). We define the set

$$\mathcal {R}_{\nu }^{+}=\left\{ p\in \mathcal {R}_{\nu }{:}\, 1-\nu (t)p(t)>0,\ \forall t\in \mathbb {T}\right\} .$$

If \(p\in \mathcal {R}_{\nu }\) then we define the nabla exponential function by

where the \(\nu \)-cylinder transformation is given by

Definition 2

([4]). Let \(p,q:\mathbb {T}\longrightarrow \mathbb {R}\) be two \(\nu \)-regressive functions; define

Lemma 1

([4]). Assume that \(p,q:\mathbb {T}\longrightarrow \mathbb {R}\) are two \(\nu \)-regressive functions, then

- (i) \(\hat{e}_{0}(t,s)\equiv 1\) and \(\hat{e}_{p}(t,t)\equiv 1\);

- (ii) \(\hat{e}_{p}(\rho (t),s)=(1-\nu (t)p(t))\hat{e}_{p}(t,s)\);

- (iii) \(\hat{e}_{p}(t,s)=\frac{1}{\hat{e}_{p}(s,t)}=\hat{e}_{\ominus _{\nu }p}(s,t)\);

- (iv) \(\hat{e}_{p}(t,s)\hat{e}_{p}(s,r)=\hat{e}_{p}(t,r)\);

- (v) \((\hat{e}_{p}(t,s))^{\nabla }=p(t)\hat{e}_{p}(t,s)\).
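As a sanity check of Lemma 1, the sketch below evaluates the nabla exponential on the discrete time scale \(\mathbb {T}=h\mathbb {Z}\), where \(\nu (t)=h\). On this time scale, properties (i) and (ii) force \(\hat{e}_{p}(t,s)\) to be a finite product of the factors \((1-hp(\tau ))^{-1}\) over the grid points \(\tau \in (s,t]\). The function name `nabla_exp` and the grid-index convention (points \(t_{k}=kh\)) are assumptions made for illustration only.

```python
def nabla_exp(p, k, s, h=1.0):
    """Nabla exponential e_p(t_k, t_s) on T = hZ, with grid points t_j = j*h.

    For k >= s it is the product of 1/(1 - h*p(t_j)) over j = s+1, ..., k,
    which follows from Lemma 1 (i)-(ii); for k < s, Lemma 1 (iii) is used.
    """
    if k < s:
        return 1.0 / nabla_exp(p, s, k, h)
    value = 1.0
    for j in range(s + 1, k + 1):
        factor = 1.0 - h * p(j * h)
        if factor == 0.0:
            raise ValueError("p is not nu-regressive at t = %g" % (j * h))
        value /= factor
    return value

# Example with p = -alpha (alpha = 0.5), the kind of exponent used for decay terms.
p = lambda t: -0.5

# (ii): e_p(rho(t), s) = (1 - nu(t) p(t)) e_p(t, s), checked at t = 3, s = 0, h = 1.
lhs = nabla_exp(p, 2, 0)
rhs = (1.0 - 1.0 * p(3.0)) * nabla_exp(p, 3, 0)
print(lhs, rhs)

# (v): the nabla derivative on hZ is a backward difference quotient.
h = 1.0
derivative = (nabla_exp(p, 3, 0, h) - nabla_exp(p, 2, 0, h)) / h
print(derivative, p(3.0) * nabla_exp(p, 3, 0, h))
```

With \(p=-\alpha \) and \(\alpha >0\), each factor equals \(1/(1+h\alpha )<1\), so \(\hat{e}_{-\alpha }(t,s)\) decays geometrically in \(t\), which is the discrete counterpart of \(e^{-\alpha (t-s)}\).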

Lemma 2

([6]). For each \(t_{0}\in \mathbb {T}_{k}\), the single-point set \(\{t_{0}\}\) is \(\nabla \)-measurable and its \(\nabla \)-measure is given by \(\nu _{\nabla }(\{t_{0}\})=t_{0}-\rho (t_{0})=\nu (t_{0})\).

For more details on time scales and \(\nabla \)-measurability, the reader is referred to [4, 6].

Lemma 3

([9]) Let f, g be nabla differentiable functions on \(\mathbb {T}\), then:

- (i) \((\lambda _{1}f+\lambda _{2}g)^{\nabla }=\lambda _{1}f^{\nabla }+\lambda _{2}g^{\nabla }\), for any constants \(\lambda _{1},\lambda _{2}\);

- (ii) \((fg)^{\nabla }(t)=f^{\nabla }(t)g(t)+f(\rho (t))g^{\nabla }(t)=f(t)g^{\nabla }(t)+f^{\nabla }(t)g(\rho (t))\);

- (iii) If f and \(f^{\nabla }\) are continuous, then \(\left( \int _{a}^{t}f(t,s)\nabla s\right) ^{\nabla }=f(\rho (t),t)+\int _{a}^{t}f^{\nabla }(t,s)\nabla s\).
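The product rule in Lemma 3 (ii) can be checked directly on \(\mathbb {T}=\mathbb {Z}\), where \(\rho (t)=t-1\) and the nabla derivative reduces to the backward difference \(f^{\nabla }(t)=f(t)-f(t-1)\). The following sketch verifies both forms of the rule with integer-valued test functions chosen only for illustration.

```python
def nabla(f):
    """Nabla derivative on T = Z: backward difference f(t) - f(t - 1)."""
    return lambda t: f(t) - f(t - 1)

# Illustrative integer-valued functions, so that equality can be tested exactly.
f = lambda t: t * t
g = lambda t: 2 ** t
fg = lambda t: f(t) * g(t)

for t in range(1, 8):
    lhs = nabla(fg)(t)
    form_1 = nabla(f)(t) * g(t) + f(t - 1) * nabla(g)(t)   # f^nabla g + f(rho) g^nabla
    form_2 = f(t) * nabla(g)(t) + nabla(f)(t) * g(t - 1)   # f g^nabla + f^nabla g(rho)
    print(t, lhs, form_1, form_2)
```

The shift \(\rho (t)\) in one of the factors is what distinguishes the rule from the classical Leibniz rule; on \(\mathbb {T}=\mathbb {R}\), where \(\rho (t)=t\), the two coincide.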

Lemma 4

([9]) Assume \(p\in \mathcal {R}_{\nu }\) and \(t_{0}\in \mathbb {T}\). If \(1-\nu (t)p(t)>0\) for \(t\in \mathbb {T}\), then \(\hat{e}_{p}(t,t_{0})>0\) for all \(t\in \mathbb {T}\).

Lemma 5

Suppose that f is an ld-continuous function and c is a positive ld-continuous function such that \(c\in \mathcal {R}_{\nu }^{+}\). Let

where \(t_{0}\in \mathbb {T}\), then

Proof

Definition 3

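([9]) A time scale \(\mathbb {T}\) is called an almost periodic time scale if

$${\varPi }:=\left\{ \tau \in \mathbb {R}{:}\, t\pm \tau \in \mathbb {T}, \forall t\in \mathbb {T}\right\} \ne \{0\}.$$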

Definition 4

([9]) Let \(\mathbb {T}\) be an almost periodic time scale. A function \(f\in C_{ld}(\mathbb {T},\mathbb {R}^{n})\) is called almost periodic on \(\mathbb {T}\) if, for any \(\epsilon >0\), the set

is relatively dense; that is, for any given \(\epsilon >0\), there exists a constant \(l(\epsilon )>0\) such that each interval of length \(l(\epsilon )\) contains at least one \(\tau =\tau (\epsilon )\in E(\epsilon ,f)\) such that

The set \(E(\epsilon ,f)\) is called the \(\epsilon \)-translation set of f(t), \(\tau \) is called an \(\epsilon \)-translation number of f(t) and \(l(\epsilon )\) is called the inclusion length of \(E(\epsilon ,f)\).

Let \(AP(\mathbb {T})=\left\{ f\in C_{ld}(\mathbb {T},\mathbb {R}){:} f \,\ \text {is almost periodic}\right\} \) and \(BC(\mathbb {T},\mathbb {R}^{n})\) denote the space of all bounded continuous functions from \(\mathbb {T}\) to \(\mathbb {R}^{n}\). Define the class of functions \(PAP_{0}(\mathbb {T})\) as follows:

Definition 5

([9]) A function \(f\in C_{ld}(\mathbb {T},\mathbb {R}^{n})\) is called pseudo almost periodic if \(f=g+\phi \), where \(g\in AP(\mathbb {T})\) and \(\phi \in PAP_{0}(\mathbb {T})\). Denote by \(PAP(\mathbb {T})\), the set of pseudo-almost periodic functions.
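The decomposition \(f=g+\phi \) can be illustrated numerically on \(\mathbb {T}=\mathbb {Z}\), where the nabla integral over \((a,b]\) is a finite sum. The sketch below assumes that membership in \(PAP_{0}(\mathbb {T})\) means that the mean of \(|\phi |\) over \([-r,r]_{\mathbb {T}}\) tends to zero as \(r\rightarrow \infty \) (the usual ergodicity condition); the functions `g`, `phi` and `ergodic_mean` are hypothetical examples, not objects from the model.

```python
import math

def g(t):
    """Almost periodic part: a sum of two sinusoids with incommensurate frequencies."""
    return math.sin(t) + math.sin(math.sqrt(2.0) * t)

def phi(t):
    """Candidate ergodic perturbation: bounded and decaying at infinity."""
    return 1.0 / (1.0 + t * t)

def f(t):
    return g(t) + phi(t)

def ergodic_mean(func, r):
    """(1 / 2r) * nabla integral of |func| over (-r, r] on T = Z, i.e. a finite sum."""
    total = sum(abs(func(k)) for k in range(-r + 1, r + 1))
    return total / (2.0 * r)

for r in (1, 10, 100, 1000):
    # The mean of |phi| shrinks toward 0, while the mean of |g| does not.
    print(r, ergodic_mean(phi, r), ergodic_mean(g, r))
```

The perturbation changes f on every bounded window, yet it is invisible in the long-run average, which is why the almost periodic component g still governs the asymptotic behavior.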

By Definition 5, one can easily show that

Lemma 6

([9]) If \(f,g\in PAP(\mathbb {T})\), then \(f+g, fg\in PAP(\mathbb {T})\); if \(f\in PAP(\mathbb {T})\), \(g\in AP(\mathbb {T})\), then \(fg\in PAP(\mathbb {T})\).

Lemma 7

([9]) If \(f\in C_{ld}(\mathbb {R},\mathbb {R})\) satisfies the Lipschitz condition, \(\varphi \in PAP(\mathbb {T})\), \(\theta \in C_{ld}^{1}(\mathbb {T},{\varPi })\) and \(\eta :=\inf \limits _{t\in \mathbb {T}}\left( 1-\theta ^{\nabla }(t)\right) >0\), then \(f(\varphi (t-\theta (t)))\in PAP(\mathbb {T})\).

4 The Existence of Pseudo-Almost Periodic Solutions on Time-Space Scales

In this section, we present new sufficient conditions for the existence and uniqueness of the pseudo-almost periodic solution of (2). The proof is based on Banach's fixed point theorem and the theory of calculus on time-space scales.

Let

with the norm \( \Vert \psi \Vert _{\mathbb {B}}=\sup \limits _{t\in \mathbb {T}}\max \limits _{i=1,\ldots ,n}\{|\varphi _{i}(t)|,|\phi _{i}(t)|,|\varphi ^{\nabla }_{i}(t)|,|\phi ^{\nabla }_{i}(t)|\}, \) then \((\mathbb {B},\Vert \psi \Vert _{\mathbb {B}})\) is a Banach space.

For every \(\psi =(\varphi _{1},\ldots \varphi _{n},\phi _{1},\ldots ,\phi _{n})\in \mathbb {B}\), we consider the following system

where, for \(i=1,\ldots ,n\)

Let \(y_{\psi }(t)=\left( x_{\varphi _{1}}(t),\ldots ,x_{\varphi _{n}}(t),S_{\phi _{1}}(t),\ldots ,S_{\phi _{n}}(t)\right) ^{T}\), where

Let us list some assumptions which will be used throughout the rest of this paper.

- (\(\mathbf{H_{1}}\)): For all \(1\le i,j\le n\), the functions \(\alpha _{i}\left( \cdot \right) , c_{i}\left( \cdot \right) \in \mathcal {R}_{\nu }^{+}\) and \(D_{ij}(.)\), \(D^{\tau }_{ij}(.)\), \(\overline{D}_{ij}(.)\), \(\widetilde{D}_{ij}(.)\), \(B_{i}(.)\), \(E_{i}(.)\), \(\eta _{i}(.)\), \(\varsigma _{i}(.)\), \(\tau _{ij}(.)\), \(\sigma _{ij}(.)\), \(\xi _{ij}(.)\), \(I_{i}(.)\), \(J_{i}(.)\) are ld-continuous pseudo-almost periodic functions. Moreover, \(\inf \limits _{t\in \mathbb {T}}\left( 1-\tau _{ij}^{\nabla }(t)\right) >0\), \(\inf \limits _{t\in \mathbb {T}}\left( 1-\sigma _{ij}^{\nabla }(t)\right) >0\) and \(\inf \limits _{t\in \mathbb {T}}\left( 1-\xi _{ij}^{\nabla }(t)\right) >0\).

- (\(\mathbf{H_{2}}\)): The functions \(f_{j}\left( \cdot \right) \) are \(\nabla \)-differentiable and satisfy the Lipschitz condition, i.e., there are constants \(L_{j}>0\) such that for all \(x,y\in \mathbb {R}\) and for all \(1\le j\le n\), one has \( \left| f_{j}\left( x\right) -f_{j}\left( y\right) \right| \le L_{j}\left| x-y\right| . \)

- (\(\mathbf{H_{3}}\)):

$$\begin{aligned}&\max \limits _{1\le i\le n}\left\{ \frac{M_{i}}{\alpha _{i}^{-}},\left( 1+\frac{\alpha _{i}^{+}}{\alpha _{i}^{-}}\right) M_{i},\frac{N_{i}}{c_{i}^{-}},\left( 1+\frac{c_{i}^{+}}{c_{i}^{-}}\right) N_{i}\right\} \le r,\\&\quad \max \limits _{1\le i\le n}\left\{ \frac{\overline{M}_{i}}{\alpha _{i}^{-}},\left( 1+\frac{\alpha _{i}^{+}}{\alpha _{i}^{-}}\right) \overline{M}_{i},\frac{\overline{N}_{i}}{c_{i}^{-}},\left( 1+\frac{c_{i}^{+}}{c_{i}^{-}}\right) \overline{N}_{i}\right\} \le 1, \end{aligned}$$

where r is a constant, and for \(i=1,\ldots ,n\)

$$\begin{aligned} M_{i}= & {} \alpha _{i}^{+}\eta _{i}^{+}r+\sum \limits _{j=1}^{n}\left( D_{ij}^{+}+(D_{ij}^{\tau })^{+}+\overline{D}_{ij}^{+}\sigma _{ij}^{+} +\widetilde{D}_{ij}^{+}\xi _{ij}^{+}\right) (L_{j}r+|f_{j}(0)|)+B_{i}^{+}r+I_{i}^{+},\\ \overline{M}_{i}= & {} \alpha _{i}^{+}\eta _{i}^{+}+\sum \limits _{j=1}^{n}\left( D_{ij}^{+}+(D_{ij}^{\tau })^{+}+\overline{D}_{ij}^{+}\sigma _{ij}^{+}+\widetilde{D}_{ij}^{+}\xi _{ij}^{+}\right) L_{j} +B_{i}^{+},\\ N_{i}= & {} c_{i}^{+}\varsigma _{i}^{+}r+E_{i}^{+}(L_{i}r+|f_{i}(0)|)+J_{i}^{+},\\ \overline{N}_{i}= & {} c_{i}^{+}\varsigma _{i}^{+}+E_{i}^{+}L_{i}. \end{aligned}$$
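Condition (\(\mathbf{H_{3}}\)) is purely algebraic, so it can be checked mechanically once the bounds of the coefficients are known. The sketch below is one possible way to do this; the function `check_h3`, the dictionary keys and all numerical values are hypothetical and are not the data of the example in Sect. 6. The keys ending in `_plus` and `_minus` stand for the upper and lower bounds of \(\alpha _{i}\) and \(c_{i}\), and the remaining entries are upper bounds.

```python
def check_h3(p, r):
    """Evaluate M_i, M_bar_i, N_i, N_bar_i and test both inequalities of (H3)."""
    n = len(p["alpha_minus"])
    M, M_bar, N, N_bar = [], [], [], []
    for i in range(n):
        # Combined weight multiplying (L_j r + |f_j(0)|) in M_i and L_j in M_bar_i.
        w = [p["D"][i][j] + p["D_tau"][i][j]
             + p["D_bar"][i][j] * p["sigma"][i][j]
             + p["D_tilde"][i][j] * p["xi"][i][j] for j in range(n)]
        leak_x = p["alpha_plus"][i] * p["eta"][i]
        leak_s = p["c_plus"][i] * p["varsigma"][i]
        M.append(leak_x * r
                 + sum(w[j] * (p["L"][j] * r + abs(p["f0"][j])) for j in range(n))
                 + p["B"][i] * r + p["I"][i])
        M_bar.append(leak_x + sum(w[j] * p["L"][j] for j in range(n)) + p["B"][i])
        N.append(leak_s * r + p["E"][i] * (p["L"][i] * r + abs(p["f0"][i])) + p["J"][i])
        N_bar.append(leak_s + p["E"][i] * p["L"][i])

    def worst(A, C):
        # max over i of the four quotients appearing in (H3)
        return max(max(A[i] / p["alpha_minus"][i],
                       (1.0 + p["alpha_plus"][i] / p["alpha_minus"][i]) * A[i],
                       C[i] / p["c_minus"][i],
                       (1.0 + p["c_plus"][i] / p["c_minus"][i]) * C[i])
                   for i in range(n))

    ok = worst(M, N) <= r and worst(M_bar, N_bar) <= 1.0
    return ok, M, M_bar, N, N_bar

# Hypothetical two-neuron data (illustration only).
small = [[0.01, 0.01], [0.01, 0.01]]
ones = [[1.0, 1.0], [1.0, 1.0]]
params = {
    "alpha_minus": [2.0, 2.0], "alpha_plus": [3.0, 3.0],
    "c_minus": [2.0, 2.0], "c_plus": [3.0, 3.0],
    "eta": [0.01, 0.01], "varsigma": [0.01, 0.01],
    "D": small, "D_tau": small, "D_bar": small, "D_tilde": small,
    "sigma": ones, "xi": ones,
    "L": [0.1, 0.1], "f0": [0.0, 0.0],
    "B": [0.05, 0.05], "I": [0.05, 0.05],
    "E": [0.05, 0.05], "J": [0.05, 0.05],
}
ok, M, M_bar, N, N_bar = check_h3(params, r=0.5)
print(ok, M, M_bar, N, N_bar)
```

Because the leakage bounds \(\eta _{i}^{+}\) and \(\varsigma _{i}^{+}\) enter \(M_{i}\) and \(N_{i}\) multiplied by \(\alpha _{i}^{+}\) and \(c_{i}^{+}\), such a check makes it easy to see how large the leakage delays may become before (\(\mathbf{H_{3}}\)) fails.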

Lemma 8

Suppose that assumptions \((H_{1})-(H_{3})\) hold. Let \(\mathbb {F}=\{\psi \in \mathbb {B}: \Vert \psi \Vert _{\mathbb {B}}\le r\}\) and define the nonlinear operator \({\varGamma }:\mathbb {F}\longrightarrow \mathbb {F}\) by, for each \(\psi \in PAP(\mathbb {T},\mathbb {R}^{n})\),

Then \({\varGamma }\) maps \(PAP(\mathbb {T},\mathbb {R}^{n})\) into itself.

Proof

We show that for any \(\psi \in \mathbb {F}\), \({\varGamma }\psi \in \mathbb {F}\).

In a similar way, we have

which leads to, for \(i=1,\ldots ,n\),

and,

Moreover, for \(i=1,\ldots ,n\), we have

Similarly,

From hypothesis \((H_{3})\), we can obtain

which implies that operator \({\varGamma }\) is a self-mapping from \(\mathbb {F}\) to \(\mathbb {F}\).

Theorem 1

Let \((H_{1})-(H_{3})\) hold. The system (2) has a unique pseudo-almost-periodic solution in the region \(\mathbb {F}=\{\psi \in \mathbb {B}: \Vert \psi \Vert _{\mathbb {B}}\le r\}\).

Proof

It suffices to show that the operator \({\varGamma }\) is a contraction mapping.

First, for \(\psi =\left( \varphi _{1},\ldots ,\varphi _{n},\phi _{1},\ldots ,\phi _{n}\right) ^{T}\), \({\varOmega }=\left( u_{1},\ldots ,u_{n}, v_{1},\ldots ,v_{n}\right) ^{T}\in \mathbb {F}\), we have

Besides,

Similarly,

and

therefore,

According to the well-known contraction principle there exists a unique fixed point \( y^{*}\left( \cdot \right) \) such that \({\varGamma }y^{*}=y^{*}\). So, \(y^{*}\) is a pseudo almost periodic solution of the model (2) in \(\mathbb {F}=\{\psi \in \mathbb {B}{:} \,\ \Vert \psi \Vert _{\mathbb {B}}\le r\}\). This completes the proof.
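The contraction principle used above is constructive: iterating the map from any starting point converges to the unique fixed point. The following sketch illustrates this on a scalar toy map, not on the operator \({\varGamma }\) itself; the map `T`, its contraction constant 0.5 and the helper `fixed_point` are assumptions chosen only to show the mechanism.

```python
import math

def fixed_point(T, x0, tol=1e-12, max_iter=1000):
    """Picard iteration x_{k+1} = T(x_k); stops when successive iterates are tol-close."""
    x = x0
    for _ in range(max_iter):
        x_next = T(x)
        if abs(x_next - x) < tol:
            return x_next
        x = x_next
    raise RuntimeError("iteration did not converge")

# Toy contraction on R: |T(x) - T(y)| <= 0.5 |x - y| because |T'(x)| = 0.5 |sin x| <= 0.5.
T = lambda x: 0.5 * math.cos(x)

x_star = fixed_point(T, 0.0)
x_other = fixed_point(T, 10.0)   # a different starting point reaches the same limit
print(x_star, x_other, abs(T(x_star) - x_star))
```

In the proof of Theorem 1, the same mechanism operates in the Banach space \(\mathbb {B}\), with hypothesis \((H_{3})\) playing the role of the bound on the contraction constant.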

Remark 3

To the best of our knowledge, there have been no results so far on pseudo-almost periodic solutions on time-space scales for competitive neural networks with time-varying coefficients, mixed delays and leakage delays. Hence, the obtained results are essentially new, and the methods used in this paper can also be applied to study pseudo-almost periodic solutions on time-space scales for some other types of neural networks, such as Cohen–Grossberg neural networks.

Remark 4

In practice, time delays, leakage delay and parameter perturbations are unavoidably encountered in the implementation of competitive neural networks. They may destroy the stability of pseudo-almost periodic solution of competitive neural networks, thus it is crucial to study the dynamic behaviors of pseudo-almost periodic solution of competitive neural networks with time delays, leakage term and parameter perturbations.

5 Global Exponential Stability of Pseudo-Almost Periodic Solution and the Convergence of All Solutions to Its Unique Pseudo Almost Periodic Solution

In this section, we will study the exponential stability of pseudo-almost periodic solutions of (2):

Definition 6

Let \(Z^{*}\left( t\right) =\left( x_{1}^{*}\left( t\right) , x_{2}^{*}\left( t\right) , \ldots ,x_{n}^{*}\left( t\right) ,S_{1}^{*}\left( t\right) ,S_{2}^{*}\left( t\right) ,\ldots ,S_{n}^{*}\left( t\right) \right) ^{T}\) be a pseudo-almost periodic solution on time-space scales of system (3) with initial value \(\psi ^{*}\left( t\right) =\left( \varphi _{1}^{*}\left( t\right) ,\varphi _{2}^{*}\left( t\right) ,\ldots , \varphi _{n}^{*}\left( t\right) ,\phi _{1}^{*}\left( t\right) ,\phi _{2}^{*}\left( t\right) ,\ldots , \phi _{n}^{*}\left( t\right) \right) ^{T}\). If there exist constants \(\gamma >0\), with \(\ominus _{\nu }\gamma \in \mathcal {R}_{\nu }^{+}\), and \(M>1\) such that for every solution \(Z\left( t\right) =\left( x_{1}\left( t\right) , x_{2}\left( t\right) , \ldots ,x_{n}\left( t\right) ,S_{1}\left( t\right) ,S_{2}\left( t\right) ,\ldots ,S_{n}\left( t\right) \right) ^{T}\) of system (3) with any initial value \(\psi \left( t\right) =\left( \varphi _{1}\left( t\right) ,\varphi _{2}\left( t\right) ,\cdots , \varphi _{n}\left( t\right) ,\phi _{1}\left( t\right) ,\phi _{2}\left( t\right) ,\cdots , \phi _{n}\left( t\right) \right) ^{T}\), and for all \(t\in (0,+\infty )_{\mathbb {T}}\), \(t\ge t_{0}\),

where \(t_{0}=\max \{[-\theta ,0]_{\mathbb {T}}\}\), then \(Z^{*}\left( \cdot \right) \) is said to be globally exponentially stable.

Theorem 2

Let \((H_{1}){-}(H_{3})\) hold. The unique pseudo-almost periodic solution of system (2) is globally exponentially stable.

Proof

From Theorem 1 the system (2) has one and only one pseudo-almost periodic solution on time scales \(Z^{*}(t)=(x^{*}_{1}(t),\ldots x^{*}_{n}(t),S^{*}_{1}(t),\ldots ,S^{*}_{n}(t))^{T}\in \mathbb {R}^{n}\) with the initial condition \(\psi ^{*}(t)=(\varphi ^{*}_{1}(t),\ldots ,\varphi ^{*}_{n}(t),\phi ^{*}_{1}(t),\ldots ,\phi ^{*}_{n}(t))^{T}\).

Let \(Z(t)=(x_{1}(t),\ldots ,x_{n}(t),S_{1}(t),\ldots ,S_{n}(t))^{T}\) be an arbitrary solution of (2) with initial condition \(\psi (t)=(\varphi _{1}(t),\ldots ,\varphi _{n}(t),\phi _{1}(t),\ldots ,\phi _{n}(t))^{T}\).

From system (2) we obtain:

where \(u_{i}(t)=x_{i}(t)-x^{*}_{i}(t), v_{i}(t)=S_{i}(t)-S_{i}^{*}(t)\), \(p_{j}(u_{j}(t))=f_{j}(x_{j}(t))-f_{j}(x_{j}^{*}(t))\), \(h_{j}(x_{j}^{\nabla }(t))=f_{j}(x_{j}^{\nabla }(t))-f_{j}({x_{j}^{*}}^{\nabla }(t))\), for \(i,j=1,\ldots ,n\), the initial condition of (5) is \(u_{i}(s)=\varphi _{i}(s)-\varphi _{i}^{*}(s), \,\ s\in [-\theta ,0]_{\mathbb {T}}\), \(v_{i}(s)=\phi _{i}(s)-\phi _{i}^{*}(s), \,\ s\in [-\theta ,0]_{\mathbb {T}}\), where \(i=1,\ldots ,n\).

Multiplying the first equation in system (5) by \(\hat{e}_{-\alpha _{i}}(t_{0},\rho (s))\) and the second equation by \(\hat{e}_{-c_{i}}(t_{0},\rho (s))\), and integrating over \([t_{0},t]_{\mathbb {T}}\), where \(t_{0}\in [-\theta ,0]_{\mathbb {T}}\), we obtain

Now, we define \(G_{i}\), \(\overline{G}_{i}\), \(H_{i}\) and \(\overline{H}_{i}\) as follows:

where \(i=1,\ldots ,n\), \(w\in ]0,+\infty [\).

From \(\left( H_{3} \right) \), we have

Since the functions \(G_{i}(.)\), \(\overline{G}_{i}(.)\), \(H_{i}(.)\) and \(\overline{H}_{i}(.)\) are continuous on \([0,+\infty )\) and \(G_{i}(w)\), \(\overline{G}_{i}(w)\), \(H_{i}(w)\), \(\overline{H}_{i}(w)\longrightarrow -\infty \) when \(w\longrightarrow +\infty \), there exist \(\eta _{i}, \bar{\eta }_{i}, \epsilon _{i}, \bar{\epsilon }_{i}>0\) such that \(H_{i}(\eta _{i})=\overline{H}_{i}(\bar{\eta }_{i})=G_{i}(\epsilon _{i})=\overline{G}_{i}(\bar{\epsilon }_{i})=0\) and \(H_{i}(w)>0\) for \(w\in (0,\eta _{i})\), \(\overline{H}_{i}(w)>0\) for \(w\in (0,\bar{\eta }_{i})\), \(G_{i}(w)>0\) for \(w\in (0,\epsilon _{i})\), \(\overline{G}_{i}(w)>0\) for \(w\in (0,\bar{\epsilon }_{i})\). Let \(a=\min \limits _{1\le i\le n}\left\{ \eta _{i}, \bar{\eta }_{i}, \epsilon _{i}, \bar{\epsilon }_{i}\right\} \); we obtain

So, we can choose a positive constant \( 0<\gamma <\min \limits _{1\le i \le n}\{a,\alpha _{i}^{-},c_{i}^{-}\} \) such that \(H_{i}(\gamma )>0\), \(\overline{H}_{i}(\gamma )>0\), \(G_{i}(\gamma )>0\) and \(\overline{G}_{i}(\gamma )>0\), \(i=1,\ldots ,n\), which implies that, for \(i=1,\ldots ,n\)

Let

By hypothesis (\(H_{3}\)), we have \(K>1\), therefore,

where \(\ominus _{\nu }\gamma \in \mathcal {R}_{\nu }^{+}\). We claim that

To prove (9), we show that for any \(\varpi >1\), the following inequality holds:

If (10) is not true, then there must be some \(t_{1}\in (0,+\infty )_{\mathbb {T}}\), \(d\ge 1\) such that

and

By (6), (11), (12) and \((H_{1}){-}(H_{3})\), we have for \(i=1,\ldots ,n\)

In addition, we have

We can easily obtain upper bounds of the derivatives \(|u_{i}^{\nabla }(t_{1})|\) and \(|v_{i}^{\nabla }(t_{1})|\) as follows:

and

which contradicts (11); therefore (10) holds. Letting \(\varpi \longrightarrow 1\), we conclude that (9) holds. This implies that the unique pseudo-almost periodic solution of system (2) is globally exponentially stable.

Theorem 3

Suppose that assumptions (\(H_{1}){-}(H_{3}\)) hold.

Let \(y^{*}\left( \cdot \right) =\left( x_{1}^{*}\left( \cdot \right) ,\ldots ,x_{n}^{*}\left( \cdot \right) ,S_{1}^{*}\left( \cdot \right) ,\ldots ,S_{n}^{*}\left( \cdot \right) \right) ^{T}\) be a pseudo-almost periodic solution of system (2). If

then all solutions \(\psi =(\varphi _{1},\ldots ,\varphi _{n},\phi _{1},\ldots ,\phi _{n})\) of (2) satisfying

converge to its unique pseudo-almost periodic solution \(y^{*}\).

Proof

Let \(y^{*}\left( \cdot \right) =\left( x_{1}^{*}\left( \cdot \right) ,\ldots ,x_{n}^{*}\left( \cdot \right) , S_{1}^{*}\left( \cdot \right) ,\ldots ,S_{n}^{*}\left( \cdot \right) \right) \) be a pseudo-almost periodic solution of (2) and \(\psi \left( \cdot \right) =\left( \varphi _{1}\left( \cdot \right) ,\ldots ,\varphi _{n}\left( \cdot \right) , \phi _{1}\left( \cdot \right) ,\ldots ,\phi _{n}\left( \cdot \right) \right) \) be a solution of (2). First, one verifies without difficulty that

and

then,

then

Now, consider the following (ad-hoc) Lyapunov–Krasovskii functional

where

and

Let us calculate the upper right Dini derivative \(D^{+}V\left( t\right) \) of V along the solution of the equation above. Then one has

Obviously,

and

Reasoning in a similar way, we can obtain the following estimation

and,

By using the inequality

we get

By integrating the above inequality from \(t_{0}\) to t, we get

Now, we remark that \(V(t)>0.\) It follows that

Note that \(y^{*}\left( \cdot \right) \) is bounded on \(\mathbb {R}^{+}\). Therefore

The proof of this theorem is now completed.

Remark 5

Theorems 1, 2 and 3 are new even for both the case of differential equations (\(\mathbb {T}=\mathbb {R}\)) and the case of difference equations (\(\mathbb {T}=\mathbb {Z}\)).

Remark 6

The model studied in [16] is considered without time-varying leakage delays, and the system investigated there does not contain information about the derivative of the past state. In addition, the coefficients \(a_{i}\) (\(i=1,\ldots ,n)\) (playing the role of \(\alpha _{i}(\cdot )\) in our work) are constant. Furthermore, using the results of this manuscript, we can generalize the sufficient conditions ensuring stability to the model with time-varying leakage delays and with information about the derivative of the past state. Besides, by the technique of [16], one can study the Hopf bifurcation of the novel class of competitive neutral-type neural networks with mixed time-varying delays and leakage delays.

6 Example and Computer Simulations

In this section, we will present an example to illustrate the feasibility of our results that were derived in the previous sections.

In system (3), let \(i,j=1,2\), and take coefficients as follows:

By a simple calculation, we have

We can take \(L_{1}=L_{2}=0.1\), \(r=0.5\) and we have

The conditions \((H_{1})\) and \((H_{2})\) are satisfied and it is easy to verify

and

so, condition \((H_{3})\) holds. Therefore, using Theorem 1 and Theorem 2, we conclude that system (2) has only one pseudo-almost periodic solution. Besides, this unique solution is globally exponentially stable.

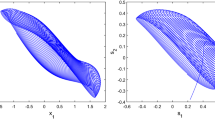

Figures 1, 2, 3 and 4 confirm that the proposed conditions in our theoretical results are effective for this example. Furthermore, it can be easily seen that the solutions of the system in this example are exponentially stable in both continuous time and discrete time (Figs. 1, 2). Besides, it is clear that, from a certain time on, the distance between the two pseudo-almost periodic trajectories of the orbit remains the same (Figs. 3, 4).

Remark 7

In many cases, the delays in leakage terms have a negative effect on the stability of the system. For this reason, one usually assigns small values to the delays in leakage terms, such as \(\max \limits _{i=1,2}\eta _{i}(t)=\frac{(1+|\sin (t)|)}{2000}\le \frac{1}{1000}\) and \(\max \limits _{i=1,2}\eta _{i}(t)=0.1\), respectively, in the examples of references [11, 13]. When \(\eta _{1}^{+}=0.07\), \(\eta _{2}^{+}=0.02\), \(\varsigma _{1}^{+}=0.02\) and \(\varsigma _{2}^{+}=0.02\), their conditions will not be satisfied, but ours can be. Therefore, our conditions are less conservative than those in [11, 13].

7 Conclusion and Open Problem

As is widely known, the leakage delay has a great impact on the dynamical behavior of neutral-type neural networks. Hence, it is necessary and rewarding to investigate the effects of leakage delays on the dynamic behaviors of the pseudo-almost periodic solution of competitive neutral-type neural networks. This is the first time that a class of neutral-type competitive neural networks with mixed delays and leakage delays on time-space scales is presented. In addition, the existence and exponential stability of pseudo-almost periodic solutions for the system of equations modeling these networks are also considered. The problem is investigated for both differential equations and difference equations. To the best of our knowledge, the model is general in the sense that it includes mixed time-varying delays and time-varying leakage delays. Finally, we formulate some open problems. We would like to extend our results to more general competitive neural networks with mixed time-varying delays and leakage delays, such as high-order competitive neural network models:

where, for all \(i,j,k=1,\ldots n,\) \(T_{ijk}\) are the second-order connection weights of delayed feedback.

We would also like to consider the stochastic high-order competitive neural network model on time-space scales with mixed time-varying delays and leakage delays:

where \(\omega (t)=(\omega _{1}(t),\ldots ,\omega _{n}(t))^{\mathbb {T}}\), \((t\in \mathbb {T})\) is an n-dimensional Brownian motion defined on a complete probability space \(({\varOmega },\mathcal {F},\mathbb {P})\); \(\delta _{ij}\) are Borel measurable functions; \(A=(\delta _{ij})_{n\times n}\) is the diffusion coefficient matrix.
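To make the noise term concrete, the following minimal sketch samples a path of an n-dimensional Brownian motion on a uniform grid. It does not simulate the stochastic network itself. The function name `brownian_path`, the step size `h`, the seeds and the grid lengths are assumptions introduced only for illustration: a small `h` approximates the continuous case \(\mathbb {T}=\mathbb {R}\), while `h = 1.0` corresponds to sampling at the integers, \(\mathbb {T}=\mathbb {Z}\).

```python
import math
import random

def brownian_path(n: int, steps: int, h: float, seed: int = 0) -> list:
    """Sample an n-dimensional Brownian path on the grid {0, h, 2h, ...}.

    Every component starts at 0 and receives independent Gaussian
    increments with mean 0 and variance h at each step.
    """
    rng = random.Random(seed)
    sigma = math.sqrt(h)  # standard deviation of one increment
    path = [[0.0] * n]
    for _ in range(steps):
        prev = path[-1]
        path.append([x + rng.gauss(0.0, sigma) for x in prev])
    return path

# Fine grid as an approximation of the continuous time scale T = R.
omega_cont = brownian_path(n=2, steps=1000, h=0.01, seed=1)

# Unit steps, i.e. the path sampled on the discrete time scale T = Z.
omega_disc = brownian_path(n=2, steps=10, h=1.0, seed=1)

print(len(omega_cont), len(omega_disc))
```

On the discrete time scale the sampled path is simply a random walk with standard normal increments, so the same routine can serve both cases treated in the paper.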

The corresponding results will appear in the near future.

References

1. Arbi A, Chérif F, Aouiti C, Touati A (2016) Dynamics of new class of Hopfield neural networks with time-varying and distributed delays. Acta Math Sci 36(3):891–912

2. Baese Meyer A, Ohl F, Scheich H (1996) Singular perturbation analysis of competitive neural networks with different time scales. Neural Comput 8:1731–1742

3. Baese Meyer A, Pilyugin SS, Chen Y (2003) Global exponential stability of competitive neural networks with different time scales. IEEE Trans Neural Netw 14(3):716–719

4. Bohner M, Peterson A (2003) Advances in dynamic equations on time scales. Birkhäuser Boston, Boston

5. Gu H, Jiang H, Teng Z (2010) Existence and global exponential stability of equilibrium of competitive neural networks with different time scales and multiple delays. J Frankl Inst 347:719–731

6. Guseinov GS (2003) Integration on time scales. J Math Anal Appl 285:107–127

7. Li S, Li Y (2014) Nonlinearly activated neural network for solving time-varying complex Sylvester equation. IEEE Trans Cybern 44(8):1397–1407

8. Li Y, Wang C (2012) Pseudo almost periodic functions and pseudo almost periodic solutions to dynamic equations on time scales. Adv Differ Equ 2012:77

9. Li Y, Meng X, Xiong L (2016) Pseudo almost periodic solutions for neutral type high-order Hopfield neural networks with mixed time-varying delays and leakage delays on time scales. Int J Mach Learn Cyber. doi:10.1007/s13042-016-0570-7

10. Liang X, Wang J (2000) A recurrent neural network for nonlinear optimization with a continuously differentiable objective function and bound constraints. IEEE Trans Neural Netw 11(6):1251–1262

11. Liu B (2013) Global exponential stability for BAM neural networks with time-varying delays in the leakage terms. Nonlinear Anal Real World Appl 14:559–566

12. Liu Q, Dang C, Huang T (2013) A one-layer recurrent neural network for real-time portfolio optimization with probability criterion. IEEE Trans Cybern 43(1):14–23

13. Liu Y, Yang Y, Li L, Liang T (2014) Existence and global exponential stability of antiperiodic solutions for competitive neural networks with delays in the leakage terms on time scales. Neurocomputing 133:471–482

14. Shen Q, Jiang B, Shi P, Lim C (2014) Novel neural networks-based fault tolerant control scheme with fault alarm. IEEE Trans Cybern 44(11):2168–2267

15. Tan Y, Jing K (2016) Existence and global exponential stability of almost periodic solution for delayed competitive neural networks with discontinuous activations. Math Methods Appl Sci 39(11):2821–2839

16. Xu W, Cao J, Xiao M, Ho DW, Wen G (2015) A new framework for analysis on stability and bifurcation in a class of neural networks with discrete and distributed delays. IEEE Trans Cybern 45(10):2224–2236

Cite this article

Arbi, A., Cao, J. Pseudo-Almost Periodic Solution on Time-Space Scales for a Novel Class of Competitive Neutral-Type Neural Networks with Mixed Time-Varying Delays and Leakage Delays. Neural Process Lett 46, 719–745 (2017). https://doi.org/10.1007/s11063-017-9620-8

DOI: https://doi.org/10.1007/s11063-017-9620-8