Abstract

In this paper we investigate a class of stochastic absolute value equations (SAVE). After establishing the relationship between the stochastic linear complementarity problem and SAVE, we study the expected residual minimization (ERM) formulation for SAVE and its Monte Carlo sample average approximation. In particular, we show that the ERM problem and its sample average approximation have optimal solutions under the condition of \(R_0\) pair, and the optimal value of the sample average approximation has uniform exponential convergence. Furthermore, we prove that the solutions to the ERM problem are robust for SAVE. For a class of SAVE problems, we use its special structure to construct a smooth residual and further study the convergence of the stationary points. Finally, a smoothing gradient method is proposed by simultaneously considering sample sampling and smooth techniques for solving SAVE. Numerical experiments exhibit the effectiveness of the method.

Similar content being viewed by others

Avoid common mistakes on your manuscript.

1 Introduction

The absolute value equation (AVE) is to find a vector \(x\in \Re ^{n}\) such that

where \(A\in \Re ^{n\times n}, B\in \Re ^{n\times n}, b\in \Re ^{n}\) and |x| denotes the component-wise absolute value of x. If \(B =-I\), where I denotes an identity matrix, then AVE (1) reduces to the special form:

The main significance of AVE arises from its wide applications in the different fields of mathematics and engineering applications. For example, the general linear complementarity problem [3] (including linear programs), quadratic programs, bimatrix games and some other mathematical programming problems can be formulated as AVE; see [13] for more information. Particularly, if \(B = 0,\) then AVE (1) reduces to the system of linear equations \(Ax = b\), the most foundamental problem in scientific computing. Over the last ten years, AVE (1) and its special form (2) have been extensively studied from both theoretical analysis and numerical algorithms, see [2, 9,10,11,12, 20, 26, 29, 30, 32].

In this paper, we mainly study a class of stochastic absolute value equation (SAVE), which is to find a vector \(x\in \Re ^{n}\) such that

where \(\omega \in {\Omega }\) and \(({\Omega },\mathcal {F},P)\) is a probability space, \(A(\omega )\in \Re ^{n\times n}\), \(B(\omega )\in \Re ^{n\times n}\) and \(b(\omega )\in \Re ^{n}\) for each \(\omega \in {\Omega }\). If \({\Omega }\) only contains one realization, then SAVE (3) would reduce to AVE (1). One of our motivations for studying SAVE comes from the fact that the well-known stochastic linear complementarity problem (SLCP)

with \(M(\omega )\in \Re ^{n\times n}\) and \(q(\omega )\in \Re ^{n}\) can be formulated as a SAVE (see Sect. 2 below). In the past few decades, due to its widespread application, SLCP has been extensively studied. Here we only list a few literatures that are closely related to this paper. For example, Chen and Fukushima [4] introduced the Expected Residual Minimization (ERM) formula for SLCP and studied some properties of this new reformulation. Chen et al. [6] further proved that the solutions of the ERM formulation are robust for the monotone SLCP. Zhang and Chen [33] investigated a smooth projected gradient method and applied this algorithm to solve SLCP. Zhou and Caccetta [34] proposed a feasible semismooth Newton method for solving a class of SLCPs with finite many realizations. More research and discussions on SLCP can be found in [7, 16, 17] and references therein. For stochastic absolute value equation, Du [8] proved that the SAVE \(A(\omega )x-|x|=b(\omega )\), a special case of SAVE (3), is equivalent to the stochastic bilinear programming and the stochastic generalized linear complementarity problem.

Due to the existence of the randomness of \(\omega \), we do not expect the existence of a vector x satisfying SAVE (3) for all \(\omega \in {\Omega }\). Therefore, in order to obtain a reasonable solution of (3), we need to search for suitable reformulations for SAVE. It is well-known that the deterministic Expected Residual Minimization (ERM) formulation is one of the most effective techniques to deal with stochastic optimization problems. The ERM formulation was firstly proposed by Chen and Fukushima [4] for solving SLCP, where the objective is to minimize an expected residual defined by NCP functions. Subsequently, the ERM formulation has been further studied to deal with stochastic variational inequalities [5], stochastic complementarity problems [14], stochastic generalized complementarity problems [18] and stochastic second-order cone complementarity problems [15, 19, 31].

Our goal is to study the ERM deterministic formulation for SAVE (3) and then propose a smoothing gradient method to find its approximate solution. Our main contributions of the paper can be summarized as follows.

(i) We first show that the SLCP can be reformulated as a SAVE, while the converse direction holds as well provided that \(A(\omega )+B(\omega )\) is nonsingular for each \(\omega \in {\Omega }\).

(ii) We introduce the ERM formulation for SAVE and its Monte Carlo sample average approximation. The existence of optimal solutions to ERM problem and its discrete approximation is established when \(\{A({\bar{\omega }}), B({\bar{\omega }})\}\) is an \(R_0\) pair for an observation \({\bar{\omega }}\). Our results show that the solution of the approximate ERM problem converges to that of ERM problem with probability one, and the optimal value of the sample average approximation achieves a uniform exponential convergence.

(iii) Under some regularity conditions, we prove that the solutions of ERM problem are robust in the sense that they may have a minimum sensitivity with respect to random parameter variations in SAVE. This also indicates from a new perspective that ERM is an effective reformulation of SAVE.

(iv) In general, SAVE is nonsmooth due to the existence of absolute values. However, for a special class of SAVE problems, by exploiting its structure we provide a smooth (even twice continuously differentiable) residual and further study the convergence of the stationary points.

(v) For a general SAVE, we propose a new smooth approximation formulation by simultaneously considering sampling and smoothing techniques (in which the number of samples can also be treated as a smoothing factor). This new smooth approximation allows us to use a smooth gradient method to solve SAVE. Our numerical experiments indicate the algorithm is very effective.

The rest of our paper is organized as follows. In the next section, we discuss the relationship between SAVE and SLCP. In Sect. 3, we study the ERM formulation for SAVE and investigate the boundedness of its level sets, the existence of optimal solutions as well as the exponential convergence rate. The robustness of the solutions to the ERM problem is given in Sect. sec4. Section 5 is devoted to a smoothing reformation for a class of special SAVE. Section 6 proposes a smoothing gradient method for solving general SAVE. Conclusions are drawn in Sect. 7.

2 Relations of SAVE and SLCP

Theorem 1

The SLCP (4) can be formulated as a SAVE (3) with \(A(\omega )=I+M(\omega )\), \(B(\omega )=I-M(\omega )\) and \(b(\omega )=2q(\omega ).\)

Proof

Note that SLCP (4) can be written as

Let \(x:=v-u\). Then, for any \(i=1,...,n\), since \(u_i\ge 0, v_i\ge 0\) and \(u_iv_i=0\), we have \(x_i^2=(v_i-u_i)^2=(v_i+u_i)^2\) and so \(|x_i|=v_i+u_i\). This implies that \(|x|=v+u\). So, SLCP (4) reduces to SAVE (3) with \(A(\omega )=I+M(\omega )\), \(B(\omega )=I-M(\omega )\) and \(b(\omega )=2q(\omega )\). \(\square \)

Theorem 2

If the matrix \(A(\omega )+B(\omega )\) is nonsingular for each \(\omega \in {\Omega }\), then SAVE (3) is equivalent to SLCP (4).

Proof

According to Theorem 1, it only needs to show that SAVE (3) can be formulated as a SLCP (4). Note that SAVE (3) can be written as

Since \(A(\omega )+B(\omega )\) is nonsingular for each \(\omega \in {\Omega }\), the above equation is equivalent to

By setting \(v:=\frac{|x|+x}{2},~u:=\frac{|x|-x}{2},~M(\omega ):=(A(\omega )+B(\omega ))^{-1}(A(\omega )-B(\omega ))\) and \(q(\omega ):=(A(\omega )+B(\omega ))^{-1}b(\omega ),\) SAVE (5) is reformulated as SLCP (4). \(\square \)

Remark 1

Theorem 1 provides an equivalent way to solve SLCP (4). Specifically, we may first find a solution x by solving the following SAVE

Then setting \(v:=\frac{|x|+x}{2}\) and \(u:=\frac{|x|-x}{2}\) yields a solution (u, v) for SLCP (4).

3 ERM Formulation for SAVE

Let

We introduce the following ERM model for the SAVE (3):

where \({\mathbb {E}}\) denotes the expectation with respect to the random variable \(\omega \). To proceed, we first give the assumption required in this section.

Assumption A Let \(\omega \) be a continuous random variable. Let \(A(\omega ), B(\omega )\) and \(b(\omega )\) be continuous functions of \(\omega \) satisfying

where \(\rho :{\Omega }\rightarrow \Re _+\) denotes the continuous probability density function of \(\omega \).

To analyze the existence of optimal solutions to the ERM problem (7), we introduce the following definition of \(R_0\) pair.

Definition 1

For the given matrices \(A,B\in \Re ^{n\times n}\), we define \(\{A,B\}\) to be an \(R_0\) pair if

The definition of \(R_0\) pair is motivated by the original definition of \(R_0\) matrix for the LCP [4]. Obviously, if AVE (1) is uniquely solvable for any \(b\in \Re ^n\), then \(\{A,B\}\) is an \(R_0\) pair. According to existing results on the unique solvability of AVE (1), \(\{A,B\}\) is an \(R_0\) pair if one of the following conditions holds:

(a) \(\sigma _{\max }(|B|)<\sigma _{\min }(A)\), where \(\sigma _{\max }\) and \(\sigma _{\min }\) denote the maximal and minimal singular value respectively [25];

(b) The inequality \(|Ax|\le |B||x|\) only has trivial solutions [24];

(c) The interval matrix \([A -|B|, A+|B|]\) is regular [30];

(d) \(\{A+B, A- B\}\) has the column \(\mathcal {W}\)-property [20].

More sufficient conditions for the unique solvability of AVE (1) can be found in [26].

Lemma 1

If \(\{A({\bar{\omega }}),B({\bar{\omega }})\}\) is an \(R_0\) pair for some \({\bar{\omega }}\in {\Omega }\), then there exists a closed sphere \(\mathcal {B}({\bar{\omega }},\delta ):=\{\omega |~\Vert \omega -{\bar{\omega }}\Vert \le \delta \}\) with \(\delta > 0\) such that \(\{A({\omega }),B({\omega })\}\) is an \(R_0\) pair for every \(\omega \in \mathcal {B}({\bar{\omega }},\delta )\cap {\Omega }\).

Proof

Assume that the lemma is not true. Then there is a sequence \(\{\omega ^k\}\subset {\Omega }\) satisfying \(\lim \limits _{k\rightarrow \infty }\omega ^k={\bar{\omega }}\) and, for each k, there exists \(x^k\in \Re ^{n}\) such that

Let \(t^k:=\frac{x^k}{\Vert x^k\Vert }.\) Then \(\Vert t^k\Vert =1\) and

This implies the existence of \({\bar{t}}\in \Re ^{n}\) such that

This leads to a contradiction with the assumption that \(\{A({\bar{\omega }}),B({\bar{\omega }})\}\) is an \(R_0\) pair. \(\square \)

Theorem 3

Suppose that there exists some \({\bar{\omega }}\in {\Omega }\) such that \(\rho ({\bar{\omega }})>0\) and \(\{A({{\bar{\omega }}}),B({{\bar{\omega }}})\}\) is an \(R_0\) pair. Then for any \(\gamma >0\), the level set defined by

is bounded.

Proof According to the continuity of \(\rho \) and Lemma 1, there exist a constant \({\bar{\rho }}>0\) and a closed sphere \(\mathcal {B}({\bar{\omega }},\delta )\) such that \(\rho (\omega )\ge {\bar{\rho }}\) and \(\{A({\omega }),B({\omega })\}\) is an \(R_0\) pair for any \(\omega \in \bar{\mathcal {B}}:=\mathcal {B}({\bar{\omega }},\delta )\cap {\Omega }\). Suppose on the contrary that there exists a constant \(\gamma > 0\) and a sequence \(\{{x}^k\}\subset \mathcal {L}(\gamma )\) such that \(\lim \limits _{k\rightarrow \infty }\Vert {x}^k\Vert =+\infty \). The continuity of \(A(\omega ), B(\omega )\) and \(b(\omega )\) ensures that for every k there exists an \(\omega ^k\in \bar{\mathcal {B}}\) such that

Hence

which together with \(\int _{\mathcal {{\bar{B}}}}d\omega >0\) implies that \(\{\Vert \textrm{F}({x}^k,\omega ^k)\Vert \}\) is bounded. So, there exists a constant \(C>0\) such that

Since the sequences \(\big \{\frac{{x}^k}{\Vert {x}^k\Vert }\big \}\) and \(\{\omega _k\}\) are bounded, we can assume by taking a subsequence if necessary that they are convergent and denote the limits as \({x}^*:=\lim \limits _{k\rightarrow \infty }\frac{{x}^k}{\Vert {x}^k\Vert }\) and \(\omega ^*:=\lim \limits _{k\rightarrow \infty }\omega ^k.\) Clearly, \(\Vert {x}^*\Vert =1\) and \(\omega ^*\in \mathcal {{\bar{B}}}\). By (8), we have

Since \(\{b(\omega ^k)\}\) is bounded due to the continuity of \(b(\omega )\), by letting k tend towards infinity in (9), it holds that

Hence \({x}^*= 0\) because \(\{A({\omega ^*}),B({\omega ^*})\}\) is an \(R_0\) pair. This yields a contradiction with \(\Vert {x}^*\Vert =1\). The proof is complete. \(\sqcap \!\!\!\!\sqcup \)

As is well known, for an integrable function \(\varphi :{\Omega }\rightarrow \Re \), the Monte Carlo sampling estimate for \(\mathbb {E}[\varphi (\omega )]\) is obtained by taking independently and identically distributed random samples \({\Omega }_k:=\{\omega ^1,...,\omega ^{N_k}\}\) from \({\Omega }\) and letting

The strong law of large numbers guarantees that this procedure converges with probability one (abbreviated by “w.p.1" below), that is,

where \(N_k\rightarrow +\infty \) as \(k\rightarrow \infty \).

By generating independently and identically distributed random samples \({\Omega }_k=\{\omega ^1,...,\omega ^{N_k}\}\) from \({\Omega }\), we can obtain the following approximation of the ERM problem (7):

According to (6), the function \({\Theta }_k({x})\) can be further written as

We now give the existence of global optimal solutions to (7) and (11). To this end, we denote the set of optimal solutions to the ERM problem (7) by \(\mathcal {S}^*\) and those of the approximate ERM problem (11) by \(\mathcal {S}_k^*\) respectively

Theorem 4

Let Assumption A hold. Suppose that there exists an \({\bar{\omega }}\in {\Omega }\) such that \(\rho ({\bar{\omega }})>0\) and \(\{A({\bar{\omega }}),B({\bar{\omega }})\}\) is an \(R_0\) pair. Then \(\mathcal {S}_k^*\) is nonempty and bounded for all large k. Let \(x^k\in S^*_k\) for each k and \(x^*\) be an accumulation point of the sequence \(\{x^k\}\). Then \(x^*\in \mathcal {S}^*\) with probability one.

Proof

By Lemma 1, there exist an index \({\bar{k}} > 0\) and a closed sphere \(\mathcal {B}({\bar{\omega }},\delta )\) such that for all \(k\ge {\bar{k}}\), \({\Omega }_k\cap \mathcal {B}({\bar{\omega }},\delta )\) are nonempty and \(\{A(\omega ),B(\omega )\}\) is an \(R_0\) pair for each \(\omega \in {\Omega }_k\cap \mathcal {B}({\bar{\omega }},\delta )\). Hence, by following a similar argument as in Theorem 3, we can show that \(S^*_k\) is nonempty and bounded as \(k\ge {\bar{k}}\).

Next, we prove \(x^*\in \mathcal {S}^*\). In fact, for any fixed \({x}\in \Re ^n\),

This together with Assumption A implies that the function \(\Vert \textrm{F}({x},\omega )\Vert ^2\) is integrable over \({\Omega }\). Thus, according to (10), for any fixed \({x}\in \Re ^n\),

We now assume by taking a subsequence if necessary that \(\lim \limits _{k\rightarrow \infty }{x}^k={x}^*\). Pick up a constant \(\zeta >0\) satisfying \({\Theta }({x}^*)<\zeta \). Since

and \(\mathbb {E}[(\Vert A(\omega )\Vert +\Vert B(\omega )\Vert +\Vert b(\omega )\Vert )^2]<+\infty \) by Assumption A, by [22, Theorem 16.8], we know that \({\Theta }({x})={\mathbb {E}}[\Vert \textrm{F}({x},\omega )\Vert ^2]\) is continuous at any \(x\in \mathbb {R}^n.\) Hence \({\Theta }({x}^k)\le \zeta \) for all sufficiently large k. Since the level set \(\mathcal {L}(\zeta )= \{{x}\in \Re ^n|{\Theta }({x}) \le \zeta \}\) is closed and bounded by Theorem 3, the constant

is well-defined. For all sufficiently large k and any \(\omega \in {\Omega }\), we have

Thus, by (13), (15) and (16), it follows from (12) that

where the limit holds because \(\{{x}^k\}\) converges to \({x}^*\) and

by Assumption A and (10). Further, putting (14) and (17) together yields

On the other hand, since \({x}^k\) is the optimal solution of the problem (11), we have

This together with (14) and (18) gives

with probability one. Hence \(x^*\in \mathcal {S}^*\) with probability one. \(\square \)

The following result shows that the optimal values of the sample average approximations have a uniform exponential convergence.

Theorem 5

Suppose that the support set \({\Omega }\) is a compact set. Let \({x}^k\) be an optimal solution of (11) for each k and \({x}^*\) be an accumulation point of the sequence \(\{{x}^k\}\). Then, for every \(\varepsilon >0\), there exist positive constants \(D(\varepsilon )\) and \(\beta (\varepsilon )\), independent of \(N_k\), such that

Proof

We assume again without loss of generality that \(\{{x}^k\}\) itself converges to \({x}^*\). Let \(\mathcal {Z}\) be a compact set that contains the whole sequence \(\{{x}^k\}.\) Then \({x}^*\in {\mathcal {Z}}\). First, since \(\mathcal {Z}\) and \({\Omega }\) are compact sets, by the continuity of \(\textrm{F}\), there exists a constant \(c>0\) such that

So for every \({x}\in \mathcal {Z}\), the moment generating function \(\mathbb {E}\left[ e^{t(\Vert \textrm{F}({x},\omega )\Vert ^2-\mathbb {E}[\Vert \textrm{F}({x},\omega )\Vert ^2])}\right] \) of the random variable \(\Vert \textrm{F}({x},\omega )\Vert ^2-\mathbb {E}[ \Vert \textrm{F}({x},\omega )\Vert ^2 ]\) is finite-valued whenever t near zero. Second, for any \(({x}',\omega ),({x},\omega )\in \mathcal {Z}\times {\Omega }\), it follows from (19) that

Let \(\kappa (\omega ):=2c(\Vert A(\omega )\Vert +\Vert B(\omega )\Vert ).\) Then \(\kappa (\omega )\) is bounded on \({\Omega }\) due to the continuity of \(A(\omega )\) and \(B(\omega )\). This ensures that \(\mathbb {E}[\kappa (\omega )]<+\infty \) and the moment generating function \(\mathbb {E}[e^{t\kappa (\omega )}]\) of the random variable \(\kappa (\omega )\) is finite-valued for all t near of zero. Thus, the conditions (C1)–(C3) assumed in [28, Theorem 5.1] are all satisfied. Therefore, according to [28, Theorem 5.1], for every \(\varepsilon >0\), there exist positive constants \(D(\varepsilon )\) and \(\beta (\varepsilon )\), independent of \(N_k\), such that

i.e.,

For each k, since \({x}^k\) is an optimal solution of (11), we have \({\Theta }_k({x}^k)\le {\Theta }_k({x}^*)\) and hence

On the other hand, by Theorem 4, \({x}^*\) is an optimal solution of (7) with probability one. So for each k, \({\Theta }(x^*)\le {\Theta }(x^k)\) with probability one and hence

Combining (22) and (23) together yields

This together with (21) gives

Since

it follows from (21) and (24) that

This completes the proof. \(\square \)

At the end of this section, we give some discussions on the computational complexity of the Monte Carlo approximation method. Denote \(\pi (x,\omega ):=\Vert \textrm{F}({x},\omega )\Vert ^2-\mathbb {E}[\Vert \textrm{F}({x},\omega )\Vert ^2]\). We further assume that the moment generating function \(\mathbb {E}\left[ e^{t\pi (x,\omega )}\right] \) of the random variable \(\pi (x,\omega )\) satisfies the following condition: there exists \(\sigma >0\) such that for all \(x\in \mathbb {R}^n,\)

This condition was introduced by Shapiro and Xu [28]. It is noticed that the right side of (25) is just the moment generating function of normal distribution with a variance \(\sigma ^2\). Hence, the inequality (25) holds as an equality as \(\pi (x,\omega )\) has normal distribution. In addition, this condition can also be satisfied if \(\pi (x,\omega )\) has a subguass distribution.

From the proof process of Theorem 5, we know that the conditions (C1)–(C3) assumed in [28, Theorem 5.1] are all satisfied. Moreover, by (20) we have

Let \(L:=\mathbb {E}[2c(\Vert A(\omega )\Vert +\Vert B(\omega )\Vert )]\). Obviously, L is finite on the compact set \({\Omega }\) due to the continuity of \(A(\omega )\) and \(B(\omega )\). Denote by \(D:=\textrm{sup}_{x',x\in \mathcal {Z}}\Vert x'-x\Vert \) the diameter of \(\mathcal {Z}\). Then, according to (5.14) in [28], we know that for \(\alpha \in (0,1)\), if the sample size \(N_k\) satisfies

then

i.e.,

Then by following the proof of Theorem 5, we can obtain

This means that \(x^k\) is an \(\varepsilon \)-optimal solution with probability at least \(1-\alpha \). Hence, to obtain an \(\varepsilon \)-optimal solution, the computational complexity of Monte Carlo approximation method is \(\mathcal {O}\left( \frac{n}{\varepsilon ^2}\textrm{log}\frac{1}{\varepsilon }\right) \) by (26). For the computational complexity of Monte Carlo based methods, more research and discussions can be founded in [1, 27]

4 Robustness of ERM Problem

In this section, we discuss the robustness of solutions of the ERM problem (7). This indicates from a new perspective that ERM is an effective reformulation of SAVE. To this end, we first consider the error bound conditions for the original AVE (1). Let

It is easy to see that \(\textrm{F}(x)\) is globally Lipschitz continuous and semismooth on \(\Re ^n\). Hence, \(\textrm{F}(x)\) is differentiable almost everywhere by Rademacher’s theorem. Let \(D_{\textrm{F}}\) be the set of points at which \(\textrm{F}\) is differentiable. The Clarke generalized Jacobian of \(\textrm{F}\) at x, denoted by \(\partial \textrm{F}(x)\), is defined by

where conv denotes the convex hull and \(\textrm{F}'({x})\) is the Jacobian whenever \(\textrm{F}({x})\) is differentiable at x. The mapping \(\textrm{F}({x})\) is said to be BD-regular at x if all subgradients in \(\partial \textrm{F}(x)\) are nonsingular.

Denote the solution set of AVE (1) as \({X}^*\), that is,

Assume that \({X}^*\) is nonempty. A function \(r: \Re ^n\rightarrow \Re _+\) is called a residual function of AVE (1) if \(r(x)\ge 0\) for all x and

With the help of residual functions, the AVE problem is transformed into finding out the roots of nonlinear equations. The property of error bound plays an important role in theoretical and numerical analysis. Recall that a residual function r has a local error bound for AVE (1) if there exist constants \(\kappa > 0\) and \(\delta >0\) such that for each \(x\in \{x\in \Re ^n|\textrm{dist}(x, {X}^*)\le \delta \}\),

where \(\textrm{dist}(x, {X}^*):=\textrm{inf}_{y\in {X}^*}\Vert x-y\Vert .\) The residual function r has a global error bound for AVE (1) if (27) holds for all \(x\in \Re ^n\).

Let

Clearly r(x) is a residual function of the AVE (1).

Lemma 2

Let r(x) be defined by (28). If \(\{A,B\}\) is an \(R_0\) pair, then the level set

is compact for any \(\theta >0.\)

Proof

By similarly following the arguments given in Theorem 3, we can prove that \(\mathcal {L}(\theta )\) is bounded for all \(\theta >0.\) This together with the continuity of r yields the desired result. \(\square \)

Theorem 6

Suppose that \(\{A,B\}\) is an \(R_0\) pair and \(\textrm{F}(x)\) is BD-regular at any \(x\in {X}^*\). Then r(x) provides a global error bound for AVE (1), that is, there exists a constant \(\xi > 0\) such that

Proof

We first show that the residual r(x) provides a local error bound for AVE (1). In fact, if this conclusion is not true, there must exist a sequence \(\{x^k\}\) such that

which in turn implies \( r(x^k)\rightarrow 0\) as \(k\rightarrow \infty \). Hence there is a constant \(\theta >0\) such that \( r({x^k}) \le \theta \) for all \(k\ge 0\). By Lemma 2, \(\{x^k\}\) has an accumulation point \(x^*\), and we assume without loss of generality that \(x^k\rightarrow x^*\) as \(k\rightarrow \infty .\) Since r(x) is continuous, \( r(x^*)=0\), i.e., \(x^*\in {X}^*\). Thus

Because \(\textrm{F}(x)\) is semismooth and BD-regular at \(x^*,\) according to [23, Proposition 3], there exist \(\varrho > 0\) and \(\delta > 0\) such that \( r(x)\ge \varrho \Vert x-x^*\Vert \) for all x satisfying \(\Vert x-x^*\Vert \le \delta \), which contradicts (29). Thus, r(x) provides a local error bound for AVE (1).

Next, we show that r(x) further provides a global error bound for AVE (1). Suppose on the contrary that for any \(k>0\), there exists \(x^k\) such that

Choose a fixed solution \({\hat{x}}\in {X}^*\). Then

We claim that there exist \(K>0\) and \(\delta > 0\) such that \( r(x^k)>\delta \) for every \(k>K\). If not, then for any integer \(K>0\) and any \(\delta >0\), there exists \(k>K\) such that \( r(x^k)\le \delta .\) By (30) and the property of local error bound of r(x), we have

It implies \(\delta /k>1\), leading to a contradiction as \(k\rightarrow +\infty .\) Thus, by (31) we have \(\Vert x^k-{\hat{x}}\Vert >k\delta \) for every \(k>K\), which further yields \(\Vert x^k\Vert \rightarrow +\infty \) as \(k\rightarrow + \infty .\) Since the sequence \(\big \{\frac{{x}^k}{\Vert {x}^k\Vert }\big \}\) is bounded, we assume by taking a subsequence if necessary that \(\lim \limits _{k\rightarrow \infty }\frac{{x}^k}{\Vert {x}^k\Vert }={x}^*.\) It then follows from (31) that

which gives

i.e., \(Ax^*+B|x^*|=0.\) Since \(\{A,B\}\) is an \(R_0\) pair, then \(x^*=0\), a contradiction with \(\Vert x^*\Vert =1\). This completes the proof. \(\square \)

Let us discuss the relation between the solution of the ERM problem (7) and that of (3). For a fixed \(\omega \in {\Omega }\), denote by \({X}^*(\omega )\) the solution set of the SAVE (3), that is,

We assume that \({X}^*(\omega )\) is nonempty.

Theorem 7

Let \({\Omega }=\{\omega ^1,...,\omega ^N\}\). For each \(\omega \in {\Omega }\), suppose that \(\textrm{F}(x,\omega )\) defined by (6) is BD-regular at any \(x\in {X}^*(\omega )\) and \(\{A(\omega ),B(\omega )\}\) is an \(R_0\) pair. Then there is a constant \(\eta >0\) such that

Proof

By Theorem 6, there exists a constant \(\eta > 0\) such that

Therefore

where the second inequality comes from the Schwarz inequality. \(\square \)

Theorem 7 indicates that, for an optimal solution \(x^*\) of ERM problem (7), it holds that

Unlike an error bound for AVE (1), the left hand side of the inequality (32) is in general positive at a solution of ERM problem (7). Nevertheless, the inequality (32) suggests that the expectation of the distance from \(x^*\) to the solution set \({X}^*(\omega )\) is bounded by the expectation residual function \({\Theta }(x^*)\). This nice property tells us that the solutions of ERM problem (7) may have a minimum sensitivity with respect to random variables in SAVE (3). In this sense, the solutions of ERM problem (7) are robust for SAVE.

In our previous paper [30], we proved that the AVE (1) is uniquely solvable for any \(b\in \Re ^{n}\) if the interval matrix \([A -|B|, A+|B|]\) is regular. For the definitions of the interval matrix and its regularity, one may refer to [21, 30] for more detailed information. Based on Theorem 7 and regularity conditions, we obtain the following result.

Theorem 8

Let \({\Omega }=\{\omega ^1,...,\omega ^N\}\). Suppose that the interval matrix \([A(\omega )-|B(\omega )|, A(\omega )+|B(\omega )|]\) is regular for each \(\omega \in {\Omega }\). Then there is a constant \(\lambda >0\) such that

where \(x^*(\omega )\) denotes the unique solution of SAVE (3) for each fixed \(\omega \in {\Omega }\).

Proof

For a fixed \(\omega \in {\Omega }\), by [30, Theorem 2.2], the SAVE (3) is uniquely solvable and so \(\{A(\omega ),B(\omega )\}\) is an \(R_0\) pair. Thus, according to Theorem 7, it only needs to prove that \(\textrm{F}(x,\omega )\) defined by (6) is BD-regular at \(x^*(\omega )\). In fact, for \(\omega \in {\Omega }\), the element V in \(\partial \textrm{F}(x,\omega )\) takes the format

where \(D_x=\textrm{diag}(d_x)\) with

and \(\tau \) takes any number in the interval \([-1, 1]\). Since \(diag (d_x)\in [-I,I]\), then \(B(\omega )D_x\in [-|B(\omega )|, |B(\omega )|]\) and hence \(A(\omega )+B(\omega )D_x\in [A-|B(\omega )|, A+|B(\omega )|]\). This together with the regularity of \([A(\omega )-|B(\omega )|, A(\omega )+|B(\omega )|]\) implies that \(A(\omega )+B(\omega )D_x\) is nonsingular, i.e., V is nonsingular. This completes the proof. \(\square \)

5 A Class of Special SAVE

We consider in this section the following SAVE:

This SAVE is a special case of SAVE (3) by letting \(B(\omega )=-I\), see [8]. Due to the existence of absolute value, SAVE (33) is nonsmooth. However, it can be equivalently transformed into a smooth SAVE by exploiting its special structure. For \(i=1,...,n\), denote by \(a_i(\omega )^T\) the i-th row of \(A(\omega )\). Then

where \(\alpha ^3:=(\alpha _1^3,...,\alpha _n^3)^T\) for \(\alpha =(\alpha _1,...,\alpha _n)^T\in \Re ^n\). Thus, solving SAVE (33) is equivalent to solving the following SAVE:

Since the function \(f(t)=|t|^3\) is twice continuously differentiable on \(\Re \) with \(f'(t)=3|t|t\) and \(f''(t)=6|t|\), SAVE (34) is smooth. Let

The corresponding ERM model for SAVE (34) takes the form

and its sample average approximation is

Since the function \(\tilde{\textrm{F}}(\cdot ,\omega )\) is twice continuously differentiable on \(\Re ^n\), the problems (35) and (36) are both smooth and hence some smoothing-type algorithms are applicable. The limiting behavior of the stationary points of (36) is established as below.

Theorem 9

Let the support set \({\Omega }\) be a compact set. Assume that \(x^k\) is a stationary point of (36) and \(x^*\) is an accumulation point of \(\{x^k\}\). Then \(x^*\) is a stationary point of (35) with probability one.

Proof

For the sake of simplicity, we assume without of generality that \(\lim \limits _{k\rightarrow \infty }x^k=x^*\). Let \(\mathcal {D}\) be a compact and convex set containing the whole sequence \(\{x^k\}\). For each k, since \(x^k\) is a stationary point of (36), then

By the continuity of \({{\tilde{F}}}, \nabla _x{{\tilde{F}}}\) and \(\nabla ^2_x {{\tilde{F}}}_j (j = 1,...,n)\) on the compact set \(\mathcal {D}\times {\Omega }\), there exists a constant \({M}>0\) such that

hold for every \((x,\omega )\in \mathcal {D}\times {\Omega }.\) Therefore

where the second inequality comes from the mean-value theorem and (38), and the third inequality follows from (38) and (39). Thus

Moreover, it follows from (38) that

According to [22, Theorem 16.8], we obtain

Then, by letting \(k\rightarrow \infty \) in (37) and taking (40) and (41) into account, we have

Hence \(x^*\) is a stationary point of (35) with probability one. \(\square \)

6 A Smoothing Gradient Method

In this section, we present a smoothing gradient method to solve SAVE (3) and give some numerical examples to explain effectiveness of the method. For the ERM problem (7), here we use the well-known sample average approximation (SAA) method to get the approximation of \({\Theta }({x})\) as

where \(\omega ^i (i=1,...,N)\) is sample points generated by the given distribution. Since the function \(\textrm{F}({x},\omega )=A(\omega )x+B(\omega )|x|- b(\omega )\) is nonsmooth with respect to x, we can not apply smoothing-type methods to solve the problem (42). To overcome this difficulty, we consider the following smoothing function:

where N is the number of sample points and

Obviously, \(\textrm{F}_N({x},\omega )\) is smooth with respect to x and \(\lim \limits _{N\rightarrow \infty }\textrm{F}_N({x},\omega )=\textrm{F}({x},\omega )\) for each \(x\in \Re ^{n}\). By using \(\textrm{F}_N({x},\omega )\), we define the following smooth approximation of the problem (42):

It is easy to see that if \({x}^*\) is the global optimal solution of the problem (43), then \({x}^*\) is an approximation to the global optimization solution of the problem (42) when N is sufficiently large. Moreover, \({\Theta }_N({x})\) is smooth with respect to x and its gradient is

with \(T_x:=\textrm{diag}\Big (\frac{x_i}{\sqrt{x_i^2+1/N}}\Big ).\) Now we present a smoothing gradient method to solve the problem (43).

- Algorithm A:

-

(A smoothing gradient method)

- Step 0 :

-

Given \(x^0\in \Re ^n, \sigma , \rho \in (0,1)\) and \(\epsilon > 0.\) Set \(k:=0.\)

- Step 1 :

-

If \(\Vert \nabla _{x}{\Theta }_N({x}^k)\Vert \le \epsilon \), then stop.

- Step 2 :

-

Compute the search direction \(d_k=-\nabla _x{\Theta }_N({x}^k).\)

- Step 3 :

-

Let the step-size \(\alpha _k:=\rho ^{l_k}\), where \(l_k\) is the smallest nonnegative integer l satisfying

$$\begin{aligned} {\Theta }_N(x^k+ \rho ^{l}d_k)\le (1-\sigma \rho ^{2l}) {\Theta }_N(x^k). \end{aligned}$$ - Step 4 :

-

Set \(x_{k+1}:= x_k+\alpha _kd_k.\) Set \(k:=k+1\) and go to Step l.

Theorem 10

For any positive integer N, let \(\{x^k\}\) be the iteration sequence generated by Algorithm A. Then any accumulation point \(x^*\) of \(\{x^k\}\) satisfies \(\nabla _{x}{\Theta }_N({x}^*)= 0.\)

Proof

Since \(x^*\) is an accumulation point of \(\{x^k\}\), there exists an infinite subsequence \(\{x^k\}_{k\in K}\subset \{x^k\}\) such that \(\lim \limits _{k\in K,k\rightarrow \infty } x^k=x^*\). Since \(\{ {\Theta }_N(x^k)\}\) is monotonically decreasing, it is convergent and so \(\lim \limits _{k\rightarrow \infty } {\Theta }_N(x^k)={\Theta }_N({x}^*).\) Note that if \({\Theta }_N({x}^*)=0\), then by (43) we have for \(i=1,...,N,\)

which together with (44) gives \(\nabla _{x}{\Theta }_N({x}^*)= 0.\) Now we assume \({\Theta }_N({x}^*)>0\). Since

it follows from \(\lim \limits _{k\rightarrow \infty } {\Theta }_N(x^k)={\Theta }_N({x}^*)>0\) that \(\lim \limits _{k\rightarrow \infty } \alpha _k=0\). Moreover, by the line search criterion in Step 3, we have for all \(k\in {K}\),

i.e.,

Since \( {\Theta }_N(x)\) is continuously differentiable everywhere, by letting \(k\rightarrow \infty \) with \(k\in {K}\) on both sides of the above inequality, we have \(\nabla _{x}{\Theta }_N({x}^*)^{T}d^{*}\ge 0,\) where \(d^*=-\nabla _{x}{\Theta }_N({x}^*)\). It follows that \(-\Vert \nabla _{x}{\Theta }_N({x}^*)\Vert ^2\ge 0\) and so \(\nabla _{x}{\Theta }_N({x}^*)=0.\) We complete the proof. \(\square \)

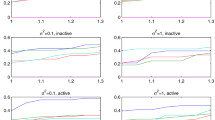

In the following, we give some numerical examples to explain effectiveness of Algorithm A. All experiments are carried on a PC with CPU of 12th Gen Intel(R) Core(TM) i9-12,900 2.40 GHz and RAM of 64.0GB. The program codes are written in MATLAB and run in MATLAB R2018a environment. In our experiments, we choose \(\rho = 0.2,~\sigma = 10^{-3},~\epsilon =10^{-5}\). For the purpose of comparison, we also apply the smoothing gradient method proposed by Du [8] to solve these examples. The main difference between two methods is that for the generated sampling \(\{\omega ^1,...,\omega ^{N}\}\), our method takes the smooth parameter \(\mu =\frac{1}{N}\) which is fixed, while Du’s method [8] takes the smooth parameter \(\mu =\mu _k\) which is updated in every iteration.

In the following tables, N-SGM denotes Algorithm A, SGM denotes the smoothing gradient method proposed in [8], N is the number of sample points, IT denotes the iteration numbers, CPU denotes the CPU time in seconds and \(x^k\) denotes the obtained solution when the algorithms terminate.

Example 1

Consider SAVE (3), in which

This SAVE has a solution \(x^*=(1, 2)^T\). We generate samples \(\omega ^i, i = 1, 2,...,N,\) which obey the the uniform distribution of [0, 1]. We take \(x^0=\textrm{rand}(2,1)\) as the starting point. Numerical results are given in Table 1.

Example 2

Consider SAVE (3), in which \(\omega =(\omega _1,\omega _2,\omega _3,\omega _4)^T\) and

where \(\omega _1\) and \(\omega _2\) are distributed uniformly, \(\omega _3\) is distributed exponentially, \(\omega _4\) is distributed normally, respectively, with the following parameters:

This SAVE has a solution \(x^*=(1, 1, 1, 1)^T\). We take \(x^0=\textrm{rand}(4,1)\) as the starting point. Numerical results are given in Table 2.

Example 3

Consider SLCP (4), in which \(\omega =(\omega _1,\omega _2,\omega _3,\omega _4)^T\) and

where \(\omega _1\) is distributed uniformly, \(\omega _2\) is distributed exponentially, \(\omega _3\) and \(\omega _4\) are distributed normally, respectively, with the following parameters:

This SLCP has the solution \(u^*=(0,0,0,1)^T\) and \(v^*=(1,1,1,0)^T\). By Remark 1, to obtain the solution of this SLCP, we may solve the SAVE (3) with

We take \(x^0=\textrm{rand}(4,1)\) as the starting point and the numerical results are given in Table 3.

Example 4

Consider SLCP (4), in which \(\omega =(\omega _1,\omega _2,\omega _3,\omega _4,\omega _5,\omega _6)^T\) and

where \(\omega _1\) and \(\omega _2\) are distributed uniformly, \(\omega _3\) is distributed exponentially, \(\omega _4\), \(\omega _5\) and \(\omega _6\) are distributed normally, respectively, with the following parameters:

This SLCP has the solution \(u^*=(1,1,0,0)^T\) and \(v^*=(0,0,2,2)^T\). Same as Example 3, we solve the SAVE (3) with

We take \(x^0=(1.1,1,1)^T\) as the starting point and the numerical results are given in Table 4.

From numerical results listed in Tables 1, 2, 3 and 4, we may observe that, as the sample size N increases, both N-SGM and SGM converge stably, that is, there is clearly convergent trend toward the solutions of the test problems. Moreover, we may see that N-SGM has some advantages over SGM because the former converges faster as the sample size N increases.

7 Conclusions

We have established the relationship between the SAVE (3) and the SLCP (4). We have presented the ERM formulation (7) for SAVE and have given its Monte Carlo approximation. Some results related to existence of solutions, exponential convergence rate and robustness of solutions have been derived. In particular, we have discussed a class of special SAVE (33) and have obtained a result related to convergence of stationary points. Finally, we have proposed a smoothing gradient method to solve SAVE and have given some numerical examples to explain its effectiveness. Although Guass/Subguass distribution satisfies the condition (25), it is worth further studying the computational complexity of Monte Carlo approximation method when the underlying distribution of random vector is not Guass/Subguass or even unknown in advance. Hence how to establish the computational complexity of Monte Carlo approximation method for SAVE under weaker conditions is an important but challenging topic and we prefer to leave this as our future research topic.

Data Availibility

The data sets generated during the current study are available from the authors on reasonable request.

References

Anderson, D.F., Higham, D.J., Sun, Y.: Computational complexity analysis for Monte Carlo approximations of classically scaled population processes. Multiscale Model. Sim. 16(3), 1206–1226 (2018)

Ali, R., Pan, K.: The new iteration methods for solving absolute value equations. Appl. Math. 68(1), 109–122 (2023)

Cottle, R.W., Pang, J.-S., Stone, R.E.: The Linear Complementarity Problem. Academic Press, New York (1992)

Chen, X., Fukushima, M.: Expected residual minimization method for stochastic linear complementarity problems. Math. Oper. Res. 30, 1022–1038 (2005)

Chen, X., Wets, R.J.-B., Zhang, Y.: Stochastic variational inequalities: residual minimization smoothing sample average approximations. SIAM J. Optim. 22, 649–673 (2012)

Chen, X., Zhang, C., Fukushima, M.: Robust solution of monotone stochastic linear complementarity problems. Math. Progr. 117(1), 51–80 (2009)

Cui, X., Zhang, L.: Stochastic \(R_0\) matrix linear complementarity problems: the Fischer-Burmeister function-based expected residual minimization. Comput. Appl. Math. 40, 183 (2021)

Du, S.Q., Sun, J.J., Niu, S.Q., Zhang, L.P.: Stochastic absolute value equations. J. Appl. Math. Comput. 69, 921–939 (2023)

Haghani, F.K.: On generalized Traub’s method for absolute value equations. J. Optim. Theory Appl. 166, 619–625 (2015)

Hladík, M.: Bounds for the solutions of absolute value equations. Comput. Optim. Appl. 69, 243–266 (2018)

Iqbal, J., Iqbal, A., Arif, M.: Levenberg-Marquardt method for solving systems of absolute value equations. J. Comput. Appl. Math. 282, 134–138 (2015)

Mangasarian, O.L.: A hybrid algorithm for solving the absolute value equation. Optim. Lett. 9(7), 1469–1474 (2015)

Mangasarian, O.L., Meyer, R.R.: Absolute value equations. Linear Algebra Appl. 419, 359–367 (2006)

Lin, G.H., Fukushima, M.: New reformulations for stochastic complementarity problems. Optim. Meth. Softw. 21, 551–564 (2006)

Lin, G.H., Luo, M.J., Zhang, D.L., Zhang, J.: Stochastic second-order-cone complementarity problems: expected residual minimization formulation and its applications. Math. Progr. 165, 197–233 (2017)

Liu, H.W., Huang, Y.K., Li, X.L.: Partial projected Newton method for a class of stochastic linear complementarity problems. Numer. Alg. 58, 593–618 (2011)

Liu, Z.M., Du, S.Q., Wang, R.Y.: Nonsmooth Levenberg-Marquardt type method for solving a class of stochastic linear complementarity problems with finitely many elements. Alg. 9, 83 (2016)

Luo, M.J., Wang, L.Z.: The deterministic ERM and CVaR reformulation for the stochastic generalized complementarity problem. Japan J. Indust. Appl. Math. 34, 321–333 (2017)

Luo, M.J., Zhang.Y.: Smoothing sample average approximation method for solving stochastic second-order-cone complementarity problems. J. Inequal. Appl. 2018: 77 (2018)

Mezzadri. F.: On the solution of general absolute value equations. Appl. Math. Lett. 107, 106462 (2020)

Neumaier, A.: Interval Methods for Systems of Equations. Cambridge University Press, Cambridge (1990)

Patrick, B.: Probability and Measure. Wiley, New York (1995)

Pang, J.S., Qi, L.: Nonsmooth equations: motivation and algorithms. SIAM J. Optim. 3, 443–465 (1993)

Rohn, J.: A theorem of the alternatives for the equation \(Ax+ B|x| = b\). Linear Multilinear A. 52, 421–426 (2004)

Rohn, J.: On unique solvability of the absolute value equation. Optim. Lett. 3, 603–606 (2009)

Rohn, J., Hooshyarbakhsh, V., Farhadsefat, R.: An iterative method for solving absolute value equations and sufficient conditions for unique solvability. Optim. Lett. 8, 35–44 (2014)

Shapiro A.: Computational complexity of stochastic programming: Monte Carlo sampling approach. In Proceedings of the International Congress of Mathematicians, pages 2979–2995, Hyderabad, India, 2010

Shapiro, A., Xu, H.: Stochastic mathematical programs with equilibrium constraints, modelling and sample average approximation. Optim. 57, 395–418 (2008)

Shen, S., Wu, S.: On the unique solution of the generalized absolute value equation. Optim. Lett. 15(6), 2017–2024 (2021)

Tang, J.Y., Zhou, J.C.: A quadratically convergent descent method for the absolute value equation \(Ax+B|x|=b\). Oper. Res. Lett. 47, 229–234 (2019)

Wang, G.X., Zhang, J., Zeng, B., Lin, G.H.: Expected residual minimization formulation for a class of stochastic linear second-order cone complementarity problems. Eur. J. Oper. Res. 265, 437–447 (2018)

Wu, S.L., Li, C.X.: The unique solution of the absolute value equations. Appl. Math. Lett. 76, 195–200 (2018)

Zhang, C., Chen, X.J.: Smoothing projected gradient method and its application to stochastic linear complementarity problems. SIAM J. Optim. 20, 627–649 (2009)

Zhou, G.L., Caccetta, L.: Feasible semismooth Newton method for a class of stochastic linear complementarity problems. J. Optim. Theory Appl. 139(2), 379–392 (2008)

Acknowledgements

The authors would like to thank two referees for their valuable suggestions that greatly improved the paper. Especially, we are grateful to Professor Shouqiang Du for providing us with his code.

Author information

Authors and Affiliations

Corresponding author

Ethics declarations

Conflict of interest

The authors declare that they have no conflict of interest.

Additional information

Communicated by Nobuo Yamashita.

Publisher's Note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

This work was partially supported by the National Natural Science Foundation of China (12371305) and the Shandong Provincial Natural Science Foundation (ZR2023MA020, ZR2021MA066).

Rights and permissions

Springer Nature or its licensor (e.g. a society or other partner) holds exclusive rights to this article under a publishing agreement with the author(s) or other rightsholder(s); author self-archiving of the accepted manuscript version of this article is solely governed by the terms of such publishing agreement and applicable law.

About this article

Cite this article

Tang, J., Zhou, J. Expected Residual Minimization Formulation for Stochastic Absolute Value Equations. J Optim Theory Appl (2024). https://doi.org/10.1007/s10957-024-02527-x

Received:

Accepted:

Published:

DOI: https://doi.org/10.1007/s10957-024-02527-x