Abstract

This paper presents an approximate method for solving a class of fractional optimization problems with multiple dependent variables with multi-order fractional derivatives and a group of boundary conditions. The fractional derivatives are in the Caputo sense. In the presented method, first, the given optimization problem is transformed into an equivalent variational equality; then, by applying a special form of polynomial basis functions and approximations, the variational equality is reduced to a simple linear system of algebraic equations. It is demonstrated that the derived linear system has a unique solution. We get an approximate solution for the initial optimization problem by solving the final linear system of equations. The choice of polynomial basis functions provides a method with such flexibility that all initial and boundary conditions of the problem can be easily imposed. We extensively discuss the convergence of the method and, finally, present illustrative test examples to demonstrate the validity and applicability of the new technique.

Similar content being viewed by others

Avoid common mistakes on your manuscript.

1 Introduction

In this article, an efficient approximate method for solving a class of fractional optimization problems is developed. The discussed problem is formulated by a bilinear form, which is a real valued functional of multiple dependent variables with multi-order fractional derivatives. Fractional derivatives are defined in the Caputo sense.

An important type of fractional optimization problems are fractional variational problems (FVPs). General optimality conditions have been developed for FVPs in previous works. For instance, Euler–Lagrange equations for FVPs with Riemann–Liouville and Caputo derivatives are derived in [1] and [2], respectively. Optimality conditions for FVPs with functionals containing both fractional derivatives and integrals are presented in [3]. Such formulas are also developed for FVPs with other definitions of fractional derivatives in [4, 5]. The general form of the Euler–Lagrange equations for FVPs with Riemann–Liouville, Caputo, Riesz–Caputo and Riesz–Riemann–Liouville derivatives is derived in [6]. A number of other generalizations of Euler–Lagrange equations for problems with free boundary conditions can be found in [7–10].

Optimal solutions of FVPs should satisfy Euler–Lagrange equations [1–3]. Hence, solving Euler–Lagrange equations leads to optimal solutions to FVPs. Except for some special cases [11], it is hard to find exact solutions for Euler–Lagrange equations. There exist examples of numerical methods, developed and applied by researchers of the field, for solving various classes of FVPs. Some of them can be found in [12–17].

It is known that for optimization problems with bilinear form operators, there exists an equivalent variational equality [18]. Building on this existence, we develop a method for solving multidimensional optimization problems with multi-order fractional derivatives and a group of boundary conditions. First, equivalent variational equality of the given multi dimensional optimization problem is derived; then, by expanding unknown functions in terms of special forms of polynomial basis functions and substituting them in the variational equality, a linear system of algebraic equations is achieved. It is proved that the derived system of equations has a unique solution. By approximating fractional derivative operators with Legendre orthonormal polynomial basis functions, the linear system turns into an approximate linear system. By solving the subsequent approximate system of equations, we determine unknown coefficients of the expansions for each variable. Thus, we get polynomial functions as approximate solutions for the problem. The main advantage of our method over the schemes presented in [12, 13, 15, 16] is that we easily derive a linear system of equations that can be solved instead of the main optimization problem. The existence and uniqueness of the solution for the derived linear system is guaranteed. We also get smooth approximate solutions, in terms of polynomials, that satisfy all initial and boundary conditions of the problem. Examples demonstrate that by applying only few number of approximations we can achieve satisfactory results.

2 Problem Formulation

Operator \(\text{ B }\) is defined as follows

where the product space \(\prod _{i=1}^{(m+1)n} L_2[t_0,t_1]\) is equipped with the following product norm

Assumption 2.1

Operator \(\text{ B }\) is considered to have the following properties

-

(i)

Bilinearity. For all \(U, V, W\in \prod _{i=1}^{(m+1)n} L_2[t_0,t_1]\) and \(a, b \in \mathbb {R}\)

$$\begin{aligned} \text {B}(aU+bV,W)= & {} a\text {B}(U,W)+b\text {B}(V,W),\\ \text {B}(W,aU+bV)= & {} a\text {B}(W,U)+b\text {B}(W,V). \end{aligned}$$ -

(ii)

Boundedness. There exists a constant \(d>0\) such that

$$\begin{aligned} \mid \text {B}(U,V) \mid \le d \parallel U \parallel _{\pi } \parallel V \parallel _{\pi }, \quad U,V \in \prod _{i=1}^{(m+1)n} L_2[t_0,t_1]. \end{aligned}$$ -

(iii)

Symmetry.

$$\begin{aligned} \text {B}(U,V)=\text {B}(V,U),\quad U,V \in \prod _{i=1}^{(m+1)n} L_2[t_0,t_1]. \end{aligned}$$ -

(iv)

Strong positivity. There exists \(c>0\) such that

$$\begin{aligned} c\parallel U \parallel _{\pi }^2 \le \text {B}(U,U),\quad U \in \prod _{i=1}^{(m+1)n} L_2[t_0,t_1]. \end{aligned}$$Functional J is defined as follows:

$$\begin{aligned} \quad J[u_1,\dots ,u_n]:= \frac{1}{2}\text {B}(U,U)-\text {L}(U)+C, \end{aligned}$$(2)

where \( \text{ L }: \prod _{i=1}^{(m+1)n} L_2[t_0,t_1] \rightarrow \mathbb {R} \) is a bounded linear operator, C is a real constant,

and the fractional derivative is defined in the Caputo sense

In cases for which \(\alpha =n\), the Caputo derivative is defined as \(^C _{t_0}D^{\alpha }_{t}u(t):=u^{(n)}(t)\). We consider that there exists an element, say \((u_1^*,\dots ,u_n^*)\in \prod _{i=1}^{n} E_i[t_0,t_1]\), that minimizes the functional J on the space \( \prod _{i=1}^{n} E_i[t_0,t_1]\),

For this article, our goal was to find approximate minimizing solution for the functional J on \( \prod _{i=1}^{n} E_i[t_0,t_1]\).

3 Variational Equality

Without any loss of generality, we let \(t_0=0\), \(t_1=1\) and \(t\in [0,1]\) in problem (2).

Theorem 3.1

The minimization problem of Sect. 2 is equivalent to the following variational problem

for \((u_1,\dots ,u_n) \in \prod _{i=1}^{(m+1)n} E_i[0,1]\) fixed and \((v_1,\dots ,v_n) \in \prod _{i=1}^{(m+1)n} E^*[0,1]\), where

Proof

Let

then, we have

Since \(\text {B}(V,V)\) is positive for all \(V\ne 0\), the necessary and sufficient condition of minimality, \(\varGamma '(0)=0\), is equivalent with the following condition:

and the proof is completed. \(\square \)

Corollary 3.1 shows that variational equality (3) determines a unique solution for minimization problem (2).

Corollary 3.1

Let \((u_1,\dots ,u_n)\) and \((w_1,\dots , w_n)\) be two minimizing solutions for the functional J; then, we have

Proof

According to Theorem 3.1, we have

where

Let \((v_1,\dots ,v_n)=(u_1,\dots ,u_n)-(w_1,\dots ,w_n)\); then, by Assumption 2.1 we get

and the proof is completed by considering (1). \(\square \)

4 Approximate Solution of the Variational Equality

In this section, we present an approximate method for solving variational equality (3).

Consider expansions \(u_{j,k}(t)\), \(1\le j \le n\), in the following form

Here, \(\phi _j\)s, \(j\in \{0\}\bigcup \mathbb {N}\) are shifted Legendre orthonormal polynomials

and each \(w_j\) is the Hermit interpolating polynomial that satisfies all initial and boundary conditions of \(u_j\). Now let

then,

forms a linear system of \(n(k+1)\) equations and unknowns. By solving linear system (7), we achieve coefficients of expansions (4). Thus, we get an approximate solution for minimization problem (2) in terms of polynomials. Note that expansion (4) and consequent approximate solutions satisfy all the boundary conditions of the problem. In Lemma 4.2, we show that linear system (7) has a unique solution. First, we state a lemma, which plays an important role in our discussion in this section and the subsequent section.

Lemma 4.1

Let

where \(f_{0}^j,f_{1}^j\) are given constant values. There exists a sequence of polynomial functions \(\{s_l(t)\}_{l\in \mathbb {N}}\) in E[0, 1] such that \(s_l \rightarrow f\) with respect to \(\parallel . \parallel _n\).

Proof

[14]. \(\square \)

Lemma 4.2

For any \(k\in \mathbb {N}\), linear system (7) has unique solution.

Proof

Let

\(\square \)

Here \(P_k[0,1]\) denotes the space of polynomials with a degree of at most k. According to Assumption 2.1, we have

If \(\parallel U \parallel _{\pi } \rightarrow \infty \), then \(J[u_1,\dots ,u_n] \rightarrow \infty \). Hence, \(\eta _k >-\infty \). By the definition of \(\eta _k\), there exists a sequence \(\{(\gamma _{1,j}^k, \dots , \gamma _{n,j}^k)\}_{j\in \mathbb {N}} \subseteq \prod _{i=1}^n P_k[0,1] \bigcap E_i[0,1]\) such that \(\lim _{j \rightarrow \infty } J[\gamma _{1,j}^k, \dots , \gamma _{n,j}^k]=\eta _k\). In addition, it can be observed that

where

It is obvious that \((\frac{\gamma _{1,i}^k+\gamma _{1,j}^k}{2},\dots ,\frac{\gamma _{n,i}^k +\gamma _{n,j}^k}{2})\) \(\in \prod _{i=1}^n P_k[0,1] \bigcap E_i[0,1]\) and \(J[\frac{\gamma _{1,i}^k+\gamma _{1,j}^k}{2}\), \(\dots , \frac{\gamma _{n,i}^k+\gamma _{n,j}^k}{2}]\ge \eta _k\). Hence

Inequality (8) shows that the sequence \(\{(\gamma _{1,j}^k, \dots , \gamma _{n,j}^k)\}_{j\in \mathbb {N}}\) is a Cauchy sequence with respect to the product norm \(\Vert . \Vert _{\pi }\). On the other hand, according to Lemma 4.1, \( P_k[0,1] \bigcap E_i[0,1]\) is a closed subset of the Banach space \((C^{\lceil \alpha _m \rceil }[0,1],\Vert . \Vert _{\lceil \alpha _m \rceil })\). Thus, it is a complete metric space with respect to \(\Vert . \Vert _{\lceil \alpha _m \rceil }\). Because \( P_k[0,1] \bigcap E_i[0,1]\) is a finite dimensional Banach space, it is complete with respect to any norm. Hence, there exists an element, say \((\gamma _1^k,\dots ,\gamma _n^k)\in \prod _{i=1}^n P_k[0,1] \bigcap E_i[0,1]\), such that \((\gamma _{1,j}^k,\dots ,\gamma _{n,j}^k)\rightarrow (\gamma _1^k,\dots ,\gamma _n^k)\). According to Assumption 2.1, bilinear operator \(\text{ B }\) and linear operator \(\text{ L }\) are bounded. So it can be easily observed that \( \eta _k=\lim _{j \rightarrow \infty } J[\gamma _{1,j}^k,\dots ,\gamma _{n,j}^k]=J[\gamma _1^k,\dots ,\gamma _n^k]. \) So far we have shown that there exists an element \((\gamma _1^k,\dots ,\gamma _n^k)\in \prod _{i=1}^n P_k[0,1] \bigcap E_i[0,1]\) that minimizes the functional J on \(\prod _{i=1}^n P_k[0,1] \bigcap E_i[0,1]\). Therefore, according to Theorem 3.1, \(\varGamma ^k\) is a solution for the system (7), where

Now we are going to show that the solution is unique. Suppose \((u_1^k,\dots ,u_n^k)\) and \((w_1^k,\dots ,w_n^k)\) are two solutions of system (7)

then, we have

where

Now let \(V=U_k-W_k\); then, \( c \parallel U_k-W_k \parallel _{\pi } \le \text{ B }(U_k-W_k,U_k-W_k)=0. \)

Referring to the definition of \(\parallel . \parallel _{\pi }\) in (1), we get \(\parallel u_j^k-w_j^k \parallel _{L_2[0,1]}=0\), and the uniqueness is proved given that norms are equivalent in finite dimensional Banach spaces.\(\square \)

Now we rewrite linear system (7) explicitly in terms of unknown coefficients \(c_{i,j}\), \(1 \le i \le n\), \(0\le j \le k\). First, \(U_k\) is decomposed as follows:

Considering expansions (4), we have

By applying (9) and (10), system (7) can be rewritten as follows:

We need to solve linear system (11) to find approximate solution for problem (3). In order to simplify the calculation of each \(\text{ B }(\lambda _{r,l},\mu _{i,j})\), in Lemma 4.3 we approximate elements \(^C_{0}D^{\alpha _r}_{t}\psi _l(t)\), \(1\le r \le m\), \(0\le l \le k\), by the Legendre orthonormal polynomials \(\phi _j\)s, \(j\in \{0\}\bigcup \mathbb {N}\), utilizing the following theorem.

Theorem 4.1

Let \(f\in L^2[0,1]\), \(r_m=\sum _{j=0}^m c_j \phi _j\), where \(c_{j}=\int _0^1 f(t)\phi _j(t){\hbox {d}}t\); then,

Proof

[19]. \(\square \)

Lemma 4.3

Consider

where

for \( {\lceil \alpha \rceil \le 2{\lceil \alpha _m \rceil }+k-i}\) and \(\delta (\alpha _m ,k,i,\alpha ,j)=0\), for \({\lceil \alpha \rceil >2{\lceil \alpha _m \rceil }+k-i}\); then,

Proof

By utilizing (6), we get

With respect to the fact that \( ^C_{0}D^{\alpha }_{t} t^k= \frac{\varGamma (k+1)}{\varGamma (k+1-\alpha )}t^{k-\alpha }\), when \({\lceil \alpha \rceil \le k}\), and \(^C_{0}D^{\alpha }_{t} t^k=0\) for \({\lceil \alpha \rceil > k}\) [20], we get the Caputo derivative of \(\psi _r(t)\)

when \({\lceil \alpha \rceil \le 2{\lceil \alpha _m \rceil }+k-i,}\) and \(^C_{0}D^{\alpha }_{t}\psi _r(t)=0\) when \({\lceil \alpha \rceil > 2{\lceil \alpha _m \rceil }+k-i}\). Now by applying Theorem 4.1, we approximate \(t^{2{\lceil \alpha _m \rceil }+k-i-\alpha }\) for \(\lceil \alpha \rceil \le 2{\lceil \alpha _m \rceil }+k-i\) with Legendre orthonormal basis functions \(\phi _s\)s, and we get

Substituting (13) and (14) in (12) we get \(d_s^{\alpha }\), and the proof is completed. \(\square \)

By applying Lemma 4.3 , system (11) is approximated as follows

By solving system (15), the following approximate solution for the problem is achieved:

5 Convergence

In this section, we discuss the convergence of the method presented in section 4. In Theorem 5.1, we show that, with an increase in values of k and \(\gamma \) in (16), the approximate minimizing function \((u_{1,k}^{\gamma },\dots ,u_{n,k}^{\gamma })\) tends to \((u_{1}^{*},\dots ,u_{n}^{*})\). First, we state some basic properties of Caputo fractional derivatives needed in Lemma 5.1 and Theorem 5.1.

Let \(f\in C^{n}[0,1]\). For the Caputo fractional derivative of order \(\alpha \), \(n-1<\alpha \le n\), \( ^C _{0}D^{\alpha }_{t}f(t) \in C[0,1] \). We also have [20]

Lemma 5.1

Suppose \(C_{j,k}\), \(1\le j \le n\), \(k\in \mathbb {N}\), is the solution of system (11); then, for a sufficiently large value of \(\gamma \in \mathbb {N} \), there exists a unique solution \(C_{j,k}^{\gamma }\) for the system (15), where

Proof

By utilizing Lemma 4.3, we get

According to Assumption 2.1, the bilinear operator \(\text{ B }\) and the linear operator \(\text{ L }\) are bounded. Hence,

Consider linear systems (11) and (15) as follows:

where

According to Lemma 4.2, linear system (7) has a unique solution. So \(\det M_{(k+1)n\times (k+1)n}\ne 0\), and (18) and (19) show us that, for a sufficiently large value of \(\gamma \), \(\det M_{(k+1)n\times (k+1)n}^{\gamma }\ne 0\). This means that, for a sufficiently large value of \(\gamma \), the linear system (15) has a unique solution. Let

for a sufficiently large \(\gamma \); then,

Here \(\tilde{M}_{i,j}\) and \(\tilde{M}_{i,j}^{\gamma }\) are matrices achieved by deleting the ith row and jth column of matrices \(M_{(k+1)n\times (k+1)n}\) and \({M_{(k+1)n\times (k+1)n}^{\gamma }}\), respectively. \(0 < \epsilon <1\) is given. Because the determinant of a matrix is a polynomial constructed by matrix entries, considering (18) and (19) it can be observed that, for a sufficiently large value of \(\gamma \),

By (18)–(20), it is also observed that for a large enough \(\gamma \),

Hence,

and the proof is completed. \(\square \)

Theorem 5.1

Suppose \(\epsilon >0\) is given; then, for sufficiently large values of k and \(\gamma \) we have

Proof

Let \((u_{1,k},\dots ,u_{n,k})\) be the solution of system (7):

According to Theorem 3.1

So, considering (22) and (23), it can be observed that

By Lemma 4.1, there exists a sequence, say \(\{(v_{1,k},\dots ,v_{n,k})\}_{k\in \mathbb {N}}\), \((v_{1,k},\dots ,v_{n,k})\in \prod _{i=1}^{n} P_k[0,1] \bigcap E_i[0,1]\), such that \(v_{i,k} \rightarrow u_i^*\), \(1\le i \le n\) with respect to \(\parallel . \parallel _{\lceil \alpha _m\rceil }\). Considering (24), we get

where

From (25) we obtain

and

Now by referring to Assumption 2.1, we get

\(\epsilon >0\) is given. With respect to (17), it can be easily observed that with an increase in the value of k, \(\parallel U^*-V_k \parallel _{\pi }\) tends to zero. So inequality (28) shows that for a large enough value of k,

Now for a fixed value of k that satisfies (29) and (30), according to (17) and Lemma 5.1, \(\gamma \) can be set sufficiently large such that

and the proof is completed. \(\square \)

6 Illustrative Test Problems

In this section, we apply the method presented in section 4 for solving the following test examples. The well-known symbolic software “Mathematica” has been employed for calculations and creating figures.

Example 6.1

Consider the following one dimensional problem:

with exact solution \(u(t)=t^{\frac{5}{2}}\) and \(J[u]=0\). For the above problem we have

Considering \(k=\gamma =2\) in approximation (16), we get

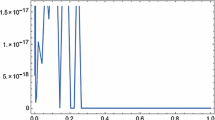

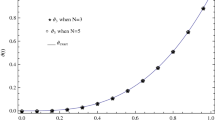

The approximate solution \(u_2^2(t)\) and the exact solution \(u(t)=t^{\frac{5}{2}}\) are plotted in Fig. 1. The absolute errors in example 6.1 are shown in Table 1.

Example 6.2

Consider the following two dimensional problem:

with exact solution \({u}_1(t)=t^{2.5}+t^2+1\), \({u}_2(t)=t^{4.5}\) and \(J[u_1,u_2]=0\). For the above problem we have

Considering \(k=4\) and \(\gamma =5\) in approximations (16), we get

The approximate solution \(u_{1,4}^5(t)\) and \(u_{2,4}^5(t)\) and the exact solution \({u}_1(t)=t^{2.5}+t^2+1\) and \({u}_2(t)=t^{4.5}\) are plotted in Fig. 2. The absolute errors in example 6.2 are shown in Table 2.

7 Conclusions

An approximate method was developed for solving a class of fractional optimization problems. First, the optimization problem was transformed into a variational equality; then, using a special type of polynomial basis functions, the variational equality was reduced to a linear system of algebraic equations with a unique solution. The approximate solutions are smooth polynomial functions with high flexibility in satisfying all initial and boundary conditions of the problem. The convergence of the method was extensively discussed, and illustrative test examples were presented to demonstrate efficiency of the new technique.

References

Agrawal, O.M.P.: Formulation of Euler–Lagrange equations for fractional variational problems. J. Math. Anal. Appl. 272, 368–379 (2002)

Almeida, R., Torres, D.F.M.: Necessary and sufficient conditions for the fractional calculus of variations with Caputo derivatives. Commun. Nonlinear Sci. Numer. Simul. 16, 1490–1500 (2011)

Almeida, R., Torres, D.F.M.: Calculus of variations with fractional derivatives and fractional integrals. Appl. Math. Lett. 22, 1816–1820 (2009)

Agrawal, O.M.P.: Fractional variational calculus in terms of Riesz fractional derivatives. J. Phys. Math. Theo. 40, 6287–6303 (2007)

Almeida, R., Torres, D.F.M.: Fractional variational calculus for nondifferentiable functions. Comput. Math. Appl. 61, 3097–3104 (2011)

Agrawal, O.M.P.: Generalized variational problems and Euler–Lagrange equations. Comput. Math. Appl. 59, 1852–1864 (2010)

Agrawal, O.M.P.: Fractional variational calculus and transversality conditions. J. Phys. Math. Gen. 39, 10375–10384 (2006)

Agrawal, O.M.P.: Generalized Euler–Lagrange equations and transversality conditions for FVPs in terms of the Caputo derivative. J. Vib. Control 13, 1217–1237 (2007)

Malinowska, A.B., Torres, D.F.M.: Generalized natural boundary conditions for fractional variational problems in terms of the Caputo derivative. Comput. Math. Appl. 59, 3110–3116 (2010)

Yousefi, S.A., Dehghan, M., Lotfi, A.: Generalized Euler–Lagrange equations for fractional variational problems with free boundary conditions. Comput. Math. Appl. 62, 987–995 (2011)

Baleanu, D., Trujillo, J.J.: On exact solutions of class of fractional Euler–Lagrange equations. Nonlinear Dyn. 52, 331–335 (2008)

Agrawal, O.M.P.: A general finite element formulation for fractional variational problems. J. Math. Anal. Appl. 337, 1–12 (2008)

Pooseh, S., Almeida, R., Torres, D.F.M.: Discrete direct methods in the fractional calculus of variations. Comput. Math. Appl. 66, 668–676 (2013)

Lotfi, A., Yousefi, S.A.: A numerical technique for solving a class of fractional variational problems. J. Comput. Appl. Math. 237, 633–643 (2013)

Wang, D., Xiao, A.: Fractional variational integrators for fractional variational problems. Commun. Nonlinear Sci. Numer. Simul. 17, 602–610 (2012)

Maleki, M., Hashim, I., Abbasbandy, A., Alsaedi, A.: Direct solution of a type of constrained fractional variational problems via an adaptive pseudospectral method. J. Comput. Appl. Math. 283, 41–57 (2015)

Lotfi, A., Yousefi, S.A.: Epsilon–Ritz method for solving a class of fractional constrained optimization problems. J. Optim. Theor. Appl. 163, 884–899 (2014)

Zeidler, E.: Applied Functional Analysis Applications to Mathematical Physics. Springer, New York (1991)

Rivlin, T.J.: An Introduction to the Approximation of Functions. Dover Publications, New York (1981)

Kilbas, A.A., Srivastava, H.M., Trujillo, J.J.: Theory and Applications of Fractional Differential Equations. North-Holland Mathematics Studies, Amsterdam (2006)

Author information

Authors and Affiliations

Corresponding author

Rights and permissions

About this article

Cite this article

Lotfi, A., Yousefi, S.A. A Generalization of Ritz-Variational Method for Solving a Class of Fractional Optimization Problems. J Optim Theory Appl 174, 238–255 (2017). https://doi.org/10.1007/s10957-016-0912-3

Received:

Accepted:

Published:

Issue Date:

DOI: https://doi.org/10.1007/s10957-016-0912-3

Keywords

- Caputo fractional derivative

- Fractional optimization problem

- Polynomial basis functions

- Variational equality