Abstract

The inverse eigenvalue problem for a weighted Helmholtz equation is investigated. Based on the finite spectral data, the density function is estimated. The inverse problem is formulated as a least squared functional with respect to the density function, with a \(L^2\) regularity term. The continuity of the eigenpairs with respect to the density is proved. Mathematical properties of the continuous and the discrete optimization problems are established. A conjugate gradient algorithm is proposed. Numerical results for 1D and 2D inverse eigenvalue problem of the weighted Helmholtz equation are presented to illustrate the effectiveness and efficiency of the proposed algorithm.

Similar content being viewed by others

Avoid common mistakes on your manuscript.

1 Introduction

An inverse eigenvalue problem concerns the reconstruction or identification of the parameters in the governing differential equation from the prescribed spectral data. It arises in various applications, such as control design, system identification, seismic tomography, principal component analysis, exploration and remote sensing, antenna array processing, geophysics, molecular spectroscopy, particle physics, structure analysis, circuit theory, mechanical system simulation, and so on [1].

The inverse eigenvalue problem for a weighted Helmholtz equation is a kind of the classical inverse Sturm-Liouville problem [2,3,4,5]. Given the first finite smallest eigenvalues, the density function in the weighted Helmholtz equation is recovered. McCarthy uses projection of the boundary value problem and its coefficients onto appropriate vector spaces, which leads to a matrix inverse problem [6, 7]. Andrew proposes a new algorithm for solving the inverse Sturm-Liouville problem of reconstructing a symmetric potential from eigenvalues [8]. Drignei deals with the recovery of the potential coefficient of a Sturm-Liouville operator from three known sequences of eigenvalues corresponding respectively to three sets of Dirichlet boundary conditions [9]. Jiang et al. investigate the inverse second-order Sturm-Liouville problem and the inverse fourth-order Sturm-Liouville problem, and derive trace formulas showing relations between the unknown coefficients and eigenvalues explicitly for both problems [10]. Gao et al. propose a new iterative method to recover the impedance of Sturm-Liouville problem from the finite eigenvalues [11]. Based on natural eigenfrequencies, Zhang et al. investigate the damage identification of elastic vibration structure, where level set method is introduced to represent two different material regions [12]. For the same objective functional, Zhang et al. propose the piecewise constant level set method to represent the shape and topology of the damaged region [13]. Lee and Shin introduce a frequency response function-based structural damage identification method for beam structures [14].

The finite element method is used to solve the eigenvalue problem. There are many excellent works on it, and we refer to [15, 16] and references cited therein. The refined estimates for Galerkin approximations of the eigenvalues and eigenvectors of selfadjoint eigenvalue problem is investigated in [17, 18]. The error estimates for the generalised Dirichlet eigenvalue problem with stochastic coefficients is presented in [19].

This paper is organized as follows. In Sect. 2, the mathematical formulations of the inverse eigenvalue problem of the weighted Helmholtz equation is described. In Sect. 3, the properties of existence, stability and Fr\(\acute{e}\)chet derivative of the continuous optimization problems are established. In Sect. 4, the properties of existence and the convergence of the discrete optimization problems are given. A conjugate gradient method is proposed in Sect. 5. 1D and 2D numerical results of inverse eigenvalue problem of the weighted Helmholtz equation are presented In Sect. 6.

2 Problem Statement

Consider the weighted Helmholtz equation

where \({\Omega \subset {\mathbb {R}}^d} \ (d=1,2)\) is a bounded and connected domain and \(\partial \Omega \) is the boundary of the domain. \(\rho (x)\) is the density function and is assumed to satisfy the condition

where \( \rho _0\) and \( \rho _1\) are two constants. \((\lambda ,u)\) is the eigenpair of the minus Laplace operator \(-\Delta \) with density function \(\rho (x)\). The weak formulation of (1) and (2) is given by

By rearranging, (4) admits a countable sequence of real eigenvalues [see 18]

and the corresponding eigenfunctions

The i-th eigenpair \((\lambda _i(\rho ),u_i(\rho ))\) is obtained by the min-max principle [18]

where \(H_0^1(\Omega )\) is the subspace of \(H^1(\Omega )\) consisting of functions which vanish at the boundary of \(\Omega \) in the sense of trace. Notice that the eigenfunction \(u_i(\rho )\) in (7) is not unique, since for a nonzero constant C, \(C u_i(\rho )\) is also the eigenfunction corresponding to \(\lambda _i(\rho )\). When \(\lambda _i(\rho )\) is multiple, \(u_i(\rho )\) is assigned one of the eigenfunctions corresponding to \(\lambda _i(\rho )\). For any \(\lambda _i(\rho )\) we let

The eigenfunction \(u_i(\rho )\) in (7) is deemed to be one of the eigenfunctions corresponding to \( \lambda _i(\rho )\), that is, \(u_i(\rho )\in M(\lambda _i(\rho )) \). The eigenfunctions in (6) are normalized and orthogonalized to satisfy

It is known that an inverse eigenvalue problem, especially for the real-valued case, may not necessarily have an exact solution [1]. It is also known that the spectral information, in practice, is often obtained by experimental devices and thus inevitably contaminated with measurement errors. That is, there are situations where an approximate solution best in the sense of least squares would be satisfactory. In order to deal with the instability of the inverse problem, a \(L^2\) regularity term is added. The inverse eigenvalue problem is reformulated as the following constrained optimization problem:

where \({\widehat{\lambda }}_i,\ (i=1,2,\ldots , N)\) are the measured data of the first N eigenvalues, \(\varepsilon \) is the regularity parameter and \({\mathcal {A}}\) is the admissible set of the density function, that is,

3 Existence and Stability of the Optimization Problem

In this section, we present the properties of existence, stability and Fr\(\acute{e}\)chet derivative of the continuous optimization problem (10) and (4).

Lemma 3.1

For any \(\rho \in {\mathcal {A}}\), we have

Proof

For \(i=1,2,\ldots , N \), by (7), we set \(u_i^0, \ u_i^1 \in V_i \subset H_0^1(\Omega ) \text { and} \ dim (V_i)=i\) such that

and

Consequently, by (3), (7), (13) and (14), we have

\(\square \)

Lemma 3.2

For \( i=1,2,\ldots , N\), assume that \((\lambda _i(\rho ),u_i(\rho ))\) is the i-th eigenpair of (4), and (\({\tilde{\lambda }}_i,{\tilde{u}}_i)\) is the eigenpair of (4) replacing \(\rho \) by \({\tilde{\rho }}\), then

Proof

For \( i=1,2,\ldots , N\), since \((\lambda _i(\rho ),u_i(\rho ))\) satisfies (4) and (\({\tilde{\lambda }}_i,{\tilde{u}}_i)\) satisfies (4) by replacing \(\rho \) by \({\tilde{\rho }}\), we obtain

\(\square \)

Replacing \(\rho \in {\mathcal {A}}\) in (4)–(9) by \(\rho ^n\), \(\rho ^*\in {\mathcal {A}}\), the eigenpairs \((u _{i}(\rho ^{n}), \lambda _{i}(\rho ^{n}))\) and \((u_{i}(\rho ^{*}), \lambda _{i}(\rho ^{*})),\ i=1,\ 2,\ \ldots ,\) are obtained, respectively.

Lemma 3.3

For \( i=1,2,\ldots ,N,\) let \(\rho ^{n},\ \rho ^{*} \in {\mathcal {A}}\) and \(\rho ^{n} {\mathop {\rightharpoonup }\limits ^{*}} \rho ^{*}\ \text {in} \ L^{\infty }(\Omega )\) as \(n\rightarrow \infty \), then \(\lambda _{i}(\rho ^n)\rightarrow \lambda _{i}(\rho ^*)\). Moreover, there exists a subsequence \(u_{i}(\rho ^n)\in M(\lambda _i(\rho ^n))\), and some \(u_{i}(\rho ^*)\in M(\lambda _i(\rho ^*))\), satisfying \(u_{i}(\rho ^n)\rightarrow u_{i}(\rho ^*)\ in \ H^{1}(\Omega ).\)

Proof

For \( i=1,2,\ldots ,N,\) by Lemma 3.1, the boundedness of \(\lambda _i(\rho ^n)\) implies that there exists a subsequence, also denoted by \(\lambda _i(\rho ^n)\), such that

Notice that by (9) (replacing \(\rho \) by \(\rho ^n\)) the eigenfunction \(u_i(\rho ^n)\in M(\lambda _i(\rho ^n))\) satisfies

By Poincar\(\acute{e }\) inequality, we have

where C is a constant independent of \(\rho ^n\). Thus, there exists a subsequence, also denoted by \(u_i(\rho ^n)\), such that

We need to prove that \((\lambda _i^*,u_i^*)\) is the eigenpair of (4) corresponding to \(\rho ^*\) for \( i=1,2,\ldots ,N.\) Notice that the eigenpair \((\lambda _i(\rho ^n), \ u_i(\rho ^n))\) corresponding to \(\rho ^n\) satisfies (4) (replacing \(\rho \) by \(\rho ^n\)), that is,

The RHS item of equation of (21) could be rewritten by

By (11), (17), (19), (20) and \(\rho ^{n} {\mathop {\rightharpoonup }\limits ^{*}} \rho ^{*}\) in \(L^{\infty }(\Omega )\), we have

By (20), we have

Combining (23) and (24), and taking the limitation of equation (21), we have

Therefore, it concludes that \((\lambda _i^*,u_i^*)\ ( i=1,2,\ldots ,N)\) are eigenpairs of (4) corresponding to \(\rho ^*\).

Furthermore, replacing \(\rho ,\ u_{i}(\rho ), \ \lambda _{i}(\rho )\) by \(\rho ^n,\ u_{i}(\rho ^n), \ \lambda _{i}(\rho ^n)\), and replacing \({\tilde{\rho }},\ {\tilde{u}}_{i}, \ {\tilde{\lambda }}_{i}\) by \(\rho ^*,\ u_{i}^*, \ \lambda _{i}^*\) in Lemma 3.2, respectively, we have

with (11), (20), (17), (19) and \(\rho ^{n}{\mathop {\rightharpoonup }\limits ^{*}} \rho ^{*} \ \text {in} \ L^{\infty }(\Omega )\). Combining (20) and (26), we have

By the orthogonality (9) (replacing \(\rho \) by \(\rho ^n\)), (27), (17) and \(\rho ^{n}{\mathop {\rightharpoonup }\limits ^{*}} \rho ^{*} \ \text {in} \ L^{\infty }(\Omega )\), the orthogonality of \(u_i^*\) is obtained by

Finally, we prove \((\lambda ^*_{i},u^*_{i})\ ( i=1,2,\ldots ,N)\) are the i-th eigenpairs for \(\rho ^{*}\) by induction. For \(i=1\), by (7) (replacing \(\rho \) by \(\rho ^*\)) we have

On the other hand, by (17), (7) ( replacing \(\rho \) by \(\rho ^n\)) and \(\rho ^{n}{\mathop {\rightharpoonup }\limits ^{*}} \rho ^{*} \ \text {in} \ L^{\infty }(\Omega )\), we have

Combining (29) and (30), we obtain \(\lambda _{1}^{*}=\lambda _{1}(\rho ^{*})\). The eigenfunction \(u_{1}^{*} \) corresponding to \( \lambda _{1}^{*}\) is also the eigenfunction corresponding to \(\lambda _{1}(\rho ^{*})\). That is, \(u_{1}^{*} \in M (\lambda _{1}(\rho ^{*}))\). Thus, we set \(u_{1}(\rho ^{*})\in M (\lambda _{1}(\rho ^{*}))\) to satisfy \(u_{1}^{*}=u_{1}(\rho ^{*})\).

For \( i=1,2,\ldots , k\), assuming that

we need to prove that \(\lambda _{k+1}^{*}=\lambda _{k+1}(\rho ^{*})\) and \(u_{k+1}^{*}=u_{k+1}(\rho ^{*})\). By (7) and (9) (replacing \(\rho \) by \(\rho ^*\)), (28) and (32), we have

Let \(u^*=\alpha _{1}u_{1}^{*}+\alpha _{2}u_{2}^{*}+...+\alpha _{k}u_{k}^{*}+\alpha _{k+1}u_{k+1}^{*}.\) By the orthogonality (28), we have

By (5) (replacing \(\rho \) by \(\rho ^*\)) and (31), we have

Then, we use reduction to absurdity to prove \(\lambda _{k+1}^{*}\ge \lambda _{k}^{*}\). If the claim is negated to assume that

combining with (34), (35) and (36), we have

By (33) and (38), we can get \(\lambda _{k+1}(\rho ^{*})<\lambda _{k}^{*}=\lambda _{k}(\rho ^{*})\), which incurs the contradiction. Therefore, we conclude that

Combining with (34), (35), (36) and (39), we obtain

Substituting (40) into (33), we obtain

On the other hand, we need to prove that \(\lambda _{k+1}(\rho ^{*})\ge \lambda _{k+1}^{*}.\) First, we can conclude that

If the claim is negated to assume that

since \(u_{1}(\rho ^{n}),u_{2}(\rho ^{n}),...,u_{k}(\rho ^{n})\) are orthogonal by (9) (replacing \(\rho \) by \(\rho ^n\)), then

Thus,

Taking the limitation of (44) as \(n\rightarrow \infty \), with (27), (32) and (9) (replacing \(\rho \) by \(\rho ^*\)), we obtain

Therefore, \(u_{k+1}(\rho ^{*})\equiv 0\), which contradicts the definition of a nontrivial eigenfunction.

By (7) (replacing \(\rho \) by \(\rho ^n\)) and (42), we have

Let \(u^n=\beta _{1}u_{1}(\rho ^{n})+\beta _{2}u_{2}(\rho ^{n})+,...,\beta _{k}u_{k}(\rho ^{n})+\beta _{k+1}u_{k+1}(\rho ^{*})\). By (9) and (4) (replacing \(\rho \) by \(\rho ^n\) and \(\rho ^*\), respectively), (11), (17), (27), (31), (32) and \(\rho ^{n}{\mathop {\rightharpoonup }\limits ^{*}} \rho ^{*} \ in \ L^{\infty }(\Omega )\), we have

and

By (5) (replacing \(\rho \) by \(\rho ^*\)) and (47), we have

Combining (17), (46), (47), (48) and (49), it implies that

With (41) and (50), we conclude that \(\lambda _{k+1}^{*}=\lambda _{k+1}(\rho ^{*})\). Moreover, the eigenfunction \(u_{k+1}^{*}\) corresponding to \(\lambda _{k+1}^{*}\) is also the eigenfunction corresponding to \(\lambda _{k+1}(\rho ^{*})\). That is, \(u_{k+1}^{*} \in M (\lambda _{k+1}(\rho ^{*}))\). We set \(u_{k+1}(\rho ^{*})\in M (\lambda _{k+1}(\rho ^{*}))\) to satisfy \(u_{k+1}^{*}=u_{k+1}(\rho ^{*})\). By induction, we conclude that \(\lambda ^*_{i}=\lambda _{i}(\rho ^*)\) and \(u^*_{i}=u_{i}(\rho ^*)\), for \(i=1,2,\ldots ,N\). Combined with (17) and (27), the proof is complete. \(\square \)

Theorem 3.1

There exists at least one minimizer to the optimization problem (10) and (4).

Proof

By Lemma 3.1, we conclude that the minimization of \(F(\rho )\) is finite over the admissible set \({\mathcal {A}}\). Therefore, there exists a sequence \(\rho ^n\in {\mathcal {A}}\) such that

The uniform boundedness of the sequence \(\rho ^n\) in \(L^\infty (\Omega )\) implies that there exists a subsequence, also denoted by \(\rho ^n\), and some \(\rho ^*\in {\mathcal {A}}\) such that

By Lemma 3.3, we have

Now the convergence of \(\lambda _i(\rho ^n)\) and the lower semicontinuity of a norm imply that

Therefore \(\rho ^*\) is a minimizer of \(F(\rho )\). \(\square \)

Next theorem shows the stability of the optimization problem with respect to the data perturbation.

Theorem 3.2

For \(i=1,2,\ldots , N\), let \({\widehat{\lambda }}_i^n\) be a sequence such that

and \(\rho ^n\) be the minimizer of the optimization problem (10) and (4) with \({\widehat{\lambda }}_i\) replaced by \({\widehat{\lambda }}^n_i\). Then there exists a subsequence of \(\rho ^n\) weak\(-*\) converging in \(L^\infty (\Omega )\) to a minimizer of the optimization problem (10) and (4).

Proof

Since \(\rho ^n\) is the minimizer of the optimization problem (10) and (4) with \({\widehat{\lambda }}_i\) replaced by \({\widehat{\lambda }}^n_i\), for all \(\rho \in {\mathcal {A}}\), we have that

The uniform boundedness of the sequence \(\rho ^n\) in \(L^\infty (\Omega )\) implies that there exists a subsequence, also denoted by \(\rho ^n\), and some \(\rho ^*\in {\mathcal {A}}\) such that

By Lemma 3.3, there exists a subsequence of \(\lambda _i(\rho ^n)\), also denoted by \(\lambda _i(\rho ^n)\), such that

Thus, by the convergence of \({\widehat{\lambda }}_i^n\rightarrow {\widehat{\lambda }}_i\), we have

Now, for all \(\rho \in {\mathcal {A}}\), we have that

Therefore \(\rho ^*\) is a minimizer of (10) and (4). \(\square \)

Theorem 3.3

For \(\rho \in {\mathcal {A}} \) and \(i=1,2,\ldots ,N,\) if eigenvalues \(\lambda _i(\rho )\) of (4) are simple, then the functional \(F{: {\mathcal {A}}\subset L^{\infty }(\Omega )\rightarrow {\mathbb {R}}}\) is Fr\(\acute{e}\)chet differentiable and its Fr\(\acute{e}\)chet derivative \(F^\prime (\rho )\) at \(\rho \in {\mathcal {A}}\) is given by

Proof

Replacing \(\rho \) by \((\rho +\gamma )\) in (7), we define the i-th eigenpair \((\lambda _i (\rho +\gamma ), u_i(\rho +\gamma ))\) for \((\rho +\gamma )\) by

Similar to the proof of Lemma 3.3, we can show that as \(\gamma \rightarrow 0 \ \text {in} \ L^{\infty }(\Omega )\),

where \(u_{i}(\rho )\in M(\lambda _{i}(\rho ))\). Combined with (7) and (60), we have

With (4), (7) and (60), the numerator of (63) could be evaluated by

and the denominator of (63) could be evaluated by

Combining (64) and (65), (63) could be simplified by

Thus,

By (9) and (62), we have \(\Vert u_i(\rho )\Vert _{H^1(\Omega )}<C\) and \(\Vert u_i(\rho +\gamma )\Vert _{H^1(\Omega )}<C\) when \(\Vert \gamma \Vert _{L^\infty (\Omega )}\) is small enough. Therefore, the denominator of (67) could be bounded. Combined with Lemma 3.1, (67) is evaluated by

Thus, by (62), we have

The Fr\(\acute{e}\)chet derivative \(\lambda _i^\prime (\rho )\) at \(\rho \in {\mathcal {A}},\ i=1,2,\ldots ,N,\) is given by

Consequently,

\(\square \)

4 Finite-Element Approximation and Its Convergence

The finite-element method is applied to discretize the optimization problem (10) and (4). The domain \(\Omega \) is partitioned into regular elements \({\mathcal {T}}_h\). The linear finite-element space \(V_h\) is defined by

where \(P_1(T_i)\) denotes the space of linear (bilinear) polynomials on the element \(T_i\). The discrete admissible set of the \(\rho _h\) is defined by

and \({\mathcal {A}}_h\subset {\mathcal {A}} \). Then the optimization problem (10) and (4) is approximated by the discrete minimization optimization

where the eigenpairs \((\lambda _{i,h}(\rho _h),u_{i,h}(\rho _h)),\ i=1,2,\ldots , m=\dim V_h\), satisfy the following weak formulations

The i-th eigenpair \((\lambda _{i,h}(\rho _h),u_{i,h}(\rho _h))\) is obtained by the min-max principle [see 18]

where

By rearranging, (73) admits a sequence of real eigenvalues

and the corresponding eigenfunctions

which can be chosen to satisfy

Analogous to Lemma 3.1, the boundedness of \(\lambda _{i,h}(\rho _h)\) could be obtained as follows:

Lemma 4.1

For any \(\rho _h \in {\mathcal {A}}_h\), we have

Lemma 4.2

For \( i=1,2,\ldots ,m\), let \(\rho _h^{n},\ \rho _h^{*} \in {\mathcal {A}}_h\) and \(\rho _h^{n}\rightarrow \rho _h^{*} \) in any norm as \(n\rightarrow \infty \), then \(\lambda _{i,h}(\rho _h^n)\rightarrow \lambda _{i,h}(\rho _h^*)\). Moreover, there exists a subsequence \(u_{i,h}(\rho _h^n)\in M_h(\lambda _{i,h}(\rho _h^n))\), and some \(u_{i,h}(\rho _h^*)\in M_h(\lambda _{i,h}(\rho _h^*))\), satisfying \(u_{i,h}(\rho _h^n)\rightarrow u_{i,h}(\rho _h^*)\ in \ H^{1}(\Omega ),\) as \(n\rightarrow \infty \).

Proof

The proof is analogue to Lemma 3.3, replacing \(\rho ^{n},\ \rho ^{*}, \ \lambda _{i}(\rho ^n),\ u_{i}(\rho ^n)\) by \(\rho _h^{n},\ \rho _h^{*},\ \lambda _{i,h}(\rho _h^n), u_{i,h}(\rho _h^n)\), respectively. \(\square \)

Theorem 4.1

There exists at least one minimizer to the optimization problem (72) and (73).

Proof

By Lemma 4.1, we conclude that the minimization of \(F_h(\rho _h)\) is finite over the admissible set \({\mathcal {A}}_h\). Therefore, there exists a sequence \(\rho ^n_{h}\in {\mathcal {A}}_h\) such that

The uniform boundedness of the sequence \(\rho _h^n\) in \(L^\infty (\Omega )\) implies that there exists a subsequence, also denoted by \(\rho _h^n\), and some \(\rho _h^*\) such that

By Lemma 4.2, and the lower semicontinuity of a norm, we obtain

Therefore, \(\rho _h^*\) is a minimizer of \(F_{h}(\rho _h)\). \(\square \)

Lemma 4.3

Assume that \((\lambda _{i,h}(\rho _h),u_{i,h}(\rho _h))\) (\( i=1,2,\ldots ,M\)) are the eigenpairs of (73), and (\({\tilde{\lambda }}_i,{\tilde{u}}_i)\) are the eigenpairs of (4) replacing \(\rho \) by \({\tilde{\rho }}\), then

Proof

The proof is analogue to Lemma 3.2, replacing \(\rho , \ \lambda _{i}(\rho ),\ u_{i}(\rho )\) by \(\rho _h\), \(\lambda _{i,h}(\rho _h), u_{i,h}(\rho _h)\), respectively. \(\square \)

We introduce the standard interpolation operator \({\mathcal {I}}_h:\ W^{1,\infty }(\Omega )\rightarrow {V}_h\) and the projection operator \({\mathcal {R}}_h: \ H^{1}(\Omega )\rightarrow {V}_h\) defined by

It is well known [see 20, 21] that, for any \(p>d=dim(\Omega )\), we have

and

Lemma 4.4

Let \(\rho _h\in {\mathcal {A}}_h\), \(\rho ^*\in {\mathcal {A}} \ \text {in} \ L^{\infty }(\Omega )\), if \(\rho _h {\mathop {\rightharpoonup }\limits ^{*}} \rho ^{*}\ \text {in} \ L^{\infty }(\Omega )\) as \(h\rightarrow 0\), then \(\lambda _{i,h}(\rho _{h})\rightarrow \lambda _{i}(\rho ^*)\). Moreover, there exists a subsequence \(u_{i,h}(\rho _{h})\in M_h(\lambda _{i,h}(\rho _{h}))\), and some \(u_{i}(\rho ^*)\in M(\lambda _i(\rho ^*))\), satisfying \(u_{i,h}(\rho _{h})\rightarrow u_{i}(\rho ^*)\ in \ H^{1}(\Omega ),\) as \(h\rightarrow 0\).

Proof

By Lemma 4.1, for \( i=1,2,\ldots , N\), we have

The finite-element solutions \(\lambda _{i,h}(\rho _0)\) and \(\lambda _{i,h}(\rho _1)\) have the following convergence [see 18]

Therefore,

It implies that there exists a subsequence, also denoted by \(\lambda _{i,h}(\rho _{h})\), such that

By (78), the eigenfunction \(u_{i,h}(\rho _h)\in M_h(\lambda _{i,h}(\rho _h))\) satisfies

and by Poincar\(\acute{e }\) inequality, we have

where C is a constant independent of h. Thus, there exists a subsequence, also denoted by \(u_{i,h}(\rho _h)\), such that

as \(h\rightarrow 0\).

We need to prove that \((\lambda _i^*,u_i^*)\) is the eigenpair of (4) corresponding to \(\rho ^*\) for \( i=1,2,\ldots , N\). Notice that the eigenpair \((\lambda _{i,h}(\rho _h), \ u_{i,h}(\rho _h))\) corresponding to \(\rho _h\) satisfies the weak formula (73). Substituting \(v_h\) in (73) by \({\mathcal {R}}_h v, \ \forall v\in H_{0}^{1}(\Omega )\), we obtain

The RHS item of equation of (89) could be rewritten by

By (11), (71), (87), (85), (88), (84) and \(\rho _h {\mathop {\rightharpoonup }\limits ^{*}} \rho ^{*}\ \text {in} \ L^{\infty }(\Omega )\) as \(h\rightarrow 0\), we have

Taking the limitation of equation (89) as \(h\rightarrow 0\), combined with (91) and (92), we have

Therefore, it could conclude that \((\lambda _i^*,u_i^*)\ ( i=1,2,\ldots ,N)\) are eigenpairs of (4) corresponding to \(\rho ^*\). Replacing \({\tilde{\rho }},\ {\tilde{u}}_{i}, \ {\tilde{\lambda }}_{i}\) by \(\rho ^*,\ u_{i}^*, \ \lambda _{i}^*\) in Lemma 4.3, we have

with (11), (71), (88), (87), (85) and \(\rho _{h}{\mathop {\rightharpoonup }\limits ^{*}} \rho ^{*} \ in \ L^{\infty }(\Omega )\). Combining (88) and (94), we have

as \(h\rightarrow 0\).

Taking the limitation of (78) as \(h\rightarrow 0\), by (71), (87), (95), (85) and \(\rho _h{\mathop {\rightharpoonup }\limits ^{*}} \rho ^{*} \ in \ L^{\infty }(\Omega )\) as \(h\rightarrow 0\), the orthogonality of \(u_i^*\) is obtained by

Finally, we prove \((\lambda ^*_{i},u^*_{i})\) (\(i=1,2,\ldots ,N\)) is the i-th eigenpair of (4) corresponding to \(\rho ^*\) by induction. For \(i=1\), by (7) (replacing \(\rho \) by \(\rho ^*\)), we have

On the other hand, by (85), (74), (71), (84) and \(\rho _h{\mathop {\rightharpoonup }\limits ^{*}} \rho ^{*} \ in \ L^{\infty }(\Omega )\) as \(h\rightarrow 0\), we have

Combining (97) and (98), we obtain \(\lambda _{1}^{*}=\lambda _{1}(\rho ^{*})\) and \(u_{1}^{*} \in M (\lambda _{1}(\rho ^{*}))\). We set \(u_{1}(\rho ^{*})\in M (\lambda _{1}(\rho ^{*}))\) to satisfy \(u_{1}^{*}=u_{1}(\rho ^{*})\).

For \( i=1,2,\ldots , k\), assuming that

we need to prove that \(\lambda _{k+1}^{*}=\lambda _{k+1}(\rho ^{*})\) and \(u_{k+1}^{*}=u_{k+1}(\rho ^{*})\). On one hand, similar to the proof of (41), we have

On the other hand, we can conclude that

If the claim is negated to assume that

since \(u_{1,h}(\rho _h),u_{2,h}(\rho _h),...,u_{k,h}(\rho _h)\) are orthogonal by (78), then

By the orthogonality (78), we obtain

Taking the limitation of (104) as \(h\rightarrow 0\), with (84), (95), (100) and (9) (replacing \(\rho \) by \(\rho ^*\)), we obtain

Therefore, \( {\mathcal {R}}_h u_{k+1}(\rho ^{*})\equiv 0\). By (84), it implies that \(u_{k+1}(\rho ^{*})\equiv 0\), which contradicts the definition of a nontrivial eigenfunction.

Let \({\tilde{u}}_h=\beta _{1}u_{1,h}(\rho _h)+\beta _{2}u_{2,h}(\rho _h)+,...,\beta _k u_{k,h}(\rho _h)+\beta _{k+1} {\mathcal {R}}_h u_{k+1}(\rho ^{*})\). By (78), (95), (100), (84), \(\rho _h{\mathop {\rightharpoonup }\limits ^{*}} \rho ^{*} \ in \ L^{\infty }(\Omega )\) as \(h\rightarrow 0\) and (9) (replacing \(\rho \) by \(\rho ^*\)), we have

and

By (5) and (9) (replacing \(\rho \) by \(\rho ^*\)), (107) could be further evaluated by

Combining (85), (106), (107), (108) and (109), it implies that

With (101) and (110), we obtain \(\lambda _{k+1}^{*}=\lambda _{k+1}(\rho ^{*})\). The eigenfunction \(u_{k+1}^{*}\) corresponding to \(\lambda _{k+1}^{*}\) is also the eigenfunction corresponding to \(\lambda _{k+1}(\rho ^{*})\). That is, \(u_{k+1}^{*} \in M (\lambda _{k+1}(\rho ^{*}))\). We set \(u_{k+1}(\rho ^{*})\in M (\lambda _{k+1}(\rho ^{*}))\) to satisfy \(u_{k+1}^{*}=u_{k+1}(\rho ^{*})\). By induction, we conclude that \(\lambda ^*_{i}=\lambda _{i}(\rho ^*), \ u^*_{i}=u_{i}(\rho ^*), \ i=1,2,\ldots , N\). Combined with (85) and (95), the proof is complete. \(\square \)

Lemma 4.5

(See [21, LEMMA 4.3]) \(C^\infty (\Omega )\) is weak-* dense in \(L^\infty (\Omega )\).

Theorem 4.2

Let \(\{\rho _h^*\}_{h>0}\) be a sequence of minimizers to the discrete minimization problem (72) and (73). Then each subsequence of \(\{\rho _h^*\}_{h>0}\) has a subsequence converging to a minimizer of the continuous optimization problem (10) and (4).

Proof

The uniform boundedness of the sequence \(\rho _h^*\) in \(L^\infty (\Omega )\) implies that there exists a subsequence, also denoted by \(\rho _h^*\), and some \(\rho ^*\) such that

Lemma 4.4 implies that

By Lemma 4.5, for any \(\rho \in {\mathcal {A}}\) and fixed \(\delta >0\), there exists a \(\rho ^{\delta }\in C^\infty (\Omega )\) such that

By its construction, \(\rho ^{\delta }\in {\mathcal {A}}\). Let \(\rho _h^{\delta }={\mathcal {I}}_h\rho ^{\delta }\in {\mathcal {A}}_h\). By (83) and Lemma 4.4, we have

Noting that \(\rho _h^*\) is the minimizer of \(F_h(\cdot )\) over \({\mathcal {A}}_h\), we have

Thus, by (112), (115), (114) and the lower semicontinuity of a norm, we have

Letting \(\delta \) tend to zero, we obtain that

Therefore, \(\rho ^*\) is a minimizer of \(F(\rho )\). \(\square \)

5 Algorithm

For \(\rho \in {\mathcal {A}} \) and \(i=1,2,\ldots ,N,\) if eigenvalues \(\lambda _i(\rho )\) of (4) are simple, we solve the optimization problem (10) and (4) by the Fletcher–Reeves conjugate gradient algorithm [22]. That is, with the Fr\(\acute{e}\)chet derivative (59), the decent direction is set by

With the Fletcher–Reeves scheme [22], the conjugate coefficient \(\beta _{k-1}\) is given by

and \(\beta _{-1}=0.\) The step length \(\alpha _{k}\) in the conjugate direction \(d_{k}\) is determined by

In our numerical algorithm, by taking the derivative of \(F(\rho ^{(k)}+\alpha d_{k})\) with respect to \(\alpha \) and setting it to be zero, the step length \(\alpha _{k}\) is approximated by

Now, we summarize the Fletcher–Reeves conjugate gradient algorithm as follows:

Algorithm 5.1

STEP 1. Initialize the density function \(\rho ^{(0)}\), and set the regularity coefficient \(\varepsilon \) and the input data \({\widehat{\lambda }}_i,i=1,2,\ldots , N.\)

STEP 2. Solve the eigenpairs \((\lambda _i(\rho ^{(k)}),u_{i}(\rho ^{k})),i=1,2,\ldots , N\) of (4).

STEP 3. Determine the gradient \(F^{\prime }(\rho ^{(k)})\) by (59) and calculate the descent direction \(d_{k}\) by (116) and (117).

STEP 4. Calculate the step length \(\alpha _{k}\) by (118).

STEP 5. Update the density function \(\rho ^{(k)}\) by

STEP 6. Set \(k=k+1\) and go to STEP 2. Repeat the procedure until a stopping criterion is satisfied.

Remark 5.1

In the numerical experiments, the stopping criterion is set that the norm of the decent direction is small enough. That is, if \(\vert \vert d_{k}\vert \vert _{L^2(\Omega )}<\delta \), where \(\delta \) is a given number, the iteration of the Fletcher–Reeves conjugate gradient algorithm is stopped.

6 Numerical Results

In this section, we present numerical results of the optimization problem (10) and (4) in 1D and 2D cases. The domain is partitioned into uniform meshes. The piecewise linear (bilinear) basis functions are used. The noisy data is generated by using the following formula:

where \(\zeta \) is a uniformly distributed random variable in \( [-1,1]\) and \(\sigma \) dictates the level of noise. The eigenpairs of (4) are solved by eigs found in MATLAB. The Algorithm 5.1 is conducted in MATLAB codes.

6.1 1D Case

Set the domain \(\Omega =(0,\pi )\), which is uniformly partitioned into 2000 elements. The exact density function is set by

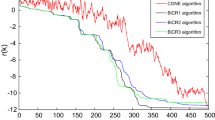

The first 5 least eigenvalues with noise level \(\sigma =0.001\) are listed in the first row of Table 1 and used as the input data. Set \(\varepsilon =10^{-6}\) and \( \delta =10^{-4}\). With the initialization of density function \(\rho ^{(0)}=1\) in the whole domain (see the dash line in Fig. 1a), the evolutions of density function are illustrated in Fig. 1, after \(k=10,~20,~30,~50,~156\) iterations. The solid curve represents the exact density function, while the dash curve represents the recovered density function. After 156 iterations, the curve of the recovered density function fits well with the one of the exact density function, but it has some oscillations. The initial and the finial first 5 least eigenvalues are listed in the second row and the third row of Table 1, respectively.

With more input data, the recovery of the density function is improved. Using the first 10 least eigenvalues as the input data (see Table 2), with the same setting parameters, the evolutions of density function are illustrated in Fig. 2, after \(k=10,~30,~50,~100,~483\) iterations. The solid curve represents the exact density function, while the dash curve represents the recovered density function. After 483 iterations, the recovered density function fits the exact one much better, compared to the numerical results shown in Fig. 1 using first 5 least eigenvalues as the input data. The initial and the finial first 10 least eigenvalues are listed in Table 2.

Various levels of noise are considered. Set \(\sigma =0.001,0.005,0.01,0.02,\) respectively. Set \(\varepsilon =10^{-6}\) and \( \delta =10^{-4}\). With the first 5 small least eigenvalues as the input data, we initialize the density function \(\rho ^{(0)}=1\) in the whole domain. The final recovered density function are illustrated in Fig. 3, with \(\sigma =0.001,0.005,0.01,0.02,\) respectively. The solid curve represents the exact density function, while the dash curve represents the recovered density function. Note that the higher level of noise incurs the inaccuracy of the recovery of density function. It may be caused by the sensitivity of the eigenvalue to the variation of the density function.

The measured spectral data with gaps are considered. As listed in the first row of Table 2, the eigenvalues \({\widehat{\lambda }}_1\), \({\widehat{\lambda }}_2\), \({\widehat{\lambda }}_3\), \({\widehat{\lambda }}_6\), \({\widehat{\lambda }}_9\) with noise level \(\sigma =0.001\) are chosen as the input data. Setting \(\varepsilon =10^{-6}\), \( \delta =10^{-4}\) and \(\rho ^{(0)}=1\) in the whole domain, the evolutions of density function are illustrated in Fig. 4, after \(k=10,~20,~30,~50,~94\) iterations. The solid curve represents the exact density function, while the dash curve represents the recovered density function. Compared to the numerical results shown in Fig. 1, it is observed that the first 5 least eigenvalues as the measured spectral data could recover the density function better than the 5 eigenvalues with gaps. After 94 iterations, the finial eigenvalues are \({\lambda }_1=0.91426600\), \({\lambda }_2=3.93801788\), \({\lambda }_3=8.47125761\), \({\lambda }_6=34.24481075 \), \({\lambda }_9=77.22502904\), respectively.

The discontinuous density case is also considered, which is defined by

The first 10 least eigenvalues with noise level \(\sigma =0.001\) are listed in the first row of Table 3 and used as the input data. Set \(\varepsilon =10^{-6}\) and \( \delta =10^{-4}\). With the initialization of density function \(\rho ^{(0)}=1\) in the whole domain (see the dash line in Fig. 5 (a)), the evolutions of density function are illustrated in Fig. 5, after \(k=10,~30,~50,~80,~368\) iterations. The solid curve represents the exact density function with discontinuity at \(x=\frac{5\pi }{16}\) and \( \frac{11\pi }{16}\), while the dash curve represents the recovered density function. After 368 iterations, the curve of the recovered density function fits well with the one of the exact density function at most part of the region \(\Omega =(0,\pi )\). It has some deviations in the vicinity of \(x=\frac{5\pi }{16}\) and \( \frac{11\pi }{16}\). The initial and the finial first 10 least eigenvalues are listed in Table 3.

6.2 2D Case

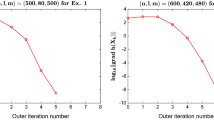

Set the domain \(\Omega =(0,\pi /a)\times (0,\pi )\) with \(a=\sqrt{0.9}\). It is uniformly partitioned into \(100\times 100\) rectangular elements. The exact density function is set by

The first 10 least eigenvalues with noise level \(\sigma =0.001\) are listed in the Table 4 and used as the input data. Set \(\varepsilon =10^{-8}\) and \( \delta =10^{-4}\). The exact density function is illustrated in Fig. 6a. With the initialization of density function \(\rho ^{(0)}=1\) in the whole domain (see Fig. 6b), the evolutions of density function are illustrated in Fig. 6, after \(k=10, ~30,~50,~165\) iterations. After 156 iterations, the recovered density function fits the exact one well. The initial and the finial first 10 least eigenvalues are listed in the Table 4.

Various levels of noise are considered. Set \(\sigma =0.001,0.005,0.01,0.02,\) respectively. Set \(\varepsilon =10^{-8}\) and \( \delta =10^{-4}\). With the first 10 small least eigenvalues as the input data and with the initialization of the density function \(\rho ^{(0)}=1\) in the whole domain, the final recovered density functions are illustrated in Fig. 7.

7 Conclusions

The inverse eigenvalue problem for a weighted Helmholtz equation is investigated. The continuity of the eigenvalue and the eigenfunction with respect to the density function is proved by induction. Then the properties of existence, stability and Fr\(\acute{e}\)chet derivative of the continuous optimization problems are established. The finite element method is applied to solve the weighted Helmholtz equation. The convergence of the discrete i-th eigenpair to the continuous i-th eigenpair is proved. The properties of existence and the convergence of the discrete optimization problems are derived. A conjugate gradient algorithm is proposed. In the numerical experiments, reconstructions of continuous density function from different input eigenvalue data are discussed, including the first 5 least eigenvalues, the first 10 least eigenvalues and 5 eigenvalues with gaps. Also, the reconstruction of a discontinuous density function from the first 10 least eigenvalues as the input data is investigated. The proposed algorithm has the capacity to reconstruct the density function efficiently, especially for the cases that the density function is continuous and the input eigenvalue data are the first few least ones without gaps.

Data Availability

The datasets generated during and/or analysed during the current study are available from the corresponding author on reasonable request.

References

Chu, M.T.: Inverse eigenvalue problems. SIAM Rev. 40(1), 1–39 (1998)

McLaughlin, J.R.: Analytical methods for recovering coefficients in differential equations from spectral data. SIAM Rev. 28(1), 53–72 (1986)

Nachman, A., Sylvester, J., Uhlmann, G.: An n-dimensional Borg–Levinson theorem. Commun. Math. Phys. 115(4), 595–605 (1988)

Rundell, W., Sacks, P.E.: Reconstruction techniques for classical inverse Sturm–Liouville problems. Math. Comput. 58(197), 161–183 (1992)

Barcilon, V.: A two-dimensional inverse eigenvalue problem. Inverse Prob. 6(1), 11–20 (1990)

McCarthy, C.M.: The inverse eigenvalue problem for a weighted Helmholtz equation. Appl. Anal. 77(1–2), 77–96 (2001)

McCarthy, C.M.: Recovery of a density from the eigenvalues of a nonhomogeneous membrane. In: Proceedings of the Third International Conference on Inverse Problems in Engineering: Theory and Practice, Port Ludlow, Washington (1999)

Andrew, A.L.: Numerical solution of inverse Sturm–Liouville problems. ANZIAM J. 45, 326–337 (2004)

Drignei, M.C.: Constructibility of an \({\rm L}_{\mathbb{R}}^2(0, a)\) solution to an inverse Sturm–Liouville problem using three dirichlet spectra. Inverse Prob. 26(2), 025003 (2009)

Jiang, X., Li, X., Xu, X.: Numerical algorithms for inverse Sturm–Liouville problems. Numer. Algorithms 89, 1278–1309 (2022)

Gao, Q., Huang, Z., Cheng, X.: A finite difference method for an inverse Sturm–Liouville problem in impedance form. Numer. Algorithms 70(3), 669–690 (2015)

Zhang, W., Du, Z., Sun, G., Guo, X.: A level set approach for damage identification of continuum structures based on dynamic responses. J. Sound Vib. 386, 100–115 (2017)

Zhang, Z., Dai, X., Chen, W.: A piecewise constant level set method for damage identification of continuum structures based on natural frequencies. Struct. Multidiscip. Optim. 60(6), 2477–2491 (2019)

Lee, U., Shin, J.: A frequency response function-based structural damage identification method. Comput. Struct. 80(2), 117–132 (2002)

Babuška, I., Osborn, J.: Eigenvalue problems. In: Finite Element Methods (Part 1). Handbook of Numerical Analysis, vol. 2, pp. 641–787. Elsevier, New York (1991)

Sun, J., Zhou, A.: Finite Element Methods for Eigenvalue Problems. CRC Press, New York (2016)

Babuška, I., Osborn, J.E.: Estimates for the errors in eigenvalue and eigenvector approximation by Galerkin methods, with particular attention to the case of multiple eigenvalues. SIAM J. Numer. Anal. 24(6), 1249–1276 (1987)

Babuška, I., Osborn, J.E.: Finite element-Galerkin approximation of the eigenvalues and eigenvectors of selfadjoint problems. Math. Comput. 52(186), 275–297 (1989)

Gilbert, A.D., Graham, I.G., Kuo, F.Y., Scheichl, R., Sloan, I.H.: Analysis of quasi-monte Carlo methods for elliptic eigenvalue problems with stochastic coefficients. Numer. Math. 142(4), 863–915 (2019)

Ciarlet, P.G.: The Finite Element Method for Elliptic Problems. Society for Industrial and Applied Mathematics, Paris (2002)

Jin, B., Zou, J.: Numerical estimation of the robin coefficient in a stationary diffusion equation. IMA J. Numer. Anal. 30(3), 677–701 (2010)

Fletcher, R., Reeves, C.M.: Function minimization by conjugate gradients. Comput. J. 7(2), 149–154 (1964)

Funding

This research is supported by Natural Science Foundation of Zhejiang Province, China (No. LY21A010011) and the European Union’s Horizon 2020 Research and Innovation Programme under the Marie Sklodowska-Cutie Grand Agreement (No. 823731 CONMECH).

Author information

Authors and Affiliations

Contributions

All authors contributed to the study conception and algorithm design. The theoretical part is derived mainly by ZZ and XC. The numerical part is mainly performed by XG. The first draft of the manuscript was written by XG and all authors commented on previous versions of the manuscript. All authors read and approved the final manuscript.

Corresponding author

Ethics declarations

Conflict of interest

The authors have no relevant financial or non-financial interests to disclose.

Additional information

Publisher's Note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

Springer Nature or its licensor (e.g. a society or other partner) holds exclusive rights to this article under a publishing agreement with the author(s) or other rightsholder(s); author self-archiving of the accepted manuscript version of this article is solely governed by the terms of such publishing agreement and applicable law.

About this article

Cite this article

Zhang, Z., Gao, X. & Cheng, X. Numerical Estimation of the Inverse Eigenvalue Problem for a Weighted Helmholtz Equation. J Sci Comput 96, 16 (2023). https://doi.org/10.1007/s10915-023-02242-1

Received:

Revised:

Accepted:

Published:

DOI: https://doi.org/10.1007/s10915-023-02242-1