Abstract

In this paper we study the continuous-time Markov decision processes with a denumerable state space, a Borel action space, and unbounded transition and cost rates. The optimality criterion to be considered is the finite-horizon expected total cost criterion. Under the suitable conditions, we propose a finite approximation for the approximate computations of an optimal policy and the value function, and obtain the corresponding error estimations. Furthermore, our main results are illustrated with a controlled birth and death system.

Similar content being viewed by others

Avoid common mistakes on your manuscript.

1 Introduction

Continuous-time Markov decision processes (CTMDPs) have wide applications to many areas, such as queueing systems, epidemiology, and telecommunication; see, for instance, Puterman (1994), Kitaev and Rykov (1995) and Guo and Hernández-Lerma (2009). Since the time interval in the real-world applications is always finite, it is meaningful to study the CTMDPs under the finite-horizon expected criterion. As is well known, a common approach to prove the existence of optimal policies for the finite-horizon expected total cost criterion is via the optimality equation which has been established under the different optimality conditions. More precisely, Miller (1968) dealt with the case of finite states and finite actions. Yushkevich (1977) and Bäuerle and Rieder (2011) discussed the case of a denumerable state space and bounded transition rates. Pliska (1975) and Gihman and Skohorod (1979) considered the case of a Borel state space and bounded transition rates. van Dijk (1988) and Guo et al. (2015) investigated the case of a denumerable state space and unbounded transition rates. Wei and Chen (2014) studied the case of a Borel state space and unbounded transition rates. All the aforementioned works discussed the finite-horizon expected total cost criterion in the class of all Markov policies except Yushkevich (1977) and Guo et al. (2015) which deal with the finite-horizon CTMDPs in the more general class of all history-dependent policies. They focus on showing that the value function is a unique solution to the optimality equation and that there exists an optimal Markov policy. Moreover, Guo et al. (2015) gave an example in which the precise forms of an optimal policy and the value function were obtained for some special cases, and solved an open problem proposed in Yushkevich (1977). However, the solution to the optimality equation cannot be solved explicitly in most cases. Hence, it is desirable to study the numerical methods for the approximate computations of an optimal policy and the value function. van Dijk (1988, (1989) used a method of time discretization to develop an approximation for the approximate computations of an optimal policy and the value function for the case of the denumerable state and action spaces and unbounded transition and cost rates, and obtained the orders of the accuracy of the approximation. From the theoretical viewpoint, the approximation in van Dijk (1988, (1989) can be computed by the recursive discrete-time dynamic programming. From the computational viewpoint, how to realize the approximation for the case of the denumerable state and action spaces is not involved in van Dijk (1988, (1989). In view of applications, it is of great importance to investigate the tractable numerical methods for the case of a denumerable state space and an uncountable action space.

In this paper we further study the issue of the approximate computations of an optimal policy and the value function for the finite-horizon expected total cost criterion basing on the existence results in Guo et al. (2015). The state space is a denumerable set and the action space is a Borel space. The transition and cost rates are allowed to be unbounded. Under the suitable conditions, we propose a finite approximation for the approximate computations of the value function and an optimal policy. To be more specific, this approximation can be divided into the following two steps. (I) The first step is to construct a sequence of the control models \(\{{\mathcal {M}}_n,n\ge 1\}\) satisfying that the state space and the set of all admissible actions are finite sets, and that the corresponding value functions converge to the value function of the original control model \({\mathcal {M}}\). To this end, we define the state space and transition rates of the control model \({\mathcal {M}}_n\) by employing the finite truncation of the corresponding elements of the original control model \({\mathcal {M}}\), and choose the set of all admissible actions of the control model \({\mathcal {M}}_n\) satisfying a certain condition. (II) The second step is to construct a suitable value iteration for approximating the value function of the control model \({\mathcal {M}}_n\). Since there are uncountable points in the finite time interval, it is infeasible to compute the value function directly from the computational viewpoint. Hence, we divide the time interval into several equal parts, and present a value iteration using a technique of time discretization and the optimality equation [see (4.11)]. Moreover, we can obtain an approximate optimal policy of the control model \({\mathcal {M}}\) as in the form of (4.12) from executing the iteration procedure. It should be mentioned that the method of time discretization in this paper is different from that in van Dijk (1988, (1989). More specifically, van Dijk (1988, (1989) employed the technique of time discretization to construct a discrete-time MDP with a fixed step size in the time parameter and then gave an approximation for the approximate computations of an optimal policy and the value function by solving the finite-horizon optimality equation of the discrete-time MDP. We use the approach of time discretization to obtain a partition of the time interval and directly provide a finite approximation for the approximate computations of an optimal policy and the value function without introducing an auxiliary discrete-time MDP as in van Dijk (1988, (1989).

Furthermore, we analyze the accuracy of the finite approximation. For the first step, we obtain an error estimation between the value function of the control model \({\mathcal {M}}_n\) and that of the original control model \({\mathcal {M}}\) by suitably choosing the set of all admissible actions of the control model \({\mathcal {M}}_n\) (see Theorem 4.1). For the second step, we give an error estimation between the values obtained from the iteration and the value function of the control model \({\mathcal {M}}_n\) at the discrete time points, and an error estimation of an approximate optimal policy of the control model \({\mathcal {M}}\) (see Theorem 4.2). Finally, we use a controlled birth and death system to illustrate the application of the finite approximation.

The rest of this paper is organized as follows. In Sect. 2, we introduce the control model and optimality criterion. In Sect. 3, we give the optimality conditions for the existence of optimal policies and preliminary results. In Sect. 4, we state and prove our main results. In Sect. 5, we illustrate our main results with a controlled birth and death system.

2 The control model

In this paper we consider the following model

where the state space S is assumed to be the set of all nonnegative integers endowed with the discrete topology and the action space A is assumed to be a Borel space with the Borel \(\sigma \)-algebra \({\mathcal {B}}(A)\). \(A(i)\in {\mathcal {B}}(A)\) represents the set of all admissible actions when the state of the system is \(i\in S\). Let \(K:=\{(i,a)|i\in S, a\in A(i)\}\) be the set of all the feasible state-action pairs. The transition rate q(j|i, a) is supposed to satisfy the following properties:

-

For each fixed \(i,j\in S\), q(j|i, a) is measurable in \(a\in A(i)\);

-

\(q(j|i,a)\ge 0\) for all \((i,a)\in K\) and \(j\ne i\);

-

\(\sum _{j\in S} q(j|i,a)=0\) for all \((i,a)\in K\);

-

\(q^*(i):=\sup _{a\in A(i)}|q(i|i,a)|<\infty \) for all \(i\in S\).

Finally, the real-valued cost rate function c(i, a) is measurable in \(a\in A(i)\) for each \(i\in S\).

A continuous-time Markov decision process evolves as follows. A decision-maker observes continuously the state of the system. If the system occupies state i, he/she chooses an action \(a\in A(i)\) according to some decision rule. As a consequence of this, the following happens: (i) a cost takes place at the rate c(i, a); (ii) the system remains in the state i for a random time following the exponential distribution with the tail function given by \(e^{q(i|i,a)t}\), and then jumps to a new state j with the probability \(-\frac{q(j|i,a)}{q(i|i,a)}\) (we make a convention that \(\frac{0}{0}:=0\)).

To define the optimality criterion, we need to introduce the definition of a policy. From Theorem 4.1 in Guo et al. (2015), we have that there exists an optimal Markov policy over the class of all randomized history-dependent policies for the finite-horizon expected total cost criterion. Thus, without loss of generality, we restrict the discussions to the class of all randomized Markov policies throughout the paper.

Definition 2.1

A randomized Markov policy is a family \(\pi :=\{\pi _t,t\ge 0\}\) of stochastic kernels that satisfy

-

(i)

for each \(t\ge 0\), \(\pi _t\) is a stochastic kernel on A given S such that \(\pi _t(A(i)|i)=1\) for all \(i\in S\);

-

(ii)

for each \(i\in S\) and \(D\in {\mathcal {B}}(A)\), \(\pi _t(D|i)\) is Borel measurable in \(t\in [0,\infty )\).

A policy \(\pi \) is called deterministic Markov if there exists a measurable function f on \(S\times [0,\infty )\) with \(f(i,t)\in A(i)\), such that \(\pi _t(\cdot |i)\) is the Dirac measure concentrated at \(f(i,t)\in A(i)\) for all \((i,t)\in S\times [0,\infty )\).

We denote by \(\Pi \) the set of all randomized Markov policies and by \(\Pi ^{D}\) the set of all deterministic Markov policies.

For any \(\pi \in \Pi \) and any initial state \(i\in S\), by Theorem 4.27 in Kitaev and Rykov (1995), there exist a unique probability measure \(P_{i}^{\pi }\) on some measurable space \((\Omega ,{\mathcal {B}}(\Omega ))\) and a state process \(\{\xi _t,t\ge 0\}\). Let \(E^{\pi }_{i}\) be the corresponding expectation operator with respect to \(P^{\pi }_{i}\).

Fix an arbitrary constant \(T>0\) denoting the horizon of the CTMDPs. For any \(i,j\in S\) and \(\pi \in \Pi \), we define the finite-horizon expected total cost from time t to the terminal time T as follows:

Since for each \(\pi \in \Pi \), \(\{\xi _t,t\ge 0\}\) is a Markov jump process, the definition of \(V^{\pi }(t,i)\) is independent of the state \(j\in S\). The corresponding value function is defined by

Definition 2.2

A policy \(\pi ^*\in \Pi \) is said to be optimal if \(V^{\pi ^*}(0,i)=V^*(0,i)\) for all \(i\in S\).

3 Preliminaries

In this section, we give some basic assumptions and preliminary results.

The following conditions are used to avoid the explosion of the process \(\{\xi _t,t\ge 0\}\) and guarantee the finiteness of the finite-horizon expected total cost criterion \(V^{\pi }(\cdot )\) and the value function \(V^*(\cdot )\); see, for instance, Guo and Hernández-Lerma (2009) and Guo and Zhang (2014).

Assumption 3.1

There exist a nondecreasing function \(w\ge 1\) on S with \(\lim _{i\rightarrow \infty }w(i)=\infty \), and constants \(\rho _1\in {\mathbb {R}}:=(-\infty ,+\infty )\), \(d_1\ge 0\), \(Q>0\) and \(M>0\) such that

-

(i)

\(\sum _{j\in S}w(j)q(j|i,a)\le \rho _1w(i)+d_1\) for all \((i,a)\in K\);

-

(ii)

\(q^*(i)\le Qw(i)\) for all \(i\in S\);

-

(iii)

\(|c(i,a)|\le Mw(i)\) for all \((i,a)\in K\).

In addition to Assumption 3.1, we also need the following conditions.

Assumption 3.2

-

(i)

There exist constants \(\rho _2\in {\mathbb {R}}\) and \(d_2\ge 0\) such that

$$\begin{aligned} \sum _{j\in S}w^2(j)q(j|i,a)\le \rho _2w^2(i)+d_2 \ \ \mathrm{for \ all} \ (i,a)\in K, \end{aligned}$$where w is as in Assumption 3.1.

-

(ii)

For each \(i\in S\), the set A(i) is compact.

-

(iii)

For any \(i,j\in S\) there exist constants \(L_i>0\) and \(L_{ij}>0\) such that

$$\begin{aligned} |c(i,a)-c(i,b)|\le L_id_A(a,b)\ \ \mathrm{and} \ \ |q(j|i,a)-q(j|i,b)|\le L_{ij}d_A(a,b) \end{aligned}$$for all \(a,b\in A(i)\), where \(d_A\) denotes the metric of the space A.

-

(iv)

For each \(i\in S\), the function \(\sum _{j\in S}w(j)q(j|i,a)\) is continuous in \(a\in A(i)\).

Remark 3.1

Assumption 3.2(i) is used to obtain the Ito–Dynkin formula; see Theorem 3.1 in Guo et al. (2015). Assumption 3.2(ii) and (iv), the standard continuity and compactness conditions, together with Assumption 3.2(iii), are used to ensure the existence of optimal policies; see, for instance, Guo and Hernández-Lerma (2009), Guo and Zhang (2014), Wei and Chen (2014) and Guo et al. (2015). Assumption 3.2(iii), the so-called Lipschitz continuity condition, is also used to give the error estimations of the finite approximation.

Then we have the following results.

Lemma 3.1

Suppose that Assumptions 3.1 and 3.2 are satisfied. Then the following statements hold.

-

(i)

\(E_j^{\pi }[w^l(\xi _t)|\xi _s=i]\le e^{\rho _l(t-s)}w^l(i) +\frac{d_l}{\rho _l}(e^{\rho _l(t-s)}-1)\) for all \(i,j\in S\), \(\pi \in \Pi \), \(t\ge s\ge 0\) and \(l=1,2\) (if \(\rho _l=0\), the righthand term is \(w^l(i)+d_l(t-s)\)).

-

(ii)

\(|V^{\pi }(t,i)|\le G_1w(i)\) for all \((t,i)\in [0,T]\times S\) and \(\pi \in \Pi \), where \(G_1=M[(\frac{1}{\rho _1}+\frac{d_1}{\rho _1^2}) (e^{\rho _1T}-1)-\frac{d_1}{\rho _1}T]\) (if \(\rho _1=0\), \(G_1=M (T+\frac{1}{2}d_1T^2)\)).

-

(iii)

The function \(V^*\) on \([0,T]\times S\) satisfies the following equation:

$$\begin{aligned} -\frac{\partial V^*}{\partial t}(t,i)=\inf _{a\in A(i)}\left\{ c(i,a)+\sum _{j\in S}V^*(t,j)q(j|i,a)\right\} \end{aligned}$$(3.1)for all \((t,i)\in [0,T]\times S\), where \(\frac{\partial V^*}{\partial t}\) denotes the derivative of \(V^*\) with respect to the variable t. Moreover, there exists \(f^*\in \Pi ^{D}\) with \(f^*(i,t)\in A(i)\) attaining the infimum in (3.1) and the policy \(f^*\) is optimal.

-

(iv)

\(|V^*(t_1,i)-V^*(t_2,i)|\le \left[ M+G_1(\rho _1I_ {\{\rho _1>0\}}+d_1+2Q)\right] w^2(i)|t_1-t_2|\) for all \(i\in S\) and \(t_1,t_2\in [0,T]\), where \(I_D\) denotes the indicator function of a set D.

Proof

(i) Part (i) follows from Lemma 6.3 in Guo and Hernández-Lerma (2009).

(ii) Fix any \(i,j\in S\), \(t\in [0,T]\) and \(\pi \in \Pi \). Then using (2.1), Assumption 3.1(iii) and part (i), we have

for \(\rho _1\ne 0\), which implies the desired assertion.

(iii) Part (iii) follows from the same techniques of Theorem 4.1 in Guo et al. (2015) and Theorem 4.1 in Wei and Chen (2014).

(iv) By (2.2) and (3.1), we have

for all \((t,i)\in [0,T]\times S\). Thus, direct calculations give

for all \(i\in S\) and \(t_1,t_2\in [0,T]\), where the inequality follows from part (ii) and Assumption 3.1. This completes the proof of the lemma. \(\square \)

4 Finite approximation

In this section, we present a finite approximation for the approximate computations of an optimal policy and the value function. To do so, we need to introduce the following notation.

For each integer \(n\ge 1\), we define the control model

with the following elements.

-

The state space is \(S_n:=\{0,1,\ldots , j_n\}\), where the sequence \(\{j_n, n\ge 1\}\) is increasing and \(\lim _{n\rightarrow \infty }j_n=\infty \).

-

The action space A is the same as in the model \({\mathcal {M}}\).

-

\(A_n(i)\), the set of all admissible actions in the state \(i\in S_n\), is an arbitrary finite set. Let \(K_n:=\{(i,a)|i\in S_n, a\in A_n(i)\}\).

-

For each \((i,a)\in K_n\) and \(j\in S_n\), the transition rate \(q_n(j|i,a)\) is given by

$$\begin{aligned} q_n(j|i,a):=\left\{ \begin{array}{ll} q(j|i,a), &{}\quad \text {if}\;j\ne j_n,\\ \sum _{k\ge j_n}q(k|i,a), &{}\quad \text {if}\;j=j_n. \end{array}\right. \end{aligned}$$ -

We still denote by c the restriction of the cost rate function c in the model \({\mathcal {M}}\) to \(K_n\).

We denote by \(\Pi _n\) the set of all randomized Markov policies and by \(\Pi _n^{D}\) the set of all deterministic Markov policies for the control model \({\mathcal {M}}_n\). Moreover, for any \(\pi \in \Pi _n\) and any initial state \(i\in S_n\), employing Theorem 4.27 in Kitaev and Rykov (1995), there exists a probability measure \(P^{i,\pi }_n\) on some measurable space \((\Omega _n, {\mathcal {B}}(\Omega _n))\). Denote by \(E^{i,\pi }_n\) the corresponding expectation operator with respect to \(P^{i,\pi }_n\). As in (2.1) and (2.2), we can also define the functions \(V^{\pi }_n\) and \(V^*_n\) on \([0,T]\times S_n\) with \(E^{i,\pi }_n\) and \(\Pi _n\) in lieu of \(E_i^{\pi }\) and \(\Pi \), respectively.

Let \({\mathcal {C}}\) be the set of all closed subsets of A. The Hausdorff metric on \({\mathcal {C}}\) is defined as

for all \(B_1,B_2\in {\mathcal {C}}\).

In order to obtain the error estimations of the finite approximation, we also need to impose the following condition.

Assumption 4.1

There exist constants \(\delta >2\), \(\rho _{\delta }\in {\mathbb {R}}\) and \(d_{\delta }\ge 0\) such that

where w comes from Assumption 3.1.

Below we state our first main result on the error estimation between the value function of the control model \({\mathcal {M}}_n\) and that of the original control model \({\mathcal {M}}\).

Theorem 4.1

Suppose that Assumptions 3.1, 3.2 and 4.1 hold. Let \(G_1\) be as in Lemma 3.1. If there exists a constant \({\widetilde{M}}>0\) such that for each \(n\ge 1\) and \(i\in S_n\), the set \(A_n(i)\) satisfies

then we have

for all \(t\in [0,T]\), where \(Q_1=\left[ {\widetilde{M}}+2G_1(|\rho _{\delta }|+d_{\delta }+Q)\right] \times [(\frac{1}{\rho _{\delta }}+\frac{d_{\delta }}{\rho _{\delta }^2})(e^{\rho _{\delta }T}-1) -\frac{d_{\delta }}{\rho _{\delta }}T]\) (if \(\rho _{\delta }=0\), \(Q_1=\left[ {\widetilde{M}}+2G_1(d_{\delta }+Q)\right] (T+\frac{1}{2}d_{\delta }T^2)\)).

Proof

Fix any \(n\ge 1\) and \(i\in S_n\). Let \(f^*\in \Pi ^{D}\) be as in Lemma 3.1(iii). Then we have

for all \(s\in [0,T]\). Moreover, direct calculations give

for all \(s\in [0,T]\), where the first inequality follows from Lemma 3.1(ii), the second one is due to the monotonicity of w, and the last one follows from Assumptions 3.1(ii) and 4.1. For each \(s\in [0,T]\), there exists \({\widetilde{f}}(i,s)\in A_n(i)\) satisfying

which together with Lemma 3.1(ii) and Assumption 3.2(iii) yields

and

Hence, by (4.2)–(4.5) we obtain

for all \(s\in [0,T]\), where \({\widetilde{Q}}:={\widetilde{M}}+2G_1(|\rho _{\delta }|+d_{\delta }+Q)\). Note that under Assumptions 3.1(i), (ii), 3.2(i) and 4.1, we have

for all \(a\in A_n(i)\), which together with Lemma 6.3 in Guo and Hernández-Lerma (2009) imply

for all \(j\in S_n\), \(\pi \in \Pi _n\), \(t\ge s\ge 0\) and \(l=1,2,\delta \) (if \(\rho _l=0\), the righthand term of (4.9) is \(w^l(i)+d_l(t-s)\)). Direct calculations give

(if \(\rho _2=0\), the last term is \(\left[ M+G_1(\rho _1I_{\{\rho _1>0\}}+d_1+2Q)\right] (T+\frac{1}{2}d_2T^2)w^2(i)\)) for all \(j\in S_n\), \(\pi \in \Pi _n\) and \(t\in [0,T]\), where the first inequality follows from Lemma 3.1 and Assumption 3.1, and the second one is due to (4.9). Moreover, by (4.6) we obtain

for all \(j\in S_n\) and \(t\in [0,T]\). Employing Theorem 3.1 in Guo et al. (2015) we have

for all \(j\in S_n\) and \(t\in [0,T]\). Hence, the last equality, (4.9) and (4.10) imply

(if \(\rho _{\delta }=0\), the last term is \(V_n^{*}(t,i)-{\widetilde{Q}} (T+\frac{1}{2}d_{\delta }T^2)\frac{w^{\delta }(i)}{w^{\delta -2}(j_n)}\)) for all \(j\in S_n\) and \(t\in [0,T]\). On the other hand, using the similar arguments, we have

for all \(t\in [0,T]\). Therefore, we obtain the desired assertion. \(\square \)

For each integer \(m\ge 1\), a partition of the interval [0, T] is as follows:

where \(t_l:=t_0-\frac{T}{m}l\) for all \(l=0,1,\ldots , m\). For each \(n\ge 1\), we define the following iteration

for all \(i\in S_n\) and \(l=1,\ldots , m\). For each \(n\ge 1\), \(i\in S_n\) and \(l\in \{1,\ldots , m\}\), let \({\mathcal {D}}_{n,l}(i)\) be the set of all the minimizers attaining the minimum in (4.11). For each \(n\ge 1\) and \(m\ge 1\), denote by \({\mathcal {O}}_{n,m}\) the set of all the policies with the following form:

for all \(i\in S_n\), where \(g_{n,l}(i)\) belongs to \({\mathcal {D}}_{n,l}(i)\) and \(a^*\in A_n(i)\) is arbitrarily fixed.

Next, we give our second main result on the error estimations of the approximate computations of the value function and an optimal policy for the control model \({\mathcal {M}}\) using the above iteration defined by (4.11).

Theorem 4.2

Suppose that the conditions in Theorem 4.1 are satisfied. Let \(Q_1\) be as in Theorem 4.1. Then the following statements hold: for each \(m\ge 1\) and \(n\ge 1\),

-

(i)

\(|V_n^*(t_l,i)-W_m(t_l,i)|\le \dfrac{M_1Tw^2(j_n)}{2Qm} \left( e^{2QTw(j_n)}-1\right) \) for all \(i\in S_n\) and \(l=0,1,\ldots , m\), where \(M_1=\left[ M+G_1(\rho _1I_{\{\rho _1>0\}}+d_1+2Q)\right] (\rho _2I_ {\{\rho _2>0\}}+d_2+2Q)\).

-

(ii)

\(|V^*(0,i)-W_m(0,i)|\le \dfrac{Q_1w^{\delta }(i)}{w^{\delta -2}(j_n)}+\dfrac{M_1Tw^2(j_n)}{2Qm }\left( e^{2QTw(j_n)}-1\right) \) for all \(i\in S_n\).

-

(iii)

Any policy \(f_{n,m}\in {\mathcal {O}}_{n,m}\) satisfies

$$\begin{aligned} |V^*(0,i)-V^{f_{n,m}}_n(0,i)|\le \dfrac{Q_1w^{\delta }(i)}{w^{\delta -2}(j_n)}+\dfrac{M_1T w^2(j_n)}{Qm}\left( e^{2QTw(j_n)}-1\right) \end{aligned}$$for all \(i\in S_n\).

Proof

(i) Fix any \(n\ge 1\), \(i\in S_n\), \(m\ge 1\) and \(l\in \{1,\ldots ,m\}\). By (4.7), (4.8) and the description of the control model \({\mathcal {M}}_n\), we see that Assumptions 3.1, 3.2 and 4.1 also hold for the transition rate \(q_n(j|i,a)\). Then it follows from Lemma 3.1 that

and

for all \(s,t\in [0,T]\). Thus, direct calculations give

where \(M_1\) equals \(\left[ M+G_1(\rho _1I_{\{\rho _1>0\}}+d_1+2Q)\right] (\rho _2I_{\{\rho _2>0\}}+d_2+2Q)\), the first equality is due to (4.11) and (4.13), and the third inequality follows from (4.7), (4.8) and (4.14). Hence, employing the last inequality, we have

which implies

Thus, it follows from the induction and the last inequality that

Therefore, part (i) follows from the last inequality.

(ii) Using the inequality \(|V^*(0,i)-W_m(0,i)|\le |V^*(0,i)-V^*_n(0,i)|+|V^*_n(0,i)-W_m(0,i)|\), we see that part (ii) follows directly from Theorem 4.1 and part (i).

(iii) Fix any \(n\ge 1\), \(i,i'\in S_n\), \(m\ge 1\), \(l\in \{1,\ldots ,m\}\) and \(f_{n,m}\in {\mathcal {O}}_{n,m}\). Then we have

for all \(t\in [0,T]\), where the first and fourth equalities are due to the definition of \(V^{f_{n,m}}_n\), and the second and third ones follow from the Fubini theorem and the Kolmogorov backward equation in Guo and Hernández-Lerma (2009, p. 211), respectively. Thus, using (4.15) and following the similar arguments of part (i), we have

Hence, the desired assertion follows from (4.16) and part (ii). \(\square \)

Remark 4.1

(a) For the original control model \({\mathcal {M}}\), Theorem 4.2 indicates that we can compute numerically the value function by the iteration constructed as in (4.11) and obtain an approximate optimal policy as in the form of (4.12).

(b) The method of time discretization is used to construct a discrete-time MDP with a fixed step size in the time parameter, from which an approximation for the approximate computations of an optimal policy and the value function is given in van Dijk (1988, (1989) for the case of the denumerable state and action spaces and unbounded transition and cost rates. To solve the finite-horizon optimality equation of the constructed discrete-time MDP is a crucial step in the approximation in van Dijk (1988, (1989). We employ the technique of time discretization to obtain a partition of the time interval and directly provide a finite approximation for the case of a denumerable state space, a Borel action space and unbounded transition and cost rates without introducing an auxiliary discrete-time MDP as in van Dijk (1988, (1989).

(c) If the state and action spaces of the model \({\mathcal {M}}\) are both finite sets, there exist positive constants \(\overline{Q}\) and \(\overline{M}\) such that \(q^*(i)\le \overline{Q}\) and \(|c(i,a)|\le \overline{M}\) for all \(i\in S\) and \(a\in A(i)\). For this particular class of the CTMDPs, there is no need to construct a sequence of the control models \(\{{\mathcal {M}}_n,n\ge 1\}\). Hence, using the similar arguments of Theorem 4.2, we can obtain

and

for all \(i\in S\), \(m\ge 1\) and \(l=0,1,\ldots ,m\), where the policy \(f^m\) is as in (4.12). Therefore, for this particular case, the last two inequalities imply that the accuracy of the approximation given by (4.11) and (4.12) is of order \(m^{-1}\).

5 An example

In this section, we use a controlled birth and death system to illustrate the finite approximation of the finite-horizon expected total cost criterion.

Example 5.1

(A controlled birth and death system) The control model is given as follows: \(S:=\{0,1,2,\ldots \}\), \(A(0):=[0,\kappa ]\times \{0\}\) (\(\kappa >0\)), \(A(i):=[0,\kappa ]\times [\zeta _1,\zeta _2]\) (\(\zeta _2>\zeta _1>0\)) for all \(i\ge 1\), \(q(1|0,(a_1,0))=-q(0|0,(a_1,0)):=a_1\) for all \(a_1\in [0,\kappa ]\), and for each \(i\ge 1\), \(a=(a_1,a_2)\in A(i)\),

where the positive constants \(\lambda \) and \(\mu \) denote the birth and death rates, respectively.

To ensure the existence of optimal policies, we consider the following conditions.

-

(C1)

There exists a constant \(M>0\) such that \(|c(i,a)|\le M(i+1)\) for all \((i,a)\in K\).

-

(C2)

For each \(i\in S\), there exists a constant \(L_i>0\) such that \(|c(i,a)-c(i,b)|\le L_id_A(a,b)\) for all \(a=(a_1,a_2), b=(b_1,b_2)\in A(i)\), where \(d_A(a,b):=|a_1-b_1|+|a_2-b_2|\).

Proposition 5.1

Under conditions (C1) and (C2), the controlled birth and death system satisfies Assumptions 3.1, 3.2 and 4.1.

Proof

Let \(w(i):=i+1\) for all \(i\in S\). Then direct calculations yield

for all \(i\ge 1\) and \(a=(a_1,a_2)\in A(i)\), and

for all \(a=(a_1,a_2)\in A(0)\). Thus, Assumptions 3.1(i), (ii), 3.2(i) and 4.1 hold with \(Q:=\lambda +\mu +(\kappa +\zeta _2-\lambda -\mu )I_{\{\kappa +\zeta _2-\lambda -\mu >0\}}\), \(\rho _1:=\lambda -\mu \), \(d_1:=\max \{0,\kappa +\mu -\lambda \}\), \(\rho _2:=2(\lambda -\mu )+(2\kappa -2\zeta _1-\lambda +3\mu )I_{\{2\kappa -2\zeta _1-\lambda +3\mu >0\}}\), \(d_2:=\max \{0,3\kappa +2\mu +\zeta _2-\lambda \}\), \(\delta :=3\), \(\rho _{\delta }:=3(\lambda -\mu )+(6\mu +3\kappa -3\zeta _1)I_{\{6\mu +3\kappa -3\zeta _1>0\}}+ (3\kappa +3\zeta _2-2\lambda -4\mu )I_{\{3\kappa +3\zeta _2-2\lambda -4\mu >0\}}\) and \(d_{\delta }:=\max \{0,7\kappa +3\mu -\lambda \}\). Moreover, it follows from the description of the model, condition (C1), (5.1) and (5.2) that Assumptions 3.1(iii), 3.2(ii) and (iv) hold. Finally, for each \(i,j\in S\), we have

for all \(a=(a_1,a_2), b=(b_1,b_2)\in A(i)\). Hence, Assumption 3.2(iii) follows from the last inequality with \(L_{ij}=1\) and condition (C2). This completes the proof of the proposition. \(\square \)

Next, we take \(c(i,a)=(|a_1-\eta _1|+|a_2-\eta _2|)i\) (\(\eta _1>0\), \(\eta _2>0\)) for all \((i,a)\in K\). Then direct calculations give

for all \(i\in S\) and \(a=(a_1,a_2), b=(b_1,b_2)\in A(i)\). Hence, conditions (C1) and (C2) are satisfied with \(M:=\max \{\eta _1, |\eta _1-\kappa |\}+\max \{|\zeta _1-\eta _2|, |\zeta _2-\eta _2|\}\) and \(L_i=i\).

For each \(n\ge 1\), choose the control model \({\mathcal {M}}_n\) with \(S_n=\{0,1,\ldots ,n\}\), \(A_n(0)=\{\frac{\kappa l}{n^3}: l=0,1,\ldots ,n^3\}\times \{0\}\), \(A_n(i)=\{\frac{\kappa l}{n^3}: l=0,1,\ldots ,n^3\}\times \{\zeta _1+\frac{(\zeta _2-\zeta _1)l}{n^3}:l=0,1,\ldots ,n^3\}\) for all \(i=1,\ldots ,n\). Then for each \(n\ge 1\) and \(i\in S_n\), we have

which together with the definition of the Hausdorff metric yields

Hence, (4.1) holds with \({\widetilde{M}}:=2(1+4G_1)(\kappa +\zeta _2-\zeta _1)\).

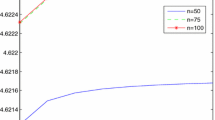

For a numerical experimentation of Example 5.1, we choose the following values of the parameters: \(T=10\), \(\lambda =0.9\), \(\mu =1\), \(\kappa =1\), \(\zeta _1=0.5\), \(\zeta _2=1\), \(\eta _1=2\), \(\eta _2=3\). Then for \(n=60, 80, 100\) and \(m=2000,\ldots ,10000\), employing the iteration constructed as in (4.11), we obtain the corresponding value \(W_m(0,2)\) as shown in Fig. 1. Empirically, the convergence is faster than that given in Theorem 4.2. This is due to the fact that the bounds used to obtain the error estimations in Theorem 4.2 are very conservative. Moreover, from Fig. 1, we get the approximate value \(V^*(0,2)\simeq 16.6493\).

References

Bäuerle N, Rieder U (2011) Markov decision processes with applications to finance. Springer, Berlin

Gihman II, Skohorod AV (1979) Controlled stochastic processes. Springer, Berlin

Guo XP, Hernández-Lerma O (2009) Continuous-time Markov decision processes: theory and applications. Springer, Berlin

Guo XP, Huang XX, Huang YH (2015) Finite horizon optimality for continuous-time Markov decision processes with unbounded transition rates. Adv Appl Probab 47:1064–1087

Guo XP, Zhang WZ (2014) Convergence of controlled models and finite-state approximation for discounted continuous-time Markov decision processes with constraints. Euro J Oper Res 238:486–496

Kitaev MY, Rykov VV (1995) Controlled queueing systems. CRC Press, Boca Raton

Miller BL (1968) Finite state continuous time Markov decision processes with finite planning horizon. SIAM J Control 6:266–280

Pliska SR (1975) Controlled jump processes. Stoch Process Appl 3:259–282

Puterman ML (1994) Markov decision processes: discrete stochastic dynamic programming. Wiley, New York

van Dijk NM (1988) On the finite horizon Bellman equation for controlled Markov jump models with unbounded characteristics: existence and approximation. Stoch Process Appl 28:141–157

van Dijk NM (1989) A note on constructing \(\varepsilon \)-optimal policies for controlled Markov jump models with unbounded characteristics. Stochastics 27:51–58

Wei QD, Chen X (2014) Strong average optimality criterion for continuous-time Markov decision processes. Kybernetika 50:950–977

Yushkevich AA (1977) Controlled Markov models with countable state and continuous time. Theory Probab Appl 22:215–235

Acknowledgments

I am greatly indebted to the anonymous referees and the associate editor for many valuable comments and suggestions that have greatly improved the presentation. The research was supported by National Natural Science Foundation of China (Grant No. 11526092).

Author information

Authors and Affiliations

Corresponding author

Ethics declarations

Conflict of interest

I declare that no conflict of interest exists in this paper.

Rights and permissions

About this article

Cite this article

Wei, Q. Finite approximation for finite-horizon continuous-time Markov decision processes. 4OR-Q J Oper Res 15, 67–84 (2017). https://doi.org/10.1007/s10288-016-0321-3

Received:

Revised:

Published:

Issue Date:

DOI: https://doi.org/10.1007/s10288-016-0321-3

Keywords

- Continuous-time Markov decision processes

- Finite-horizon expected total cost criterion

- Unbounded transition rates

- Finite approximation