Abstract

This paper is concerned with the explicit computation of the limiting distribution function of the largest real eigenvalue in the real Ginibre ensemble when each real eigenvalue has been removed independently with constant likelihood. We show that the recently discovered integrable structures in [2] generalize from the real Ginibre ensemble to its thinned equivalent. Concretely, we express the aforementioned limiting distribution function as a convex combination of two simple Fredholm determinants and connect the same function to the inverse scattering theory of the Zakharov–Shabat system. As corollaries, we provide a Zakharov–Shabat evaluation of the ensemble’s real eigenvalue generating function and obtain precise control over the limiting distribution function’s tails. The latter part includes the explicit computation of the usually difficult constant factors.


1 Introduction and Statement of Results

Let \(\mathbf{X}\in \mathbb {R}^{n\times n},n\in \mathbb {Z}_{\ge 2}\) be a matrix whose entries are independent, identically distributed standard normal random variables, i.e., with mean 0 and variance 1. In other words, \(\mathbf{X}\) is a matrix drawn from the real Ginibre ensemble (GinOE) [42]. This ensemble of random matrices first appeared in the 1965 paper [42] by Ginibre, the same paper which brought forth a threefold family of Gaussian random matrices (complex, real or real quaternion entries) and thereby initiated the study of eigenvalue statistics in the complex plane. At first, [42] served only as a mathematical extension of Hermitian random matrix theory, but it later proved valuable for the modeling of a wide range of physical phenomena: for instance, Ginibre matrices appear in the study of the fractional quantum Hall effect [29], in the stability analysis of complex biological systems [53] and neural networks [58], in quantum chaotic systems [32] and in financial market models [50]; see [48] for further applications and references. From the viewpoint of applicability and computability of the statistical properties of a given Ginibre random matrix, one commonly focuses on its extreme eigenvalues as they model extreme events in the corresponding physical system. Such events are in general quite rare, but when they occur they have serious consequences, which makes their analysis valuable. It is precisely within the context of extreme values that the GinOE attains a special mathematical role due to its real peculiarities: the expected number of real eigenvalues of any \(\mathbf{X}\in \text {GinOE}\) is asymptotically equivalent to \(\sqrt{2n/\pi }\) as \(n\rightarrow \infty \) [30] and the likelihood that all its eigenvalues are real is exactly \(2^{-n(n-1)/4}\) [31]. Both features are nowadays cornerstones of the edge behavior of real eigenvalues in the real Ginibre ensemble.
In this paper, we contribute to the same field by providing original results for the limiting distribution function of the largest real eigenvalue in a thinned real Ginibre ensemble.
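The growth rate \(\sqrt{2n/\pi }\) for the expected number of real eigenvalues can be probed by direct sampling. The following is a minimal Monte Carlo sketch, not part of the paper's argument; the function names, the sample size and the seed are illustrative assumptions, and the comparison is only to leading order.

```python
import numpy as np

def count_real_eigenvalues(matrix):
    """Count eigenvalues whose computed imaginary part is exactly zero."""
    eigenvalues = np.linalg.eigvals(matrix)
    return int(np.sum(eigenvalues.imag == 0.0))

def sample_real_counts(n, samples, seed=0):
    """Real-eigenvalue counts for `samples` independent n x n GinOE matrices."""
    rng = np.random.default_rng(seed)
    return [count_real_eigenvalues(rng.standard_normal((n, n)))
            for _ in range(samples)]

n = 50
counts = sample_real_counts(n, samples=200)
mean_count = float(np.mean(counts))
leading_order = float(np.sqrt(2 * n / np.pi))  # asymptotic prediction
print(mean_count, leading_order)
```

For moderate \(n\) the empirical mean sits slightly above \(\sqrt{2n/\pi }\), since the latter is only the leading term of the expectation. Non-real eigenvalues of a real matrix come in conjugate pairs, so every count has the same parity as \(n\).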

In order to be concrete, we first recall, cf. [10, 41, 59], that the eigenvalues \(\{z_j(\mathbf{X})\}_{j=1}^n\) of any \(\mathbf{X}\in \text {GinOE}\) form a Pfaffian point process, a fact which allows one to compute gap probabilities in the GinOE as Fredholm determinants. Of particular interest for us is the following result about the absence of real eigenvalues in \((t,\infty )\subset \mathbb {R}\), equivalently about the distribution function of the largest real eigenvalue in the finite n GinOE.

Proposition 1.1

([56, Proposition 2.2]) For every \(n\in \mathbb {Z}_{\ge 2}\),

where \(\chi _t\) is the operator of multiplication by the characteristic function \(\chi _{[t,\infty )}\) of the interval \([t,\infty )\subset \mathbb {R}\) and \(\mathbf{K}_n\) the following Hilbert–Schmidt integral operator on \(L^2(\mathbb {R})\oplus L^2(\mathbb {R})\),

Here, \(\rho \) multiplies by any differentiable, square-integrable weight function \(\rho (x)>0\) on \(\mathbb {R}\) such that \(\rho ^{-1}(x)\equiv 1/\rho (x)\) is polynomially bounded. Moreover \(S_n\) and \(\epsilon \) are the integral operators on \(L^2(\mathbb {R})\) with kernels

where \(\mathfrak {e}_n(z):=\sum _{k=0}^n\frac{1}{k!}z^k\) is the exponential partial sum, \(S_n^{*}\) the real adjoint of \(S_n\), D acts by differentiation on the independent variable and \(IS_n\) has kernel

and

Remark 1.2

The ordinary Fredholm determinant of \(\mathbf{K}_n\) is ill-defined since not all its entries vanish at \(\pm \infty \) and since \(\epsilon \) is not trace-class on \(L^2(\mathbb {R})\). This is a standard issue in random matrix theory, compare [62, Section VIII], [63, page 2199] or [27, pages 79–84], and it is commonly bypassed either through the use of regularized determinants or weighted Hilbert spaces. In (1.1), we use the following regularized 2-determinant for block operators \(\mathbf{L}=\bigl [{\begin{matrix} L_{11} &{} L_{12}\\ L_{21} &{} L_{22}\end{matrix}}\bigr ]\) with trace class diagonal \(L_{11},L_{22}\) and Hilbert–Schmidt off-diagonal \(L_{12},L_{21}\), cf. [27, page 82],

$$\begin{aligned} {\det }_2(1+\mathbf{L}):=\det \big ((1+\mathbf{L})\mathrm {e}^{-\mathbf{L}}\big )\exp \big (\mathop {\mathrm {tr}}(L_{11}+L_{22})\big ), \end{aligned}\tag{1.3}$$

where \(\det \) is the ordinary Fredholm determinant (which is well defined as \((1+\mathbf{L})\mathrm {e}^{-\mathbf{L}}=1+\text {trace class}\) on \(L^2(\mathbb {R})\oplus L^2(\mathbb {R})\) for the given \(\mathbf{L}\), cf. [57, (3.5)]), the block operators act on \(L^2(\mathbb {R})\oplus L^2(\mathbb {R})\) and the trace in the exponent is taken in \(L^2(\mathbb {R})\). Note that (1.3) is slightly different from the Hilbert–Carleman determinant [57, Chapter 9] in that for trace class \(\mathbf{L}\) we have \(\det _2(1+\mathbf{L})=\det (1+\mathbf{L})\) and for any two of the above block operators

$$\begin{aligned} {\det }_2\big ((1+\mathbf{L})(1+\mathbf{M})\big )={\det }_2(1+\mathbf{L})\,{\det }_2(1+\mathbf{M}). \end{aligned}\tag{1.4}$$

Moreover, as soon as \(\mathbf{L}\mathbf{M}\) and \(\mathbf{M}\mathbf{L}\) fit into the aforementioned class of block operators,

$$\begin{aligned} {\det }_2(1+\mathbf{L}\mathbf{M})={\det }_2(1+\mathbf{M}\mathbf{L}), \end{aligned}\tag{1.5}$$

and \(\det _2(1+\mathbf{L})\ne 0\) if and only if \(1+\mathbf{L}\) is invertible.
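A finite-dimensional toy version of the regularization in Remark 1.2 may help fix ideas. The sketch below assumes that the regularized 2-determinant has the form "determinant of \((1+\text {block})\mathrm {e}^{-\text {block}}\), multiplied by the exponential of the traces of the two diagonal blocks"; this form is an assumption made for illustration. For matrices everything is trace class, so the regularized quantity should coincide with the ordinary determinant. The helper names and the restriction to symmetric matrices (so the matrix exponential can be computed by an eigendecomposition) are choices of this example.

```python
import numpy as np

def expm_symmetric(a):
    """Matrix exponential of a real symmetric matrix via its eigendecomposition."""
    w, v = np.linalg.eigh(a)
    return (v * np.exp(w)) @ v.T

def det2(block):
    """Assumed form: det((1+L) e^{-L}) * exp(tr L11 + tr L22), symmetric 2k x 2k L."""
    size = block.shape[0]
    k = size // 2
    carleman = np.linalg.det((np.eye(size) + block) @ expm_symmetric(-block))
    diagonal_trace = np.trace(block[:k, :k]) + np.trace(block[k:, k:])
    return carleman * np.exp(diagonal_trace)

rng = np.random.default_rng(0)
b = 0.1 * rng.standard_normal((6, 6))
block = 0.5 * (b + b.T)  # symmetric test matrix, split into 3 x 3 blocks
regularized = det2(block)
ordinary = np.linalg.det(np.eye(6) + block)
print(regularized, ordinary)
```

In infinite dimensions the two factors cannot be separated in this way, which is the reason for the regularization in the first place.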

The finite n GinOE result (1.1) can be used to derive a limit theorem for the largest real eigenvalue of a real Ginibre matrix which in turn quantifies the well-known Saturn effect. Indeed, in order to state the corresponding limit theorem for the largest real eigenvalue we first consider the following Riemann–Hilbert problem (RHP).

Riemann-Hilbert Problem 1.3

([2, RHP 1.5]) Given \((x,\gamma )\in \mathbb {R}\times [0,1]\), determine \(\mathbf{Y}(z)=\mathbf{Y}(z;x,\gamma )\in \mathbb {C}^{2\times 2}\) such that

(1) \(\mathbf{Y}(z)\) is analytic for \(z\in \mathbb {C}\setminus \mathbb {R}\) and continuous on the closed upper and lower half-planes.

(2) The boundary values \(\mathbf{Y}_{\pm }(z):=\lim _{\epsilon \downarrow 0}\mathbf{Y}(z\pm \mathrm {i}\epsilon ),z\in \mathbb {R}\) satisfy

$$\begin{aligned}&\mathbf{Y}_+(z)=\mathbf{Y}_-(z)\begin{bmatrix}1-|r(z)|^2 &{} -\bar{r}(z)\mathrm {e}^{-2\mathrm {i}xz}\\ r(z)\mathrm {e}^{2\mathrm {i}xz} &{} 1\end{bmatrix},\\&z\in \mathbb {R}; \ \ r(z)=r(z;\gamma ):=-\mathrm {i}\sqrt{\gamma }\mathrm {e}^{-\frac{1}{4}z^2}. \end{aligned}$$

(3) As \(z\rightarrow \infty \),

$$\begin{aligned} \mathbf{Y}(z)=\mathbb {I}+\mathbf{Y}_1(x,\gamma )z^{-1}+\mathcal {O}\big (z^{-2}\big );\ \ \ \ \ \mathbf{Y}_1(x,\gamma )=\big [Y_1^{jk}(x,\gamma )\big ]_{j,k=1}^2. \end{aligned}\tag{1.6}$$

This problem is uniquely solvable for all \((x,\gamma )\in \mathbb {R}\times [0,1]\), cf. [2, Theorem 3.9], and its solution enables us to state the limit theorem for the largest real eigenvalue as follows. Eigenvalues off the real axis are much simpler to deal with, see [56, Theorem 1.2].
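As a small sanity check on the data of RHP 1.3, the jump matrix in condition (2) has unit determinant for every real \(z\), and the reflection coefficient satisfies \(|r(z)|\le \sqrt{\gamma }\le 1\). The sketch below verifies both numerically; the function names and the sample points are illustrative assumptions, and the code does not attempt to solve the Riemann–Hilbert problem itself.

```python
import numpy as np

def reflection_coefficient(z, gamma):
    """r(z; gamma) = -i sqrt(gamma) exp(-z^2 / 4) as in RHP 1.3."""
    return -1j * np.sqrt(gamma) * np.exp(-0.25 * z**2)

def jump_matrix(z, x, gamma):
    """2 x 2 jump matrix of RHP 1.3 at a real point z."""
    r = reflection_coefficient(z, gamma)
    return np.array([
        [1.0 - abs(r) ** 2, -np.conj(r) * np.exp(-2j * x * z)],
        [r * np.exp(2j * x * z), 1.0],
    ])

# Largest deviation of det(jump) from 1 over a small grid of parameters.
max_deviation = max(
    abs(np.linalg.det(jump_matrix(z, x, gamma)) - 1.0)
    for z in np.linspace(-3.0, 3.0, 13)
    for x in (-1.5, 0.0, 2.0)
    for gamma in (0.0, 0.3, 1.0)
)
print(max_deviation)
```

The unit determinant follows by hand as well: the product of the diagonal entries is \(1-|r|^2\), and the product of the off-diagonal entries is \(-|r|^2\).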

Theorem 1.4

([56, Theorem 1.3], [55, Theorem 1.1], [2, Theorem 1.6]) Let \(\mathbf{X}\in \mathbb {R}^{n\times n}\) be a matrix drawn from the GinOE with eigenvalues \(\{z_j(\mathbf{X})\}_{j=1}^n\subset \mathbb {C}\). Then for every \(t\in \mathbb {R}\),

where \(T:L^2(\mathbb {R})\rightarrow L^2(\mathbb {R})\) is trace class with kernel

and

The function \(y=y(x;1):\mathbb {R}\times [0,1]\rightarrow \mathrm {i}\mathbb {R}\) equals \(y(x;1):=2\mathrm {i}Y_1^{12}(x,1)\), which is expressed in terms of the matrix coefficient \(\mathbf{Y}_1(x,1)\) that appeared in (1.6).

Remark 1.5

The first equality in (1.7) is due to Rider and Sinclair [56, Theorem 1.3] with a subsequent algebraic correction of the factor \(\Gamma _t\) by Poplavskyi, Tribe and Zaboronski [55, Theorem 1.1]. The second equality was derived by the authors [2, Theorem 1.6] and should be viewed as the GinOE analogue of the famous Tracy–Widom law for the largest eigenvalue in the Gaussian orthogonal ensemble (GOE), compare [62, (53)]. Indeed, as far as the largest real eigenvalue is concerned, the overall difference between GinOE and GOE stems from the appearance of the function y, i.e., the solution of a distinguished inverse scattering problem for the Zakharov–Shabat system [2, Section 1.2], rather than the more familiar Painlevé-II Hastings–McLeod transcendent.

Remark 1.6

We emphasize that the limit law (1.7) is not a feature of the GinOE alone. In fact, Cipolloni, Erdős and Schröder recently proved in [21, Theorem 2.3] that the edge eigenvalue statistics of a large class of real non-Hermitian random matrices with i.i.d. centered entries match those of the GinOE. Thus, in complete analogy with the Tracy–Widom law for real Wigner matrices [60], the law (1.7) is a universal limit law. The same holds true for the upcoming limit law (1.14) for thinned real non-Hermitian random matrices at their spectral edge.

1.1 Fredholm Determinant Formula

In this paper, we are concerned with the limiting (\(n\rightarrow \infty \)) distribution of the largest real eigenvalue in the following thinned real GinOE process: consider the Pfaffian point process formed by the \(m_n\le n\) real eigenvalues of some \(\mathbf{X}\in \text {GinOE}\). Fix \(\gamma \in [0,1]\) and now discard each eigenvalue \(\mathbb {R}\ni z_j(\mathbf{X}),j=1,\ldots ,m_n\) independently with likelihood \(1-\gamma \). The resulting particle system

also forms a random point process, see [44, Chapter 6.2.1], and most importantly for us, this process is Pfaffian as stated in our first result below.

Lemma 1.7

The above-defined thinned real GinOE process is a Pfaffian random point process with

where the operator \(\mathbf{K}_n\) appeared in (1.2).

Identities similar to (1.10) have been derived in [13, Proposition 1.1] for the limiting GOE and the limiting Gaussian symplectic ensemble (GSE) based on Painlevé representations for the underlying eigenvalue generating functions, cf. [25, Theorem 2.1]. Our proof of Lemma 1.7 will rely on the observation that thinned Pfaffian point processes are Pfaffian with an appropriately \(\gamma \)-modified kernel, see Sect. 2, which is similar to the proof for determinantal point processes given in [51, Appendix A]. The fact that a thinned process built from a determinantal point process is also determinantal was first observed in [8]. In fact, it is the last paper [8] which re-ignited the interest in incomplete point processes in random matrix theory, simply because the incomplete or thinned matrix models allow one to transition between qualitatively different extreme behaviors. Such transitions have been studied foremost in Hermitian ensembles; compare Remarks 1.12 and 1.15 for some references; here, we are interested in the simplest thinned non-Hermitian matrix model with real entries.
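The thinning operation itself is elementary to simulate. The following sketch draws one GinOE sample, extracts its real eigenvalues and keeps each of them independently with probability \(\gamma \), i.e., discards it with likelihood \(1-\gamma \). The helper names `real_eigenvalues` and `thin`, the matrix size and the seed are assumptions of this example.

```python
import numpy as np

def real_eigenvalues(matrix):
    """Sorted real eigenvalues (imaginary part exactly zero) of a real matrix."""
    ev = np.linalg.eigvals(matrix)
    return np.sort(ev[ev.imag == 0.0].real)

def thin(points, gamma, rng):
    """Keep each point independently with probability gamma."""
    keep = rng.random(points.shape[0]) < gamma
    return points[keep]

rng = np.random.default_rng(0)
n, gamma = 60, 0.5
real_points = real_eigenvalues(rng.standard_normal((n, n)))
thinned_points = thin(real_points, gamma, rng)
# Largest retained real eigenvalue; None if every point was discarded.
largest = thinned_points.max() if thinned_points.size else None
print(real_points.size, thinned_points.size, largest)
```

Repeating this experiment many times and recording `largest` gives an empirical counterpart of the finite n distribution function whose limit is studied below.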

Once (1.10) is established, we will then use this finite n result to derive the following limit theorem for the thinned real GinOE process, our second result. Set

$$\begin{aligned} \bar{\gamma }:=2\gamma -\gamma ^2 \end{aligned}\tag{1.11}$$

and note that \(\bar{\gamma }=1-(1-\gamma )^2\in [0,1]\) for \(\gamma \in [0,1]\). The limit is a convex combination of two simple Fredholm determinants.

Theorem 1.8

For any \((t,\gamma )\in \mathbb {R}\times [0,1]\), the limit

exists and equals

with \(\bar{\gamma }\) defined in (1.11). Here, \(S:L^2(\mathbb {R})\rightarrow L^2(\mathbb {R})\) is the trace class integral operator with kernel

The special value \(\gamma =1\) reduces (1.13) to

which was first proven by the authors in [2, Theorem 1.11]. Note that the formula for P(t; 1) is the analogue of the Ferrari–Spohn formula [35] in the GOE, generalized to the thinned GOE by Forrester in [39, Corollary 1]. Comparing (1.13) to the last reference (modulo the typo correction \(\xi \mapsto \bar{\xi }\) in the determinants in the first line of [39, (1.22)] and after completing squares), we spot a striking resemblance between the thinned GOE and the thinned GinOE: up to the kernel replacement

with the Airy function \(w=\text {Ai}(z)\), see [54, 9.2.2], the formulæ are exactly the same.

1.2 Integrability of the Thinned Real GinOE Process

In our third result, we express the limiting distribution function \(P(t;\gamma )\) in (1.12) in terms of the solution of RHP 1.3 and thus in terms of the solution to an inverse scattering problem for the Zakharov–Shabat system. Here are the details:

Theorem 1.9

For any \((t,\gamma )\in \mathbb {R}\times [0,1]\),

where the function \(y=y(x;\gamma ):\mathbb {R}\times [0,1]\rightarrow \mathrm {i}\mathbb {R}\) is given by \(y(x;\gamma ):=2\mathrm {i}Y_1^{12}(x,\gamma )\) in terms of (1.6) and

Remark 1.10

Note that for every \((t,\gamma )\in \mathbb {R}\times [0,1]\),

We emphasize that the structure in the right-hand side of (1.14), (1.16) is completely similar to the one in the limiting distribution function for the largest eigenvalue in the thinned GOE, cf. [13, (1.6)]. It is only the appearance of the solution to the Zakharov–Shabat inverse scattering problem which sets the thinned GinOE apart from the thinned GOE, at least as far as the largest real eigenvalue is concerned; compare Remark 1.5 for the special case \(\gamma =1\). We further emphasize this point with our fourth result, a simple corollary to Theorem 1.9: let \(E(m,(t,\infty ))\) denote the limiting (as \(n\rightarrow \infty \)) probability that there are \(m\in \mathbb {Z}_{\ge 0}\) edge scaled real eigenvalues \(\mu _j(\mathbf{X}):=z_j(\mathbf{X})-\sqrt{n}\in \mathbb {R}\) of a matrix \(\mathbf{X}\in \text {GinOE}\) in the interval \((t,\infty )\subset \mathbb {R}\). Now define the associated generating function

which, as a consequence of Theorem 1.8, can also be evaluated in terms of the solution of RHP 1.3:

Corollary 1.11

For every \((t,\lambda )\in \mathbb {R}\times [0,1]\),

with \(\bar{\lambda }:=2\lambda -\lambda ^2\), the above function \(y(x;\lambda )=2\mathrm {i}Y_1^{12}(x,\lambda )\) and the antiderivative (1.15).

Formula (1.18) is a simple consequence of the inclusion–exclusion principle; see Sect. 6. The generating function is of interest from the random matrix theory viewpoint as it allows one to compute the limiting distribution function \(F_m(t)\) of the mth largest edge scaled real eigenvalue (\(m=1\) is the largest) in the GinOE in recursive form,

see [5, Section 6.3.2] for the standard probabilistic argument used in the derivation of such recursions in random matrix theory.
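The probabilistic mechanism behind such formulas can be illustrated on a toy model. If a configuration has exactly \(m\) points in \((t,\infty )\) and each point is kept independently with probability \(\gamma \), then no point survives with probability \((1-\gamma )^m\). The thinned gap probability is therefore \(\sum _m(1-\gamma )^mE(m,(t,\infty ))\), and the mth largest point is at most \(t\) exactly when fewer than \(m\) points lie in \((t,\infty )\). The sketch below checks both statements for a Poisson distributed count, which is an assumption made purely for illustration and is not the GinOE count distribution.

```python
import math

def poisson_pmf(m, mu):
    """P(N = m) for N ~ Poisson(mu), evaluated in log space."""
    return math.exp(-mu + m * math.log(mu) - math.lgamma(m + 1))

def no_survivor_probability(pmf, gamma, max_count):
    """sum_m (1 - gamma)^m P(N = m): no point in (t, infinity) survives thinning."""
    return sum((1.0 - gamma) ** m * pmf(m) for m in range(max_count + 1))

def mth_largest_cdf(pmf, m):
    """P(m-th largest point <= t) = P(fewer than m points in (t, infinity))."""
    return sum(pmf(k) for k in range(m))

mu, gamma = 2.5, 0.4
pmf = lambda m: poisson_pmf(m, mu)
thinned_gap = no_survivor_probability(pmf, gamma, max_count=80)
closed_form = math.exp(-gamma * mu)  # a thinned Poisson count is Poisson(gamma * mu)
print(thinned_gap, closed_form, mth_largest_cdf(pmf, 2))
```

For \(\gamma =1\) the sum collapses to the probability of an empty interval, which is the distribution function of the largest point.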

Remark 1.12

The analogue of (1.18) for the GOE was first derived in [25, Theorem 2.1] and then used for the computation of the limiting distribution function of the largest eigenvalue in the thinned GOE; see for example [13, Proposition 1.1]. For the GinOE, we will proceed in the reverse direction and first prove (1.14).

1.3 Tail Expansions

One major advantage of the explicit formula (1.14)—besides the fact that it places the thinned GinOE on firm integrable systems ground—originates from its usefulness in the derivation of tail expansions. Indeed, once the Riemann–Hilbert problem connection is in place, it is somewhat straightforward to obtain asymptotic information for the distribution function \(P(t;\gamma )\) in (1.12) as \(t\rightarrow \pm \infty \). We summarize the relevant estimates in our fifth result below.

Theorem 1.13

Let \(\gamma \in [0,1]\). We have, as \(t\rightarrow +\infty \),

with the complementary error function \(w=\text {erfc}(z):=\frac{2}{\sqrt{\pi }}\int _z^{\infty }\mathrm {e}^{-t^2}{\mathrm d}t\), see [54, 7.2.2]. On the other hand, as \(t\rightarrow -\infty \),

with

in terms of the polylogarithm \(w=\text {Li}_s(z):=\sum _{n=1}^{\infty }z^n/n^s\), see [54, 25.12.10].
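For readers who wish to evaluate the special functions entering Theorem 1.13, the polylogarithm can be summed directly from its defining series when \(0\le z<1\). The sketch below is a plain partial sum with no acceleration; the function name, the truncation level and the hard-coded value of \(\zeta (3/2)\) are assumptions of this example. It also evaluates the constant \(\frac{1}{2\sqrt{2\pi }}\zeta (\frac{3}{2})\) that appears in the text for \(\gamma =1\).

```python
import math

ZETA_3_2 = 2.6123753486854883  # zeta(3/2) = Li_{3/2}(1), hard-coded reference value

def polylog(s, z, terms=20000):
    """Partial sum of sum_{n>=1} z^n / n^s, intended for 0 <= z < 1."""
    return sum(z**n / n**s for n in range(1, terms + 1))

li_one_half = polylog(1.0, 0.5)           # Li_1(z) = -log(1 - z)
li_near_one = polylog(1.5, 0.99)          # approaches zeta(3/2) as z -> 1
c1_at_gamma_one = ZETA_3_2 / (2.0 * math.sqrt(2.0 * math.pi))
print(li_one_half, li_near_one, c1_at_gamma_one)
```

The series converges slowly as \(z\rightarrow 1\), so a dedicated special-function library is preferable for production use.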

Expansion (1.19) was first derived in [41] for \(\gamma =1\). The leading order exponential decay of the left tail (1.20) appeared in [55, (1.11)] for \(\gamma =1\) and for \(\gamma \in [0,1]\) in [38, (2.30)], albeit in somewhat implicit form. The notoriously difficult constant factor \(c_0(\gamma )\) in (1.20), difficult because it cannot be obtained via trace norm estimates (compare our discussion in (1.23)), was recently computed in [36, (3)] for \(\gamma =1\) using probabilistic arguments. In this paper, we derive (1.20) for all \(\gamma \in [0,1)\) by nonlinear steepest descent techniques. The evaluation of \(c_0(1)\) would require further analysis and we choose not to rederive \(c_0(1)\) in this paper. Nonetheless, we note that our result (1.20), (1.21) matches formally onto [55, (1.11)], [36, (3)], i.e., onto the \(t\rightarrow -\infty \) expansion

since \(c_1(1)=\frac{1}{2\sqrt{2\pi }}\zeta \left( \frac{3}{2}\right) \) and since \(c_0(\gamma )\) in (1.21) satisfies the following property

Lemma 1.14

The function \(c_0(\gamma )\) is continuous in \(\gamma \in [0,1]\) and equals

As is standard (for instance in invariant random matrix theory ensembles), the right tail (1.19) of the extreme value distribution \(P(t;\gamma )\) follows from elementary considerations and does not need RHP 1.3. The left tail, however, is much more subtle since

becomes unbounded, yet the distribution function \(P(t;\gamma )\) converges to zero. It is this well-known issue which requires the full use of RHP 1.3 and associated nonlinear steepest descent techniques for its asymptotic analysis; see Sect. 7.

Remark 1.15

The explicit computation of constant factors such as \(c_0(\gamma )\) in (1.21) is a well-known challenge in the asymptotic analysis of correlation and distribution functions in nonlinear mathematical physics. Without aiming for completeness, we mention the following contributions to the field: In the theory of exactly solvable lattice models, the works [6, 7, 11, 12, 61]. In classical invariant random matrix theory, the works [3, 23, 24, 33, 34, 49], and most recently on \(\tau \)-function connection problems for Painlevé transcendents the works [46, 47]. Finally, related to thinned ensembles in random matrix theory, the works [13,14,15,16,17,18,19, 22].

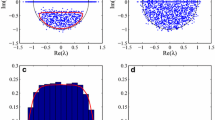

1.4 Numerics

The Fredholm determinant formula (1.13) provides us with an efficient way to evaluate \(P(t;\gamma )\) numerically, cf. [9]. Indeed, in order to showcase the applicability of (1.13) we now provide the following numerical evaluations for the limiting distribution of \(\max _j z_j^{\gamma }(\mathbf{X})\): First, Table 1 shows a few centralized moments for varying \(\gamma \).

Second, probability density and distribution function plots for varying \(\gamma \in [0,1]\) are shown in Fig. 1.

Fig. 1 The distribution functions \(P(t;\gamma )\) of the largest real eigenvalue in the thinned real GinOE process for varying values of \(\gamma \). The plots were generated in MATLAB with \(m=50\) quadrature points using the Nyström method with Gauss–Legendre quadrature. On the left cdfs, on the right pdfs.

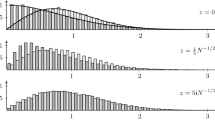

Third, we compare our asymptotic expansions (1.19) and (1.20) to the numerical results obtained from (1.13) in Figs. 2 and 3.

The distribution functions \(P(t;\gamma )\) in red for \(\gamma =1\) (left) and \(\gamma =0.75\) (right). We compare the numerically computed values from (1.13) to the left tail expansion (1.20) in a semilogarithmic plot. Again we used the Nyström method with Gauss–Legendre quadrature and \(m=50\) quadrature points.
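The Nyström method with Gauss–Legendre quadrature mentioned in the captions can be sketched in a few lines. The code below is a Python illustration rather than the MATLAB code used for the figures. It approximates \(\det (1-K)\) on \(L^2(a,b)\) for a user-supplied kernel, and it is validated on a rank one test kernel whose determinant is known in closed form. The test kernel, the truncation of \((t,\infty )\) to a finite interval and all names are assumptions of this example; the kernels of (1.13) are not reproduced here.

```python
import numpy as np
from math import erfc, sqrt, pi

def fredholm_det(kernel, a, b, m=50):
    """Nystrom approximation of det(1 - K) on L^2(a, b), m Gauss-Legendre nodes."""
    nodes, weights = np.polynomial.legendre.leggauss(m)
    x = 0.5 * (b - a) * nodes + 0.5 * (b + a)   # nodes mapped to (a, b)
    w = 0.5 * (b - a) * weights                 # rescaled weights
    sqrt_w = np.sqrt(w)
    matrix = sqrt_w[:, None] * kernel(x[:, None], x[None, :]) * sqrt_w[None, :]
    return np.linalg.det(np.eye(m) - matrix)

def rank_one_kernel(x, y):
    """Test kernel f(x) f(y) with f(x) = exp(-x^2)."""
    return np.exp(-x**2) * np.exp(-y**2)

t, cutoff = 0.0, 10.0  # (t, infinity) truncated to (t, t + cutoff)
approx = fredholm_det(rank_one_kernel, t, t + cutoff)
# det(1 - f (x) f) = 1 - int_t^infinity exp(-2 x^2) dx
exact = 1.0 - 0.5 * sqrt(pi / 2.0) * erfc(sqrt(2.0) * t)
print(approx, exact)
```

The symmetric scaling by square roots of the weights keeps the discretized matrix symmetric whenever the kernel is. The truncation is harmless here because the test kernel decays like a Gaussian.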

1.5 Methodology and Outline of Paper

The remainder of the paper is organized as follows. We prove Lemma 1.7 in Sect. 2 using a simple probabilistic argument. Afterward, we use (1.10) and carefully simplify the regularized Fredholm determinant in order to arrive at a finite n formula which is amenable to asymptotics. Our approach is somewhat similar to the ones carried out in [25, 56]; however, two issues arise along the way: first, the absence of Christoffel–Darboux structures throughout forces us to rely on the Fourier tricks used in [2, Sections 2 and 3] in the derivation of (1.14). Second, unlike in the invariant ensembles, our computations depend heavily on the parity of n. We first work out the necessary details for even n in Sect. 3 and afterward develop a comparison argument to treat all odd n, see Sect. 3.3. The content of Sect. 3.3 seemingly marks the first time that the extreme value statistics in the GinOE for odd n have been computed rigorously. Even for \(\gamma =1\), typos in [56, Section 4.2] have been pointed out in [55, Appendix B], but these had not been fixed until now. After several initial steps in Sect. 3 we complete the proof of Theorem 1.8 in Sect. 4. Once Theorem 1.8 has been derived, our proof of Theorem 1.9 in Sect. 5 is rather short, making essential use of the inverse scattering theory connection worked out in our previous paper [2]. This is followed by our short proof of (1.18) for the eigenvalue generating function in Sect. 6. Afterward, we prove Theorem 1.13 in Sect. 7. In fact, the asymptotic analysis is split into two parts, one part which deals with a total integral of \(y=y(x;\gamma )\) and a second part which computes the constant factor in the asymptotic expansion of the determinant

Unlike for invariant matrix ensembles (compare the discussion in [16, pages 492, 493]), we are here able to efficiently employ the \(\gamma \)-derivative method in the computation of the constant factor without having a differential equation in the spectral variable. Indeed, since our nonlinear steepest descent analysis in the second appendix does not use any local model functions, the cumbersome double integration in the \(\gamma \)-derivative method becomes manageable. This feature is comparable with Deift’s proof of the strong Szegő limit theorem in [26, Example 3] and the details of our analysis can be found in Sect. 7. The two appendices of the paper prove two curious integral identities used in the proof of Theorem 1.8 and present a streamlined version of the nonlinear steepest descent analysis of [2, Section 5], which is crucial in our proof of Theorem 1.13.

Remark 1.16

Our final remark in this Section concerns two possible routes for future investigations of the thinned real GinOE process. On the one hand, the large t asymptotic behavior of the cumulants of the counting function \(N(t,\gamma ):=\#\{k\in \mathbb {N}: z_k^{\gamma }(\mathbf{X})\le t\}\) is seemingly within reach by (1.18) and (1.20) and can afterward be contrasted to recent results for the sine, Airy, Bessel or Pearcey processes, cf. [17, 18, 22]. On the other hand, with access to the eigenvalue generating function through (1.14) and (1.20), one can wonder whether the refined rigidity analysis of the determinantal Airy process in [20] can be extended to the Pfaffian point process formed by the real eigenvalues \(z_j(\mathbf{X})\) of some \(\mathbf{X}\in \text {GinOE}\).

2 Proof of Lemma 1.7

It is known from [52] that the eigenvalues \(\{z_j(\mathbf{X})\}_{j=1}^n\subset \mathbb {C}\) of \(\mathbf{X}\in \mathbb {R}^{n\times n}\) drawn from the \(\text {GinOE}\) are distributed according to a random point process whose correlation functions are computable as Pfaffians, cf. [10, 41, 59]. In particular, the real eigenvalues form a Pfaffian process whose correlations are given by

with the skew-symmetric \(2\times 2\)-matrix kernel

Note that for any distinct points \(w_j\in \mathbb {R}\),

Thus, if \(\rho _{\ell }^{\gamma }\) denotes the \(\ell \)-th correlation function in the thinned real GinOE process, we find with \(1\le \ell \le m_{\gamma ,n}\),

since each eigenvalue is removed independently with likelihood \(1-\gamma \). In short, \(\rho _{\ell }^{\gamma }=\gamma ^{\ell }\rho _{\ell }\) which shows that the thinned Pfaffian point process is also a Pfaffian process and its kernel is simply given by \(\gamma \mathbf{K}_n^{\mathbb {R},\mathbb {R}}(x,y)\). Equipped with this insight, one now repeats the computations in [56, page 1630] and arrives at (1.10).
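The step from \(\rho _{\ell }^{\gamma }=\gamma ^{\ell }\rho _{\ell }\) to a Pfaffian process with kernel multiplied by \(\gamma \) rests on the homogeneity of the Pfaffian: for a skew-symmetric \(2\ell \times 2\ell \) matrix \(A\) one has \(\text {Pf}(\gamma A)=\gamma ^{\ell }\text {Pf}(A)\). The sketch below checks this for \(\ell =2\) using the explicit \(4\times 4\) Pfaffian; the random test matrix and the names are assumptions of this example.

```python
import numpy as np

def pfaffian_4x4(a):
    """Pfaffian of a skew-symmetric 4 x 4 matrix: a12 a34 - a13 a24 + a14 a23."""
    return a[0, 1] * a[2, 3] - a[0, 2] * a[1, 3] + a[0, 3] * a[1, 2]

rng = np.random.default_rng(3)
b = rng.standard_normal((4, 4))
skew = b - b.T            # generic skew-symmetric matrix (two points, ell = 2)
gamma = 0.7

scaled = pfaffian_4x4(gamma * skew)
homogeneous = gamma**2 * pfaffian_4x4(skew)
print(scaled, homogeneous)
```

The same scaling holds for every \(\ell \) because each term of the Pfaffian is a product of exactly \(\ell \) matrix entries.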

3 Proof of Theorem 1.8—first steps

Abbreviate

We will first simplify \(F_n\) for n even and afterward take the limit as \(n\rightarrow \infty \) with n even. Once done, we then compare the odd n case with the even n case and prove existence of the limit (1.12) all together.

3.1 Finite Even n Calculations

We consider \(F_{2n}\). Our overall approach follows closely [56, page 1640], keeping track throughout of the \(\gamma \)-modifications due to (1.10). First, the kernel \(\chi _t\mathbf{K}_{2n}\chi _t\) can be factorized as

and by using (1.5) we can move the factor on the left in (3.1) to the right, so \(F_{2n}(t,\gamma )\) equals the regularized 2-determinant of the operator with kernel

Next, we observe that the traces of the powers \(2,3,\ldots \) of the last operator match the corresponding traces of the operator with kernel

Hence, by the Plemelj–Smithies formula for \(\det _2\), see [57, Theorem 9.3],

Factorizing the underlying kernel, we then obtain

and since both triangular factors are of the form identity plus block operator as in Remark 1.2, we are allowed to use (1.4). In fact the regularized 2-determinant of those triangular factors equals one, so we have just shown that the original determinant in (1.1) for even n simplifies to

Clearly, the determinant in (3.2) on \(L^2(\mathbb {R})\oplus L^2(\mathbb {R})\) is really a determinant on \(L^2(\mathbb {R})\) alone,

and as our upcoming computations will show (see in particular (3.8)) the operator \(\epsilon S_{2n}\chi _tD-S_{2n}^{*}\chi _t-\gamma S_{2n}^{*}\chi _t\epsilon \chi _tD\) is of finite rank, i.e., the regularized 2-determinant in (3.3) is an ordinary Fredholm determinant by Remark 1.2 and the conjugation with \(\rho \) is now redundant. We have thus arrived at the following replacement of the equation right above [56, (4.6)],

In order to simplify (3.4) further, we now record

Lemma 3.1

([56, page 1640]) For any \(n\in \mathbb {Z}_{\ge 1}\),

Proof

The stated identity follows easily by induction on \(n\in \mathbb {Z}_{\ge 1}\) using only that

\(\square \)

Inserting (3.5) into (3.4), we find

We write \(\alpha \otimes \beta \) for a general rank one integral operator on \(L^2(\mathbb {R})\) with kernel \((\alpha \otimes \beta )(x,y)=\alpha (x)\beta (y)\). Noting \(\epsilon D\chi _t=-\chi _t\) and applying the commutator identity, cf. [62, (16)],

one part in (3.6) simplifies to

which (since \(\epsilon _t=\frac{1}{2}-\chi _{[t,\infty )},\epsilon _{\infty }=\frac{1}{2}\)) yields

Substituting (3.7) back into (3.6), we have thus (recall \(\bar{\gamma }=2\gamma -\gamma ^2\))

Next, from the definition of \(S_n\) in Proposition 1.1, we may write, see [56, (4.7)],

where \(T_n(x,y)\) is a symmetric kernel and

Lemma 3.2

Given \(t\ge 0\) and \(n\in \mathbb {Z}_{\ge 2}\), the trace class operator \(T_n:L^2(t,\infty )\rightarrow L^2(t,\infty )\) with kernel \(T_n(x,y)\) satisfies \(0\le T_n\le 1\) and \(1-\gamma T_n\) is invertible on \(L^2(t,\infty )\) for all \(\gamma \in [0,1]\).

Proof

For every \(f\in L^2(t,\infty )\),

which implies nonnegativity of \(T_n\). For the upper bound, we apply Schur’s test,

and conclude by self-adjointness of \(T_n\) that

i.e., \(T_n\le 1\) for any \(n\in \mathbb {Z}_{\ge 2}\). Next, using that \(\Vert T_n\Vert \le 1\), the invertibility of \(1-\gamma T_n\) on \(L^2(t,\infty )\) follows readily from the underlying Neumann series provided \(\gamma \in [0,1)\). The case \(\gamma =1\) has been addressed in [56, Lemma 4.2]. This concludes our proof. \(\square \)
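The Neumann series argument in the proof of Lemma 3.2 has a direct matrix analogue: if \(0\le T\le 1\) and \(\gamma \in [0,1)\), then \(\sum _{k\ge 0}\gamma ^kT^k\) converges to \((1-\gamma T)^{-1}\). The sketch below illustrates this with a random positive semidefinite matrix normalized to operator norm one; the matrix, the number of terms and the function name are assumptions of this example.

```python
import numpy as np

def neumann_inverse(t_matrix, gamma, terms):
    """Partial sum sum_{k=0}^{terms} (gamma T)^k approximating (1 - gamma T)^{-1}."""
    size = t_matrix.shape[0]
    total = np.eye(size)
    power = np.eye(size)
    for _ in range(terms):
        power = gamma * (power @ t_matrix)
        total += power
    return total

rng = np.random.default_rng(4)
b = rng.standard_normal((8, 8))
gram = b @ b.T                              # symmetric, nonnegative
t_matrix = gram / np.linalg.norm(gram, 2)   # now 0 <= T <= 1 with norm exactly 1
gamma = 0.8

series = neumann_inverse(t_matrix, gamma, terms=200)
direct = np.linalg.inv(np.eye(8) - gamma * t_matrix)
print(np.max(np.abs(series - direct)))
```

The truncation error is bounded by \(\gamma ^{N+1}/(1-\gamma )\) after \(N\) terms, which degenerates as \(\gamma \rightarrow 1\). This is consistent with the proof treating \(\gamma =1\) separately.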

In the following we will use the result of Lemma 3.2 for the operator \(\bar{\gamma }\chi _tT_{2n}\chi _t\) which acts on \(L^2(\mathbb {R})\). Inserting the operator decomposition \(S_n^{*}=T_n+\phi _n\otimes \psi _n\) into (3.8) and using the general identities \((\alpha \otimes \beta )(\gamma \otimes \delta )=\langle \beta ,\gamma \rangle (\alpha \otimes \delta )\) and \(A(\beta \otimes \gamma )D=(A\beta )\otimes (D^{*}\gamma )\) (for arbitrary operators A, D),

Here, \(\langle \cdot ,\cdot \rangle \) is the standard \(L^2(\mathbb {R})\) inner product. Since \(\chi _t^2=\chi _t,\chi _t^{*}=\chi _t\) and

we can then rewrite (3.10) by Sylvester’s identity [43, Chapter IV, (5.9)] as

Next, using that \(1-\bar{\gamma }\chi _tT_n\chi _t\) is invertible on \(L^2(\mathbb {R})\) for \(t\ge 0\) by Lemma 3.2, we factorize \(F_{2n}(t,\gamma )\) as follows:

Here, \(\alpha _j,\beta _k\) denote the six functions

By general theory, cf. [43, Chapter I, (3.3)]

with the \(L^2(\mathbb {R})\) inner product \(\langle \cdot ,\cdot \rangle \). We conclude our finite n calculation for even n with the following further algebraic simplifications.

Lemma 3.3

We have

followed by

Next, with \(R_n:=\bar{\gamma }T_n\chi _t(1-\bar{\gamma }\chi _tT_n\chi _t)^{-1},n\in \mathbb {Z}_{\ge 1}\) which is well defined as an operator on \(L^2(\mathbb {R})\) by Lemma 3.2 for any \(t\ge 0\),

where \(c_n:=\langle \psi _n,1-\gamma \chi _{[t,\infty )}\rangle \). Moreover

with \(d_n:=\langle \psi _n,(1-\gamma \chi _t)\chi _{[t,\infty )}\rangle \).

Proof

We use self-adjointness of the operator \(T_n\) and write \(R_n(x,t)\) for (cf. [62, page 732])

\(\square \)

With Lemma 3.3 in place, we finally evaluate the Fredholm determinant in (3.13). Noting that the terms \(c_n,d_n\) cancel out due to multilinearity of the finite-dimensional determinant, we obtain

in terms of the three inner products \(\langle \alpha _1,\beta _k\rangle ,k=1,2,3\) and the four integrals \(I_j=I_j(t,\gamma ,2n)\) with

Identities (3.12) and (3.14) conclude our calculations for finite n, provided n is even.
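The reduction of a Fredholm determinant of identity plus a rank three operator to a \(3\times 3\) determinant of inner products, used above, has a familiar matrix counterpart: \(\det (I_N+UV^{\top })=\det (I_k+V^{\top }U)\) for \(N\times k\) matrices \(U,V\). The sketch below checks this numerically with \(k=3\). The columns of `alphas` and `betas` play the role of generic functions \(\alpha _j,\beta _j\) and are random test data, not the six functions of the text.

```python
import numpy as np

rng = np.random.default_rng(1)
size, rank = 40, 3
alphas = rng.standard_normal((size, rank)) / size  # columns: discretized alpha_j
betas = rng.standard_normal((size, rank))          # columns: discretized beta_j

# Determinant of identity plus the rank three matrix sum_j alpha_j beta_j^T ...
large_det = np.linalg.det(np.eye(size) + alphas @ betas.T)
# ... equals the 3 x 3 determinant built from the inner products <beta_j, alpha_k>.
small_det = np.linalg.det(np.eye(rank) + betas.T @ alphas)
print(large_det, small_det)
```

In the continuum setting the matrix products are replaced by \(L^2(\mathbb {R})\) inner products, so that only finitely many integrals have to be controlled in the large n limit.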

Remark 3.4

The \(3\times 3\) determinant (3.14) is the analogue of the GOE computation [25, (3.63)].

3.2 The Limit \(n\rightarrow \infty \), n Even

In order to pass to the large n limit, we first shift the independent variable t according to \(t\mapsto t+\sqrt{2n}\); compare the left-hand side of (1.7). Under this scaling, we have

where \(\widetilde{T}_n:L^2(\mathbb {R})\rightarrow L^2(\mathbb {R})\) has kernel

Moreover, the entries in the \(3\times 3\) determinant (3.14) transform in a similar fashion, for instance

and likewise

which involve \(\widetilde{\phi }_n(x):=\phi _n(x+\sqrt{n})\) and \(\widetilde{\psi }_n(x):=\psi _n(x+\sqrt{n})\). The remaining four integrals \(I_k\), see (3.15), are treated the same way and every occurrence of \(R_n\) in them gets replaced by \(\widetilde{R}_n\) with

defined in terms of \(\widetilde{T}_n:L^2(\mathbb {R})\rightarrow L^2(\mathbb {R})\) with kernel (3.16). At this point, we collect a sequence of technical limits.

Lemma 3.5

Uniformly in \(x\in \mathbb {R}\) chosen from compact subsets,

and for any fixed \(s\in \mathbb {R}\) with \(p\in \{1,2\}\),

Here, \(w=\text {erfc}(z)\) denotes the complementary error function, cf. [54, 7.2.2].

Proof

The limits (3.17), (3.18) are mentioned en route in [56, page 1640] and we thus only give a few details: as \(n\rightarrow \infty \), uniformly in \(x\in \mathbb {R}\),

But on compact subsets of \(\mathbb {R}\ni x\),

which yields the second limit in (3.17). Since also for any \(x>0\) and \(n\in \mathbb {Z}_{\ge 2}\),

the dominated convergence theorem yields the first \(L^p(s,\infty )\) convergence in (3.18). For the limits involving \(\widetilde{\phi }_n\), we note that as \(n\rightarrow \infty \), uniformly in \(x\in \mathbb {R}\),

with the normalized incomplete gamma function \(w=P(z)\), cf. [54, 8.2.4]. But on compact subsets of \(\mathbb {R}\ni x\), see [54, 8.11.10],

which yields the first limit in (3.17). For the outstanding limit in (3.18), we use that as \(n\rightarrow \infty \), uniformly in \(x\in \mathbb {R}\),

with \(w=\Gamma (a,z)\) the incomplete Gamma function, see [54, 8.2.2]. But since for \(x>0\) and \(a\ge 1\) such that \(x+1-a>0\),

we find for any \(x>0\) and \(n\in \mathbb {Z}_{\ge 2}\),

Using also that on compact subsets of \(\mathbb {R}\ni x\),

the second limit in (3.18) follows from (3.19), (3.20), (3.21) and the dominated convergence theorem. This completes our proof. \(\square \)
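The pointwise limit taken from [54, 8.11.10] is a central limit statement for the normalized incomplete gamma functions. As a purely illustrative sketch, and not part of the proof, the following Python snippet checks the leading-order behavior \(Q(a+1,a+\tau \sqrt{2a})\approx \frac{1}{2}\text {erfc}(\tau )\) for large integer a. The helper names, the restriction to integer a and the sample values of a and \(\tau \) are assumptions made for the illustration; the precise arguments used in the displays above are not reproduced.

```python
import math


def upper_regularized_gamma(n, x):
    """Q(n, x) = exp(-x) * sum_{k=0}^{n-1} x**k / k! for integer n >= 1 and x > 0.

    The sum is evaluated in log space to avoid overflow for large n.
    """
    log_terms = [k * math.log(x) - x - math.lgamma(k + 1) for k in range(n)]
    largest = max(log_terms)
    return math.exp(largest) * sum(math.exp(v - largest) for v in log_terms)


def erfc_approximation_error(a, tau):
    """Distance between Q(a+1, a + tau*sqrt(2a)) and its limit erfc(tau)/2."""
    x = a + tau * math.sqrt(2 * a)
    return abs(upper_regularized_gamma(a + 1, x) - 0.5 * math.erfc(tau))


if __name__ == "__main__":
    # Hypothetical sample values; the error should shrink as a grows.
    for a in (100, 1000, 10000):
        print(a, erfc_approximation_error(a, 0.5))
```

The printed errors are expected to decay roughly like \(a^{-1/2}\), in line with the first correction term in [54, 8.11.10].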

The next limits concern the large n-behavior of the kernel function \(\widetilde{T}_n(x,y)\) and its total integrals. Recall the kernel T(x, y) defined in (1.8).

Lemma 3.6

([56, pages 1642–1644]) Uniformly in \(x,y\in \mathbb {R}\) chosen from compact subsets,

Moreover, for any fixed \(s,t\in \mathbb {R}\) and with \(p\in \{1,2\}\),

Proof

The limits (3.22) and (3.23) follow from the detailed discussion on pages 1642 and 1643 in [56], see also [56, (4.16)]. We omit details. \(\square \)

Finally we state the central convergence result for the operator \(\chi _t\widetilde{T}_n\chi _t\) on \(L^2(\mathbb {R})\).

Lemma 3.7

([56, Lemma 4.2]) Given \(t\in \mathbb {R}\), the operator \(\chi _t\widetilde{T}_n\chi _t\) converges in trace norm on \(L^2(\mathbb {R})\) and in \(L^p(\mathbb {R})\) operator norm with \(p\in \{1,2,\infty \}\) to the operator \(\chi _tT\chi _t\). Additionally, for any \(\gamma \in [0,1]\),

in \(L^p(\mathbb {R})\) operator norm with \(p\in \{1,2,\infty \}\).

Proof

The convergences have been proven for \(\gamma =1\) in [56, Lemma 4.2]. The extension to \(\gamma \in [0,1)\) follows from the Neumann series expansion of the resolvents in (3.24); compare Lemma 3.2 and [2, Lemma 2.1]. \(\square \)
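The Neumann series mechanism behind this extension can be seen in finite dimensions. The sketch below is only an illustration under stated assumptions: the \(2\times 2\) matrix K is a hypothetical stand-in for a bounded operator of norm less than one, and the function names are ours. It checks that the partial sums of \(\sum _{m\ge 0}(\gamma K)^m\) invert \(1-\gamma K\) up to a remainder \((\gamma K)^N\).

```python
def mat_mul(a, b):
    """Product of two square matrices stored as lists of rows."""
    n = len(a)
    return [[sum(a[i][k] * b[k][j] for k in range(n)) for j in range(n)]
            for i in range(n)]


def identity(n):
    return [[1.0 if i == j else 0.0 for j in range(n)] for i in range(n)]


def neumann_resolvent(k, gamma, terms):
    """Partial sum sum_{m=0}^{terms-1} (gamma*K)^m approximating (1 - gamma*K)^{-1}."""
    n = len(k)
    gk = [[gamma * v for v in row] for row in k]
    total = identity(n)
    power = identity(n)
    for _ in range(terms - 1):
        power = mat_mul(power, gk)
        total = [[x + y for x, y in zip(rt, rp)] for rt, rp in zip(total, power)]
    return total


def residual(k, gamma, terms):
    """Largest entry of (1 - gamma*K) * partial_sum - 1, which equals (gamma*K)^terms."""
    n = len(k)
    one_minus = [[(1.0 if i == j else 0.0) - gamma * k[i][j] for j in range(n)]
                 for i in range(n)]
    prod = mat_mul(one_minus, neumann_resolvent(k, gamma, terms))
    return max(abs(prod[i][j] - (1.0 if i == j else 0.0))
               for i in range(n) for j in range(n))


# Hypothetical symmetric matrix with spectral radius below one.
K = [[0.5, 0.2], [0.2, 0.3]]

if __name__ == "__main__":
    for terms in (5, 20, 60):
        print(terms, residual(K, 0.8, terms))
```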

We now apply Lemmas 3.5, 3.6 and 3.7 in the large n analysis of the Fredholm determinants back in (3.12), after the rescaling \(t\mapsto t+\sqrt{2n}\). First the leading factor:

Lemma 3.8

For any \(\gamma \in [0,1]\) and \(t\in \mathbb {R}\),

Proof

We know from Lemma 3.2 that \(\widetilde{T}_n\) is trace class on \(L^2(t,\infty )\) and the same applies to T (since it is a product of Hilbert–Schmidt operators). Thus, with [43, Chapter IV, (5.14)],

But the operator difference in trace norm converges to zero by Lemma 3.7 and \(\Vert \chi _t\widetilde{T}_{2n}\chi _t\Vert _1\) remains bounded by the same result. This completes our proof. \(\square \)
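For orientation, the estimate we understand to be behind [43, Chapter IV, (5.14)] is the standard Lipschitz bound \(|\det (1+A)-\det (1+B)|\le \Vert A-B\Vert _1\exp (1+\Vert A\Vert _1+\Vert B\Vert _1)\) for trace class A, B; that this is the exact form of the cited inequality is an assumption on our part. The following sketch checks the bound for hypothetical real symmetric \(2\times 2\) matrices, where the trace norm is the sum of the absolute values of the eigenvalues.

```python
import math


def trace_norm_sym2(m):
    """Trace norm of a real symmetric 2x2 matrix: sum of |eigenvalues|."""
    (a, b), (_, d) = m
    mean = 0.5 * (a + d)
    radius = math.hypot(0.5 * (a - d), b)
    return abs(mean + radius) + abs(mean - radius)


def det_one_plus(m):
    """det(1 + M) for a 2x2 matrix M."""
    (a, b), (c, d) = m
    return (1 + a) * (1 + d) - b * c


def lipschitz_gap(A, B):
    """Return both sides of |det(1+A) - det(1+B)| <= ||A-B||_1 * exp(1 + ||A||_1 + ||B||_1)."""
    diff = [[x - y for x, y in zip(ra, rb)] for ra, rb in zip(A, B)]
    lhs = abs(det_one_plus(A) - det_one_plus(B))
    rhs = trace_norm_sym2(diff) * math.exp(1 + trace_norm_sym2(A) + trace_norm_sym2(B))
    return lhs, rhs


if __name__ == "__main__":
    # Hypothetical sample matrices, B a small symmetric perturbation of A.
    A = [[0.3, -0.1], [-0.1, 0.2]]
    B = [[0.31, -0.1], [-0.1, 0.19]]
    print(lipschitz_gap(A, B))
```

In the proof above the bound is applied with \(A=-\gamma \chi _t\widetilde{T}_{2n}\chi _t\) and \(B=-\gamma \chi _tT\chi _t\), so the right-hand side tends to zero once the trace norm difference does and the trace norms stay bounded.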

Next, we move on to the \(L^2(\mathbb {R})\) inner products which appear in (3.14).

Lemma 3.9

For any \(\gamma \in [0,1]\) and \(t\in \mathbb {R}\),

Proof

In the first inner product, we write

and now use that, uniformly in \(x\in \mathbb {R}\),

But from Lemma 3.5 and Lemma 3.7, we also know that for \(p\in \{1,2\}\),

so with (3.18) and Hölder’s inequality therefore back in (3.25)

as claimed. For the second inner product, we write instead (with \(\chi _{[t,\infty )}(t)=1\))

and recall the previous decomposition of \(\widetilde{\phi }_n\) used in (3.25). Hence, with Lemma 3.7 and (3.17), (3.23) we find from Hölder’s inequality,

The derivation of the third inner product is completely analogous. \(\square \)

At this point, we are left with the computation of the large n limits of the rescaled integrals \(I_k\). Let R(x, y) denote the kernel of the resolvent \(R=\bar{\gamma }T\chi _t(1-\bar{\gamma }\chi _tT\chi _t)^{-1}\) on \(L^2(\mathbb {R})\).

Lemma 3.10

For every \(\gamma \in [0,1]\) and \(t\in \mathbb {R}\),

Proof

We begin with the kernel function identity (cf. [62, page 748]),

which, upon insertion into the integrand of \(I_1(t+\sqrt{2n},\gamma ,2n)\), leads to four integrals,

and

Apply Lemma 3.6 and conclude for the first two integrals

For the third integral in (3.26), we write

and note that each entry of the last \(L^2(\mathbb {R})\) inner product converges to its formal limits in \(L^2(\mathbb {R})\) sense, cf. Lemma 3.7, equation (3.23) and the workings in [56, page 1643]. The outstanding fourth integral is treated similarly, the difference being that the first entry in the corresponding \(L^2(\mathbb {R})\) inner product equals

Since both terms converge to their formal limits in \(L^2(\mathbb {R})\) sense (compare our reasoning above and (3.23)), we find all together,

which is the desired formula for \(I_1\), given that \(R=\bar{\gamma }T\chi _t(1-\bar{\gamma }\chi _tT\chi _t)^{-1}\). The derivation of the limit for \(I_2\) is completely analogous and in fact simpler since no integrals over \((-\infty ,t)\) occur. Moving ahead, the limit evaluation of \(I_3(t+\sqrt{2n},\gamma ,2n)\) also requires four integrals,

Note that

since \(\psi _{2n}\) is an odd function. Also

which converges to its formal limit as \(n\rightarrow \infty \); compare our reasoning for \(I_1\) and (3.18). The same is true for the remaining fourth integral and we obtain all together, as \(n\rightarrow \infty \),

which is the claimed identity. The derivation for \(I_4\) is again similar and does not use any integrals along \((-\infty ,t)\). This completes our proof. \(\square \)

With Lemmas 3.8, 3.9 and 3.10 in place, we now obtain the following result.

Proposition 3.11

As \(n\rightarrow \infty \), uniformly for \(t\in \mathbb {R}\) chosen from compact subsets and any \(\gamma \in [0,1]\),

where u, v, p, q, r, w are the following six functions of \((t,\gamma )\in \mathbb {R}\times [0,1]\),

3.3 The Limit (1.12) for Odd n

In this subsection, we will compute the limit \(F_{2n+1}(t+\sqrt{2n+1},\gamma )\) using a comparison argument. Precisely, we show how the computations in Subsect. 3.1 have to be modified in order to account for odd \(n\in \mathbb {Z}_{\ge 3}\). These additional manipulations are necessary given the different structure of the operator \(IS_n\) in Proposition 1.1 for odd n. The details are as follows. We first relate \(\mathbf{K}_{2n+1}\) to \(\mathbf{K}_{2n}\):

Proposition 3.12

For any \(n\in \mathbb {Z}_{\ge 3}\),

and thus in turn,

Proof

Identity (3.30) follows from the equality

which appears in [56, page 1628] and which can be proven by induction on \(n\in \mathbb {Z}_{\ge 3}\) using the original definition of \(S_n(x,y)\) given in Proposition 1.1. Once (3.32) is known we find immediately (3.30) by comparison with (3.9). On the other hand,

which used (3.9) and

in the last equality. However, by the Legendre duplication formula [54, 5.5.5], for any \(n\in \mathbb {Z}_{\ge 1}\),

so (3.33) yields

and this is (3.31) after comparison with the kernel of \(IS_{2n+1}\) written in Proposition 1.1. \(\square \)
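The Legendre duplication formula [54, 5.5.5] used in this proof reads \(\Gamma (2z)=\pi ^{-1/2}2^{2z-1}\Gamma (z)\Gamma (z+\frac{1}{2})\). A short numerical sanity check, with a hypothetical helper name and sample arguments chosen only for illustration, can be sketched as follows.

```python
import math


def duplication_defect(z):
    """Relative defect in Gamma(2z) = 2**(2z-1) * Gamma(z) * Gamma(z + 1/2) / sqrt(pi)."""
    lhs = math.gamma(2 * z)
    rhs = 2 ** (2 * z - 1) * math.gamma(z) * math.gamma(z + 0.5) / math.sqrt(math.pi)
    return abs(lhs - rhs) / abs(lhs)


if __name__ == "__main__":
    # Sample arguments (assumed for illustration), including integer and half-integer z.
    for z in (1.0, 2.5, 10.0):
        print(z, duplication_defect(z))
```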

Inserting (3.30) and (3.31) into formula (1.2) for \(\mathbf{K}_{2n+1}\), we find that \(\mathbf{K}_{2n+1}=\mathbf{K}_{2n}+\mathbf{E}_{2n}\) where the operator \(\mathbf{E}_n\) has kernel

Note that \(\chi _t\mathbf{E}_n\chi _t\) is finite rank on \(L^2(\mathbb {R})\oplus L^2(\mathbb {R})\), so in particular trace class. Also, since for any \(x\in \mathbb {R}\),

Lemma 3.5 and the triangle inequality yield that, in trace norm,

But \(1-\gamma \chi _{t+\sqrt{2n}}\mathbf{K}_{2n}\chi _{t+\sqrt{2n}}\) is invertible for sufficiently large n and any \((t,\gamma )\in \mathbb {R}\times [0,1]\) by the workings of Sect. 3 and Remark 1.2. Hence, we use (1.4) and obtain for \(n\ge n_0\),

where the second (finite rank) determinant converges to one as \(n\rightarrow \infty \) because of (3.34). This shows that

for any \((t,\gamma )\in \mathbb {R}\times [0,1]\). In fact, the above convergence is uniform in \((t,\gamma )\in \mathbb {R}\times [0,1]\) chosen from compact subsets and since \(F_{2n+1}(t+\sqrt{2n},\gamma )\) is at least differentiable in \(t\in \mathbb {R}\) (this can be seen directly from (1.1) by scaling t into the kernel and then using the logic behind [1, Lemma 2.20]), we find

on compact subsets of \((t,\gamma )\in \mathbb {R}\times [0,1]\). Hence, combining (3.35) with (3.36) we arrive at the analogue of (3.28) for odd n, i.e.,

Proposition 3.13

Proposition 3.11 holds with \(F_{2n}(t+\sqrt{2n},\gamma )\) in the left-hand side of (3.28) replaced by \(F_{2n+1}(t+\sqrt{2n+1},\gamma )\).

Finally, merging Propositions 3.11 and 3.13 we have now established the existence of the limit (1.12). This completes the current section.

4 Proof of Theorem 1.8—final steps

In order to prove the outstanding representation (1.13) we now find a new representation for the \(3\times 3\) determinant in (3.28). To begin with, we list four algebraic relations between the functions u, v, p, q, r and w in Corollary 4.3. These follow from the next lemma. Recall \(R=(1-\bar{\gamma }T\chi _t\upharpoonright _{L^2(\mathbb {R})})^{-1}-1\) and the definitions of g and G in (1.9).

Lemma 4.1

For every \((t,\gamma )\in \mathbb {R}\times [0,1]\),

Proof

The first equality follows from [2, (4.9)] with the formal replacements \(\gamma \mapsto \bar{\gamma },G^{\gamma }\mapsto G\) and \(g^{\gamma }\mapsto g\), see [2, (4.3)]. The second and third are a consequence of (A.3). Indeed, we have

and, similarly,

Now choose \(K=T\) (which is self-adjoint since \(\phi (x)=\psi (x)=g(x)\) in (A.1)) and \(I=(0,\infty )\) in (A.3), so that

which is the second integral identity. For the third, we simply choose \(I=(-\infty ,0)\) in (A.3), and for the fourth we use (A.5), self-adjointness of T and \(\int _{-\infty }^{\infty }g(x){\mathrm d}x=1\) to find that

This completes our proof. \(\square \)

Remark 4.2

The first and third integral identities in Lemma 4.1 are the \(\bar{\gamma }\)-generalizations of the equalities [55, (2.6),(2.8),(2.10)] and [55, (2.3),(2.9)]. The second and fourth identities are seemingly new.

Corollary 4.3

For any \((t,\gamma )\in \mathbb {R}\times [0,1]\),

Proof

These follow from inserting the integral identities of Lemma 4.1 into the definitions of u, v, p, q, r and w. \(\square \)

Once we substitute (4.1) into the \(3\times 3\) determinant (3.28) we are left with two unknowns, p and q, say, and the determinant simplifies to

Next, we define the two functions

for \((t,\gamma )\in \mathbb {R}\times [0,1],k=1,2\) and note that by (3.29)

Inserting (4.3) in (4.2), we find in turn

and now set out to simplify \(\tau _k\). First, by the second and fourth identity in Lemma 4.1,

Second, making essential use of the regularization scheme for Fredholm determinant and inner product manipulations in [62, Section VIII], we have the following two analogues of [37, (4.18),(4.21)] which we will use with \(a=\pm \sqrt{\bar{\gamma }}\).

Lemma 4.4

For any \((t,a)\in \mathbb {R}\times [-1,1]\),

where, for any test function f,

and \(S_t:L^2(0,\infty )\rightarrow L^2(0,\infty )\) denotes the trace class integral operator on \(L^2(0,\infty )\) with kernel

Proof

Note that

On the other hand, if \(T_t:L^2(0,\infty )\rightarrow L^2(0,\infty )\) has kernel \(T_t(x,y):=T(x+t,y+t)\), then

and we have \(T_t=S_tS_t\). Thus, without explicitly writing the underlying Hilbert spaces,

by self-adjointness of \(S_t\) and [2, Lemma 6.1]. Now using that \((1+aS_t)^{-1}=1-a(1+aS_t)^{-1}S_t\), we obtain at once the claimed identity from \(\langle \chi _0,\delta _0\rangle _{L^2(0,\infty )} =1\). \(\square \)
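Two algebraic facts drive this proof: the factorization \(1-a^2T_t=(1-aS_t)(1+aS_t)\), which follows from \(T_t=S_tS_t\), and the resolvent identity \((1+aS_t)^{-1}=1-a(1+aS_t)^{-1}S_t\). The finite-dimensional sketch below checks both on a hypothetical symmetric \(2\times 2\) matrix S standing in for \(S_t\); the matrix entries, the value of a and the function names are assumptions made for illustration.

```python
def mat_mul2(a, b):
    """Product of two 2x2 matrices stored as lists of rows."""
    return [[sum(a[i][k] * b[k][j] for k in range(2)) for j in range(2)]
            for i in range(2)]


def det2(m):
    return m[0][0] * m[1][1] - m[0][1] * m[1][0]


def inv2(m):
    d = det2(m)
    return [[m[1][1] / d, -m[0][1] / d], [-m[1][0] / d, m[0][0] / d]]


def one_plus(m, c):
    """Return the matrix 1 + c*M."""
    return [[(1.0 if i == j else 0.0) + c * m[i][j] for j in range(2)]
            for i in range(2)]


def factorization_defect(s, a):
    """|det(1 - a^2 S^2) - det(1 - aS) det(1 + aS)|."""
    t = mat_mul2(s, s)
    return abs(det2(one_plus(t, -a * a)) - det2(one_plus(s, -a)) * det2(one_plus(s, a)))


def resolvent_identity_defect(s, a):
    """Largest entry of (1 + aS)^{-1} - (1 - a (1 + aS)^{-1} S)."""
    r = inv2(one_plus(s, a))
    rs = mat_mul2(r, s)
    return max(abs(r[i][j] - ((1.0 if i == j else 0.0) - a * rs[i][j]))
               for i in range(2) for j in range(2))


if __name__ == "__main__":
    S = [[0.4, 0.1], [0.1, 0.2]]  # hypothetical stand-in for S_t
    print(factorization_defect(S, 0.7), resolvent_identity_defect(S, 0.7))
```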

Lemma 4.5

For every \((t,a)\in \mathbb {R}\times [-1,1]\),

Proof

As outlined in [2, (6.9)], identity (4.6) is equivalent to

and thus to

where we do not indicate the underlying Hilbert spaces for compact notation. In proving (4.7), we use the following straightforward a-generalization of [2, (6.11)],

where \(\Delta _0\) denotes multiplication by \(\delta _0(x)\) and \(D(=\frac{{\mathrm d}}{{\mathrm d}x})\) differentiation. We have thus

i.e., identity (4.7). This completes our proof. \(\square \)

Hence, given that \(\det (1\mp \sqrt{\bar{\gamma }} S_t\upharpoonright _{L^2(0,\infty )})=\det (1\mp \sqrt{\bar{\gamma }}\chi _tS\chi _t\upharpoonright _{L^2(\mathbb {R})})>0\) with \(S:L^2(\mathbb {R})\rightarrow L^2(\mathbb {R})\) as in the formulation of Theorem 1.8, we obtain the following result from Lemma 4.4 and (4.6) with \(a=\pm \sqrt{\bar{\gamma }}\).

Proposition 4.6

For any \((t,\gamma )\in \mathbb {R}\times [0,1]\),

We now return to (4.4) and first use that \(\tau _1\tau _2=1\), so after simplification

But since

we then find (1.13) from (1.10), Propositions 3.11, 3.13 and equations (4.8), (4.9), (4.10). This completes our proof of Theorem 1.8.

5 Proof of Theorem 1.9

Our proof begins with the following analogue of [37, (4.12)].

Lemma 5.1

For any \((t,\gamma )\in \mathbb {R}\times [0,1]\), we have with \(\mu (t;\gamma )\) as in (1.15),

Proof

By definition of p and u in Proposition 3.11,

But with the formal replacement \(\gamma \mapsto \bar{\gamma }\) in [2, Section 4], we have

see [2, (4.19)], where (compare (1.15) and [2, Proposition 3.10]; see also Footnote 1)

On the other hand, from [2, (4.11), (4.18)] after dividing out \(\sqrt{2\gamma }\) and replacing \(\gamma \mapsto \bar{\gamma }\),

so that with (5.2) and (5.3) back in the fourth equation in (4.1),

Thus, all together,

which is the analogue of [37, (4.12)]. The outstanding formula for \(\tau _1(t,\bar{\gamma })\) follows from (4.8). \(\square \)

In order to arrive at (1.14), we now apply (3.28), Proposition 3.13 and (4.9),

But for any \(a\in [0,1]\), see [2, (3.33)],

so with (5.1),

This is exactly (1.14).

6 Proof of Corollary 1.11

Note that with the abbreviation (1.12), for any \(\gamma \in [0,1]\),

since each eigenvalue is removed independently with likelihood \(1-\gamma \). But comparing the latter with (1.17) we find immediately (1.18). Note also that since \(\mu (x;\gamma )\) is in fact real analytic in \(x\in \mathbb {R}\) for any fixed \(\gamma \in [0,1]\) (see [2, Corollary 3.6] for continuity in x, real analyticity follows by a similar argument using the analytic Fredholm alternative in [64]) we obtain from Taylor’s theorem, (6.1) and (1.17),

This is the standard relation between the generating function and eigenvalue occupation probability known for any continuous one-dimensional statistical mechanical system, cf. [40, (8.1)].
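To make the thinning mechanism concrete, consider a toy model in which the number N of points in a set has a finite probability mass function \(p_k\) and each point is kept independently with probability \(\gamma \). Then the probability of seeing no kept point is \(E(\gamma )=\sum _kp_k(1-\gamma )^k\), and the probability of seeing exactly m kept points is \(\frac{(-\gamma )^m}{m!}\frac{{\mathrm d}^m}{{\mathrm d}\gamma ^m}E(\gamma )\). The sketch below verifies this elementary relation; the mass function and all names are hypothetical and are not the quantities of the real Ginibre ensemble.

```python
from math import comb, factorial


def generating_function_derivative(pmf, gamma, m):
    """m-th derivative in gamma of E(gamma) = sum_k p_k (1 - gamma)**k."""
    total = 0.0
    for k, p in enumerate(pmf):
        if k >= m:
            falling = factorial(k) // factorial(k - m)
            total += p * (-1) ** m * falling * (1 - gamma) ** (k - m)
    return total


def occupation_from_generating_function(pmf, gamma, m):
    """P(exactly m kept points) via derivatives of the generating function."""
    return (-gamma) ** m / factorial(m) * generating_function_derivative(pmf, gamma, m)


def occupation_direct(pmf, gamma, m):
    """P(exactly m kept points) by conditioning on N = k and binomial thinning."""
    return sum(p * comb(k, m) * gamma ** m * (1 - gamma) ** (k - m)
               for k, p in enumerate(pmf) if k >= m)


if __name__ == "__main__":
    pmf = [0.1, 0.3, 0.4, 0.2]  # hypothetical distribution of N on {0, 1, 2, 3}
    for m in range(4):
        print(m, occupation_from_generating_function(pmf, 0.6, m),
              occupation_direct(pmf, 0.6, m))
```

At \(\gamma =1\) no point is removed and the formula returns \(p_m\) itself, which is the unthinned occupation probability.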

7 Proof of Theorem 1.13 and Lemma 1.14

We prove (1.19), (1.20) and Lemma 1.14 in the upcoming three subsections.

7.1 Right Tail Asymptotics—Proof of (1.19)

From (5.5), i.e., [2, (3.33)],

and thus from [2, Lemma 3.11], as \(t\rightarrow +\infty \),

uniformly in \(\gamma \in [0,1]\). Moreover, from [2, (3.31), Proposition 3.10], as \(x\rightarrow +\infty \),

so that in (1.15), as \(t\rightarrow +\infty \),

uniformly in \(\gamma \in [0,1]\). Inserting (7.3) into (1.16) and combining the so-obtained result with (7.2) yields immediately (1.19).

7.2 Left Tail Asymptotics—Proof of (1.20)

From [2, Proposition 5.7], as \(t\rightarrow -\infty \) for any fixed \(a\in [0,1)\),

with the polylogarithm \(\text {Li}_s(z)\), [54, 25.12.10] and an unknown, t-independent, term \(D_1(a)\). Moreover, from [2, page 492], as \(t\rightarrow -\infty \) and fixed \(a\in [0,1)\),

with another unknown, t-independent, term \(D_2(a)\). Thus combining (7.4) and (7.5) in the right-hand side of (1.14) we find that for \(\gamma \in [0,1)\), as \(t\rightarrow -\infty \),

where \(\eta (\gamma )\) is, as of now, unknown. Since \(\eta (\gamma )\) comes from \(D_1(\bar{\gamma })\) and \(D_2(\bar{\gamma })\), we split its computation into two parts.

7.2.1 Total Integral Computation

We first address the computation of \(D_2(\bar{\gamma })\). Since

we need to evaluate a total integral. In order to achieve this, we follow the approach developed in [4], our net result being an analogue of [4, (28)]. Recall that \(\mathbf{Y}(z)=\mathbf{Y}(z;x,\gamma )\) solves RHP 1.3.

Lemma 7.1

The well-defined and invertible limit

satisfies

for arbitrary \(x,x_0\in \mathbb {R}\) and \(\gamma \in [0,1]\).

Proof

Define \(\mathbf{W}(z;x,\gamma ):=\mathbf{Y}(z;x,\gamma )\mathrm {e}^{-\mathrm {i}zx\sigma _3}\) for \(z\in \mathbb {C}\setminus \mathbb {R}\) with \((x,\gamma )\in \mathbb {R}\times [0,1]\) and where \(\sigma _3:=\bigl [{\begin{matrix}1 &{} 0\\ 0 &{} -1\end{matrix}}\bigr ]\). It is well-known, cf. [2, page 479], that \(\mathbf{W}=\mathbf{W}(z;x,\gamma )\) solves the Zakharov–Shabat system

Taking the limit \(z\rightarrow 0\) with \(\Im z<0\), we find that

with general solution (7.7). This completes our proof. \(\square \)
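The appearance of \(\cosh \nu \) and \(\sinh \nu \) in such a general solution can be illustrated on a model problem. The sketch below assumes a toy system \(\frac{{\mathrm d}}{{\mathrm d}x}V=y(x)\bigl [{\begin{matrix}0 &{} 1\\ 1 &{} 0\end{matrix}}\bigr ]V\) with a hypothetical real coefficient \(y(x)=\mathrm {e}^{-x^2}\); its solution with initial vector \((1,0)\) at \(x_0\) is \((\cosh \nu ,\sinh \nu )\) with \(\nu =\int _{x_0}^xy(s)\,{\mathrm d}s\). The signs, factors and normalization of the actual system at \(z=0\) are not reproduced here, so this is an analogy only.

```python
import math


def propagate(y, x0, x1, steps=4000):
    """Classical RK4 for V' = y(x) * [[0, 1], [1, 0]] V with V(x0) = (1, 0)."""
    h = (x1 - x0) / steps
    v1, v2 = 1.0, 0.0
    x = x0

    def rhs(pos, a, b):
        # The off-diagonal coefficient matrix swaps the two components.
        return y(pos) * b, y(pos) * a

    for _ in range(steps):
        k1 = rhs(x, v1, v2)
        k2 = rhs(x + h / 2, v1 + h / 2 * k1[0], v2 + h / 2 * k1[1])
        k3 = rhs(x + h / 2, v1 + h / 2 * k2[0], v2 + h / 2 * k2[1])
        k4 = rhs(x + h, v1 + h * k3[0], v2 + h * k3[1])
        v1 += h / 6 * (k1[0] + 2 * k2[0] + 2 * k3[0] + k4[0])
        v2 += h / 6 * (k1[1] + 2 * k2[1] + 2 * k3[1] + k4[1])
        x += h
    return v1, v2


def closed_form(x0, x1):
    """(cosh nu, sinh nu) with nu the integral of exp(-s^2) from x0 to x1."""
    nu = 0.5 * math.sqrt(math.pi) * (math.erf(x1) - math.erf(x0))
    return math.cosh(nu), math.sinh(nu)


if __name__ == "__main__":
    print(propagate(lambda s: math.exp(-s * s), -3.0, 3.0))
    print(closed_form(-3.0, 3.0))
```

In this toy setting the total integral of y is encoded in the limits of the solution as \(x\rightarrow +\infty \) and \(x_0\rightarrow -\infty \), which mirrors the strategy pursued next.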

We now compute the limits of \(\cosh \nu \) and \(\sinh \nu \) as \(x\rightarrow +\infty \) and \(x_0\rightarrow -\infty \). By (7.7) these limits follow from the x-asymptotic behavior of \(\mathbf{V}(x,\gamma )\) and thus from \(\mathbf{Y}(z;x,\gamma )\). Some aspects of the asymptotic analysis of \(\mathbf{Y}(z;x,\gamma )\) were carried out in [2, Section 3.4], others can be found in Appendix B.

Proposition 7.2

Let \(Y_1^{12}(x,\gamma )\) denote the (12)-entry of the matrix coefficient \(\mathbf{Y}_1(x,\gamma )\) in RHP 1.3, condition (3). Then for any fixed \(\gamma \in [0,1)\),

Proof

Since \(Y_1^{12}(\cdot ,\gamma )\in L^1(\mathbb {R})\) for any \(\gamma \in [0,1]\), see [2, Corollary 3.6] and [2, pages 481, 492], we will take \(x\rightarrow +\infty \) and \(x_0\rightarrow -\infty \) in (7.7) in order to compute the desired total integral. First, consider the limit

By [2, (3.28)], for any \(x>0\) and \(\gamma \in [0,1]\),

in terms of the solution \(\mathbf{T}(z;x,\gamma )\) of [2, RHP 3.12] evaluated at \(z=0\). But [2, (3.29),(3.30)] imply that \(\mathbf{T}(0;2x,\gamma )\rightarrow \mathbb {I}\) as \(x\rightarrow +\infty \), hence

Second, we compute

using the results of the nonlinear steepest descent analysis in Appendix B. From (B.3) and (B.5), for any \(x<0\) and \(\gamma \in [0,1)\),

in terms of the solution \(\mathbf{M}(z;x,\gamma )\) of RHP B.4 evaluated at \(z=0\), where

But since the integrand in (7.9) is an odd function of s, the principal value integral in (7.9) equals zero. Furthermore, (B.8) implies that \(\mathbf{M}(0;2x,\gamma )\rightarrow \mathbb {I}\) as \(x\rightarrow -\infty \). Thus,

which inserted into (7.7) yields

and thus after simplification (with \(Y_1^{12}\in \mathbb {R}\)) the claimed integral identity. This completes our proof. \(\square \)

With Proposition 7.2 at hand, we obtain in turn

Corollary 7.3

For every fixed \(\gamma \in [0,1)\), as \(t\rightarrow -\infty \),

and thus

The last corollary concludes our computation of \(D_2(\gamma )\) in (7.5).

7.2.2 Resolvent Integration

We now compute \(D_1(\bar{\gamma })\) in (7.4) using a different set of techniques. To be precise, we first recall from [2, Proposition 3.3],

with the oriented contour (see Fig. 4 in Appendix B)

where \(\omega >0\) will be determined in Lemma 7.5 and \(G:L^2(\Omega ,|{\mathrm d}\lambda |)\rightarrow L^2(\Omega ,|{\mathrm d}\lambda |)\) has kernel

The algebraic form (7.13) of its kernel identifies the operator G as an integrable operator, cf. [45], whose resolvent \(R=-1+(1-G)^{-1}\) on \(L^2(\Omega )\), if existent, has the form (B.1). Choosing right-sided limits for definiteness, we have from (B.1) and (B.2), for \(\lambda ,\mu \in \mathbb {R}\),

and for \(\lambda ,\mu \in \mathbb {R}+\mathrm {i}\omega \),

where \(\mathbf{S}(z)\) connects to RHP 1.3 via \(\mathbf{S}(z;t,\gamma )=\mathbf{Y}(z;\frac{t}{2},\gamma ),z\in \mathbb {C}\setminus \mathbb {R}\); compare [2, (3.20)]. Next, we record the following standard differential identity.

Proposition 7.4

For any \((t,\gamma )\in \mathbb {R}\times [0,1]\),

with the kernel \(R(\lambda ,\mu )\) of the resolvent \(R=-1+(1-G)^{-1}\) on \(L^2(\Omega )\).

Proof

We know from [2, page 475] that the resolvent operator exists for any \((t,\gamma )\in \mathbb {R}\times [0,1]\), thus by straightforward differentiation of (7.12) and (7.13),

This concludes our proof. \(\square \)

In order to apply (7.16), we use the explicit formula (B.1) for the kernel of \(R(\lambda ,\mu )\) (see [45] for regularity properties of \(R(\lambda ,\mu )\)),

and combine it with the asymptotic results of Appendix B; afterward we integrate in (7.16). In more detail, once the \(t\rightarrow -\infty \) asymptotic expansion of the kernel \(R(\lambda ,\lambda )\) is known uniformly with respect to fixed \(\gamma \in [0,1)\) and any \(\lambda \in \Omega \) we simply integrate

and arrive at (1.20) and (1.21). The detailed steps of this approach are as follows: From (B.3), (B.5) for any \((t,\gamma )\in (-\infty ,0)\times [0,1)\), provided we choose \(\omega >0\) so that \(\Sigma _\mathbf{M}\cap (\mathbb {R}+\mathrm {i}\frac{\omega }{|t|})=\emptyset \), see Lemma 7.5,

for \((\lambda ,\mu )\in \Omega \times \Omega \) with the unimodular factors

and

Inserting (7.18) into the right-hand side of (7.16), we obtain after a short computation

with

Given the particular shape of \(\mathbf{f}(\lambda )\) and \(\mathbf{g}(\lambda )\) in (7.13), the first integral in (7.19) evaluates to zero. For the fourth integral, we record the following estimate.

Lemma 7.5

There exists \(c>0\) such that for every fixed \(\gamma \in [0,1)\) we can find \(t_0=t_0(\gamma )>0\) so that

for all \((-t)\ge t_0\).

Proof

If \(\gamma =0\), then \(\mathbf{A}_k(\lambda )\equiv \mathbb {I}\) for \(k=1,2\) and likewise \(\mathbf{M}(z)\equiv \mathbb {I}\); compare RHP B.4. Thus, the integral in question is identically zero and the claim trivially true. If \(\gamma \in (0,1)\) is fixed, pick \(\omega :=\frac{1}{4}\sqrt{-\ln \gamma }>0\) so that \(0<\frac{\omega }{|t|}<\delta _{t\gamma }\) for \((-t)\ge t_0\), and first note from (B.8),

thus, since \(\text {dist}(\Sigma _\mathbf{M},\mathbb {R})\ge \delta _{t\gamma }>0\), we indeed obtain the right-hand side in (7.20) as upper bound. On the other hand, by explicit computation using again (B.8),

where \(\text {dist}(\Sigma _\mathbf{M},\mathbb {R}+\mathrm {i}\frac{\omega }{|t|})\ge \frac{3}{4}\delta _{t\gamma }>0\) by choice of \(\omega \). We thus also obtain the right-hand side of (7.20) as upper bound and have therefore completed our proof. \(\square \)

The remaining two integrals in (7.19) yield non-trivial contributions. We first state a lemma which is used in their evaluation.

Lemma 7.6

For any \(a,b,\omega >0\),

Proof

Integration by parts in the variable s, as well as in the variable \(\lambda \), yields

and therefore

since both remaining integrals are standard Gaussians. This proves (7.21). \(\square \)

We now compute the two outstanding integrals in (7.19).

Lemma 7.7

For every \(\gamma \in [0,1)\),

Proof

Inserting the formulae for \(\mathbf{f}(\lambda ),\mathbf{g}(\lambda )\) and \(\mathbf{A}_2(\lambda )\), we find

Integrating by parts, collapsing \(\mathbb {R}+\mathrm {i}\omega \) to \(\mathbb {R}\) and using the oddness of a part of the integrand, we see that the first remaining integral yields

In the second (double) integral, we use geometric progression and the power series expansion \(\ln (1-z)=-\sum _{n=1}^{\infty }\frac{1}{n}z^n,|z|<1\) for \(h(s;1,\gamma )\),

since \(\frac{{\mathrm d}}{{\mathrm d}x}\text {Li}_{\frac{1}{2}}(x)=\frac{1}{x}\text {Li}_{-\frac{1}{2}}(x)\) and \(\text {Li}_{-\frac{1}{2}}(0)=\text {Li}_{\frac{1}{2}}(0)=0\). This completes our proof. \(\square \)
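The two series facts used in this proof, namely \(\ln (1-z)=-\sum _{n\ge 1}\frac{1}{n}z^n\) and \(\frac{{\mathrm d}}{{\mathrm d}x}\text {Li}_{\frac{1}{2}}(x)=\frac{1}{x}\text {Li}_{-\frac{1}{2}}(x)\), can be checked numerically from the defining power series \(\text {Li}_s(x)=\sum _{n\ge 1}x^n/n^s\), \(|x|<1\). The sketch below uses a truncated series and a central finite difference; the truncation length, step size and function names are assumptions chosen for illustration.

```python
import math


def polylog_series(s, x, terms=400):
    """Truncated power series Li_s(x) = sum_{n>=1} x**n / n**s, intended for |x| < 1."""
    return sum(x ** n / n ** s for n in range(1, terms + 1))


def log_series_defect(z):
    """|(-Li_1(z)) - ln(1 - z)|, i.e. the defect in the logarithm power series."""
    return abs(-polylog_series(1.0, z) - math.log(1 - z))


def derivative_defect(x, h=1e-5):
    """Central difference for d/dx Li_{1/2}(x) compared with Li_{-1/2}(x) / x."""
    numeric = (polylog_series(0.5, x + h) - polylog_series(0.5, x - h)) / (2 * h)
    exact = polylog_series(-0.5, x) / x
    return abs(numeric - exact)


if __name__ == "__main__":
    print(log_series_defect(0.3), derivative_defect(0.5))
```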

Lemma 7.8

For every \(\gamma \in [0,1)\),

Proof

Using the above formula for \(\mathbf{A}_1(\lambda )\) and (7.13) we find at once

Here, the first remaining integral was already computed in the proof of Lemma 7.7,

For the second one, we use the Plemelj–Sokhotski formula,

and note that by oddness of the integrand,

Thus, integrating by parts and adding (7.25), we find

Now change the contour \(\mathbb {R}\ni \lambda \) to \(\mathbb {R}+\mathrm {i}\omega \) by Cauchy’s theorem while using the analytic continuation (7.24) for the second round bracket. The result equals

after another integration by parts in the last equality. The obtained result is identical to the second (double) integral in the proof of Lemma 7.7, and we therefore find (7.23) all together. \(\square \)

We now combine (7.20), (7.23), (7.22) and (7.17) to obtain the following result.

Proposition 7.9

There exists \(c>0\) such that for every fixed \(\gamma \in [0,1)\) we can find \(t_0=t_0(\gamma )>0\) so that

for \((-t)\ge t_0\) where the error term \(r(t,\gamma )\) is differentiable with respect to \(\gamma \) and satisfies

Proof

We have, as \(t\rightarrow -\infty \),

uniformly in \(\gamma \in [0,1)\). Integrating this expansion in (7.17) from 0 to \(\gamma <1\) yields immediately the two leading terms in (7.26), and for the error term we estimate as follows:

This completes our proof. \(\square \)


Combining our results, we finally arrive at (1.20).

Corollary 7.10

As \(t\rightarrow -\infty \), for any \(\gamma \in [0,1)\),

Proof

From (1.14), (7.1), (7.4), (7.11) and (7.26) (substituting \(\gamma \mapsto \bar{\gamma }\) in the last equation),

as \(t\rightarrow -\infty \). The claim follows now after writing

\(\square \)

7.3 Proof of Lemma 1.14

Since \(\text {Li}_{\frac{1}{2}}(x)=x+\mathcal {O}(x^2)\) as \(x\rightarrow 0\) and, cf. [54, 25.12.12],

we see that

converges as \(\bar{\gamma }\uparrow 1\), so \(c_0(\gamma )\) is indeed continuous in \(\gamma \in [0,1]\). On the other hand, from the power series representation of the polylogarithm,

with

for some \(c>0\). Thus, for any \(0\le t<1\),

which verifies (1.23) for \(0\le \gamma <1\) through (1.21). But using again [54, 25.12.12], we also have that

so by Abel’s convergence theorem,

since \(\frac{1}{n}a_n\) is summable, see (7.28). The proof of Lemma 1.14 is now complete.

References

Amir, G., Corwin, I., Quastel, J.: Probability distribution of the free energy of the continuum directed random polymer in \(1+1\) dimensions. Comm. Pure Appl. Math. 64(4), 466–537 (2011). https://doi.org/10.1002/cpa.20347

Baik, J., Bothner, T.: The largest real eigenvalue in the real Ginibre ensemble and its relation to the Zakharov–Shabat system. Ann. Appl. Probab. 30(1), 460–501 (2020). https://doi.org/10.1214/19-AAP1509

Baik, J., Buckingham, R., DiFranco, J.: Asymptotics of Tracy-Widom distributions and the total integral of a Painlevé II function. Comm. Math. Phys. 280(2), 463–497 (2008). https://doi.org/10.1007/s00220-008-0433-5

Baik, J., Buckingham, R., DiFranco, J., Its, A.: Total integrals of global solutions to Painlevé II. Nonlinearity 22(5), 1021–1061 (2009). https://doi.org/10.1088/0951-7715/22/5/006

Baik, J., Deift, P., Suidan, T.: Combinatorics and random matrix theory, Graduate Studies in Mathematics, 172. American Mathematical Society, Providence, RI (2016)

Basor, E.L., Tracy, C.A.: Asymptotics of a tau-function and Toeplitz determinants with singular generating functions. Int. J. Modern Phys. A 7(Suppl. 1A), 83–107 (1992). https://doi.org/10.1142/S0217751X92003732

Bleher, P., Bothner, T.: Calculation of the constant factor in the six-vertex model. Ann. Inst. Henri Poincaré Comb. Phys. Interact. 1(4), 363–427 (2014). https://doi.org/10.4171/AIHPD/11

Bohigas, O., Pato, M.P.: Randomly incomplete spectra and intermediate statistics. Phys. Rev. E 74(3), 036212 (2006). https://doi.org/10.1103/PhysRevE.74.036212

Bornemann, F.: On the numerical evaluation of Fredholm determinants. Math. Comp. 79(270), 871–915 (2010). https://doi.org/10.1090/S0025-5718-09-02280-7

Borodin, A., Sinclair, C.D.: The Ginibre ensemble of real random matrices and its scaling limits. Comm. Math. Phys. 291(1), 177–224 (2009). https://doi.org/10.1007/s00220-009-0874-5

Bothner, T.: A short note on the scaling function constant problem. J. Stat. Phys. 170(4), 672–683 (2018). https://doi.org/10.1007/s10955-017-1947-z

Bothner, T., Warner, W.: Short distance asymptotics for a generalized two-point scaling function in the two-dimensional Ising model. Math. Phys. Anal. Geom. 21(4), 21–37 (2018). https://doi.org/10.1007/s11040-018-9296-y

Bothner, T., Buckingham, R.: Large deformations of the Tracy-Widom distribution I: Non-oscillatory asymptotics. Comm. Math. Phys. 359(1), 223–263 (2018). https://doi.org/10.1007/s00220-017-3006-7

Bothner, T., Deift, P., Its, A., Krasovsky, I.: On the asymptotic behavior of a log gas in the bulk scaling limit in the presence of a varying external potential I. Comm. Math. Phys. 337(3), 1397–1463 (2015). https://doi.org/10.1007/s00220-015-2357-1

Bothner, T., Deift, P., Its, A., Krasovsky, I.: On the asymptotic behavior of a log gas in the bulk scaling limit in the presence of a varying external potential II. In: Large truncated Toeplitz matrices, Toeplitz operators, and related topics. Oper. Theory Adv. Appl. 259, 213–234 (2017)

Bothner, T., Its, A., Prokhorov, A.: On the analysis of incomplete spectra in random matrix theory through an extension of the Jimbo-Miwa-Ueno differential. Adv. Math. 345, 483–551 (2019). https://doi.org/10.1016/j.aim.2019.01.025

Charlier, C.: Large gap asymptotics for the generating function of the sine point process. Proc. Lond. Math. Soc., (2) 123(2), 103–152 (2021). https://doi.org/10.1112/plms.12393

Charlier, C.: Exponential moments and piecewise thinning for the Bessel point process. Int. Math. Res. Not. IMRN (21), 16009–16073 (2021). https://doi.org/10.1093/imrn/rnaa054

Charlier, C., Claeys, T.: Large gap asymptotics for Airy kernel determinants with discontinuities. Comm. Math. Phys. 375(2), 1299–1339 (2020). https://doi.org/10.1007/s00220-019-03538-w

Claeys, T., Fahs, B., Lambert, G., Webb, C.: How much can the eigenvalues of a random Hermitian matrix fluctuate? Duke Math. J. 170(9), 2085–2235 (2021). https://doi.org/10.1215/00127094-2020-0070

Cipolloni, G., Erdős, L., Schröder, D.: Edge universality for non-Hermitian random matrices. Probab. Theory Related Fields 179(1–2), 1–28 (2021). https://doi.org/10.1007/s00440-020-01003-7

Dai, D., Xu, S-X., Zhang, L.: On the deformed Pearcey determinant, (2020), available at arXiv:2007.12691

Deift, P., Its, A., Krasovsky, I.: Asymptotics of the Airy-kernel determinant. Comm. Math. Phys. 278(3), 643–678 (2008). https://doi.org/10.1007/s00220-007-0409-x

Deift, P., Its, A., Krasovsky, I., Zhou, X.: The Widom-Dyson constant for the gap probability in random matrix theory. J. Comput. Appl. Math. 202(1), 26–47 (2007). https://doi.org/10.1016/j.cam.2005.12.040

Dieng, M.: Distribution functions for edge eigenvalues in orthogonal and symplectic ensembles: Painlevé representations. Int. Math. Res. Not. (37), 2263–2287 (2005). https://doi.org/10.1155/IMRN.2005.2263

Deift, P.: Integrable operators. Differ. Operat. Spectral Theory 189, 69–84 (1999). https://doi.org/10.1090/trans2/189/06

Deift, P., Gioev, D.: Random matrix theory: invariant ensembles and universality, Courant Lecture Notes in Mathematics, 18, Courant Institute of Mathematical Sciences, New York; American Mathematical Society, Providence, RI (2009) https://doi.org/10.1090/cln/018

Deift, P., Zhou, X.: A steepest descent method for oscillatory Riemann-Hilbert problems. Ann. of Math. (2) 137(2), 295–368 (1993). https://doi.org/10.2307/2946540

Di Francesco, P., Gaudin, M., Itzykson, C., Lesage, F.: Laughlin’s wave functions, Coulomb gases and expansions of the discriminant. Int. J. Mod. Phys. A 9, 4257–4351 (1994). https://doi.org/10.1142/S0217751X94001734

Edelman, A., Kostlan, E., Shub, M.: How many eigenvalues of a random matrix are real? J. Amer. Math. Soc. 7(1), 247–267 (1994). https://doi.org/10.2307/2152729

Edelman, A.: The probability that a random real Gaussian matrix has \(k\) real eigenvalues, related distributions, and the circular law. J. Multivariate Anal. 60(2), 203–232 (1997). https://doi.org/10.1006/jmva.1996.1653

Efetov, K.B.: Directed quantum chaos. Phys. Rev. Lett. 79(3), 491–494 (1997). https://doi.org/10.1103/PhysRevLett.79.491

Ehrhardt, T.: Dyson’s constant in the asymptotics of the Fredholm determinant of the sine kernel. Commun. Math. Phys. 262, 317–341 (2006). https://doi.org/10.1007/s00220-005-1493-4

Ehrhardt, T.: The asymptotics of a Bessel-kernel determinant which arises in random matrix theory. Adv. Math. 225(6), 3088–3133 (2010). https://doi.org/10.1016/j.aim.2010.05.020

Ferrari, P.L., Spohn, H.: A determinantal formula for the GOE Tracy-Widom distribution. J. Phys. A 38(33), L557–L561 (2005). https://doi.org/10.1088/0305-4470/38/33/L02

FitzGerald, W., Tribe, R., Zaboronski, Ol.: Sharp asymptotics for Fredholm Pfaffians related to interacting particle systems and random matrices, Electron. J. Probab., Electronic Journal of Probability, 25, Paper No. 116, 15, (2020) https://doi.org/10.1214/20-ejp512

Forrester, P.J., Desrosiers, P.: Relationships between \(\tau \)-functions and Fredholm determinant expressions for gap probabilities in random matrix theory. Nonlinearity 19(7), 1643–1656 (2006). https://doi.org/10.1088/0951-7715/19/7/012

Forrester, P.J.: Diffusion processes and the asymptotic bulk gap probability for the real Ginibre ensemble. J. Phys. A 48(32), 324001, 14 pp. (2015). https://doi.org/10.1088/1751-8113/48/32/324001

Forrester, P.J.: Hard and soft edge spacing distributions for random matrix ensembles with orthogonal and symplectic symmetry. Nonlinearity 19(12), 2989–3002 (2006). https://doi.org/10.1088/0951-7715/19/12/015

Forrester, P.J.: Log-gases and random matrices. London Mathematical Society Monographs Series, vol. 34. Princeton University Press, Princeton, NJ (2010)

Forrester, P.J., Nagao, T.: Eigenvalue Statistics of the Real Ginibre Ensemble. Phys. Rev. Lett. 99(5), 050603 (2007). https://doi.org/10.1103/PhysRevLett.99.050603

Ginibre, J.: Statistical ensembles of complex, quaternion, and real matrices. J. Math. Phys. 6, 440–449 (1965). https://doi.org/10.1063/1.1704292

Gohberg, I., Goldberg, S., Krupnik, N.: Traces and determinants of linear operators, Operator Theory: Advances and Applications, 116, Birkhäuser Verlag, Basel (2000)

Illian, J., Penttinen, A., Stoyan, H., Stoyan, D.: Statistical analysis and modelling of spatial point patterns, Statistics in Practice, John Wiley & Sons, Ltd., Chichester (2008)

Its, A.R., Izergin, A.G., Korepin, V.E., Slavnov, N.A.: Differential equations for quantum correlation functions. In: Proceedings of the Conference on Yang-Baxter Equations, Conformal Invariance and Integrability in Statistical Mechanics and Field Theory. Internat. J. Modern Phys. B 4(5), 1003–1037 (1990). https://doi.org/10.1142/S0217979290000504

Its, A., Lisovyy, O., Prokhorov, A.: Monodromy dependence and connection formulæ for isomonodromic tau functions. Duke Math. J. 167(7), 1347–1432 (2018). https://doi.org/10.1215/00127094-2017-0055

Its, A., Prokhorov, A.: Connection problem for the tau-function of the sine-Gordon reduction of Painlevé-III equation via the Riemann-Hilbert approach. Int. Math. Res. Not. 2016(22), 6856–6883 (2016). https://doi.org/10.1093/imrn/rnv375

Khoruzhenko, B.A., Sommers, H.-J.: Non-Hermitian ensembles. In: The Oxford handbook of random matrix theory, pp. 376–397. Oxford Univ. Press, Oxford (2011)

Krasovsky, I.: Gap probability in the spectrum of random matrices and asymptotic of polynomials orthogonal on an arc of the unit circle. Int. Math. Res. Not. 25, 1249–1272 (2004)

Kwapien, J., Drozdz, S., Gorski, A.Z., Oswiecimka, P.: Asymmetric matrices in an analysis of financial correlations. Acta Phys. Pol. B 37, 3039 (2006)

Lambert, G.: Incomplete determinantal processes: from random matrix to Poisson statistics. J. Stat. Phys. 176(6), 1343–1374 (2019). https://doi.org/10.1007/s10955-019-02345-w

Lehmann, N., Sommers, H.-J.: Eigenvalue statistics of random real matrices. Phys. Rev. Lett. 67(8), 941–944 (1991). https://doi.org/10.1103/PhysRevLett.67.941

May, R.: Will a Large Complex System be Stable? Nature 238(5364), 413–414 (1972). https://doi.org/10.1038/238413a0

Olver, F.W.J., Lozier, D.W., Boisvert, R.F., Clark, C.W. (eds.): NIST handbook of mathematical functions. U.S. Department of Commerce, National Institute of Standards and Technology, Washington, DC; Cambridge University Press, Cambridge (2010)

Poplavskyi, M., Tribe, R., Zaboronski, O.: On the distribution of the largest real eigenvalue for the real Ginibre ensemble. Ann. Appl. Probab. 27(3), 1395–1413 (2017). https://doi.org/10.1214/16-AAP1233

Rider, B., Sinclair, C.D.: Extremal laws for the real Ginibre ensemble. Ann. Appl. Probab. 24(4), 1621–1651 (2014). https://doi.org/10.1214/13-AAP958

Simon, B.: Trace ideals and their applications, Mathematical Surveys and Monographs, 120. American Mathematical Society, Providence, RI (2005)

Sommers, H.J., Crisanti, A., Sompolinsky, H., Stein, Y.: Spectrum of Large Random Asymmetric Matrices. Phys. Rev. Lett. 60(19), 1895–1898 (1988). https://doi.org/10.1103/PhysRevLett.60.1895

Sommers, H.-J.: Symplectic structure of the real Ginibre ensemble. J. Phys. A 40(29), F671–F676 (2007). https://doi.org/10.1088/1751-8113/40/29/F03

Soshnikov, A.: Universality at the edge of the spectrum in Wigner random matrices. Comm. Math. Phys. 207(3), 697–733 (1999). https://doi.org/10.1007/s002200050743

Tracy, C.A.: Asymptotics of a \(\tau \)-function arising in the two-dimensional Ising model. Comm. Math. Phys. 142(2), 297–311 (1991)

Tracy, C.A., Widom, H.: On orthogonal and symplectic matrix ensembles. Comm. Math. Phys. 177(3), 727–754 (1996)

Tracy, C.A., Widom, H.: Matrix kernels for the Gaussian orthogonal and symplectic ensembles. Ann. Inst. Fourier (Grenoble) 55(6), 2197–2207 (2005)

Zhou, X.: The Riemann-Hilbert problem and inverse scattering. SIAM J. Math. Anal. 20(4), 966–986 (1989). https://doi.org/10.1137/0520065

Additional information

Communicated by Vadim Gorin.

The work of J.B. is supported in part by the NSF grants DMS-1664692 and DMS-1954790. T.B. acknowledges support by the Engineering and Physical Sciences Research Council through grant EP/T013893/2.

Appendices

Appendix A. Integral Identities

Given two continuous functions \(\phi ,\psi :\mathbb {R}\rightarrow \mathbb {R}\) which decay exponentially fast at \(+\infty \), we define

and the associated integral operator K on \(L^2(\mathbb {R})\) with kernel K(x, y). We denote by \(f_y(x):=f(x+y)\) the horizontal shift of a function f by \(-y\).

Lemma A.1

Let \(I\subset \mathbb {R}\) be an interval and

Then for any \(y,t\in \mathbb {R}\) and \(k\in \mathbb {Z}_{\ge 1}\),

where \(K^{*}\) is the real adjoint of K.

Proof

We proceed by induction on \(k\in \mathbb {Z}_{\ge 1}\). For \(k=1\), the left-hand side in (A.2) equals

and hence by Fubini’s theorem and the definition of \(\Phi (x)\),

which is the right-hand side in (A.2). Now assume that (A.2) holds true for general k; then

where we used Fubini’s theorem in the second equality and the induction hypothesis in the third. Continuing further with Fubini’s theorem and the fact that \(K^{*}(x,y)=K(y,x)\), we have then

which is the right-hand side of (A.2) with \(k-1\mapsto k\), as desired. This concludes our proof. \(\square \)

Lemma A.1 implies the following integral identity.

Corollary A.2

For any \(t\in \mathbb {R}\) and \(k\in \mathbb {Z}_{\ge 1}\),

Proof

By (A.2) (with \(y=t\)) and \(\chi _{[t,\infty )}(t)=1\),

However, from [2, Proposition B.1] we have

since in the kernel of \(K^{*}\) the functions \(\phi \) and \(\psi \) are simply interchanged; compare (A.1). But \(\psi _0\equiv \psi \) and for any function f we have \(f_{u-t}(t)=f(u)\) by definition of the shift. Thus, altogether,

as claimed. \(\square \)
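The two elementary facts used in this proof, namely that passing to \(K^{*}\) interchanges \(\phi \) and \(\psi \) and that \(f_{u-t}(t)=f(u)\), can be sanity-checked numerically. The sketch below is illustrative only: the display (A.1) is not reproduced above, so the kernel is assumed to have the Hankel composition form \(K(x,y)=\int _0^{\infty }\phi (x+s)\psi (s+y)\,\mathrm {d}s\), and the concrete choices of `phi`, `psi`, the truncation point and the quadrature rule are hypothetical.

```python
import math

def integrate_half_line(f, upper=12.0, n=4000):
    """Composite trapezoidal rule on [0, upper]; the integrand is
    assumed to be negligible beyond `upper` (hypothetical cutoff)."""
    h = upper / n
    total = 0.5 * (f(0.0) + f(upper))
    for i in range(1, n):
        total += f(i * h)
    return total * h

# Hypothetical test functions, continuous and rapidly decaying at +infinity.
def phi(x):
    return math.exp(-x * x)

def psi(x):
    return math.exp(-x) / (1.0 + x * x)

def make_kernel(f, g):
    """ASSUMED form of (A.1): K(x, y) = int_0^infty f(x+s) g(s+y) ds."""
    def kernel(x, y):
        return integrate_half_line(lambda s: f(x + s) * g(s + y))
    return kernel

def shift(f, y):
    """Horizontal shift f_y(x) := f(x + y)."""
    return lambda x: f(x + y)

K = make_kernel(phi, psi)
K_swapped = make_kernel(psi, phi)   # phi and psi interchanged

def K_star(x, y):
    """Kernel of the real adjoint: K^*(x, y) = K(y, x)."""
    return K(y, x)

if __name__ == "__main__":
    x, y, t, u = 0.3, -0.7, 0.4, 1.5
    print("K*(x,y) - K_swapped(x,y):", K_star(x, y) - K_swapped(x, y))
    print("f_{u-t}(t) - f(u):", shift(phi, u - t)(t) - phi(u))
```

Under the assumed form of (A.1) the first difference vanishes up to rounding, since \(K(y,x)=\int _0^{\infty }\psi (x+s)\phi (s+y)\,\mathrm {d}s\); the check does not depend on the particular test functions.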

Lemma A.3

Assume \(\phi \in L^1(\mathbb {R})\) in (A.1). Then, for any \(y,t\in \mathbb {R}\) and \(k\in \mathbb {Z}_{\ge 1}\),

Proof

We use once more induction on \(k\in \mathbb {Z}_{\ge 1}\). For \(k=1\), the left-hand side in (A.4) equals

so by Fubini’s theorem

which is the right-hand side in (A.4) for \(k=1\). Assuming now that (A.4) holds for general k, we compute

Inserting (A.1) for K(u, v) and using Fubini’s theorem, we find that

by Fubini’s theorem. Using Fubini’s theorem again and the induction hypothesis, the above simplifies to

and from (A.1) we conclude that

which is the right-hand side of (A.4) with \(k\mapsto k+1\), as needed. This completes our proof. \(\square \)

The special case \(y=0\) in (A.4) will be useful for us; we summarize it below.

Corollary A.4

For any \(t\in \mathbb {R}\) and \(k\in \mathbb {Z}_{\ge 1}\),

provided \(\phi \in L^1(\mathbb {R})\) in (A.1).

Appendix B. Streamlined Nonlinear Steepest Descent Analysis

The purpose of this section is to simplify and streamline [2, Section 5]. The analysis presented in loc. cit. is sufficient for the t-derivative method of [2, Proposition 3.7] but not ideal for our current needs, i.e., for Proposition 7.4. Here are the necessary steps: From [2, Proposition 3.3],

where the integrable operator G, see (7.13), is naturally associated with the following RHP.

Riemann-Hilbert Problem B.1

([2, RHP 3.4]) For \((t,\gamma )\in \mathbb {R}\times [0,1]\), determine \(\mathbf{N}(z)=\mathbf{N}(z;t,\gamma )\in \mathbb {C}^{2\times 2}\) such that

(1) \(\mathbf{N}(z)\) is analytic for \(z\in \mathbb {C}\setminus \Omega \) where \(\Omega =\mathbb {R}\cup (\mathbb {R}+\mathrm {i}\omega )\), oriented from left to right as shown in Fig. 4. Moreover, \(\mathbf{N}(z)\) extends continuously to \(\{z\in \mathbb {C}:\Im z\ge \omega \}\cup \{z\in \mathbb {C}:0\le \Im z\le \omega \}\cup \{z\in \mathbb {C}:\Im z\le 0\}\).

(2) The limiting values \(\mathbf{N}_{\pm }(z),z\in \Omega \) from either side of \(\mathbb {C}\setminus \Omega \) satisfy

$$\begin{aligned}&\mathbf{N}_+(z)=\mathbf{N}_-(z)\begin{bmatrix}1 &{} -\mathrm {i}\sqrt{\gamma }\mathrm {e}^{-\frac{1}{4}z^2-\mathrm {i}tz}\\ 0 &{} 1\end{bmatrix},\ z\in \mathbb {R};\\&\mathbf{N}_+(z)=\mathbf{N}_-(z)\begin{bmatrix}1 &{} 0\\ -\mathrm {i}\sqrt{\gamma }\mathrm {e}^{-\frac{1}{4}z^2+\mathrm {i}tz} &{} 1\end{bmatrix},\ z\in \mathbb {R}+\mathrm {i}\omega . \end{aligned}$$

(3) As \(z\rightarrow \infty \), we enforce the normalization

$$\begin{aligned} \mathbf{N}(z)=\mathbb {I}+\mathcal {O}\big (z^{-1}\big ). \end{aligned}$$
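As a side remark, and only as a sketch of the standard argument rather than a restatement of the cited results in [2], we note why such a problem can have at most one solution. Both jump matrices in condition (2) are triangular with unit diagonal, so

$$\begin{aligned} \det \begin{bmatrix}1 &{} -\mathrm {i}\sqrt{\gamma }\mathrm {e}^{-\frac{1}{4}z^2-\mathrm {i}tz}\\ 0 &{} 1\end{bmatrix}=1=\det \begin{bmatrix}1 &{} 0\\ -\mathrm {i}\sqrt{\gamma }\mathrm {e}^{-\frac{1}{4}z^2+\mathrm {i}tz} &{} 1\end{bmatrix}, \end{aligned}$$

and therefore \(\det \mathbf{N}_+(z)=\det \mathbf{N}_-(z)\) for \(z\in \Omega \). By the continuity of the boundary values in condition (1), \(\det \mathbf{N}(z)\) extends to an entire function, which tends to 1 as \(z\rightarrow \infty \) by condition (3), so \(\det \mathbf{N}(z)\equiv 1\) by Liouville's theorem. In particular \(\mathbf{N}(z)\) is invertible, and if \(\widetilde{\mathbf{N}}(z)\) is a second solution, then \(\widetilde{\mathbf{N}}(z)\mathbf{N}(z)^{-1}\) has no jump across \(\Omega \) and tends to \(\mathbb {I}\) at infinity, hence equals \(\mathbb {I}\) identically. Here \(\widetilde{\mathbf{N}}\) is notation introduced only for this remark.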

It was shown in [2, Corollary 3.6] that the above RHP B.1 is uniquely solvable for every \((t,\gamma )\in \mathbb {R}\times [0,1]\) and its solution allows us to compute the resolvent \(R=(1-G)^{-1}-1\) on \(L^2(\Omega )\) in the form

In order to solve RHP B.1 asymptotically as \(t\rightarrow -\infty \) with \(\gamma \in [0,1)\) we first collapse the two jump contours in Fig. 4 and thus define

This leads us to the problem summarized below.

Riemann-Hilbert Problem B.2

For any \((t,\gamma )\in \mathbb {R}\times [0,1]\), the function \(\mathbf{S}(z)=\mathbf{S}(z;t,\gamma )\in \mathbb {C}^{2\times 2}\) defined in (B.2) satisfies

(1) \(\mathbf{S}(z)\) is analytic for \(z\in \mathbb {C}\setminus \mathbb {R}\) and extends continuously to the closed upper and lower half-planes.

(2) With \(\mathbf{S}_{\pm }(z)=\lim _{\epsilon \downarrow 0}\mathbf{S}(z\pm \mathrm {i}\epsilon ),z\in \mathbb {R}\), we have

$$\begin{aligned} \mathbf{S}_+(z)=\mathbf{S}_-(z)\begin{bmatrix}1-\gamma \mathrm {e}^{-\frac{1}{2}z^2} &{} -\mathrm {i}\sqrt{\gamma }\mathrm {e}^{-\frac{1}{4}z^2-\mathrm {i}tz}\\ -\mathrm {i}\sqrt{\gamma }\mathrm {e}^{-\frac{1}{4}z^2+\mathrm {i}tz} &{} 1\end{bmatrix},\ \ z\in \mathbb {R}. \end{aligned}$$

(3) As \(z\rightarrow \infty \),

$$\begin{aligned} \mathbf{S}(z)=\mathbb {I}+\mathcal {O}\big (z^{-1}\big ). \end{aligned}$$
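The jump matrix in condition (2) is the product of the upper triangular jump of RHP B.1 on \(\mathbb {R}\) with the lower triangular jump on \(\mathbb {R}+\mathrm {i}\omega \), the latter continued analytically down to the real line; the off-diagonal product \((-\mathrm {i}\sqrt{\gamma })^2\mathrm {e}^{-\frac{1}{2}z^2}=-\gamma \mathrm {e}^{-\frac{1}{2}z^2}\) produces the (1,1) entry. The following minimal sketch checks this factorization and the unit determinant numerically. It assumes that the collapsing step multiplies \(\mathbf{N}(z)\) by the lower triangular factor inside the strip \(0<\Im z<\omega \), which is our reading of the construction and not a quotation of (B.2); all function names and sample values are hypothetical.

```python
import cmath
import math

def upper_jump(z, t, gamma):
    """Jump of N on the real line (first matrix in RHP B.1)."""
    a = -1j * math.sqrt(gamma) * cmath.exp(-0.25 * z * z - 1j * t * z)
    return [[1, a], [0, 1]]

def lower_jump(z, t, gamma):
    """Jump of N on R + i*omega (second matrix in RHP B.1), here
    evaluated at real z via analytic continuation of its entry."""
    b = -1j * math.sqrt(gamma) * cmath.exp(-0.25 * z * z + 1j * t * z)
    return [[1, 0], [b, 1]]

def collapsed_jump(z, t, gamma):
    """Jump of S on the real line as stated in RHP B.2."""
    a = upper_jump(z, t, gamma)[0][1]
    b = lower_jump(z, t, gamma)[1][0]
    return [[1 - gamma * cmath.exp(-0.5 * z * z), a], [b, 1]]

def matmul2(A, B):
    """Product of two 2x2 matrices given as nested lists."""
    return [[sum(A[i][k] * B[k][j] for k in range(2)) for j in range(2)]
            for i in range(2)]

def det2(A):
    """Determinant of a 2x2 matrix."""
    return A[0][0] * A[1][1] - A[0][1] * A[1][0]

def max_abs_diff(A, B):
    """Largest entrywise modulus of A - B."""
    return max(abs(A[i][j] - B[i][j]) for i in range(2) for j in range(2))

if __name__ == "__main__":
    t, gamma = -5.0, 0.7          # hypothetical sample parameters
    for z in (-2.0, 0.0, 0.5, 3.0):
        product = matmul2(upper_jump(z, t, gamma), lower_jump(z, t, gamma))
        target = collapsed_jump(z, t, gamma)
        print(z, max_abs_diff(product, target), abs(det2(target) - 1))
```

The order of the factors matters: the upper triangular matrix stands on the left because \(\mathbf{S}_-=\mathbf{N}_-\) below the real line, while the lower triangular factor is absorbed into \(\mathbf{S}_+\) from the strip above it.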

Observe that (B.2) relates to the solution of RHP 1.3 via the simple identity \(\mathbf{S}(z;t,\gamma )=\mathbf{Y}(z;\frac{t}{2},\gamma )\). Next, fix \(t<0\), and define

with the g-function from [2, (5.4)], i.e.,

where \(h(s;t,\gamma ):=-\ln (1-\gamma \mathrm {e}^{-\frac{1}{2}t^2s^2})\) is Hölder continuous in \(s\in \mathbb {R}\) for every \(\gamma \in [0,1)\). Thus, by the standard Plemelj–Sokhotski formula, we arrive at the following problem:

Riemann-Hilbert Problem B.3

For any \((t,\gamma )\in (-\infty ,0)\times [0,1)\), the function \(\mathbf{T}(z)=\mathbf{T}(z;t,\gamma )\in \mathbb {C}^{2\times 2}\) defined in (B.3) satisfies

(1) \(\mathbf{T}(z)\) is analytic for \(z\in \mathbb {C}\setminus \mathbb {R}\) and extends continuously to the closed upper and lower half-planes.

(2) The boundary values \(\mathbf{T}_{\pm }(z)=\lim _{\epsilon \downarrow 0}\mathbf{T}(z\pm \mathrm {i}\epsilon )\) are related by the jump condition

$$\begin{aligned} \mathbf{T}_+(z)=\mathbf{T}_-(z)\begin{bmatrix}1 &{} -\mathrm {i}\sqrt{\gamma }f_1(z;t,\gamma )\mathrm {e}^{-2g_+(z;t,\gamma )}\\ -\mathrm {i}\sqrt{\gamma }f_2(z;t,\gamma )\mathrm {e}^{2g_-(z;t,\gamma )} &{} 1-\gamma \mathrm {e}^{-\frac{1}{2}t^2z^2}\end{bmatrix},\ \ z\in \mathbb {R}, \end{aligned}$$

with

$$\begin{aligned} f_k(z)\equiv f_k(z;t,\gamma ):=\frac{\mathrm {e}^{-t^2(\frac{1}{4}z^2+(-1)^k\mathrm {i}z)}}{1-\gamma \mathrm {e}^{-\frac{1}{2}t^2z^2}},\ \ z\in \mathbb {R},\ \ k=1,2. \end{aligned}\tag{B.4}$$

(3) As \(z\rightarrow \infty \),