Abstract

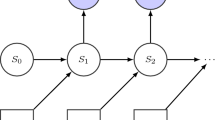

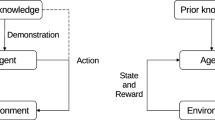

Learning from Observation (a.k.a. learning from demonstration) studies how computers can learn to perform complex tasks by observing, and thereafter imitating, the performance of an expert. Most work on learning from observation assumes that the behavior to be learned can be expressed as a state-to-action mapping. However, most behaviors of interest in real applications of learning from observation require remembering past states. We propose a Dynamic Bayesian Network approach to learning from observation that addresses this problem by assuming the existence of non-observable states.
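The limitation of a pure state-to-action mapping can be illustrated with a toy example. The sketch below is not the Dynamic Bayesian Network framework proposed in the paper; it is a minimal illustration under assumed conditions. The observation names (`corridor`, `junction`), the alternating expert, and all function names are hypothetical. The expert keeps one bit of internal memory that the observer cannot see, and a memoryless policy fitted by majority vote cannot reproduce its behavior.

```python
from collections import Counter, defaultdict


def expert_trace(observations):
    """Hypothetical expert: at each 'junction' it alternates left and right."""
    turn_left = True  # internal memory, not visible to the observer
    actions = []
    for obs in observations:
        if obs == "junction":
            actions.append("left" if turn_left else "right")
            turn_left = not turn_left
        else:
            actions.append("forward")
    return actions


def fit_reactive_policy(observations, actions):
    """Memoryless state-to-action mapping: most frequent action per observation."""
    counts = defaultdict(Counter)
    for obs, act in zip(observations, actions):
        counts[obs][act] += 1
    return {obs: c.most_common(1)[0][0] for obs, c in counts.items()}


def accuracy(predicted, actual):
    """Fraction of time steps where the predicted action matches the expert."""
    return sum(p == a for p, a in zip(predicted, actual)) / len(actual)


# Assumed demonstration: four corridor/junction pairs.
observations = ["corridor", "junction"] * 4
actions = expert_trace(observations)

# Fit the memoryless mapping and replay it on the same observations.
reactive = fit_reactive_policy(observations, actions)
reactive_actions = [reactive[obs] for obs in observations]
reactive_accuracy = accuracy(reactive_actions, actions)

print(actions)
print(reactive_accuracy)
```

Because the same observation (`junction`) is paired with two different actions, any fixed mapping from observation to action gets half of the junction decisions wrong, even on the data it was fitted to. Modeling an unobserved internal state, as the abstract proposes, is one way to account for such behavior.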

Copyright information

© 2013 Springer-Verlag Berlin Heidelberg

About this paper

Cite this paper

Ontañón, S., Montaña, J.L., Gonzalez, A.J. (2013). A Dynamic Bayesian Network Framework for Learning from Observation. In: Bielza, C., et al. Advances in Artificial Intelligence. CAEPIA 2013. Lecture Notes in Computer Science, vol 8109. Springer, Berlin, Heidelberg. https://doi.org/10.1007/978-3-642-40643-0_38

DOI: https://doi.org/10.1007/978-3-642-40643-0_38

Publisher Name: Springer, Berlin, Heidelberg

Print ISBN: 978-3-642-40642-3

Online ISBN: 978-3-642-40643-0

eBook Packages: Computer Science, Computer Science (R0)