Abstract

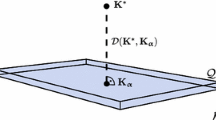

In this paper, we review existing radius-incorporated Multiple Kernel Learning (MKL) algorithms, exploring their similarities and differences to provide a deeper understanding of them. Our analysis reveals that traditional margin-based MKL algorithms also implicitly take an approximate radius into consideration through base kernel normalization. We systematically compare a number of recently developed MKL algorithms, including radius-incorporated, margin-based and discriminative variants, on four MKL benchmark data sets: Protein Subcellular Localization, Protein Fold Prediction, Oxford Flower17 and Caltech101. Classification performance is measured by classification accuracy and mean average precision. Overall, radius-incorporated MKL algorithms achieve significant improvement over their counterparts in classification performance.
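The remark on base kernel normalization can be illustrated with a minimal sketch. It assumes unit-diagonal (spherical) normalization and simplex-constrained kernel weights; the abstract does not say which normalization is analysed, and the kernels, data and helper names below are hypothetical. Under these assumptions every sample has squared norm 1 in the combined feature space, so the minimum enclosing ball has radius at most 1 for any admissible weights.

```python
import numpy as np

def rbf_kernel(X, gamma):
    """Gaussian (RBF) Gram matrix for the rows of X."""
    sq_norms = np.sum(X ** 2, axis=1)
    sq_dists = sq_norms[:, None] + sq_norms[None, :] - 2.0 * X @ X.T
    return np.exp(-gamma * np.maximum(sq_dists, 0.0))

def poly_kernel(X, degree):
    """Inhomogeneous polynomial Gram matrix (x.y + 1)^degree."""
    return (X @ X.T + 1.0) ** degree

def unit_diagonal(K):
    """Spherical normalization (assumed here): K_ij / sqrt(K_ii * K_jj)."""
    d = np.sqrt(np.diag(K))
    return K / np.outer(d, d)

def combine(kernels, weights):
    """Weighted sum of base Gram matrices."""
    return sum(w * K for w, K in zip(weights, kernels))

# Hypothetical toy data and base kernels, for illustration only.
rng = np.random.default_rng(0)
X = rng.normal(size=(20, 5))
raw = [rbf_kernel(X, 0.5), poly_kernel(X, 2), poly_kernel(X, 3)]
normalized = [unit_diagonal(K) for K in raw]

# Kernel weights on the simplex: nonnegative and summing to 1.
weights = np.array([0.2, 0.5, 0.3])

K_raw = combine(raw, weights)
K_norm = combine(normalized, weights)

# K_ii is the squared feature-space norm of sample i.
raw_diag = np.diag(K_raw)
norm_diag = np.diag(K_norm)
raw_diag_spread = raw_diag.max() - raw_diag.min()

print("diagonal spread without normalization:", raw_diag_spread)
print("diagonal after normalization is all ones:", np.allclose(norm_diag, 1.0))
```

Without normalization the squared norms depend on the kernel weights, so the scale of the enclosing ball changes as the weights change; with normalization that scale is fixed in advance, which is one way to read the claim that margin-based MKL accounts for an approximate radius implicitly.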

Copyright information

© 2014 Springer International Publishing Switzerland

About this paper

Cite this paper

Liu, X., Yin, J., Long, J. (2014). On Radius-Incorporated Multiple Kernel Learning. In: Torra, V., Narukawa, Y., Endo, Y. (eds) Modeling Decisions for Artificial Intelligence. MDAI 2014. Lecture Notes in Computer Science, vol 8825. Springer, Cham. https://doi.org/10.1007/978-3-319-12054-6_20

DOI: https://doi.org/10.1007/978-3-319-12054-6_20

Publisher Name: Springer, Cham

Print ISBN: 978-3-319-12053-9

Online ISBN: 978-3-319-12054-6

eBook Packages: Computer Science, Computer Science (R0)