Abstract

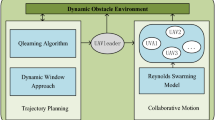

Obstacle avoidance is essential for a UAV flying in an unknown environment. In this paper, obstacle avoidance methods for a UAV in unknown environments are proposed from two different perspectives, both built on the UAV's forward-looking perception information. The first is a local real-time planning method developed from the perspective of optimization. The second uses reinforcement learning to select avoidance actions. Simulation experiments and comparative experiments are carried out to demonstrate the effectiveness of the methods.

This work was supported by the National Natural Science Foundation (NNSF) of China under Grant 61876187.

Copyright information

© 2021 The Author(s), under exclusive license to Springer Nature Switzerland AG

About this chapter

Cite this chapter

Ma, Z., Hu, J., Niu, Y., Yu, H. (2021). Reactive Obstacle Avoidance Method for a UAV. In: Koubaa, A., Azar, A.T. (eds) Deep Learning for Unmanned Systems. Studies in Computational Intelligence, vol 984. Springer, Cham. https://doi.org/10.1007/978-3-030-77939-9_3

DOI: https://doi.org/10.1007/978-3-030-77939-9_3

Publisher Name: Springer, Cham

Print ISBN: 978-3-030-77938-2

Online ISBN: 978-3-030-77939-9

eBook Packages: Intelligent Technologies and Robotics (R0)