Abstract

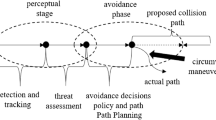

This paper applies a reinforcement-learning-based collision avoidance algorithm to small fixed-wing unmanned aerial vehicles (UAVs). The proposed algorithm achieves obstacle avoidance for a UAV in an unknown environment, and the resulting path is close to the globally optimal path. Simulation results show that the collision avoidance algorithm adapts to a variety of complex environments while allowing the UAV to approach the target quickly as it avoids obstacles.
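The abstract does not say which reinforcement learning method the paper uses. As a purely illustrative sketch, the general idea of learning an obstacle-avoiding route to a target can be shown with tabular Q-learning on a small grid. Everything here is an assumption made for illustration and not the authors' implementation: the grid size, obstacle layout, reward values, hyperparameters, and the function names `step`, `train`, and `greedy_path`. The sketch also ignores fixed-wing flight dynamics.

```python
import random

# Hypothetical 4-connected moves: up, down, left, right.
ACTIONS = [(-1, 0), (1, 0), (0, -1), (0, 1)]


def step(state, action, size, obstacles, goal):
    """Apply one move and return (next_state, reward, done)."""
    # Moves that would leave the grid are clamped, so the agent stays put.
    row = min(max(state[0] + action[0], 0), size - 1)
    col = min(max(state[1] + action[1], 0), size - 1)
    nxt = (row, col)
    if nxt in obstacles:
        return nxt, -10.0, True   # collision ends the episode
    if nxt == goal:
        return nxt, 10.0, True    # target reached
    return nxt, -1.0, False       # per-step cost favours short routes


def train(size, obstacles, goal, start, episodes=2000,
          alpha=0.5, gamma=0.9, epsilon=0.2, seed=0):
    """Learn a Q-table with epsilon-greedy tabular Q-learning."""
    rng = random.Random(seed)
    q = {(r, c): [0.0] * 4 for r in range(size) for c in range(size)}
    for _ in range(episodes):
        state, done, steps = start, False, 0
        while not done and steps < 200:
            if rng.random() < epsilon:
                a = rng.randrange(4)                           # explore
            else:
                a = max(range(4), key=lambda i: q[state][i])   # exploit
            nxt, reward, done = step(state, ACTIONS[a], size, obstacles, goal)
            # Terminal transitions have no bootstrapped future value.
            target = reward if done else reward + gamma * max(q[nxt])
            q[state][a] += alpha * (target - q[state][a])
            state = nxt
            steps += 1
    return q


def greedy_path(q, size, obstacles, goal, start, max_steps=50):
    """Follow the highest-valued action from start until an episode ends."""
    path, state = [start], start
    for _ in range(max_steps):
        a = max(range(4), key=lambda i: q[state][i])
        state, _reward, done = step(state, ACTIONS[a], size, obstacles, goal)
        path.append(state)
        if done:
            break
    return path


# Hypothetical 5x5 environment with three obstacle cells.
SIZE, START, GOAL = 5, (0, 0), (4, 4)
OBSTACLES = {(1, 1), (2, 3), (3, 1)}

q_table = train(SIZE, OBSTACLES, GOAL, START)
path = greedy_path(q_table, SIZE, OBSTACLES, GOAL, START)
print(path)
```

In this toy setting the agent has no map of the obstacles in advance and learns about them only through collision penalties. That is one simple reading of "unknown environment", and the per-step cost is what pushes the learned route toward a short path.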

Copyright information

© 2020 Springer Nature Singapore Pte Ltd.

About this paper

Cite this paper

Liu, J., Wang, Z., Zhang, Z. (2020). The Algorithm for UAV Obstacle Avoidance and Route Planning Based on Reinforcement Learning. In: Wang, R., Chen, Z., Zhang, W., Zhu, Q. (eds) Proceedings of the 11th International Conference on Modelling, Identification and Control (ICMIC2019). Lecture Notes in Electrical Engineering, vol 582. Springer, Singapore. https://doi.org/10.1007/978-981-15-0474-7_70

DOI: https://doi.org/10.1007/978-981-15-0474-7_70

Publisher Name: Springer, Singapore

Print ISBN: 978-981-15-0473-0

Online ISBN: 978-981-15-0474-7

eBook Packages: Intelligent Technologies and Robotics (R0)