Abstract

Nature-inspired optimization algorithms, especially evolutionary computation-based and swarm intelligence-based algorithms are being used to solve a variety of optimization problems. Motivated by the obligation of having optimization algorithms, a novel optimization algorithm based on a lion’s unique social behavior had been presented in our previous work. Territorial defense and territorial takeover were the two most popular lion’s social behaviors. This paper takes the algorithm forward on rigorous and diverse performance tests to demonstrate the versatility of the algorithm. Four different test suites are presented in this paper. The first two test suites are benchmark optimization problems. The first suite had comparison with published results of evolutionary and few renowned optimization algorithms, while the second suite leads to a comparative study with state-of-the-art optimization algorithms. The test suite 3 takes the large-scale optimization problems, whereas test suite 4 considers benchmark engineering problems. The performance statistics demonstrate that the lion algorithm is equivalent to certain optimization algorithms, while outperforming majority of the optimization algorithms. The results also demonstrate the trade-off maintainability of the lion algorithm over the traditional algorithms.

Similar content being viewed by others

Explore related subjects

Discover the latest articles, news and stories from top researchers in related subjects.Avoid common mistakes on your manuscript.

1 Introduction

Nature-inspired computing plays a great role in solving uncertain, partially true, imprecise, highly conflicting and complex problems with the aid of nature’s behavior and its inspiration [1, 2]. Bio-inspired computing can be said as a subset of natural computing that has emerged from the day of solving real-life problems with biological motivation [3, 4]. The nature computing has become popular among all the researchers, when it has broken barriers of classical computing [5], solving classification and prediction problems and more specifically optimization problems. Numerous optimization algorithms or optimal search algorithms have been developed based on natural inspiration since 1970 [6]. Since then, bio-inspired optimization algorithms such as genetic algorithm, particle swarm optimization algorithm, and many more find applications in almost all the emerging fields [5, 7,8,9].

In the family of bio-inspired optimization algorithms, a new optimization algorithm called as lion algorithm is introduced in this paper. Previously, a basic model of the algorithm (was termed as lion’s algorithm) was proposed in [10]. Further, we extended the algorithm (named as lion algorithm) and solved large scale bilinear system identification [11]. Since the development, numerous researchers have adopted our algorithm for various applications [12,13,14]. However, it has not been well-studied for its versatility. Hence, this paper investigates the performance of the lion algorithm on different test suites (downloadable at https://sites.google.com/view/lionalgorithm/test-suite) and engineering optimization problems.

2 Proposed lion algorithm

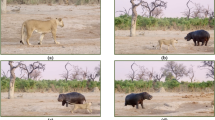

2.1 Biological inspiration

Distinct from other cat species, lions survive with an interesting social system/behavior, termed as pride, to strengthen their own species at every generation. Generally, a pride is comprised of 1–3 lion pairs in which the resident females attend males to give birth to offspring. They share an area called a territory with peaceful interactions. The dominating lion of a territory (often called as territorial lion) rules the territory by fighting against other attacking animals including nomadic lions. The territorial defense continues till the cubs attain sexual maturity, probably for 2–4 years. During these 2–4 years, nomadic lions try to invade the pride. Frequent survival fights take place between the nomadic lions and the pride (for which territorial lion is responsible) for the territorial defense. A coalition among the lions, which are in pride, helps to defeat the nomadic lion. However, if the territorial lion is defeated, it will be killed or driven out by the pride of the nomadic lion. The nomadic lion becomes the territorial lion by taking charge of the pride. It kills the cubs of a lost lion and drives the lioness to estrus. Copulation begins between the lioness and the new territorial lion to give birth to offspring of a new lion. The behavior continues until cubs of a territory get matured.

Once the cubs become adult and if proved as they are stronger than the territorial lion, territorial takeover happens. Like territorial defense, the territorial laggard lion will be either killed or driven out from the pride of completely grown cubs. The new stronger pride lion drives/kills the cubs or weak lions of the pride and copulate with pride lioness to give birth to their own cubs [15].

2.2 Algorithm structure

The basic model of the lion algorithm was introduced as a searching algorithm in the year of 2012 [10]. Subsequently, it has been restructured with upgrades and presented in [11]. It comprises of six processing stages namely, (1) Pride generation, (2) Fertility evaluation, (3) Mating, (4) Territorial defense, (5) Territorial takeover and (6) Termination.

Pride generation is the initiating process of lion algorithm, which is similar to the initialization steps of most of the evolution and swarm-based optimization algorithms. Mating is the most responsible process for deriving new lions (called as cubs) from the parent lions after subjecting to fertility evaluation, which is the second process. Territorial defense and territorial takeover are identified as the unique processes over other optimization algorithms, as they are the explicit inspiration of lion’s social behavior. These two steps play the primary roles to guide the algorithm in determining the optimal solutions from a huge search space. The termination process of lion algorithm is problem dependent and hence that could be either based on a number of iterations/generations or based on the optimality of the obtained solutions. More details about individual processing stages are described in [11], while their concise description is given further. The mathematical background on the paper is presented in Appendix.

3 Processes and operators of lion algorithm

In this section, the search processes and operators are explained in detail with the reference of Fig. 1. Despite two variants of lion’s algorithm are given in [10]. This paper presents only real-coded lion algorithm. However, binary-coded lion algorithm is presented here as a special case of real-coded lion algorithm when the dimension of the solution variable is one.

3.1 Problem formulation

Let us consider the objective function given in Eq. (1)

In Eq. (1), \(f( \bullet )\) is a continuous unimodal or multimodal function for which the solution space is of size \({\Re ^n}\) where, \(\Re\) represents real numbers, \({x_i}:i=1,2, \ldots ,n\) is the \({i^{th}}\) solution variable and \(n\) is the dimension of the solution vector, \(x_{i}^{{\text{min} }}\) and \(x_{i}^{{\text{max} }}\) are the minimum and maximum limits of ith solution variable, respectively. \({X^{optimal}}\) is the optimal/target solution to be obtained from the given optimization algorithm and it can be represented as in Eq. (3). The size of the solution space of \(f( \bullet )\) can be determined as follows

where, \(X\) is the solution vector with the representation \(X=\left[ {{x_1},{x_2} \ldots {x_n}} \right]\). Equation (1) presents the objective function to be solved as a minimization function. In some cases, this could be a maximization function and hence appropriate selection process has to be used in the optimization algorithm.

3.2 Pride generation

According to the pride definition [10] and Eq. (1), pride is initialized with a territorial lion \({X^{male}}\), its lioness \({X^{female}}\) and a nomadic lion \({X^{normal}}\). The nomadic lion is not a member of pride, despite its generation is discussed in pride generation process. The lions representation is as similar to the solution vector representation. The vector elements of \({X^{male}}\), \({X^{female}}\) and \({X^{nomad}}\), i.e., \(x_{l}^{{male}}\), \(x_{l}^{{female}}\) and \(x_{l}^{{nomad}}\) are arbitrary integers within the minimum and maximum limits when \(n>1\) (search with real encoding), where, \(l=1,2, \ldots ,L\). Here, \(L\) represents the length of the lion that can be determined as follows

where, \(m\) and \(n\) are integers to decide the length of lions. When \(n=1\), the algorithm has to search with binary encoded lion and so the vector elements are either be generated as 1 or 0, provided the constraints are given in Eqs. (5) and (6) have to be satisfied.

where,

Equations (5) and (7) ensure that the generated binary lion is within the solution space and Eq. (6) ensures that the numbers of binary bits before and after the decimal point are equal. As we experiment only with real-coded lion algorithm, we do not further discussed about the binary-encoded lion and henceforth all the discussions explicitly represent only real-coded lion algorithm.

The generated \({X^{nomad}}\) fills one of the two nomadic lion positions as we assume that there are two nomadic lions try to invade territory. The other nomadic lion will be initialized only at the time of territorial defense. Hence, for the time being the position remains null and \({X^{nomad}}\) will be represented as \(X_{1}^{{nomad}}\).

3.3 Fertility evaluation

In the sequential process of lion algorithm, every territorial lion and lioness begin to age or sometimes infertile. This makes the lion as laggard either at survival fights or territorial takeover. If \({X^{male}}\) and \({X^{female}}\) become saturated by their fitness, then either they would have reached global optima or local optima from which they could not take us to better solutions. Fertility evaluation can help to skip from local optimal solutions. In this process, the \({X^{male}}\) is found as becoming laggard and its laggardness rate \({L_r}\) is increased by one, if \(f\left( {{X^{male}}} \right)\) is greater than \({f^{ref}}\), which is reference fitness. When \({L_r}\) exceeds its maximum limit \(L_{r}^{{\text{max} }}\) then territorial defense takes place. The fertility of \({X^{female}}\) is ensured by sterility rate \({S_r}\), which is increased by one after crossover. If \({S_r}\) exceeds the tolerance \(S_{r}^{{\text{max} }}\), then \({X^{female}}\) undergoes update as given in Eq. (8). When the updated female \({X^{female+}}\) is taken as \({X^{female}}\) due to its improvement, the mating process can be performed. On contrary, the updating continues till the female generation count \({g_c}\) reaches \(g_{c}^{{\text{max} }}\). If there is no \({X^{female+}}\) to replace \({X^{female}}\) throughout the updating process, it can be decided that the \({X^{female}}\) is still fertile enough to produce better cubs [11].

where, \(x_{l}^{{female+}}\) and \(x_{{^{k}}}^{{female+}}\) are the \({l^{th}}\) and \({k^{th}}\) vector elements of \({X^{female+}}\), respectively, \(k\) is a random integer generated within the interval \([1,L]\), \(\nabla\) is the female update function, \({r_1}\) and \({r_2}\) are random integers generated within the interval \([0,1]\).

3.4 Mating

In lion algorithm, mating involves two primary steps and one supplementary step. Crossover and mutation are considered as the primary steps and gender clustering is called here as the supplementary step. In the literature, numerous works have been discussed about the crossover and mutation operations and their need for evolutionary algorithms [16,17,18,19]. These operators highly motivate us and hence we embed them in our algorithm. By performing crossover and mutation, \({X^{male}}\) and \({X^{female}}\) give birth to cubs, which are solutions derived from both the elements of \({X^{male}}\) and \({X^{female}}\). We follow the maximum natural littering rate, i.e., four cubs (mostly) in a lioness pregnancy [20] and so our crossover process gives four cubs. The proposed crossover operation for generating one cub is portrayed in Fig. 2, while the definition is given in Definition 1 of Appendix. The crossover mask \(B\) is varied to generate each cub, i.e. \({p^{th}}\) mask \({B}_{p}\) is used to obtain \({X^{cubs}}(p)\). These four cubs are further subjected to mutation to produce new four cubs. Henceforth, we use the terms ‘\({X^{cubs}}\)’ to represent cubs that are obtained from crossover and ‘\({X^{new}}\)’ to represent cubs that are obtained from mutation. These eight cubs occupy cub pool and are subjected to gender clustering to finalize \({X^{m\_cub}}\) and \({X^{f\_cub}}\). The resultant \({X^{m\_cub}}\) and \({X^{f\_cub}}\) follows cub growth function to get self-update [11].

3.5 Lion operators

Territorial defense [10, 11] not only facilitates wide searching of solution space, but also guides to algorithm to evade from local optimal point as well as identifying diverse solutions with similar fitness. The territorial defense can be sequenced here as generating nomad coalition [21, 22], survival fight [23] and then pride and nomad coalition updates. The nomad coalition process is simplified by applying winner take-all approach [24] to identify \({X^{e\_nomad}}\). Subsequently, \({X^{e\_nomad}}\) is selected if the criteria given in Eq. (11)–(13) are met.

Pride is updated only when \({X^{male}}\) is defeated, whereas nomad coalition is updated only when \({X^{e\_nomad}}\) is defeated. The pride updating is a process of replacing \({X^{male}}\) by \({X^{e\_nomad}}\), whereas updating a nomad coalition means selection of only one \({X^{nomad}}\), which has \({E^{nomad}}\) greater than or equal to the exponential of unity (please refer theorem 2 in Appendix) and the other position will be filled only at the time of next territorial defense [11].

Territorial takeover [10] drives the algorithm to update \({X^{male}}\) and \({X^{female}}\) if \({X^{m\_cub}}\) and \({X^{f\_cub}}\) are matured, i.e. when the age of cubs exceeds the maximum age for cub maturity \({A_{\text{max} }}\) [11].

3.6 Termination

The algorithm execution is terminated when atleast one of the following two termination criteria is met

where, \({N_g}\) is the number of generations, which is initialized as zero and incremented by one when a territorial takeover takes place, \(N_{g}^{{\text{max} }}\) and \({e_T}\) are the maximum number of generations and error threshold, respectively and \(\left| \bullet \right|\) is the absolute difference. Please note that second criterion, given in Eq. (15) can be considered only when the target minimum \(f\left( {{X^{optimal}}} \right)\) (or maximum) is known and \(f\left( {{X^{optimal}}} \right)\) does not mean that \({X^{optimal}}\) is known.

4 Experimental setup

4.1 Implementation

The steps to be followed to simulate lion algorithm are as follows

Step 1: Initialize \({X^{male}}\), \({X^{female}}\) and \(X_{{^{1}}}^{{nomad}}\)

Step 2: Calculate \(f\left( {{X^{male}}} \right)\), \(f\left( {{X^{female}}} \right)\) and \(f\left( {X_{{^{1}}}^{{nomad}}} \right)\)

Step 3: Set \({f^{ref}}=f\left( {{X^{male}}} \right)\) and \({N_g}=0\)

Step 4: Store \({X^{male}}\) and \(f\left( {{X^{male}}} \right)\)

Step 5: Perform fertility evaluation

Step 6: Perform mating and obtain cubpool

Step 7: Perform gender clustering and obtain \({X^{m\_cub}}\) and \({X^{f\_cub}}\)

Step 8: Initialize \({A_{cub}}\) as zero

Step 9: Execute cub growth function

Step 10: Perform territorial defense; if defense result 0, go to step 4

Step 11: If \({A_{cub}}<{A_{\text{max} }}\), go to step 9

Step 12: Perform territorial takeover and obtain updated \({X^{male}}\) and \({X^{female}}\)

Step 13: Increase \({N_g}\) by one

Step 14: If the termination criteria are not met, go to step 4, otherwise terminate the process.

5 Results and discussions

5.1 Test suite 1

The test suite 1 has 23 benchmark functions of unimodal and multimodal in nature. These benchmark functions have been widely used by various researchers to assess the performance of optimization algorithms [25,26,27]. The performance of the lion algorithm on test suite 1 is compared in two phases. In phase 1, traditional evolutionary computation methods are used, whereas phase 2 considers renowned optimization algorithms.

5.1.1 Phase 1 of test suite 1

The traditional evolutionary computation methods include classical evolutionary programming (CEP) [28, 29], fast evolutionary programming (FEP) [27], canonical evolutionary strategies (CES) [30], fast evolutionary strategies (FES) [31], Covariance Matrix Adaptation-Evolution Strategy (CMA-ES) [32, 33], evolutionary strategies learned with automatic termination criteria (ESLAT) [34]. To ensure fair comparison, the published results of CEP and FEP are collected from [27], CES and FES from [31], CMA-ES and ESLAT from [34]. As unimodal functions and multimodal functions with many local minima are having higher dimension than multimodal functions with few local minima, at least 1000 executions are required to ensure the reliability of the obtained results [25]. Hence, 1000 executions of lion algorithm are made and the statistical measures are determined and tabulated in Tables 1 and 2. Table 3 depicts the statistical results for 50 runs on multimodal benchmark functions with few local minima.

From Table 1, it can be seen that the lion algorithm outperforms four algorithms when solving schwefel 2.21 and step functions. When solving schwefel 2.22, it dominates over three algorithms (FEP, CES and FES) while it dominates over two algorithms (CEP and CES) when solving schwefel 1.2 function. Despite the lion algorithm is incompetent to solve sphere and rosenbrock functions, it is the most competent algorithm to solve quartic function.

Table 2 shows that among all the other multimodal functions with many local minima, the penalized function is the most challenging function for lion algorithm as it gives the least performance over other algorithms. However, from the same Table, lion algorithm is found to be the best of the six other evolutionary computation algorithms to solve rastrigin, Ackley and penalized 2 functions. It dominates over five algorithms (CEP, FEP, CES, FES and CMA-ES) while solving schwefel function and two algorithms (CEP and CES), while solving griewangk function.

From Table 3, it can be seen that all the seven algorithms found global minima of sixhump, branin and Hartman 3 functions and hence they grab the rank 1. However, lion algorithm provides zero standard deviation for the first two functions and second lesser standard deviation for Hartman 3 function. Similarly, lion algorithm dominates over other algorithms by zero standard deviation when solving goldstein-price function, despite CEP, CES, FES and ESLAT share rank 1 position. Lion algorithm is found as the best algorithm to solve foxholes function when compared to other algorithms. Even though lion algorithm is not dominating for the remaining multimodal functions with few local minima, it outperforms two algorithms while solving Hartman 6 and shekel 7 functions, three algorithms while solving shekel 10 function and four algorithms while solving kowalik function.

Based on final ranks of lion algorithm, it can be known that lion algorithm is far better than two, five and four evolutionary computation algorithms when solving unimodal, multimodal with many local minima and multimodal with few local minima functions, respectively.

5.1.2 Phase 2 of test suite 1

In this phase, four popular optimization algorithms such as genetic algorithm (GA) [35], particle swarm optimization (PSO) [36], artificial bee colony algorithm (ABC) [37] and group search optimizer (GSO) [25] are compared with lion algorithm. Like comparison with evolutionary computation algorithms, we have adopted the published results of GA, PSO and GSO from [25] and ABC from [26]. The comparison results for unimodal functions, multimodal functions with many local minima and multimodal functions with few local minima are tabulated in Tables 4, 5 and 6.

Table 4 shows that the lion algorithm is better than GA while solving sphere, schwefel 2.22 and rosenbrock functions. It outperforms GA and GSO while solving schwefel 1.2 function, while it is better than GA, PSO and GSO for solving step functions. The results of schwefel 2.21 and quartic functions showed that lion algorithm is the best among all the five algorithms.

From Table 5, it can be said as lion algorithm dominates PSO and GA while solving schwefel and Ackley functions respectively, whereas it is better than both PSO and GA for solving griewank function. In spite of lion algorithm’s failure to perform in solving penalized function, it is proved to be the best algorithm for rastrigin and penalized 2 functions (source: Table 5).

From Table 6, the performance of lion algorithm over other algorithms for solving multimodal functions with few local minima can be seen. Lion algorithm outperforms PSO when solving foxholes and hartman6 functions. While solving these functions, both GA and LA shares third position, however performance deviation (standard deviation) is lesser than GA. For shekel 5, shekel 7 and shekel 10 functions, lion algorithm is found to be better than GA and GSO, while it dominates GA and ABC for solving kowalik functions. Lion algorithm finds the global solution of sixhump and gold-stein price function at every run (because there is zero standard deviation) and remains in the top position among all the algorithms. It again remains in the top position while solving Hartman 3 function with minimum standard deviation. For branin function, though lion algorithm found global minima, it exhibits a second minimum standard deviation function when compared to other algorithms. Despite the lion algorithm is not securing first ranks, it finds equivalent to GSO and ESLAT as per no free lunch theorem [38], and outperforming over other algorithms.

5.2 Test suite 2

The second test suite of this paper consists of 42 benchmark functions. The performance of lion algorithm on this test suite 2 is compared with the swarm algorithms such as Artificial Bee colony (ABC) [37], Bacterial Foraging Optimization Algorithm (BFO) [39], Cuckoo Search (CS) [40], Firefly (FF) [41], Group Search Optimization (GSO) [25], Moth-flame Optimization (MFO) [42], particle swarm optimization [36], Dragon Fly algorithm [43], Grey Wolf Optimization(GWO) [44], Whale Optimization Algorithm (WOA) [45] and Crow Search Algorithm (CrS) [46]. The results are presented in Tables 7 and 8. Subsequently, the lion algorithm is compared with three renowned evolutionary algorithms such as biogeography-based optimization (BBO) [47], differential evolution (DE) [48] and genetic algorithm (GA) [35] as well as three human/physics inspired algorithms such as gravitational search algorithm (GSA) [49], harmony search algorithm (HSA) [50] and simulated annealing (SA) [51] and presented in Tables 9 and 10.

Each algorithm attains diverse ranks on finding the optimal solution for the 42 benchmark functions. When compared with the swarm intelligence, the mean performance of the lion algorithm positions it in the second position (as per Table 7), but the performance meets low deviation (as per Table 7). This shows that the lion algorithm dominates all the swarm intelligence, except GSO. However, the lion algorithm finds stable searching of solution at any instant. According to Tables 8, 9 and 10, the lion algorithm manages to secure first rank over the evolutionary algorithms and human/physics inspired algorithms.

5.3 Test suite 3

Here, the lion algorithm is tested on 17 benchmark functions, which have been widely used by various researchers to assess the performance of optimization algorithms [25,26,27]. However, they are not large scale. This test suite considers these functions in large scale, i.e., the dimension of the solution as 1000, because of its high significance [25]. Similar to test suite 2, the comparative study is conducted with swarm algorithms and evolutionary, human/physics inspired algorithms separately.

According to Tables 11, 12, 13 and 14, the lion algorithm finds first position in handling large scale optimization problems. The multiple updating stages in the lion algorithm, updating based on self-improvement, exploration and exploitation leads the solution update in lion algorithm in all possible dimensions. Moreover, the provision to find the diverse solution with similar fitness function makes it highly suitable for multimodal functions, which are in multiple in large scale problems.

5.4 Test suite 4

Test suite 4 has five widely exploited engineering problems such as welded beam design, pressure vessel design, gear train design, tension/compression spring design and three-bar truss design. The problem models are referred from [46]. Similar to test suite 2 and 3, the comparative study is conducted against swarm algorithms, evolutionary algorithms and physics/human inspired algorithms. Each algorithm is subjected to 20 test runs and the resultant statistics are presented in Tables 15 and 16.

Tables 15 and 16 reveals interesting outcomes about the performance of the lion algorithm over the conventional algorithms. The lion algorithm outperforms swarm algorithms on solving welded beam, pressure vessel design and gear train design, while it secures second position on solving compression/tension spring design and three-bar truss design. On contrary, the lion algorithm outperforms evolutionary algorithms and human/physics inspired algorithms on solving three-bar truss design, gear train design and welded beam design. The deviation from mean performance (standard deviation) gets fluctuated in the lion algorithm. However, the lion algorithm maintains maximum trade-off between the mean performance and standard deviation to outperform both the group of algorithms and hence remain in the first position of all the algorithms. The obtained best solution by the lion algorithm is given in Table 17.

6 Conclusion and future work

In the family of nature-inspired algorithms, a new optimization algorithm called as lion algorithm had been proposed from the inspiration of lion’s unique social behavior, which keeps the animal stronger than other mammals. A detailed study on the performance of the lion algorithm using four diverse test suites has been conducted in this paper. First two test suites are benchmark optimization problems with unimodal and multimodal characteristics. The results from first test suite are compared against the published results of evolutionary computation methods such as CES, FES, CEP, FEP, CMA-ES, ESLAT, and other popular optimization algorithms such as GA, PSO, ABC and GSO. In test suite 2, a wide comparison has been performed with state-of-the-art optimization algorithms. Test suite 3 has been presented as large-scale optimization problems and test suite 4 has engineering optimization problems. The comparative analysis has disclosed interesting outcomes about the lion algorithm. It was found to be competing over majority of the evolutionary computation methods when solving multimodal functions. In addition, it has better-averaged results than GA and PSO. For unimodal functions, lion algorithm has outperformed over two other evolutionary computation methods, whereas equal to GSO and better than GA. Trade-off has also been maintained between the mean performance and standard deviation and so the stability of the achieved performance has been guaranteed. The algorithm was also found to be competing over swarm algorithms on solving welded beam design problem, pressure vessel design problem and gear train design problem. While solving three bar truss design and tension/compression spring design problem, the lion algorithm outperforms evolutionary and human/physics inspired algorithms. Despite the lion algorithm underperforms certain algorithms on solving these test suites, the final rank discloses remarkable performance of the lion algorithm.

As the obtained results are encouraging, we have planned to apply lion algorithm on various engineering problems and applications to study its performance. Perhaps, lion algorithm requires certain amendments according to the problems. However, the presented algorithm structure can play as a cornerstone for all the future enhancements.

References

Rozenberg G, Bck T, Kok JN (2011) Handbook of natural computing, 1st edn. Springer Publishing Company, New York

Yang XS, Deb S, Fong S, He X, Zhao YX (2016) From swarm intelligence to metaheuristics: nature-inspired optimization algorithms. Computer 49(9):52–59

Shadbolt N (2004) Nature-inspired computing. IEEE J Intell Syst 19(1):2–3

Neumann F, Witt C (2010) Bioinspired computation in combinatorial optimization algorithms and their computational complexity. Natural computing series, XII, p 216

Corne D, Deb K, Knowles J, Yao X (2010) Selected applications of natural computing. In: Rozenberg G, Back T, Kok JN (eds) Handbook of natural computing. Springer, Berlin

Holland JH (1975) Adaptation in natural and artificial systems. University of Michigan Press, Ann Arbor

Bongard J (2009) Biologically inspired computing. IEEE Comput J 42(4):95–98

Forbes N (2000) Biologically inspired computing. Comput Sci Eng 2(6):83–87

Mahdiani HR, Ahmadi A, Fakhraie SM, Lucas C (2010) Bio-inspired imprecise computational blocks for efficient VLSI implementation of soft-computing applications. IEEE Trans Circuits Syst I Regul Pap 57(4):1549–8328

Rajakumar BR (2012) The lion’s algorithm: a new nature-inspired search algorithm. In: Second international conference on communication, computing and security, vol 6, pp 126–135. https://doi.org/10.1016/j.protcy.2012.10.016

Rajakumar BR (2014) Lion algorithm for standard and large scale bilinear system identification: a global optimization based on lion’s social behavior. In: 2014 IEEE congress on evolutionary computation (CEC), pp 2116–2123

Chander S, Vijaya P, Dhyani P (2016) ADOFL: multi-kernel-based adaptive directive operative fractional lion optimisation algorithm for data clustering. J Intell Syst 27:317

Babers R, Hassanien AE, Ghali NI (2015) A nature-inspired metaheuristic lion optimization algorithm for community detection. In: 2015 11th IEEE international computer engineering conference (ICENCO)

Chander S, Vijaya P, Dhyani P (2017) Multi kernel and dynamic fractional lion optimization algorithm for data clustering. Alex Eng J 57:267

Bauer H, Iongh de HH, Silvestre I (2003) Lion social behaviour in the West and Central African Savanna belt. Mamm Biol 68(1):239–243

Fogel LJ, Owens AJ, Walsh MJ (1966) Artificial intelligence through simulated evolution. Wiley Publishing, New York

Doerr B, Happ E, Klein C (2012) Crossover can probably be useful in evolutionary computation. Theor Comput Sci 425:17–33

Back T, Hoffmeister F, Schwefel HP (1993) An overview of evolutionary algorithms for parameter optimization. J Evol Comput 1(1):1–24 (De Jong K (ed), Cambridge: MIT Press)

Jong De KA (1975) An analysis of the behavior of a class of genetic adaptive systems. Doctoral thesis, Dept. Computer and Communication Sciences, University of Michigan, Ann Arbor

Packer C, Pusey AE (2016) Divided we fall: cooperation among lions. Sci Am 276:52–59

Packer C, Pusey AE (1982) Cooperation and competition within coalitions of male lions: Kin selection or game theory? Nature 296(5859):740–742

Grinnell J, Packer C, Pusey AE (1995) Cooperation in male lions: Kinship, reciprocity or mutualism? Anim Behav 49(1):95–105

Packer C, Pusey AE (1982) Cooperation and competition within coalition of male lions: Kin selection or game theory. Macmillan J 296(5859):740–742

Lotfi E, Akbarzadeh-T MR (2016) A winner-take-all approach to emotional neural networks with universal approximation property. Inform Sci 346:369–388

He S, Wu QH, Saunders JR (2009) Group search optimizer: an optimization algorithm inspired by animal searching behavior. IEEE Trans Evol Comput 13(5):973–990

Karaboga D, Akay B (2009) A comparative study of artificial bee colony algorithm. Appl Math Comput 214(1):108–132

Yao X, Liu Y, Lin G (1999) Evolutionary programming made faster. IEEE Trans Evol Comput 3(2):82–102

Fogel DB (1995) Evolutionary computation: toward a new philosophy of machine intelligence. IEEE Press, New York

Fogel LJ, Owens AJ, Walsh MJ (1965) Artificial intelligence through a simulation of evolution. In: Proc. 2nd cybern. sci. symp. biophysics cybern. syst., Washington: Spartan Books, pp 131–155

Schwefel HP (1995) Evolution and optimum seeking. Wiley, New York

Yao X, Liu Y (1997) Fast evolution strategies. Control Cybern 26(3):467–496

Hansen N, Ostermeier A (1996) Adapting arbitrary normal mutation distributions in evolution strategies: the covariance matrix adaptation. In: IEEE int. conf. evolution. comput. (ICEC) proc, pp 312–317

Hansen N (2006) The CMA evolution strategy: a comparing review. In: Lozano JA, Larraaga P, Inza I, Bengoetxea E (eds) Towards a new evolutionary computation. Springer, Berlin

Hedar A, Fukushima M (2006) Evolution strategies learned with automatic termination criteria. In: Proceedings of SCIS-ISIS 2006, Tokyo, Japan

Goldberg DE (1989) Genetic algorithms in search optimization and machine learning. Addison Wesley, Reading, p 41

Kennedy J, Eberhart R (1995) Particle swarm optimization. In: Proceedings of IEEE international conference on neural networks, IV, pp 1942–1948. https://doi.org/10.1109/ICNN.1995.488968

Karaboga D (2005) An idea based on honey bee swarm for numerical optimization. Technical Report-TR06, Erciyes University, Engineering Faculty, Computer Engineering Department

Wolpert DH, Macready WG (1997) No free lunch theorems for optimization. IEEE Trans Evol Comput 1(1):67–82

Cheng YP, Li Y, Wang G, Zheng Y-F, Cui XT (2017) A novel bacterial foraging optimization algorithm for feature selection. Expert Syst Appl 83:1–17

Yang X-S, Deb S (2009) Cuckoo search via Lévy flights. In: World congress on nature and biologically inspired computing (NaBIC 2009), IEEE Publications, pp 210–214

Yang X-S (2010) Nature inspired metaheuristic algorithms, 2nd edn. Luniver Press, London

Mirjalili S (2015) Moth-flame optimization algorithm: a novel nature-inspired heuristic paradigm. Knowl Based Syst 89:228–249

Mirjalili S (2016) Dragonfly algorithm: a new meta-heuristic optimization technique for solving single-objective, discrete, and multi-objective problems. J Neural Comput Appl 27(4):1053–1073

Mirjalili SM, Mirjalili A, Lewis (2014) Grey wolf optimizer. Adv Eng Softw 69:46–61

Mirjalili S, Lewis A (2016) The whale optimization algorithm. Adv Eng Softw 95:51–67

Askarzadeh A (2016) A novel metaheuristic method for solving constrained engineering optimization problems: crow search algorithm. Comput Struct 169:1–12

Li LL, Yang YF, Wang C-H, Lin K-P (2018) Biogeography-based optimization based on population competition strategy for solving the substation location problem. Expert Syst Appl 97:290–302

Price VK, Storn MR (1997) Differential evolution: a simple evolution strategy for fast optimization. Dr Dobb’s J 22:18–24

Rashedi E, Nezamabadi-Pour H, Saryazdi S (2009) GSA: a gravitational search algorithm. Inf Sci 179(13):2232–2248

Geem ZW, Kim JH, Loganathan GV (2001) A new heuristic optimization algorithm: harmony search. Simulation 76:60–68

Ingber L (1993) Simulated annealing: practice versus theory. Math Comput Model 18(11):29–57

Funding

None.

Author information

Authors and Affiliations

Corresponding author

Ethics declarations

Conflict of interest

The authors declare that they have no conflict of interest.

Ethical approval

This article does not contain any studies with human participants or animals performed by any of the authors.

Additional information

Publisher’s Note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Appendix

Appendix

Definition 1

An \({X^{cub}}\) of an \({X^{male}}\) and an \({X^{female}}\) is the sum of Hadamard product of crossover mask and \({X^{male}}\) and Hadamard product of complement of the same crossover mask and \({X^{female}}\), provided the crossover mask is essentially to be a binary vector with \({C_r} \cdot L\) number of binary ones.

Assumption 1

Within a pride, male lions are always stronger than female lions, applicable to cubs also.

Lemma 1

If the product of \({C_r}\) and \(L\) is equal to zero, then \({X^{cubs}}\) are equal to \({X^{female}}\).

Proof of Lemma 1

According to Definition 1, \(B\), which is a vector with \(L\) elements, has \({C_r} \cdot L\)number of ones and\(L\left( {1 - {C_r}} \right)\) number of zeros. If \({C_r} \cdot L=0\), then \(B\) has no ones and \(L\) number of zeros, which means \(B\) is a vector of zeros. By applying this \(B\) in the mathematical representation of crossover operation given in [11], the first term of RHS becomes \(B\) and the second term of RHS becomes \({X^{female}}\) as \(\bar {B}=1 - B\). Hence, \({X^{cubs}}\) becomes \({X^{female}}\) when \({C_r} \cdot L=0\).

Lemma 2

If the product of \({C_r}\) and \(L\) is equal to one, then \({X^{cubs}}\) are equal to \({X^{male}}\).

Proof of Lemma 2

Similar to the proof of Lemma 1, \(B\) is a vector of ones, when \({C_r} \cdot L=L\) and \(\bar {B}\) becomes a vector of zeros. This in turn, makes the first RHS term of Eq. (11) as \({X^{male}}\) and the second RHS term as a vector of zeros. Hence, \({X^{cubs}}\) become \({X^{male}}\) when \({C_r} \cdot L=L\).

Lemma 3

\(E_{1}^{{nomad}}\) is greater than \(E_{2}^{{nomad}}\), if \({d_1}\) and \(f\left( {X_{1}^{{nomad}}} \right)\) are greater than \({d_2}\) and \(f\left( {X_{2}^{{nomad}}} \right)\), respectively.

Proof of Lemma 3

According to the lemma,

Hence, Eqs. (24) and (25) (from Theorem 2) takes the form,

\(E_{2}^{{nomad}}\) can be greater than \(\exp \left( 1 \right)\)only if \(f\left( {X_{1}^{{nomad}}} \right)>>f\left( {X_{2}^{{nomad}}} \right)\) as the first term produces exponential decay from \(\exp \left( 1 \right)\), when \({d_1}>{d_2}\). But, if \(f\left( {X_{1}^{{nomad}}} \right)>>f\left( {X_{2}^{{nomad}}} \right)\), then probably \({d_1}>>{d_2}\)due to its linear relationship with \(f\left( {X_{1}^{{nomad}}} \right)\), which leads \(\exp \left( \bullet \right)\) towards zero, and hence \(E_{2}^{{nomad}}\) becomes lesser than \(\exp \left( 1 \right)\). Thus it is proved that \(E_{1}^{{nomad}}>E_{2}^{{nomad}}\), when \({d_1}>{d_2}\) and\(f\left( {X_{1}^{{nomad}}} \right)>f\left( {X_{2}^{{nomad}}} \right)\).

Lemma 4

\(E_{1}^{{nomad}}\) is greater than \(E_{2}^{{nomad}}\), if \({d_1}\) and \(f\left( {X_{2}^{{nomad}}} \right)\) are greater than \({d_2}\) and \(f\left( {X_{1}^{{nomad}}} \right)\), respectively.

Proof of Lemma 4

According to the lemma,

As \(\frac{{f\left( {X_{2}^{{nomad}}} \right)}}{{f\left( {X_{1}^{{nomad}}} \right)}}>1\) from Eq. (21)

Hence, it is proved that \(E_{1}^{{nomad}}>E_{2}^{{nomad}}\), when \({d_1}>{d_2}\) and \(f\left( {X_{2}^{{nomad}}} \right)>f\left( {X_{1}^{{nomad}}} \right)\).

Lemma 5

\(E_{2}^{{nomad}}\) is greater than \(E_{1}^{{nomad}}\), if \({d_2}\) and \(f\left( {X_{1}^{{nomad}}} \right)\) are greater than \({d_1}\) and \(f\left( {X_{2}^{{nomad}}} \right)\), respectively.

Lemma 6

\(E_{2}^{{nomad}}\) is greater than \(E_{1}^{{nomad}}\), if \({d_2}\) and \(f\left( {X_{2}^{{nomad}}} \right)\) are greater than \({d_1}\) and \(f\left( {X_{1}^{{nomad}}} \right)\), respectively.

Proof of Lemma 5 and 6

Lemma 5 is the vice versa of lemma 3 as \(E_{2}^{{nomad}}>\exp \left( 1 \right)\) and \(E_{1}^{{nomad}}<\exp \left( 1 \right)\). Lemma 6 is the vice versa of lemma 4 as \(E_{2}^{{nomad}}=\exp \left( 1 \right)\) and probably \(E_{1}^{{nomad}}<\exp \left( 1 \right)\) proved through Axiom 3.

Axiom 1

\({C_r} \cdot L=L\) is an integer possibly between \(1\) and \(L - 1\), i.e.,\({C_r} \cdot L \in \left( {1,L - 1} \right)\).

Axiom 2

\(X_{{^{1}}}^{{nomad}}\) and \(X_{2}^{{nomad}}\) are essentially different and hence \({d_1}\) is not always equal to \({d_2}\).

Axiom 3

\(f\left( {X_{1}^{{nomad}}} \right)\) and \(f\left( {X_{2}^{{nomad}}} \right)\) exhibit linear variation with respect to \({d_1}\) and \({d_2}\), respectively.

Theorem 1

\({X^{cubs}}\) can be a subset of both \({X^{male}}\) and \({X^{female}}\) only if \({C_r}\) is selected in such a way that \({C_r} \cdot L=L\).

Proof of Theorem 1

It is known that \(B\) has \({C_r} \cdot L\) number of ones in arbitrary vector positions and zeros in the remaining positions. Hence, \({X^{male}} \circ B\) have elements of \({X^{male}}\) and zeros from the positions where \(B\) has ones and zeros, respectively. In contrast, \({X^{female}} \circ \bar {B}\) has elements of \({X^{female}}\) and zeros from the positions where \(B\) has zeros and ones, respectively, as \(\bar {B}\) is the one’s complement of \(B\). Hence, it can be said that \({X^{male}} \circ B \subset {X^{male}}\) and \({X^{female}} \circ \bar {B} \subset {X^{female}}\). As ‘+’ operator in the mathematical representation of crossover operation given in [11] is equivalent to set union operation, the resultant \({X^{cubs}}\) are subset of both and only \({X^{male}}\) and \({X^{female}}\), i.e., \({X^{cubs}} \subset {X^{male}},{X^{female}}\).

Theorem 2

In a nomad coalition of only two lions, the evaluation score \(E_{1}^{{nomad}}\) will be always greater than \(E_{2}^{{nomad}}\) when \(E_{1}^{{nomad}}\) is greater than or equal to exponential function of unity and vice versa.

Proof of Theorem 2

Let \(E_{1}^{{nomad}}\) and \(E_{2}^{{nomad}}\) be the evaluation scores of \(X_{{^{1}}}^{{nomad}}\) and \(X_{2}^{{nomad}}\), respectively. The evaluation scores can be calculated as

where \({d_1}\) is the Euclidean distance between \(X_{{^{1}}}^{{nomad}}\) and \({X^{male}}\), \({d_2}\) is the Euclidean distance between \(X_{2}^{{nomad}}\) and \({X^{male}}\).

From lemmas 3 and 4, it can be said that if \(E_{1}^{{nomad}}=\exp \left( 1 \right)\) (according to lemma 3) and \(E_{1}^{{nomad}}>\exp \left( 1 \right)\) (according to lemma 4), then \(E_{2}^{{nomad}}<\exp \left( 1 \right)\). Similarly, lemmas 5 and 6 asserts \(E_{2}^{{nomad}}>E_{1}^{{nomad}}\), if \(E_{2}^{{nomad}} \geq \exp \left( 1 \right)\). Hence, the theorem states that it is not necessary to calculate both \(E_{1}^{{nomad}}\) and \(E_{2}^{{nomad}}\) to evaluate \(X_{{^{1}}}^{{nomad}}\) and \(X_{2}^{{nomad}}\), respectively. It is sufficient to calculate either of them and can be concluded by comparing it with \(\exp \left( 1 \right)\).

Rights and permissions

About this article

Cite this article

Boothalingam, R. Optimization using lion algorithm: a biological inspiration from lion’s social behavior. Evol. Intel. 11, 31–52 (2018). https://doi.org/10.1007/s12065-018-0168-y

Received:

Revised:

Accepted:

Published:

Issue Date:

DOI: https://doi.org/10.1007/s12065-018-0168-y