Abstract

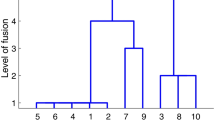

The cluster analysis problem of partitioning a set of objects from dissimilarity data is here handled with the statistical model-based approach of fitting the “closest” classification matrix to the observed dissimilarities. A classification matrix represents a clustering structure expressed in terms of dissimilarities. In cluster analysis there is a lack of methodologies widely used to directly partition a set of objects from dissimilarity data. In real applications, a hierarchical clustering algorithm is applied on dissimilarities and subsequently a partition is chosen by visual inspection of the dendrogram. Alternatively, a “tandem analysis” is used by first applying a Multidimensional Scaling (MDS) algorithm and then by using a partitioning algorithm such as k-means applied on the dimensions specified by the MDS. However, neither the hierarchical clustering algorithms nor the tandem analysis is specifically defined to solve the statistical problem of fitting the closest partition to the observed dissimilarities. This lack of appropriate methodologies motivates this paper, in particular, the introduction and the study of three new object partitioning models for dissimilarity data, their estimation via least-squares and the introduction of three new fast algorithms.

Article PDF

Similar content being viewed by others

Avoid common mistakes on your manuscript.

References

Asuncion A, Newman DJ (2007) UCI Machine Learning Repository, University of California, School of Information and Computer Science, Irvine, CA. http://www.ics.uci.edu/~mlearn/MLRepository.html

Ball GH, Hall DJ (1967) A clustering technique for summarizing multivariate data. Behav Sci 12: 153–5

Bandelt HJ (1990) Recognition of the tree metrics. SIAM J Discrete Math 3(1): 1–6

Bock HH (1974) Automatische klassifikation. studia mathematica. Vandenhoek und Ruprecht, Göttingen

Bock HH et al (1998) Probabilistic aspects in Classification. In: Hayashi (eds) Data science, classification and related methods. Springer, Heidelberg, pp 3–21

Carroll JD, Pruzansky S (1975) Fitting of hierarchical tree structure (HTS) models, mixtures of HTS models, and hybrid models, via mathematical programming and alternating least squares. Paper presented at U. S.-Japan Seminar on Theory, Methods and Applications of Multidimensional Scaling and Related Techniques, San Diego, August 20–24

Carroll JD, Pruzansky S (1980) Discrete and hybrid scaling models. In: Lantermann ED, Feger (eds) Similarity and choice. Huber, Bern, pp 108–139

Cattell RB (1966) The scree test for the number of factors. Multivar Behav Res 1: 245–276

Chandon JL, Lemaire J, Pouget J (1980) Construction de 1’ultramétrique la plus proche d’une dissimilarité an sens des moindres carrés. Recherche Operationelle Operations Research 14: 157–70

Cormack RM (1971) A review of classification (with discussion). J R Stat Soc A 134: 321–67

Critchley F, Fichet B (1994) The partial order by inclusion of the principal classes of dissimilarity on finite set, and some of the basic properties. In: Cutsem B (eds) Classification and dissimilarity analysis, Lecture Notes in Statistics. Springer, Berlin, pp 5–65

Dahlhaus E (1993) Fast parallel recognition of ultrametrics and tree metrics. SIAM J Discrete Math 6(4): 523–532

De Soete G (1984) A least squares algorithm for fitting an ultrametric tree to a dissimilarity matrix. Pattern Recognit Lett 2: 133–7

Fisher L, Van Ness JW (1971) Admissible clustering procedures. Biometrika 58: 91–104

Fraley C, Raftery AE (2002) Model based clustering, discriminant analysis and density estimation. J Am Stat Assoc 97: 611–631

Gordon AD (1987) Parsimonious trees. J Classif 4: 85–101

Gordon AD (1999) Classification: methods for the exploratory analysis of multivariate data. Chapman and Hall, London

Gower JC (1966) Some distance properties of latent root and vector methods used in multivariate analysis. Biometrika 53: 325–38

Grötschel M, Wakabayashi Y (1989) A cutting plane algorithm for a clustering problem. Math Program 45: 59–96

Hansen P, Jaumard B, Sanlaville E (1994) Partitioning problems in cluster analysis: a review of mathematical programming approaches. In: Diday E, Lechevallier Y, Schader M, BertrandP Burtschy B (eds) New approaches in classification and data analysis. Springer, Berlin, pp 228–40

Hartigan JA (1967) Representation of similarity matrices by trees. J Am Stat Assoc 62: 1140–58

Hartigan JA (1975) Clustering algorithms. Wiley, New York

Hennig C (2007) Cluster-wise assessment of cluster stability. Comput Stat Data Anal 52: 258–271

Hubert L, Arabie P (1985) Comparing partitions. J Classif 2: 193–218

Hubert L, Arabie P, Meulman J (1997) Hierarchical clustering and the construction of (optimal) ultrametrics using in lecture Notes, Monograph Series, vol 31. Institute of Mathematical Statistics, Hayward, CA, pp 457–72

Jain AK, Dubes RC (1988) Algorithms for clustering data. Prentice-Hall, Englewood Cliffs, NJ

Kaufman L, Rousseeuw PJ (1990) Finding groups in data: an introduction to cluster analysis. Wiley, New York

Krivánek M, Morávek J (1986) NP-hard problems in hierarchical-tree clustering. Acta Inform 23: 311–323

Keller JB (1962) Factorization of matrices by least squares. Biometrika 49: 239–242

Marcotorchino F, Michaud P (1982) Agregation de similarites en classification automatique. Revue de Statistique Appliquée 30(2): 21–44

MacQueen J (1967) Some methods for classification and analysis of multivariate observations. In: Le Cam LM, Neyman J (eds) Proceedings of the fifth berkeley symposium on mathematical statistics and probability, vol 1. Statistics. University of California Press, Berkeley, pp 281–297

Mathar R (1985) The best Euclidean fit to a given distance matrix in prescribed dimensions. Linear Algebra Appl 67: 1–6

McLachlan G, Peel D (2000) Finite mixture models. Wiley, New York

Oh M-S, Raftery AE (2007) Model-based clustering with dissimilarities: a bayesian approach. J Comput Graph Stat. A Technical Report is available on http://www.stat.washington.edu/raftery/Research/bayes.html (to appear)

Powell MJD et al (1983) Variable metric methods for constrained optimization. In: Bachem A (eds) Mathematical programming: the state of the art. Springer, Berlin, pp 288–311

Régnier S (1965) Sur quelques aspects mathématiques des problemes de classification automatique. International Computation Centre Bulletin 4, 175–91. Reprinted in Mathématiques et Sciences Humaines, 82, 13–29 (1982)

Rubin J (1967) Optimal classification into groups:an approach to solve the taxonomy problem. J Theor Biol 15: 103–144

Sriram N (1990) Clique optimization: a method to construct parsimonious ultrametric trees from similarity data. J Classif 7: 33–52

Torgerson WS (1958) Theory and methods of scaling. Wiley, New York

Vicari D, Vichi M (2000) Non-hierarchical classification structures. In: Gaul W, Opitz O, Schader M (eds) Data analysis, studies in classification data analysis and knowledge organization. Springer, Berlin, pp 51–66

Vichi M (1993) Un algoritmo dei minimi quadrati per interpolare un insieme di classificazioni gerarchiche con una classificazione consenso, Metron, vol 51, 3–4, 139–163

Vichi M (1994) Un algoritmo per il consenso tra classificazioni gerarchiche con l’ausilio di tecniche multiway. Proc Italian Stat Soc 37: 261–268

Vichi M (1996) Computational complexity of one mode classification, In: Prat A (ed) Proceedings of COMPSTAT vol 96. Physica-Verlag

Zangwill WI (1969) Nonlinear programming: a unified approach. Prentice-Hall, Englewood Cliffs, NJ

Author information

Authors and Affiliations

Corresponding author

Rights and permissions

About this article

Cite this article

Vichi, M. Fitting semiparametric clustering models to dissimilarity data. Adv Data Anal Classif 2, 121–161 (2008). https://doi.org/10.1007/s11634-008-0025-4

Received:

Revised:

Accepted:

Published:

Issue Date:

DOI: https://doi.org/10.1007/s11634-008-0025-4