Abstract

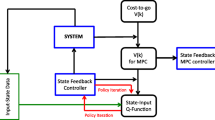

In this paper, a novel iterative Q-learning algorithm, called the “policy iteration based deterministic Q-learning algorithm,” is developed to solve optimal control problems for discrete-time deterministic nonlinear systems. The idea is to use an iterative adaptive dynamic programming (ADP) technique to construct the iterative control law that optimizes the iterative Q function. Once the optimal Q function is obtained, the optimal control law can be found by directly minimizing the optimal Q function, without requiring a mathematical model of the system. The convergence property is analyzed to show that the iterative Q function is monotonically non-increasing and converges to the solution of the optimality equation. It is also proven that each of the iterative control laws is a stable control law. Neural networks are employed to implement the policy iteration based deterministic Q-learning algorithm by approximating the iterative Q function and the iterative control law, respectively. Finally, two simulation examples are presented to illustrate the performance of the developed algorithm.
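The loop described in the abstract (evaluate the Q function of the current control law, then improve the law by minimizing that Q function) can be illustrated with a minimal tabular sketch. This is not the authors' implementation: the paper approximates the Q function and control law with neural networks, whereas the sketch below uses lookup tables. The plant `step`, the quadratic `utility`, the state and action grids, and the initial control law are all hypothetical choices made only for illustration. The learner calls `step` only to observe the next state, standing in for measured data.

```python
import numpy as np

# Hypothetical finite grids (assumptions, not taken from the paper).
STATES = np.arange(-3, 4)    # x in {-3, ..., 3}
ACTIONS = np.arange(-2, 3)   # u in {-2, ..., 2}


def step(x, u):
    """Hypothetical deterministic plant x_{k+1} = F(x_k, u_k), clipped to the grid."""
    return int(np.clip(x + u, STATES[0], STATES[-1]))


def utility(x, u):
    """Hypothetical positive-definite utility U(x, u) = x^2 + u^2."""
    return x * x + u * u


def evaluate(policy, sweeps=50):
    """Policy evaluation: iterate Q(x, u) = U(x, u) + Q(x', policy(x'))."""
    q = np.zeros((len(STATES), len(ACTIONS)))
    for _ in range(sweeps):
        new_q = np.empty_like(q)
        for i, x in enumerate(STATES):
            for j, u in enumerate(ACTIONS):
                nxt = step(x, u) - STATES[0]          # index of the next state
                new_q[i, j] = utility(x, u) + q[nxt, policy[nxt]]
        if np.array_equal(new_q, q):                  # fixed point reached
            break
        q = new_q
    return q


def policy_iteration_q(policy, iterations=10):
    """Alternate evaluation and greedy improvement until the law stops changing."""
    history = []
    for _ in range(iterations):
        q = evaluate(policy)
        history.append(q)
        new_policy = q.argmin(axis=1)                 # minimize Q over actions
        if np.array_equal(new_policy, policy):
            break
        policy = new_policy
    return q, policy, history


# Initial admissible law (an assumption): move one step toward the origin.
initial_policy = (-np.sign(STATES) + 2).astype(int)   # stored as action indices
q_star, policy_star, history = policy_iteration_q(initial_policy)

# Successive Q tables should never increase in any entry.
monotone = all(np.all(b <= a) for a, b in zip(history, history[1:]))
print("monotonically non-increasing:", monotone)
print("control law:", dict(zip(STATES.tolist(), ACTIONS[policy_star].tolist())))
```

Starting from an admissible law matters in this sketch: the cost is undiscounted, so evaluation only settles to finite values when the law drives the state to the origin.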

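Summary

Highlights

This paper proposes a novel policy iteration Q-learning method for solving optimal control problems of discrete-time nonlinear systems. The main idea is to use an adaptive dynamic programming (ADP) method to construct the iterative control law and the iterative Q function so that the system performance index is optimized. Once the iterative Q function is obtained, the iterative control law is obtained directly by minimizing the iterative Q function, without requiring a mathematical model of the system. Convergence analysis proves that the iterative Q function is monotonically non-increasing and converges to the optimum. The paper analyzes the stability of policy iteration Q-learning for the first time and proves that every iterative control law is an admissible control law. Finally, simulations verify the effectiveness of the method.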

About this article

Cite this article

Wei, Q., Liu, D. A novel policy iteration based deterministic Q-learning for discrete-time nonlinear systems. Sci. China Inf. Sci. 58, 1–15 (2015). https://doi.org/10.1007/s11432-015-5462-z

DOI: https://doi.org/10.1007/s11432-015-5462-z

Keywords

- adaptive critic designs

- adaptive dynamic programming

- approximate dynamic programming

- Q-learning

- policy iteration

- neural networks

- nonlinear systems

- optimal control