Abstract

Objectives

The aim of this study was to evaluate the use of a convolutional neural network (CNN) system for detecting vertical root fracture (VRF) on panoramic radiography.

Methods

Three hundred panoramic images containing a total of 330 VRF teeth with clearly visible fracture lines were selected from our hospital imaging database. Confirmation of VRF lines was performed by two radiologists and one endodontist. Eighty percent (240 images) of the 300 images were assigned to a training set and 20% (60 images) to a test set. A CNN-based deep learning model for the detection of VRFs was built using DetectNet with DIGITS version 5.0. To defend test data selection bias and increase reliability, fivefold cross-validation was performed. Diagnostic performance was evaluated using recall, precision, and F measure.

Results

Of the 330 VRFs, 267 were detected. Twenty teeth without fractures were falsely detected. Recall was 0.75, precision 0.93, and F measure 0.83.

Conclusions

The CNN learning model has shown promise as a tool to detect VRFs on panoramic images and to function as a CAD tool.

Similar content being viewed by others

Explore related subjects

Discover the latest articles, news and stories from top researchers in related subjects.Avoid common mistakes on your manuscript.

Introduction

Panoramic radiography is frequently used for screening for various abnormalities of the jaws and their adjacent structures, and is recognized as a reliable and convenient technique [1]. However, because of the complexity of the relationships between anatomical structures and the panoramic image layer, panoramic radiography images may sometimes be difficult to interpret, especially for inexperienced observers, with the result that critical disease may be overlooked [2]. In this regard, a number of computer-assisted detection/diagnosis (CAD) systems have been developed for various diseases, including maxillary sinusitis [3], osteoporosis [4, 5], and carotid artery calcification [6]. In these systems, image characteristics that are extracted by experienced human observers are input into the CAD system for diagnostic assistance. More recently, deep learning (DL) systems with convolutional neural networks (CNN) have been introduced into the field of oral and maxillofacial diagnostic imaging [7,8,9,10,11,12,13,14,15,16,17]. In this technique, the computer system can automatically learn to extract image characteristics suitable for performing various tasks such as classification, segmentation, and image enhancement. Object detection, which is one such function, involves the detection of objects learned during the training process. One such CNN-based DL system is DetectNet, which outputs the XY coordinates of a detected object as a colored box when a testing image is input into the trained learning model [18]. DetectNet has already been applied to diseases in various medical fields, such as mammary disease, and showed high diagnostic performance [19]. Although deep learning systems may provide a way to fully automate the detection of abnormalities or anatomic structures, there are relatively small number of studies reporting their application to panoramic imaging [11,12,13,14,15,16].

Vertical root fractures (VRF) are reported to occur in 3.7–30.8% of endodontically treated teeth, and are predominantly seen in the mandibular premolars and molars [20,21,22,23]. They are one of the most difficult dental diseases to treat conservatively, and almost all VRF teeth are either extracted or treated by hemisection or root separation techniques. However, early treatment involving the resection of affected roots can achieve relatively long survival times for the remaining roots, with 5- and 10-year survival rates of 94% and 64%, respectively [24]. In addition, VRFs are sometimes identified incidentally on panoramic images, because some endodontically treated teeth show no or only slight symptoms, even when a VRF is present [25]. Although the detectability of VRF is reported to be higher with cone-beam computed tomography (CBCT) for dental use than with intra-oral or panoramic radiography [20,21,22, 26,27,28,29,30,31,32], the radiation dose to patients from CBCT is relatively large [20, 30, 31], and CBCT is not therefore suitable for screening purposes.

The aims of the present study were to develop an object detection model to identify teeth with a VRF on panoramic images, and to evaluate the diagnostic performance of this model.

Materials and methods

Materials

A total of 1914 images were extracted from our hospital image database of 65,490 panoramic images stored between April 2013 and February 2019. These 1914 images were retrieved using the search engine of our radiology information system, using the reference words “root fracture” on the imaging reports. From these 1914 extracted images, 300 subjects (150 females and 150 males, with a mean age of 66.05 years) were selected through a review process involving two oral and maxillofacial radiologists (MF and EA) and an endodontist (KI). For each included subject, at least one tooth with a VRF could be clearly identified on panoramic images, with all three observers being in agreement. In 28 subjects, two teeth showed a VRF on a single image, while one subject had three teeth with a VRF. Consequently, 300 panoramic images of 1039 × 1378 pixels showing 330 teeth with a VRF were downloaded in jpeg format, and these served to create the dataset for the deep learning process. The distributions of the tooth types of these 330 teeth (described true boxes) are summarized in Table 1. The VRFs were most frequently observed in the mandibular molars (54.8%) and mandibular premolars (17.6%). Most of the teeth with a VRF (96.4%) could be judged as endodontically treated teeth according to the finding of root canal filling materials.

All 300 images were obtained on a panoramic machine (Veraviewepocs X550 P-CR, J. Morita Mfg Corp., Kyoto, Japan) with a tube voltage of 75 kVp, tube current of 9 mA, and acquisition time of 16 s.

Preparation of the dataset

Images of 900 × 900 pixels were cropped from the downloaded images and teeth with a VRF were labeled by the setting of arbitrary-sized rectangular regions of interest (ROI) sufficient to contain their crown and roots. These ROIs were set by an oral and maxillofacial radiologist (MF) using Image J software (National Institute of Health, Bethesda, Maryland, USA), and the coordinates of the upper left (X1, Y1) and lower right (X2, Y2) corners were recorded and converted to text form (Fig. 1).

Architecture of the deep learning system

The deep learning process was performed using the Digits version 5.0 training system (Nvidia, California, USA) with a customized DetectNet (https://devblogs.nvidia.com/detectnet-deep-neural-network-object-detection-digits/). The workstation had an Ubuntu 16.04 operating system and GeForce 1080Ti graphics processor unit (Nvidia).

Learning process and evaluation of diagnostic performance

A fivefold cross-validation method was applied to the training and testing process [33, 34] (Fig. 2). The dataset was divided into five parts, with four parts (b, c, d, and e) being used as training data and the remaining part (a) being used as testing data. This process was repeated five times, changing the testing dataset each time. The training processes involved 1000 epochs using the ADAM (adaptive moment estimation) solver with an initial learning rate of 0.0001. Five learning models were created, and the corresponding testing data were applied to the respective models. Detected areas were shown as red boxes on each image of the testing dataset (Fig. 3), and these were assigned as correct when they sufficiently included the root with the VRF and fracture lines.

Diagnostic performance was evaluated with recall, precision, and F measure values [35,36,37,38] as follows:

The “number of all true boxes” is the number of teeth that truly have a VRF (n = 330). In other word, recall and precision mean sensitivity and positive predictive value, respectively. These two indices have a trade-off relationship, therefore, their harmonic means which is the so-called F measure is also used to evaluate the performances of machine learning [35,36,37,38]. Estimated diagnostic performances were defined as the means of the results of the five learning models.

Results

The testing results are summarized in Table 1. Of the 267 boxes detected on the 300 images, 247 boxes correctly identified teeth having a VRF, but 20 boxes incorrectly marked teeth without a VRF. Among the incorrectly detected 15 mandibular molars, 2 received root separation surgery. Out of the 330 teeth with true VRFs, 83 teeth were not detected, with no relevant box being shown on the images. Seven (58.3%) of 12 teeth without endodontic treatment were not detected. The recall rates were low for the maxillary incisors but high for the mandibular premolars and molars, while the precision rates were high regardless of tooth type (Table 2). Consequently, the estimated recall, precision, and F measure were 0.75, 0.93, and 0.83, respectively.

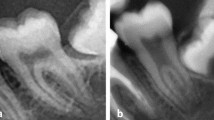

A typical correctly detected box is shown in Fig. 3, with a VRF being detected in the right mandibular first molar. Figure 4 shows a maxillary second molar without endodontic treatment that was not detected in the image. In Fig. 5, although a VRF is correctly detected in the mandibular left premolar, the first molar after root separation is incorrectly detected as a tooth with a VRF. Figure 6 shows a right first molar showing periapical and bifurcation radiolucency that was misdiagnosed as having a VRF.

Discussion

In the present study, VRFs were predominantly observed in the mandibular molars (54.8%), followed by the mandibular premolars (17.6%). These results support the descriptions in textbooks [39] and previous reports [20,21,22,23]. To the contrary, a study from Romania reported the maxillary incisors to be the predominant tooth type with fracture in teeth extracted from females and males [40]. This discrepancy could be attributed to various factors, including differences in the study population, inclusion of horizontal fractures as well as VRFs, and the influence of different VRF verification methods such as panoramic radiography, cone-beam CT, or endoscopy. Anyhow, the authors are in agreement with the finding that VRFs were mostly observed in endodontically treated teeth [20,21,22, 25, 29, 41].

The detectability of VRFs on CBCT is reported to be significantly higher than on panoramic or intra-oral radiography [20,21,22, 26,27,28,29,30,31,32]. Takeshita et al. compared diagnostic performance between these modalities and showed that the performance of panoramic radiography according to the area under the receiver operating characteristic curve was lower than that of CBCT, but equivalent to full-mouth intra-oral radiography [32]. They emphasized that attention should be paid to the diagnosis of VRFs of the incisors and premolars on panoramic radiography, because the former can be overlapped by the vertebrae and the latter may not be exposed on the orthoradial projection angle. Although CBCT allows three-dimensional visualization of the fracture line, it should not be used for screening because of the considerable exposure to ionizing radiation. Therefore, it is recommended that CBCT should only be used as an additional examination after screening of the whole teeth by panoramic radiography.

In recent years, various studies have been reported to evaluate the use of DL system for various oral and maxillofacial imaging procedures, such as periapical radiography [7, 8], panoramic radiography [9,10,11,12,13,14], and CT images [15]. The object detection functionality is also used for diagnosing abnormalities or anatomic structures on panoramic images [11,12,13,14,15,16]. For automated diagnosis, the object detection technique is thought to be more effective than classification method. Therefore, we conducted this study as a step towards creating a fully automated diagnostic system for panoramic images. Although our results demonstrate the possibility of the technique, the currently acquired performances may be insufficient for clinical practice. The low number of teeth used for the training process may be a reason for the low recall rates in the maxillary teeth and mandibular incisors. Moreover, more than half of the endodontically untreated teeth with a VRF were not detected in the present study. This result may be attributed to the low numbers of such teeth and the fact that the learning model probably extracted the characteristics of endodontically treated teeth. Therefore, a future study should be conducted with higher numbers of such teeth with a VRF, to create a more effective model.

In the case of falsely detected teeth, mandibular molars in the postoperative state of root separation might be assigned as having a VRF. Although these teeth were regarded as falsely detected boxes in the present study, the detection of separated teeth could be another purpose of the model. In this case, if they were to be assigned as correct detection, the performance would improve. In addition, even though a fracture line may not be detected on a radiograph, teeth with VRF frequently show characteristic bony resorption, which presents with special appearances on imaging [42,43,44], such as the so-called halo lesion. The deep learning system might learn and extract these characteristic appearances, and then detect such teeth on the basis of their boney appearance when the testing data sets are applied to the learning model. If so, the teeth that were assigned as falsely detected may include those teeth with a true VRF but that went undetected on radiographs. In this regard, a study should be planned using teeth with endoscopically verified VRFs.

This study has some limitations. First, there were too few training data items for the maxillary teeth, mandibular incisors, and endodontically untreated teeth. Second, we only selected panoramic radiography images that included clear VRF lines. Third, all the panoramic images were obtained in just a single hospital. Fourth, preparing the dataset and creating the model was a time-consuming task. Future studies should be addressed towards solving these problems.

Conclusion

We developed an artificial intelligence model for detecting vertical tooth fracture on panoramic radiography. Evaluation of the performance of this model revealed recall of 0.75, precision of 0.93, and an F measure of 0.83, thereby showing that a CAD system for panoramic radiography has potential for this particular function.

References

Choi JW. Assessment of panoramic radiography as a national oral examination tool: review of the literature. Imaging Sci Dent. 2011;41:1–6.

Nardi C, Calistri L, Grazzini G, Desideri I, Lorini C, Occhipinti M, et al. Is Panoramic radiography an accurate imaging technique for the detection of endodontically treated asymptomatic apical periodontitis? J Endod. 2018;44:1500–8.

Ohashi Y, Ariji Y, Katsumata A, Fujita H, Nakayama M, Fukuda M, et al. Utilization of computer-aided detection system in diagnosing unilateral maxillary sinusitis on panoramic radiographs. Dentomaxillofac Radiol. 2016;45:20150419.

Muramatsu C, Matsumoto T, Hayashi T, Hara T, Katsumata A, Zhou X, et al. Automated measurement of mandibular cortical width on dental panoramic radiographs. Int J Comput Assist Radiol Surg. 2013;8:877–85.

Muramatsu C, Horiba K, Hayashi T, Fukui T, Hara T, Katsumata A, et al. Quantitative assessment of mandibular cortical erosion on dental panoramic radiographs for screening osteoporosis. Int J Comput Assist Radiol Surg. 2016;11:2021–32.

Maia PRL, Medeiros AMC, Pereira HSG, Lima KC, Oliveira PT. Presence and associated factors of carotid artery calcification detected by digital panoramic radiography in patients with chronic kidney disease undergoing hemodialysis. Oral Surg Oral Med Oral Pathol Oral Radiol. 2018;126:198–204.

Chen H, Zhang K, Lyu P, Li H, Zhang L, Wu J, et al. A deep learning approach to automatic teeth detection and numbering based on object detection in dental periapical films. Sci Rep. 2019;9(1):3840.

Lee JH, Kim DH, Jeong SN, Choi SH. Detection and diagnosis of dental caries using a deep learning-based convolutional neural network algorithm. J Dent. 2018;77:106–11.

Murata M, Ariji Y, Ohashi Y, Kawai T, Fukuda M, Funakoshi T, et al. Deep-learning classification using convolutional neural network for evaluation of maxillary sinusitis on panoramic radiography. Oral Radiol. 2018. https://doi.org/10.1007/s11282-018-0363-7[Epub ahead of print].

Hiraiwa T, Ariji Y, Fukuda M, Kise Y, Nakata K, Katsumata A, et al. A deep-learning artificial intelligence system for assessment of root morphology of the mandibular first molar on panoramic radiography. Dentomaxillofac Radiol. 2019;48:20180218.

Ariji Y, Yanashita Y, Kutsuna S, Muramatsu C, Fukuda M, Kise Y, et al. Automatic detection and classification of radiolucent lesions in the mandible on panoramic radiographs using a deep learning object detection technique. Oral Surg Oral Med Oral Pathol Oral Radiol. 2019. https://doi.org/10.1016/j.oooo.2019.05.014[Epub ahead of print].

Tuzoff DV, Tuzova LN, Bornstein MM, Krasnov AS, Kharchenko MA, Nikolenko SI, et al. Tooth detection and numbering in panoramic radiographs using convolutional neural networks. Dentomaxillofac Radiol. 2019;48:20180051.

Lee JS, Adhikari S, Liu L, Jeong HG, Kim H, Yoon SJ. Osteoporosis detection in panoramic radiographs using a deep convolutional neural network-based computer-assisted diagnosis system: a preliminary study. Dentomaxillofac Radiol. 2018;48:20170344.

Ekert T, Krois J, Meinhold L, Elhennawy K, Emara R, Golla T, et al. Deep learning for the radiographic detection of apical lesions. J Endod. 2019;45(7):917–922.e5.

Vinayahalingam S, Xi T, Bergé S, Maal T, de Jong G. Automated detection of third molars and mandibular nerve by deep learning. Sci Rep. 2019;9(1):9007.

Krois J, Ekert T, Meinhold L, Golla T, Kharbot B, Wittemeier A, et al. Deep learning for the radiographic detection of periodontal bone loss. Sci Rep. 2019;9(1):8495.

Kise Y, Ikeda H, Fujii T, Fukuda M, Ariji Y, Fujita H, et al. Preliminary study on the application of deep learning system to diagnosis of Sjögren's syndrome on CT images. Dentomaxillofac Radiol. 2019. https://doi.org/10.1259/dmfr.20190019[Epub ahead of print].

Zhao ZQ, Zheng P, Xu ST, Wu X. Object detection with deep learning: a review. IEEE Trans Neural Netw Learn Syst. 2019. https://doi.org/10.1109/TNNLS.2018.2876865.

Kooi T, Litjens G, van Ginneken B, Gubern-Mérida A, Sánchez CI, Mann R, et al. Large scale deep learning for computer aided detection of mammographic lesions. Med Image Anal. 2017;35:303–12.

Ardakani FE, Razavi SH, Tabrizizadeh M. Diagnostic value of cone-beam computed tomography and periapical radiography in detection of vertical root fracture. Iran Endod J. 2015;10:122–6.

Safi Y, Aghdasi MM, Ezoddini-Ardakani F, Beiraghi S, Vasegh Z. Effect of metal artifacts on detection of vertical root fractures using two cone beam computed tomography systems. Iran Endod J. 2015;10:193–8.

Hekmatian E, Karbasi Kheir M, Fathollahzade H, Sheikhi M. Detection of vertical root fractures using cone-beam computed tomography in the presence and absence of gutta-percha. Sci World J. 2018;109:1920946.

Llena-Puy MC, Forner-Navarro L, Barbero-Navarro I. Vertical root fracture in endodontically treated teeth: a review of 25 cases. Oral Surg Oral Med Oral Pathol Oral Radiol Endod. 2001;92:553–5.

Prithviraj DR, Bhalla HK, Vashisht R, Regish KM, Suresh P. An overview of management of root fractures. Kathmandu Univ Med J. 2014;12:222–30.

Tsesis I, Rosen E, Tamse A, Taschieri S, Kfir A. Diagnosis of vertical root fractures in endodontically treated teeth based on clinical and radiographic indices: a systematic review. J Endod. 2010;36:1455–8.

Salineiro FCS, Kobayashi-Velasco S, Braga MM, Cavalcanti MGP. Radiographic diagnosis of root fractures: a systematic review, meta-analyses and sources of heterogeneity. Dentomaxillofac Radiol. 2017;46:20170400.

Ma RH, Ge ZP, Li G. Detection accuracy of root fractures in cone-beam computed tomography images: a systematic review and meta-analysis. Int Endod J. 2016;49:646–54.

Long H, Zhou Y, Ye N, Liao L, Jian F, Wang Y, et al. Diagnostic accuracy of CBCT for tooth fractures: a meta-analysis. J Dent. 2014;42:240–8.

Brady E, Mannocci F, Brown J, Wilson R, Patel S. A comparison of cone beam computed tomography and periapical radiography for the detection of vertical root fractures in nonendodontically treated teeth. Int Endod J. 2014;47:735–46.

Junqueira RB, Verner FS, Campos CN, Devito KL, do Carmo AM. Detection of vertical root fractures in the presence of intracanal metallic post: a comparison between periapical radiography and cone-beam computed tomography. J Endod. 2013;39:1620–4.

Kobayashi-Velasco S, Salineiro FC, Gialain IO, Cavalcanti MG. Diagnosis of alveolar and root fractures: an in vitro study comparing CBCT imaging with periapical radiographs. J Appl Oral Sci. 2017;25:227–33.

Takeshita WM, Chicarelli M, Iwaki LC. Comparison of diagnostic accuracy of root perforation, external resorption and fractures using cone-beam computed tomography, panoramic radiography and conventional and digital periapical radiography. Indian J Dent Res. 2015;26:619–26.

Xue Y, Zhang R, Deng Y, Chen K, Jiang T. A preliminary examination of the diagnostic value of deep learning in hip osteoarthritis. PLoS ONE. 2017;12:e0178992.

Wang H, Zhou Z, Li Y, Chen Z, Lu P, Wang W, et al. Comparison of machine learning methods for classifying mediastinal lymph node metastasis of non-small cell lung cancer from 18F-FDG PET/CT images. EJNMMI Res. 2017;7:11.

Özdemir B, Aksoy D, Eckert D, Pesaresi M, Ehrlich D. Performance measures for object detection evaluation. Pattern Recognit Lett. 2010;31:1128–37.

England JR, Cheng PM. Artificial intelligence for medical image analysis: a guide for authors and reviewers. AJR Am J Roentgenol. 2019;212(3):513–9.

Powers DMW. Evaluation: from precision, recall and F-measure to ROC, informedness, markedness and correlation. J Mach Learning Technol. 2011;2:37–633.

Yap MH, Pons G, Marti J, Ganau S, Sentis M, Zwiggelaar R, et al. Automated breast ultrasound lesions detection using convolutional neural networks. IEEE J Biomed Health Inform. 2018;22(4):1218–26.

Garg N, Garg A. Textbook of endodontics. 4th ed. New Delhi: Jaypee Brothers Medical Publishers; 2019.

Popescu SM, Diaconu OA, Scrieciu M, Marinescu IR, Drăghici EC, Truşcă AG, et al. Root fractures: epidemiological, clinical and radiographic aspects. Rom J Morphol Embryol. 2017;58:501–6.

Suksaphar W, Banomyong D, Jirathanyanatt T, Ngoenwiwatkul Y. Survival rates from fracture of endodontically treated premolars restored with full-coverage crowns or direct resin composite restorations: a retrospective study. J Endod. 2018;44:233–8.

Walton RE. Vertical root fracture: factors related to identification. J Am Dent Assoc. 2017;148:100–5.

Tamse A, Fuss Z, Lustig J, Ganor Y, Kaffe I. Radiographic features of vertically fractured, endodontically treated maxillary premolars. Oral Surg Oral Med Oral Pathol Oral Radiol Endod. 1999;88:348–52.

Lustig JP, Tamse A, Fuss Z. Pattern of bone resorption in vertically fractured, endodontically treated teeth. Oral Surg Oral Med Oral Pathol Oral Radiol Endod. 2000;90:224–7.

Acknowledgements

We thank Karl Embleton, PhD, from Edanz Group (www.edanzediting.com/ac) for editing a draft of this manuscript.

Author information

Authors and Affiliations

Corresponding author

Ethics declarations

Conflict of interest

None of the authors have any conflict of interest associated with this study.

Ethical approval

All procedures followed were in accordance with the ethical standards of the responsible committee on human experimentation (institutional and national) and with the Helsinki Declaration of 1964 and later versions.

Informed consent

Informed consent was obtained from all patients for being included in the study. This study obtained ethical approval from Aichi-Gakuin University ethics committee (No. 496).

Additional information

Publisher's Note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

About this article

Cite this article

Fukuda, M., Inamoto, K., Shibata, N. et al. Evaluation of an artificial intelligence system for detecting vertical root fracture on panoramic radiography. Oral Radiol 36, 337–343 (2020). https://doi.org/10.1007/s11282-019-00409-x

Received:

Accepted:

Published:

Issue Date:

DOI: https://doi.org/10.1007/s11282-019-00409-x