Abstract

In this work, we solve the general linearization problem for the generalized Bessel polynomials using their inversion formula. For some particular values, we get a recurrence relation satisfied by the linearization coefficients from which we deduce their nonnegativity. We also recover a result given by Berg and Vignat (Constr Approx 27:15–32, 2008) and derive an explicit formula that generalizes a result by Atia and Zeng (Ramanujan J 28:211–221, 2012).


1 Introduction

Given a sequence of polynomials \(\{p_n(x)\}_{n\in {\mathbb {N}}_0}\) with \(\deg p_n=n\), one is often interested in the nonnegativity of the coefficients \(\displaystyle {L_{k}(m,n)}\) in

\[ p_m(x)\,p_n(x)=\sum _{k=0}^{m+n}L_k(m,n)\,p_k(x). \tag{1} \]
Equation (1) is called the linearization formula of the polynomial sequence \(\{p_n(x)\}_{n\in {\mathbb {N}}_0}\), and the \(\displaystyle {L_{k}(m,n)}\) are called the linearization coefficients. It is often important to know whether the linearization coefficients are positive or nonnegative (see e.g. [5, 9, 13, Chap. 9 and references therein], [14, 18]). In the sequel, we use the generalized hypergeometric series defined by

\[ {}_pF_q\left(a_1,\ldots ,a_p;\,b_1,\ldots ,b_q;\,z\right)=\sum _{k=0}^{\infty }\frac{(a_1)_k\cdots (a_p)_k}{(b_1)_k\cdots (b_q)_k}\,\frac{z^k}{k!}, \]
where \((a)_n\) denotes the Pochhammer symbol (or shifted factorial) given by

\[ (a)_0=1,\qquad (a)_n=a(a+1)\cdots (a+n-1),\quad n\ge 1. \]
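The hypergeometric series and the Pochhammer symbol above can be evaluated exactly whenever the series terminates, which is the case for all polynomial families considered here. The following Python sketch uses exact rational arithmetic; the helper names `poch` and `hyp_pfq` are our own and are not taken from the paper or from any library.

```python
from fractions import Fraction
from math import factorial


def poch(a, n):
    """Pochhammer symbol (a)_n = a (a+1) ... (a+n-1), with (a)_0 = 1."""
    result = Fraction(1)
    for i in range(n):
        result *= a + i
    return result


def hyp_pfq(upper, lower, z, max_terms=500):
    """Exact value of pFq(upper; lower; z) for a terminating series.

    The series terminates when an upper parameter is a nonpositive integer -m,
    because (-m)_k = 0 for k > m. No lower parameter may produce a zero
    denominator before that point.
    """
    z = Fraction(z)
    total = Fraction(0)
    for k in range(max_terms):
        num = Fraction(1)
        for a in upper:
            num *= poch(a, k)
        if num == 0:  # every later term vanishes as well
            return total
        den = Fraction(1)
        for b in lower:
            den *= poch(b, k)
        total += num / den * z ** k / factorial(k)
    raise ValueError("series did not terminate within max_terms terms")
```

For example, `hyp_pfq([-2, 3], [], Fraction(-1, 2))` evaluates the terminating series \({}_2F_0(-2,3;-;z)=1-6z+12z^2\) at \(z=-1/2\) and returns 7.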
Consider the generalized Bessel polynomials, introduced in 1949 by Krall and Frink [19], defined for \(N\in {\mathbb {N}}\) by (see also [12, Chap. 2], [13, p. 123], [17, Section 9.13, p. 244])

and (see [12, p. 13], [13, p. 123], [17, Remarks, p. 246])

We refer to [12, 13, 17, 19] for further references to the literature and for the history of Bessel polynomials.
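For experiments it is convenient to have the coefficients of both families. The Python sketch below assumes the hypergeometric form \(y_n(x;\alpha )={}_2F_0(-n,n+\alpha +1;-;-x/2)\) of [17, Section 9.13] for (2) and the relation \(\theta _n(x;\alpha ,\beta )=x^ny_n(2(\beta x)^{-1};\alpha )\) used in Section 2. It also checks the second-order differential equation \(x^2y''+((\alpha +2)x+2)y'-n(n+\alpha +1)y=0\) satisfied by \(y_n(x;\alpha )\) (see [17, p. 245]). All function names are hypothetical helpers.

```python
from fractions import Fraction
from math import factorial


def poch(a, n):
    """Pochhammer symbol (a)_n = a (a+1) ... (a+n-1), with (a)_0 = 1."""
    result = Fraction(1)
    for i in range(n):
        result *= a + i
    return result


def bessel_y(n, alpha):
    """Coefficients [c_0, ..., c_n] (lowest degree first) of
    y_n(x; alpha) = 2F0(-n, n + alpha + 1; -; -x/2)  (assumed form of (2))."""
    alpha = Fraction(alpha)
    return [poch(-n, k) * poch(n + alpha + 1, k) / factorial(k) * Fraction(-1, 2) ** k
            for k in range(n + 1)]


def theta(n, alpha, beta):
    """Coefficients (lowest degree first) of theta_n(x; alpha, beta) = x^n y_n(2/(beta x); alpha)."""
    c = bessel_y(n, alpha)
    s = Fraction(2) / Fraction(beta)
    # the term c_k (2/(beta x))^k of y_n contributes c_k s^k x^(n-k)
    return [c[n - j] * s ** (n - j) for j in range(n + 1)]


def ode_residual(n, alpha):
    """Coefficients of x^2 y'' + ((alpha+2) x + 2) y' - n (n+alpha+1) y for y = y_n(x; alpha)."""
    alpha = Fraction(alpha)
    c = bessel_y(n, alpha)
    res = [Fraction(0)] * (n + 1)
    for k, ck in enumerate(c):
        # x^2 y'', (alpha+2) x y' and -n(n+alpha+1) y keep the degree k
        res[k] += (k * (k - 1) + (alpha + 2) * k - n * (n + alpha + 1)) * ck
        if k >= 1:
            res[k - 1] += 2 * k * ck  # 2 y' lowers the degree by one
    return res
```

For instance, `theta(3, 0, 2)` gives the coefficients 15, 15, 6, 1 (lowest degree first), that is, \(\theta _3(x;0,2)=x^3+6x^2+15x+15\); the polynomials \(\theta _n\) come out monic because \(y_n(0;\alpha )=1\).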

In this work, we give the linearization formulae for the generalized Bessel polynomials \((y_n(x;\alpha ))_n\) and \((\theta _n(x;\alpha ,\beta ))_n\). We find a recurrence relation satisfied by the linearization coefficients of the polynomial family \((\theta _n(x;0,\beta ))_n\), from which we deduce their nonnegativity, and a mixed recurrence relation satisfied by those of the polynomial family \((y_n(x;\alpha ))_n\). For \(\beta =2\) in \((\theta _n(x;0,\beta ))_n\), we recover results given by Berg and Vignat [5] and derive an explicit formula that generalizes a result by Atia and Zeng [3]. Following the work by Atia and Zeng [3], we simplify the linearization coefficients of the polynomial family \((\theta _n(x;0,\beta ))_n\) from a double sum to a single sum.

2 Linearization formulae of the Bessel polynomials

In this section, we solve the more general linearization problem

for the generalized Bessel polynomials. The linearization formula (4) follows from the hypergeometric representation of the generalized Bessel polynomials and their inversion formula, that is, a formula expanding the monomials \(x^n\) in terms of a polynomial family \((p_n(x))_n\) with \(\deg p_n =n\).

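The coefficients of such an inversion formula can also be obtained numerically, without a closed form, because the matrix expressing \(p_0,\ldots ,p_n\) in the monomial basis is triangular. The Python sketch below does this by back-substitution for \(p_k=\theta _k(x;\alpha ,\beta )\). It assumes \(y_n(x;\alpha )={}_2F_0(-n,n+\alpha +1;-;-x/2)\) [17, Section 9.13] and \(\theta _n(x;\alpha ,\beta )=x^ny_n(2(\beta x)^{-1};\alpha )\); the function names are hypothetical helpers, not part of the paper.

```python
from fractions import Fraction
from math import factorial


def poch(a, n):
    """Pochhammer symbol (a)_n."""
    result = Fraction(1)
    for i in range(n):
        result *= a + i
    return result


def theta(n, alpha, beta):
    """Coefficients (lowest degree first) of theta_n(x; alpha, beta) = x^n y_n(2/(beta x); alpha),
    assuming y_n(x; alpha) = 2F0(-n, n+alpha+1; -; -x/2)."""
    alpha, s = Fraction(alpha), Fraction(2) / Fraction(beta)
    c = [poch(-n, k) * poch(n + alpha + 1, k) / factorial(k) * Fraction(-1, 2) ** k
         for k in range(n + 1)]
    return [c[n - j] * s ** (n - j) for j in range(n + 1)]


def inversion_row(n, alpha, beta):
    """Coefficients e_0, ..., e_n with x^n = sum_k e_k theta_k(x; alpha, beta)."""
    basis = [theta(k, alpha, beta) for k in range(n + 1)]
    rem = [Fraction(0)] * n + [Fraction(1)]  # start from the monomial x^n
    e = [Fraction(0)] * (n + 1)
    for k in range(n, -1, -1):  # remove the highest remaining degree first
        e[k] = rem[k] / basis[k][k]
        for j in range(k + 1):
            rem[j] -= e[k] * basis[k][j]
    return e


def evaluate_expansion(e, alpha, beta):
    """Monomial coefficients of sum_k e_k theta_k(x; alpha, beta), used to check a row."""
    out = [Fraction(0)] * len(e)
    for k, ek in enumerate(e):
        for j, tj in enumerate(theta(k, alpha, beta)):
            out[j] += ek * tj
    return out
```

For \(\alpha =0\) and \(\beta =2\), `inversion_row(2, 0, 2)` gives the coefficients 0, -3, 1, i.e. \(x^2=\theta _2(x;0,2)-3\theta _1(x;0,2)\).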
The inversion formula of the generalized Bessel polynomials \((y_n(x;\alpha ))_n\) is given by (see e.g. [7, 12, p. 73], [15, 23, Eq. (7), p. 294], [24, 27])

The polynomial \(\theta _n(x;\alpha ,\beta )\) is a solution of the differential equation (see [12, Eq. (29), p. 13], compare [13, p. 123])

Equation (6) follows immediately from the differential equation (see e.g. [12, Eq. (26), p. 12], [17, p. 245], [19, Eq. (33)])

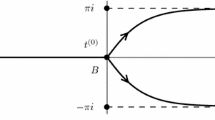

satisfied by \(y_n(x;\alpha )\) and the relation \(\theta _n(x;\alpha ,\beta )=x^ny_n(2(\beta x)^{-1};\alpha )\). In [12, Eq. (5), p. 42], the so-called pseudo-generating function for \(\theta _n(x;\alpha ,\beta )\) is given as follows:

From this pseudo-generating function, we prove the following result.

Proposition 1

The inversion formula of the Bessel polynomials \((\theta _n(x;\alpha ,\beta ))_n\) is given by

Proof

From (7), we get

Since

and

it follows that

Therefore

From the relation [23, Eq. (1), p. 56]

we deduce by equating the coefficients of \(u^n\) in the latter expression that

from which the result follows. \(\square \)

Remark 2

For \(\alpha =0\) and \(\beta =2\), we have the Bessel polynomials (see [5, 12])

and (8) becomes (after multiplication and division by n!)

Since \((-m)_{n-m-1}=0\) if \(n-m-1>m\), i.e., if \(0\le m < \frac{n-1}{2}\), and \(\frac{(-n)_m(-m)_{n-m-1} }{(-1)^{m+1} m!n! }=\frac{(-1)^{n-m}}{(n-m)!(2m+1-n)!}\), the above inversion formula coincides with the one due to Carlitz [6] (see [3, Eq. (13)], [5, Eq. (17)], [12, p. 73])

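The two facts used in this remark, and the resulting vanishing pattern, can be checked mechanically. The Python sketch below verifies the Pochhammer identity for \((n-1)/2\le m\le n-1\) and computes the expansion of \(x^n\) in the basis \(\theta _k(x;0,2)\) by back-substitution, so that the vanishing of the coefficients of \(\theta _m\) for \(m<(n-1)/2\) can be observed. It assumes \(y_n(x;\alpha )={}_2F_0(-n,n+\alpha +1;-;-x/2)\) and \(\theta _n(x;\alpha ,\beta )=x^ny_n(2(\beta x)^{-1};\alpha )\); helper names are hypothetical.

```python
from fractions import Fraction
from math import factorial


def poch(a, n):
    """Pochhammer symbol (a)_n."""
    result = Fraction(1)
    for i in range(n):
        result *= a + i
    return result


def theta(n, alpha=0, beta=2):
    """Coefficients (lowest degree first) of theta_n(x; alpha, beta) = x^n y_n(2/(beta x); alpha)."""
    alpha, s = Fraction(alpha), Fraction(2) / Fraction(beta)
    c = [poch(-n, k) * poch(n + alpha + 1, k) / factorial(k) * Fraction(-1, 2) ** k
         for k in range(n + 1)]
    return [c[n - j] * s ** (n - j) for j in range(n + 1)]


def inversion_row(n, alpha=0, beta=2):
    """Coefficients e_k with x^n = sum_k e_k theta_k(x; alpha, beta), by back-substitution."""
    basis = [theta(k, alpha, beta) for k in range(n + 1)]
    rem = [Fraction(0)] * n + [Fraction(1)]
    e = [Fraction(0)] * (n + 1)
    for k in range(n, -1, -1):
        e[k] = rem[k] / basis[k][k]
        for j in range(k + 1):
            rem[j] -= e[k] * basis[k][j]
    return e


def pochhammer_facts_hold(n):
    """Check, for 0 <= m <= n-1, the two facts about (-n)_m (-m)_{n-m-1} used in Remark 2."""
    for m in range(n):
        p = poch(-m, n - m - 1)
        if 2 * m + 1 < n:  # n - m - 1 > m, so the Pochhammer symbol must vanish
            if p != 0:
                return False
        else:
            lhs = poch(-n, m) * p / ((-1) ** (m + 1) * factorial(m) * factorial(n))
            rhs = Fraction((-1) ** (n - m), factorial(n - m) * factorial(2 * m + 1 - n))
            if lhs != rhs:
                return False
    return True
```

For example, `inversion_row(4)` gives 0, 0, 15, -10, 1, i.e. \(x^4=\theta _4-10\theta _3+15\theta _2\) (with \(\alpha =0\), \(\beta =2\)). One way to see the vanishing pattern is the relation \(x^2\theta _k=\theta _{k+2}-(2k+3)\theta _{k+1}\) for \(\alpha =0\), \(\beta =2\), which shows that \(x^n\) lies in the span of the \(\theta _j\) with \(j\ge \lfloor n/2\rfloor \).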
To solve (4), we proceed in general as follows (see [1, 15]): If

then by the Cauchy product,

with

Combining the preceding result with the inversion formula

we get

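The two-step procedure just described (Cauchy product in the monomial basis, then the inversion formula applied term by term) can be sketched in Python with exact arithmetic. As simplifying assumptions, both factors are taken from the family \(\theta _k(x;\alpha ,\beta )\) with the same \(\alpha ,\beta \) as the target basis, the product is ordered as \(\theta _m(ax)\theta _n(bx)\), the inversion coefficients are computed numerically by back-substitution instead of from the closed form (8), and \(y_n(x;\alpha )={}_2F_0(-n,n+\alpha +1;-;-x/2)\) is assumed for (2). The helper names are hypothetical.

```python
from fractions import Fraction
from math import factorial


def poch(a, n):
    """Pochhammer symbol (a)_n."""
    result = Fraction(1)
    for i in range(n):
        result *= a + i
    return result


def theta(n, alpha, beta):
    """Coefficients (lowest degree first) of theta_n(x; alpha, beta) = x^n y_n(2/(beta x); alpha)."""
    alpha, s = Fraction(alpha), Fraction(2) / Fraction(beta)
    c = [poch(-n, k) * poch(n + alpha + 1, k) / factorial(k) * Fraction(-1, 2) ** k
         for k in range(n + 1)]
    return [c[n - j] * s ** (n - j) for j in range(n + 1)]


def cauchy_product(p, q):
    """Monomial coefficients of p(x) q(x): c_j = sum_i p_i q_(j-i)."""
    c = [Fraction(0)] * (len(p) + len(q) - 1)
    for i, pi in enumerate(p):
        for j, qj in enumerate(q):
            c[i + j] += pi * qj
    return c


def inversion_row(j, basis):
    """Coefficients e_k with x^j = sum_k e_k basis[k], by back-substitution."""
    rem = [Fraction(0)] * j + [Fraction(1)]
    e = [Fraction(0)] * (j + 1)
    for k in range(j, -1, -1):
        e[k] = rem[k] / basis[k][k]
        for i in range(k + 1):
            rem[i] -= e[k] * basis[k][i]
    return e


def linearize(m, n, a, b, alpha=0, beta=2):
    """L_k with theta_m(a x) theta_n(b x) = sum_k L_k theta_k(x), same (alpha, beta) throughout."""
    a, b = Fraction(a), Fraction(b)
    basis = [theta(k, alpha, beta) for k in range(m + n + 1)]
    p = [c * a ** i for i, c in enumerate(basis[m])]  # theta_m(a x)
    q = [c * b ** i for i, c in enumerate(basis[n])]  # theta_n(b x)
    L = [Fraction(0)] * (m + n + 1)
    # step 1: Cauchy product in the monomial basis; step 2: replace x^j by its inversion row
    for j, cj in enumerate(cauchy_product(p, q)):
        for k, ek in enumerate(inversion_row(j, basis)):
            L[k] += cj * ek
    return L
```

For example, `linearize(1, 2, Fraction(1, 2), Fraction(1, 2))` returns the coefficients 0, 3/8, 1/4, 1/8 of \(\theta _0,\ldots ,\theta _3\) (with \(\alpha =0\), \(\beta =2\)); the coefficient of \(\theta _0\) vanishes.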
It then follows from the representations (2) and (3) of the generalized Bessel polynomials and their inversion formulae (5) and (8) that

Theorem 3

The linearization formula for the generalized Bessel polynomials

has the coefficients

The linearization formula for the Bessel polynomials

has the coefficients

In the above theorem, \((a_1,a_2,\ldots ,a_k)_n=(a_1)_n(a_2)_n\cdots (a_k)_n\).

2.1 Special cases

For \(m=0\) and \(\alpha =\gamma \) in (10), we get the multiplication formula (see e.g. [8, 20, 25])

and for \(m=0,\, \lambda =\alpha ,\, \delta =\beta \), we deduce from (11) the multiplication formula

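Multiplication formulas of the type \(p_n(ax)=\sum _{k=0}^nc_k(a)\,p_k(x)\) can be checked numerically in the same way, by back-substitution in the basis \(p_0,\ldots ,p_n\). The following Python sketch does this for either family; it assumes \(y_n(x;\alpha )={}_2F_0(-n,n+\alpha +1;-;-x/2)\) for (2) and \(\theta _n(x;\alpha ,\beta )=x^ny_n(2(\beta x)^{-1};\alpha )\), and the helper names are hypothetical.

```python
from fractions import Fraction
from math import factorial


def poch(a, n):
    """Pochhammer symbol (a)_n."""
    result = Fraction(1)
    for i in range(n):
        result *= a + i
    return result


def bessel_y(n, alpha):
    """Coefficients (lowest degree first) of y_n(x; alpha) = 2F0(-n, n+alpha+1; -; -x/2)."""
    alpha = Fraction(alpha)
    return [poch(-n, k) * poch(n + alpha + 1, k) / factorial(k) * Fraction(-1, 2) ** k
            for k in range(n + 1)]


def theta(n, alpha, beta):
    """Coefficients of theta_n(x; alpha, beta) = x^n y_n(2/(beta x); alpha)."""
    c, s = bessel_y(n, alpha), Fraction(2) / Fraction(beta)
    return [c[n - j] * s ** (n - j) for j in range(n + 1)]


def expand_in_basis(poly, basis):
    """Coefficients L with poly = sum_k L[k] basis[k]; basis[k] has exact degree k."""
    rem = [Fraction(c) for c in poly]
    coeffs = [Fraction(0)] * len(rem)
    for k in range(len(rem) - 1, -1, -1):  # peel off the top degree first
        coeffs[k] = rem[k] / basis[k][k]
        for j in range(k + 1):
            rem[j] -= coeffs[k] * basis[k][j]
    return coeffs


def multiplication_coefficients(family, n, a):
    """c_k with p_n(a x) = sum_{k=0}^n c_k p_k(x), where family(k) returns the coefficients of p_k."""
    a = Fraction(a)
    basis = [family(k) for k in range(n + 1)]
    scaled = [c * a ** i for i, c in enumerate(basis[n])]  # p_n(a x)
    return expand_in_basis(scaled, basis)
```

With \(\alpha =0\), the sketch gives \(y_2(3x/4;0)=-\tfrac18+\tfrac9{16}y_1(x;0)+\tfrac9{16}y_2(x;0)\), whereas \(\theta _2(x/2;0,2)=\tfrac32+\tfrac34\theta _1(x;0,2)+\tfrac14\theta _2(x;0,2)\).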
3 Recurrence equations and nonnegativity of the linearization coefficients

In what follows, we derive a recurrence equation for the linearization coefficients \(L^{(\beta )}_k(m,n,a,1-a)\) of the linearization formula

in the specific case \(\alpha =0\), \(b=1-a\). Moreover, we give conditions for those coefficients to be nonnegative and we recover the result by Berg and Vignat [5] as a particular case. We also derive a mixed recurrence relation satisfied by the linearization coefficients \(L_k^{(\alpha )}(m,n,a,b)\) of the linearization formula

Proposition 4

The polynomials \(\theta _n(x;\alpha ,\beta )\) and \(y_n(x;\alpha )\) satisfy, respectively, the structure relations (compare [13, Eq. (4.10.12)])

Proof

Substitute \(\theta _n(x;\alpha ,\beta )=A_nx^n+B_{n}x^{n-1}+C_nx^{n-2}+\ldots \) and \(y_n(x;\alpha )=A'_nx^n+B'_nx^{n-1}+C'_nx^{n-2}+\cdots \), respectively, in the structure relations

and equate the coefficients of \(x^n, x^{n-1}\) and \(x^{n-2}\) to get the coefficients \(c_n,\, d_n=-c_n,\, e_n=0, c'_n,\ d'_n,\ e'_n\). \(\square \)

Note that Relations (15) and (16) can also be obtained using the Maple procedure sumdiffrule of the hsum.mpl package (see [16]).
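Relation (15) is not reproduced here. As an illustration of a structure relation of this kind that is easy to verify, for \(\alpha =0\) one can check the identity \(\theta '_n(x;0,\beta )=\tfrac{\beta }{2}\big (\theta _n(x;0,\beta )-x\theta _{n-1}(x;0,\beta )\big )\) with exact arithmetic. This identity is our own illustration (it can be proved by comparing coefficients), not a statement taken from the paper; at \(x=0\) it reduces to \(\theta '_n(0)=\tfrac{\beta }{2}\theta _n(0)\). The Python sketch assumes \(y_n(x;\alpha )={}_2F_0(-n,n+\alpha +1;-;-x/2)\) and \(\theta _n(x;\alpha ,\beta )=x^ny_n(2(\beta x)^{-1};\alpha )\); the helper names are hypothetical.

```python
from fractions import Fraction
from math import factorial


def poch(a, n):
    """Pochhammer symbol (a)_n."""
    result = Fraction(1)
    for i in range(n):
        result *= a + i
    return result


def theta(n, alpha, beta):
    """Coefficients (lowest degree first) of theta_n(x; alpha, beta) = x^n y_n(2/(beta x); alpha)."""
    alpha, s = Fraction(alpha), Fraction(2) / Fraction(beta)
    c = [poch(-n, k) * poch(n + alpha + 1, k) / factorial(k) * Fraction(-1, 2) ** k
         for k in range(n + 1)]
    return [c[n - j] * s ** (n - j) for j in range(n + 1)]


def derivative(p):
    """Coefficients of p'(x) for p given lowest degree first."""
    return [k * p[k] for k in range(1, len(p))]


def structure_residual(n, beta):
    """Coefficients of theta_n' - (beta/2) (theta_n - x theta_{n-1}) for alpha = 0 and n >= 1."""
    t_n = theta(n, 0, beta)
    x_t_prev = [Fraction(0)] + theta(n - 1, 0, beta)  # x * theta_{n-1}(x)
    d = derivative(t_n) + [Fraction(0)]              # pad to length n + 1
    half_beta = Fraction(beta) / 2
    return [d[j] - half_beta * (t_n[j] - x_t_prev[j]) for j in range(n + 1)]
```

Every residual coefficient is zero for \(\alpha =0\); for \(\alpha \ne 0\) the identity in this simple form no longer holds, which is one way to see why the condition \(\alpha =0\) appears below.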

Now let \(m,n\ge 1\). Proceeding as Berg and Vignat [5], we differentiate (11) (for \(\lambda =\mu =\alpha \) and \(\delta =\gamma =\beta \)) to obtain

Using first (15) and then (11), this equation becomes

Since this relation holds for all x, it holds in particular for \(x=0\), which gives

This equation is satisfied if \((ac_n+bc_m)L_0(m,n,a,b)=0\) and \(ac_n+bc_m-c_k=0\) for \(k=1,\ldots ,n+m\), that is, if \(L_0(m,n,a,b)=0\), \(\alpha =0\) and \(a+b=1\) (recall that \(\beta \ne 0\) by definition (3)). Under these conditions, we are left with

Finally, dividing the above equation by \(\beta x\) and equating the coefficients of \(\theta _{k}(x;0,\beta )\) yields the following result.

Proposition 5

For \(n,m\ge 1\) and \(k=0,1,\ldots ,n+m-1\), the recurrence equation

with \(L^{(\beta )}_0(m,n,a,1-a)=0\) is satisfied by the linearization coefficients \(L^{(\beta )}_k(m,n,a,1-a)\) of the linearization formula (13).

Remark 6

If the Bessel polynomials \(\theta _n(x;\alpha ,\beta )\) were orthogonal, this method for computing a recurrence relation for the linearization coefficients could be extended to the case \(a+b\ne 1\). Indeed, orthogonal polynomials satisfy a three-term recurrence relation (conversely, by Favard’s theorem [10], such a recurrence implies orthogonality), so the \(\theta _n(x;\alpha ,\beta )\) would then satisfy a relation of the form

which could be used to substitute \(x\theta _k(x;\alpha ,\beta )\) in (17); equating the coefficients of \(\theta _k(x;\alpha ,\beta )\) would then give a mixed recurrence equation for the linearization coefficients.

If a recurrence equation of the form (19) held, its coefficients \(A_n, B_n, C_n\) could be determined by substituting \(\theta _n(x;\alpha ,\beta )=k_nx^n+k'_nx^{n-1}+k''_nx^{n-2}+\cdots \) in (19) and equating the coefficients of \(x^n, x^{n-1}, x^{n-2}\). However, the resulting system has no solution, which means that no such three-term recurrence relation exists for the family \((\theta _k(x;\alpha ,\beta ))_k\). Zeilberger’s algorithm (see e.g. [16, 21]) deals with sums of the form

\[ S_n=\sum _{k}F(n,k), \]

where \(F(n,k)\) is a hypergeometric term with respect to both \(n\) and \(k\), and generates a homogeneous linear recurrence equation with polynomial coefficients for \(S_n\). Using Zeilberger’s algorithm, implemented in Maple by the sumrecursion procedure of the hsum package [16], we find that the recurrence equation satisfied by \(\theta _n(x;\alpha ,\beta )\) is

This equation can also be obtained by substituting \(x\leftarrow \frac{2}{\beta x}\) in [17, Eq. (9.13.3), p. 245] and multiplying the resulting equation by \(x^{n+1}\).
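Both observations in this remark can be illustrated numerically. The Python sketch below expands \(x\theta _n(x;\alpha ,\beta )\) in the basis \(\theta _0,\ldots ,\theta _{n+1}\); a three-term relation of the form (19) would require all coefficients of \(\theta _0,\ldots ,\theta _{n-2}\) to vanish. It also checks, for \(\alpha =0\) only, the recurrence \(\theta _n=\tfrac{2(2n-1)}{\beta }\theta _{n-1}+x^2\theta _{n-2}\), which follows from the classical case \(\beta =2\) by rescaling \(x\); it is not the general recurrence above. The sketch assumes \(y_n(x;\alpha )={}_2F_0(-n,n+\alpha +1;-;-x/2)\) and \(\theta _n(x;\alpha ,\beta )=x^ny_n(2(\beta x)^{-1};\alpha )\); helper names are hypothetical.

```python
from fractions import Fraction
from math import factorial


def poch(a, n):
    """Pochhammer symbol (a)_n."""
    result = Fraction(1)
    for i in range(n):
        result *= a + i
    return result


def theta(n, alpha, beta):
    """Coefficients (lowest degree first) of theta_n(x; alpha, beta) = x^n y_n(2/(beta x); alpha)."""
    alpha, s = Fraction(alpha), Fraction(2) / Fraction(beta)
    c = [poch(-n, k) * poch(n + alpha + 1, k) / factorial(k) * Fraction(-1, 2) ** k
         for k in range(n + 1)]
    return [c[n - j] * s ** (n - j) for j in range(n + 1)]


def expand_in_basis(poly, basis):
    """Coefficients L with poly = sum_k L[k] basis[k]; basis[k] has exact degree k."""
    rem = [Fraction(c) for c in poly]
    coeffs = [Fraction(0)] * len(rem)
    for k in range(len(rem) - 1, -1, -1):
        coeffs[k] = rem[k] / basis[k][k]
        for j in range(k + 1):
            rem[j] -= coeffs[k] * basis[k][j]
    return coeffs


def x_theta_in_basis(n, alpha, beta):
    """Coefficients of x * theta_n(x) in the basis theta_0, ..., theta_{n+1}."""
    basis = [theta(k, alpha, beta) for k in range(n + 2)]
    return expand_in_basis([Fraction(0)] + basis[n], basis)


def recurrence_residual(n, beta):
    """Coefficients of theta_n - (2(2n-1)/beta) theta_{n-1} - x^2 theta_{n-2}, alpha = 0, n >= 2."""
    t = theta(n, 0, beta)
    t1 = theta(n - 1, 0, beta) + [Fraction(0)]               # pad to length n + 1
    t2 = [Fraction(0), Fraction(0)] + theta(n - 2, 0, beta)  # x^2 * theta_{n-2}
    factor = Fraction(2 * (2 * n - 1)) / Fraction(beta)
    return [t[j] - factor * t1[j] - t2[j] for j in range(n + 1)]
```

For \(n=2\), \(\alpha =0\), \(\beta =2\), the expansion is \(x\theta _2=\theta _3-3\theta _2-3\theta _1-3\theta _0\); the nonzero coefficient of \(\theta _0\) already rules out a relation of the form (19) in this case.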

Remark 7

Extensive computations with a computer algebra system indicate that, for all a and \(\beta \) in (13) and all \(m,n\ge 1\), the coefficients satisfy \(L^{(\beta )}_k(m,n,a,1-a)=0\) for \(0\le k < \min (m,n)\).

Theorem 8

Let \(m,n\ge 1\). Then, for \(0\le a \le 1\) and \(\beta >0\), we have \(L^{(\beta )}_k(m,n,a,1-a)\ge 0\) for \(k=0,1,\ldots ,n+m\).
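Remark 7 and Theorem 8 can be spot-checked with exact arithmetic on a grid of parameters; the Python sketch below is such a check, not a proof. It assumes \(y_n(x;\alpha )={}_2F_0(-n,n+\alpha +1;-;-x/2)\) for (2), the relation \(\theta _n(x;\alpha ,\beta )=x^ny_n(2(\beta x)^{-1};\alpha )\), and the ordering \(\theta _m(ax;0,\beta )\,\theta _n((1-a)x;0,\beta )\) for the product in (13). Since the grid is symmetric under \(a\mapsto 1-a\) and all pairs \((m,n)\) are tested, this ordering does not affect the outcome. Helper names are hypothetical.

```python
from fractions import Fraction
from math import factorial


def poch(a, n):
    """Pochhammer symbol (a)_n."""
    result = Fraction(1)
    for i in range(n):
        result *= a + i
    return result


def theta(n, alpha, beta):
    """Coefficients (lowest degree first) of theta_n(x; alpha, beta) = x^n y_n(2/(beta x); alpha)."""
    alpha, s = Fraction(alpha), Fraction(2) / Fraction(beta)
    c = [poch(-n, k) * poch(n + alpha + 1, k) / factorial(k) * Fraction(-1, 2) ** k
         for k in range(n + 1)]
    return [c[n - j] * s ** (n - j) for j in range(n + 1)]


def expand_in_basis(poly, basis):
    """Coefficients L with poly = sum_k L[k] basis[k]; basis[k] has exact degree k."""
    rem = [Fraction(c) for c in poly]
    coeffs = [Fraction(0)] * len(rem)
    for k in range(len(rem) - 1, -1, -1):
        coeffs[k] = rem[k] / basis[k][k]
        for j in range(k + 1):
            rem[j] -= coeffs[k] * basis[k][j]
    return coeffs


def linearization_coefficients(m, n, a, b, beta, alpha=0):
    """L_k with theta_m(a x) theta_n(b x) = sum_k L_k theta_k(x), all with parameters (alpha, beta)."""
    a, b = Fraction(a), Fraction(b)
    basis = [theta(k, alpha, beta) for k in range(m + n + 1)]
    p = [c * a ** i for i, c in enumerate(basis[m])]  # theta_m(a x)
    q = [c * b ** i for i, c in enumerate(basis[n])]  # theta_n(b x)
    prod = [Fraction(0)] * (m + n + 1)
    for i, pi in enumerate(p):
        for j, qj in enumerate(q):
            prod[i + j] += pi * qj
    return expand_in_basis(prod, basis)


def theorem_8_holds_on_grid(max_deg, a_values, beta_values):
    """For b = 1 - a: all L_k >= 0 (Theorem 8) and L_k = 0 for k < min(m, n) (Remark 7)."""
    for m in range(1, max_deg + 1):
        for n in range(1, max_deg + 1):
            for a in a_values:
                for beta in beta_values:
                    L = linearization_coefficients(m, n, a, 1 - Fraction(a), beta)
                    if any(c < 0 for c in L) or any(L[k] != 0 for k in range(min(m, n))):
                        return False
    return True
```

A grid check of this kind can only support the statements; the proof below relies on the recurrence relation (18).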

The proof of this theorem uses the following result.

Proposition 9

For \(k=0,1,\ldots ,n,\) the coefficients

of the multiplication formula

obtained from (12) by taking \(\alpha =0\) are nonnegative for \(0\le a\le 1\) and \(\beta >0\).

Proof

Indeed, for \(0\le a\le 1\) and \(\beta >0\),

It remains to prove that

From the formula (see e.g. [22, Eq. (3), p. 388])

we have for \(a_1=-k-1,\, a_2=-n+k,\, a_3=k+2,\, b_1=-2n+k,\, b_2=k+1,\ \omega =1,\, z=a\),

Note that the sum over j is now from 0 to \(k+1\) since \((-k-1)_j=0\) when \(j>k+1\). From the following formula (see e.g. [22, Eq. (82), p. 539])

for \(n=j, b=k+2, c=-n+k, d=-2n+k, e=k+1\), it follows that

This sum contains only two summands and simplifies to

We therefore deduce that

which is clearly nonnegative for \(0\le a\le 1\). \(\square \)
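Proposition 9 can be spot-checked in the same spirit. The Python sketch below computes the coefficients \(D_k\) of \(\theta _n(ax;0,\beta )=\sum _kD_k\,\theta _k(x;0,\beta )\) by back-substitution and checks their nonnegativity on a grid; it also shows that the restriction \(a\le 1\) matters. It assumes \(y_n(x;\alpha )={}_2F_0(-n,n+\alpha +1;-;-x/2)\) and \(\theta _n(x;\alpha ,\beta )=x^ny_n(2(\beta x)^{-1};\alpha )\); the helper names are hypothetical.

```python
from fractions import Fraction
from math import factorial


def poch(a, n):
    """Pochhammer symbol (a)_n."""
    result = Fraction(1)
    for i in range(n):
        result *= a + i
    return result


def theta(n, alpha, beta):
    """Coefficients (lowest degree first) of theta_n(x; alpha, beta) = x^n y_n(2/(beta x); alpha)."""
    alpha, s = Fraction(alpha), Fraction(2) / Fraction(beta)
    c = [poch(-n, k) * poch(n + alpha + 1, k) / factorial(k) * Fraction(-1, 2) ** k
         for k in range(n + 1)]
    return [c[n - j] * s ** (n - j) for j in range(n + 1)]


def expand_in_basis(poly, basis):
    """Coefficients L with poly = sum_k L[k] basis[k]; basis[k] has exact degree k."""
    rem = [Fraction(c) for c in poly]
    coeffs = [Fraction(0)] * len(rem)
    for k in range(len(rem) - 1, -1, -1):
        coeffs[k] = rem[k] / basis[k][k]
        for j in range(k + 1):
            rem[j] -= coeffs[k] * basis[k][j]
    return coeffs


def multiplication_coefficients(n, a, beta):
    """D_k with theta_n(a x; 0, beta) = sum_k D_k theta_k(x; 0, beta)."""
    a = Fraction(a)
    basis = [theta(k, 0, beta) for k in range(n + 1)]
    scaled = [c * a ** i for i, c in enumerate(basis[n])]  # theta_n(a x)
    return expand_in_basis(scaled, basis)
```

For instance, `multiplication_coefficients(2, 2, 2)` returns -3, -6, 4, i.e. \(\theta _2(2x;0,2)=-3-6\theta _1(x;0,2)+4\theta _2(x;0,2)\), so negative coefficients do appear once \(a>1\).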

Proof of Theorem 8

Let \(0\le a\le 1\) and \(\beta >0\). The nonnegativity of the coefficients \(L^{(\beta )}_k(m,n,a,1-a)\) follows by induction (on m and n) from the nonnegativity of \(D_k(n,a,\beta )=L^{(\beta )}_k(0,n,a,1-a)\), \(D_k(m,1-a,\beta )=L^{(\beta )}_k(m,0,a,1-a)\) and the recurrence relation (18). \(\square \)

Proposition 10

The linearization coefficients \(L_k^{(\alpha )}(m,n,a,b)\) of the linearization formula (14) satisfy the mixed recurrence equation

Proof

Differentiate both sides of (14) and multiply the result by \(x^2\) so that the structure relation (16) can be applied. Rewrite the output with the help of (14) and use the three-term recurrence equation [17, Eq. (9.13.3)]

Equating the coefficients of \(y_k(x;\alpha )\) leads to the result. \(\square \)

Remark 6 may explain why some researchers are interested in the case \(b=1-a\). The special case \(\alpha =\lambda =\mu =0\) and \(\beta =\delta =\gamma =2\) leads to the Bessel polynomials \(q_n(x)\) defined in (9), and Eq. (11) becomes (see [4])

with

For \(b=1-a\) with \(0\le a \le 1\) and \(\beta >0\), we have \(L^{(\beta )}_k(m,n,a,1-a)\ge 0\) by Theorem 8. Taking \(\beta =2\) in the relation \(L_k(m,n,a,1-a) =\frac{2^{n+m}n!m!(2k)!}{(2n)!(2m)!2^kk!} L^{(2)}_k(m,n,a,1-a)\), we deduce that \(L_k(m,n,a,1-a)\ge 0\) for \(0\le a \le 1\) and \(L_k(m,n,a,1-a) = 0\) for \(k<\min (m,n)\); these are the results obtained by Berg and Vignat [5]. Equation (20) is therefore a generalization of their linearization formula to all \(a>0\) and \(b>0\), and Theorem 8 extends their nonnegativity result to all \(\beta >0\).
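The conversion factor between \(L_k\) and \(L^{(2)}_k\) reflects the normalization of \(q_n\): the relation above is exactly what one obtains if \(q_n(x)=\theta _n(x;0,2)/\theta _n(0;0,2)\), where \(\theta _n(0;0,2)=(2n)!/(n!\,2^n)\). Assuming this normalization for (9), together with \(y_n(x;\alpha )={}_2F_0(-n,n+\alpha +1;-;-x/2)\) and \(\theta _n(x;\alpha ,\beta )=x^ny_n(2(\beta x)^{-1};\alpha )\), the Python sketch below computes both sets of coefficients for \(b=1-a\) and checks the relation. Helper names are hypothetical.

```python
from fractions import Fraction
from math import factorial


def poch(a, n):
    """Pochhammer symbol (a)_n."""
    result = Fraction(1)
    for i in range(n):
        result *= a + i
    return result


def theta(n, alpha, beta):
    """Coefficients (lowest degree first) of theta_n(x; alpha, beta) = x^n y_n(2/(beta x); alpha)."""
    alpha, s = Fraction(alpha), Fraction(2) / Fraction(beta)
    c = [poch(-n, k) * poch(n + alpha + 1, k) / factorial(k) * Fraction(-1, 2) ** k
         for k in range(n + 1)]
    return [c[n - j] * s ** (n - j) for j in range(n + 1)]


def q_poly(n):
    """Assumed normalization: q_n(x) = theta_n(x; 0, 2) / theta_n(0; 0, 2), so that q_n(0) = 1."""
    t = theta(n, 0, 2)
    return [c / t[0] for c in t]


def expand_in_basis(poly, basis):
    """Coefficients L with poly = sum_k L[k] basis[k]; basis[k] has exact degree k."""
    rem = [Fraction(c) for c in poly]
    coeffs = [Fraction(0)] * len(rem)
    for k in range(len(rem) - 1, -1, -1):
        coeffs[k] = rem[k] / basis[k][k]
        for j in range(k + 1):
            rem[j] -= coeffs[k] * basis[k][j]
    return coeffs


def linearization(m, n, a, family):
    """L_k with p_m(a x) p_n((1-a) x) = sum_k L_k p_k(x), where family(k) gives p_k."""
    a = Fraction(a)
    b = 1 - a
    basis = [family(k) for k in range(m + n + 1)]
    p = [c * a ** i for i, c in enumerate(basis[m])]
    q = [c * b ** i for i, c in enumerate(basis[n])]
    prod = [Fraction(0)] * (m + n + 1)
    for i, pi in enumerate(p):
        for j, qj in enumerate(q):
            prod[i + j] += pi * qj
    return expand_in_basis(prod, basis)


def conversion_factor(m, n, k):
    """2^(n+m) n! m! (2k)! / ((2n)! (2m)! 2^k k!), the factor relating L_k and L_k^(2)."""
    return Fraction(2 ** (n + m) * factorial(n) * factorial(m) * factorial(2 * k),
                    factorial(2 * n) * factorial(2 * m) * 2 ** k * factorial(k))
```

For example, `q_poly(2)` gives 1, 1, 1/3, i.e. \(q_2(x)=1+x+x^2/3\) under this normalization.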

Berg and Vignat [5] wrote that they “were unable to derive the explicit expression of the linearization coefficients \(L_k(m,n,a,1-a)\)” of the polynomial family \((q_n(x))_n\) in (20). Nevertheless, using a recursion formula for \(L_k(m,n,a,1-a)\) (see [5, Lemma 3.6], [3, Eq. (5)])

valid for \(k=0,1,\ldots ,m+n-1\) with \(L_0(m,n,a,1-a)=0\) and \(L_{n+m}(m,n,a,1-a)=a^n(1-a)^m\), they proved that these coefficients are nonnegative for \(0\le a\le 1\) and that \(L_k(m,n,a,1-a) = 0\) if \(k<\min (m,n)\). Using this nonnegativity, they deduced that the distribution of a finite convex combination of independent Student t-random variables with arbitrary odd degrees of freedom has a density which is a finite convex combination of certain Student t-densities with odd degrees of freedom. Atia and Zeng [3] were the first to derive a double sum formula for \(L_k(m,n,a,1-a)\), which they simplified to the single sum

Note that the explicit expression of \(L_k(m,n,a,b)\) in (20) generalizes the result by Atia and Zeng; in the case \(b=1-a\), it reduces to

By direct computation, we get as in [3, p. 216]

Further computations suggested that (23) is equivalent to (22), and this can be justified as follows. From the relation \( L_k(m,n,a,1-a) =\frac{2^{n+m}n!m!(2k)!}{(2n)!(2m)!2^kk!} L^{(2)}_k(m,n,a,1-a)\) and the recurrence relation (18), it follows that \(L_k(m,n,a,1-a)\) satisfies the recurrence relation (21). Since (22) and (23) satisfy the same recurrence equation (21) with the same initial conditions, they are equivalent.

A natural question arises at this point: is it also possible to reduce the linearization coefficients \(L^{(\beta )}_k(m,n,a,1-a)\) in (13), given as a double sum, to a single sum? In this direction, we obtain

with \(L_{n+m}(m,n,a,1-a)\), \(L_{n+m-1}(m,n,a,1-a)\), \(L_{n+m-2}(m,n,a,1-a)\) given, respectively, by (24)–(26). It follows from (11) that

with

The latter expression and Eq. (22) lead to

from which we deduce for \(b=1-a\)

which is the single sum expression of \(L^{(\beta )}_{k}(m,n,a,1-a)\).

In the recent paper [4], Benabdallah and Atia derived the linearization formula (20) and expressed the linearization coefficients \(L_k(m,n,a,b)\) as a triple sum, from which they deduced the following positivity result.

Corollary 11

(see [4]) For \(0\le k\le n+m\) and \(a+b\ne 0\), the linearization coefficients \(L_k(m,n,a,b)\) of (20) are positive if and only if \(0\le a\le a+b\le 1\).

From the above corollary and Relation (28), we deduce that

Corollary 12

For \(0\le k\le n+m\), \(a+b\ne 0\) and \(\beta >0\), the linearization coefficients \(L^{(\beta )}_{k}(m,n,a,b)\) of the linearization formula (27) are positive if and only if \(0\le a\le a+b\le 1\).
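Corollary 12 can be spot-checked on small cases in both directions: nonnegativity on a grid with \(a,b\ge 0\) and \(0<a+b\le 1\), and a negative coefficient when \(a+b>1\). For \(m=n=1\), a hand computation gives \(L^{(\beta )}_0(1,1,a,b)=(2/\beta )^2(1-a-b)\), which is negative as soon as \(a+b>1\). The Python sketch assumes \(y_n(x;\alpha )={}_2F_0(-n,n+\alpha +1;-;-x/2)\), \(\theta _n(x;\alpha ,\beta )=x^ny_n(2(\beta x)^{-1};\alpha )\) and the ordering \(\theta _m(ax)\theta _n(bx)\) for the product in (27); it is a numerical check, not a proof, and the helper names are hypothetical.

```python
from fractions import Fraction
from math import factorial


def poch(a, n):
    """Pochhammer symbol (a)_n."""
    result = Fraction(1)
    for i in range(n):
        result *= a + i
    return result


def theta(n, alpha, beta):
    """Coefficients (lowest degree first) of theta_n(x; alpha, beta) = x^n y_n(2/(beta x); alpha)."""
    alpha, s = Fraction(alpha), Fraction(2) / Fraction(beta)
    c = [poch(-n, k) * poch(n + alpha + 1, k) / factorial(k) * Fraction(-1, 2) ** k
         for k in range(n + 1)]
    return [c[n - j] * s ** (n - j) for j in range(n + 1)]


def expand_in_basis(poly, basis):
    """Coefficients L with poly = sum_k L[k] basis[k]; basis[k] has exact degree k."""
    rem = [Fraction(c) for c in poly]
    coeffs = [Fraction(0)] * len(rem)
    for k in range(len(rem) - 1, -1, -1):
        coeffs[k] = rem[k] / basis[k][k]
        for j in range(k + 1):
            rem[j] -= coeffs[k] * basis[k][j]
    return coeffs


def linearization_coefficients(m, n, a, b, beta):
    """L_k with theta_m(a x; 0, beta) theta_n(b x; 0, beta) = sum_k L_k theta_k(x; 0, beta)."""
    a, b = Fraction(a), Fraction(b)
    basis = [theta(k, 0, beta) for k in range(m + n + 1)]
    p = [c * a ** i for i, c in enumerate(basis[m])]  # theta_m(a x)
    q = [c * b ** i for i, c in enumerate(basis[n])]  # theta_n(b x)
    prod = [Fraction(0)] * (m + n + 1)
    for i, pi in enumerate(p):
        for j, qj in enumerate(q):
            prod[i + j] += pi * qj
    return expand_in_basis(prod, basis)
```

For example, `linearization_coefficients(1, 1, Fraction(1, 2), Fraction(3, 4), 2)` starts with \(L_0=-1/4\), in line with the formula above.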

References

Area, I., Godoy, E., Ronveaux, A., Zarzo, A.: Solving connection and linearization problems within the Askey scheme and its \(q\)-analogue via inversion formulas. J. Comput. Appl. Math. 133, 151–162 (2001)

Askey, R., Gasper, G.: Convolution structures for Laguerre polynomials. J. Anal. Math. 31, 48–68 (1977)

Atia, M.J., Zeng, J.: An explicit formula for the linearization coefficients of Bessel polynomials. Ramanujan J. 28, 211–221 (2012)

Benabdallah, M., Atia, M.J.: Positivity and recursion formula of the linearization coefficients of Bessel polynomials. Asian–Eur. J. Math. 11, 1–13 (2018). https://doi.org/10.1142/S1793557118500808

Berg, C., Vignat, C.: Linearization coefficients of Bessel polynomials and properties of student \(t\)-distributions. Constr. Approx. 27, 15–32 (2008)

Carlitz, L.: A note on the Bessel polynomials. Duke Math. J. 24, 151–162 (1957)

Dickinson, D.: On Lommel and Bessel polynomials. Proc. Am. Math. Soc. 5, 946 (1954)

Doha, E.H., Ahmed, H.M.: Recurrences and explicit formulae for the expansion and connection coefficients in series of Bessel polynomials. J. Phys. A 37, 8045–8063 (2004)

Even, S., Gillis, J.: Derangements and Laguerre polynomials. Math. Proc. Camb. Philos. Soc. 79, 135–143 (1976)

Favard, J.: Sur les polynômes de Tchebychev. C. R. Acad. Sci. (Paris) 200, 2052–2053 (1935)

Grosswald, E.: The Student \(t\)-distribution of any degree of freedom is infinitely divisible. Z. Wahrsch. Verw. Gebiete 36, 103–109 (1976)

Grosswald, E.: Bessel Polynomials. Lecture Notes in Mathematics, vol. 698. Springer, New York (1978)

Ismail, M.E.H.: Classical and Quantum Orthogonal Polynomials in One Variable. Encyclopedia Math. Appl. vol. 98, Cambridge University Press, Cambridge (2005)

Kim, D., Zeng, J.: A combinatorial formula for the linearization coefficients of general Sheffer polynomials. Eur. J. Comb. 22, 313–332 (2001)

Koepf, W., Schmersau, D.: Representations of orthogonal polynomials. J. Comput. Appl. Math. 90, 57–94 (1998)

Koepf, W.: Hypergeometric Summation. An Algorithmic Approach to Summation and Special Function Identities, 2nd edn. Springer, London (2014)

Koekoek, R., Lesky, P.A., Swarttouw, R.F.: Hypergeometric Orthogonal Polynomials and Their \(q\)-Analogues. Springer, Berlin (2010)

Koornwinder, T.: Positivity proofs for linearization and connection coefficients of orthogonal polynomials satisfying an addition formula. J. Lond. Math. Soc. 18, 101–114 (1978)

Krall, H.L., Frink, O.: A new class of orthogonal polynomials: The Bessel polynomials. Trans. Am. Math. Soc. 65, 100–115 (1949)

Lewanowicz, S.: The hypergeometric functions approach to the connection problem for the classical orthogonal polynomials. Technical Report, Institute of Computer Science, University of Wrocław (2003)

Petkovšek, M., Wilf, H.S., Zeilberger, D.: \(A = B\). A. K. Peters, Wellesley (1996)

Prudnikov, A.P., Brychkov, Yu.A., Marichev, O.I.: Integrals and Series, vol. 3. Gordon and Breach Science Publishers, New York (1990)

Rainville, E.D.: Special Functions. The Macmillan Company, New York (1960)

Sánchez-Ruiz, J., Dehesa, J.S.: Expansions in series of orthogonal hypergeometric polynomials. J. Comput. Appl. Math. 89, 155–170 (1997)

Tcheutia, D.D., Foupouagnigni, M., Koepf, W., Njionou Sadjang, P.: Coefficients of multiplication formulas for classical orthogonal polynomials. Ramanujan J. 39, 497–531 (2016)

Watson, G.N.: A Treatise on the Theory of Bessel Functions, 2nd edn. Cambridge University Press, Cambridge (1966)

Zarzo, A., Area, I., Godoy, E., Ronveaux, A.: Results for some inversion problems for classical continuous and discrete orthogonal polynomials. J. Phys. A 30, 35–40 (1997)

Acknowledgements

The author is grateful to the referee for useful comments, which considerably improved this manuscript.


Additional information

This work was supported by the Institute of Mathematics of the University of Kassel, to which I am very grateful.


About this article

Cite this article

Tcheutia, D.D. Nonnegative linearization coefficients of the generalized Bessel polynomials. Ramanujan J 48, 217–231 (2019). https://doi.org/10.1007/s11139-018-0006-y
