Abstract

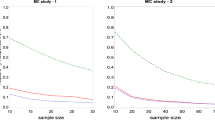

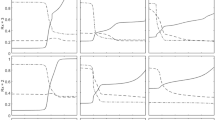

Linear principal component analysis (PCA) can be extended to nonlinear PCA by using artificial neural networks. However, the benefit of curved components requires careful control of the model complexity. Moreover, standard techniques for model selection, including cross-validation and, more generally, the use of an independent test set, fail when applied to nonlinear PCA because of its inherently unsupervised nature. This paper presents a new approach for validating the complexity of nonlinear PCA models by using the error in missing data estimation as a criterion for model selection. It is motivated by the idea that only the model of optimal complexity is able to predict missing values with the highest accuracy. While standard test set validation usually favours over-fitted nonlinear PCA models, the proposed model validation approach correctly selects the optimal model complexity.
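The idea of using missing data estimation error as a model selection criterion can be illustrated with a small toy sketch. It is not the paper's neural network implementation: as a stand-in, the curved component is a polynomial curve of a single latent variable, the complexity parameter is the polynomial degree, and projection onto the curve is done by grid search. All names (`GRID`, `design`, `project`, `fit_curve`, `missing_data_error`), the synthetic data, and the candidate degrees are assumptions made for illustration only.

```python
import numpy as np

GRID = np.linspace(-1.0, 1.0, 201)  # candidate latent values (assumed range)

def design(z, degree):
    """Polynomial features [1, z, ..., z**degree] of the latent variable."""
    return np.vander(z, degree + 1, increasing=True)

def project(X, mask, W, degree):
    """Per sample, pick the grid value whose curve point best matches the
    observed coordinates (mask == True); other coordinates are ignored."""
    curve = design(GRID, degree) @ W              # shape (G, D)
    diff = X[:, None, :] - curve[None, :, :]      # shape (N, G, D)
    diff = np.where(mask[:, None, :], diff, 0.0)
    return GRID[(diff ** 2).sum(axis=2).argmin(axis=1)]

def fit_curve(X, degree, n_iter=30):
    """Alternate between a least-squares fit of the curve and re-projection."""
    Xc = X - X.mean(axis=0)
    _, _, vt = np.linalg.svd(Xc, full_matrices=False)
    z = Xc @ vt[0]                                # start from linear PCA scores
    z = z / np.abs(z).max()                       # scale into the grid range
    full = np.ones(X.shape, dtype=bool)
    for _ in range(n_iter):
        W, *_ = np.linalg.lstsq(design(z, degree), X, rcond=None)
        z = project(X, full, W, degree)
    return W

def missing_data_error(X_val, W, degree):
    """Hide each coordinate in turn, estimate it from the remaining ones,
    and return the mean squared estimation error."""
    n, d = X_val.shape
    total = 0.0
    for j in range(d):
        mask = np.ones((n, d), dtype=bool)
        mask[:, j] = False                        # coordinate j is "missing"
        z = project(X_val, mask, W, degree)
        pred = design(z, degree) @ W
        total += ((pred[:, j] - X_val[:, j]) ** 2).sum()
    return total / (n * d)

# Synthetic data (an assumption): a noisy parabola-like curve in 3-D.
rng = np.random.default_rng(0)
t = rng.uniform(-1.0, 1.0, size=300)
X = np.column_stack([t, t ** 2, 0.5 * t]) + 0.05 * rng.normal(size=(300, 3))
X_train, X_val = X[:200], X[200:]

errors = {}
for degree in range(1, 6):                        # candidate complexities
    W = fit_curve(X_train, degree)
    errors[degree] = missing_data_error(X_val, W, degree)

best = min(errors, key=errors.get)                # lowest missing-data error
print(errors, best)
```

In this sketch a model that is too simple (a straight line) cannot reconstruct the hidden coordinate of the curved data, so its missing-data error is large. Whether overly flexible degrees are penalised as clearly here as in the paper's neural network setting depends on the data and noise level.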

Cite this article

Scholz, M. Validation of Nonlinear PCA. Neural Process Lett 36, 21–30 (2012). https://doi.org/10.1007/s11063-012-9220-6

DOI: https://doi.org/10.1007/s11063-012-9220-6