Abstract

Multi-source image fusion has become an active research topic in recent years because it can raise detection rates. To improve the accuracy of pig-body multi-feature detection, a multi-source image fusion method was adopted in this work. However, traditional multi-source image fusion methods do not provide sufficient contrast and detail in the fused image. To better detect the shape and temperature features of the pig-body, a novel infrared and visible image fusion method in the non-subsampled contourlet transform (NSCT) domain, named NSCT-GF-IAG, was proposed. In this method, the visible and infrared images were first decomposed into a series of multi-scale and multi-directional sub-bands using NSCT. Then, to better represent fine-scale texture information and coarse-scale detail information, a Gabor filter with even symmetry and an improved average gradient (IAG) were employed to fuse the low-frequency and high-frequency sub-bands, respectively. Next, the fused coefficients were reconstructed into the final fused image by inverse NSCT. Finally, the shape feature of the pig-body was obtained by automatic threshold segmentation and optimized by morphological processing, and the highest temperature was extracted based on the shape segmentation of the pig-body. Experimental results showed that the proposed fusion method for multi-feature detection achieved an average segmentation rate 2.175–5.129% higher than the prevailing conventional methods. The proposed method also improved efficiency in terms of running time.

1 Introduction

Universal laws of life have been identified through the study of the phenotypic features of common model animals, such as zebrafish [10, 18], mice [26], and rats [32]. Traditional model animals have contributed to the understanding of cell and tissue functions and of the basic mechanisms of life. Phenotypic feature analysis of model animals is therefore an effective way to understand human physiology and pathology. However, small model animals differ from humans in their way of life and do not meet the needs of research on major human diseases. The study of multi-feature representation methods for large model animals has therefore become an important research area. In this regard, this study addresses the detection of pig-body shape and temperature features for breeding purposes.

The current pig-body shape segmentation systems capture pig-bodies in a controlled environment as part of the body shape segmentation process. It is possible to control the illumination, background, and quality of images. Under these conditions, the performance of pig-body shape segmentation algorithms is greatly enhanced. However, there is still a need for more robust and efficient pig-body shape segmentation algorithms to address challenges such as variations in illumination, pose, etc.

With the development of imaging technology, a pig-body segmentation method based on visible images (VI) was proposed and achieved high accuracy [15]. However, it cannot effectively segment the pig-body in low light, especially the tail and legs, as shown in Fig. 1e, g. Because hidden targets can be found in infrared (IR) images in dim environments, pig-body segmentation from IR images has been achieved following the approach used for bull-body segmentation [34]. However, it cannot detect the ears effectively, as shown in Fig. 1b, d, f, h. Under different environments, the segmentation results for pig-body parts such as the ears, legs, and tail differ between VI and IR images, as shown in Fig. 1. IR and VI image fusion can therefore provide comprehensive and useful information by integrating complementary information and removing redundancy in the multi-source images [2, 42]. Moreover, the temperature feature of the pig-body can be extracted effectively from more accurate pig-body shape segmentation results together with the infrared pig-body images.

The rest of this paper is organized as follows: related works are introduced in Section 2, materials and methods are presented in Section 3, experimental results and discussion are provided in Section 4, and the conclusions and future work are given in Section 5.

2 Related works

2.1 Pig-body segmentation

Because of the distinctive shape and structure of the pig-body, several methods have been devised to segment it. To accurately recognize the size and features of pigs’ bodies, Shen and Luo [33] proposed a segmentation system based on visual image analysis. To automatically segment the three-dimensional structure of a pig-body, Font-I-Furnols et al. [7] proposed a pig-body reconstruction system based on 3D visual imaging. However, the results of segmentation methods based on visible images vary with environmental changes such as illumination, which makes it hard to obtain good pig-body segmentation results. Building on the advantages of infrared imaging [34], several multi-source image fusion methods have been proposed for target segmentation [6, 27, 45]. Their experimental results indicate that methods based on visible and infrared image fusion achieve better segmentation.

2.2 Multi-source image fusion

Traditional multi-focus image fusion algorithms are usually divided into two categories: spatial domain methods and transform domain methods. Spatial domain fusion methods, such as principal component analysis (PCA) [14] and intensity hue saturation (IHS) [17], provide a fused image with lower contrast. Because the human visual system is sensitive to image features at various scales [4], multi-scale transformations are effective for fusing the information of multi-focus images; examples include the discrete wavelet transform (DWT) [44], discrete cosine transform (DCT) [13, 37], contourlet transform (CT) [8], and shearlet transform (ST) [35]. However, there are two drawbacks. First, these transforms cannot fully represent the curves and edges of multi-source images. Second, ST and CT are not shift-invariant and easily cause the Gibbs phenomenon in the fused image because of their sampling operators. More recently, learning-based fusion methods, such as the convolutional neural network (CNN) [21] and the generative adversarial network (GAN) [25], have been proposed to improve the adaptability of the algorithms. However, they are time-consuming to train and have high hardware requirements.

To address the above issues and improve the pig-body segmentation rate, a novel multi-source image fusion method based on Gabor features and IAG, named NSCT-GF-IAG, is proposed for pig-body shape segmentation and temperature detection. In this method, a pig-body multi-feature detection model segments the shape feature by fusing VI and IR images using a Gabor energy map and the improved average gradient (IAG) in the NSCT domain. The fused images are then segmented by an automatic threshold method and morphological processing. Next, the temperature of the pig-bodies is detected based on the segmentation results. The experimental results show that the proposed fusion method performs better in pig-body shape segmentation and provides a higher accuracy rate on a homemade database. The flowchart of the proposed method is shown in Fig. 2.

Generally, the advantages of the proposed method are summarized as follows:

(1) In the low-frequency sub-bands, to construct a new significance measure, the local energy features of the multi-source pig-body images are extracted by even-symmetric Gabor filters with different parameters for each source. An effective fusion rule for the low-frequency sub-bands is then proposed based on this new significance measure for detecting the pig-body.

(2) In the high-frequency sub-bands, to better represent large-scale texture and edge information, the high-frequency energy features of the pig-body images are extracted by the improved average gradient. The maximum strategy is then adopted for fusing the high-frequency sub-bands.

(3) The proposed pig-body segmentation framework achieves a better segmentation rate than other prevailing methods and improves the detection rate of pig-body shape and temperature.

3 Materials and methods

The goal of this work was to improve the segmentation rate of pig-body images under different illumination conditions. For this purpose, an effective method was proposed based on a Gabor filter with even symmetry and the improved average gradient in the NSCT domain. The algorithm was divided into four major phases. First, the IR and VI images were decomposed into a series of multi-scale and multi-directional sub-bands by NSCT. Next, the proposed fusion rules were adopted to fuse the low-frequency and high-frequency coefficients. Then, the fused pig-body images were obtained by inverse NSCT for the different situations. Finally, binary images were produced by automatic threshold segmentation and morphological operations, and the temperature features were obtained based on the segmentation results.

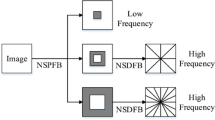

3.1 Non-subsampled contourlet transform

Because NSCT is shift-invariant, the IR and VI images were decomposed into multi-scale and multi-directional sub-bands using the non-subsampled pyramid filter bank (NSPFB) and the non-subsampled directional filter bank (NSDFB). First, the multi-scale sub-bands were obtained by a pyramid filter bank (PFB) without up-sampling or down-sampling, so that one low-frequency and one high-frequency sub-band were generated at each decomposition level. Non-subsampled pyramid (NSP) decomposition was achieved by iteratively decomposing the low-frequency sub-band. For k decomposition levels, NSP produced k + 1 sub-bands, each the same size as the original image. Then, the high-frequency sub-band at each level was decomposed by the NSDFB with l levels of directional decomposition, which generated \( 2^l \) directional sub-bands containing richer directional information. The NSCT decomposition process is shown in Fig. 3.

Here, \( H_0(z) \) and \( H_1(z) \) are the low-pass and high-pass filters of the first step, \( H_0(z^2) \) and \( H_1(z^2) \) are the low-pass and high-pass filters of the second step, and k = 3.
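The sub-band bookkeeping described above can be checked with a few lines of Python. This is only a counting sketch, not an NSCT implementation: the function name `nsct_subband_counts` and the per-level direction settings `[2, 3, 4]` are illustrative assumptions, and only k = 3 comes from the text.

```python
def nsct_subband_counts(direction_levels):
    """Count NSCT sub-bands for a given decomposition setting.

    direction_levels[j] is the number of NSDFB levels l applied to the
    high-frequency sub-band of pyramid level j (hypothetical parameter).
    """
    k = len(direction_levels)                  # number of pyramid levels
    pyramid_subbands = k + 1                   # 1 low-frequency + k high-frequency
    directional = [2 ** l for l in direction_levels]  # 2^l directions per level
    return pyramid_subbands, directional


# k = 3 pyramid levels with an assumed 2, 3 and 4 directional levels.
print(nsct_subband_counts([2, 3, 4]))  # (4, [4, 8, 16])
```

Because no sub-sampling is applied, every one of these sub-bands keeps the size of the input image, so the memory cost grows with the total number of directional sub-bands.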

3.2 The fusion rule of low-frequency sub-bands

To enhance the curve structure and fine-scale texture information [38], the low-frequency sub-bands were described by a Gabor filter with even symmetry. The magnitude features at eight orientations (0°, 22.5°, 45°, 67.5°, 90°, 112.5°, 135°, and 157.5°) [39] were extracted through the even-symmetric Gabor filter and are denoted by \( M_i \). The even-symmetric, multi-orientation Gabor filter is formulated as

Where \( f_i \) is the center frequency of the ith orientation, \( \theta_i \) is the angle of the ith orientation, i = 1, 2, …, 8, and σ and γ are the scale of the Gabor filter and the aspect ratio of its envelope, respectively. Because the curve structures of the IR and VI images differ, different parameters were adopted for the two sources: \( \sigma_{IR} = 3 \) and \( \sigma_{VI} = 6 \). I(x, y) represents the IR or VI image, and \( \mathrm{Re}(G_i) \) is the real part of \( G_i \).
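For orientation, a widely used complex Gabor form that matches the symbols defined above can be written as follows. It is an assumed formulation rather than one confirmed by the source; the rotated coordinates \( x_{\theta_i}, y_{\theta_i} \) and the convolution operator \( * \) are notation introduced here.

\[ G_i(x,y)=\frac{\gamma}{2\pi\sigma^2}\exp\left(-\frac{x_{\theta_i}^2+\gamma^2 y_{\theta_i}^2}{2\sigma^2}\right)\exp\left(\mathrm{j}2\pi f_i x_{\theta_i}\right) \]

\[ x_{\theta_i}=x\cos\theta_i+y\sin\theta_i,\qquad y_{\theta_i}=-x\sin\theta_i+y\cos\theta_i \]

\[ M_i(x,y)=I(x,y)*\mathrm{Re}\left(G_i(x,y)\right) \]

Under this form, the real part is the Gaussian envelope multiplied by \( \cos(2\pi f_i x_{\theta_i}) \), which is what makes the filter even-symmetric.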

Then, the Gabor energy feature was obtained by choosing the maximum of all directions in the m × m local window and the formula is shown as follows:

Where i is the orientation index, MAX(·) is the maximum energy over all orientations, E(·) is the Gabor energy feature, and m = 7.

Finally, to better describe the fine-scale features of the pig-body in the low-frequency sub-bands, the maximum fusion rule was adopted based on the local Gabor energy feature.

Where \( {E}_L^{IR} \) and \( {E}_L^{VI} \) are the Gabor energy maps of the IR and VI low-frequency sub-bands, respectively, and LFF represents the fused low-frequency sub-band.
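The low-frequency rule can be sketched in standard-library Python as below. This is a minimal illustration under stated assumptions, not the authors' MATLAB code: the center frequency `freq=0.1`, the aspect ratio `gamma=1.0`, the kernel half-width `int(2 * sigma)`, zero padding at the borders, and all function names are hypothetical. Only the eight orientations, m = 7, \( \sigma_{IR} = 3 \) and \( \sigma_{VI} = 6 \) come from the text.

```python
import math


def even_gabor_kernel(sigma, theta, freq, gamma=1.0):
    """Real (even-symmetric) part of a Gabor kernel, as a list of rows."""
    half = int(2 * sigma)  # assumed truncation of the kernel support
    kernel = []
    for y in range(-half, half + 1):
        row = []
        for x in range(-half, half + 1):
            xr = x * math.cos(theta) + y * math.sin(theta)
            yr = -x * math.sin(theta) + y * math.cos(theta)
            envelope = math.exp(-(xr ** 2 + (gamma * yr) ** 2) / (2 * sigma ** 2))
            row.append(gamma / (2 * math.pi * sigma ** 2)
                       * envelope * math.cos(2 * math.pi * freq * xr))
        kernel.append(row)
    return kernel


def filter2d(img, kernel):
    """Same-size 2-D filtering with zero padding."""
    h, w, half = len(img), len(img[0]), len(kernel) // 2
    out = [[0.0] * w for _ in range(h)]
    for r in range(h):
        for c in range(w):
            acc = 0.0
            for dy in range(max(-half, -r), min(half, h - 1 - r) + 1):
                for dx in range(max(-half, -c), min(half, w - 1 - c) + 1):
                    acc += img[r + dy][c + dx] * kernel[dy + half][dx + half]
            out[r][c] = acc
    return out


def gabor_energy(img, sigma, freq=0.1, n_orient=8, m=7):
    """Maximum response over all orientations inside an m x m window."""
    h, w = len(img), len(img[0])
    peak = [[0.0] * w for _ in range(h)]
    for i in range(n_orient):  # angles 0, 22.5, ..., 157.5 degrees
        resp = filter2d(img, even_gabor_kernel(sigma, i * math.pi / n_orient, freq))
        for r in range(h):
            for c in range(w):
                peak[r][c] = max(peak[r][c], abs(resp[r][c]))
    k = m // 2
    return [[max(peak[rr][cc]
                 for rr in range(max(0, r - k), min(h, r + k + 1))
                 for cc in range(max(0, c - k), min(w, c + k + 1)))
             for c in range(w)] for r in range(h)]


def fuse_low(low_ir, low_vi, sigma_ir=3.0, sigma_vi=6.0):
    """Keep, per pixel, the coefficient whose Gabor energy is larger."""
    e_ir, e_vi = gabor_energy(low_ir, sigma_ir), gabor_energy(low_vi, sigma_vi)
    return [[a if ea >= ev else b for a, b, ea, ev in zip(ra, rb, rea, rev)]
            for ra, rb, rea, rev in zip(low_ir, low_vi, e_ir, e_vi)]
```

The pure-Python loops keep the sketch dependency-free but are slow; on real sub-bands an array library would be the natural choice. Ties are resolved in favor of the IR coefficient, which is also an arbitrary choice.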

3.3 The fusion rule of high-frequency sub-bands

Previously, the average gradient fusion rule represented better coarse-scale textures of high-frequency sub-bands [8]. However, the diagonal gradients were ignored in the fusion rule. In this work, an improved average gradient (IAG) was adopted to enhance the representation of coarse-scale details in high-frequency coefficients fusion. For this purpose, the average gradient of region R was computed first, and formulated as follows:

Where \( \Delta I_x \) is the derivative of I(x, y) in the X direction, \( \Delta I_y \) is the derivative of I(x, y) in the Y direction, \( \Delta I_d \) is the derivative of I(x, y) in the diagonal direction, and \( \Delta I_b \) is the derivative of I(x, y) in the back-diagonal direction. The size of region R is M × N.
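One plausible form of the IAG that is consistent with the four directional derivatives listed above is given below. The equal weighting of the four terms and the \( 1/(M\times N) \) normalization are assumptions; the source may weight or normalize differently.

\[ G=\frac{1}{M\times N}\sum_{(x,y)\in R}\sqrt{\frac{\Delta I_x^2+\Delta I_y^2+\Delta I_d^2+\Delta I_b^2}{4}} \]

Dropping the two diagonal terms and dividing by 2 instead of 4 recovers the conventional average gradient, which shows how the diagonal information extends the earlier rule.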

Next, the fusion rule of high-frequency sub-bands was determined by choosing the maximum based on IAG.

Where, \( {G}_H^{IR} \) and \( {G}_H^{VI} \) are IAG of IR and VI high-frequency sub-bands, respectively. HFF is the high-frequency fused sub-bands.
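The high-frequency rule can be illustrated with the following standard-library Python sketch. It assumes forward differences for the four directional derivatives, equal weighting of the four terms, a 3 × 3 region R, and replicated borders; none of these choices is stated in the text, and the function names are hypothetical.

```python
import math


def iag_map(band, radius=1):
    """Per-pixel improved average gradient over a (2*radius+1)^2 region."""
    h, w = len(band), len(band[0])

    def px(r, c):
        # Replicate the border so differences are defined everywhere.
        return band[min(max(r, 0), h - 1)][min(max(c, 0), w - 1)]

    grad = [[0.0] * w for _ in range(h)]
    for r in range(h):
        for c in range(w):
            dx = px(r, c + 1) - px(r, c)        # X direction
            dy = px(r + 1, c) - px(r, c)        # Y direction
            dd = px(r + 1, c + 1) - px(r, c)    # diagonal direction
            db = px(r + 1, c - 1) - px(r, c)    # back-diagonal direction
            grad[r][c] = math.sqrt((dx * dx + dy * dy + dd * dd + db * db) / 4.0)

    out = [[0.0] * w for _ in range(h)]
    for r in range(h):
        for c in range(w):
            vals = [grad[rr][cc]
                    for rr in range(max(0, r - radius), min(h, r + radius + 1))
                    for cc in range(max(0, c - radius), min(w, c + radius + 1))]
            out[r][c] = sum(vals) / len(vals)   # average over region R
    return out


def fuse_high(high_ir, high_vi):
    """Keep, per pixel, the coefficient whose IAG is larger."""
    g_ir, g_vi = iag_map(high_ir), iag_map(high_vi)
    return [[a if ga >= gv else b for a, b, ga, gv in zip(ra, rb, rga, rgv)]
            for ra, rb, rga, rgv in zip(high_ir, high_vi, g_ir, g_vi)]
```

In a full pipeline this function would be applied to each pair of directional sub-bands at each scale before the inverse transform.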

3.4 Image reconstruction and binarization

To reconstruct the pig-body image, the inverse non-subsampled contourlet transform (INSCT) was used to obtain the final fused image. Then, the binary pig-body images were obtained by automatic threshold segmentation. To remove holes and noise, morphological operations were adopted to optimize the binary pig-body image. Finally, the highest temperature of the pig-body was detected based on the segmentation results.

4 Experimental results and discussion

The proposed detection framework was implemented in MATLAB R2014a on a standard desktop PC equipped with an Intel(R) Core(TM) i5–6200 CPU @2.3GHz and 4GB RAM. To analyze the performance of the proposed pig-body multi-feature detection method, a homemade database was built using a FLIR C2 camera. A schematic diagram of the multi-source image pairs is shown in Fig. 4, in which each row shows visible pig-body images under a different illumination condition. In the detailed experimental results that follow, one pair of multi-source images was selected for each illumination condition, giving four pairs in total, to test the performance of the proposed method under illumination variation.

4.1 Objective evaluation

In this study, seven objective metrics were adopted to evaluate the fusion and detection results, which were average gradient (AG) [1], information entropy (IE) [23], standard deviation (SD) [24], spatial frequency (SF) [16], average pixel intensity (API) [31], similarity of structure information measure (SSIM) [22] and Accuracy (Acc).

AG was used to measure the sharpness of the fused image and defined as:

Where M and N are the numbers of rows and columns in the fused image, respectively.
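For reference, a widely used form of the average gradient that is consistent with M and N as defined above is shown below. The \( (M-1)(N-1) \) normalization is the common convention and is assumed here rather than taken from the source.

\[ AG=\frac{1}{(M-1)(N-1)}\sum_{i=1}^{M-1}\sum_{j=1}^{N-1}\sqrt{\frac{\left(I(i+1,j)-I(i,j)\right)^2+\left(I(i,j+1)-I(i,j)\right)^2}{2}} \]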

IE was used to measure the abundance of information in the fused image and defined as:

Where \( {P}_i=\frac{N_i}{N} \), Ni is the number of pixels whose value is i, and N is the number of total pixels.
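With \( P_i \) defined this way, the information entropy takes the usual Shannon form below. The number of gray levels L (256 for an 8-bit image) is a symbol introduced here, and terms with \( P_i = 0 \) are taken to contribute zero.

\[ IE=-\sum_{i=0}^{L-1}P_i\log_2 P_i \]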

SD was used to measure the contrast of the fused image and defined as:

Where, \( \overline{I\left(i,j\right)} \) is the average of the fused image.
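The standard deviation of an M × N fused image is conventionally written as below, using the mean \( \overline{I\left(i,j\right)} \) defined above. The population form with \( 1/(M\times N) \) is assumed; some implementations divide by \( M\times N-1 \) instead.

\[ SD=\sqrt{\frac{1}{M\times N}\sum_{i=1}^{M}\sum_{j=1}^{N}\left(I(i,j)-\overline{I\left(i,j\right)}\right)^2} \]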

SF was used to measure the overall structural information in the fused image and defined as:

\[ SF=\sqrt{RF^2+CF^2} \]

Where \( RF=\sqrt{\frac{1}{M\times N}\sum \limits_{i=1}^M\sum \limits_{j=2}^N{\left(I\left(i,j\right)-I\left(i,j-1\right)\right)}^2} \) is the row frequency and \( CF=\sqrt{\frac{1}{M\times N}\sum \limits_{i=2}^M\sum \limits_{j=1}^N{\left(I\left(i,j\right)-I\left(i-1,j\right)\right)}^2} \) is the column frequency.

API was used to measure the average brightness of the fused image and defined as:

Where I(i, j) is the intensity of the pixel at (i, j), and M and N are the numbers of rows and columns in the fused image, respectively.
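As a worked illustration, the following standard-library Python sketch computes API, SD, IE, and SF for a small gray image. It assumes the conventional definitions: API as the mean of all pixel intensities, SD as the population standard deviation, IE as the Shannon entropy of the gray-level histogram, and SF as the root of the summed squared row and column frequencies. The function name and the 2 × 2 test image are hypothetical.

```python
import math


def fusion_metrics(img, levels=256):
    """Return API, SD, IE and SF for a gray image given as a list of rows."""
    m, n = len(img), len(img[0])
    pixels = [v for row in img for v in row]

    api = sum(pixels) / (m * n)                                  # mean intensity
    sd = math.sqrt(sum((v - api) ** 2 for v in pixels) / (m * n))

    hist = [0] * levels
    for v in pixels:
        hist[int(v)] += 1
    ie = -sum((c / (m * n)) * math.log2(c / (m * n)) for c in hist if c)

    # Row and column frequencies use horizontal and vertical differences.
    rf2 = sum((img[i][j] - img[i][j - 1]) ** 2
              for i in range(m) for j in range(1, n)) / (m * n)
    cf2 = sum((img[i][j] - img[i - 1][j]) ** 2
              for i in range(1, m) for j in range(n)) / (m * n)
    sf = math.sqrt(rf2 + cf2)

    return {"API": api, "SD": sd, "IE": ie, "SF": sf}


# A two-level vertical edge: half the pixels are 0 and half are 255.
print(fusion_metrics([[0, 255], [0, 255]]))
```

For this image the two gray levels are equally likely, so the entropy is exactly 1 bit, and all of the spatial frequency comes from the row direction.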

SSIM was used to measure the similarity of image structure information and defined as:

Where A and B are the multi-source images, \( \mu_x \) and \( \sigma_x \) are the mean and variance of a multi-source image, \( \mu_f \) and \( \sigma_f \) are the mean and variance of the fused image, and \( \sigma_{xf} \) is the covariance between the multi-source image and the fused image.

Acc was used to measure the detection rate of the fused image and defined as:

Where TP and TN are true positive and true negative respectively, FP and FN are false positive and false negative respectively.
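With these four counts, accuracy takes its usual form:

\[ Acc=\frac{TP+TN}{TP+TN+FP+FN} \]

The short Python sketch below evaluates it for a pair of binary masks. It assumes that accuracy is counted pixel by pixel against a ground-truth mask, which the text does not state explicitly; the function name is hypothetical.

```python
def segmentation_accuracy(pred, truth):
    """Pixel-wise accuracy between two binary masks (lists of rows)."""
    tp = tn = fp = fn = 0
    for prow, trow in zip(pred, truth):
        for p, t in zip(prow, trow):
            if p and t:
                tp += 1          # body pixel correctly detected
            elif not p and not t:
                tn += 1          # background correctly rejected
            elif p:
                fp += 1          # background labelled as body
            else:
                fn += 1          # body pixel missed
    return (tp + tn) / (tp + tn + fp + fn)


print(segmentation_accuracy([[1, 1], [0, 0]], [[1, 0], [0, 1]]))  # 0.5
```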

4.2 Fusion performance of the proposed model

To verify the performance of the proposed fusion method, four pairs of multi-source pig-body images were selected under different situations, as shown in Fig. 5a-b. The corresponding fused images produced by the proposed method are shown in Fig. 5c. By fusing the features of the VI and IR images, the fused pig-body images remained visually close to the VI images. The visual effects of the VI, IR, and fused images were also verified by computing AG, API, IE, SD, and SF; the results are shown in Table 1. Furthermore, the comparative results for IE and SD are shown in Fig. 5d-e, which indicate that the proposed fusion model obtained higher information entropy and standard deviation. This reflects an improvement in the information content and contrast of the fused images.

4.3 Fusion results of the proposed detection model

The performance of the proposed multi-source image fusion method, which was further used to extract pig-body regions, was evaluated through several experiments with pig-body images. To verify the performance of the fusion method, four VI and IR pig-body image pairs under different illumination were considered, as shown in Fig. 6a-b. The proposed fusion method was compared with nine existing methods, namely discrete wavelet transform (DWT) [3, 30], discrete cosine transform (DCT) [19, 29], contourlet transform (CT) [28, 41], shearlet transform (ST) [9, 20], NSCT [5, 43], NSCT-PCNN [36, 40], NSST [11, 12], CNN [21], and GAN [25]. The main difference between the proposed method and the other methods lies in the fusion rules used for the low-frequency and high-frequency sub-bands, as shown in Table 2. The final fused images produced by the different fusion methods in different situations are shown in Fig. 6c-l. The figure shows that the proposed method has advantages in visual effect: especially in dimmer situations, it clearly represents the pig-body shape feature.

Furthermore, it was also superior to the other nine image fusion methods in the objective evaluations, as shown in Table 3. To compare the performance of the different multi-source fusion methods intuitively, the comparative results are presented as scatter diagrams in Fig. 7. These results also indicate that, because of the better description provided by the Gabor filter with even symmetry and the IAG, the proposed method improved both the visual effect and the objective evaluation in different situations, especially dimmer ones.

To test the performance of the proposed fusion model on the homemade database, the comparative results are presented as line charts in Fig. 8. The x-axis of Fig. 8 represents the index of the multi-source image pairs of breeding pigs. The figure shows that the proposed method yields the best fused image quality among the 10 fusion methods on the homemade database in different situations. Moreover, the running times of the various transform-domain fusion methods are shown in Table 4. Compared with NSCT-PCNN, NSCT, and NSST, the proposed fusion method consumed less running time. Because the training processes of CNN and GAN take a long time, these methods are not advantageous in running time.

4.4 Pig-body segmentation results

To verify the performance of the proposed pig-body segmentation model, 24 pig-body image pairs were processed with the proposed fusion method and the other existing fusion methods mentioned above. After all 240 fused images had been obtained with the 10 fusion methods, the shape features of the pig-bodies were extracted by the automatic threshold method. To optimize the segmentation results, morphological operations were used to remove holes and noise. To assess the segmentation results on 4 image pairs in different situations, the VI and IR pig-body images under different illumination were segmented, as shown in Fig. 9a-b, respectively. The binarized fused images for the different situations and fusion methods are shown in Fig. 9c-l. The extracted binary images in Fig. 9 reveal that the proposed pig-body segmentation model segments the pig-body regions more completely, especially the ears, legs, and tail, in dimmer situations.

The proposed pig-body detection method achieved higher segmentation accuracy than all the other fusion methods considered, as shown in Table 5. According to Table 5, the average accuracy of the proposed segmentation was 94.452%, which is 2.175–5.129% higher than that of the other methods. To further demonstrate the segmentation performance of the proposed method, the results are presented as line charts in Fig. 10. The x-axis of Fig. 10 represents the index of the multi-source image pairs of breeding pigs. The figure shows that the proposed detection method achieves the highest segmentation rate among the 10 methods.

4.5 Pig-body temperature detection results

To eliminate the influence of ambient temperature on pig-body temperature detection, the highest temperature of the pig-body was computed from the pig-body shape segmentation and the pixels of the infrared pig-body image. First, the shape segmentation results were normalized and multiplied by the infrared pig-body image. Then, because each pixel value in the infrared image was the temperature at that pixel, the highest temperature was extracted by pixel-by-pixel comparison. Finally, the positions of the reference temperature in the different situations were obtained with FLIR Tools, as shown in Fig. 11. To assess the health of the pigs in the homemade database, the highest temperatures of the pig-bodies are plotted as line charts in Fig. 12. The figure shows that the highest temperatures of all pigs in the homemade database fall within the normal temperature range.
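The masking and pixel-by-pixel comparison described above can be sketched as follows. The function name and the small temperature grid are hypothetical, and the sketch skips background pixels directly instead of multiplying by the normalized mask; the two are equivalent when body temperatures are positive.

```python
def highest_body_temperature(mask, temp_image):
    """Return (max_temp, (row, col)) over pixels where mask is 1, else None."""
    best = None
    for r, (mrow, trow) in enumerate(zip(mask, temp_image)):
        for c, (m, t) in enumerate(zip(mrow, trow)):
            # Only pixels inside the segmented pig-body are compared.
            if m and (best is None or t > best[0]):
                best = (t, (r, c))
    return best


mask = [[0, 1],
        [1, 0]]
temps = [[45.0, 38.2],    # 45.0 and 50.0 lie outside the body mask
         [39.1, 50.0]]
print(highest_body_temperature(mask, temps))  # (39.1, (1, 0))
```

Restricting the search to the mask is what keeps hot background objects from being reported as the body temperature.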

5 Conclusion and future works

A novel infrared and visible image fusion method for pig-body detection under different illumination, named NSCT-GF-IAG, was proposed. First, NSCT was adopted to decompose the multi-source images into a series of multi-scale and multi-directional sub-bands. Then, a Gabor filter with even symmetry and IAG were used to fuse the low-frequency and high-frequency sub-bands, respectively. Next, the fused coefficients were reconstructed into the final fused pig-body image by inverse NSCT. Finally, the binary images of the pig-body were produced by the automatic threshold method and morphological operations, and the highest temperature of the pig-body was obtained based on the segmentation results. The experimental results show that the proposed detection method achieved an average detection rate of 94.452% on pig-body images in four different situations, which is 2.175–5.129% higher than the other methods considered. It lays a foundation for accurately measuring the maximum temperature of the pig-body.

This work mainly focused on the problem of pig-body shape and temperature detection under different situations. Some parameters of the proposed algorithm were not selected automatically for optimal fusion performance. Future studies should focus on the automatic selection of algorithm parameters, which could replace the current process of determining parameters from empirical values and experimentation. Moreover, the running time of the proposed algorithm needs to be further reduced.

References

Bai XZ (2015) Infrared and visual image fusion through feature extraction by morphological sequential toggle operator. Infrared Phys Technol 71:77–86

Bai X, Zhou F, Xue B (2011) Fusion of infrared and visual images through region extraction by using multiscale center-surround top-hat transform. Opt Express 19(9):8444–8457

Balakrishnan S, Cacciola M, Udpa L, Rao BP, Jayakumar T, Raj B (2012) Development of image fusion methodology using discrete wavelet transform for eddy current images. Ndt & E International 51(10):51–57

Bhatnagar G, Wu QMJ, Liu Z (2015) A new contrast based multimodal medical image fusion framework. Neurocomputing 157:143–152

Chen Y, Sang N (2015) Attention-based hierarchical fusion of visible and infrared images. Optik - International Journal for Light and Electron Optics 126(23):4243–4248

Fendri E, Boukhriss RR, Hammami M (2017) Fusion of thermal infrared and visible spectra for robust moving object detection. Pattern Analysis & Applications 20(10):1–20

Font-I-Furnols M, Carabús A, Pomar C, Gispert M (2015) Estimation of carcass composition and cut composition from computed tomography images of live growing pigs of different genotypes. Animal 9(01):166–178

He LI, Lei L, Chao Y, Wei H (2016) An improved fusion algorithm for infrared and visible images based on multi-scale transform. Semiconductor Optoelectronics 74:28–37

Hong Z, Chen Z, Yan X, Chen H (2012) Visible and infrared image fusion algorithm based on shearlet transform. Chinese Journal of Scientific Instrument 33(7):1613–1619

Howe K, Clark MD, Torroja CF, Torrance J, Stemple DL (2013) Corrigendum: the zebrafish reference genome sequence and its relationship to the human genome. Nature 496(7446):498–503

Huang Z, Ding M, Zhang X (2017) Medical image fusion based on non-subsampled shearlet transform and spiking cortical model. Journal of Medical Imaging & Health Informatics 7(1):229–234

Huang Y, Bi D, Wu D (2018) Infrared and visible image fusion based on different constraints in the non-subsampled shearlet transform domain. Sensors 18(4):1169

Jin X, Jiang Q, Yao S, Zhou D, Nie R, Lee SJ et al (2017) Infrared and visual image fusion method based on discrete cosine transform and local spatial frequency in discrete stationary wavelet transform domain. Infrared Physics & Technology:88

Kadar I (1998) Pixel-level image fusion: the case of image sequences. Proceedings of SPIE - The International Society for Optical Engineering 3374:378–388

Kashisha MA, Bahr C, Ott S, Moons C, Niewold TA, Tuyttens F et al (2013) Automatic monitoring of pig activity using image analysis. Springer International Publishing, Advanced Concepts for Intelligent Vision Systems

Kong WW, Lei Y, Ren MM (2016) Fusion method for infrared and visible images based on improved quantum theory model. Neurocomputing 212:12–21

Leung Y, Liu J, Zhang J (2013) An improved adaptive intensity–hue–saturation method for the fusion of remote sensing images. IEEE Geoscience & Remote Sensing Letters 11(5):985–989

Li M, Zhao L, Pagemccaw PS, Chen W (2016) Zebrafish genome engineering using the crispr – cas9 system. Science 8(11):2281–2308

Liu C, Jin L, Tao H, Li G, Zhuang Z, Zhang Y (2014a) Multi-focus image fusion based on spatial frequency in discrete cosine transform domain. IEEE Signal Processing Letters 22(2):220–224

Liu X, Zhou Y, Wang J (2014b) Image fusion based on shearlet transform and regional features. AEUE - International Journal of Electronics and Communications 68(6):471–477

Liu Y, Chen X, Peng H, Wang Z (2017) Multi-focus image fusion with a deep convolutional neural network. Information Fusion 36:191–207

Ma K, Zeng K, Wang Z (2015) Perceptual quality assessment for multiexposure image fusion. IEEE Trans Image Process 24(11):3345–3356

Ma Y, Chen J, Chen C, Fan F, Ma JY (2016a) Infrared and visible image fusion using total variation model. Neurocomputing 202:12–19

Ma JY, Chen C, Li C, Huang J (2016b) Infrared and visible image fusion via gradient transfer and total variation minimization. Information Fusion 31:100–109

Ma JY, Yu W, Liang PW, Li C, Jiang JJ (2018) FusionGAN: a generative adversarial network for infrared and visible image fusion. Information Fusion:S1566253518301143

Mcculloch JETA (1961) A direct measurement of the radiation sensitivity of normal mouse bone marrow cells. Radiat Res 14(2):213–222

Meng F, Song M, Guo B, Shi R, Shan D (2016) Image fusion based on object region detection and non-subsampled contourlet transform. Computers & Electrical Engineering:S0045790616303044

Moghadam FV, Shahdoosti HR (2017) A new multifocus image fusion method using contourlet transform

Paramanandham N, Rajendiran K (2017) Infrared and visible image fusion using discrete cosine transform and swarm intelligence for surveillance applications. Infrared Physics & Technology:88

Pu T, Ni G (2000) Contrast-based image fusion using the discrete wavelet transform. Opt Eng 39(8):2075–2082

Qian JX, Jiang SW, Yao et al (2018) Infrared and visual image fusion method based on discrete cosine transform and local spatial frequency in discrete stationary wavelet transform domain. Infrared Physics & Technology

Séverine R, Tesson L, Séverine M, Usal C, Cian AD, Thepenier V (2014) Efficient gene targeting by homology-directed repair in rat zygotes using tale nucleases. Genome Res 24(8):1371–1383

Shen G, Luo Z (2011) On the research of pig individual identification and automatic weight collecting system. International Conference on Digital Manufacturing & Automation, IEEE

Stajnko D, Brus M, Hočevar M (2008) Estimation of bull live weight through thermographically measured body dimensions. Comput Electron Agric 61(2):233–240

Wang L, Li B, Tian LF (2014) Eggdd: an explicit dependency model for multi-modal medical image fusion in shift-invariant shearlet transform domain. Information Fusion 19(11):29–37

Xiang T, Yan L, Gao R (2015) A fusion algorithm for infrared and visible images based on adaptive dual-channel unit-linking pcnn in nsct domain. Infrared Phys Technol 69:53–61

Xie K (2005) Multifocus image fusion based on discrete cosine transform. Computer Engineering & Applications

Yang J, Yang J (2009) Multi-channel Gabor filter design for finger-vein image enhancement. Fifth International Conference on Image and Graphics, IEEE Computer Society, pp 87–91

Yang J, Shi Y, Yang J (2011) Personal identification based on finger-vein features. Comput Hum Behav 27(5):1565–1570

Yang G, Ikuta C, Zhang S, Uwate Y, Nishio Y, Lu Z (2017) A novel image fusion algorithm using an NSCT and a PCNN with digital filtering. International Journal of Image & Data Fusion:1–13

Yang S, Wang X, Zhen LI, Zhao W, Yang L (2018) Research on fingerprint image reconstruction based on contourlet transform. Technology Innovation & Application

Zambottivillela L, Yamasaki SC, Villarroel JS, Alponti RF, Silveira PF (2014) Novel fusion method for visible light and infrared images based on nsst-sf-pcnn. Infrared Phys Technol 65(7):103–112

Zhang Q, Guo BL (2009) Multifocus image fusion using the nonsubsampled contourlet transform. Signal Process 89(7):1334–1346

Zheng Y (2005) Advanced discrete wavelet transform fusion algorithm and its optimization by using the metric of image quality index. Opt Eng 44(3):037003

Zijun F, Xiaoling Z, Huijie Z (2012) Fusion scheme of infrared and visible images for moving object detection. Computer Engineering & Applications 48(7):9–11

Acknowledgements

The authors would like to thank their colleagues for their support of this work. The detailed comments from the anonymous reviewers are gratefully acknowledged. This work was supported by the Key Research and Development Project of Shandong Province (Grant No. 2019GNC106091) and the National Key Research and Development Program (Grant No. 2016YFD0200600-2016YFD0200602).

Cite this article

Zhong, Z., Gao, W., Khattak, A.M. et al. A novel multi-source image fusion method for pig-body multi-feature detection in NSCT domain. Multimed Tools Appl 79, 26225–26244 (2020). https://doi.org/10.1007/s11042-020-09044-9

DOI: https://doi.org/10.1007/s11042-020-09044-9