Abstract

This paper concerns a short-update primal-dual interior-point method for linear optimization based on a new search direction. We apply a vector-valued function generated by a univariate function on the nonlinear equation of the system which defines the central path. The common way to obtain the equivalent form of the central path is using the square root function. In this paper we consider a new function formed by the difference of the identity map and the square root function. We apply Newton’s method in order to get the new directions. In spite of the fact that the analysis is more difficult in this case, we prove that the complexity of the algorithm is identical with the one of the best known methods for linear optimization.

Similar content being viewed by others

Avoid common mistakes on your manuscript.

1 Introduction

The area of linear optimization (LO) has become very active since Karmarkar’s [27] first interior-point method (IPM) was published on this subject. Later on, a large amount of papers appeared and the most important results were summarized in the monographs written by Roos, Terlaky and Vial [46], Wright [57] and Ye [58]. It turned out that IPMs are more efficient in practice than pivot algorithms in the case of large sparse problems. Illés and Terlaky [26] presented a comparison between the IPMs and pivot methods from practical and theoretical point of view. Nowadays, state-of-the-art implementations of interior-point solvers are available. Lustig et al. [34, 35] as well as Mehrotra [38] made important contributions to this field. Some significant aspects of implementations techniques were presented by Andersen et al. [7], by Mészáros [39] and by Gondzio and Terlaky [18], respectively.

Recently, the algorithms proposed for solving LO problems have been extended to more general optimization problems such as semidefinite optimization (SDO), second-order cone optimization (SOCO), symmetric optimization (SO) and linear complementarity problems (LCPs). Nesterov and Nemirovskii [41] introduced the concept of self-concordant barrier functions in order to define IPMs for solving convex optimization problems. Klerk [30] proposed a general framework for solving SDO problems. Beside this, he studied the concept of self-dual embeddings for SDO. Alizadeh and Goldfarb [6] used Euclidean Jordan algebras in order to investigate the theory of SOCO. Schmieta and Alizadeh [47] extended the commutative class of primal-dual IPMs for SDO proposed by Monteiro and Zhang [40] to SO. Furthermore, Vieira [50] presented different IPMs for SO based on kernel functions.

Some key contributions to the field of LCPs are summarized in the monograph of Cottle et al. [12]. Kojima et al. [31] presented a unified approach to IPMs for LCPs. Kojima, Mizuno and Yoshise [33] proposed an IPM for a class of LCPs with positive semidefinite matrices. Kojima, Megiddo and Ye [32] introduced a new potential reduction algorithm for solving LCPs. Potra and Sheng [44, 45] defined a predictor-corrector algorithm and a large step infeasible IPM for the \(P_*(\kappa )\)-matrix LCPs. Moreover, Illés and Nagy [22] and Potra [43] devised Mizuno-Todd-Ye type algorithms for LCPs. It turned out that LCPs have important applications in the field of economics. One of these is the Arrow-Debreu market equilibrium model. Ye [59] showed that some market equilibrium models are equivalent to LCPs that are not necessarily sufficient. Therefore, Illés, Nagy and Terlaky [23–25] analysed the question of solvability of general LCPs.

It emerged that specifying a proper search direction plays a key role in the analysis of the IPMs. In [42] Peng, Roos and Terlaky introduced the notion of self-regular barriers and they determined new large-update IPMs with better iteration bounds than the long step interior-point algorithms studied before. The first algorithm which works in a wide neighbourhood and enjoys the same complexity as the best known short-update IPMs was presented by Ai and Zhang [5] for monotone LCPs.

In 2002 Darvay introduced a new technique for finding search directions for LO problems [13, 15]. The new technique is based on an equivalent transformation on the centering equations of the central path. The new search directions are obtained by applying Newton’s method to the resulting system. Infeasible IPMs for LO based on this technique were proposed in [4, 17]. In 2006, Achache generalized this method for convex quadratic programming [2]. Many variations of the technique appeared for LCPs. Achache [1] and Wang et al. [55, 56] generalized the weighted-path-following method introduced in [14] to LCPs, monotone mixed LCPs and monotone horizontal LCPs, respectively. Achache [3], Asadi and Mansouri [8, 9], Mansouri and Pirhaji [36] and Kheirfam [29] presented numerical results on LCPs based on this technique. Wang and Bai [52] and Mansouri et al. [37] extended this approach to SDO problems. Furthermore, Bai, Wang and Luo proposed a new method for convex quadratic SDO [11]. Bai, Sun and Chen extended this algorithm to semidefinite LCPs [10]. Wang and Bai presented new full Nesterov-Todd step primal-dual algorithms for SOCO [53] and for SO [54]. Kheirfam introduced an infeasible IPM for SO in [28]. Wang defined a new polynomial interior-point algorithm for the monotone LCPs over symmetric cones with full Nesterov-Todd step [51].

In the above mentioned papers generally the square root function is used to obtain an equivalent form of the central path. The idea of using a new function, namely the difference of the identity and the square root function was raised in [16]. In this paper we analyse the complexity of the algorithm proposed in [16] and we prove its polynomiality. The paper is organized in the following way. In the second section the LO problem and the central path are presented. Section 3 contains the method used for the determination of the search direction. In the fourth section the new primal-dual algorithm is introduced, which is based on the new search direction. The fifth section is about the complexity analysis of the algorithm, where it is proved that the method solves the problem in polynomial time. The sixth section contains some numerical results. Finally, some conclusions are enumerated.

We present some notations used throughout the paper. Let x and s be two n-dimensional vectors. Then, xs denotes the componentwise product of the vectors x and s, i.e. \( x s=[x_1 s_1, x_2 s_2, \ldots , x_n s_n]^T.\) We also use the notation \(\frac{x}{s}=\left[ \frac{x_1}{s_1}, \frac{x_2}{s_2},\ldots ,\frac{x_n}{s_n}\right] ^T\), where \(s_i \ne 0\) for all \(1 \le i \le n\). For an arbitrary univariate function f and a vector x, if each component of x belongs to the domain of f, then we define \(f( x)=\left[ f(x_1), f(x_2), \ldots , f(x_n)\right] ^T\). Thus, if \(x \ge 0\), then \(\sqrt{x}\) is the vector obtained by taking square roots of the components of x. Furthermore, \(e= [1, 1, \ldots , 1]^T\) denotes the n-dimensional all-one vector and let \(\mathbb {R}^+ = \{ x\in \mathbb {R} \mid x\ge 0\}\). Moreover, diag(x) is a diagonal matrix, which contains on his main diagonal the elements of x in the original order. Beside these, \(\Vert x \Vert \) denotes the Euclidean norm, \(\Vert x \Vert _\infty \) the Chebyshev norm, \(\Vert x \Vert _1\) the 1-norm, and \(\min (x)\) the minimal component of x. Finally, if \(f(t) \ge 0\) and \(g(t) \ge 0\) are real valued functions, then \(f(t) = O( g(t) )\) means that there exists a positive constant \(\gamma \) so that \(f(t) \le \gamma g(t)\).

2 The LO problem

In this section we introduce the LO problem. Let us consider the following problem:

where \(A \in \mathbb {R}^{m \times n}\) with \(rank(A)=m\), \(b \in \mathbb {R}^m\) and \(c \in \mathbb {R}^n\). The dual of this problem can be written in the following form:

We suppose that the interior-point condition (IPC) holds for both problems, which means that there exists \(( x^0, y^0, s^0)\) such that

Using the self-dual embedding model presented by Ye, Todd and Mizuno [60] and Terlaky [49] we conclude that the IPC can be assumed without loss of generality. In this case the all-one vector can be considered as a starting point.

The optimal solution of the primal-dual pair can be given with the following system of equations:

The first two equations of system (2.1) are named feasibility conditions and the last one is called complementarity condition. In IPMs we replace the complementary condition by a parameterized equation. Hence, we obtain the following system of equations:

If the IPC holds, then for a fixed \(\mu >0\) system (2.2) has unique solution, which is called the \(\mu \) -center or analytic center (Sonnevend [48]). The set of \(\mu \)-centers formes an analytic curve [19, 20], which is called central path. If \(\mu \) tends to zero, then the central path converges to the optimal solution of the problem.

Detailed analysis of the existence and uniqueness of the central path and some important concepts such as the IPC and self-dual embedding can be found in Chapter 2 of [46] and in Chapters 1 and 2 of [21].

3 Search directions

Using [15] we define a method for finding search directions for IPMs. Let us consider the continuously differentiable function \(\varphi :\mathbb {R}^+ \rightarrow \mathbb {R}^+.\) Assume that the inverse function \(\varphi ^{-1}\) exists. Observe that system (2.2) can be written in the following equivalent form:

and applying Newton’s method yields new search directions. Let

and assume that we have \(A x= b\), and \(A^T y+ s= c\) for a triple ( x, y, s) such that \( x> 0\) and \( s> 0\). We get

Let

Then,

and

Let us introduce the \(\bar{A}=\frac{1}{\mu }Adiag(\frac{ x}{ v})\) notation. Thus, system (3.2) can be written in the form

where

For different \(\varphi \) functions we obtain different values for the \(p_v\) vector that lead to new search directions. An alternative approach for determining the \(p_v\) vector could be using self-regular barriers [42]. Let us consider \(p_v\) as a function of v. In all the cases studied in the literature this function is defined on the whole positive orthant. However, if the points generated by the algorithm are perfectly centered, then we have the \(v=e\) equality. Therefore, our goal is to analyse a case where the domain of the above mentioned function is a restriction of the positive orthant, but it contains the e vector. That is why we propose a new \(\varphi \) function, namely \(\varphi (t)=t-\sqrt{t}\), which gives a new search direction.

4 A new primal-dual algorithm

This section presents a new form of the primal-dual interior-point algorithm based on the corresponding search directions, using the function \(\varphi (t)=t-\sqrt{t}\). In this case

It should be mentioned that the components of the vector \(p_v\) are defined on the \(\left( \frac{1}{2}, +\infty \right) \) interval. We can give a proximity measure to the central path as follows:

Let

Considering (3.5) we have \( d_x^T d_s=0\). Thus, the vectors \( d_x\) and \( d_s\) are orthogonal, which implies

We mention that as an effect of this relation we can also express the proximity measure using \(q_v\), thus

Moreover,

and we have

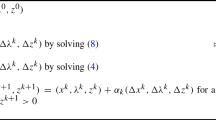

Thus, the algorithm can be defined as in Fig. 1.

The following section is meant to prove the polynomiality of the algorithm.

5 Convergence analysis

The first lemma will investigate the feasibility of the full-Newton step. We prove that feasibility is accomplished if a condition on the proximity measure holds. Let \( x_+= x+\Delta x\) and \( s_+= s+\Delta s\) be the vectors obtained after a full-Newton step.

Lemma 5.1

Let \(\delta =\delta ( x, s; \mu )<1\) and assume that \(v>\frac{1}{2}e\). Then,

Thus, we conclude that the full-Newton step is strictly feasible.

Proof

For each \(0\le \alpha \le 1\) denote \( x_+(\alpha )= x+\alpha \Delta x\) and \( s_+(\alpha )= s+\alpha \Delta s\). Therefore,

From (3.5) and (4.2) it follows that

Moreover, from (4.1) we obtain

and for this reason we get

Using \(v>\frac{1}{2}e\) we obtain

Thus,

The inequality \( x_+(\alpha ) s_+(\alpha )> 0\) holds if

We have

Thus, we obtain that for each \(0\le \alpha \le 1\) the \( x_+(\alpha ) s_+(\alpha )> 0\) inequality holds, which means that the linear functions of \(\alpha \), \( x_+(\alpha )\) and \( s_+(\alpha )\) do not change sign on the interval [0, 1]. Consequently, \( x_+(0)= x> 0\) and \( s_+(0)= s> 0\) yields \( x_+(1)= x_+> 0\) and \( s_+(1)= s_+> 0\). \(\square \)

The following lemma will be used in the next part of the analysis.

Lemma 5.2

Let \(f{:}D \rightarrow \left( 0, +\infty \right) \) be a decreasing function, where \(D=[d,+\infty ]\), \(d>0\). Furthermore, let us consider the vector \(v \in \mathbb {R}_+^{n}\) such that \(\min (v)>d\). Then,

Proof

We have

\(\square \)

The second lemma is meant to analyse the conditions under which the Newton process is quadratically convergent.

Lemma 5.3

Suppose that \(\delta =\delta ( x, s; \mu )<\frac{1}{2}\) and \(v> \frac{1}{2}e\). Then, \(v_+>\frac{1}{2}e\) and

In addition,

which means that the full-Newton step ensures local quadratic convergence of the proximity measure.

Proof

We know from Lemma 5.1 that \( x_+> 0\) and \( s_+> 0\). Considering the notation

and the substitution \(\alpha =1\) in (5.3), after some reductions we obtain

From the condition \(v>\frac{1}{2}e\) we get \(v^2+2v-e>0\) and this implies \(v_+^2 \ge e - \frac{q_v^2}{4}\). Consequently,

From \(\delta <\frac{1}{2} \) it follows that \(min(v_+) > \frac{\sqrt{3}}{2}\), hence \(v_+ > \frac{1}{2}e\). Moreover, we have

Let us consider the function \(f(t)=\frac{t}{(2t-1)(1+t)}>0\) for all \(t>\frac{1}{2}\). From \(f'(t)<0\) it follows that f is decreasing, therefore using (5.7) and Lemma 5.2 we obtain

We write f(t) in the following way:

Substituting \(\sqrt{1-\delta ^2}\) and making some elementary reductions we obtain

We have \(1<\frac{t^2+2t-1}{t^2}\le 2\) for all \(t > \frac{1}{2}\) and

Thus,

and using (5.8) and (5.9) we get (5.4), which proves the first part of the lemma. Moreover, we have

Let

From \(\delta < \frac{1}{2}\) it follows that \(1+\sqrt{1-\delta ^2} > \frac{2+\sqrt{3}}{2} \). We get

and

Furthermore, if \(\delta <\frac{1}{2}\), then \(4\delta ^2 < 1\). Thus, \( \frac{1}{3-4\delta ^2} < \frac{1}{2}\) and we obtain

A simple calculus yields

We have \(\frac{1}{1+2\sqrt{1-\delta ^2}} <\frac{\sqrt{3}-1}{2}\) and

From (5.11) and (5.12) we obtain

and this results in (5.5), which proves the lemma. \(\square \)

The next lemma examines what is the effect of the full-Newton step on the duality gap.

Lemma 5.4

Let \(\delta =\delta ( x, s;\mu )\) and suppose that the vectors \( x_+\) and \( s_+\) are obtained using a full-Newton step, thus \( x_+= x+\Delta x\) and \( s_+= s+\Delta s\). We have

and if \(\delta <\frac{1}{2}\), then \(( x_+)^T s_+<\mu \left( n+\frac{1}{4} \right) \).

Proof

Substituting \(\alpha =1\) in (5.1) and using (3.5) we have

Using (4.1) and (5.2) we deduce

Therefore, using the orthogonality of the vectors \(d_x\) and \(d_s\) we obtain

This completes the first part of the lemma. From \(\delta <\frac{1}{2}\) we have \(\delta ^2<\frac{1}{4}\). Using this we obtain

which proves the second statement of the lemma.

In the next lemma we analyse the effect which a Newton step followed by an update of the parameter \(\mu \) has on the proximity measure. Suppose that \(\mu \) is reduced by the factor \((1-\theta )\) in every iteration.

Lemma 5.5

Let \(\delta =\delta ( x, s; \mu )<\frac{1}{2}\), \(v>\frac{1}{2}e\), \(\mu _+=(1-\theta )\mu \), \(v_\sharp =\sqrt{\frac{x_+s_+}{\mu _+}}\) and \(\eta =\sqrt{1-\theta }\), where \(0<\theta <1\). Then \(v_\sharp >\frac{1}{2}e\) and

Moreover, if \(\theta =\frac{1}{27\sqrt{n}}\) and \(n\ge 4\), then we have \(\delta ( x_+, s_+; \mu _+)<\frac{1}{2}\).

Proof

We have \(v_\sharp =\frac{1}{\eta }v_+\). Using Lemma 5.3 we obtain \(v_+>\frac{1}{2}e\), which yields \(v_\sharp >\frac{1}{2}e\). Hence, we get

Let us consider the function \(h(t)=\frac{t}{(2t-\eta )(\eta +t)}\) for all \(t>\frac{\eta }{2}\). Thus, we may write

From \(h'(t)<0\) it follows that h is a decreasing function. Then, similar to the proof of Lemma 5.2 using (5.7) we get

Using \(\delta <\frac{1}{2}\) we have \(\sqrt{1-\delta ^2}>\frac{\sqrt{3}}{2}\) and \(h\left( \sqrt{1-\delta ^2}\right) <h\left( \frac{\sqrt{3}}{2}\right) \). From \(h\left( \frac{\sqrt{3}}{2}\right) =\frac{\sqrt{3}}{-2\eta ^2+\sqrt{3}\eta +3}\), it follows that

Using (5.10) we have

so we may write

Using the function \(g(\eta )=\frac{1}{-2\eta ^3+\sqrt{3}\eta ^2+3\eta }\) for all \(0 < \eta <1\) we have

This implies that g is decreasing on the interval (0, 1). Now suppose that \(n\ge 4\) and \(\theta =\frac{1}{27\sqrt{n}}\). Then, we obtain \(\eta \ge \sqrt{\frac{53}{54}}\). From \(\delta <\frac{1}{2}\) we get

and a simple calculus gives \(\delta ( x_+, s_+; \mu _+)<\frac{1}{2}\). \(\square \)

Lemma 5.5 shows that the algorithm is well defined, more exactly that the conditions \(x > 0\), \(s > 0\), \(\delta ( x, s;\mu )<\frac{1}{2}\) and \(v > \frac{1}{2}e\) hold throughout the algorithm. The following lemma investigates the question of the bound on the number of iterations.

Lemma 5.6

We assume that the pair \(( x^0, s^0)\) is strictly feasible, \(\mu ^0=\frac{\left( x^0 \right) ^T s^0}{n}\) and \(\delta ( x^0, s^0;\mu ^0)<\frac{1}{2}\). Let \( x^k\) and \( s^k\) be the two vectors obtained by the algorithm given in Fig. 1 after k iterations. Then, for

we have \(\left( x^k \right) ^T s^k < \epsilon \).

Proof

From Lemma 5.4 we get

This implies that \(\left( x^k\right) ^T s^k < \epsilon \) holds if

Taking logarithms, we may write

As \(-\log (1-\theta )\ge \theta \) we see that the inequality is valid only if

This proves the lemma. \(\square \)

As the self-dual embedding allows us to assume without loss of generality, that \( x^0= s^0= e\), we have \(\mu ^0=1\). This question leads us to the next lemma.

Theorem 5.7

Suppose that \( x^0= s^0= e\). If we consider the default values for \(\theta \) and \(\tau \) we obtain that the algorithm given in Fig. 1 requires no more than

interior-point iterations. The resulting vectors satisfy \( x^T s < \epsilon \).

6 Numerical results

We implemented the algorithm given in Fig. 1 in the \(C++\) programming language in order to analyse its efficiency for different \(\varphi \) functions. We considered the search directions obtained by using the following three \(\varphi \) functions: the identity, the square root map and the difference between the square root and the identity functions. The algorithm performed only full-Newton steps and the barrier update parameter \(\mu \) was reduced by the factor \(1-\theta \). We used for the update parameter \(\theta \) each constant from the set \(\{0.1,\; 0.2,\; 0.3,\; 0.7,\; 0.8,\; 0.9 \}\). In all cases the accuracy parameter had the value \(\epsilon = 10^{-5}\).

Hereafter, we present three problems for which we tested the different versions of the algorithm. It should be mentioned that for all the problems the starting points \(x^0\), \(y^0\) and \(s^0\) satisfy the following condition: \(\sqrt{\frac{x^0s^0}{\mu ^0}}>\frac{e}{2}\), where \(\mu ^0=\frac{\left( x^0 \right) ^T s^0}{n}\).

6.1 Problem 1

We consider the primal-dual pair (P)–(D), where

The used starting points are the following: \(x^0= \left[ 1,\; 11,\; 4,\; 1,\; 7,\; 11,\; 6 \right] ^T\), \(y^0=\left[ -1,\; 10,\; 8,\; -3 \right] ^T\) and \(s^0= \left[ 7,\; 4,\; 9,\; 7,\; 2,\; 4,\; 5 \right] ^T.\)

In Table 1 we summarize the obtained numbers of iterations for the different search directions and the used update parameters.

6.2 Problem 2

Let

We consider the following starting points: \(x^0= \left[ 1,\; 2,\; 2,\; 1,\; 4,\; 5,\; 2,\; 4,\; 2,\; 4 \right] ^T\), \(y^0=\left[ -9,\; 10,\; -6,\; 5,\; 0,\; 3,\; 10,\; 7 \right] ^T\) and \(s^0= \left[ 8,\; 10,\; 5,\; 4,\; 9,\; 1,\; 4,\; 6,\; 5,\; 1 \right] ^T.\)

Table 2 contains the obtained results that refer to Problem 2.

6.3 Problem 3

Let us consider

We used the following starting points: \(x^0= \left[ 5,\; 9,\; 6,\; 1,\; 5,\; 3,\; 5,\; 2,\; 8 \right] ^T\), \(y^0=\left[ -3,\; 6,\; -3,\; 6,\; 10,\; 6,\; 3 \right] ^T\) and \(s^0= \left[ 4,\; 1,\; 3,\; 5,\; 9,\; 6,\; 5,\; 6,\; 2 \right] ^T.\)

The obtained numbers of iterations for the different cases are presented in Table 3.

It can be observed that for the selected problems the algorithm which uses the proposed search direction gives better results than the two other studied methods. The used problems are small-sized and dense. Therefore, as a further research the effieciency of our algorithm could be analysed for large, sparse problems as well.

7 Conclusion

In this paper we used the method introduced in [15] to obtain a new search direction for IPMs. This approach is based on applying Newton’s method on an equivalent form of the central path (2.2). We used the function \(\varphi (t)=t-\sqrt{t}\) in order to define a new primal-dual IPM. Because of the specific character of this function we had to ensure that in each iteration of the algorithm the components of the vectors in the v-space are greater than \(\frac{1}{2}\). Therefore, the complexity analysis turned out to be more difficult than in the usual case. We proved that this short-update algorithm has the iteration bound \(O(\sqrt{n}\log \frac{n}{\epsilon })\). Moreover, we provided some numerical experiments that demonstrate the efficiency of the proposed algorithm.

References

M. Achache, A weighted-path-following method for the linear complementarity problem. Studia Univ. Babeş-Bolyai. Ser. Informatica 49(1), 61–73 (2004)

M. Achache, A new primal-dual path-following method for convex quadratic programming. Comput. Appl. Math. 25(1), 97–110 (2006)

M. Achache, Complexity analysis and numerical implementation of a short-step primal-dual algorithm for linear complementarity problems. Appl. Math. Comput. 216(7), 1889–1895 (2010)

K. Ahmadi, F. Hasani, B. Kheirfam, A full-Newton step infeasible interior-point algorithm based on Darvay directions for linear optimization. J. Math. Model. Algorithms Oper. Res. 13(2), 191–208 (2014)

W. Ai, S. Zhang, An O\((\sqrt{n} {L})\) iteration primal-dual path-following method, based on wide neighborhoods and large updates, for monotone LCP. SIAM J. Optim. 16(2), 400–417 (2005)

F. Alizadeh, D. Goldfarb, Second-order cone programming. Math. Program. 95(1), 3–51 (2003)

E. Andersen, J. Gondzio, Cs Mészáros, X. Xu, Implementation of interior point methods for large scale linear programs, in Inter. Point Methods Math. Program., ed. by T. Terlaky (Kluwer Academic Publishers, Dordrecht, 1996), pp. 189–252

S. Asadi, H. Mansouri, Polynomial interior-point algorithm for \({P}_*(\kappa )\) horizontal linear complementarity problems. Numer. Algorithms 63(2), 385–398 (2013)

S. Asadi, H. Mansouri, A new full-Newton step \({O}(n)\) infeasible interior-point algorithm for \({P}_*(\kappa )\)-horizontal linear complementarity problems. Comput. Sci. J. Moldova 22(1), 37–61 (2014)

Y.Q. Bai, L.M. Sun, Y. Chen, A new path-following interior-point algorithm for monotone semidefinite linear complementarity problems. Dyn. Contin. Discrete Impuls. Syst., Ser. B: Appl. Algorithms 17, 769–783 (2010)

Y.Q. Bai, F.Y. Wang, X.W. Luo, A polynomial-time interior-point algorithm for convex quadratic semidefinite optimization. RAIRO-Oper. Res. 44(3), 251–265 (2010)

R.W. Cottle, J.S. Pang, R.E. Stone, The Linear Complementarity Problem. Computer Science and Scientific Computing (Academic Press, Boston, 1992)

Zs. Darvay, A new algorithm for solving self-dual linear optimization problems. Studia Univ. Babeş-Bolyai, Ser. Informatica 47(1), 15–26 (2002)

Zs. Darvay, A weighted-path-following method for linear optimization. Studia Univ. Babeş-Bolyai, Ser. Informatica 47(2), 3–12 (2002)

Zs. Darvay, New interior point algorithms in linear programming. Adv. Model. Optim. 5(1), 51–92 (2003)

Zs. Darvay, Á. Felméri, N. Forró, I.-M. Papp, P.-R. Takács, A new interior-point algorithm for solving linear optimization problems, in XVII. FMTU, ed. by E. Bitay (Transylvanian Museum Society, Cluj-Napoca, 2012), pp. 87–90. In Hungarian

Zs. Darvay, I.-M. Papp, P.-R. Takács, An infeasible full-Newton step algorithm for linear optimization with one centering step in major iteration. Studia Univ. Babeş-Bolyai, Ser. Informatica 59(1), 28–45 (2014)

J. Gondzio, T. Terlaky, A computational view of interior point methods for linear programming, in Advances in Linear and Integer Programming, ed. by J. Beasley (Oxford University Press, Oxford, GB, 1995)

M. Halická, Analytical properties of the central path at boundary point in linear programming. Math. Program. 84(2), 335–355 (1999)

M. Halická, Two simple proofs for analyticity of the central path in linear programming. Oper. Res. Lett. 28(1), 9–19 (2001)

T. Illés, Lineáris optimalizálás elmélete és belsőpontos algoritmusai. Operations Research Reports 2014-04, Eötvös Loránd University, Budapest, pp. 1–95 (2014)

T. Illés, M. Nagy, A Mizuno-Todd-Ye type predictor-corrector algorithm for sufficient linear complementarity problems. Eur. J. Oper. Res. 181(3), 1097–1111 (2007)

T. Illés, M. Nagy, T. Terlaky, EP theorem for dual linear complementarity problems. J. Optim. Theory Appl. 140(2), 233–238 (2009)

T. Illés, M. Nagy, T. Terlaky, Polynomial interior point algorithms for general linear complementarity problems. Alg. Oper. Res. 5(1), 1–12 (2010)

T. Illés, M. Nagy, T. Terlaky, A polynomial path-following interior point algorithm for general linear complementarity problems. J. Global. Optim. 47(3), 329–342 (2010)

T. Illés, T. Terlaky, Pivot versus interior point methods: Pros and Cons. Eur. J. Oper. Res. 140, 6–26 (2002)

N. Karmarkar, A new polynomial-time algorithm for linear programming. Combinatorica 4(4), 373–395 (1984)

B. Kheirfam, A new infeasible interior-point method based on Darvay’s technique for symmetric optimization. Ann. Oper. Res. 211(1), 209–224 (2013)

B. Kheirfam, A predictor-corrector interior-point algorithm for \({P}_*(\kappa )\)-horizontal linear complementarity problem. Numer. Algorithms 66(2), 349–361 (2014)

E.D. Klerk, Aspects of Semidefinite Programming: Interior Point Algorithms and Selected Applications (Kluwer Academic Pubishers, Norwell, MA, 2002)

M. Kojima, N. Megiddo, T. Noma, A. Yoshise, A Unified Approach to Interior Point Algorithms for Linear Complementarity Problems, vol. 538, Lecture Notes in Computer Science (Springer, Berlin, 1991)

M. Kojima, N. Megiddo, Y. Ye, An interior point potential reduction algorithm for the linear complementarity problem. Math. Program. 54(1–3), 267–279 (1992)

M. Kojima, S. Mizuno, A. Yoshise, A polynomial-time algorithm for a class of linear complementarity problems. Math. Program. 44(1–3), 1–26 (1989)

I. Lustig, R. Marsten, D. Shanno, On implementing Mehrotra’s predictor-corrector interior-point method for linear programming. SIAM J. Optim. 2(3), 435–449 (1992)

I. Lustig, R. Marsten, D. Shanno, Computational experience with a globally convergent primal-dual predictor-corrector algorithm for linear programming. Math. Program. 66, 123–135 (1994)

H. Mansouri, M. Pirhaji, A polynomial interior-point algorithm for monotone linear complementarity problems. J. Optim. Theory Appl. 157(2), 451–461 (2013)

H. Mansouri, T. Siyavash, M. Zangiabadi, A path-following infeasible interior-point algorithm for semidefinite programming. Iran. J. Oper. Res. 3(1), 11–30 (2012)

S. Mehrotra, On the implementation of a primal-dual interior point method. SIAM J. Optim. 2(4), 575–601 (1992)

C. Mészáros, The efficient implementation of interior point methods for linear programming and their applications. Ph.D. thesis, Eötvös Loránd University of Sciences, Ph.D. School of Operations Research, Applied Mathematics and Statistics, Budapest (1996)

R.D.C. Monteiro, Y. Zhang, A unified analysis for a class of long-step primal-dual path-following interior-point algorithms for semidefinite programming. Math. Program. 81(3), 281–299 (1998)

Y. Nesterov, A. Nemirovskii, Interior Point Polynomial Methods in Convex Programming: Theory and Algorithms, SIAM Studies in Applied Mathematics, vol. 13 (SIAM Publications, Philadelphia, 1994)

J. Peng, C. Roos, T. Terlaky, Self-Regular Functions: A New Paradigm for Primal-Dual Interior-Point Methods (Princeton University Press, Princeton, 2002)

F.A. Potra, The Mizuno-Todd-Ye algorithm in a larger neighborhood of the central path. Eur. J. Oper. Res. 143(2), 257–267 (2002)

F.A. Potra, R. Sheng, Predictor-corrector algorithm for solving \({P}_*(\kappa )\)-matrix LCP from arbitrary positive starting points. Math. Program. 76(1), 223–244 (1996)

F.A. Potra, R. Sheng, A large-step infeasible-interior-point method for the \({P}^*\)-Matrix LCP. SIAM J. Optim. 7(2), 318–335 (1997)

C. Roos, T. Terlaky, J-Ph Vial, Theory and Algorithms for Linear Optimization (Springer, New York, 2005)

S. Schmieta, F. Alizadeh, Extension of primal-dual interior point algorithms to symmetric cones. Math. Program., Ser. A 96(3), 409–438 (2003)

G. Sonnevend, An ”analytic center” for polyhedrons and new classes of global algorithms for linear (smooth, convex) programming. in System Modelling and Optimization: Proceedings of the 12th IFIP-Conference held in Budapest, Hungary, September 1985, Lecture Notes in Control and Information Sciences. ed. by A Prékopa, J Szelezsán, B Strazicky, vol. 84 (Springer, Berlin, 1986) pp. 866–876

T. Terlaky, An easy way to teach interior-point methods. Eur. J. Oper. Res. 130(1), 1–19 (2001)

M.V.C Vieira, Jordan algebraic approach to symmetric optimization. Ph.D. thesis, Electrical Engineering, Mathematics and Computer Science, Delft University of Technology (2007)

G.Q. Wang, A new polynomial interior-point algorithm for the monotone linear complementarity problem over symmetric cones with full NT-steps. Asia-Pac. J. Oper. Res. 29(2), 1250,015 (2012)

G.Q. Wang, Y.Q. Bai, A new primal-dual path-following interior-point algorithm for semidefinite optimization. J. Math. Anal. Appl. 353(1), 339–349 (2009)

G.Q. Wang, Y.Q. Bai, A primal-dual interior-point algorithm for second-order cone optimization with full Nesterov-Todd step. Appl. Math. Comput. 215(3), 1047–1061 (2009)

G.Q. Wang, Y.Q. Bai, A new full Nesterov-Todd step primal-dual path-following interior-point algorithm for symmetric optimization. J. Optim. Theory Appl. 154(3), 966–985 (2012)

G.Q. Wang, Y.J. Yue, X.Z. Cai, Weighted-path-following interior-point algorithm to monotone mixed linear complementarity problem. Fuzzy Inf. Eng. 1(4), 435–445 (2009)

G.Q. Wang, Y.J. Yue, X.Z. Cai, A weighted-path-following method for monotone horizontal linear complementarity problem. in Fuzzy Information and Engineering, Advances in Soft Computing. ed. by B.y. Cao, C.y. Zhang, T.f. Li, vol. 54, (Springer, Berlin, 2009) pp. 479–487

S. Wright, Primal-Dual Interior-Point Methods (SIAM, Philadelphia, 1997)

Y. Ye, Interior Point Algorithms. Theory and Analysis (Wiley, Chichester, 1997)

Y. Ye, A path to the Arrow-Debreu competitive market equilibrium. Math. Program. 111(1–2), 315–348 (2008)

Y. Ye, M. Todd, S. Mizuno, An \(O(\sqrt{n}L)\)-iteration homogeneous and self-dual linear programming algorithm. Math. Oper. Res. 19, 53–67 (1994)

Acknowledgments

The authors gratefully acknowledge the support of the Edutus College (Collegium Talentum) and the Transylvanian Museum Society. The authors are thankful to the editor and the reviewer for their helpful suggestions that improved the presentation of this paper. We also thank Ágnes Felméri and Nóra Forró for their useful comments on the paper. The work of the third author was supported by a grant of the Romanian National Authority for Scientific Research, CNCS-UEFISCDI, project number PN-II-ID-PCE-2011-3-0024.

Author information

Authors and Affiliations

Corresponding author

Rights and permissions

About this article

Cite this article

Darvay, Z., Papp, IM. & Takács, PR. Complexity analysis of a full-Newton step interior-point method for linear optimization. Period Math Hung 73, 27–42 (2016). https://doi.org/10.1007/s10998-016-0119-2

Published:

Issue Date:

DOI: https://doi.org/10.1007/s10998-016-0119-2