Abstract

Given limited funding for school-based science education, non-school-based programs have been developed at colleges and universities to increase the number of students entering science- and health-related careers and address critical workforce needs. However, few evaluations of such programs have been conducted. We report the design and methods of a controlled trial to evaluate the Stanford Medical Youth Science Program’s Summer Residential Program (SRP), a 25-year-old university-based biomedical pipeline program. This 5-year matched cohort study uses an annual survey to assess educational and career outcomes among four cohorts of students who participate in the SRP and a matched comparison group of applicants who were not chosen to participate in the SRP. Matching on sociodemographic and academic background allows control for potential confounding. This design enables the testing of whether the SRP has an independent effect on educational- and career-related outcomes above and beyond the effects of other factors such as gender, ethnicity, socioeconomic background, and pre-intervention academic preparation. The results will help determine which curriculum components contribute most to successful outcomes and which students benefit most. After 4 years of follow-up, the results demonstrate high response rates from SRP participants and the comparison group with completion rates near 90 %, similar response rates by gender and ethnicity, and little attrition with each additional year of follow-up. The design and methods can potentially be replicated to evaluate and improve other biomedical pipeline programs, which are increasingly important for equipping more students for science- and health-related careers.

Background

Recent reports have highlighted a severe lack of preparation for science-related careers among US students, and large socioeconomic disparities in science achievement (National Center for Education Statistics 2011). To help address these problems, a growing number of programs have been developed outside of schools to augment traditional school-based science education. Such programs are increasingly important given the limited funding for K-12 science education (National Center for Education Statistics 2007; US Department of Education 2007). The aim of these programs is to excite students about science, develop their academic skills, and broaden the pipeline of students entering science- and health-related careers. However, the effectiveness of these programs has seldom been evaluated, and few evaluations have had an appropriate comparison group and the ability to adjust adequately for confounding factors.

This article describes the design and methods of a controlled trial to evaluate the Stanford Medical Youth Science Program’s (SMYSP) Summer Residential Program (SRP), one of the most established university-based science education programs in the US. Founded in 1988, this 5-week program links very low income, predominantly underrepresented minority high school students with the science-rich resources at Stanford’s School of Medicine and the broader University (Winkleby 2007; Winkleby et al. 2009). Each year, 24 students are selected to live at Stanford under the guidance of 10 Stanford undergraduate student staff. The curriculum focuses on: (1) inquiry-based and experiential learning through anatomy and laboratory practicums, (2) hospital internships, (3) faculty seminars and college admission workshops, (4) research projects with a focus on public health issues, and (5) long-term college and career guidance. Previous reports have described the 5-week curriculum in detail (Winkleby 2007; Winkleby et al. 2009). In 2012, SMYSP received the US Presidential Award for Excellence in Science, Mathematics and Engineering Mentoring, the highest honor bestowed by the US government for mentoring in these fields.

Evaluation of educational and career outcomes has been a major emphasis of SMYSP since its inception. All 571 students who have completed the SRP since 1988 have been followed up through telephone and online surveys, and more recently through the National Student Clearinghouse (http://www.studentclearinghouse.org). Nearly all (99 %) SRP graduates have been admitted to college. Among those not currently attending high school or college, 90 % have earned a 4-year college degree, and among these, 47 % are attending or have completed graduate or medical school. Forty-four percent of the college graduates have entered science or health professions.

Despite these notable outcomes, two limitations call for a controlled evaluation of the program. First, although college and career achievements may be a result of participation in the SRP, they may also be due to selection bias. Without an appropriate comparison group of similar students who did not participate in the SRP, it is unclear whether these outcomes reflect a program effect or a selection effect. Second, although SMYSP has examined long-term college and career outcomes, more proximate outcomes have not been studied, including types of colleges attended, adjustment to the college environment, science-related college courses and research experiences, college majors, and anticipated careers. These short-term measures may provide additional insights into SMYSP’s longer-term educational and career outcomes.

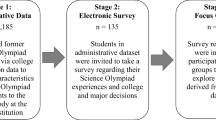

In 2009, a matched cohort study was designed and implemented to test the effectiveness of the SRP using a well-matched comparison group. This study was funded by the Science Education Partnership Award (sponsored by the National Center for Research Resources, part of the National Institutes of Health), which provides grants for innovative educational programs that create partnerships among biomedical researchers and K-12 schools. The purpose of this study is to test the effectiveness of program interventions, determine which curriculum components contribute most to successful outcomes, and identify which students benefit most. This article reports the design and methods of this ongoing study so that they might be replicated to evaluate and improve other science education programs. It describes: (1) primary hypotheses, (2) selection criteria, (3) matching criteria, (4) potential biases, (5) enrollment, (6) types of data collected, (7) analytic approaches, and (8) preliminary results after 4 years of follow-up.

Design and Methods

Hypotheses

This matched cohort study tests the hypotheses that SRP participants will be more likely than non-participants to:

1. Report having experiences that prepare them for college, including completion of more high school science classes and involvement in more science-related activities;
2. Report higher confidence about preparation for college and capability in succeeding in science-related courses;
3. Complete more science and math courses in college and receive higher grades in such courses;
4. Adjust more successfully to college;
5. Declare a major or minor in science- or health-related fields;
6. Plan for a science- or health-related career.

Selection of SRP Participants and Comparison Group

Students are chosen to participate in the 5-week SRP using a comprehensive application process that helps select those who best meet program criteria. Students who meet the following criteria are encouraged to apply: (1) attend public high school in Northern or Central California, (2) have completed tenth or eleventh grade, (3) are from a low-income family, (4) have an interest in the sciences and health, and (5) have achieved an overall grade average of C or above and/or earned at least a B in biology. Priority is given to students who are from underrepresented minority groups (American Indians or Alaska Natives, Blacks or African-Americans, Hispanics or Latinos, Native Hawaiians or Other Pacific Islanders), who are the first in their families to attend college, who have faced personal hardship (e.g., death or disability of a parent, or foster care placement), who are from under-resourced schools and/or communities (e.g., rural and inner-city schools, agricultural labor camps), who lack knowledge about the college admissions process, and/or have poor academic preparation. Those selected receive a full scholarship for tuition, room, and board.

Each year, student application forms are posted on the SMYSP website (http://smysp.stanford.edu/) and mailed to approximately 300 high schools and community-based organizations in 21 counties in Northern and Central California. The application consists of a description of family background; official high school transcript; two letters of recommendation from teachers, principals, or counselors; and six 100–400 word essays about the student’s science interest, career goals, and personal hardships. Low-income status is determined by parents’ or guardians’ occupations and educational levels, sources and amounts of family income, and number of people supported by the family. Nearly all participants are below the federal poverty threshold level.

Approximately 250–300 students apply each year. Applications are reviewed by the 10 Stanford undergraduate student staff and the program’s executive director. The top 100 students are interviewed by telephone, and 45 finalists are interviewed in person. A final class of 24 students is selected, with equal numbers of low-income young women and men and approximately equal numbers from the following four ethnic categories: African-American, Asian, Latino, and other ethnicity.

The comparison group is chosen from students who apply to the SRP but are not selected to participate. This approach has several advantages. First, the large number of program applicants creates a sufficient pool of students from which to select a well-matched comparison group. Second, because these students have completed program applications, extensive demographic and academic information is available for matching them as closely as possible to SRP participants (matching criteria are described below). Third, at the time of application, all applicants and their parents provide written consent to be contacted in the future for educational research, even if the student is not selected for participation. Finally, students in the comparison group are unlikely to be influenced by SRP participants because they rarely know the participants or attend the same schools. Many other science education programs have a competitive pool of applicants and therefore have the opportunity to select a comparison group in a similar fashion.

Matching Criteria

Given the relatively small number of SRP participants, each one is matched to two comparison students to improve statistical power and precision of the outcome estimates. The matching criteria are gender (male/female), ethnicity (African-American, Asian, Latino, other ethnicity), year in school (10th or 11th grade), grade point average (GPA, within 0.25 grade points; e.g., a GPA of 3.00 is matched to a GPA of 2.75–3.25), and location of school (urban/rural). These criteria were chosen because they are potential confounding factors, i.e., associated with the exposure (participation in the SRP) and, independently, with the outcomes (e.g., success in science classes, or sustained interest in a science-related career).
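The matching rule above can be sketched in code. The following is a minimal illustration, not the procedure actually used by the study staff: the `Applicant` record, the function names, and the tie-breaking order are hypothetical. It assumes that gender, year in school, and the GPA band are treated as hard requirements, and that ethnicity and school location are preferred but may be relaxed when no exact match is available.

```python
from dataclasses import dataclass

GPA_TOLERANCE = 0.25  # from the text: GPA matched within 0.25 grade points


@dataclass(frozen=True)
class Applicant:
    """Hypothetical applicant record holding only the matching variables."""
    id: str
    gender: str      # "F" or "M"
    ethnicity: str   # "African-American", "Asian", "Latino", or "Other"
    grade: int       # 10 or 11
    gpa: float
    location: str    # "urban" or "rural"


def match_score(participant, candidate):
    """Return None if a hard criterion fails, else the number (0-2) of
    preferred criteria (ethnicity, location) on which the pair agrees."""
    if participant.gender != candidate.gender:
        return None
    if participant.grade != candidate.grade:
        return None
    # Small epsilon guards against floating-point noise at the band edge.
    if abs(participant.gpa - candidate.gpa) > GPA_TOLERANCE + 1e-9:
        return None
    return (participant.ethnicity == candidate.ethnicity) + (
        participant.location == candidate.location
    )


def select_comparisons(participant, pool, k=2):
    """Pick up to k comparison students, best score first, then closest GPA."""
    eligible = []
    for candidate in pool:
        score = match_score(participant, candidate)
        if score is not None:
            eligible.append((score, candidate))
    eligible.sort(key=lambda sc: (-sc[0], abs(participant.gpa - sc[1].gpa)))
    return [candidate for _, candidate in eligible[:k]]


def match_cohort(participants, pool, k=2):
    """Match each participant in turn, without reusing comparison students."""
    remaining = list(pool)
    matches = {}
    for p in participants:
        chosen = select_comparisons(p, remaining, k)
        matches[p.id] = [c.id for c in chosen]
        remaining = [c for c in remaining if c not in chosen]
    return matches
```

Processing participants one at a time is a greedy strategy, so the result can depend on the order in which participants are matched. An optimal-matching algorithm would avoid that at the cost of more complexity.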

To date, 96 SRP participants have been matched to 192 comparison students (four cohorts). All matches were exact for gender, year in school, and GPA (within the 0.25-point band). In addition, 168 of the 192 matches (87.5 %) were exact on both ethnicity and urban/rural status. Nineteen matches (9.9 %) were not exact on ethnicity, and 5 (2.6 %) were not exact on urban/rural status. Exact matches on these factors were difficult to achieve, especially for African-American young men, because there are fewer male than female applicants.

Potential Biases

The above procedure for selecting a comparison group has two potential biases, but both are likely conservative (i.e., in favor of better outcomes among the comparison group). First, because SMYSP prioritizes the selection of students from low socioeconomic backgrounds, those who are selected to participate in the SRP are more likely than those not selected to have experienced personal adversity and to lack academic preparation. Based on answers to application questions, SRP participants are less likely than the comparison group to have standardized test-taking experience, guidance from high school counselors, exposure to college campuses or faculty, or college role models among siblings or friends. Second, students in the comparison group might be somewhat positively influenced by their slight exposure to the SRP through completing the program application or participating in the on-campus interview, which would also lend a conservative bias (i.e., would narrow the observed differences in outcomes between SRP participants and the comparison group). Preliminary data have suggested that this effect is more likely than the opposite bias, in which non-selection for the SRP would decrease self-efficacy or sense of worth and thereby negatively affect outcomes.

Enrollment of SRP Participants and Comparison Group

SRP participants are enrolled in the matched cohort study at the beginning of the SRP, at which time they and their parents sign consent forms to participate in the baseline and follow-up online surveys. Matched comparison students are identified from the pool of applicants not selected for the SRP, then trained survey researchers contact them by telephone to gain student and parent consent for participation in these surveys. After consenting, students are enrolled in the study and directed to the baseline online survey. If the first matched comparison student cannot be reached by telephone or refuses to participate, an alternate matched student is selected. At the beginning of this study, it was unclear whether comparison students would be willing to participate in follow-up surveys given that they had applied but were not chosen to participate in the SRP. However, pilot data suggested that they would participate if they were given a $20 American Express gift card and a letter of participation on School of Medicine letterhead stating that the student was involved in a Stanford research study. Both incentives (given annually) have been effective, especially the Stanford letter of participation.

SRP participants and the comparison group are then contacted annually by email and, when needed, by telephone to complete the annual online survey, which takes approximately 15 minutes. To enhance response rates, survey researchers use tracking protocols that involve calling students at convenient times, making multiple calls when a student is not at home, being willing to return calls at unusual hours, and encouraging parents and other household members to have students return phone calls. Incentives are mailed after the survey is completed. SRP participants and the comparison group are also mailed a postcard twice each year to maintain contact. The first postcard wishes students a happy holiday season and the second reminds them of the upcoming annual survey.

Data Collection

The baseline survey ascertains contact information for the student, parents, and two relatives/close friends to aid in tracking (telephone numbers and email addresses, when available); comprehensive sociodemographic data (student date of birth and ethnicity; parent/guardian education, income, occupation, and country of birth; family language); high school academic background; college and career plans; academic support; and attitudes/beliefs about science- and health-related careers. Data are also collected on other factors that may be moderators, mediators, or confounding factors, including parental education, support of science career by family, and academic preparation.

Final high school transcripts for SRP participants and the comparison group are requested by mail at the time of high school graduation to complement self-reported survey data. Information is abstracted on GPA; class ranking; the number of science, math, and English classes taken post-intervention (i.e., after participation in the SRP, or after the last day of the SRP for comparison students); and academic performance in these classes.

An annual online survey was designed to assess 10 main constructs covered in the SRP (Table 1). For each construct, a question was developed to elucidate two domains: “extent of science-related experience” and “self-efficacy” (perceived capability for success in science-related classes and careers). The original questions also included the domain of “motivation”, but this was omitted in the final survey due to high correlation with “self-efficacy” responses.

The annual survey also collects other information tailored to the student’s year in high school or college, including types of courses taken and grades, science and research experiences, intended major and career, and other factors that may influence educational and career choices. Questions are matched as closely as possible to the components of the SRP (e.g., hospital internships and research projects) to enable an accurate assessment of the program interventions. To enhance validity, standardized questions were adapted when possible from larger science-based surveys that have been previously validated, including the Student Attitudes about Science Instruction (Swept Study; Silverstein et al. 2009) and the Longitudinal Study of American Youth (Xin 2001). Many questions are asked on a 5-point Likert scale to measure intensity from strongly agree to strongly disagree. Many are also repeated each year to enable an assessment of interval change. Students who do not attend college are given a survey that collects other information relevant to their situation (e.g., employment, preparation for college, community service, and leave from college due to family-related emergency) and possible future educational plans. Completed surveys are checked for potential survey bias (such as selecting the same response for all items) or incompleteness, in which case the student is re-contacted to confirm survey accuracy and completeness.
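The quality check described above (identical responses to all items, or missing responses) could be automated along the following lines. This is a minimal sketch under stated assumptions: the function names, the use of `None` for an unanswered item, and the `min_items` threshold are all hypothetical and are not taken from the study protocol.

```python
def is_straight_lined(responses, min_items=5):
    """True when at least `min_items` answered Likert items all share one value.

    `min_items` is an assumed threshold; very short item blocks can
    legitimately receive identical answers.
    """
    answered = [r for r in responses if r is not None]
    return len(answered) >= min_items and len(set(answered)) == 1


def is_incomplete(responses):
    """True when any item was left unanswered (represented here as None)."""
    return any(r is None for r in responses)


def needs_recontact(responses):
    """Flag a completed survey for follow-up with the student."""
    return is_straight_lined(responses) or is_incomplete(responses)
```

A flagged survey is not necessarily invalid. As in the text, the flag only prompts a follow-up contact to confirm accuracy and completeness.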

Analytic Approaches

Matching of SRP participants to non-participants on potential confounding variables effectively controls for confounding provided that there is minimal loss to follow-up, as in the current study. Furthermore, in a matched cohort study (unlike a matched case–control study), matching need not be accounted for in the analysis to avoid bias (Cummings and McKnight 2004). Effectiveness of the SRP will be tested by comparing participants to non-participants for differences in outcomes (e.g., academic success in high school, acceptance and success in college, intended major, or career choices) using different regression models depending on the distribution of the respective outcome. For example, logistic regression will be used for dichotomous outcomes (such as college major in a health/science field vs. others), ordinal logistic regression for ordinal polytomous outcomes (such as Likert scores), and linear regression for continuous outcomes (such as grade point average). Model diagnostics will be used to assess the appropriateness of each model. First-order interactions will be examined to assess differential effects across subgroups (e.g., different outcomes by primary language spoken at home) using a likelihood ratio test. All statistical tests will be two-tailed using an α-level of 0.05.
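The three model families named above can be written out as follows. The notation is introduced here for illustration and is not the authors': $\mathrm{SRP}_i$ is an indicator of program participation, $\mathbf{x}_i$ is a vector of any additional covariates, and $z_i$ is a candidate effect modifier. The ordinal model is shown in its proportional-odds form, which is an assumption, since the text specifies only "ordinal logistic regression".

```latex
% Dichotomous outcome (logistic regression)
\operatorname{logit} \Pr(Y_i = 1) = \beta_0 + \beta_1\,\mathrm{SRP}_i + \boldsymbol{\gamma}^{\top}\mathbf{x}_i

% Ordinal outcome with J categories (proportional-odds form, assumed)
\operatorname{logit} \Pr(Y_i \le j) = \alpha_j - \beta_1\,\mathrm{SRP}_i - \boldsymbol{\gamma}^{\top}\mathbf{x}_i,
\qquad j = 1, \dots, J-1

% Continuous outcome (linear regression)
Y_i = \beta_0 + \beta_1\,\mathrm{SRP}_i + \boldsymbol{\gamma}^{\top}\mathbf{x}_i + \varepsilon_i

% First-order interaction: add z_i and the product term to the linear predictor
\eta_i = \beta_0 + \beta_1\,\mathrm{SRP}_i + \beta_2\, z_i + \beta_3\,(\mathrm{SRP}_i \times z_i) + \boldsymbol{\gamma}^{\top}\mathbf{x}_i

% Likelihood ratio test of H_0: \beta_3 = 0
G^2 = -2\,\bigl[\ell_{\text{reduced}} - \ell_{\text{full}}\bigr] \;\sim\; \chi^2_{d} \quad \text{under } H_0
```

Here $\ell_{\text{reduced}}$ and $\ell_{\text{full}}$ are the maximized log-likelihoods without and with the interaction term, and $d$ is the number of interaction parameters added ($d = 1$ for a binary $z_i$). In each model, $\beta_1$ is the estimated program effect, and the interaction is judged significant when the two-tailed p-value for $G^2$ falls below 0.05.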

Preliminary Results

After 4 years of follow-up, the preliminary results indicate high response rates from SRP participants and the comparison group with completion rates near 90 %, similar response rates by gender and ethnicity, and little attrition with each additional year of follow-up. Complete results will be reported at the end of the 5-year follow-up period.

Discussion

This controlled trial builds upon previous evaluations of SMYSP’s SRP that have tracked college and career outcomes but lacked a suitable comparison group (Winkleby 2007; Winkleby et al. 2009). It demonstrates high feasibility of a controlled evaluation of program effectiveness while adjusting appropriately for potential confounding factors. It also allows an assessment of more proximate outcomes to complement the longer-term educational and career outcomes previously reported (Winkleby 2007; Winkleby et al. 2009). The design and methods can potentially be replicated to evaluate other non-school-based science education programs, which are increasingly needed to broaden the pipeline of students entering science- and health-related careers. Replication in other programs may enable collaborative analysis of the findings in a consortium-like effort to achieve more comprehensive evaluation and improvement of these programs.

Universities, medical schools, and other professional schools have a unique capacity to connect young people with state-of-the-art science internships, laboratories, technology, and faculty and student role models (Winkleby and Ned 2010). Compared with school-based programs, they often have greater flexibility in developing activities that engage students in stimulating, experiential, and cooperative learning. Such programs also have reciprocal benefits for universities. They provide multidisciplinary and cross-departmental teaching, leadership, and learning opportunities for undergraduate, graduate, and medical students, as well as faculty. They affirm the availability of academic opportunities for all students as a core university value. The benefits of university-based biomedical pipeline programs also extend to a broad spectrum of students, including gifted youth, those who can afford fee-based programs, and low-income students who otherwise may not be exposed to learning in a university environment.

Despite decades of federal investment in science and math education (US Department of Education 2007), there is a dearth of evidence for effective practices. Few science education pipeline programs have examined and reported long-term outcomes, such as science achievement or completion of college, graduate or medical school (National Center for Education Statistics 2007). Previous evaluations have also been compromised by small sample sizes and high loss to follow-up. Among programs that have attempted evaluations (Beck et al. 1978; Butler et al. 1991; Cregler 1993; Davis and Davidson 1982; Felix et al. 2004; Jones and Flowers 1990; Marshall 1975; McKendall et al. 2000; Nickens et al. 1994; Rohrbaugh and Corces 2011; Rosenbaum et al. 2007; Sikes and Schwartz-Bloom 2009; Thurmond and Cregler 1994), few have been funded to conduct controlled trials in which program participants are compared with an appropriate control group. Additional controlled trials are needed to better evaluate program efficacy, and to determine which components are most successful and which students benefit most. Such trials are especially needed for programs that reach out to low-income and underrepresented minority students, who have the greatest disparities in science-related educational and career outcomes (American Psychological Association 2012).

The 2009 National Assessment of Educational Progress highlighted a severe lack of science preparation among US students and the implications for the national workforce. Only 21 % of high school seniors scored at or above the proficient level in science education, and only 1–2 % at the advanced level, whereas 47 % failed to meet the most basic level (National Center for Education Statistics 2011). Large achievement disparities were reported by ethnicity, income, and public- versus private-school students (National Center for Education Statistics 2011). These findings led US Secretary of Education Arne Duncan to conclude that “the next generation will not be ready to be world-class inventors, doctors, and engineers” (US Department of Education 2011). SMYSP’s SRP and other similar programs have the potential to address these disparities and help the US workforce meet its social, health, and technological needs over the next few decades. Underrepresented minority youth in particular comprise a growing proportion of the population and are an important source of new employees entering the workforce. Given the opportunity to develop their academic potential, these students can make a major contribution to meeting critical needs in the science and health professions.

References

American Psychological Association, Presidential Task Force on Educational Disparities (2012) Ethnic and racial disparities in education: psychology’s contributions to understanding and reducing disparities. Retrieved on 1 Feb 2013, from http://www.apa.org/ed/resources/racial-disparities.aspx

Beck P, Githens JH, Clinkscales D, Yamamoto D, Riley CM, Ward HP (1978) Recruitment and retention program for minority and disadvantaged students. J Med Educ 53(8):651–657

Butler WT, Thomson WA, Morrissey CT, Miller LM, Smith QW (1991) Baylor’s program to attract minority students and others to science and medicine. Acad Med 66(6):305–311

Cregler LL (1993) Enrichment programs to create a pipeline to biomedical science careers. J Assoc Acad Minor Physicians 4(4):127–131

Cummings P, McKnight B (2004) Analysis of matched cohort data. Stata J 4(3):274–281

Davis JA, Davidson CP (1982) The Med-COR study: preparing high school students for health careers. J Med Educ 57(7):527–534

Felix DA, Hertle MD, Conley JG, Washington LB, Bruns PJ (2004) Assessing precollege science education outreach initiatives: a funder’s perspective. Cell Biol Educ 3(3):189–195

Jones F, Flowers JC (1990) New York’s statewide approach to increase the number of minority applicants to medical school. Acad Med 65(11):671–674

Marshall EC (1975) An experiment in health careers recruitment: a summer program at Indiana University. J Am Optom Assoc 46(12):1284–1292

McKendall SB, Simoyi P, Chester AL, Rye JA (2000) The health sciences and technology academy: utilizing pre-college enrichment programming to minimize post-secondary education barriers for underserved youth. Acad Med 75(10 Suppl):S121–S123

National Center for Education Statistics, U.S. Department of Education (2007) The condition of education 2007 (NCES 2007–064). U.S. Government Printing Office, Washington

National Center for Education Statistics, U.S. Department of Education (2011) The nation’s report card: science 2009, national assessment of educational progress (NAEP) at grades 4, 8, and 12 (NCES 2011-451). Institute of Education Sciences, U.S. Department of Education, Washington. Retrieved on 1 Feb 2013, from http://nces.ed.gov/pubs2007/2007064.pdf

Nickens HW, Ready TP, Petersdorf RG (1994) Project 3000 by 2000. Racial and ethnic diversity in U.S. medical schools. N Engl J Med 331(7):472–476

Rohrbaugh MC, Corces VG (2011) Opening pathways for underrepresented high school students to biomedical research careers: the Emory University RISE program. Genetics 189(4):1135–1143

Rosenbaum JT, Martin TM, Farris KH, Rosenbaum RB, Neuwelt EA (2007) Can medical schools teach high school students to be scientists? Fed Am Soc Exp Biol J 21:1954–1957

Sikes SS, Schwartz-Bloom RD (2009) LEAP! launch into education about pharmacology: transforming students into scientists. Mol Interv 9(5):215–219

Silverstein SC, Dubner J, Miller J, Glied S, Loike JD (2009) Teachers’ participation in research programs improves their students’ achievement in science. Science 326(5951):440–442

Thurmond VB, Cregler LL (1994) Career choices of minority high school student research apprentices at a health science center. Acad Med 69(6):507

U.S. Department of Education (2007) Report of the Academic Competitiveness Council. U.S. Department of Education, Washington. Retrieved on 1 Feb 2013, from http://cahsi.cs.utep.edu/Portals/0/Resources/Literature/Report_of_the_AcademicCompetitiveness_Council.pdf

U.S. Department of Education (2011) Statement by U.S. Secretary of Education Arne Duncan on the Release of the NAEP Science Report Card. U.S. Department of Education, Washington. Retrieved on 1 Feb 2013, from http://www.ed.gov/news/press-releases/statement-us-secretary-education-arne-duncan-release-naep-science-report-card

Winkleby MA (2007) The Stanford medical youth science program: 18 years of a biomedical program for low-income high school students. Acad Med 82(2):139–145

Winkleby M, Ned J (2010) Promoting science education. J Am Med Assoc 303(10):983–984

Winkleby MA, Ned J, Ahn D, Koehler AR, Kennedy J (2009) Increasing diversity in science and health professions: a 21-year longitudinal study documenting college and career success. J Sci Educ Technol 18:535–545

Xin M (2001) Participation in advanced mathematics: do expectation and influence of students, peers, teachers, and parents matter? Contemp Educ Psychol 26(1):132–146

Acknowledgments

This work was supported by the Science Education Partnership Award (SEPA) from the National Center for Research Resources, a component of the National Institutes of Health (R25RR026011). The authors thank Justine Aguilar-Blake, Diana Austria, Nell Curran, Anna Felberg, and Dale Lemmerick for contributions to SMYSP and follow-up evaluation. In-kind resources are provided by Stanford University, Stanford School of Medicine, Stanford Prevention Research Center, Stanford Hospital and Clinics, Lucile Packard Children’s Hospital, and the Veterans Affairs Palo Alto Health Care System.

Cite this article

Winkleby, M.A., Ned, J., Ahn, D. et al. A Controlled Evaluation of a High School Biomedical Pipeline Program: Design and Methods. J Sci Educ Technol 23, 138–144 (2014). https://doi.org/10.1007/s10956-013-9458-4

DOI: https://doi.org/10.1007/s10956-013-9458-4