Abstract

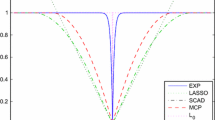

We consider median regression with a LASSO-type penalty for variable selection. With a fixed number of variables in the regression model, we propose a two-stage method for simultaneous estimation and variable selection in which the degree of penalty is chosen adaptively. A Bayesian information criterion (BIC) type approach yields a data-driven procedure that is proved to select asymptotically optimal tuning parameters automatically. The resulting estimator is shown to achieve the so-called oracle property. The combination of median regression and the LASSO penalty is computationally easy to implement via standard linear programming. A random perturbation scheme can be used to obtain a simple estimator of the standard error. Simulation studies assess the finite-sample performance of the proposed method, and we illustrate the methodology with a real example.
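To make the linear programming remark concrete, the following is a minimal sketch of an L1-penalized median regression written as a linear program. It is an illustration under stated assumptions, not the authors' implementation: the function names `lad_lasso` and `two_stage_lad_lasso`, the use of SciPy's `linprog`, the inverse-magnitude weights in the second stage, and the simulated data are all hypothetical choices made here. The objective is the sum of absolute residuals plus `lam` times a weighted L1 norm of the coefficients, and splitting coefficients and residuals into positive and negative parts turns it into a standard-form LP.

```python
import numpy as np
from scipy.optimize import linprog


def lad_lasso(X, y, lam, weights=None):
    """Minimise sum_i |y_i - x_i' beta| + lam * sum_j weights_j * |beta_j| as an LP."""
    n, p = X.shape
    w = np.ones(p) if weights is None else np.asarray(weights, dtype=float)
    # Decision vector: [beta_plus (p), beta_minus (p), u_plus (n), u_minus (n)], all >= 0,
    # where beta = beta_plus - beta_minus and the residual is u_plus - u_minus.
    cost = np.concatenate([lam * w, lam * w, np.ones(n), np.ones(n)])
    # Equality constraint: X beta + u_plus - u_minus = y.
    A_eq = np.hstack([X, -X, np.eye(n), -np.eye(n)])
    res = linprog(cost, A_eq=A_eq, b_eq=y, bounds=(0, None), method="highs")
    if not res.success:
        raise RuntimeError(res.message)
    z = res.x
    return z[:p] - z[p:2 * p]


def two_stage_lad_lasso(X, y, lam, eps=1e-6):
    """Hypothetical two-stage variant: unpenalised fit, then a reweighted penalised fit."""
    beta_init = lad_lasso(X, y, lam=0.0)        # stage 1: plain median regression
    weights = 1.0 / (np.abs(beta_init) + eps)   # assumed adaptive weights, not from the paper
    return lad_lasso(X, y, lam, weights=weights)  # stage 2: weighted L1 penalty


if __name__ == "__main__":
    # Simulated data (an assumption for illustration only).
    rng = np.random.default_rng(0)
    X = rng.normal(size=(100, 5))
    beta_true = np.array([3.0, 0.0, 0.0, 1.5, 0.0])
    y = X @ beta_true + rng.laplace(size=100)
    print(np.round(two_stage_lad_lasso(X, y, lam=2.0), 3))
```

The value `lam=2.0` in the usage lines is arbitrary. The abstract describes a BIC-type criterion for choosing the tuning parameters from the data, which this sketch does not attempt to reproduce.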

About this article

Cite this article

Xu, J., Ying, Z. Simultaneous estimation and variable selection in median regression using Lasso-type penalty. Ann Inst Stat Math 62, 487–514 (2010). https://doi.org/10.1007/s10463-008-0184-2

DOI: https://doi.org/10.1007/s10463-008-0184-2