Abstract

Colour image enhancement is not only of high importance in consumer electronics, but also plays a significant role in medical imaging, remotely sensed imaging, etc. Moreover, low-exposure colour images inherently lack sufficient image details, which are essential for work in these domains. To address this less-explored problem, a novel enhancement algorithm is proposed that estimates a fuzzy histogram and thresholds it according to the computed effect of exposure. The algorithm operates on the lightness (\(L^{*}\)) component of the input image in \(L^{*}a^{*}b^{*}\) colour space, while preserving the colour-opponent dimensions (\(a^{*} \) and \( b^{*}\)) to maintain the natural appearance of the image. The technique has been experimentally demonstrated on a dataset consisting of images generated at different exposure levels. Quantitative and qualitative analyses of the relative performance of the proposed algorithm are presented with respect to state-of-the-art enhancement algorithms over the \(L^{*}a^{*}b^{*}\) space.

1 Introduction

From the perspective of the human visual system (HVS) (Gonzalez and Woods 2008), contrast can be defined as the luminance difference between the brightest and the darkest regions of an image. Contrast is one of the most significant factors in the perception of image details. In general, the contrast of an image is influenced by how much the photosensitive sensor of the capturing device is exposed to the available light. Physically, exposure is determined by the ISO setting, shutter speed and aperture of the camera, and corresponds to the amount of light per unit area received by the sensor. These three factors are fixed at capture time and cannot be altered by any postprocessing. Consequently, a colour image with inherently poor exposure is of little use for postprocessing operations such as segmentation. Over the years, therefore, different approaches have been developed to handle this challenge (Bhandari and Muarya 2019; Azetsu and Suetake 2019; Bianco et al. 2019; Bora 2018; Cai et al. 2018; Cepeda-Negrete et al. 2018; Chiang et al. 2018; Gupta and Tiwari 2019; Jia et al. 2018; Kumar et al. 2018; Lecca 2018; Ma et al. 2018; Mahapatra et al. 2015; Sinha et al. 2018; Tao et al. 2018; Tian and Cohen 2018; Tseng and Lee 2018; Veluchamy and Subramani 2019; Yu et al. 2018; Zhang et al. 2017).

Different enhancement techniques have been developed to deal with images having poor contrast. As these algorithms were mainly designed for greyscale images, they can be applied to only one component of a colour space at a time. To enhance an RGB image, therefore, each of the R, G and B components would have to be enhanced separately and then recombined. However, enhancing the components in this manner may push pixel values out of bounds once the enhanced R, G and B components are recombined. To avoid this, all the considered techniques are restricted in this work to operate only on the lightness (\(L^{*}\)) component of the \(L^{*}a^{*}b^{*}\) space because, unlike in RGB space, only the lightness (\(L^{*}\)) component of the \(L^{*}a^{*}b^{*}\) space contributes to the contrast of the image. This also keeps the comparison of the different algorithms uniform. Moreover, the \(L^{*}a^{*}b^{*}\) space is more perceptually linear than other available separable colour spaces. Here, perceptually linear means that a change in colour value produces a proportional change in visual perception; in other words, equal changes in colour values are perceived as approximately equal changes in colour. Therefore, this space models the human visual system better than the others. Thus, for all the discussed algorithms, the input low-exposure RGB image is first converted to the \(L^{*}a^{*}b^{*}\) space, enhanced and then converted back to the RGB space for subsequent quantitative and qualitative assessment.
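The L\*-only strategy can be sketched in Python with NumPy. The conversion below is the standard sRGB (D65 white point) to CIELAB transform written out by hand; the sRGB primaries, the D65 white point, inputs as floats in [0, 1] and the function names are all assumptions, since the text does not state which RGB space was used. Clipping out-of-gamut values in `lab_to_rgb` is one simple way of keeping results in bounds, not necessarily the authors' choice.

```python
import numpy as np

# Assumed: sRGB primaries with a D65 reference white (XYZ scaled so Y = 1).
_WHITE = np.array([0.95047, 1.0, 1.08883])
_RGB2XYZ = np.array([[0.4124564, 0.3575761, 0.1804375],
                     [0.2126729, 0.7151522, 0.0721750],
                     [0.0193339, 0.1191920, 0.9503041]])
_XYZ2RGB = np.linalg.inv(_RGB2XYZ)
_EPS, _KAPPA = 216 / 24389, 24389 / 27  # CIE constants for the f(t) knee


def rgb_to_lab(rgb):
    """sRGB in [0, 1], shape (..., 3) -> CIELAB with L* in [0, 100]."""
    lin = np.where(rgb <= 0.04045, rgb / 12.92, ((rgb + 0.055) / 1.055) ** 2.4)
    xyz = lin @ _RGB2XYZ.T / _WHITE
    f = np.where(xyz > _EPS, np.cbrt(xyz), (_KAPPA * xyz + 16) / 116)
    L = 116 * f[..., 1] - 16
    a = 500 * (f[..., 0] - f[..., 1])
    b = 200 * (f[..., 1] - f[..., 2])
    return np.stack([L, a, b], axis=-1)


def lab_to_rgb(lab):
    """CIELAB -> sRGB in [0, 1]; out-of-gamut values are clipped."""
    fy = (lab[..., 0] + 16) / 116
    fx = fy + lab[..., 1] / 500
    fz = fy - lab[..., 2] / 200
    f = np.stack([fx, fy, fz], axis=-1)
    xyz = np.where(f ** 3 > _EPS, f ** 3, (116 * f - 16) / _KAPPA) * _WHITE
    lin = np.clip(xyz @ _XYZ2RGB.T, 0.0, 1.0)
    return np.where(lin <= 0.0031308, 12.92 * lin, 1.055 * lin ** (1 / 2.4) - 0.055)


def enhance_lightness_only(rgb, enhance_L):
    """Apply enhance_L to the L* channel only; a* and b* are left untouched."""
    lab = rgb_to_lab(rgb)
    lab[..., 0] = enhance_L(lab[..., 0])
    return lab_to_rgb(lab)
```

Any of the greyscale enhancers discussed below can be passed as `enhance_L`, provided it maps an L\* array in [0, 100] back into that range.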

The most basic enhancement technique is histogram equalization (HE) (Gonzalez and Woods 2008). It reassigns the intensity levels of the image according to their probability distribution. However, it also tends to change the mean brightness of the input image. For this reason, images with low exposure values suffer from over-enhancement in patches.
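Global HE on an L\* channel quantized to 8-bit levels can be sketched in a few lines of NumPy. The function name and the synthetic dark image in the check are illustrative only; the point is the large shift in mean brightness that the paragraph above describes.

```python
import numpy as np


def histogram_equalize(img, levels=256):
    """Global HE: map each level k to (levels - 1) * cdf(k)."""
    hist = np.bincount(img.ravel(), minlength=levels)
    cdf = np.cumsum(hist) / img.size
    lut = np.round((levels - 1) * cdf).astype(np.uint8)
    return lut[img]
```

For a dark image whose levels lie roughly uniformly in 0–59, the equalized output spreads over the whole 0–255 range and its mean rises by roughly a hundred levels, which is exactly the brightness change that the separation methods below try to avoid.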

Many histogram separation algorithms have been developed to handle the problem of mean brightness modification. Brightness-preserving bi-histogram equalization (BBHE) (Kim 1997), dualistic sub-image histogram equalization (DSIHE) (Wan et al. 1999), recursive mean-separate histogram equalization (RMSHE) (Chen and Ramli 2003a), recursive sub-image histogram equalization (RSIHE) (Sim et al. 2007), etc. are a few members of this family. One of the most prominent and successful among them is minimum mean brightness error bi-histogram equalization (MMBEBHE) (Chen and Ramli 2003b). Here, the separation intensity is chosen to minimize the mean brightness error (MBE) between the input and the enhanced image. Using this threshold, the input histogram is divided into two sub-histograms, which are equalized separately by HE to generate the enhanced image.
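The MMBEBHE idea can be illustrated with a brute-force sketch: every candidate threshold is tried, the image is bi-histogram equalized (each part mapped onto its own range, as in BBHE), and the threshold with the smallest absolute change in mean brightness is kept. This is not the recursive formulation of Chen and Ramli, which computes the same error without re-equalizing for each threshold; the function names are hypothetical.

```python
import numpy as np


def bbhe_lut(hist, t):
    """Bi-histogram equalization: map [0, t] onto [0, t] and [t+1, L-1] onto [t+1, L-1]."""
    levels = hist.size
    lut = np.arange(levels, dtype=float)  # identity for an empty part
    lo, hi = hist[: t + 1], hist[t + 1:]
    if lo.sum() > 0:
        lut[: t + 1] = t * np.cumsum(lo) / lo.sum()
    if hi.sum() > 0:
        lut[t + 1:] = (t + 1) + (levels - 1 - (t + 1)) * np.cumsum(hi) / hi.sum()
    return lut


def mmbe_threshold(img, levels=256):
    """Brute-force search for the threshold with minimum mean brightness error."""
    hist = np.bincount(img.ravel(), minlength=levels).astype(float)
    p = hist / hist.sum()
    mean_in = np.dot(np.arange(levels), p)
    errors = [abs(np.dot(bbhe_lut(hist, t), p) - mean_in) for t in range(levels - 1)]
    return int(np.argmin(errors))
```

Usage: `t = mmbe_threshold(img)` followed by `np.round(bbhe_lut(hist, t))[img]` yields the enhanced 8-bit image, where `hist` is the image's 256-bin histogram.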

Brightness-preserving dynamic fuzzy histogram equalization (BPDFHE) (Sheet et al. 2010) is another promising enhancement algorithm, which computes a fuzzy histogram from its crisp counterpart. It partitions the smoothed histogram based on the intensities of its local maxima and applies brightness normalization to preserve brightness. Yet this technique fails to produce significant enhancement for low-exposure images.

Another family of methods addresses these problems by controlling the rate of enhancement. These algorithms clip the histogram values at a definite plateau limit. A few notable algorithms from this class are bi-histogram equalization with a plateau limit (BHEPL) (Ooi et al. 2009), self-adaptive plateau histogram equalization (SAPHE) (Wang et al. 2006), quadrants dynamic histogram equalization (QDHE) (Ooi and Isa 2010), etc. Median-mean-based sub-image-clipped histogram equalization (MMSICHE) (Singh and Kapoor 2014) is a recently developed technique from this family. It takes the median value of the brightness of the histogram as the plateau limit for the clipping operation. However, clipping leads to unnecessary information loss, which in turn results in a significant loss of structural and feature similarity.
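Generic plateau clipping can be sketched as follows. The plateau value is simply a parameter here; the sketch does not reproduce the specific median-based limit of MMSICHE or the adaptive limits of BHEPL and SAPHE, and the function names are hypothetical. The returned `lost` value makes the information loss mentioned above concrete: every count above the plateau is discarded before equalization.

```python
import numpy as np


def clip_histogram(hist, plateau):
    """Clip every bin of hist at the plateau limit; report the discarded counts."""
    hist = np.asarray(hist, dtype=float)
    clipped = np.minimum(hist, plateau)
    lost = float(hist.sum() - clipped.sum())  # pixel counts removed by clipping
    return clipped, lost


def clipped_equalization_lut(hist, plateau):
    """Equalization look-up table built from the clipped histogram."""
    clipped, _ = clip_histogram(hist, plateau)
    cdf = np.cumsum(clipped) / clipped.sum()
    return np.round((clipped.size - 1) * cdf)
```

For the toy histogram `[10, 1, 5, 0, 20]` with a plateau of 5, more than half of the 36 counts are removed before the cdf is formed.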

Taking into account these shortcomings of the existing algorithms, a novel method for low-exposure colour image enhancement based on fuzzy histogram estimation and exposure thresholding (FuzzyCIE) is proposed here. Quantitative and qualitative analyses of the method are also presented on a novel dataset. Section 2 gives a detailed account of the design of the proposed algorithm. The dataset, along with all experimental results, is described in Sect. 3. Finally, Sect. 4 concludes the work.

2 Methodology of FuzzyCIE

FuzzyCIE, the proposed algorithm, consists of five steps, viz. (i) converting the input RGB (colour) image into \(L^{*}a^{*}b^{*}\), (ii) estimating the fuzzy histogram of the \(L^{*}\) component of the \(L^{*}a^{*}b^{*}\) image, (iii) computing the effect of exposure to choose a suitable threshold and dividing the histogram into two parts (the lower part is assumed to be underexposed and the upper part overexposed), (iv) equalizing the two sub-histograms separately to obtain the enhanced \(L^{*}\) component and (v) combining the enhanced \(L^{*}\) component with the \(a^{*}\) and \(b^{*}\) components and converting the result into the enhanced RGB image. A graphical representation of these stages is depicted in Fig. 1. Combining these tasks in this way is intended to tackle the problems of the existing methods explained in the previous section.

The contribution of the proposed algorithm lies in the quality of the enhanced images it produces, which is shown through the quantitative indices given in Sect. 3. Its main advantage is that it preserves structural (Wang et al. 2004) and feature (Zhang et al. 2011) information to a higher degree than state-of-the-art algorithms. Although entropy does not fully reflect visual quality, in this case the entropy values also support the higher quality of the output images enhanced by the proposed algorithm. These findings are further supported by visual analysis. Owing to the use of the fuzzy histogram (Jawahar and Ray 1996), the ambiguities in the lightness levels are properly taken care of, and as a result the output images are of very high quality. Exposure-based threshold selection helps focus the enhancement on the low-exposure region. Finally, equalizing the two sub-histograms enhances the image suitably while maintaining the balance between the high and low regions of the lightness levels. In the proposed algorithm, the \(L^{*}\) component of the \(L^{*}a^{*}b^{*}\) space is exploited to enhance the intensity of the image, while the colour-opponent dimensions are preserved to retain a natural appearance.

2.1 Estimating fuzzy histogram of the \(L^{*}\) component

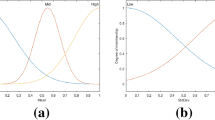

Changing the lightness level l to \(l\pm 1\) (where the scaled version of l is in the range 0–255) hardly produces any significant change in the visual details of an image. However, such imprecision (Sheet et al. 2010) in lightness values has not been considered in most existing enhancement techniques. To deal with this inexactness (Jawahar and Ray 1996), the fuzzy histogram of the \(L^{*}\) component is computed instead of its crisp counterpart, which yields a smooth histogram. This step is all the more important because of the frequent fluctuations of lightness levels around the compressed portion on the left side of the histogram. A symmetric fuzzy membership function, which appropriately conveys the idea of a fuzzy lightness level “around l”, is employed to calculate the fuzzy histogram.

Let \(\mu (L^*(x,y),l)\) be the Gaussian fuzzy membership function defined on l and expressed as

where \(\sigma \) is the standard deviation estimated based on \(L^*\) of the image. Here, \(L^*(x,y)\) is the lightness value at pixel (x, y). Considering every lightness level and its fuzzy representation, the fuzzy histogram is calculated as:

where M is the highest lightness level available in the considered image.
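The membership and histogram computation can be sketched in Python with NumPy, assuming the usual Gaussian form \(\mu(L^*(x,y),l)=\exp\bigl(-(L^*(x,y)-l)^2/(2\sigma^2)\bigr)\) and \(h_f(l)=\sum_{x}\sum_{y}\mu(L^*(x,y),l)\). The sketch assumes \(L^{*}\) has already been scaled and rounded to integer levels 0–255, which lets it weight the crisp histogram instead of looping over pixels. \(\sigma\) is a plain parameter because the text does not specify how it is estimated, and the function name is hypothetical.

```python
import numpy as np


def fuzzy_histogram(L_scaled, sigma, levels=256):
    """Fuzzy histogram with an assumed Gaussian membership function.

    L_scaled: lightness values already scaled to integers 0..levels-1.
    Returns h_f(l) = sum over pixels of exp(-(L(x, y) - l)^2 / (2 sigma^2)).
    """
    crisp = np.bincount(L_scaled.ravel(), minlength=levels).astype(float)
    l = np.arange(levels)
    # membership[k, l] = mu(k, l): degree to which level k is "around l"
    membership = np.exp(-((l[:, None] - l[None, :]) ** 2) / (2.0 * sigma ** 2))
    return crisp @ membership
```

Usage: `fuzzy_histogram(np.round(L_star * 255 / 100).astype(int), sigma=1.5)` for an \(L^{*}\) array with values in [0, 100]; larger \(\sigma\) gives a smoother histogram.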

2.2 Thresholding based on the effect of exposure index

The effect of exposure (Hanmandlu et al. 2009) is the central consideration in the design of FuzzyCIE, because the exposure value allows the lightness histogram to be appropriately divided into ‘underexposed’ and ‘overexposed’ portions. Since the problem domain consists of low-exposure colour images, thresholding with \(T_{exposure}\) helps concentrate the equalization on the underexposed section. Here, exposure and \(T_{exposure}\) are defined as

It can easily be deduced that \(0\le exposure \le 1\). As the considered domain consists only of low-exposure colour images, \(exposure\le 0.5\) always holds here. Thresholding the image with \(T_{exposure}\) generates two separate parts, viz. the underexposed lightness component \(L^{*}_u\) and the overexposed lightness component \(L^{*}_o\).
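A sketch of the exposure computation, assuming the form used by Hanmandlu et al. (2009) adapted to the fuzzy histogram: \(exposure=\sum_{l} l\,h_f(l)\big/\bigl(l_{\max}\sum_{l} h_f(l)\bigr)\) and \(T_{exposure}=\mathrm{round}\bigl(l_{\max}(1-exposure)\bigr)\), where \(l_{\max}\) (a symbol introduced here) is the highest lightness level. Normalizing by \(l_{\max}\) is what keeps \(0\le exposure\le 1\); the function name is hypothetical.

```python
import numpy as np


def exposure_threshold(h_f):
    """Return (exposure, T_exposure) for a histogram over levels 0..l_max.

    Assumed form (after Hanmandlu et al. 2009):
      exposure   = sum(l * h_f(l)) / (l_max * sum(h_f(l)))   in [0, 1]
      T_exposure = round(l_max * (1 - exposure))
    """
    h_f = np.asarray(h_f, dtype=float)
    l_max = h_f.size - 1
    l = np.arange(l_max + 1)
    exposure = np.dot(l, h_f) / (l_max * h_f.sum())
    t = int(round(l_max * (1.0 - exposure)))
    return exposure, t
```

Under this form, \(exposure\le 0.5\) puts \(T_{exposure}\) at or above the middle of the range, so the underexposed part is later stretched over at least half of the output levels. For a histogram concentrated at level 51 of 0–255, for example, exposure is 0.2 and the threshold is 204.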

2.3 Equalizing sub-histograms

The underexposed component \(L^{*}_u\) is represented as \(\{h_{f} (0), h_{f} (1), \ldots , h_{f} (T_{exposure})\}\), while the overexposed component \(L^{*}_o\) is represented as \(\{h_{f} ( T_{exposure} +1),h_{f} ( T_{exposure} +2), \ldots ,h_{f} (M-1)\}\). The corresponding probability density functions (pdf) of these two components are defined as

Analogously, the cumulative distribution functions (cdf) of components \(L^{*}_u\) and \(L^{*}_o\) are defined as

In order to equalize (Gonzalez and Woods 2008) the lightness components \(L^{*}_u\) and \(L^{*}_o\) separately, two transformation functions \(f_u (l)\) and \(f_o (l)\) are established as
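A standard bi-histogram form of these quantities, written here as an assumption consistent with the sub-histogram definitions above (\(N_u\) and \(N_o\) are introduced as the total fuzzy counts of each part), is:

\[
\begin{aligned}
P_u(l) &= \frac{h_f(l)}{N_u}, & N_u &= \sum_{k=0}^{T_{exposure}} h_f(k), & 0 &\le l \le T_{exposure},\\
P_o(l) &= \frac{h_f(l)}{N_o}, & N_o &= \sum_{k=T_{exposure}+1}^{M-1} h_f(k), & T_{exposure} &< l \le M-1,\\
C_u(l) &= \sum_{k=0}^{l} P_u(k), & C_o(l) &= \sum_{k=T_{exposure}+1}^{l} P_o(k), &&\\
f_u(l) &= T_{exposure}\, C_u(l), & f_o(l) &= (T_{exposure}+1) + \bigl(M-2-T_{exposure}\bigr)\, C_o(l). &&
\end{aligned}
\]

Since \(C_u\) and \(C_o\) both end at 1, \(f_u\) maps the underexposed part onto \([0, T_{exposure}]\) and \(f_o\) maps the overexposed part onto \([T_{exposure}+1, M-1]\), so the two equalized parts never overlap.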

The two equalized lightness components are then integrated to obtain the resultant enhanced image.
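Steps (ii) to (iv) can be put together in one self-contained sketch acting on an \(L^{*}\) array. The following choices are assumptions rather than the authors' exact formulation: a Gaussian membership with a fixed \(\sigma\), exposure normalized by the highest level with \(T = \mathrm{round}(l_{\max}(1-exposure))\), and BBHE-style range mapping of each sub-histogram. The function name is hypothetical.

```python
import numpy as np


def fuzzycie_lightness(L_star, sigma=1.0, levels=256):
    """Sketch of FuzzyCIE steps (ii)-(iv) on an L* array with values in [0, 100]."""
    top = levels - 1  # highest lightness level on the 0..255 scale
    # Step (ii): quantize L* to 0..top and build a Gaussian fuzzy histogram.
    Lq = np.clip(np.round(L_star * top / 100.0), 0, top).astype(int)
    crisp = np.bincount(Lq.ravel(), minlength=levels).astype(float)
    l = np.arange(levels)
    mu = np.exp(-((l[:, None] - l[None, :]) ** 2) / (2.0 * sigma ** 2))
    h_f = crisp @ mu
    # Step (iii): effect of exposure and the threshold T (kept below top).
    exposure = np.dot(l, h_f) / (top * h_f.sum())
    T = min(int(round(top * (1.0 - exposure))), top - 1)
    # Step (iv): equalize each sub-histogram within its own range.
    lut = np.arange(levels, dtype=float)  # identity for an empty part
    lo, hi = h_f[: T + 1], h_f[T + 1:]
    if lo.sum() > 0:
        lut[: T + 1] = T * np.cumsum(lo) / lo.sum()
    if hi.sum() > 0:
        lut[T + 1:] = (T + 1) + (top - T - 1) * np.cumsum(hi) / hi.sum()
    # Integrate both equalized parts and rescale back to the L* range.
    return lut[Lq] * 100.0 / top
```

The returned \(L^{*}\) would then be recombined with the untouched \(a^{*}\) and \(b^{*}\) channels and converted back to RGB, as in step (v). Because the look-up table is non-decreasing, the ordering of lightness values is preserved.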

3 Experimental results and analysis

A novel dataset has been constructed from scratch by taking images from the Kodak lossless true colour image suite and generating colour images at different exposure levels from them. Experiments were carried out on a variety of images, and the results were broadly similar in nature. To demonstrate the performance, five typical images from different categories are therefore taken, and the results are evaluated under the same settings and indices. These original images are named ‘hat’, ‘raft’, ‘lighthouse’, ‘motorcross’ and ‘woman’, and are displayed in Fig. 2. Consecutive exposure levels were generated from these images by compressing their lightness histograms towards zero, so that the effect of exposure index decreases by approximately \(30\%\) from the input. Mathematically, if the effect of exposure index at level i is \(exposure_{i}\), then
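Reading the approximately \(30\%\) decrease as relative to the preceding level is an assumption, but a drop of \(0.3\,exposure_0\) per level would already be negative at level 4, while levels up to 5 are used in Sect. 3.2. Under the multiplicative reading, the relation takes the form

\[
exposure_i \approx 0.7\, exposure_{i-1} \quad\Longrightarrow\quad exposure_i \approx (0.7)^i\, exposure_0, \qquad i = 1, 2, 3, \ldots
\]

so that level 5 would retain roughly \(0.7^5 \approx 0.17\) of the original exposure index.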

Here, \(exposure_0\) is the effect of exposure on the lightness of the original image. For convenience and uniformity, the test images with low-exposure values have been generated with Adobe Photoshop Elements 11. At each subsequent level i (\(i = 1, 2, 3, \ldots \)), the quality of an image becomes poorer and it steadily loses its natural appearance. This mechanism allows testing the capability of the algorithms to enhance images from different degrees of low exposure.

Using the generated dataset, the relative performance of FuzzyCIE has been analysed with respect to HE, MMBEBHE, BPDFHE and MMSICHE. Care has been taken so that every compared algorithm represents a different family of techniques. Keeping in mind the principal aim of FuzzyCIE, a novel testing strategy has also been devised with quantitative indices.

3.1 Quantitative analysis

Three different indices have been used for the quantitative assessment of FuzzyCIE. Entropy (Wang and Ye 2005), structural similarity (Wang et al. 2004) and feature similarity (Zhang et al. 2011) provide a thorough evaluation of the enhanced images, as shown in Tables 1, 2 and 3. The formulations of these quantitative indices are briefly stated in the Appendix. Using these indices, results are given for the test (input) images (i.e. images at different levels of exposure) and for their versions enhanced by the compared algorithms and by the proposed technique.

For MSSIM and FSIM computation, the original image (i.e. the image at normal exposure) is taken as the reference image. Therefore, MSSIM and FSIM are always computed between the images enhanced from the low-exposure test images and the original images with normal exposure values (rather than the input test images). This shows how well each algorithm enhances an image starting from its low-exposure version, and how closely the enhanced images match the original image in terms of structural and feature similarity.

Table 1 displays the entropy values of the input images and their enhanced versions. FuzzyCIE retains the highest amount of image detail in every instance. An MSSIM value (\(0\le \mathrm{MSSIM} \le 1\)) closer to 1 denotes higher structural similarity between the two compared images. Table 2 presents the MSSIM values of the low-exposure test images, along with those of their enhanced versions (computed w.r.t. their original versions). The MSSIM values of FuzzyCIE are again found to be higher than those of the other compared algorithms, indicating its superiority. Similarly, an FSIM value (\(0\le \mathrm{FSIM} \le 1\)) closer to 1 indicates higher feature similarity between the compared images. Table 3 exhibits the FSIM values of the input low-exposure images and their enhanced versions (computed w.r.t. the normal-exposure instances). Again, the corresponding values of FuzzyCIE are found to be better than those of the compared algorithms.

The quantitative results show that FuzzyCIE generates predominantly better-quality images than the other compared techniques. It can also be observed from Tables 2 and 3 that FuzzyCIE produces MSSIM and FSIM values very close to 1, which underlines its ability to generate output images almost identical to the originals from their low-exposure versions. Moreover, FuzzyCIE continues to produce better results as the exposure levels decrease. In other words, even when the degree of degradation in the test images becomes higher, as observed from column (B) of Tables 1, 2 and 3, the FuzzyCIE-enhanced results show negligible changes, as observed from the last columns of Tables 1, 2 and 3, unlike those of the other compared algorithms. This further establishes the consistency of FuzzyCIE.

3.2 Qualitative analysis

For visual evaluation, the test images and their enhanced results, along with the corresponding histograms, are depicted in Figs. 3, 4, 5, 6 and 7. These test images portray exposure levels 2, 3, 4 and 5 for all five images. Here, exposure level i refers to the effect of exposure index at level i, determined using equation 11.

The results produced by HE in Figs. 3b, 4b, 5b, 6b and 7b columns suffer from excessive enhancement, which damages the natural appearance of the images. For example, the colour of the hats in Fig. 3b column displays intensity saturation. It can be noticed from Figs. 3c, 4c, 5c, 6c and 7c columns that MMBEBHE not only fails to enhance the image but also introduces abnormal artefacts in all cases; the spots in the waterbody and on the raft in Fig. 4c column are just a few such instances. BPDFHE is hardly able to produce any enhancement in Figs. 3d, 4d, 5d, 6d and 7d columns. Moreover, it exhibits unnatural noise artefacts, as on the walls of the lighthouse and houses in Fig. 5d column. Similarly, MMSICHE produces unsatisfactory results throughout Figs. 3e, 4e, 5e, 6e and 7e columns, with abnormal artefacts as well as excessive enhancement in patches (such as in the metal frames of Fig. 6e column and in the cheeks and the necklace of Fig. 7e column).

On the other hand, the results generated by FuzzyCIE are better in every aspect. The images in Figs. 3f, 4f, 5f, 6f and 7f columns provide visual evidence of the more effective enhancement by this algorithm. The greatest challenge for any enhancement algorithm is to maintain the natural appearance of the images while enhancing them from low-quality versions. The proposed algorithm succeeds in this, even for input images under very low-exposure conditions, in comparison with several state-of-the-art algorithms. The corresponding histograms also show that FuzzyCIE closely emulates the histograms of the original images in Fig. 2. Moreover, as the exposure levels of the test images decrease, the results computed by FuzzyCIE remain free from unwanted visual artefacts and intensity saturation, whereas for the compared algorithms the occurrence of such deformities increases with subsequent exposure levels. This supports the claim that even at extremely low exposure, FuzzyCIE is able to produce satisfactory enhancements that are very close to the original.

To further show the performance of FuzzyCIE, two naturally camera-captured low-exposure images and their enhanced versions are displayed in Figs. 8 and 9. The ‘sea’ image in Fig. 8 and the ‘garden’ image in Fig. 9 are both visibly captured under low exposure. The enhanced images produced by the other algorithms contain considerable visual artefacts and unnatural brightness in patches, whereas Figs. 8f and 9f, generated by FuzzyCIE, are free from these effects. Quantitatively, the entropies of the ‘sea’ and ‘garden’ images enhanced by FuzzyCIE are 6.922 and 7.442, respectively. The entropies of the images enhanced by the other algorithms (6.896 by HE, 5.878 by MMBEBHE, 6.025 by BPDFHE and 6.584 by MMSICHE for the ‘sea’ image; 7.405 by HE, 5.806 by MMBEBHE, 5.062 by BPDFHE and 5.106 by MMSICHE for the ‘garden’ image) are lower than those of the FuzzyCIE-enhanced images. As the normal-exposure versions of these images (ground truths) are not available, MSSIM and FSIM could not be computed in these cases.

FuzzyCIE also produces superior results for low-exposure medical images and remotely sensed images. One example from each of these categories is shown in Figs. 10 and 11, respectively. The FuzzyCIE-enhanced retinal fundus image in Fig. 10f is free from visual artefacts, while the images enhanced by the other algorithms contain patches of unnatural brightness. Important features of the retina image (such as the blood vessels) are properly enhanced only by FuzzyCIE. Similarly, the FuzzyCIE-enhanced ‘Kolkata’ image in Fig. 11f displays higher visual quality than the images enhanced by the other algorithms. Moreover, unlike the other compared algorithms, FuzzyCIE achieves marked enhancement of the major features of the image. For example, the road next to the river in the top-left corner of the image is clearly visible and free from unnatural brightness only in the FuzzyCIE-enhanced image. These results further suggest that FuzzyCIE is well suited to preprocessing low-exposure medical and remotely sensed images.

In Fig. 12, results generated by three instances of FuzzyCIE are displayed, in which three different membership functions (Gaussian, triangular and trapezoidal) have been used to estimate the fuzzy histogram. The choice of membership function was observed to have no significant effect on the enhanced images, and the computed values of entropy, MSSIM and FSIM for FuzzyCIE with the different membership functions are almost the same. Therefore, any of these membership functions can be used to compute the fuzzy histogram. In the present work, the Gaussian membership function has been used.
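A sketch of how the three membership functions could be plugged into the same fuzzy-histogram computation. The shapes are standard (symmetric in the distance \(d\) between levels), but the parameter values (`sigma`, `half_width`, `core`, `support`) and function names are hypothetical, since the text does not report them; \(L^{*}\) is again assumed to be scaled to integer levels 0–255.

```python
import numpy as np

# Each membership function takes d = (level - l) and returns a degree in [0, 1].
def gaussian_mf(d, sigma=2.0):
    return np.exp(-(d ** 2) / (2.0 * sigma ** 2))


def triangular_mf(d, half_width=3.0):
    return np.clip(1.0 - np.abs(d) / half_width, 0.0, 1.0)


def trapezoidal_mf(d, core=1.0, support=4.0):
    # Full membership for |d| <= core, linear fall-off to 0 at |d| = support.
    return np.clip((support - np.abs(d)) / (support - core), 0.0, 1.0)


def fuzzy_histogram(L_scaled, mf, levels=256):
    """h_f(l) = sum over pixels of mf(L(x, y) - l), for integer levels 0..levels-1."""
    crisp = np.bincount(L_scaled.ravel(), minlength=levels).astype(float)
    l = np.arange(levels, dtype=float)
    return crisp @ mf(l[:, None] - l[None, :])
```

Usage: `{mf.__name__: fuzzy_histogram(Lq, mf) for mf in (gaussian_mf, triangular_mf, trapezoidal_mf)}` produces the three histograms for one quantized \(L^{*}\) array `Lq`. All three spread each pixel's contribution over a few neighbouring levels in a similar way, which is consistent with the observation that the choice has little effect.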

4 Conclusion

A novel enhancement algorithm, FuzzyCIE, has been developed for low-exposure colour images. A fusion strategy was adopted in its design to overcome the shortcomings of previous methods in this domain. The input colour images were considered in \(L^{*}a^{*}b^{*}\) space, and only their \(L^{*}\) components were enhanced so as to preserve the colour-opponent dimensions. Therefore, the enhanced images could maintain their natural appearance. FuzzyCIE was found to be inherently free from the gamut problem owing to the low-exposure values of the images. Estimating the fuzzy histogram of the \(L^{*}\) component allowed FuzzyCIE to handle the innate vagueness of the lightness values, which helped preserve more structural and feature information. The thresholding based on the effect of exposure index then channelled the equalization towards the condensed portion of the histogram. Finally, the equalization of the sub-histograms performed the desired enhancement. Detailed quantitative and qualitative analyses support the superiority of FuzzyCIE over other state-of-the-art algorithms in the literature.

Although the principal aim of developing this algorithm is to address low-light photography issues in consumer electronics, the proposed technique can also be applied to medical imaging, remotely sensed imaging, etc. Future research includes extending this concept to high-exposure images, taking care of the gamut problems likely to arise in that scenario.

References

Azetsu T, Suetake N (2019) Hue-preserving image enhancement in CIELAB color space considering color gamut. Opt Rev 26:1–12

Bhandari AK, Muarya S (2019) Cuckoo search algorithm-based brightness preserving histogram scheme for low-contrast image enhancement. Soft Comput 1–27

Bianco S, Cusano C, Piccoli F, Schettini R (2019) Learning parametric functions for color image enhancement. In: International workshop on computational color imaging. Springer, New York, pp 209–220

Bora DJ (2018) An ideal approach for medical color image enhancement. In: Advanced computational and communication paradigms. Springer, New York, pp 351–361

Cai X, Ma J, Wu C, Ma Y (2018) Low-illumination color image enhancement using intuitionistic fuzzy sets. In: The Euro-China conference on intelligent data analysis and applications, pp 199–209

Cepeda-Negrete J, Sanchez-Yanez RE, Correa-Tome FE, Lizarraga-Morales RA (2018) Dark image enhancement using perceptual color transfer. IEEE Access 6:14935–14945

Chen SD, Ramli AR (2003a) Contrast enhancement using recursive mean-separate histogram equalization for scalable brightness preservation. IEEE Trans Consum Electron 49(4):1301–1309. https://doi.org/10.1109/TCE.2003.1261233

Chen SD, Ramli AR (2003b) Minimum mean brightness error bihistogram equalization in contrast enhancement. IEEE Trans Consum Electron 49(4):1310–1319. https://doi.org/10.1109/TCE.2003.1261234

Chiang CY, Chen KS, Chu CY, Chang YL, Fan KC (2018) Color enhancement for four-component decomposed polarimetric SAR image based on a CIE-Lab encoding. Remote Sens 10(4):545

Gonzalez RC, Woods RE (2008) Digital image processing, 3rd edn. Prentice Hall Inc., Upper Saddle River

Gupta B, Tiwari M (2019) Color retinal image enhancement using luminosity and quantile based contrast enhancement. Multidimens Syst Signal Process 1–9

Hanmandlu M, Verma OP, Kumar NK, Kulkarni M (2009) A novel optimal fuzzy system for color image enhancement using bacterial foraging. IEEE Trans Instrum Meas 58(8):2867–2879. https://doi.org/10.1109/TIM.2009.2016371

Jawahar CV, Ray AK (1996) Incorporation of gray-level imprecision in representation and processing of digital images. Pattern Recognit Lett 17(5):541–546. https://doi.org/10.1016/0167-8655(96)00002-5

Jia X, Feng X, Wang W, Zhang L (2018) An extended variational image decomposition model for color image enhancement. Neurocomputing 322:216–228

Kim YT (1997) Contrast enhancement using brightness preserving bihistogram equalization. IEEE Trans Consum Electron 43(1):1–8. https://doi.org/10.1109/30.580378

Kumar S, Pant M, Ray AK (2018) DE-IE: differential evolution for color image enhancement. Int J Syst Assur Eng Manag 9(3):577–588

Lecca M (2018) STAR: a segmentation-based approximation of point-based sampling Milano retinex for color image enhancement. IEEE Trans Image Process 27(12):5802–5812

Ma S, Ma H, Xu Y, Li S, Lv C, Zhu M (2018) A low-light sensor image enhancement algorithm based on HSI color model. Sensors 18(10):3583

Mahapatra PK, Ganguli S, Kumar A (2015) A hybrid particle swarm optimization and artificial immune system algorithm for image enhancement. Soft Comput 19(8):2101–2109

Ooi CH, Isa NAM (2010) Quadrants dynamic histogram equalization for contrast enhancement. IEEE Trans Consum Electron 56(4):2552–2559. https://doi.org/10.1109/TCE.2010.5681140

Ooi CH, Kong NSP, Ibrahim H (2009) Bi-histogram equalization with a plateau limit for digital image enhancement. IEEE Trans Consum Electron 55(4):2072–2080. https://doi.org/10.1109/TCE.2009.5373771

Sheet D, Garud H, Suveer A, Mahadevappa M, Chatterjee J (2010) Brightness preserving dynamic fuzzy histogram equalization. IEEE Trans Consum Electron 56(4):2475–2480. https://doi.org/10.1109/TCE.2010.5681130

Sim KS, Tso CP, Tan YY (2007) Recursive sub-image histogram equalization applied to grey scale images. Pattern Recognit Lett 28(10):1209–1221. https://doi.org/10.1016/j.patrec.2007.02.003

Singh K, Kapoor R (2014) Image enhancement via median–mean based sub-image-clipped histogram equalization. OPTIK Int J Light Electron Opt 125(17):4646–4651. https://doi.org/10.1016/j.ijleo.2014.04.093

Sinha RK, Subudhi P, Mukhopadhyay S (2018) A morphological color image contrast enhancement technique using Hilbert 3D space filling curve. In: Advanced computational and communication paradigms. Springer, New York, pp 453–463

Tao F, Yang X, Wu W, Liu K, Zhou Z, Liu Y (2018) Retinex-based image enhancement framework by using region covariance filter. Soft Comput 22(5):1399–1420

Tian QC, Cohen LD (2018) A variational-based fusion model for non-uniform illumination image enhancement via contrast optimization and color correction. Signal Process 153:210–220

Tseng CC, Lee SL (2018) A low-light color image enhancement method on CIELAB space. In: 2018 IEEE 7th global conference on consumer electronics (GCCE), pp 141–142

Veluchamy M, Subramani B (2019) Image contrast and color enhancement using adaptive gamma correction and histogram equalization. Optik 183:329–337

Wan Y, Chen Q, Zhang BM (1999) Image enhancement based on equal area dualistic sub-image histogram equalization method. IEEE Trans Consum Electron 45(1):68–75. https://doi.org/10.1109/30.754419

Wang BJ, Liu SQ, Li Q, Zhou HX (2006) A real-time contrast enhancement algorithm for infrared images based on plateau histogram. Infrared Phys Technol 48(1):77–82. https://doi.org/10.1016/j.infrared.2005.04.008

Wang C, Ye Z (2005) Brightness preserving histogram equalization with maximum entropy: a variational perspective. IEEE Trans Consum Electron 51(4):1326–1334. https://doi.org/10.1109/TCE.2005.1561863

Wang Z, Bovik AC, Sheikh HR, Simoncelli EP (2004) Image quality assessment: from error visibility to structural similarity. IEEE Trans Image Process 13(4):600–612. https://doi.org/10.1109/TIP.2003.819861

Yu H, Inoue K, Hara K, Urahama K (2018) Saturation improvement in hue-preserving color image enhancement without gamut problem. ICT Express 4(3):134–137

Zhang L, Zhang L, Mou X, Zhang D (2011) FSIM: a feature similarity index for image quality assessment. IEEE Trans Image Process 20(8):2378–2386. https://doi.org/10.1109/TIP.2011.2109730

Zhang M, Zou F, Zheng J (2017) The linear transformation image enhancement algorithm based on HSV color space. In: Advances in intelligent information hiding and multimedia signal processing. Springer, New York, pp 19–27

Ethics declarations

Conflict of interest

The authors declare that they have no conflict of interest.

Ethical approval

This article does not contain any studies with human participants or animals, performed by any of the authors.

Additional information

Communicated by V. Loia.

Publisher's Note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Appendix

Entropy

The expected value (average) of the information content in an image is estimated by its entropy. It is defined as

where p(i) denotes the probability of intensity i and N is the number of grey levels of the considered image. The higher the entropy, the more information content (Wang and Ye 2005) is preserved in the output image.
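The standard form of this definition is \(H=-\sum_{i=0}^{N-1}p(i)\log_2 p(i)\); the base-2 logarithm (entropy in bits) is assumed here. A minimal NumPy sketch for a single 8-bit channel follows; how the entropy of a colour image was aggregated is not stated, so that step is left out, and the function name is hypothetical.

```python
import numpy as np


def entropy(img, levels=256):
    """Shannon entropy (bits) of an integer image with values in 0..levels-1."""
    p = np.bincount(img.ravel(), minlength=levels) / img.size
    p = p[p > 0]  # terms with p(i) = 0 contribute 0 (0 * log 0 := 0)
    return float(-(p * np.log2(p)).sum())
```

A flat 256-level histogram gives the maximum of 8 bits, and a constant image gives 0.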

Mean structural similarity index (MSSIM)

MSSIM assesses the structural similarity (Wang et al. 2004) criterion of an image, with respect to another, by simulating traits of the human visual system (HVS).

Here, \(I_r\) and \(I_e\) are the reference and the enhanced images, respectively, K is the total count of the image windows used for measurement and \(r_i\) and \(e_i\) are the sub-images contained in the ith windows of \(I_r\) and \(I_e\), respectively. The structural similarity index (SSIM) evaluates the luminance, contrast and correlation factors (Wang et al. 2004) of the image. It is represented as

where \(\mathcal {L}(r_i,e_i)\), \(\mathcal {C}(r_i,e_i)\) and \(\mathcal {S}(r_i,e_i)\) are the luminance, contrast and correlation factors of the image, respectively, and \(\alpha , \beta \), \(\gamma \) are the three parameters employed to tune the relative importance of these three factors (here, for experiments, \(\alpha = \beta = \gamma = 1 \) is chosen).
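With the usual definitions of Wang et al. (2004), \(\mathrm{MSSIM}(I_r,I_e)=\frac{1}{K}\sum_{i=1}^{K}\mathrm{SSIM}(r_i,e_i)\) and \(\mathrm{SSIM}(r_i,e_i)=\mathcal{L}^{\alpha}\mathcal{C}^{\beta}\mathcal{S}^{\gamma}\). With \(\alpha=\beta=\gamma=1\) and the common choice \(C_3=C_2/2\), SSIM reduces to \(\frac{(2\mu_r\mu_e+C_1)(2\sigma_{re}+C_2)}{(\mu_r^2+\mu_e^2+C_1)(\sigma_r^2+\sigma_e^2+C_2)}\). The sketch below uses that reduced form with \(C_1=(0.01\cdot 255)^2\) and \(C_2=(0.03\cdot 255)^2\), but averages over non-overlapping 8×8 windows, a simplification of the 11×11 Gaussian sliding window of the reference implementation, so its values will not match published MSSIM figures exactly. Window size and function names are assumptions.

```python
import numpy as np


def ssim_window(r, e, data_range=255.0, k1=0.01, k2=0.03):
    """SSIM of one window with alpha = beta = gamma = 1 and C3 = C2 / 2."""
    c1, c2 = (k1 * data_range) ** 2, (k2 * data_range) ** 2
    mr, me = r.mean(), e.mean()
    vr, ve = r.var(), e.var()
    cov = ((r - mr) * (e - me)).mean()
    return ((2 * mr * me + c1) * (2 * cov + c2)) / ((mr ** 2 + me ** 2 + c1) * (vr + ve + c2))


def mssim(ref, enh, win=8, data_range=255.0):
    """Mean SSIM over K non-overlapping win x win windows (simplified)."""
    ref, enh = ref.astype(float), enh.astype(float)
    h, w = ref.shape
    scores = [ssim_window(ref[i:i + win, j:j + win], enh[i:i + win, j:j + win], data_range)
              for i in range(0, h - win + 1, win)
              for j in range(0, w - win + 1, win)]
    return float(np.mean(scores))
```

As in the evaluation described in Sect. 3.1, `ref` would be the normal-exposure original and `enh` the enhanced image, both as single-channel 8-bit arrays.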

Feature similarity index (FSIM)

Based upon the intuition that HVS appraises an image by means of its low-level features (Zhang et al. 2011), the feature similarity index (FSIM) blends phase congruency (PC) and image gradient magnitude to compare the two images. It is defined as

Here, S(i) is the similarity between the reference (\(I_r\)) and the enhanced (\(I_e\)) images at location i, and \(PC_{\max }(i)\) is the larger of the PC values of \(I_r\) and \(I_e\) at that location.
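The pooling step is usually written as \(\mathrm{FSIM}=\sum_{i}S(i)\,PC_{\max}(i)\big/\sum_{i}PC_{\max}(i)\), where \(S(i)\) multiplies a phase-congruency similarity and a gradient-magnitude similarity, each of the form \((2ab+T)/(a^2+b^2+T)\). The sketch below covers only this pooling and assumes the phase-congruency and gradient-magnitude maps are already computed (phase congruency itself requires a log-Gabor filter bank). Zhang et al. (2011) fix the stabilizing constants \(T_1\) and \(T_2\); here they are plain parameters, and the values in the check are only illustrative. The function name is hypothetical.

```python
import numpy as np


def fsim_pool(pc_r, pc_e, g_r, g_e, t1, t2):
    """Pool precomputed phase-congruency (pc_*) and gradient (g_*) maps into FSIM."""
    s_pc = (2 * pc_r * pc_e + t1) / (pc_r ** 2 + pc_e ** 2 + t1)
    s_g = (2 * g_r * g_e + t2) / (g_r ** 2 + g_e ** 2 + t2)
    s = s_pc * s_g                   # S(i), with both exponents set to 1
    pc_max = np.maximum(pc_r, pc_e)  # PC_max(i) weights each location
    return float((s * pc_max).sum() / pc_max.sum())
```

Because each similarity term is at most 1 and equals 1 only where the two maps agree, identical maps give FSIM = 1, and locations with strong phase congruency dominate the average.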

Cite this article

Mandal, S., Mitra, S. & Shankar, B.U. FuzzyCIE: fuzzy colour image enhancement for low-exposure images. Soft Comput 24, 2151–2167 (2020). https://doi.org/10.1007/s00500-019-04048-6

DOI: https://doi.org/10.1007/s00500-019-04048-6