Abstract

Given a collection of science-based computational models that all estimate states of the same environmental system, we compare the forecast skill of the average of the collection to the skills of the individual members. We illustrate our results through an analysis of regional climate model data and give general criteria for the average to perform more or less skillfully than the most skillful individual model, the “best” model. The average can be more skillful than the best model only if some of the individual models in the collection produce distinctly different forecasts; if the individual forecasts correspond closely, the average will not be as skillful as the best model.



1 Introduction

Scientific models of environmental systems are based on accepted physical, chemical and biological principles of energy and mass transfer. The goal of a science-based environmental system model is to approximate selected states of the system, and science-based computational models are often used to forecast system states. Weather prediction, climate studies, and analyses of groundwater flow and transport provide examples too numerous to list. The accuracy of model forecasts must be assessed when management and policy decisions are based on them. In general, forecasts are assessed by comparing predicted states to observations taken over given periods, locations, or both. A wide range of evaluation measures has been used for assessment, including correlations between observed states and forecasts (Epstein and Murphy 1989; Murphy 1989), anomaly correlation coefficients (Wilks 2005), ranked probability score (Epstein 1969; Murphy 1971), area under the relative (or receiver) operating characteristic curve (Swets 1973), Peirce skill score (Peirce 1884), potential predictability (Boer 2004), various information criteria (Neuman 2003; Ye et al. 2008), odds ratio skill score (Thornes and Stephenson 2001), and mean-square error (Wilks 2005), which is the basis of the square-error skill scores used in weather forecasting (Murphy 1989, 1996) and also the basis for the analysis in this paper.

Assessing model forecasts is complicated by the existence of alternative models that produce different estimates of system states. The question of how to accommodate differences among models naturally arises, and one response has been to average model forecasts, the idea being that an average can achieve a consensus among individuals that emphasizes their points of agreement. In both climate (Palmer et al. 2004; Latif et al. 2006) and groundwater applications (Ye et al. 2004, 2005, 2008; Beven 2006; Poeter and Anderson 2005), the (weighted) average of a collection of models seems to produce better forecasts than any individual model when evaluated by standard measures of skill. For example, the average of models is in general the “… ‘best’ model in its ability to simulate current climate, at least in terms of typical second order measures such as mean square differences, spatial correlation, and the ratio of variances” (Boer 2004). Nonetheless, the reasons for this are not fully understood (Latif et al. 2006).

We use observed mean-square error,

\[ \text{MSE}(X, Y) = \frac{1}{T} \sum_{t=1}^{T} \left( X_{t} - Y_{t} \right)^{2}, \tag{1} \]

to compare the relative skill of individual models and their averages because it is a standard measure of forecast skill (e.g., Murphy 1989, 1996; Epstein and Murphy 1989; Boer 2004; Palmer et al. 2004; Wilks 2005; Poeter and Anderson 2005; Ye et al. 2008). Observed MSE is a strong measure of skill in the sense that it directly compares model forecasts, \( X_{t} \in (X_{1}, \ldots, X_{T}) \), to observed state variables, \( Y_{t} \in (Y_{1}, \ldots, Y_{T}) \), obtained over an interval of length T. The observed variables may be direct observations of the system state or a related variable, for instance, some combination of the principal components of the observed state and/or model. From here on, “skill” means observed square-error skill.
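As a concrete reading of Eq. 1, the following is a minimal sketch that assumes forecasts and observations are plain, equal-length sequences of floats. The function name `observed_mse` is hypothetical and not taken from the paper.

```python
from typing import Sequence


def observed_mse(forecast: Sequence[float], observed: Sequence[float]) -> float:
    """Observed mean-square error (1/T) * sum_t (X_t - Y_t)**2, as in Eq. 1."""
    if len(forecast) != len(observed) or len(forecast) == 0:
        raise ValueError("forecast and observed must be non-empty and of equal length T")
    T = len(forecast)
    return sum((x - y) ** 2 for x, y in zip(forecast, observed)) / T


# Usage: a forecast that misses each of T = 4 observations by 0.5 has MSE 0.25.
print(observed_mse([1.5, 2.5, 3.5, 4.5], [1.0, 2.0, 3.0, 4.0]))  # 0.25
```

Because every squared miss is weighted equally, a few large errors dominate the score. This is the second-order behaviour that the rest of the analysis exploits.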

Our goal is to compare the skill of forecasts of a system state at t made by m = 1,…,M individual models, \( X^{(m)}_{t} \), to the skill of their average,

\[ \bar{X}_{t} = \sum_{m=1}^{M} w_{m} X^{(m)}_{t}, \qquad w_{m} \ge 0, \qquad \sum_{m=1}^{M} w_{m} = 1. \tag{2} \]

In many climate applications, e.g., Meehl et al. (2007), model weights are uniform, \( w_{m} = 1/M \), and we use uniform weights in our climate example (Sect. 3). However, uniform weights are not necessary. In geohydrology, e.g., Neuman (2003) and Ye et al. (2004, 2005, 2008), weights are often chosen by Bayesian methods. We make no assumptions about the weights beyond those stated in (2) to derive our general results (Sect. 4), which are therefore independent of the method used to choose weights. In some approaches, for instance Palmer et al. (2004), the values \( X^{(m)}_{t} \) are themselves the result of stochastic averaging, but that also does not affect our analysis. Krishnamurti and his colleagues have considered weighted ensembles in which some of the weights may be negative, but that approach raises several questions beyond the scope of this paper, so we consider only nonnegative weights.
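The weighted average and its two weight constraints can be sketched as follows. The function name `weighted_average` and the toy forecasts are hypothetical illustrations. The checks enforce only non-negativity and summation to 1, which mirrors the claim that the results do not depend on how the weights were chosen.

```python
import math
from typing import List, Sequence


def weighted_average(forecasts: Sequence[Sequence[float]],
                     weights: Sequence[float]) -> List[float]:
    """Weighted model average X_bar_t = sum_m w_m X_t^(m).

    Enforces only w_m >= 0 for every model and sum_m w_m = 1.
    """
    if len(forecasts) != len(weights):
        raise ValueError("need exactly one weight per model")
    if any(w < 0 for w in weights):
        raise ValueError("weights must be non-negative")
    if not math.isclose(sum(weights), 1.0, rel_tol=0.0, abs_tol=1e-12):
        raise ValueError("weights must sum to 1")
    T = len(forecasts[0])
    if any(len(f) != T for f in forecasts):
        raise ValueError("every model must forecast the same T states")
    return [sum(w * f[t] for w, f in zip(weights, forecasts)) for t in range(T)]


# Usage: uniform weights w_m = 1/M, as in the climate example.
models = [[1.0, 2.0], [3.0, 4.0]]
M = len(models)
print(weighted_average(models, [1.0 / M] * M))  # [2.0, 3.0]
```

Bayesian or other weights can be passed in unchanged, provided they satisfy the same two constraints.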

We show by example (Sect. 3) and analysis (Sect. 4) that a forecast produced by averaging outputs from a collection of M models can be more skillful than the forecast of every individual model, \( X^{(m)} \), only if the models in the collection do not correspond too closely. In other words, for the average to be more skillful than every \( X^{(m)} \), the collection must include distinctly different forecasts. Conversely, if the forecasts of individual models are too similar, the average will produce worse forecasts than the most skillful individual model. Intuitively, averaging in this case dilutes the best forecast with other, similar forecasts that are not as good.

2 Components of MSE(\( \bar{X},\;Y \))

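Writing \( Z^{(m)}_{t} = X^{(m)}_{t} - Y_{t} \) for the departure of model m from the observations, and using \( w_{m} \ge 0 \) for all m with \( \sum_{m} w_{m} = 1 \), so that \( \bar{Z}_{t} = \bar{X}_{t} - Y_{t} = \sum_{m} w_{m} Z^{(m)}_{t} \), the MSE of the average of a collection of models,

\[ \text{MSE}(\bar{X}, Y) = \sum_{m=1}^{M} w_{m}^{2}\, \text{MSE}(X^{(m)}, Y) + \sum_{m \ne m^{\prime}} w_{m} w_{m^{\prime}} R_{m,m^{\prime}}, \tag{3} \]

depends on the MSEs of the individual models, as well as the correspondences between models,

\[ R_{m,m^{\prime}} = \frac{1}{T} \sum_{t=1}^{T} Z^{(m)}_{t} Z^{(m^{\prime})}_{t}. \tag{4} \]

The correspondence has an obvious geometrical interpretation,

\[ R_{m,m^{\prime}} = \sqrt{\text{MSE}(X^{(m)}, Y)\, \text{MSE}(X^{(m^{\prime})}, Y)}\, \cos\theta_{m,m^{\prime}}, \tag{5} \]

due to its dependence on the angle, \( \theta_{m,m^{\prime}} \), between the vectors

\[ \vec{Z}^{(m)} = \left( Z^{(m)}_{1}, \ldots, Z^{(m)}_{T} \right) \tag{6} \]

and \( \vec{Z}^{(m^{\prime})} \) in \( \Re^{T} \). We use this to motivate our general results in Sect. 4. If the models are unbiased, i.e., \( E[X^{(m)}_{t}] = Y_{t} \), the correspondence is proportional to the correlation between the models' errors and has similar mathematical properties. It should be emphasized, however, that we treat the model results as a fixed set of outcomes and do not assume any additional probability structure. From now on we write \( \text{MSE}(\vec{Z}^{(m)}) = \text{MSE}(X^{(m)}, Y) \) when convenient.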
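The claim that the MSE of the average depends only on the individual MSEs and the pairwise correspondences can be checked numerically. The sketch below computes the correspondences from departures Z = X − Y and compares the direct MSE of the weighted average with the double sum over weights and correspondences. The helper names and the toy forecasts are hypothetical.

```python
import math


def correspondence(z_a, z_b):
    """R_{m,m'} = (1/T) * sum_t Z_t^(m) Z_t^(m') for departures Z = X - Y."""
    return sum(a * b for a, b in zip(z_a, z_b)) / len(z_a)


def mse_of_average(forecasts, weights, observed):
    """Direct computation: MSE of the weighted-average forecast against Y."""
    T = len(observed)
    avg = [sum(w * f[t] for w, f in zip(weights, forecasts)) for t in range(T)]
    return sum((a - y) ** 2 for a, y in zip(avg, observed)) / T


def mse_from_correspondences(forecasts, weights, observed):
    """sum_m sum_m' w_m w_m' R_{m,m'}; the diagonal terms R_{m,m} are the individual MSEs."""
    z = [[x - y for x, y in zip(f, observed)] for f in forecasts]
    M = len(z)
    return sum(weights[i] * weights[j] * correspondence(z[i], z[j])
               for i in range(M) for j in range(M))


observed = [0.0, 1.0, 2.0]
forecasts = [[0.5, 1.5, 1.0], [-0.5, 0.0, 2.5], [0.2, 1.2, 2.2]]
weights = [0.5, 0.25, 0.25]  # non-negative, sum to 1
direct = mse_of_average(forecasts, weights, observed)
via_R = mse_from_correspondences(forecasts, weights, observed)
print(math.isclose(direct, via_R))  # True
```

The identity holds only because the weights sum to 1. That condition is what lets the departure of the average be written as the weighted average of the departures.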

3 North American climate example

To illustrate these relationships, we consider the departures of model m from the observations in region i and winter month t,

\[ Z^{(m)}_{i,t} = X^{(m)}_{i,t} - Y_{i,t}, \tag{7} \]

produced by 19 global climate models (m = 1,…,19) when estimating normal winter temperature for Western North America (WNA), Central North America (CNA) and Eastern North America (ENA). The normal is defined for the period 1970–1999, and winter consists of December, January and February. The data are normalized by summing over the N winter months of the normal period 1970–1999,

\[ Z^{(m)}_{i} = \frac{1}{N} \sum_{t=1}^{N} Z^{(m)}_{i,t}. \tag{8} \]

Here we use i = WNA, CNA, or ENA instead of t to emphasize that the data are normalized and range over regions.

The model results are taken from the coordinated modeling effort supporting the Intergovernmental Panel on Climate Change (IPCC) Fourth Assessment Report (Meehl et al. 2007). The model output fields were re-gridded to a common 5° grid and compared to the observational data set from the Climatic Research Unit (CRU), University of East Anglia, and the Hadley Centre, UK Met Office (Jones et al. 1999). More details of these data and a global analysis of this multi-model sample can be found in Tebaldi et al. (2005), Tebaldi and Knutti (2007), Jun et al. (2008) and Knutti et al. (2008). The regions WNA, CNA, and ENA are a subset of the Giorgi and Mearns (2002) divisions.

The model departures from regional normals and MSEs are shown in Table 1, where models correspond to rows and regions to columns. The last two rows are average departures over (1) the full suite of models, \( \bar{Z}_{19} \), including the most skillful, and (2) the suite of models with the most skillful left out, \( \bar{Z}_{18} \). As noted, models are uniformly weighted in this section, \( w_{m} = 1/M \) for all m, where M = 18 or 19 depending on which average is being considered.

Looking at the suite of all 19 models first, the average, \( \bar{X}_{19} \), is not as skillful as the best model, m = 16, for these data: \( \text{MSE}(X^{(\min_{19})}, Y) = \text{MSE}(X^{(16)}, Y) = 0.08 \), while \( \text{MSE}(\bar{X}_{19}, Y) = 0.39 \). When model 16 is removed from the set, the best remaining model is m = 2, with \( \text{MSE}(X^{(\min_{18})}, Y) = \text{MSE}(X^{(2)}, Y) = 0.47 \), while the average of the reduced set yields \( \text{MSE}(\bar{X}_{18}, Y) = 0.45 \). These two cases illustrate the sensitivity of model evaluations to the set of models. Other factors, such as the specific observation interval and the choice of regions, may also affect these results; our analysis does not address them.
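The comparison in this paragraph pits the best model against the uniform average, first for the full suite and then with the best model left out. It can be scripted as below. The departures are invented toy numbers for three regions, not the Table 1 values, and the helper names are hypothetical.

```python
def mse(z):
    """Observed MSE of a departure vector Z = X - Y."""
    return sum(v * v for v in z) / len(z)


def best_vs_average(departures):
    """Return (index of best model, its MSE, MSE of the uniform average)."""
    M = len(departures)
    T = len(departures[0])
    # With uniform weights w_m = 1/M, the departure of the average is the average departure.
    z_bar = [sum(z[t] for z in departures) / M for t in range(T)]
    scores = [mse(z) for z in departures]
    best = min(range(M), key=scores.__getitem__)
    return best, scores[best], mse(z_bar)


# Hypothetical departures for three regions; model 0 is much better than the rest.
full = [[0.1, -0.1, 0.1], [1.0, 1.2, 0.8], [-1.0, -0.6, -1.4], [0.9, 0.7, 1.1]]
print(best_vs_average(full))
reduced = full[1:]  # leave the best model out, analogous to the 18-model suite
print(best_vs_average(reduced))
```

With these toy numbers, the average is less skillful than model 0 in the full set (about 0.058 versus 0.01). Once model 0 is removed, however, the average (about 0.10) beats every remaining model, the best of which scores about 0.84. This mirrors the qualitative pattern reported for the 19- and 18-model suites.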

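Additional insight can be gained by decomposing the difference between the MSE of the average and that of the best model,

\[ \text{MSE}(\bar{Z}) - \text{MSE}(\vec{Z}^{(\min)}) = m^{2} + r, \tag{9} \]

where

\[ m^{2} = \sum_{m} w_{m}^{2} \left[ \text{MSE}(\vec{Z}^{(m)}) - \text{MSE}(\vec{Z}^{(\min)}) \right] \ge 0, \qquad r = \sum_{m \ne m^{\prime}} w_{m} w_{m^{\prime}} \left[ R_{m,m^{\prime}} - \text{MSE}(\vec{Z}^{(\min)}) \right], \]

which holds because \( \sum_{m} \sum_{m^{\prime}} w_{m} w_{m^{\prime}} = 1 \). This makes clear that the model average is more skillful than the best model when \( r < -m^{2} \), i.e., when the models do not correspond too much. Values of r and \( m^{2} \) corresponding to the full suite of nineteen models with model 16 included are r = 0.16 and \( -m^{2} = -0.15 \) (Table 2). This is a case where the best model is so much more skillful than all others that averaging only dilutes its individual skill. When model 16 is removed, there is enough disagreement among the remaining models for the average to perform better than any of them: r = −0.17 and \( -m^{2} = -0.15 \) (Table 2).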
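The split of MSE(Z̄) − MSE(Z^(min)) into a non-negative diagonal term m² and an off-diagonal correspondence term r can be sketched as follows. The sketch assumes m² = Σ_m w_m² [MSE_m − MSE_min] and r = Σ_{m≠m′} w_m w_m′ [R_{m,m′} − MSE_min]. The function name `decompose` and the toy departures are hypothetical.

```python
import math


def decompose(departures, weights):
    """Split MSE(Z_bar) - MSE(Z^(min)) into m2 (diagonal) and r (off-diagonal) terms.

    m2 = sum_m w_m^2 [MSE_m - MSE_min]                  (always >= 0)
    r  = sum_{m != m'} w_m w_m' [R_{m,m'} - MSE_min]
    Assumes w_m >= 0 and sum_m w_m = 1.
    """
    T = len(departures[0])
    M = len(departures)

    def R(a, b):  # correspondence (1/T) * sum_t Z_t^(a) Z_t^(b)
        return sum(x * y for x, y in zip(departures[a], departures[b])) / T

    mse_min = min(R(m, m) for m in range(M))
    m2 = sum(weights[m] ** 2 * (R(m, m) - mse_min) for m in range(M))
    r = sum(weights[a] * weights[b] * (R(a, b) - mse_min)
            for a in range(M) for b in range(M) if a != b)
    z_bar = [sum(w * z[t] for w, z in zip(weights, departures)) for t in range(T)]
    diff = sum(v * v for v in z_bar) / T - mse_min
    return m2, r, diff


deps = [[0.1, -0.1, 0.1], [1.0, 1.2, 0.8], [-1.0, -0.6, -1.4], [0.9, 0.7, 1.1]]
m2, r, diff = decompose(deps, [0.25] * 4)
# The average beats the best model exactly when r < -m2.
print(math.isclose(m2 + r, diff), r < -m2)  # True False
```

In this toy set, some pairs of models do anti-correspond (for example, R between models 1 and 2 is negative), yet r < −m² still fails. Some disagreement is therefore required, but it is not by itself enough. The figures reported in the text are consistent with the identity: 0.15 + 0.16 = 0.31 = 0.39 − 0.08 for the 19-model suite, and 0.15 − 0.17 = −0.02 = 0.45 − 0.47 for the 18-model suite.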

4 General results

Our general results consist of a sufficient condition for the model average to perform less skillfully than the best model, and a necessary condition for the average to perform better. Each result only requires that the weights be non-negative and sum to 1 (Eq. 2).

4.1 Result 1

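If the models correspond too closely, the average is less skillful than the best model. Intuitively, this is because the other models dilute the performance of the best model in this case. Referring to Eq. 9, it is clear that \( \text{MSE}(\bar{Z}) > \text{MSE}(\vec{Z}^{(\min)}) \) if \( R_{m,m^{\prime}} > \text{MSE}(\vec{Z}^{(\min)}) \) for all \( m \ne m^{\prime} \), because every off-diagonal term in the difference is then positive and the diagonal terms are non-negative.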

Another way for the best model to be more skillful than the average is for the best to be much more skillful than all the other models, i.e., \( \text{MSE}(\vec{Z}^{(\min)}) \ll \text{MSE}(\vec{Z}^{(m)}) \) for all m ≠ min, as was the case in the climate example. In that case, \( \text{MSE}(\bar{Z}) - \text{MSE}(\vec{Z}^{(\min)}) \cong \text{MSE}(\bar{Z}) \ge 0 \). However, Result 1 shows that one model need not be much more skillful than the rest for the average to be less skillful; it is enough for every pair of distinct models to correspond more strongly than \( \text{MSE}(\vec{Z}^{(\min)}) \), a level that depends only on the skill of the best model.
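Result 1 can be illustrated with a toy collection in which every model errs in the same direction. The sketch checks that R_{m,m′} > MSE(Z^(min)) for every pair m ≠ m′. It then confirms, over many random non-negative weightings that sum to 1, that the average is always less skillful than the best model. The departures and helper names are hypothetical.

```python
import random


def mse(z):
    return sum(v * v for v in z) / len(z)


def R(a, b):
    """Correspondence (1/T) * sum_t a_t b_t between two departure vectors."""
    return sum(x * y for x, y in zip(a, b)) / len(a)


def average_departure(departures, weights):
    T = len(departures[0])
    return [sum(w * z[t] for w, z in zip(weights, departures)) for t in range(T)]


# Hypothetical departures: all models err in the same direction; model 0 is the best.
deps = [[0.3, 0.3, 0.3], [0.5, 0.6, 0.4], [0.7, 0.5, 0.6]]
mse_min = min(mse(z) for z in deps)
close = all(R(deps[a], deps[b]) > mse_min
            for a in range(len(deps)) for b in range(len(deps)) if a != b)

rng = random.Random(0)
always_worse = True
for _ in range(1000):
    raw = [rng.random() + 1e-9 for _ in deps]  # strictly positive weights
    total = sum(raw)
    weights = [v / total for v in raw]         # normalized so they sum to 1
    if mse(average_departure(deps, weights)) <= mse_min:
        always_worse = False
print(close, always_worse)  # True True
```

No weighting rescues the average here. Every off-diagonal term in the double sum is positive, so the average can only move away from the best model.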

4.2 Result 2

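The average is more skillful than the best model only if some individual models do not correspond too much, i.e., only if \( R_{m,m^{\prime}} \le \text{MSE}(\vec{Z}^{(\min)}) \) for at least one pair \( m \ne m^{\prime} \). This follows from Result 1 by contraposition (modus tollens).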
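Result 2's necessary condition can be checked on a toy pair of models whose errors point in roughly opposite directions. The sketch uses hypothetical departures and helper names. It confirms that the uniform average beats both models and that at least one pair satisfies R_{m,m′} ≤ MSE(Z^(min)).

```python
def mse(z):
    return sum(v * v for v in z) / len(z)


def R(a, b):
    """Correspondence (1/T) * sum_t a_t b_t between two departure vectors."""
    return sum(x * y for x, y in zip(a, b)) / len(a)


def some_pair_anti_corresponds(departures):
    """Necessary condition of Result 2: R_{m,m'} <= MSE(Z^(min)) for some m != m'."""
    mse_min = min(mse(z) for z in departures)
    M = len(departures)
    return any(R(departures[a], departures[b]) <= mse_min
               for a in range(M) for b in range(M) if a != b)


# Hypothetical departures whose errors point in roughly opposite directions.
deps = [[0.4, -0.4, 0.4], [-0.5, 0.5, -0.3]]
z_bar = [(a + b) / 2 for a, b in zip(*deps)]  # uniform weights w_m = 1/2
avg_better = mse(z_bar) < min(mse(z) for z in deps)
print(avg_better, some_pair_anti_corresponds(deps))  # True True
```

The check runs in one direction only. Whenever `avg_better` is True, `some_pair_anti_corresponds` must also be True, but the converse does not hold in general.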

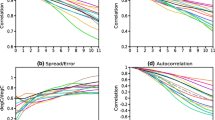

To emphasize the role of geometry, we illustrate the results in \( \Re^{T} \), the T-dimensional vector space of model forecasts (Figs. 1, 2). Letting \( \bar{Z} = (\bar{Z}_{1}, \ldots, \bar{Z}_{T}) \) and taking \( \vec{Z}^{(m)} \) from Eq. 6, the MSEs are proportional to squared lengths in \( \Re^{T} \), \( \text{MSE}(\bar{Z}) = \frac{1}{T} \| \bar{Z} \|^{2} \) and \( \text{MSE}(\vec{Z}^{(m)}) = \frac{1}{T} \| \vec{Z}^{(m)} \|^{2} \), while \( R_{m,m^{\prime}} = \sqrt{\text{MSE}(X^{(m)})\, \text{MSE}(X^{(m^{\prime})})}\, \cos\theta_{m,m^{\prime}} \) is the scaled scalar product \( \frac{1}{T}\, \vec{Z}^{(m)} \cdot \vec{Z}^{(m^{\prime})} \). In the figures, T = 3 for convenience of illustration, so each \( \vec{Z}^{(m)} = (Z^{(m)}_{1}, Z^{(m)}_{2}, Z^{(m)}_{3}) \). The thin vectors represent the performances of the individual models, while the thick vector is the average, \( \bar{Z} \); their squared lengths correspond to \( \text{MSE}(\vec{Z}^{(m)}) \) and \( \text{MSE}(\bar{Z}) \), respectively.
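For T = 3, as in the figures, the geometric reading can be verified directly. MSE is the squared length divided by T, and the correspondence equals both the scaled dot product and √(MSE·MSE′)·cos θ. The vectors below are hypothetical departures chosen only for illustration.

```python
import math


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def angle(a, b):
    """Angle theta between two departure vectors in R^T, in radians."""
    c = dot(a, b) / math.sqrt(dot(a, a) * dot(b, b))
    return math.acos(max(-1.0, min(1.0, c)))  # clamp guards against rounding just outside [-1, 1]


T = 3
z1 = [0.4, -0.4, 0.4]  # hypothetical departure vectors, T = 3 as in the figures
z2 = [0.1, 0.2, -0.3]
mse1, mse2 = dot(z1, z1) / T, dot(z2, z2) / T  # MSE = squared length / T
R12 = dot(z1, z2) / T                          # correspondence = scaled dot product
geometric = math.sqrt(mse1 * mse2) * math.cos(angle(z1, z2))
print(math.isclose(R12, geometric))  # True
```

Here θ exceeds 90°, so R12 is negative. Two vectors like these pull the average toward the origin, which is the mechanism behind Result 2.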

Figure 1 shows a typical case of Result 1 leading to \( \text{MSE}(\bar{Z}) > \text{MSE}(\vec{Z}^{(\min)}) \). The models all vary similarly about \( \vec{Y} \), as evidenced by their approximate collinearity, but \( \vec{Z}^{(\min)} \) performs much better than the others. The result of averaging is to “stretch” \( \bar{Z} \) away from \( \vec{Z}^{(\min)} \) in the general direction of the other models. Figure 2 illustrates Result 2, a case in which \( \text{MSE}(\vec{Z}^{(\min)}) \ge \text{MSE}(\bar{Z}) \). The role of the anti-correspondence requirement,

\[ R_{m,m^{\prime}} \le \text{MSE}(\vec{Z}^{(\min)}) \quad \text{for some } m \ne m^{\prime}, \tag{10} \]

is clear: \( \text{MSE}(\vec{Z}^{(\min)}) \ge \text{MSE}(\bar{Z}) \) because the two sets of vectors “pull” against each other to produce a shorter \( \bar{Z} \).

5 Summary and discussion

Alternative science-based computational models of a given environmental system always forecast system states that differ somewhat, and sometimes forecast states that differ considerably. Model averaging has been proposed as a means of dealing with differences among model forecasts, and it has been noted that in some cases the average of a collection of models produces “better” forecasts than any individual model in the collection (e.g., Boer 2004). We have compared models and their averages on the basis of the mean-square difference skill score, a standard assessment measure in weather forecasting, climate studies and groundwater hydrology. In addition to its ubiquity, no other measure of skill has the straightforward metric properties of mean square error, the normalized squared distance between observations and forecasts.

We investigated two collections of climate models to compare the skill of the average of a collection with that of the most skillful model (the “best”) in the collection. The best model in the first collection was so much more skillful than any other model that the average simply could not perform as well as the best. When the best model was removed from the first collection, the average performed better than any individual model in the reduced collection. In that case, none of the remaining climate models performed well enough to dominate the others. Furthermore, the models disagreed, giving an example of the general result (Result 2) that the average can be more skillful than the best model only if some models make markedly different forecasts. The example also illustrated the sensitivity of skill assessments to the collection of models, but we did not pursue that point further.

Our general results give a sufficient condition for the best model to be more skillful than the average (Result 1) and a necessary condition for the average to be more skillful than the best individual (Result 2). In general, (1) the average is less skillful than the best individual if the forecasts of individuals correspond closely to each other when compared to the skill of the best model, and (2) the average is more skillful than the best model only if the forecasts of some individuals do not correspond closely. These results depend only on simple geometric properties of the collection of models, and are independent of (i) how the models make their forecasts and (ii) the weighting scheme used to derive their average (except that the weights must be non-negative and sum to 1).

These results, and the example, suggest that a certain amount of caution should be applied when making strong claims that model averages are more skillful than individual models, at least if those claims are based on second-order performance measures like observed square-error skill. At the same time, the results should not be over-interpreted. In the first place, Result 2 does not indicate that collections of models should be assembled with the idea of maximizing the differences among individuals. The goal is to make skillful forecasts, not merely to have a collection of models whose average is more skillful on a set of observations than any individual model. Likewise, Result 1 does not imply that an average is never useful when models agree. A collection of good models (models based on reasonable physical assumptions and estimates of system parameters) can be expected to produce forecasts that correspond strongly with each other but differ in their details. An average might be useful in that setting even if it is not as skillful as the best model on a set of observations.

The discussion of the relative skill of science-based environmental models and their averages has so far taken place in the absence of statistical tests. The complex probability distributions of environmental forecasts in realistic settings are one reason for that. What is needed is a statistical test (or tests) to evaluate hypotheses about the means of forecasts made by complex physics models whose uncertain physical parameters are not Gaussian, or even necessarily unimodal (cf. Rubin 1995; Gomez-Hernandez and Wen 1998; Winter and Tartakovsky 2000, 2002; Christakos 2003; Guadagnini et al. 2003; Neuman and Wierenga 2003). In the absence of such tests, the results in this paper indicate that, in some cases, the apparent forecasting skill of model averages has a simple geometric explanation.

References

Beven K (2006) A manifesto for the equifinality thesis. J Hydrol 320:18–36

Boer GJ (2004) Long time-scale potential predictability in an ensemble of coupled climate models. Clim Dyn 23:29–44

Christakos G (2003) Another look at the conceptual fundamentals of porous media upscaling. Stoch Env Res Risk Assess 17(5):276–290

Epstein ES (1969) A scoring system for probability forecasts of ranked categories. J Appl Meteorol 8:985–987

Epstein ES, Murphy AH (1989) Skill scores and correlation coefficients in model verification. Mon Weather Rev 117:572–581

Giorgi F, Mearns LO (2002) Calculation of average, uncertainty range, and reliability of regional climate changes from AOGCM simulations via the “reliability ensemble averaging” (REA) method. J Clim 15:1141–1158

Gomez-Hernandez JJ, Wen X-H (1998) To be or not to be multi-Gaussian? A reflection on stochastic hydrogeology. Adv Water Resour 21(1):47–61

Guadagnini A, Guadagnini L, Tartakovsky DM, Winter CL (2003) Random domain decomposition for flow in heterogeneous stratified aquifer. Stoch Env Res Risk Assess 17(6):394–407

Jones PD, New M, Parker DE, Martin S, Rigor IG (1999) Surface air temperature and its variations over the last 150 years. Rev Geophys 37(2):173–199

Jun M, Knutti R, Nychka DW (2008) Spatial analysis to quantify numerical model bias and dependence: how many climate models are there? J Am Stat Assoc 103(483):934–947

Knutti R, Jun M, Nychka DW (2008) Local eigenvalue analysis of CMIP3 climate model errors. Tellus A 60(5):992–1000

Latif M, Collins M, Pohlmann H, Keenlyside N (2006) A review of predictability studies of Atlantic sector climate on decadal time scales. J Clim 19:5971–5987

Meehl GA, Stocker TF, Collins WD, Friedlingstein P, Gaye AT, Gregory JM, Kitoh A, Knutti R, Murphy JM, Noda A, Raper SCB, Watterson IG, Weaver AJ, Zhao ZC (2007) Global climate projections. In: Climate change 2007: the physical science basis. Contribution of working group I to the fourth assessment report of the intergovernmental panel on climate change. Cambridge University Press, Cambridge

Murphy AH (1971) A note on the ranked probability score. J Appl Meteorol 10:155–156

Murphy AH (1989) Skill scores based on the mean-square error and their relationships to the correlation coefficient. Mon Weather Rev 116:2417–2424

Murphy AH (1996) General decompositions of MSE based skill scores: measures of some basic aspects of forecast quality. Mon Weather Rev 124:2353–2369

Neuman SP (2003) Maximum likelihood Bayesian averaging of uncertain model predictions. Stoch Env Res Risk Assess 17(5):291–305

Neuman SP, Wierenga PJ (2003) A comprehensive strategy of hydrogeologic modeling and uncertainty analysis for nuclear facilities and sites (NUREG/CR-6805). Office of Nuclear Regulatory Research, U.S. Nuclear Regulatory Commission, Washington, DC, July

Palmer TN, Alessandri A, Andersen U, Cantelaube P, Davey M, Delecluse P, Deque M, Diez E, Doblas-Reyes FJ, Feddersen H, Graham R, Gualdi S, Gueremy J-F, Hagedorn R, Hoshen M, Keenlyside N, Latif M, Lazar A, Maisonnave E, Marletto V, Morse AP, Orfila B, Rogel P, Terres J-M, Thomson MC (2004) Development of a European multimodel ensemble system for seasonal-to-interannual prediction (DEMETER). Bull Am Meteorol Soc 85(6):853–872

Peirce CS (1884) The numerical measure of the success of predictions. Science 4:453–454

Poeter E, Anderson D (2005) Multimodel ranking and inference in ground water modeling. Ground Water 43(4):597–605

Rubin Y (1995) Flow and transport in bimodal heterogeneous formations. Water Resour Res 31(10):2461–2468

Swets JA (1973) The relative operating characteristic in psychology. Science 182:990–1000

Tebaldi C, Knutti R (2007) The use of the multi-model ensemble in probabilistic climate projections. Philos Trans R Soc A 365(1857):2053–2075

Tebaldi C, Smith RL, Nychka D, Mearns LO (2005) Quantifying uncertainty in projections of regional climate change: a Bayesian approach to the analysis of multimodel ensembles. J Clim 18(10):1524–1540

Thornes JE, Stephenson DB (2001) How to judge the quality and value of weather forecast products. Meteorol Appl 8:307–314

Wilks DS (2005) Statistical methods in the atmospheric sciences, 2nd edn. Academic Press, New York

Winter CL, Tartakovsky DM (2000) Mean flow in composite porous media. Geophys Res Lett 27(12):1759–1762

Winter CL, Tartakovsky DM (2002) Groundwater flow in heterogeneous composite aquifers. Water Resour Res 38(8):1148

Ye M, Neuman SP, Meyer PD (2004) Maximum likelihood Bayesian averaging of spatial variability models in unsaturated fractured tuff. Water Resour Res 40(5):W05113

Ye M, Neuman SP, Meyer PD, Pohlmann KF (2005) Sensitivity analysis and assessment of prior model probabilities in MLBMA with application to unsaturated fractured tuff. Water Resour Res 41(12):W12429

Ye M, Meyer PD, Neuman SP (2008) On model selection criteria in multimodel analysis. Water Resour Res 44(3):W03428


Cite this article

Winter, C.L., Nychka, D. Forecasting skill of model averages. Stoch Environ Res Risk Assess 24, 633–638 (2010). https://doi.org/10.1007/s00477-009-0350-y
