Abstract

In this paper we consider a regression model that allows for time series covariates as well as heteroscedasticity with a regression function that is modelled nonparametrically. We assume that the regression function changes at some unknown time \(\lfloor ns_0\rfloor \), \(s_0\in (0,1)\), and our aim is to estimate the (rescaled) change point \(s_0\). The considered estimator is based on a Kolmogorov-Smirnov functional of the marked empirical process of residuals. We show consistency of the estimator and prove a rate of convergence of \(O_P(n^{-1})\) which in this case is clearly optimal as there are only n points in the sequence. Additionally we investigate the case of lagged dependent covariates, that is, autoregression models with a change in the nonparametric (auto-) regression function and give a consistency result. The method of proof also allows for different kinds of functionals such that Cramér-von Mises type estimators can be considered similarly. The approach extends existing literature by allowing nonparametric models, time series data as well as heteroscedasticity. Finite sample simulations indicate the good performance of our estimator in regression as well as autoregression models and a real data example shows its applicability in practise.

Similar content being viewed by others

Avoid common mistakes on your manuscript.

1 Introduction

Change point analysis has gained attention for decades in mathematical statistics. There is a vast literature on testing for structural breaks when the possible timing of such a break, the change point, is unknown, see for instance Kirch and Kamgaing (2012) and reference mentioned therein. This paper, however, is concerned with the estimation of the change point when assuming its existence.

The most simple set of models can be described as follows

where \(s_0\in (0,1)\) is the (rescaled) change point, \(\mu _1\) and \(\mu _2\) the signal before and after the break, respectively, and \((\varepsilon _t)_t\) being stationary and centred errors. These models are often referred to as AMOC-models (at most one change). The problem naturally moved from the standard case with independent errors (see Ferger and Stute (1992) among others) to the time series context. Both Bai (1994) and Antoch et al. (1997) allow for linear processes and Hušková and Kirch (2008) more generally for dependent errors.

Additional information on the form of the signal can be expressed through a process of covariates \((X_t)_t\) resulting in linear regression models with a change in the regression parameter, such as

where \(\beta _1\) and \(\beta _2\) are the regression coefficients before and after the break, respectively. Bai (1997), Horváth et al. (1997) and Aue et al. (2012) among others consider the estimation of a change point in (multiple) linear regression models making use of least squares estimation. Considering \(X_t=Y_{t-1}\) in the linear regression model from above, one obtains autoregressive models with one change in the autoregressive parameter. The estimation of the parameters and the unknown change point in AR(1) models was for instance considered by Chong (2001), Pang et al. (2014) and Pang and Zhang (2015).

Our aim is to propose an estimator for the change point \(s_0\) in a nonparametric version of the regression model from above, namely

for some nonparametric regression functions \(m_{(1)}, m_{(2)}\) (before and after the break) and in addition also investigate the autoregressive case where \(X_t=Y_{t-1}\). While the investigation of points of discontinuity in (nonparametric) regression functions has been studied to some extend (see for instance Döring and Jensen (2015) for an overview), not that much research has been devoted to change point analysis in nonparametric models as the one above, where the change occurs in time. Delgado and Hidalgo (2000) propose estimators for the location and size of structural breaks in a nonparametric regression model imposing scalar breaks in time or values taken by some regressors, as in threshold models. Their rates of convergence and limiting distribution depends on a bandwidth, chosen for the kernel estimation. Chen et al. (2005) estimate the time of a scalar change in the conditional variance function in nonparametric heteroscedastic regression models using a hybrid procedure that combines the least squares and nonparametric methods.

The paper at hand extends existing literature, on the one hand by allowing for nonparametric heteroscedastic regression models with a general change in the unknown regression function where both errors and covariates are allowed to be time series, and on the other hand by investigating the autoregressive case. The achieved rate of convergence for the proposed estimator of \(O_P(n^{-1})\) is optimal as described in Hariz et al. (2007).

The remainder of the paper is organized as follows. The model and the considered estimator are introduced in Sect. 2. Section 3 contains the regularity assumptions as well as the asymptotic results for the proposed estimator. Section 4 is concerned with the special case of lagged dependent covariates, that is the autoregressive case. In Sect. 5 we describe a simulation study and discuss a real data example, whereas Sect. 6 concludes the paper. Proofs of the main results as well as auxiliary lemmata can be found in the Appendix.

2 The model and estimator

Let \(\{(Y_t,\varvec{X}_t):t\in \mathbb {N}\}\) be a weakly dependent stochastic process in \(\mathbb {R}\times \mathbb {R}^d\) following the regression model

The unobservable innovations are assumed to fulfill \(E[U_t|\mathcal {F}^t]=0\) almost surely for the sigma-field \(\mathcal {F}^t=\sigma (U_{j-1},{\varvec{X}}_j:j\le t)\). We assume there exists a change point in the regression function such that

where \(\lfloor ns_0\rfloor \) with \(s_0\in (0,1)\) is the unknown time the change occurs. Note that we keep above notations for simplicity reasons, however, the considered process is in fact a triangular array process \(\{(Y_{n,t},\varvec{X}_{n,t}):1\le t\le n,n\in \mathbb {N}\}\) and will be treated appropriately.

Assuming \((Y_1,{\varvec{X}}_1),\dots ,(Y_n,{\varvec{X}}_n)\) have been observed, the aim is to estimate \(s_0\). The idea is to base the estimator on the sequential marked empirical process of residuals, namely

for \(s\in [0,1]\) and \(\varvec{z}\in \mathbb {R}^d\), where \(\varvec{x}\le \varvec{y}\) is short for \(x_j\le y_j\) for all \(j=1,\dots , d\), \(\omega _n(\cdot )=I\{\cdot \in \varvec{J}_n\}\) being from assumption (V) below and \({\hat{m}}_n\) being the Nadaraya-Watson estimator, that is

with kernel function K and bandwidth \(h_n\) as considered in the assumptions below. Then we want to estimate \(s_0\) by

Note that \({{\hat{s}}}_n=\left\lfloor n {{\hat{s}}}_n\right\rfloor /n\).

Remark

The advantage of using marked residuals in comparison to using the classical CUSUM \({{\hat{T}}}_n(s,\varvec{\infty })\) to estimate the change point is that in the first case the estimator is consistent for all changes of the form (2.2) whereas there are several examples in which the use of \({{\hat{T}}}_n(s,\varvec{\infty })\) leads to a non-consistent estimator. To this end see the remark below the proof of Theorem 3.1 and compare to Mohr and Neumeyer (2019).

Remark

Mohr and Neumeyer (2019) constructed procedures based on functionals of \({\hat{T}}_n\), e.g. a Kolmogorov-Smirnov test statistic \(\sup _{s\in [0,1]}\sup _{\varvec{z}\in \mathbb {R}^d}|{\hat{T}}_n(s,\varvec{z})|\), to test the null hypothesis of no changes in the unknown regression function against change point alternatives as in (2.2). Given that such a test has rejected the null, the use of an M-estimator as in (2.3) seems natural. Furthermore, Cramér-von Mises type test statistics of the form \(\sup _{s\in [0,1]}\int _{\mathbb {R}^d}|{\hat{T}}_n(s,\varvec{z})|^2\nu (\varvec{z})d\varvec{z}\) for some integrable \(\nu :\mathbb {R}^d\rightarrow \mathbb {R}\) were also considered by Mohr and Neumeyer (2019). Assuming strict stationarity of the covariates and the existence of a density f such that \({\varvec{X}}_t\sim f\) for all t, as in (IX.1) below, the Cramér-von Mises approach from above with \(\nu \equiv f\) leads to an alternative estimator for \(s_0\), namely

However, to obtain a feasible estimator one needs to replace the integral \(\int _{\mathbb {R}^d}|{\hat{T}}_n(s,\varvec{z})|^2f (\varvec{z})d\varvec{z}\) by its empirical counterpart \(\frac{1}{n}\sum _{k=1}^{n}|{\hat{T}}_n(s,\varvec{X}_k)|^2\) in practise as f is not known.

3 Asymptotic results

In this section we will derive asymptotic properties for \({\hat{s}}_n\). To this end we introduce the following assumptions.

-

(I)

For all \(t\in \mathbb {Z}\) let \(E[U_t|\mathcal {F}^t]=0\) a.s. for \(\mathcal {F}^t=\sigma (U_{j-1},{\varvec{X}}_j:j\le t)\) and \(E[|U_{t}|^q]\le C_{U}\) for some \(C_U<\infty \) and \(q> 2\).

-

(II)

For all \(t\in \mathbb {Z}\) let \(E[|m_{(1)}(\varvec{X}_t)-m_{(2)}(\varvec{X}_t)|^r]\le C_{m}\) for some \(C_m<\infty \) and \(r> 2\).

-

(III)

Let \(\{(Y_t,{\varvec{X}}_t):1\le t\le n,n\in \mathbb {N}\}\) be strongly mixing with mixing coefficient \(\alpha (\cdot )\). For q, r from assumptions (I) and (II) and \(b:=\min (q,r)\) let \(\alpha (t)=O(t^{-{\bar{\alpha }}})\) with some \({\bar{\alpha }}>\max \big ((1+(b-1)(1+d))/(b-2), (b+2)/(b-2)\big )\).

-

(IV)

For b from assumption (III) let \(E[|Y_t|^b]<\infty \) and let \(\varvec{X}_t\) be absolutely continuous with density function \(f_t:\mathbb {R}^d\rightarrow \mathbb {R}\) that satisfies \(\sup _{\varvec{x}\in \mathbb {R}^d}E[|Y_t|^b|\varvec{X}_t=\varvec{x}]f_t(\varvec{x})<\infty \) and \(\sup _{\varvec{x}\in \mathbb {R}^d}f_t(\varvec{x})<\infty \) for all \(t\in \{1,\dots ,n\}\) and \(n\in \mathbb {N}\). Let there exist some \(L\ge 0\) such that \(\sup _{|i-j|\ge L}\sup _{\varvec{x}_i,\varvec{x}_j}E[|Y_iY_j||\varvec{X}_i=\varvec{x}_i,\varvec{X}_j=\varvec{x}_j]f_{ij}(\varvec{x}_i,\varvec{x}_j)<\infty \) for all \(n\in \mathbb {N}\), where \(f_{ij}\) is the density function of \((\varvec{X}_i,\varvec{X}_j)\).

-

(V)

Let \((c_n)_{n\in \mathbb {N}}\) be a positive sequence of real valued numbers satisfying \(c_n\rightarrow \infty \) and \(c_n=O((\log {n})^{1/d})\) and let \(\varvec{J}_n=[-c_n,c_n]^d\).

-

(VI)

For some \(C<\infty \) and \(c_n\) from assumption (V) let \(\varvec{I}_n=[-c_n-Ch_n,c_n+Ch_n]^d\) and let \(\delta _n^{-1}=\inf _{\varvec{x}\in \varvec{J}_n}\inf _{1\le t\le n}f_t(\varvec{x})>0\) for all \(n\in \mathbb {N}\). Further, let for all \(n\in \mathbb {N}\)

$$\begin{aligned} p_n= & {} \max \limits _{|\varvec{k}|=1}\sup \limits _{\varvec{x}\in \varvec{I}_n}\sup \limits _{1\le t\le n}|D^{\varvec{k}}f_t(\varvec{x})|<\infty \\ 0<q_n= & {} \max \limits _{0\le |\varvec{k}|\le 1}\sup \limits _{\varvec{x}\in \varvec{I}_n}\max _{j=1,2}|D^{\varvec{k}}m_{(j)}(\varvec{x})|<\infty , \end{aligned}$$where \(|\varvec{i}|=\sum _{j=1}^{d}i_j\) and \(D^{\varvec{i}}=\frac{\partial ^{|\varvec{i}|}}{\partial x_1^{i_1}\dots \partial x_d^{i_d}}\) for \(\varvec{i}=(i_1,\dots ,i_d)\in \mathbb {N}_0^d\).

-

(VII)

Let \(K:\mathbb {R}^d\rightarrow \mathbb {R}\) be symmetric in each component with \(\int _{\mathbb {R}^d}K(\varvec{z})d\varvec{z}=1\) and compact support \([-C,C]^d\). Additionally let \(|K(\varvec{u})|<\infty \) for all \(\varvec{u}\in \mathbb {R}^d\) and \(|K(\varvec{u})-K(\varvec{u'})|\le \Lambda \Vert \varvec{u}-\varvec{u'}\Vert \) for some \(\Lambda <\infty \) and for all \(\varvec{u},\varvec{u'}\in \mathbb {R}^d\), where \(\Vert \varvec{x}\Vert =\max _{i=1,\ldots ,d}|x_i|\).

-

(VIII)

With b and \({\bar{\alpha }}\) from assumption (III) let

$$\begin{aligned} \frac{\log {(n)}}{n^{\theta }h_n^d}=o(1) \text { for } \theta =\frac{{\bar{\alpha }}-1-d-\frac{1+{\bar{\alpha }}}{b-1}}{{\bar{\alpha }}+3-d-\frac{1+{\bar{\alpha }}}{b-1}}. \end{aligned}$$For \(\delta _n,p_n,q_n\) from assumption (VI) let

$$\begin{aligned} \left( \sqrt{\frac{\log (n)}{nh_n^{d}}}+h_np_n\right) p_nq_n\delta _n=o(n^{-\zeta }) \end{aligned}$$for some \(\zeta >0\).

-

(IX.1)

For all \(1\le t\le n, n\in \mathbb {N}\) let \(f_t(\cdot )= f(\cdot )\), for some density f.

-

(IX.2)

For all \(1\le t\le n, n\in \mathbb {N}\) let \(f_t(\cdot )= f_{(1)}(\cdot )\) for all \(t=1,\ldots ,\lfloor ns_0\rfloor \) and \(f_t(\cdot )= f_{(2)}(\cdot )\) for all \(t=\lfloor ns_0\rfloor +1,\ldots , n\), for some densities \(f_{(1)}\), \(f_{(2)}\).

Remark

The assumptions on the error terms and the mixing assumptions particularly allow for conditional heteroscedasticity. Assumptions (I), (II) and (III) are a trade off between the existence of moments and the rate of decay of the mixing coefficient. Assumptions (III), (IV), (VII) and the first part of (VIII) are reproduced from Kristensen (2012). Together with (V) and (VI), they are used to obtain uniform rates of convergence for \({\hat{m}}_n\) stated in Lemma A.1 in the Appendix. In (IX.1), we assume stationarity of the covariates for the whole observation period, while in the case of (IX.2) we assume stationarity before and right after the change occurs. Nevertheless both assumptions rule out general autoregressive effects such as \({\varvec{X}}_t=(Y_{t-1},\dots ,Y_{t-d})\). We will address this issue separately in Sect. 4.

Theorem 3.1

Assume (I), (II), (III), (IV), (V), (VI), (VII) and (VIII). Furthermore let either (IX.1) or (IX.2) hold. Then the change point estimator \({{\hat{s}}}_n\) is consistent, i. e.

Theorem 3.2

Under the assumptions of Theorem 3.1 for the change point estimator \({{\hat{s}}}_n\) it holds that

where \(r_n=n\).

The proofs of the theorems can be found in Appendix A.2. We state both theorems seperately since we need Theorem 3.1 to prove Theorem 3.2.

Remark

To obtain the rates of convergence we make use of the fact that \({\hat{s}}_n\) can be expressed using the sup norm on \( l^{\infty }(\mathbb {R}^d)\), i.e.

where \( l^{\infty }(\mathbb {R}^d)\) is the space of all uniformly bounded real valued functions on \(\mathbb {R}^d\). Note that similarly \({\tilde{s}}_n\) can be expressed using the \(L_2(P)\) norm, when \(({\varvec{X}}_t)_{t}\) is strictly stationary with marginal distribution P, namely

Using \({\tilde{N}}(g)\le N(g)\) for all \(g\in l^{\infty }(\mathbb {R}^d)\), corresponding results for \({\tilde{s}}_n\) as in Theorem 3.1 and Theorem 3.2 can be proven in a similar matter.

4 The autoregressive case

In this section we will consider the case where the exogenous variables include finitely many lagged values of the endogenous variable, we will refer to this model as the autoregressive case. We will focus on one dimensional covariates, however, the results do not depend on the dimension and can also be formulated for higher order autoregression models. Consider the nonparametric autoregression

with unobservable innovations \(U_t\) and one change in the regression function occurring at some unknown time \(\left\lfloor ns_0 \right\rfloor \) as in (2.2).

Furthermore assume the following.

-

(IX.3)

For all \(1\le t\le n, n\in \mathbb {N}\) let \(X_t:=Y_{t-1}\) be absolutely continuous with density \(f_{t}\). Let there exist densities \(f_{(1)}\) and \(f_{(2)}\) such that \(f_{t}(\cdot )= f_{(1)}(\cdot )\) for all \(t=1,\ldots ,\lfloor ns_0\rfloor \) and \(R_{n}(x):=\frac{1}{n}\sum _{j=\left\lfloor ns_0\right\rfloor +1}^{n}f_j(x)-\frac{n-\left\lfloor ns_0\right\rfloor }{n}f_{(2)}(x)\rightarrow 0\) for all \(x\in \mathbb {R}\) and \(n\rightarrow \infty \).

Remark

Note that (IX.3) requires on the one hand strict stationarity up to the time of change \(\left\lfloor ns_0\right\rfloor \). On the other hand the time series needs to reach its (new) stationary distribution fast enough after the change. This is a generalization of (IX.2) where we assumed stationarity both before and right after the change point, which can not be fulfilled in the model (4.1). A necessary condition then is that there exists a stationary solution of equation (4.1) under both \(m_{(1)}(\cdot )\) and \(m_{(2)}(\cdot )\) as regression functions.

Example

Consider the AR(1)-model

with standard normally distributed innovations \((\varepsilon _t)_t\) and \(a_t=a\in (-1,1)\) for \(t\le \lfloor ns_0\rfloor \), \(a_t=b\in (-1,1)\) for \(t>\lfloor ns_0\rfloor \), \(a\ne b\). Then assumption (IX.3) is fulfilled. Note to this end that \(X_t:=Y_{t-1}\sim \mathcal {N}(0,1/(1-a^2))\) for \(t\le \lfloor ns_0\rfloor \). The distribution after the change point is given by \(X_{\lfloor ns_0\rfloor +1+k}\sim \mathcal {N}\big (0,b^{2(k+1)}/(1-a^2)+\sum _{i=0}^kb^{2i}\big )\) for all \(k>0\). Thus with \(\sigma _j^2:=b^{2(j-\lfloor ns_0\rfloor )}/(1-a^2)+\sum _{i=0}^{j-\lfloor ns_0\rfloor -1}b^{2i}\) by the mean value theorem it holds for some \(\xi _j\) between \(\sigma _j^2\) and \((1-b^2)^{-1}\) that

for some constant \(C<\infty \) for all \(x\in \mathbb {R}\). Further we can conclude

and thus \(R_n(x)\xrightarrow [n\rightarrow \infty ]{}0\) for all \(x\in \mathbb {R}\).

In general verifying assumption (IX.3) for model (4.1) means to compare the distribution of a stochastic process that is not yet in balance with its stationary distribution. A well known technique to deal with this task is coupling, see e. g. Franke et al. (2002).

Under (IX.3) we get the following consistency result for our change point estimator in the autoregressive case.

Theorem 4.1

Assume model (4.1) under (I), (II), (III), (IV), (V), (VI), (VII), (VIII) and (IX.3). Then the change point estimator \({{\hat{s}}}_n\) is consistent, i. e.

The proof can be found in Appendix A.2.

Remark

Another possibility to handle the autoregressive case would be to model the change in a different way, namely

for two stationary processes \(\big (Y^{(1)}_t\big )_t\), \(\big (Y^{(2)}_t\big )_t\), see e. g. Kirch et al. (2015). In this case assumption (IX.2) is fulfilled and thus Theorems 3.1 and 3.2 apply.

5 Finite sample properties

5.1 Simulations

To investigate the finite sample performance of our estimator, we generate data from two different basic models, namely

-

(IID)

\(Y_t=m_t(X_t)+\sigma (X_t)\varepsilon _t\), where the observations \((X_t)_t\) are i.i.d., univariate and standard normally distributed, just as the errors \((\varepsilon _t)_t\).

-

(TS)

\(Y_t=m_t(X_t)+\sigma (X_t)\varepsilon _t\), where \((\varepsilon _t)_t\) i.i.d. \(\sim N(0,1)\) and the univariate observations \((X_t)_t\) stem from a time series \(X_t=0.4X_{t-1}+\eta _t\) with standard normal innovations \((\eta _t)_t\).

For both models we generate data both for the homoscedastic case \(\sigma \equiv 1\) as well as for the heteroscedastic case \(\sigma (x)=\sqrt{1+0.5x^2}\). The results for both are very similar in all situations, thus we only present the results for the heteroscedastic case. To model the change in the regression function we use three different scenarios

-

(C1)

\(m_t={\left\{ \begin{array}{ll} -0.5x,\quad &{} t=1,\ldots ,\lfloor ns_0\rfloor \\ 0.5 x\quad &{} t=\lfloor ns_0\rfloor +1,\ldots ,n,\end{array}\right. }\)

-

(C2)

\(m_t={\left\{ \begin{array}{ll} 0.1x,\quad &{} t=1,\ldots ,\lfloor ns_0\rfloor \\ 0.9 x\quad &{} t=\lfloor ns_0\rfloor +1,\ldots ,n,\end{array}\right. }\)

-

(C3)

\(m_t={\left\{ \begin{array}{ll} 0.5x,\quad &{} t=1,\ldots ,\lfloor ns_0\rfloor \\ (0.5+3\exp (-0.8x^2)) x\quad &{} t=\lfloor ns_0\rfloor +1,\ldots ,n,\end{array}\right. }\)

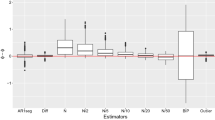

where we let \(s_0\) range from 0.1 to 0.9. In Fig. 1 the results for 1000 replications and sample sizes \(n=100,500,1000\) are shown, where we plot \(s_0\) against the estimated mean squared error of our estimator \({{\hat{s}}}_n\). The kernel for \({{\hat{m}}}_n\) is chosen as the Epanechnikov kernel of order four and the bandwidth is determined by a cross-validation method. It can be seen that our estimator performs quite well even for the smallest sample size \(n=100\) when \(s_0\) is 0.5 or close to it whereas for a change point that lies closer to the boundaries of the observation interval a larger sample size is needed to get satisfying results. This is due to the fact that if \(s_0=0.1\) or \(s_0=0.9\) there are only 10 observations before and after the change point respectively for \(n=100\) and thus the estimation of \(m_{(1)}\) and \(m_{(2)}\) respectively are poor. Moreover an asymmetry in the results is striking. This stems from the CUSUM type statistic that our estimator is based on. For \(s_0=0.1\) e. g. the sum consists of only 0.1n summands and thus the estimation of e. g. \(E[U_t]\) is worse than if \(s_0=0.9\) and the estimation is based on 0.9n summands. The effect of a decreasing performance of the estimators the closer \(s_0\) gets to the boundaries is typical for change point estimators based on CUSUM statistics and can be antagonized by the use of appropriate weights, see e. g. Ferger (2005).

To stress our estimator a little further we simulate the scenario that there is also a change in the variance function \(\sigma \) at a different time point than the change in the regression function m. In this situation the estimator should still be able to detect \(s_0\), the change point in the regression function. The results are shown in Fig. 2 for model (IID) and model (TS) with change point scenario (C1) where \(\sigma _t(x)=\sqrt{1+0.1x^2}\) for \(t\le 0.4n\) and \(\sigma _t(x)=\sqrt{1+0.8x^2}\) for \(t>0.4n\). They confirm the good performance of our estimator even in this more difficult situation.

As discussed in Sect. 4 our estimator can also be applied to the autoregressive case. To investigate the finite sample performance in this situation we generate data according to the model

-

(AR)

\(Y_t=m_t(Y_{t-1})+\sigma (Y_{t-1})\varepsilon _t\), where \((\varepsilon _t)_t\) i.i.d. \(\sim N(0,1)\).

For \(\sigma \equiv 1\) and change point scenario (C1) as well as (C2) assumption (IX.3) is fulfilled, see the example in Sect. 4. Simulation results for these cases are shown in Fig. 3 where the setting is the same as described above. They look very similar to the results of model (IID) and (TS) and thus confirm the theoretical result of Theorem 4.1. Even for examples where assumption (IX.3) can not be verified easily the performance of our estimator is satisfying, see Fig. 4 for model (AR) with \(\sigma \equiv 1\) and change point scenario (C3) as well as the heteroscedastic model (AR) with \(\sigma =\sqrt{1+0.5x^2}\) and change point scenario (C1).

As stated in the remark in Sect. 2 it is also possible to base the estimator on a Cramér-von Mises type functional of the marked empirical process of residuals. The simulation results for this type of estimator are very similar to those presented here for the Kolmogorov-Smirnov type estimator \({{\hat{s}}}_n\) and are omitted for the sake of brevity.

5.2 Data example

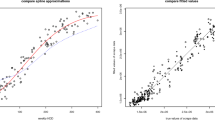

Finally, we will consider a real data example. The data at hand contains 36 measurements of the annual flow volume of the small Czech river, Ráztoka, recorded between 1954 and 1989 as well as the annual rainfall during that time. It was considered by Hušková and Antoch (2003) to investigate the effect of controlled deforestation on the capability for water retention of the soil. To this end it is of interest if and when the relationship between rainfall and flow volume changes. We set \(X_t\) as the annual rainfall and \(Y_t\) as the annual flow volume. Mohr and Neumeyer (2019) applied their Kolmogorov-Smirnov test to this data set, which clearly rejects the null of no change in the conditional mean function, indicating the existence of a change in the relationship between rainfall and flow volume. Using \({\hat{s}}_n\) to estimate the unknown time of change suggests a change in 1979. Note that this is consistent with the literature. As was pointed out by Hušková and Antoch (2003) large scale deforestation had started around that time. Figure 5 shows on the left-hand side the scatterplot \(X_t\) against \(Y_t\) using dots for the observations after the estimated change and crosses for the observations before the estimated change. On the right-hand side the figure shows the cumulative sum, \({n}^{1/2}\sup _{z\in \mathbb {R}}|{\hat{T}}_n(\cdot ,z)|\), as well as the critical value of the test used in Mohr and Neumeyer (2019) (red horizontal line) and the estimated change (green vertical line). Note that \({\tilde{s}}_n\) leads to the same result.

6 Concluding remarks

In this paper we consider nonparametric regression models with a change in the unknown regression function that allows for time series data as well as conditional heteroscedasticity. We propose an estimator for the rescaled change point that is based on the sequential marked empirical process of residuals and show consistency as well as a rate of convergence of \(O_P(n^{-1})\). In an autoregressive setting we additionally give a consistency result for the proposed estimator.

If more than one change occurs, the proposed estimator is not consistent for one of the changes in some situations. For detecting multiple changes we refer the reader to alternative procedures such as the MOSUM procedure proposed by Eichinger and Kirch (2018) or the wild binary segmentation procedure by Fryzlewicz (2014) (see also Fryzlewicz (2019)).

Investigating the asymptotic distribution of the proposed estimator is a subsequent issue. Certainly, it is of great interest as it can be used to obtain confidence intervals. However, this subject goes beyond the scope of the paper at hand and is postponed to future research.

References

Antoch J, Hušková M, Prášková Z (1997) Effect of dependence on statistics for determination of change. J Stat Plan Inference 60:291–310

Aue A, Horváth L, Hušková M (2012) Segmenting mean-nonstationary time series via trending regressions. J Econ 168:367–381

Bai J (1994) Least squares estimation of a shift in linear processes. J Time Ser Anal 15:435–472

Bai J (1997) Estimation of a change point in multiple regression models. Rev Econ Stat 79:551–563

Chen G, Choi YK, Zhou Y (2005) Nonparametric estimation of structural change points in volatility models for time series. J Econ 126:79–114

Chong TT-L (2001) Structural change in AR(1) models. Econ Theory 17:87–155

Delgado MA, Hidalgo J (2000) Nonparametric inference on structural breaks. J Econ 96:113–144

Döring M, Jensen U (2015) Smooth change point estimation in regression models with random design. Ann Inst Stat Math 67:595–619

Eichinger B, Kirch C (2018) A MOSUM procedure for the estimation of multiple random change points. Bernoulli 24:526–564

Ferger D (2005) Weighted least squares estimators for a change-point. Econ Qual Control 20:255–270

Ferger D, Stute W (1992) Convergence of changepoint estimators. Stoch Process Appl 42:345–351

Franke J, Kreiss J-P, Mammen E, Neumann M (2002) Properties of the nonparametric autoregressive bootstrap. J Time Ser Anal 23:555–585

Fryzlewicz P (2014) Wild binary segmentation for multiple change-point detection. Ann Stat 42:2243–2281

Fryzlewicz P (2019) Detecting possibly frequent change-points: wild binary segmentation 2 and steepest-drop model selection. Preprint on arXiv:1812.06880

Hariz SB, Wylie JJ, Zhang Q (2007) Optimal rate of convergence for nonparametric change-point estimators for nonstationary sequences. Ann Stat 35:1802–1826

Horváth L, Hušková M, Serbinowska M (1997) Estimators for the time of change in linear models. Statistics 29:109–130

Hušková M, Antoch J (2003) Detection of structural changes in regression. Tatra Mt Math Publ 26:201–215

Hušková M, Kirch C (2008) Bootstrapping confidence intervals for the change? Point of time series. J Time Ser Anal 29:947–972

Kirch C, Kamgaing JT (2012) Testing for parameter stability in nonlinear autoregressive models. J Time Ser Anal 33:365–385

Kirch C, Muhsal B, Ombao H (2015) Detection of changes in multivariate time series with application to EEG data. J Am Stat Assoc 110:1197–1216

Kosorok MR (2008) Introduction to empirical processes and semiparametric inference. Springer, New York

Kristensen D (2009) Uniform convergence rates of kernel estimators with heterogeneous dependent data. Econ Theory 25:1433–1445

Kristensen D (2012) Non-parametric detection and estimation of structural change. Econ J 15:420–461

Liebscher E (1996) Strong convergence of sums of \(\alpha \)-mixing random variables with applications to density estimation. Stoch Process. Appl. 65:69–80

Mohr M (2018) Changepoint detection in a nonparametric time series regression model. PhD thesis, University of Hamburg. http://ediss.sub.uni-hamburg.de/volltexte/2018/9416/

Mohr M, Neumeyer N (2019) Consistent nonparametric change point detection combining CUSUM and marked empirical processes. Preprint on arXiv:1901.08491

Pang T, Zhang D (2015) Asymptotic inferences for an AR(1) model with a change point and possibly infinite variance. Commun Stat Theory Methods 44:4848–4865

Pang T, Zhang D, Chong TT-L (2014) Asymptotic inferences for an AR(1) model with a change point: stationary and nearly non-stationary cases. J Time Ser Anal 35:133–150

Acknowledgements

Open Access funding provided by Projekt DEAL. Financial support by the DFG (Research Unit FOR 1735 Structural Inference in Statistics: Adaptation and Efficiency) is gratefully acknowledged.

Author information

Authors and Affiliations

Corresponding author

Additional information

Publisher's Note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Appendix: Proofs

Appendix: Proofs

1.1 Auxiliary results

Lemma A.1

Under the assumptions (III), (IV), (V), (VI), (VII) and (VIII), it holds that

where

The proof is similar to the proof of Lemma 2.2 in Mohr (2018). The key tool is an application of Theorem 1 in Kristensen (2009). Details are omitted for the sake of brevity.

Remark

Under (IX.1) we have

under (IX.2) and (IX.3) we have

where

with \(R_{n}(\cdot )\) from assumption (IX.3).

Lemma A.2

Under the assumptions of Theorem 3.1 as well as under those of Theorem 4.1 there exists a constant \({{\bar{C}}}={{\bar{C}}}(C)<\infty \) such that

for all \(L=0,1,\ldots ,n-\kappa _n\), \(1\le \kappa _n\le n\), \(n\in \mathbb {N}\) and all \(C>0\) with q from assumption (I).

Proof of Lemma A.2

The proof follows along similar lines as the proof of Lemma A.3 in Mohr (2018). Throughout the proof the values of C and \({{\bar{C}}}\) may vary from line to line but they are always positive, finite and independent of n. Further note that deterministic terms that are of order \(O(\kappa _n)\) can be omitted as we can choose constants appropriately. It holds that

where (A.1) is of the desired rate in probability since

by Markov’s inequality with

Considering the term (A.2) we define the function class

to rewrite the assertion as

Now we will cover [0, 1] by finitely many intervals and \(\mathcal {F}_n\) by finitely many brackets to replace the supremum by a maximum. Let therefore

part the interval [0, 1] in \(K_n\) subintervals of length \({{\bar{\epsilon }}}_n\) with \({{\bar{\epsilon }}}_n=\kappa _n^{- \frac{1}{q}}\). Then

and \(2\kappa _n^{\frac{1}{q}}\left( \kappa _n{\bar{\epsilon }}_n+1\right) =2(\kappa _n+\kappa _n^{\frac{1}{q}})=O(\kappa _n)\). Further let

and

form the brackets \([\varphi _{{\varvec{j}}}^l,\varphi _{\varvec{j}}^u]_{{\varvec{j}}\in \times _{i=1}^d\{1,\ldots ,N_i\}}\) of \(\mathcal {F}_n\), where \({\varvec{z}}_{\varvec{j}}:=(z_{j_1,1},\ldots ,z_{j_d,d})\) and

gives a partition of \(\mathbb {R}\) for all \(i=1,\ldots ,d\). Then for all \({\varvec{z}}\in \mathbb {R}^d\) there exists a \(\varvec{j}\in \times _{i=1}^d\{1,\ldots ,N_i\}\) such that \({\varvec{z}}_{\varvec{j-1}}<{\varvec{z}}\le {\varvec{z}}_{\varvec{j}}\) where \(\varvec{j-1}:= (j_1-1,\ldots ,j_d-1)\). Thus every element \(\varphi \) of \(\mathcal {F}_n\) lies in one of the brackets \([\varphi _{{\varvec{j}}}^l,\varphi _{\varvec{j}}^u]\), i. e. \(\varphi _{\varvec{j}}^l(u,{\varvec{x}})\le \varphi (u,{\varvec{x}})\le \varphi _{\varvec{j}}^u(u,{\varvec{x}})\) for all \((u,{\varvec{x}})\). We say a bracket \([\varphi _{{\varvec{j}}}^l,\varphi _{\varvec{j}}^u]\) is of size \(\epsilon _n\) if \(\int (\varphi _{\varvec{j}}^u-\varphi _{\varvec{j}}^l)dP\le \epsilon _n\). The total number of brackets of size \(\epsilon _n\) needed to cover \(\mathcal {F}_n\) is denoted by \(J_n:=N_{[\ ]}(\epsilon _n,\mathcal {F}_n,\Vert \cdot \Vert _{L_1(P)})\) and is of order \(J_n=O(\epsilon _n^{-d})\), which follows analogously to but easier than the proof of Lemma A.7 in Mohr (2018).

For all \(\varphi \in \mathcal {F}_n\) there exists a \({\varvec{j}}\) with \(\varphi _{\varvec{j}}^l\le \varphi \le \varphi _{\varvec{j}}^u\) and thus

and

Therefore for all \(s\in [0,1]\)

and \(\kappa _n\epsilon _{n}=O(\kappa _n)\) if we choose \(\epsilon _n\) constant. Thus it remains to show that

and the same with \(\varphi _{\varvec{j}}^u\) replaced by \(\varphi _{\varvec{j}}^l\). Recall that

We will only consider the first summand in more detail since the rest works analogously. To prove that (A.3) is stochastically of the desired rate we apply a Bernstein type inequality for \(\alpha \)-mixing processes, see Liebscher (1996) Therorem 2.1. Following his notation we define

for fixed \({\varvec{z}}\in \mathbb {R}^d\) and \(s\in [0,1]\). Note that \(|Z_i|\le 2\kappa _n^{\frac{1}{q}}=:S(\kappa _n)\), \(Z_i\) is centered and

by assumption (I). Thus Liebscher’s Theorem can be applied with \(N=\lfloor \kappa _n^{1-\frac{2}{q}}\rfloor \). This means that

for some constants \(C_1,C_2,{{\bar{C}}}\) where the second to last inequality follows from the fact that \(\exp (-x)<x^{-k}k!\) for all \(k\in \mathbb {N}\) and \(x\in \mathbb {R}_{+}\) and the last inequality is true by assumption (III) which implies \({\bar{\alpha }}>(q+2)/(q-2)\). This completes the proof. \(\square \)

Lemma A.3

Under the assumptions of Theorem 3.1 as well as under those of Theorem 4.1 there exists a constant \({{\bar{C}}}={{\bar{C}}}(C)<\infty \) such that

and

for all \(L=0,1,\ldots ,n-\kappa _n\), \(1\le \kappa _n\le n\), \(n\in \mathbb {N}\) and all \(C>0\) with r from assumption (II).

Proof of Lemma A.3

First we will distinguish between the cases \(L+\lfloor \kappa _n s\rfloor \le \lfloor ns_0\rfloor \) and \(L+\lfloor \kappa _n s\rfloor > \lfloor ns_0\rfloor \). In the first case we can write

and analogously for the second case

We will only examine the case \(L+\lfloor \kappa _n s\rfloor \le \lfloor ns_0\rfloor \) in detail since the other case works analogously.

The remainder of the proof is similar to the proof of Lemma A.2. With \(g({\varvec{X}}_{i}):=(m_{(1)}({\varvec{X}}_{i})-m_{(2)}({\varvec{X}}_{i}))\) and \({{\bar{f}}}_n^{(s_0)}({\varvec{X}}_i)=\frac{\sum _{j=\lfloor ns_0\rfloor +1}^n f_{j}({\varvec{X}}_{i})}{\sum _{j=1}^nf_{j}({\varvec{X}}_{i})}\) it holds

and further

by the Markov inequality with

for all i and for some \(C_m<\infty \) by assumption (II). Thus we can rewrite our assertion as

with the function class

To replace the supremum over \(\varphi \) by a maximum we cover \(\mathcal {F}_n\) by finitely many brackets \([\varphi _{\varvec{j}}^l,\varphi _{\varvec{j}}^u]_{{\varvec{j}}\in \times _{i=1}^d\{1,\ldots ,N_i\}}\) where

and

and \({\varvec{j}}\), \({\varvec{z}}_{\varvec{j}}\) are defined as in the proof of Lemma A.2. The total number of brackets \(J_n:=N_{[\ ]}(\epsilon _n,\mathcal {F}_n,\Vert \cdot \Vert _{L_1(P)})\) needed to cover \(\mathcal {F}_n\) is again of order \(J_n=O(\epsilon _n^{-d})\), which follows analogously to but easier than the proof of Lemma A.7 in Mohr (2018). Now we proceed completely analogously to the proof of Lemma A.2 by replacing the supremum over s by a maximum as well and applying Liebscher’s Theorem. Since the arguments are the same as in the aforementioned proof we omit this part for the sake of brevity. \(\square \)

Lemma A.4

Under the assumptions of Theorem 3.1 as well as under those of Theorem 4.1 it holds

for all \(L=0,1,\ldots ,n-\kappa _n\), \(1\le \kappa _n\le n\), \(n\in \mathbb {N}\) and all \(C>0\) with \(\zeta >0\) from assumption (VIII).

Proof of Lemma A.4

It holds

by the Markov inequality. Further by Lemma A.1 with assumption (VIII) it holds that

which implies

and thus for sufficiently large n

for \(\kappa _n\le n\). This completes the proof. \(\square \)

1.2 Proof of main results

We will proof Theorem 3.1 under the assumption (IX.1) and simply make a note on the parts that change under (IX.2).

Proof of Theorem 3.1

First note that for all \(s\in [0,1]\) and \(\varvec{z}\in \mathbb {R}^d\)

where \(A_{n}(s,\varvec{z})=A_{n,1}(s,\varvec{z})+A_{n,2}(s,\varvec{z})+A_{n,3}(s,\varvec{z})+A_{n,4}(s,\varvec{z})\) with

and

since by inserting the definition of \({\bar{m}}_n\) we obtain for \(s\le s_0\)

and for \(s>s_0\)

Note that we use the notation \(\int _{(\varvec{\infty },\varvec{z}]}g(\varvec{x})d\varvec{x}=\int _{-\infty }^{z_d}\dots \int _{-\infty }^{z_1}g(x_1,\dots ,x_d)dx_1\dots dx_d\) here. Due to the dominated convergence theorem and assumption (II), it holds that

uniformly in \(s\in [0,1]\) and \(\varvec{z}\in \mathbb {R}^d\), where

Note that under (IX.2) the same assertion holds with

and

By Lemmata A.2, A.3 and A.4 with \(\kappa _n=n\), it holds that \(A_n(s,\varvec{z})=o_P(1)\) uniformly in \(s\in [0,1]\) and \(\varvec{z}\in \mathbb {R}^d\). Hence, we have shown that

uniformly in \(s\in [0,1]\) under both cases (IX.1) and (IX.2). The assertion then follows by Theorem 2.12 in Kosorok (2008) as \(s_0\) is well-separated maximum of \([0,1]\rightarrow \mathbb {R}, \ s\mapsto \Delta _{1}(s)\). \(\square \)

Remark

Note that there are examples of \(m_{(1)}\), \(m_{(2)}\) and f resp. \(f_{(1)}\), \(f_{(2)}\) that lead to \(\Delta _{2}(\varvec{\infty })=0\). In those cases a change point estimator based on the classical CUSUM \({{\hat{T}}}_n(s,\varvec{\infty })\) is not consistent.

Proof of Theorem 3.2

First note that \(s_0=\frac{\left\lfloor ns_0\right\rfloor }{n}+O(n^{-1})\) and \({{\hat{s}}}_n=\frac{\left\lfloor n{{\hat{s}}}_n\right\rfloor }{n}\). Thus we can consider \(\left| \frac{\left\lfloor n{\hat{s}}_n\right\rfloor }{n}-\frac{\left\lfloor ns_0\right\rfloor }{n}\right| \) instead of \(|{{\hat{s}}}_n-s_0|\). The proof follows mainly along the same lines as the proof of Theorem 1 in Hariz et al. (2007). Consider the norm \(N:l^{\infty }(\mathbb {R}^d)\rightarrow \mathbb {R}, \ g\mapsto \sup _{{\varvec{z}}\in \mathbb {R}^d}|g({\varvec{z}})|\) and let \(M>0\). We will show below that for all \(\eta >0\) and \(b,c>0\) it holds

where

with \(C:=b-2c\). Now it holds that \(E_{n,4}\rightarrow 0\) for all \(\eta >0\), due to Theorem 3.1. Further, \(E_{n,2}\rightarrow 0\) for all \(c>0\) as \(A_n(s_0,\varvec{z})=o_P(1)\) holds uniformly in \(\varvec{z}\in \mathbb {R}^d\). Finally choose \(b>0\) and \(n'=n'(b)\in \mathbb {N}\) such that \(E_{n,3}= 0\) for all \(n\ge n'\), which exists as \(\Delta _{1}(s_0)N(\Delta _2(\cdot ))>0\) and \(\Delta _{n,1}(s_0)N(\Delta _{n,2}(\cdot ))=\Delta _{1}(s_0)N(\Delta _2(\cdot ))+o(1)\). We then choose \(c>0\) such that \(b-2c>0\). To see the validity of (A.9) first note that for all \(s\in [0,1]\)

Applying the norm and triangular inequality we obtain for all \(s\in [0,1]\)

which is equivalent to

Due to the definition of \({\hat{s}}_n\) it holds that \(N({\hat{T}}_n({\hat{s}}_n,\cdot ))-N({\hat{T}}_n(s_0,\cdot ))\ge 0\). Additionally using the specific definition of \(\Delta _{n,1}\) we obtain

where we again make use of the triangular inequality in the last step. Putting the results together we obtain

Finally we will investigate \(E_{n,1}\). To do this we define shells

and choose \(L_n=L_n(\eta )\) such that \(2^{L_n}<r_n\eta \le 2^{L_n+1}\) for some \(\eta \le \frac{1}{2}\). Then

for some constant \({{\tilde{C}}}<\infty \) by Lemmata A.2, A.3 and A.4 with \(\kappa _n=\left\lfloor 2^{l+1}\frac{n}{r_n}\right\rfloor \) with q from assumption (I), r from assumption (II) and \(\zeta >0\) from assumption (VIII). Now choosing \(r_n=n\) and letting n and thus \(L_n\) tend to infinity and then M to infinity, the assertion of Theorem 3.2 follows. \(\square \)

Proof of Theorem 4.1

Under (IX.3) we have for all \(s\in [0,1]\) and \(z\in \mathbb {R}\)

with \(A_n(s,z)\) and \(\Delta _{n,1}(s)\) from the proof of Theorem 3.1, and with

and

Now it holds that

and \({\tilde{\Delta }}_n(s,z)\rightarrow 0\) uniformly in \(s\in [0,1]\) and \(z\in \mathbb {R}\), due to dominated convergence and assumption (II). Hence we have uniformly in s and z

with \(\Delta _{1}(s)\) as in the proof of Theorem 3.1. The rest goes analogously to the proof of Theorem 3.1. \(\square \)

Remark

Note that for finite \(n\in \mathbb {N}\) we do not get the decomposition of \({\hat{T}}_n\) as in (A.4) in the proof of Theorem 3.1. We only obtain this kind of decomposition when letting n tend to infinity. The decomposition for finite n, however, is essential for the proof of the rates of convergence in Theorem 3.2.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution 4.0 International License, which permits use, sharing, adaptation, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if changes were made. The images or other third party material in this article are included in the article’s Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by/4.0/.

About this article

Cite this article

Mohr, M., Selk, L. Estimating change points in nonparametric time series regression models. Stat Papers 61, 1437–1463 (2020). https://doi.org/10.1007/s00362-020-01162-8

Received:

Revised:

Published:

Issue Date:

DOI: https://doi.org/10.1007/s00362-020-01162-8

Keywords

- Change point estimation

- Time series

- Nonparametric regression

- Autoregression

- Conditional heteroscedasticity

- Consistency

- Rates of convergence