Abstract

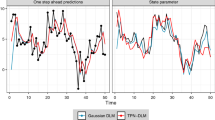

This article presents a method for training Dynamic Factor Graphs (DFGs) with continuous latent state variables. A DFG includes factors modeling joint probabilities between hidden and observed variables, and factors modeling dynamical constraints on hidden variables. The DFG assigns a scalar energy to each configuration of hidden and observed variables. A gradient-based inference procedure finds the minimum-energy state sequence for a given observation sequence. Because the factors are designed to ensure a constant partition function, they can be trained by minimizing the expected energy over training sequences with respect to the factors’ parameters. Alternating these inference and parameter updates can be seen as a deterministic EM-like procedure. Using smoothing regularizers, DFGs are shown to reconstruct chaotic attractors and to separate a mixture of independent oscillatory sources perfectly. DFGs outperform the best known algorithm on the CATS competition benchmark for time series prediction. DFGs also successfully reconstruct missing motion capture data.
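
The alternation between state inference and parameter updates can be illustrated with a minimal NumPy sketch. It is not the authors' implementation: the linear observation and dynamics factors, the squared-error energies, the step sizes, and all names (`energy`, `infer_states`, `update_params`, `fit`) are assumptions made for illustration, and the smoothing regularizers mentioned above are omitted. Squared-error energies are used because they correspond to Gaussian factors with fixed variance, whose normalization does not depend on the parameters.

```python
import numpy as np

def residuals(Y, Z, W_obs, W_dyn):
    """Errors of the (assumed linear) observation and dynamics factors."""
    obs_err = Y - Z @ W_obs.T             # shape (T, n_obs)
    dyn_err = Z[1:] - Z[:-1] @ W_dyn.T    # shape (T-1, n_lat)
    return obs_err, dyn_err

def energy(Y, Z, W_obs, W_dyn):
    """Scalar energy of one configuration: sum of squared factor errors."""
    obs_err, dyn_err = residuals(Y, Z, W_obs, W_dyn)
    return 0.5 * np.sum(obs_err ** 2) + 0.5 * np.sum(dyn_err ** 2)

def infer_states(Y, Z, W_obs, W_dyn, lr=0.05, steps=50):
    """Inference step: gradient descent on the latent states, parameters fixed."""
    Z = Z.copy()
    for _ in range(steps):
        obs_err, dyn_err = residuals(Y, Z, W_obs, W_dyn)
        grad = -obs_err @ W_obs           # from the observation factors
        grad[1:] += dyn_err               # state z(t+1) as dynamics target
        grad[:-1] -= dyn_err @ W_dyn      # state z(t) as dynamics input
        Z -= lr * grad
    return Z

def update_params(Y, Z, W_obs, W_dyn, lr=0.01):
    """Learning step: one gradient step on the parameters, states fixed."""
    obs_err, dyn_err = residuals(Y, Z, W_obs, W_dyn)
    W_obs = W_obs + lr * obs_err.T @ Z / len(Y)
    W_dyn = W_dyn + lr * dyn_err.T @ Z[:-1] / len(Y)
    return W_obs, W_dyn

def fit(Y, n_lat=2, n_iters=20):
    """Alternate inference and learning; return states, parameters, energies."""
    T, n_obs = Y.shape
    Z = np.zeros((T, n_lat))              # initial latent states (assumption)
    W_obs = np.eye(n_obs, n_lat)          # initial observation weights (assumption)
    W_dyn = 0.9 * np.eye(n_lat)           # initial dynamics weights (assumption)
    energies = [energy(Y, Z, W_obs, W_dyn)]
    for _ in range(n_iters):
        Z = infer_states(Y, Z, W_obs, W_dyn)
        W_obs, W_dyn = update_params(Y, Z, W_obs, W_dyn)
        energies.append(energy(Y, Z, W_obs, W_dyn))
    return Z, W_obs, W_dyn, energies
```

Calling `fit` on a short sequence, for example `Y = np.sin(0.2 * np.arange(100))[:, None]`, returns the inferred state sequence together with the energy recorded before training and after each iteration, which gives a simple way to check that the alternation is reducing the energy.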

© 2009 Springer-Verlag Berlin Heidelberg

About this paper

Cite this paper

Mirowski, P., LeCun, Y. (2009). Dynamic Factor Graphs for Time Series Modeling. In: Buntine, W., Grobelnik, M., Mladenić, D., Shawe-Taylor, J. (eds) Machine Learning and Knowledge Discovery in Databases. ECML PKDD 2009. Lecture Notes in Computer Science, vol 5782. Springer, Berlin, Heidelberg. https://doi.org/10.1007/978-3-642-04174-7_9

DOI: https://doi.org/10.1007/978-3-642-04174-7_9

Publisher Name: Springer, Berlin, Heidelberg

Print ISBN: 978-3-642-04173-0

Online ISBN: 978-3-642-04174-7

eBook Packages: Computer Science (R0)