Abstract

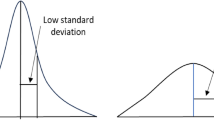

Information-theoretic measures are well suited to characterizing datasets with discrete attributes (or continuous attributes that have been discretized). They can reveal information that may be decisive when analyzing how different learning algorithms behave on specific datasets. In previous research comparing the breast-cancer-wisconsin dataset with 20 other datasets, Stacking by Meta-Decision Trees (MDT) was significantly better on breast-cancer-wisconsin than all other multiclassifier models, including Stacking by Multi-Response Linear Regression (MLR). The objective of this paper is to study the possible reasons for this result by means of three similar datasets from the UCI Machine Learning Repository. In our experiments, the proportion of missing values, together with other significant changes in the measure values, provided evidence about the possible origin of the different behaviors these multiclassifier schemes show depending on data characteristics.
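As an illustration of what such measures look like in practice, the sketch below computes a few information-theoretic meta-features that are common in the meta-learning literature: class entropy H(C), mean attribute entropy, mean mutual information between each attribute and the class, the equivalent number of attributes ENA = H(C) / mean MI, and the noise-signal ratio NSR = (mean H(X) - mean MI) / mean MI. It also reports the proportion of missing values. This is not the authors' tool: the exact measure set, the helper name `characterize`, and the toy data are assumptions, and missing values (`None`) are handled by pairwise deletion, which is only one of several possible conventions.

```python
import math
from collections import Counter


def entropy(values):
    """Shannon entropy (in bits) of a sequence of discrete values."""
    counts = Counter(values)
    n = len(values)
    return -sum((c / n) * math.log2(c / n) for c in counts.values())


def mutual_information(xs, ys):
    """I(X;Y) = H(X) + H(Y) - H(X,Y), in bits."""
    return entropy(xs) + entropy(ys) - entropy(list(zip(xs, ys)))


def characterize(rows, labels):
    """Hypothetical helper: information-theoretic meta-features of a discrete dataset.

    rows   -- list of attribute tuples; None marks a missing value
    labels -- list of class labels, one per row
    """
    n_attrs = len(rows[0])
    total_cells = len(rows) * n_attrs
    missing = sum(v is None for row in rows for v in row)

    attr_entropies, mis = [], []
    for j in range(n_attrs):
        # Assumed convention (pairwise deletion): ignore rows where attribute j is missing.
        pairs = [(row[j], y) for row, y in zip(rows, labels) if row[j] is not None]
        xs = [x for x, _ in pairs]
        ys = [y for _, y in pairs]
        attr_entropies.append(entropy(xs))
        mis.append(mutual_information(xs, ys))

    h_class = entropy(labels)
    mean_h_attr = sum(attr_entropies) / n_attrs
    mean_mi = sum(mis) / n_attrs
    return {
        "missing_ratio": missing / total_cells,
        "class_entropy": h_class,
        "mean_attr_entropy": mean_h_attr,
        "mean_mutual_info": mean_mi,
        # ENA and NSR are undefined (reported as inf) when no attribute carries class information.
        "equiv_num_attrs": h_class / mean_mi if mean_mi > 0 else math.inf,
        "noise_signal_ratio": (mean_h_attr - mean_mi) / mean_mi if mean_mi > 0 else math.inf,
    }


if __name__ == "__main__":
    # Toy data (hypothetical): two nominal attributes, one missing value.
    rows = [("a", "x"), ("a", "y"), ("b", None), ("b", "y")]
    labels = ["pos", "pos", "neg", "neg"]
    m = characterize(rows, labels)
    print(m["missing_ratio"])  # 0.125
    print(m["class_entropy"])  # 1.0
    print(m)
```

Here the first attribute determines the class exactly (mutual information of 1 bit), while the second is only weakly related to it, so the mean mutual information falls between the two. A dataset whose attributes carry little class information yields a large ENA and NSR, which is the kind of shift such measures are meant to expose.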




Copyright information

© 2008 Springer-Verlag Berlin Heidelberg

About this paper

Cite this paper

Segrera, S., Pinho, J., Moreno, M.N. (2008). Information-Theoretic Measures for Meta-learning. In: Corchado, E., Abraham, A., Pedrycz, W. (eds) Hybrid Artificial Intelligence Systems. HAIS 2008. Lecture Notes in Computer Science, vol 5271. Springer, Berlin, Heidelberg. https://doi.org/10.1007/978-3-540-87656-4_57


DOI: https://doi.org/10.1007/978-3-540-87656-4_57

Publisher Name: Springer, Berlin, Heidelberg

Print ISBN: 978-3-540-87655-7

Online ISBN: 978-3-540-87656-4

eBook Packages: Computer Science, Computer Science (R0)